Is Authentic Control Worth Emotional Ambiguity in AI Music?

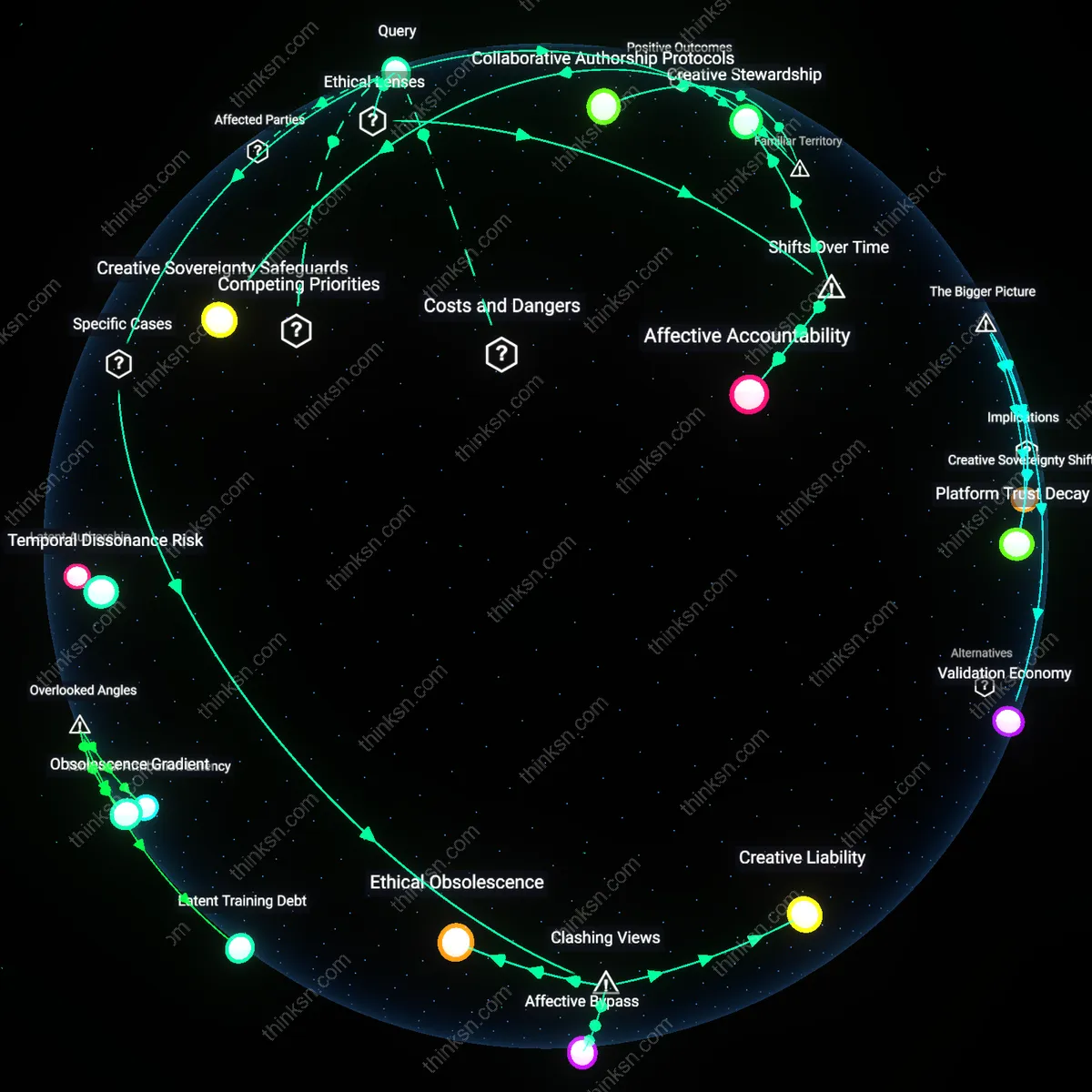

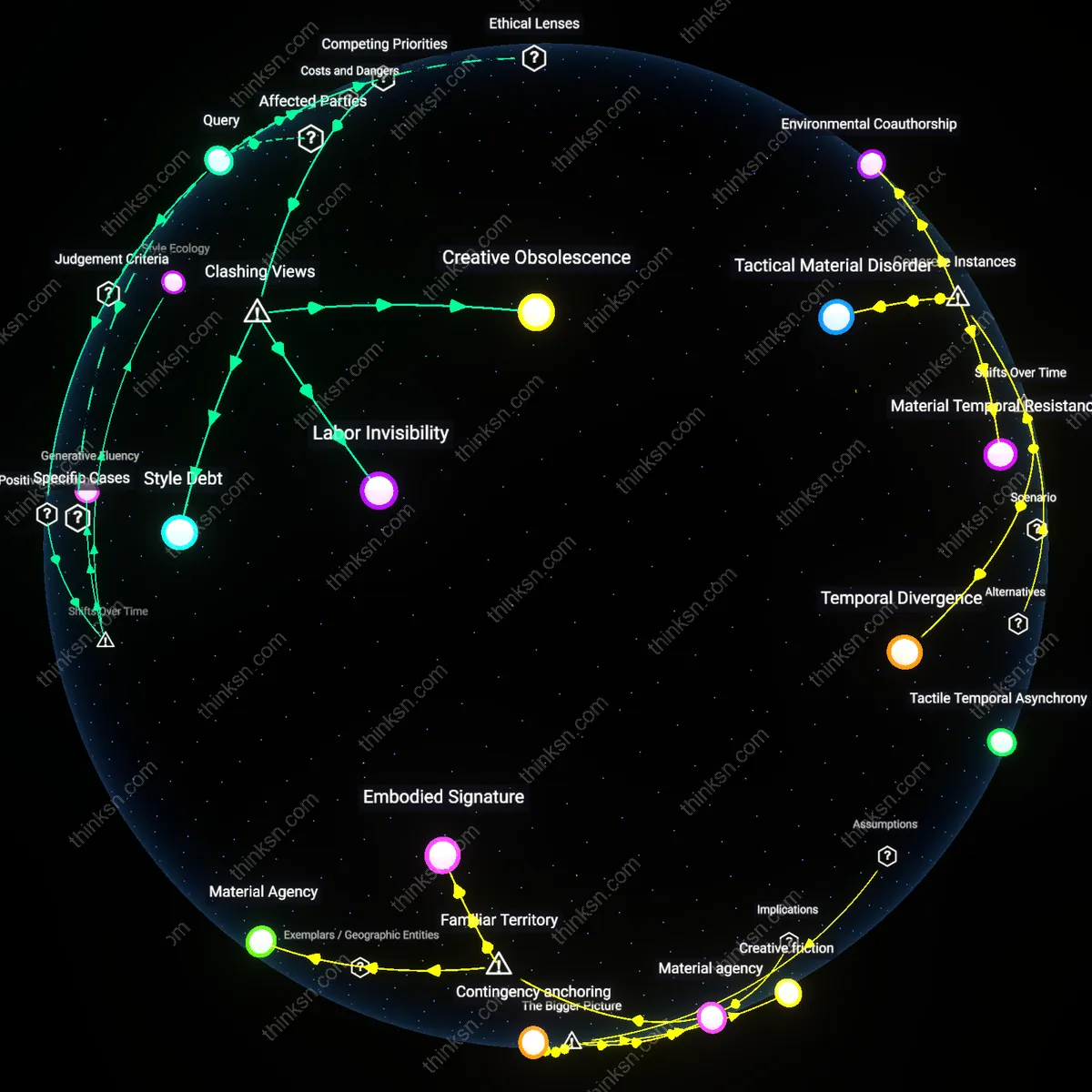

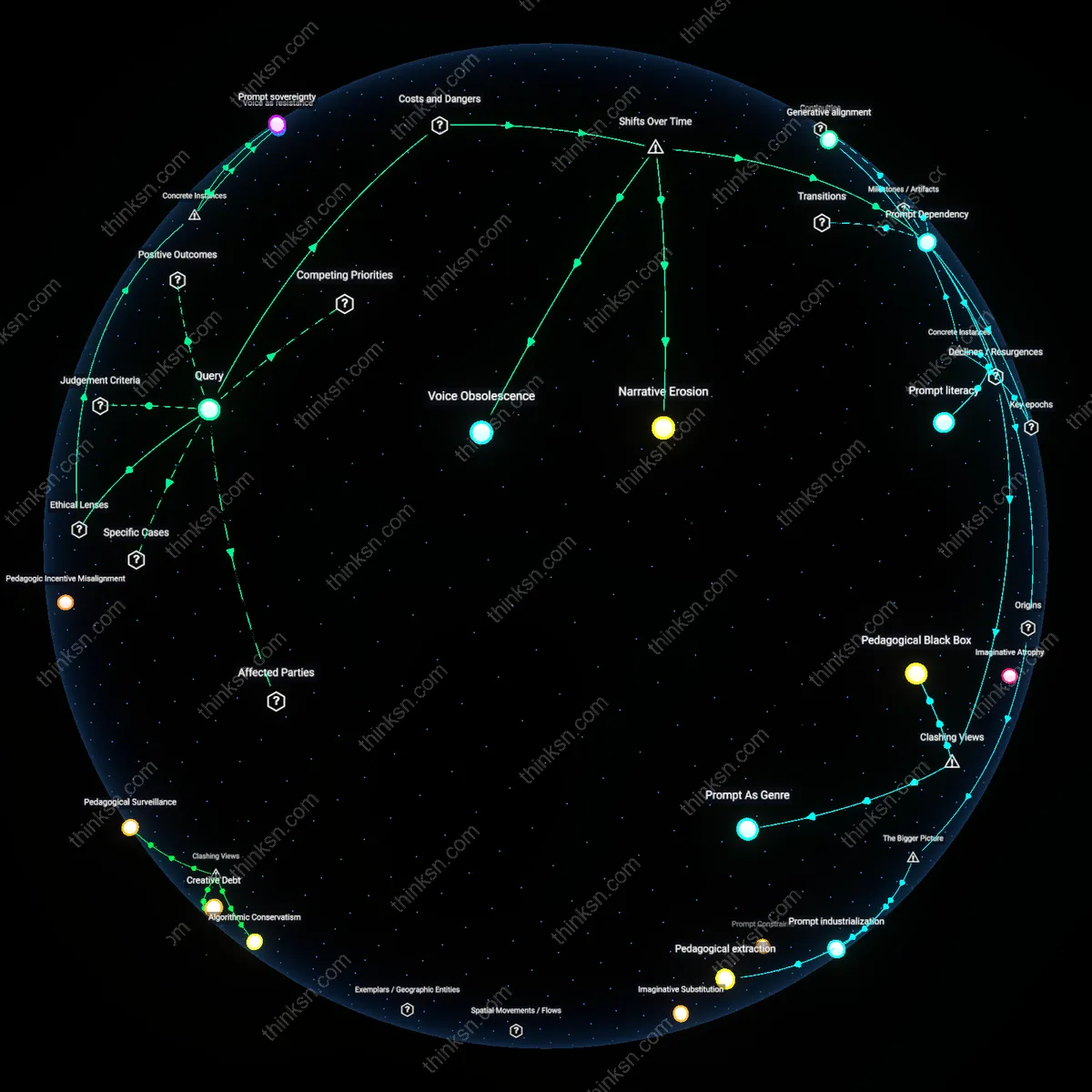

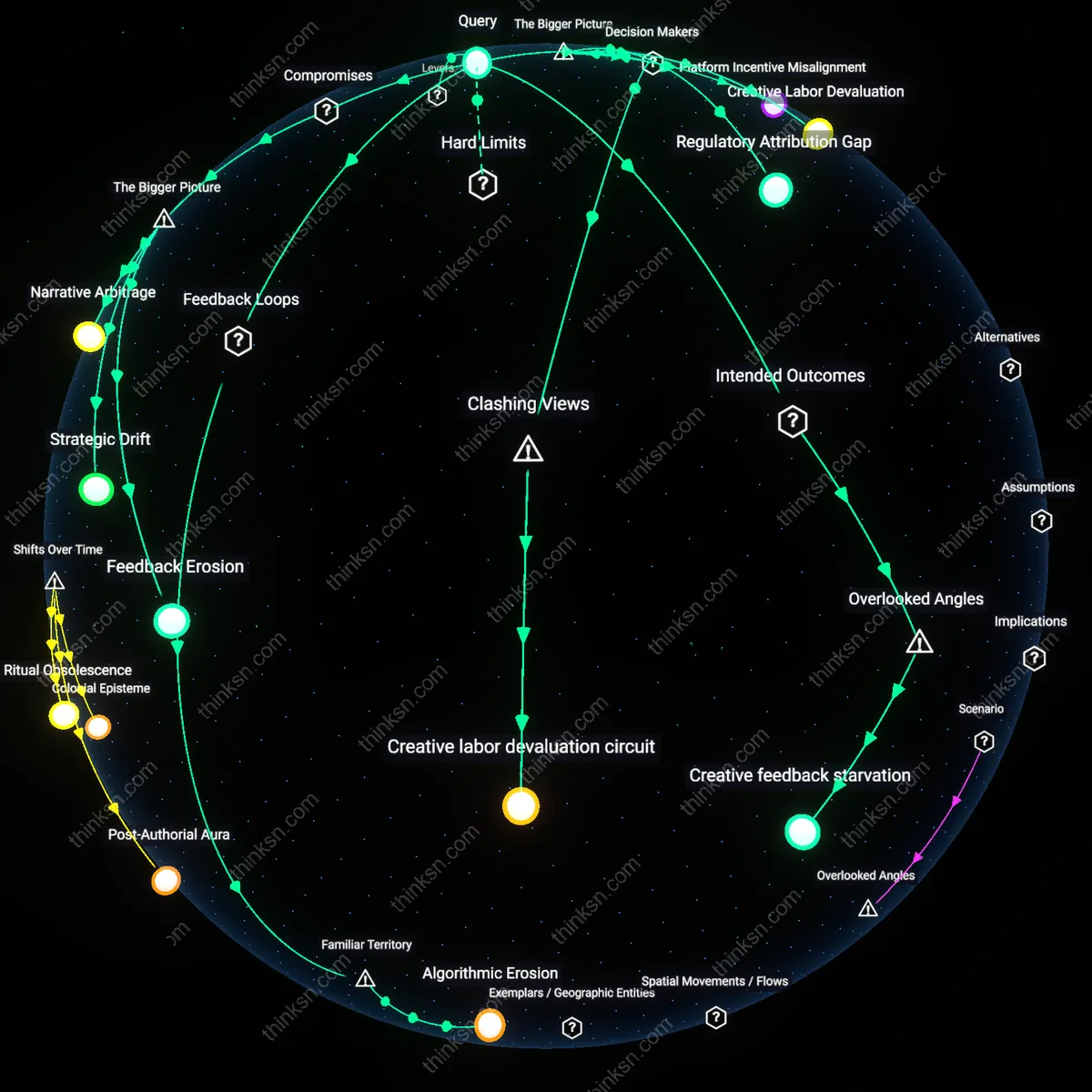

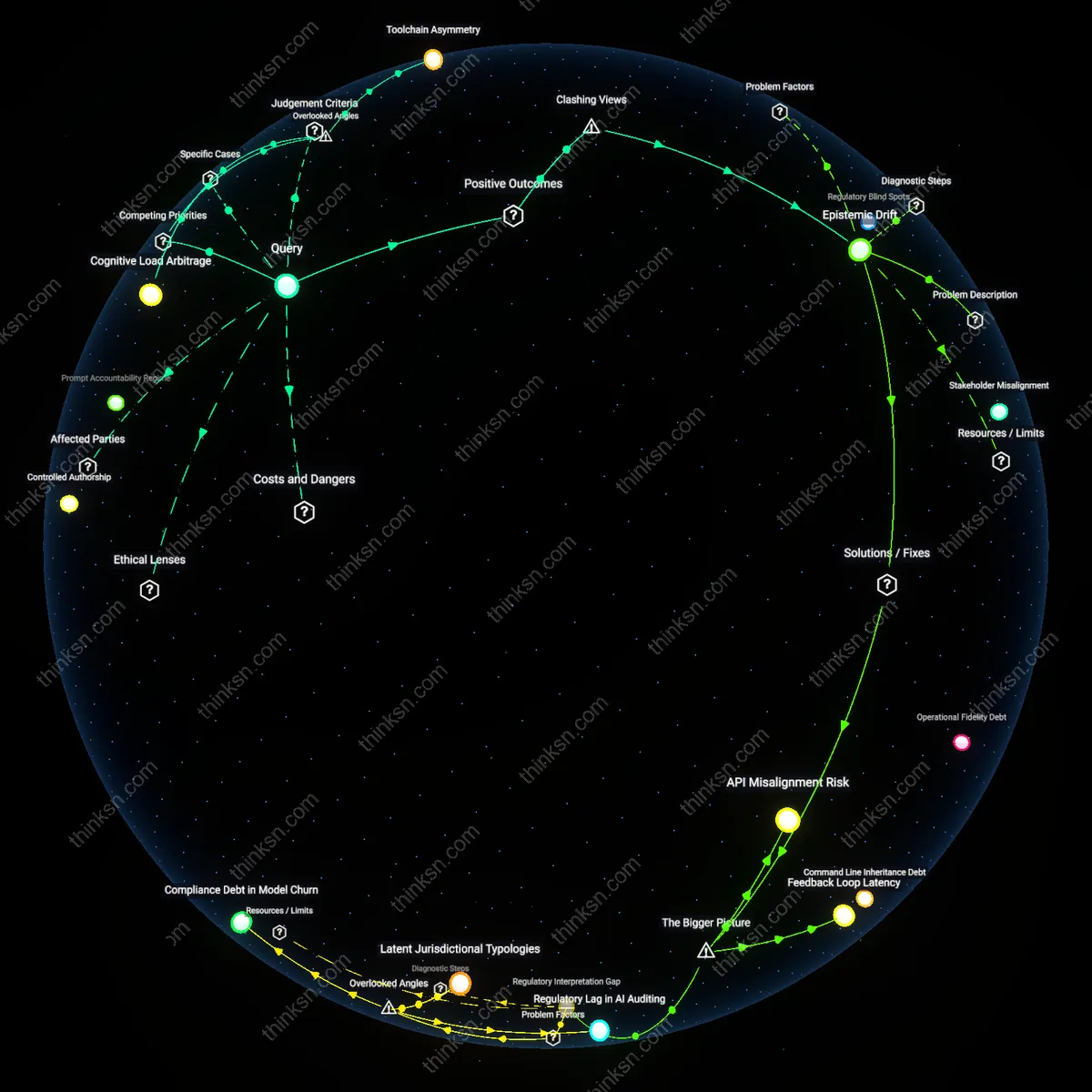

Analysis reveals 11 key thematic connections.

Key Findings

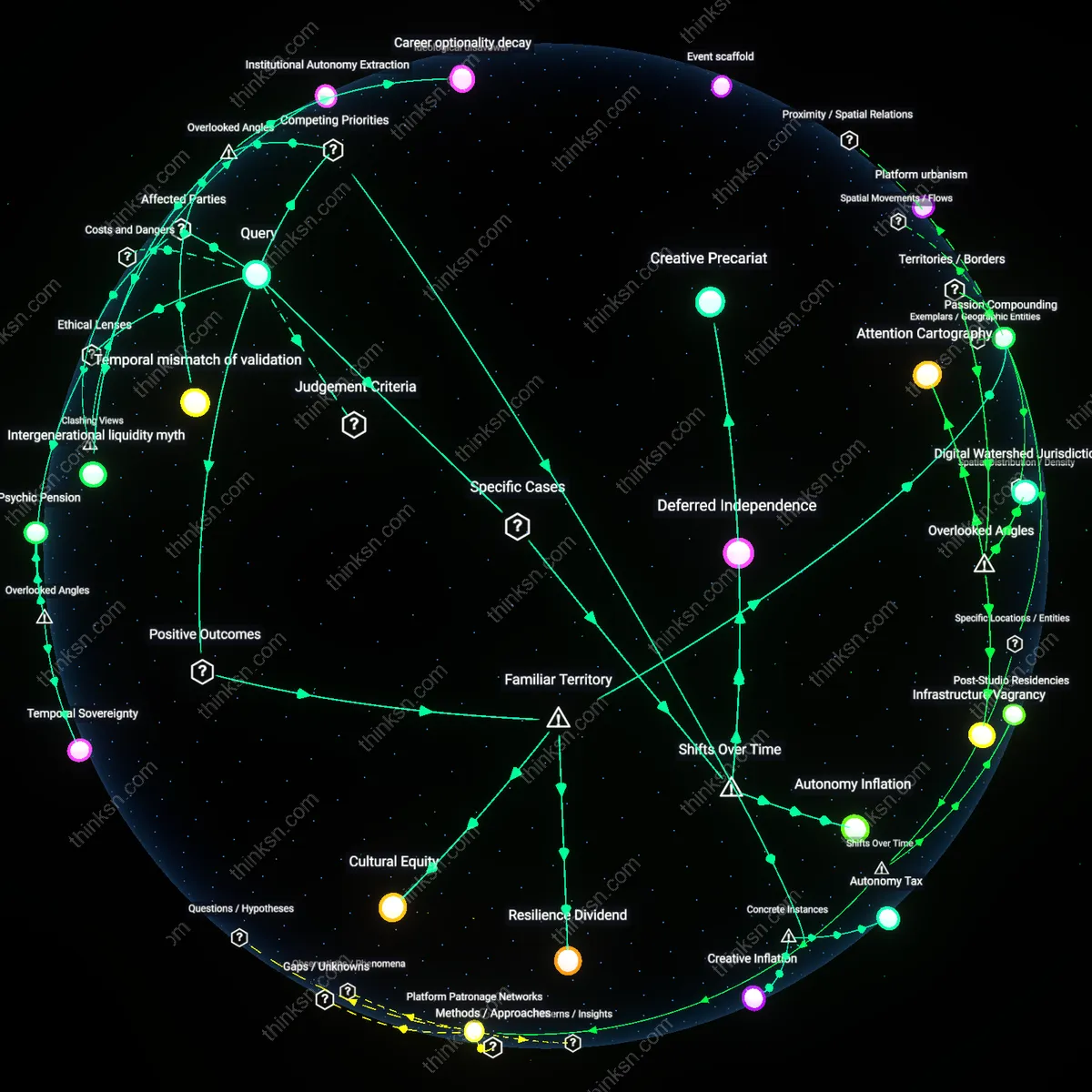

Creative Sovereignty Threshold

Establish a minimum threshold of human authorship required to claim creative control over AI-assisted compositions, judged by the proportion and qualitative role of non-algorithmic input. Music rights bodies such as ASCAP or GEMA would need to codify measurable benchmarks (for example, original melodic contour, lyrical intent, or structural deviation from training-data norms) that determine whether a work qualifies for artist-exclusive rights, thereby distinguishing collaborative enhancement from algorithmic substitution. What is overlooked is that current debates assume a binary between 'AI-made' and 'human-made'. That binary ignores the gradient of sovereignty musicians actually experience when editing latent vectors or guiding generative prompts, where agency can be diluted below effective ownership despite surface-level involvement.
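One way to picture such a threshold is as a weighted score over the benchmarks named above. The sketch below is purely illustrative: the class `AuthorshipScores`, the weights, and the 0.5 cutoff are assumptions made for this example and do not come from ASCAP, GEMA, or any other rights body.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class AuthorshipScores:
    """Hypothetical reviewer-assigned scores, each in the range [0, 1]."""
    melodic_contour: float
    lyrical_intent: float
    structural_deviation: float


# Illustrative weights and cutoff (assumptions, not an industry standard).
WEIGHTS = {
    "melodic_contour": 0.4,
    "lyrical_intent": 0.3,
    "structural_deviation": 0.3,
}
THRESHOLD = 0.5


def human_authorship_score(scores: AuthorshipScores) -> float:
    """Return the weighted sum of the benchmark scores."""
    total = 0.0
    for name, weight in WEIGHTS.items():
        value = getattr(scores, name)
        if not 0.0 <= value <= 1.0:
            raise ValueError(f"{name} must be between 0 and 1, got {value}")
        total += weight * value
    return total


def qualifies_for_exclusive_rights(scores: AuthorshipScores) -> bool:
    """True when the weighted score meets the assumed threshold."""
    return human_authorship_score(scores) >= THRESHOLD
```

A graded score of this kind reflects the point that agency is a matter of degree: a work with an original melody but no other human input would fall below the assumed cutoff, even though the artist was visibly involved.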

Temporal Dissonance Risk

Institute time-delayed release protocols for AI-generated music, under which works are withheld from public distribution until an independent review of their training-data lineage and their influence on emotional valence in test audiences is complete. This introduces a feedback-integration period that mirrors clinical trial oversight in biotech, acknowledging that emotional effects may emerge only at scale or over longitudinal exposure. The overlooked dynamic is temporal dissonance: the psychological friction caused when audiences retrospectively discover that human-like emotional cues were synthetically optimized. That discovery undermines trust not just in the work but in the entire emotional economy of music, and so threatens the long-term viability of both AI and human artistry in shared cultural spaces.

Creative Sovereignty Safeguards

Artists must retain final approval rights over AI-generated music used in their name to ensure creative alignment with their intent. This condition operates through existing contractual frameworks in the music industry, such as those enforced by labels, publishers, and performing rights organizations, where artists already exercise control over masters, credits, and licensing. Embedding AI outputs into these systems subjects them to the same human veto power. What is underappreciated is that the legal infrastructure for creative control is already in place and widely recognized, so extending it to AI maintains public trust without requiring new cultural norms.

Emotional Authenticity Benchmarking

Music platforms should implement standardized affective testing for AI-generated tracks to verify emotional resonance before public release. This mechanism mirrors established practices in media testing, like focus groups for film scores or A/B testing in streaming playlists, but applies them specifically to assess whether AI music produces the intended emotional effect in real listeners, using biometric and survey data from diverse user pools. The insight often missed is that 'authenticity' in emotional impact is not exclusive to human creation; it becomes credible when verified through familiar, transparent validation rituals the public already accepts.

Collaborative Authorship Protocols

Producers and AI developers should co-design generative models using artist-specific training data only with explicit, revocable consent. A comparable rule exists today in sample clearance and likeness licensing, where personal creative material cannot be reused without permission. Extending this rule to AI training upholds the intuitive principle that one’s artistic 'voice' is proprietary. The overlooked point is that people already treat creative identity as a form of personal domain; reinforcing it through opt-in technical workflows turns ethical boundaries into functional features of innovation.
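An opt-in workflow of this kind could be sketched as a consent check applied before any track enters a training set. The example is a minimal illustration, not a description of an existing system: `ConsentRegistry`, `select_training_tracks`, and the identifiers used are hypothetical names.

```python
from dataclasses import dataclass, field


@dataclass
class ConsentRegistry:
    """Hypothetical in-memory record of artists who have opted in."""
    _granted: set = field(default_factory=set)

    def grant(self, artist_id: str) -> None:
        self._granted.add(artist_id)

    def revoke(self, artist_id: str) -> None:
        # discard() makes revoking an unknown artist a harmless no-op.
        self._granted.discard(artist_id)

    def has_consent(self, artist_id: str) -> bool:
        return artist_id in self._granted


def select_training_tracks(tracks, registry):
    """Keep only track IDs whose artist currently consents.

    `tracks` is a list of (track_id, artist_id) pairs.
    """
    return [
        track_id
        for track_id, artist_id in tracks
        if registry.has_consent(artist_id)
    ]
```

Because the filter consults the registry each time a training set is assembled, a revocation takes effect on the next selection. A model that has already been trained on the material would need separate handling, which this sketch does not address.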

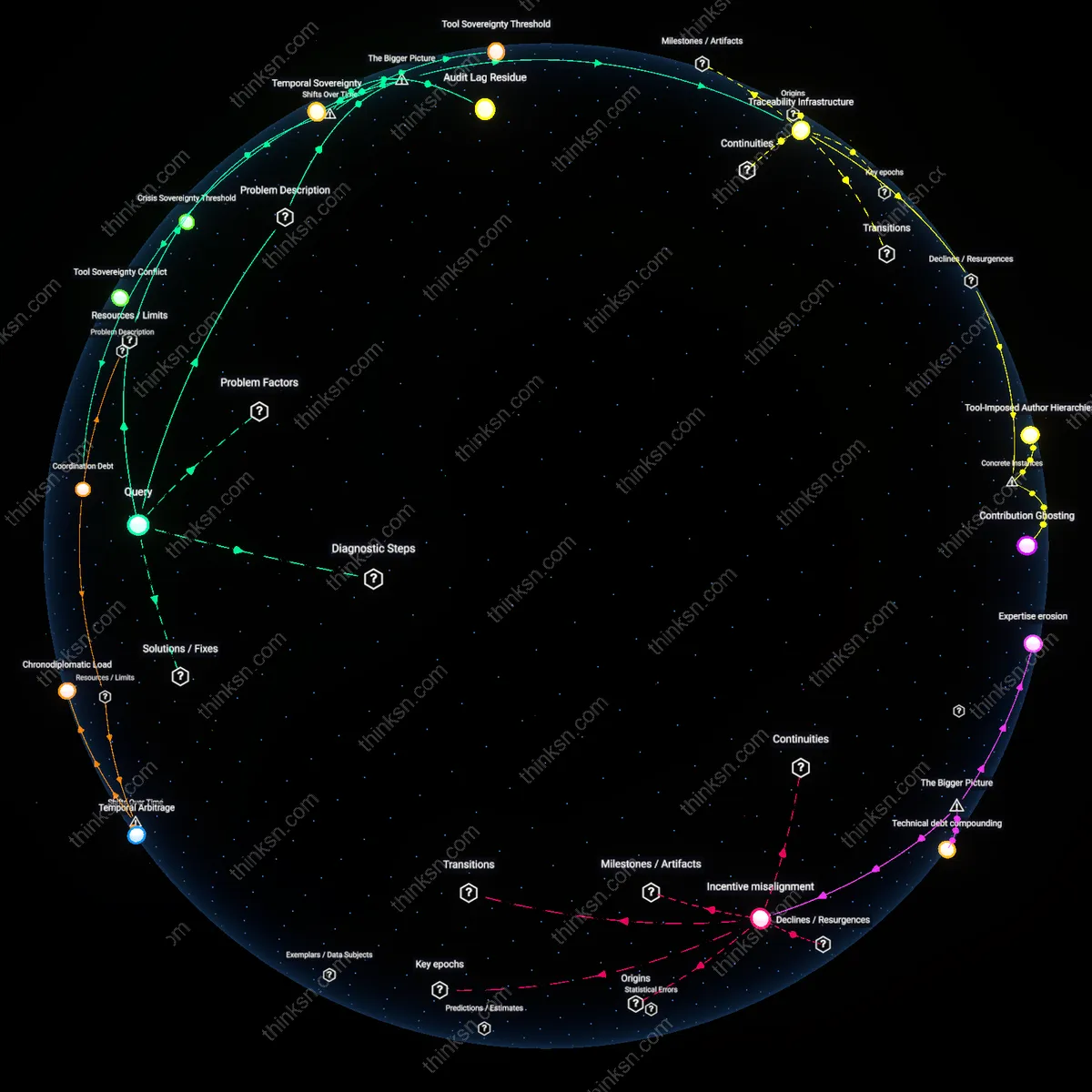

Provenance Rights

Adopt transparent authorship tracking through blockchain-based metadata to legally distinguish human-led from AI-generated musical elements, establishing enforceable moral rights under evolving copyright frameworks such as the EU’s Directive on Copyright in the Digital Single Market (Directive (EU) 2019/790). This mechanism emerged after 2020, when generative AI began undermining traditional authorship norms and revealed a historical rupture in IP law, which was originally built on Romantic ideals of individual creativity. The non-obvious insight is that ethical control now depends not on authorship exclusivity but on verifiable participation layered over time.
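The idea of verifiable participation layered over time can be illustrated with a simplified hash chain, in which each contribution record stores the hash of the record before it. This is a sketch under stated assumptions rather than a real blockchain or an existing metadata standard: there is no distributed consensus, and the field names (`contributor`, `kind`, `description`) are invented for the example.

```python
import hashlib
import json


def _digest(body: dict) -> str:
    """SHA-256 over a canonical JSON encoding of the record body."""
    payload = json.dumps(body, sort_keys=True).encode("utf-8")
    return hashlib.sha256(payload).hexdigest()


def append_contribution(log, contributor, kind, description):
    """Return a new log with one more record linked to the previous one."""
    if kind not in ("human", "ai"):
        raise ValueError("kind must be 'human' or 'ai'")
    body = {
        "contributor": contributor,
        "kind": kind,
        "description": description,
        "prev_hash": log[-1]["hash"] if log else "",
    }
    return log + [dict(body, hash=_digest(body))]


def verify(log) -> bool:
    """Check that every record is intact and linked to its predecessor."""
    prev_hash = ""
    for entry in log:
        body = {k: v for k, v in entry.items() if k != "hash"}
        if body["prev_hash"] != prev_hash or _digest(body) != entry["hash"]:
            return False
        prev_hash = entry["hash"]
    return True
```

Editing any earlier record changes its hash and breaks the link to every later record, which is what makes the order and the human-or-AI label of each contribution checkable after the fact.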

Creative Stewardship

Reframe artistic agency as ongoing curatorial oversight rather than sole authorship, in line with post-1960s avant-garde practices in which indeterminacy and collaboration disrupted authorial primacy, a disruption now accelerated by AI’s capacity to generate affective material. This shift from creation-as-origin to creation-as-selection embeds ethical responsibility in the artist’s active shaping of AI outputs. It shows that genuine control persists not through the exclusion of technology but through historically situated interpretive labor, of the kind institutionalized in experimental composition and sound art.

Affective Accountability

Institute peer-reviewed impact assessments for commercially released AI-composed music, modeled on bioethical review boards, to balance emotional influence against artistic autonomy. The proposal responds to the 2023 rise of emotionally targeted generative playlists on platforms like Spotify that exploit cognitive vulnerabilities. This regulatory turn echoes the 1970s institutionalization of research ethics after Tuskegee. It signals a transition in which artistic freedom is no longer absolute but contingent on demonstrated societal non-harm, and it exposes an emerging duty of care in creative AI deployment.

Creative Liability

Artists must relinquish claimed authorship of AI-assisted works to preserve ethical control. Holly Herndon’s *PROTO* project illustrates this: she treated the AI ensemble as a collective collaborator rather than a tool. This approach redistributes emotional accountability and exposes a non-obvious reality: maintaining creative control in AI music requires surrendering traditional authorship, which undermines the dominant narrative that control must be centralized in the individual artist.

Affective Bypass

Streaming platforms like Spotify are engineering listeners’ emotional responses through AI-generated playlists such as *AI DJ*, which use affective computing to simulate emotional arcs indistinguishable from human curation. The result is a feedback loop in which perceived authenticity is manufactured. This challenges the assumption that emotional impact validates creative control; instead, emotional effects can be weaponized to displace human artistic agency under the guise of personalization.

Ethical Obsolescence

The rise of generative AI models trained on unlicensed works, exemplified by Universal Music Group’s legal action against Anthropic and Sony’s deployment of its own AI tools, demonstrates that record labels, not artists, are becoming the gatekeepers of creative legitimacy. They enforce a system in which artists’ emotional authenticity is rendered legally irrelevant. The resulting dissonance is that ethical creative control now depends less on artistic intent than on corporate data governance, which reverses the expectation that ethics empower individual creators.