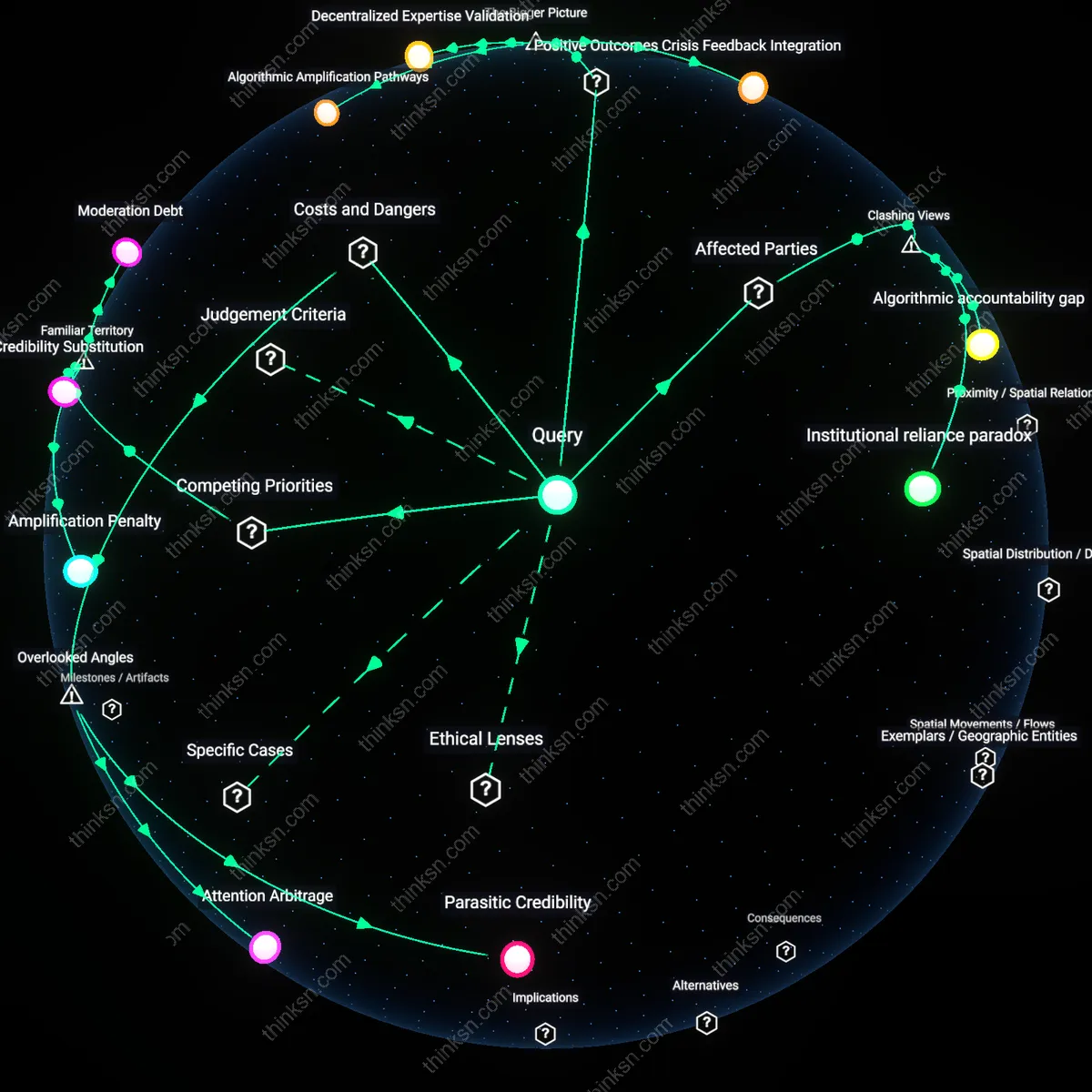

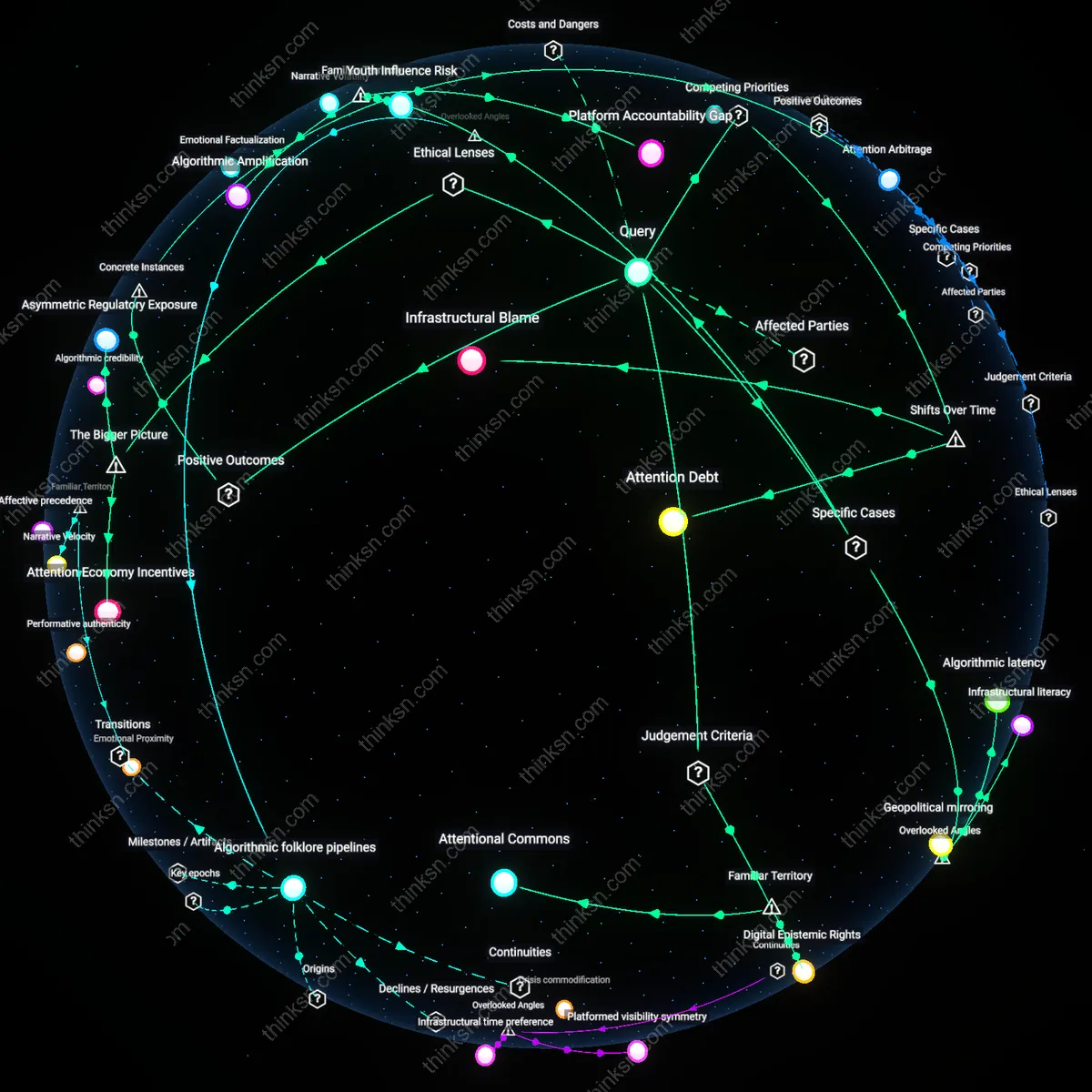

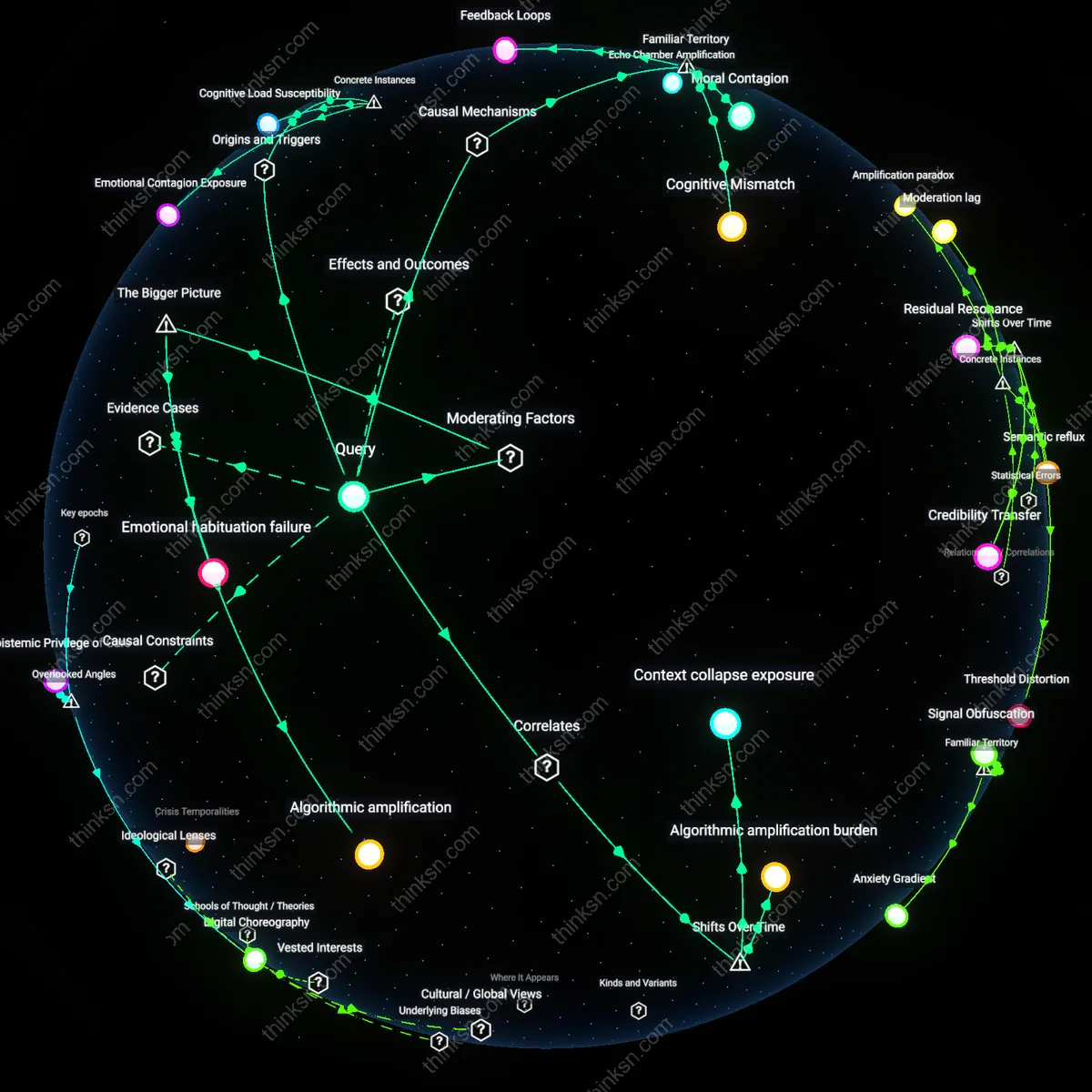

When Does Misinformation Outpace Fact-Checkers?

Analysis reveals 10 key thematic connections.

Key Findings

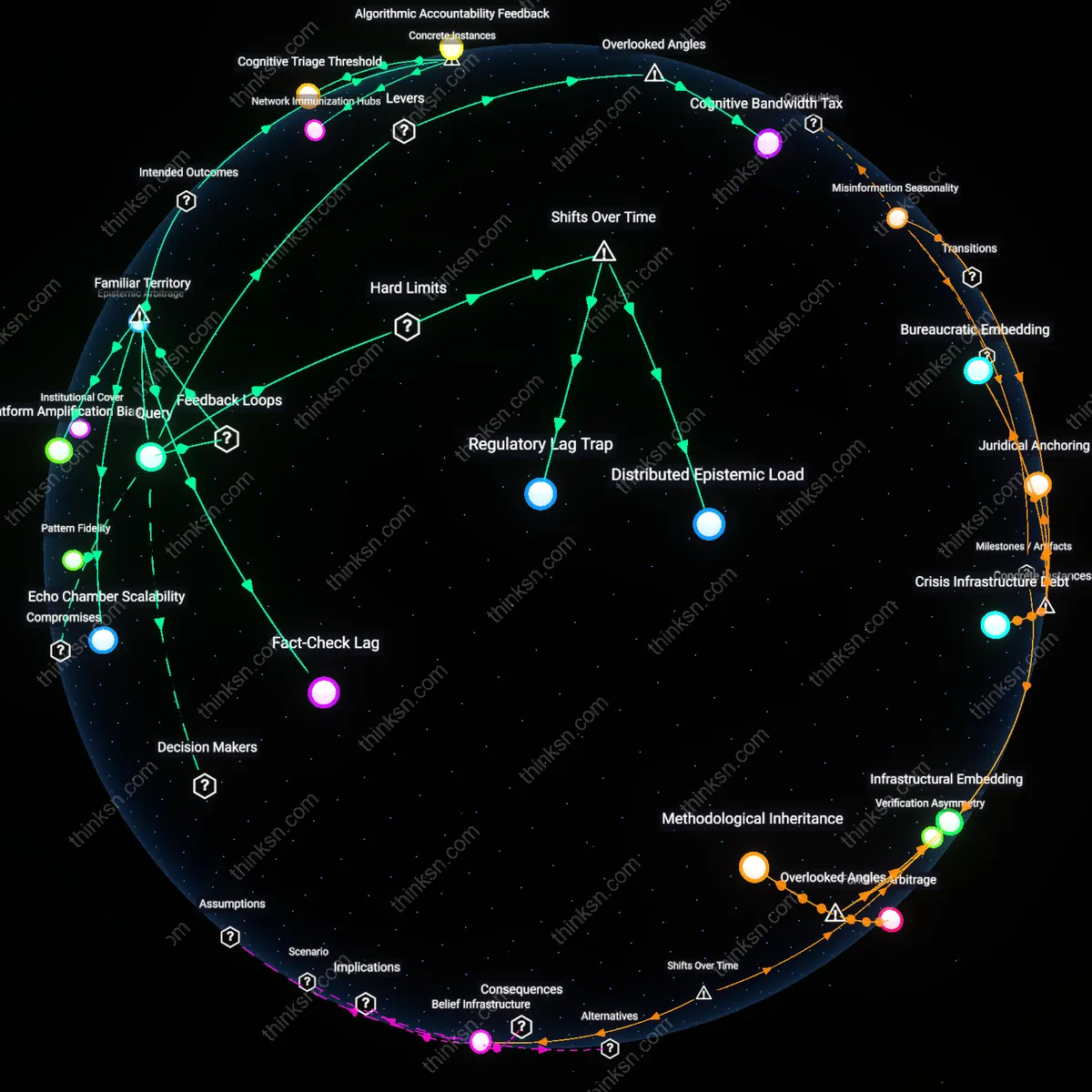

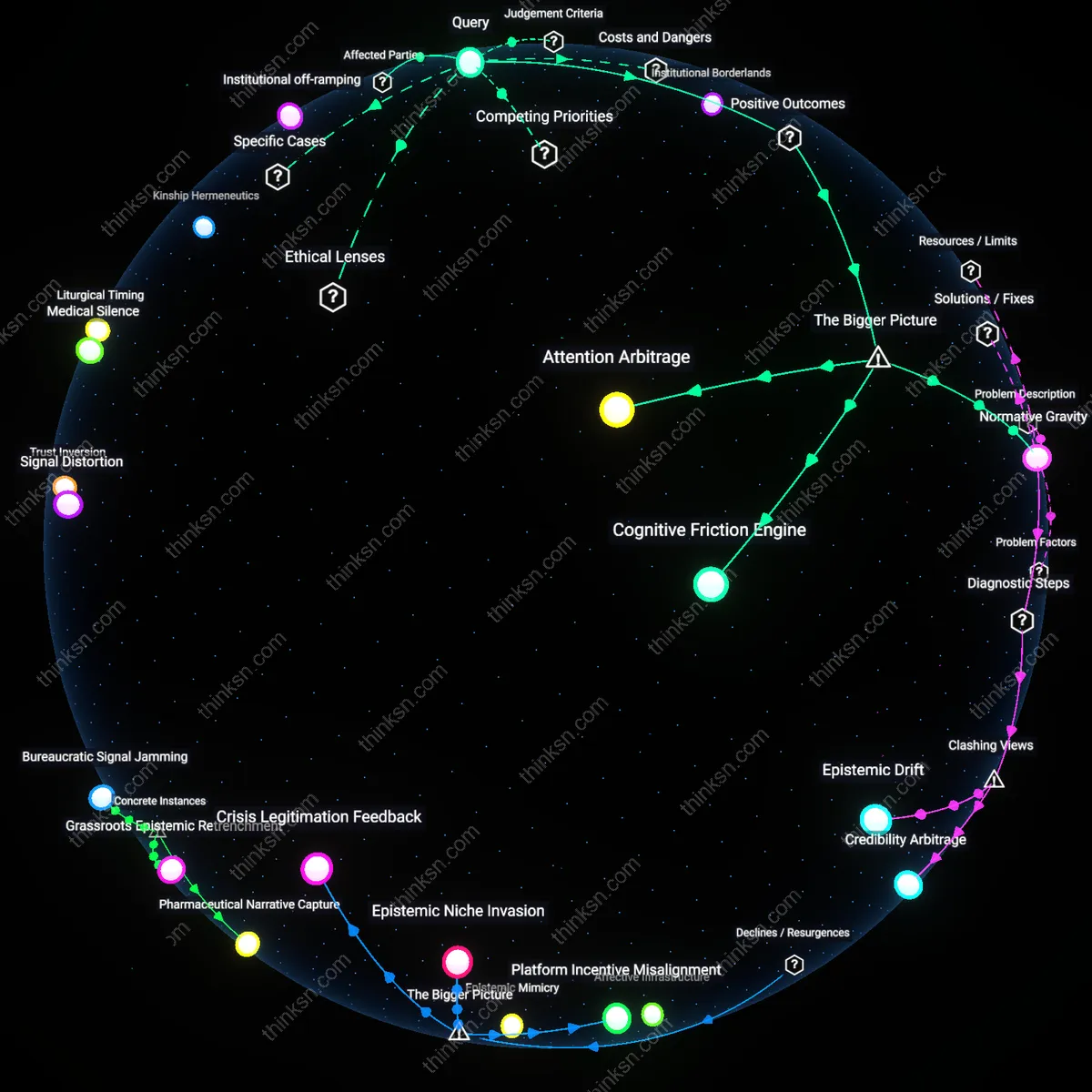

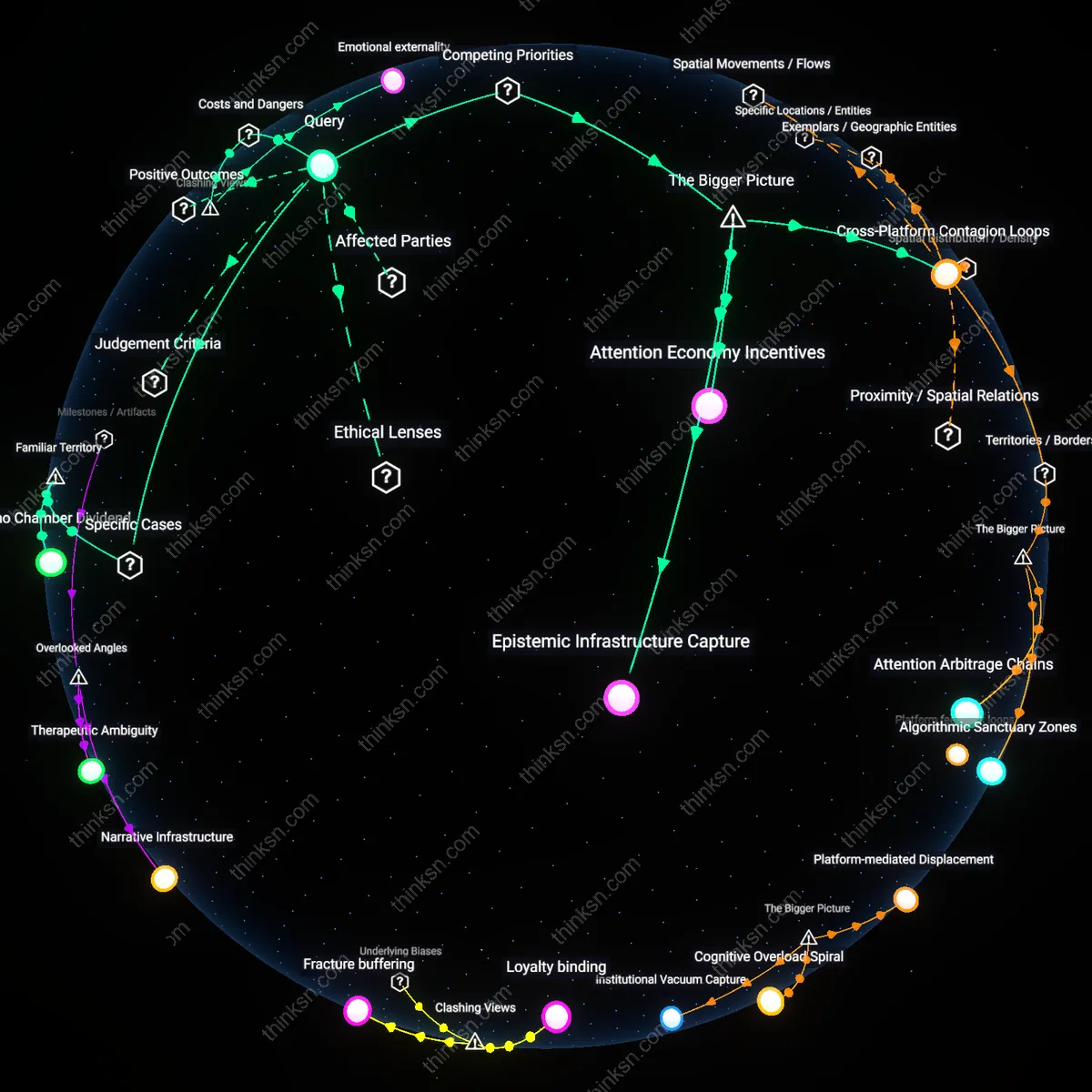

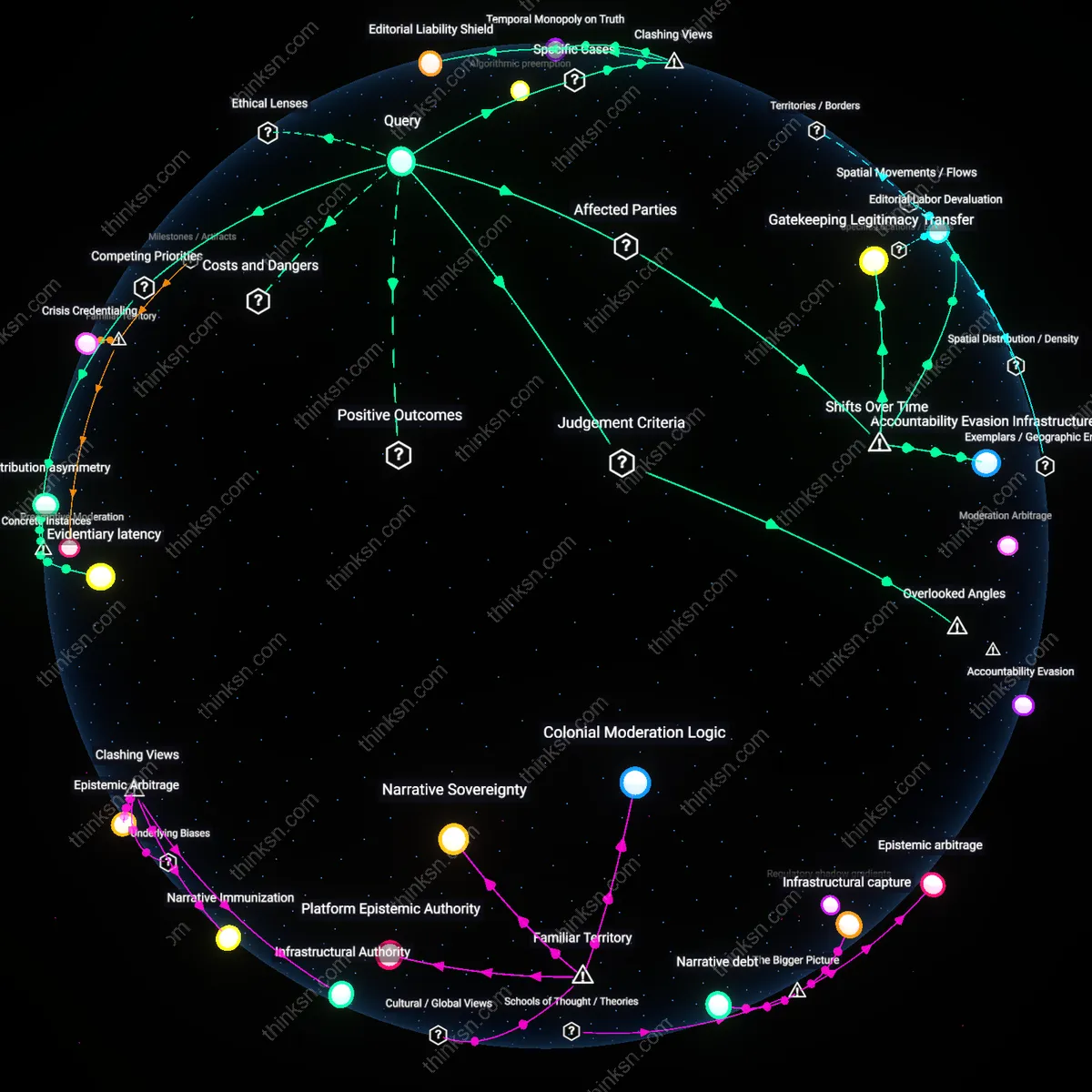

Inflection Threshold

Misinformation networks overwhelm individual fact-checkers once decentralized rumor economies move faster than platform-mediated moderation cycles. This was visible after the 2016 U.S. election, when coordinated inauthentic behavior on Facebook and Twitter outpaced third-party validators who still relied on legacy editorial norms. The shift from institutional gatekeeping to algorithmic virality created a temporal mismatch in which fact-checking lags behind the production of new false claims. Speed, not accuracy, became the dominant selection pressure in information ecosystems, a dynamic largely absent from pre-digital media environments, where verification could keep pace with publication timelines.

Distributed Epistemic Load

Misinformation began to overwhelm individual fact-checkers when user-generated content platforms adopted engagement-based ranking in the early 2010s, turning passive audiences into co-producers of deceptive narratives through micro-amplification. The transition from centralized broadcasting to networked participation left each user with a fraction of the verification responsibility but no corresponding tools, so cognitive labor became a collective action problem masked as personal discernment. The shift exposed how platforms externalize epistemic risk while claiming neutrality, a structural sleight-of-hand absent in earlier public information regimes.

Regulatory Lag Trap

Individual fact-checkers are overwhelmed when cross-jurisdictional disinformation operations exploit legal pluralism. The pattern emerged distinctly after the EU’s Code of Practice on Disinformation in 2018, when coordinated state-aligned actors relocated infrastructure to regions with weak intermediary liability laws and created an asymmetric enforcement terrain. This spatial fragmentation of regulatory response, driven by the shift from national broadcasting controls to global platform governance, shows that even technically effective fact-checking fails when jurisdictional boundaries prevent sanctions. Truth-telling becomes a localized gesture in a transnational deception system.

Cognitive Bandwidth Tax

Designating specific low-engagement time slots on digital platforms for verified contextual information reduces the cognitive load on individuals by addressing misinformation preemptively, during periods of minimal competition for attention. This lever draws on behavioral economics, specifically the underappreciated dynamic that misinformation spreads most effectively when users are cognitively overloaded, not just misinformed. Clarifying content is therefore inserted when users have the most attention available for evaluation, such as early in a browsing session. Most counter-misinformation efforts assume content accuracy is the primary battlefront and overlook how timing and mental fatigue govern susceptibility. The capacity to process truth is a scarce resource, as critical as the truth itself.

Fact-Check Lag

Fact-checkers lose decisively to viral misinformation when their response time exceeds platform amplification cycles. On networks like X (formerly Twitter), false claims gain maximum traction within the first two hours, but institutional fact-checkers, such as newsroom teams and signatories of the International Fact-Checking Network, rarely publish verified rebuttals within that window because of editorial workflows. The result is a sustained delay loop in which falsehoods entrench before corrections emerge. This lag is non-obvious because the public presumes verification is timely, when in reality platforms reward speed over accuracy.
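The timing argument above can be made concrete with a toy calculation. The sketch below assumes, purely for illustration, that a false claim's hourly reach decays exponentially with a fixed half-life; the function name `exposure_before_correction` and the half-life parameter are hypothetical and do not come from any platform's data. Under that assumption, it reports what share of the claim's lifetime exposure has already happened by the time a correction is published.

```python
def exposure_before_correction(correction_delay_h: float, half_life_h: float) -> float:
    """Share of a claim's lifetime exposure that accrues before a correction appears.

    Illustrative assumption: hourly reach decays exponentially with the given
    half-life, so cumulative exposure by time t is 1 - 0.5 ** (t / half_life).
    """
    if correction_delay_h < 0 or half_life_h <= 0:
        raise ValueError("delay must be >= 0 and half-life must be > 0")
    return 1.0 - 0.5 ** (correction_delay_h / half_life_h)


if __name__ == "__main__":
    # Assumed two-hour half-life, echoing the two-hour window in the text.
    for delay_h in (1, 2, 6, 24):
        share = exposure_before_correction(delay_h, half_life_h=2)
        print(f"correction after {delay_h:>2} h: {share:.1%} of exposure already occurred")
```

With a two-hour half-life, a correction published six hours after the claim arrives once 87.5% of the modeled exposure is already behind it. The exact figure depends entirely on the assumed decay curve; the point is only that a fixed editorial delay costs more as the amplification cycle shortens.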

Echo Chamber Scalability

Individuals defer to community consensus over external verification when misinformation spreads through tightly linked social groups, such as vaccine-skeptic Facebook clusters or QAnon Telegram cells. These networks function as self-reinforcing belief systems: the group reads each external correction as evidence of a cover-up, which strengthens internal cohesion. The dynamic is underappreciated because most public discourse focuses on content accuracy rather than on the scalable resilience of identity-anchored networks that treat fact-checking as an outgroup assault.

Platform Amplification Bias

Social media algorithms preferentially promote emotionally charged misinformation, which renders individual fact-checking irrelevant at scale because corrections lack the engagement needed to resurface in feeds. On Meta’s platforms, posts flagged as false are rarely demoted enough to offset their initial organic spread through shares and comments. This creates a reinforcing loop in which the correction's low visibility widens the gap between the reach of the falsehood and the reach of the rebuttal. The mechanism is non-obvious because users assume moderation systems correct the record and do not realize that algorithmic distribution policies undermine even verified corrections.

Cognitive Triage Threshold

During the 2016 U.S. presidential election, individual fact-checkers at outlets like PolitiFact and FactCheck.org reached a cognitive triage threshold: the volume and velocity of micro-targeted misinformation on Facebook exceeded their capacity to verify and correct in real time. This suggests that human-mediated verification systems are structurally unscalable against algorithmically amplified disinformation. The bottleneck persists even with increased staffing because the rate of novel false claims grows non-linearly with audience fragmentation, so reactive truth maintenance becomes unsustainable whenever detection lags propagation.
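The staffing claim above can be illustrated with a minimal backlog model. The sketch assumes, hypothetically, that the number of audience segments grows by one per day and that novel claims per day scale with the square of the segment count, while checking capacity is a fixed number of claims per day. All names and numbers are illustrative assumptions, not measurements.

```python
from typing import List, Optional


def quadratic_arrivals(days: int) -> List[int]:
    """Hypothetical arrivals: day d has d audience segments and d**2 new claims."""
    return [day ** 2 for day in range(1, days + 1)]


def backlog_over_time(arrivals: List[int], capacity_per_day: int) -> List[int]:
    """Unverified claims left at the end of each day, given a fixed daily capacity."""
    backlog = 0
    history = []
    for new_claims in arrivals:
        backlog = max(0, backlog + new_claims - capacity_per_day)
        history.append(backlog)
    return history


def first_overload_day(arrivals: List[int], capacity_per_day: int) -> Optional[int]:
    """1-based day on which a backlog first appears, or None if it never does."""
    for day, backlog in enumerate(backlog_over_time(arrivals, capacity_per_day), start=1):
        if backlog > 0:
            return day
    return None


if __name__ == "__main__":
    arrivals = quadratic_arrivals(10)
    for capacity in (25, 50):
        day = first_overload_day(arrivals, capacity)
        print(f"capacity {capacity}/day: backlog first appears on day {day}")
```

In this toy setup, doubling capacity from 25 to 50 claims per day only moves the first overloaded day from day 6 to day 8. Linear increases in staffing buy a short delay against super-linear growth in claims, which is the shape of the argument in the paragraph, not an empirical estimate.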

Network Immunization Hubs

In 2018, anti-vaccine misinformation surged across WhatsApp in India and overwhelmed individual health workers attempting one-on-one debunking. In response, the public health initiative HealthImpulse deployed regional 'digital hygiene trainers' in Maharashtra, who mapped local rumor ecosystems and trained village-level influencers to disseminate counter-narratives preemptively. The effort indicates that decentralized, socially embedded nodes can interrupt misinformation cascades more effectively than centralized expert fact-checkers because they draw on pre-existing trust architectures. This shift from content correction to social inoculation points to the strategic advantage of proximity-based resilience over distant authority in high-density information networks.

Algorithmic Accountability Feedback

When YouTube’s recommendation algorithm was found in 2019 to be systematically amplifying conspiracy theory content, such as flat Earth and 9/11 'truther' videos, researchers at the Mozilla Foundation’s RegretsReporter project aggregated user-submitted evidence of harmful recommendations and used it to press for platform-level adjustments through public pressure and regulatory testimony. The case shows that pooling data from distributed users can expose hidden systemic biases that individual fact-checkers cannot trace. It also suggests that scalable oversight requires capturing machine logic through crowd-sourced behavioral audits rather than relying on published policies that obscure actual algorithmic outputs.