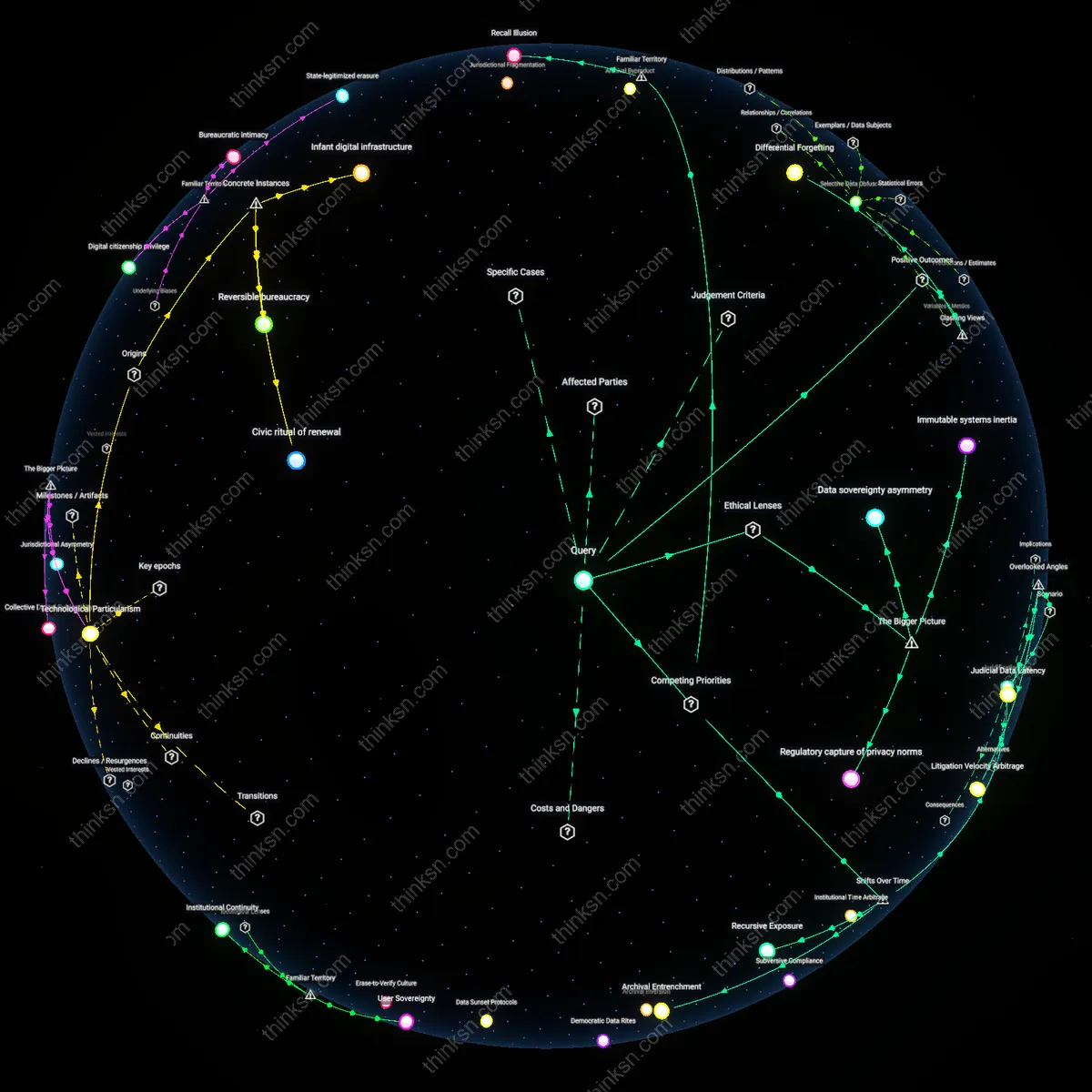

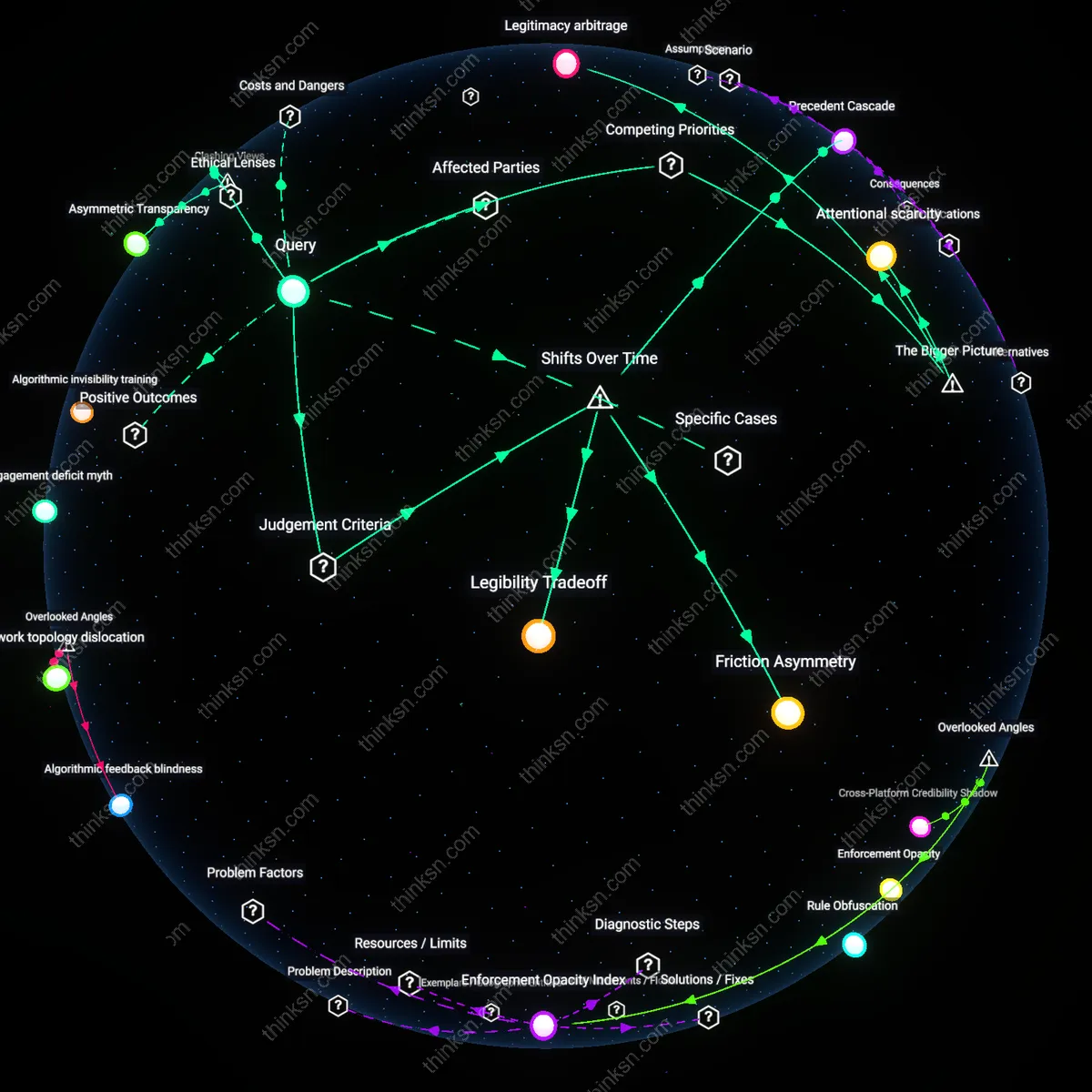

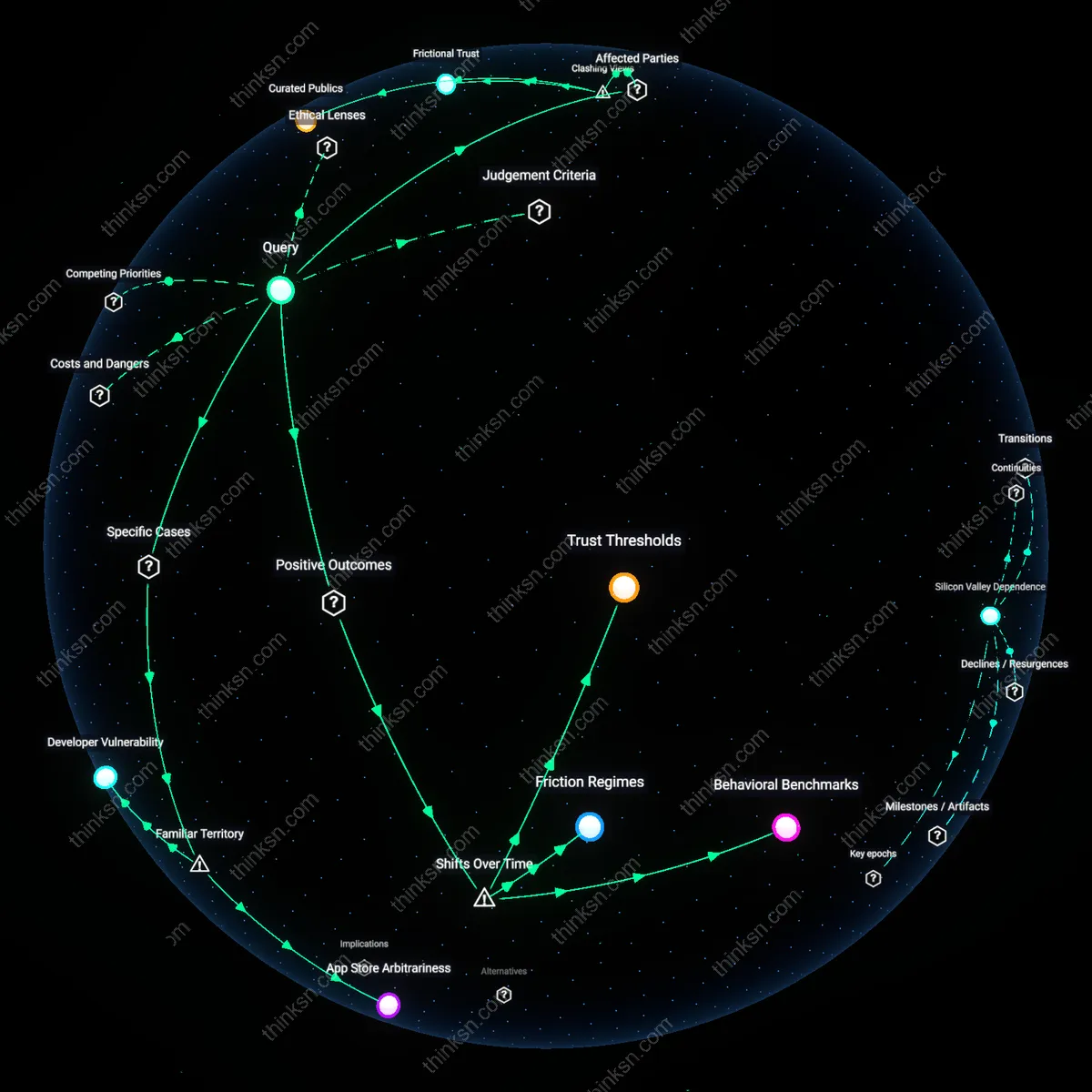

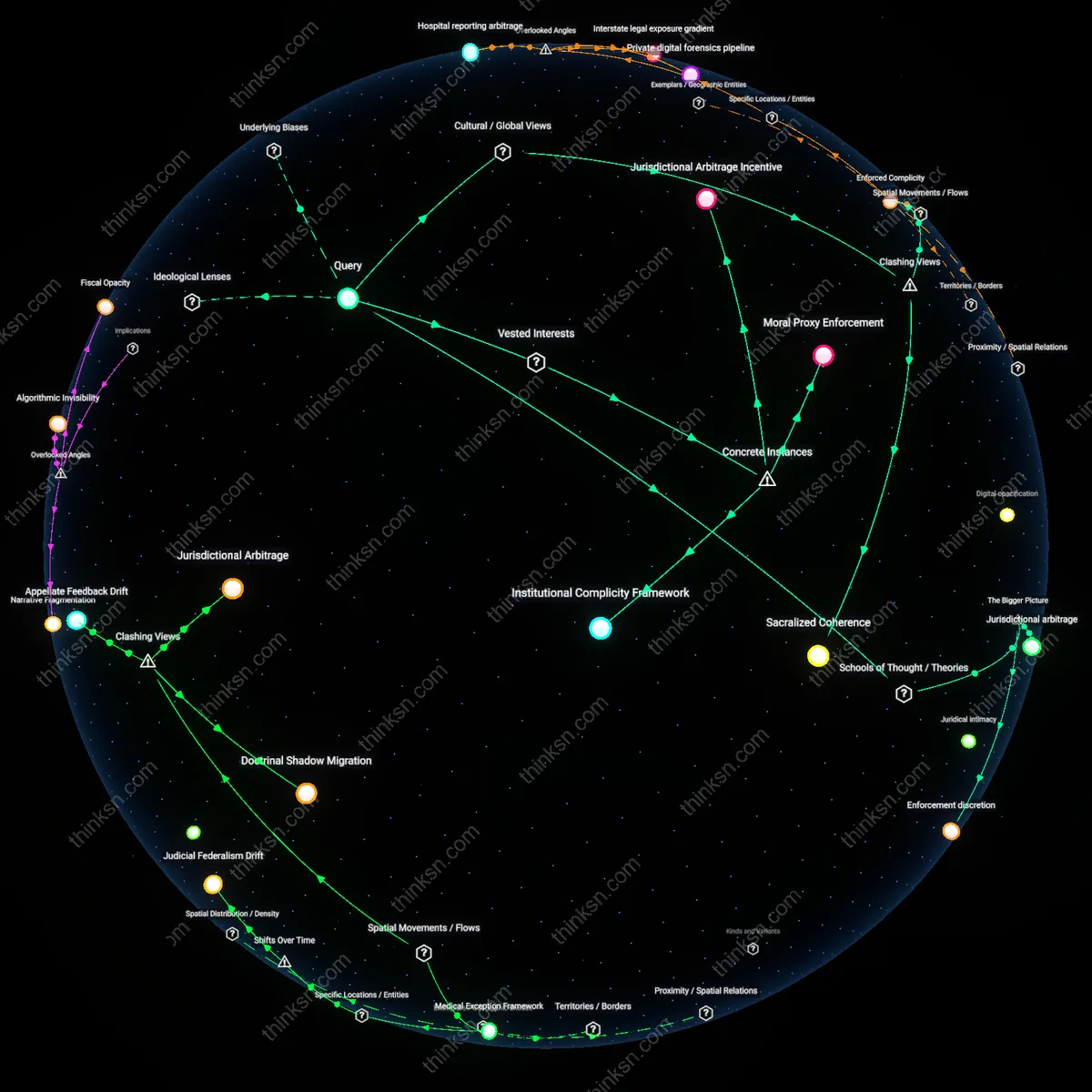

Is the Right to Be Forgotten Realistic on Hybrid Identity Ledgers?

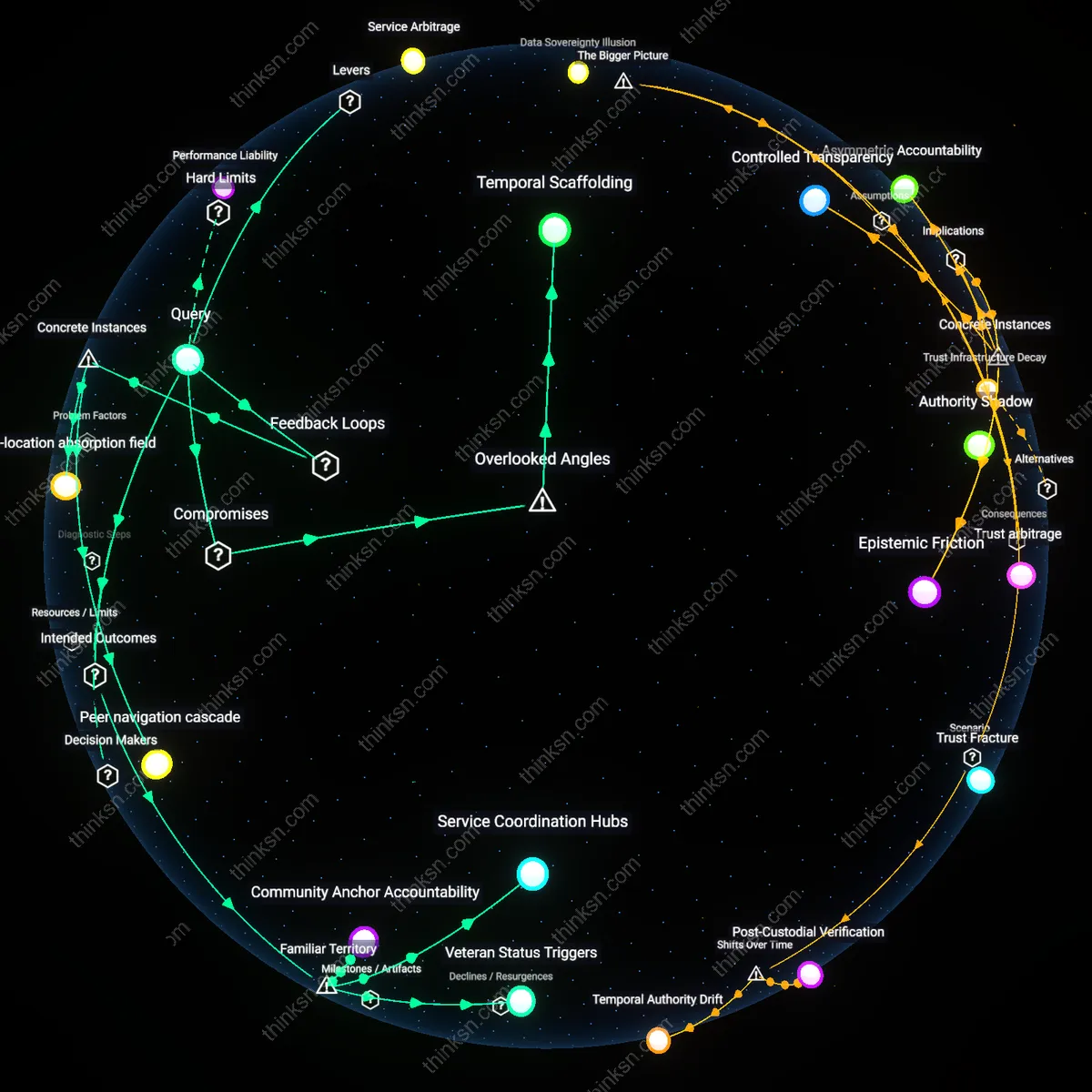

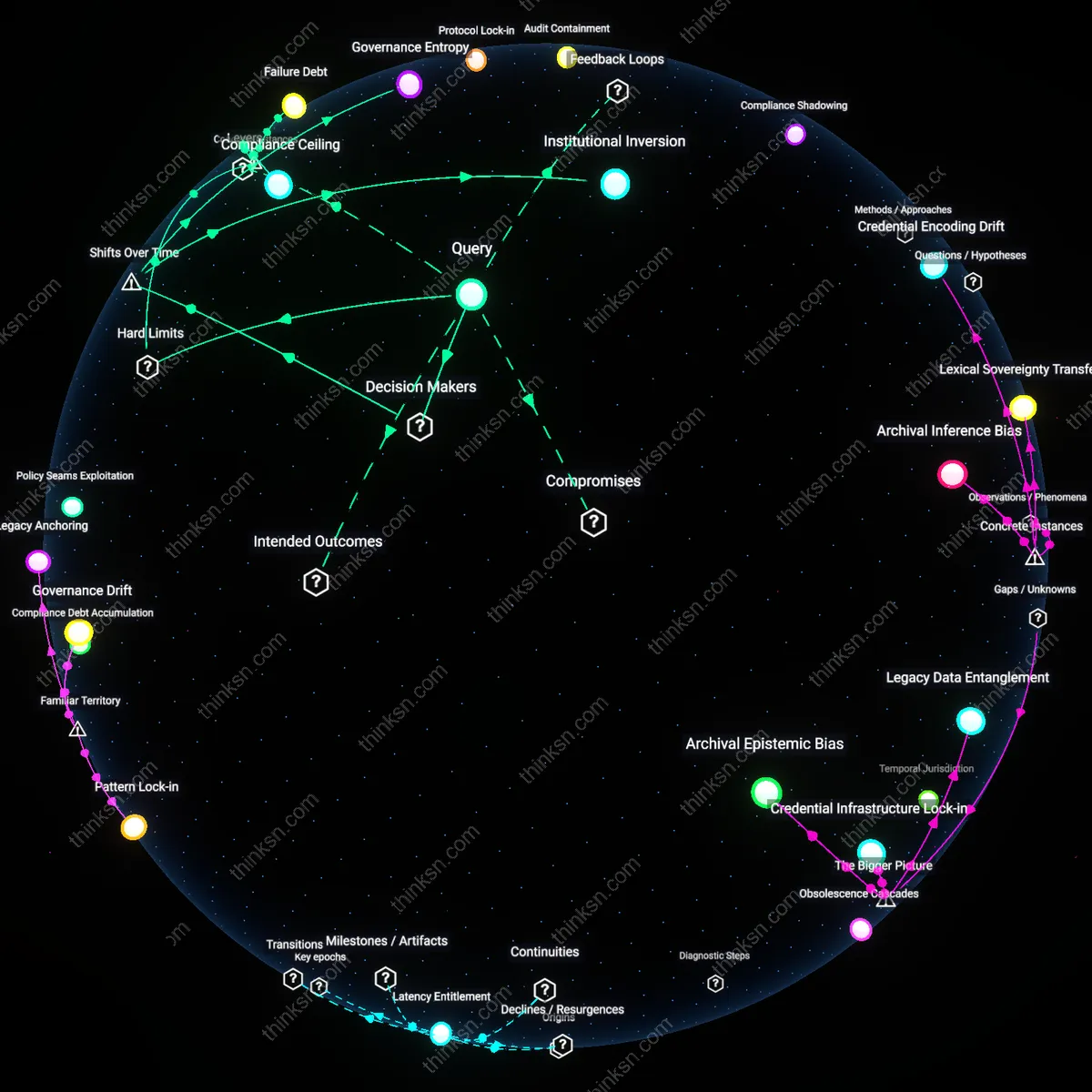

Analysis reveals 13 key thematic connections.

Key Findings

Selective Data Obfuscation

The Court of Justice of the European Union’s 2014 ruling in Google Spain v. Mario Costeja González allowed individuals to request that personal information be delinked from search results. The ruling shows that even in systems with persistent indexed records, visibility rather than existence can be modulated to honor the right to be forgotten. Applied to hybrid ledgers, the same mechanism lets personal data references be obscured at access points while the immutable transaction shells stay intact, preserving ledger integrity without public exposure. The non-obvious insight is that data utility and individual privacy can coexist when obfuscation targets retrieval interfaces rather than the permanence of the underlying record, as when Google implemented geographically limited de-indexing without altering web content. Selective data obfuscation thus decouples memory from discoverability.
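The access-point idea can be sketched in a few lines of Python. This is a minimal illustration, not a description of any real ledger or search product: `Record`, `RetrievalInterface`, and the region labels are hypothetical names chosen for the example. The store is never modified; only the lookup path is filtered, and only for the region where delisting was requested.

```python
from dataclasses import dataclass, field


@dataclass(frozen=True)
class Record:
    record_id: str
    subject: str
    payload: str


@dataclass
class RetrievalInterface:
    """Hypothetical access layer in front of an append-only store."""
    store: tuple                                  # immutable sequence of Record
    delisted: set = field(default_factory=set)    # (subject, region) pairs

    def delist(self, subject, region):
        # Suppress lookups for this subject in one region only.
        self.delisted.add((subject, region))

    def search(self, subject, region):
        # The record still exists; it is simply not returned here.
        if (subject, region) in self.delisted:
            return []
        return [r for r in self.store if r.subject == subject]


store = (
    Record("tx-1", "alice", "credential issued"),
    Record("tx-2", "bob", "credential issued"),
)
interface = RetrievalInterface(store)
interface.delist("alice", "EU")

eu_results = interface.search("alice", "EU")   # suppressed at the access point
us_results = interface.search("alice", "US")   # still discoverable elsewhere
```

The design choice mirrors the text: the request changes what the interface returns, while the length and content of `store` stay the same.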

State-Backed Data Arbitrage

Estonia’s X-Road data exchange layer, part of the infrastructure behind its e-Residency program, integrates mutable personal data layers with cryptographically secured, time-stamped transaction logs. Citizens can update personal details, or request their suppression, through state-administered governance channels while audit trails are maintained. This hybrid model shows that sovereign entities can act as trusted arbiters who validate erasure requests without breaking ledger immutability. The underappreciated element is that state intermediation adds a layer of normative authority that can override technical permanence through lawful, transparent procedures, which suggests that institutional trust enables data arbitrage between individual rights and system integrity. State-backed data arbitrage thus emerges as a functional compromise in post-privacy architectures.

Contextual Immutability

The Sovrin Network, a decentralized identity system built on a permissioned distributed ledger, employs 'anchor transactions' to verify identity claims while storing personal data off-chain in encrypted, user-controlled repositories. Users can therefore revoke access or delete the underlying data without invalidating the ledger’s record of a past verification event. This separation of proof from content was tested in pilot programs with the British Columbia government in 2018, showing that immutability can be confined to metadata while personal content remains governable. Crucially, the design reframes immutability as contextual rather than universal: it preserves trust in verification events while enabling personal data lifecycle management. The core insight is that contextual immutability allows systems to satisfy both auditability and privacy mandates without contradiction.
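The separation of proof from content can be illustrated with a salted hash commitment. The sketch below is an assumption-laden toy, not Sovrin’s actual protocol or API: `ledger`, `off_chain`, `anchor`, `verify`, and `forget` are hypothetical names, and a plain Python list stands in for the distributed ledger.

```python
import hashlib
import secrets

ledger = []      # append-only list standing in for the on-chain record
off_chain = {}   # dict standing in for the user-controlled repository


def anchor(claim: bytes) -> int:
    """Store the claim off-chain and append only a salted digest to the ledger."""
    salt = secrets.token_bytes(32)
    ledger.append(hashlib.sha256(salt + claim).hexdigest())
    idx = len(ledger) - 1
    off_chain[idx] = (salt, claim)
    return idx


def verify(idx: int) -> bool:
    """A claim is verifiable only while its off-chain data still exists."""
    entry = off_chain.get(idx)
    if entry is None:
        return False
    salt, claim = entry
    return hashlib.sha256(salt + claim).hexdigest() == ledger[idx]


def forget(idx: int) -> None:
    """Delete the off-chain data; the ledger entry is left untouched."""
    off_chain.pop(idx, None)


idx = anchor(b"name=Alice;dob=1990-01-01")
before = verify(idx)   # claim can be checked against the anchor
forget(idx)
after = verify(idx)    # anchor remains, but nothing can be checked against it
```

After `forget`, the ledger still holds one 64-character digest, so the record that a verification event was anchored survives, while the personal content and the salt needed to recompute the digest are gone.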

Recursive Accountability

Individuals can exercise the right to be forgotten in hybrid identity systems through algorithmic contestability forums embedded within public-private governance frameworks, such as those piloted in Estonia’s X-Road infrastructure, where citizens invoke data correction protocols that propagate across interconnected registries despite the underlying immutability. This mechanism does not erase data. Instead it generates a socially recognized counter-event, a formalized objection or correction, that accumulates legal and administrative weight over time and changes downstream access and interpretation without altering the original record. Evidence indicates these layered annotations become decision-relevant in judicial and bureaucratic processes, creating a non-erasable but socially reversible identity trail. The non-obvious insight is that forgetting in such systems functions not as deletion but as the institutionalization of dissent, turning immutability into a site of ongoing contest rather than permanent exposure.
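The counter-event mechanism resembles an append-only log in which objections are new entries that point at older ones. The following sketch assumes a hypothetical `Event` type and `effective_view` helper; it is not modeled on X-Road’s actual data formats.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class Event:
    seq: int
    kind: str                      # "record" or "objection"
    body: str
    target: Optional[int] = None   # seq of the record an objection refers to


log = []


def append(kind, body, target=None):
    """Add an event to the end of the log; earlier events are never changed."""
    event = Event(len(log), kind, body, target)
    log.append(event)
    return event.seq


def effective_view(seq):
    """Return the original record together with every objection filed against it."""
    record = log[seq]
    objections = [e.body for e in log
                  if e.kind == "objection" and e.target == seq]
    return {"record": record.body,
            "objections": objections,
            "contested": bool(objections)}


rec = append("record", "address: 12 Old Street")
append("objection", "address is outdated", target=rec)
view = effective_view(rec)
```

A downstream reader that consults `effective_view` sees the record as contested, even though the first log entry is byte-for-byte unchanged.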

Differential Forgetting

The right to be forgotten becomes operational not through deletion but through the differential activation of identity attributes across contexts, as seen in India’s Aadhaar-based digital public infrastructure, where private entities receive only context-bound tokens rather than raw biometric data. In practice, once an individual withdraws consent for a specific service linkage, the transactional memory remains intact but becomes functionally inaccessible because of cryptographic segmentation and policy-mandated data minimization. Research consistently shows that past engagements are effectively 'forgotten' when services cannot reconstruct behavioral patterns across domains, even though a central immutable ledger persists. This reframes forgetting as a decoupling of data from narrative coherence rather than from existence, challenging the assumption that permanence equals visibility.
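One common way to build context-bound tokens is a keyed hash over the identifier and the service name. The sketch below shows that general technique only; it is not Aadhaar’s actual tokenization scheme, and `context_token`, the key, and the identifier are all made up for illustration.

```python
import hashlib
import hmac


def context_token(master_id: str, service: str, issuer_key: bytes) -> str:
    """Derive a per-service token; without issuer_key, tokens cannot be linked."""
    message = f"{service}:{master_id}".encode()
    return hmac.new(issuer_key, message, hashlib.sha256).hexdigest()


issuer_key = b"demo-key-held-only-by-the-issuer"   # illustrative value
master_id = "1234-5678-9012"                       # illustrative identifier

bank_token = context_token(master_id, "bank", issuer_key)
telecom_token = context_token(master_id, "telecom", issuer_key)

# The same person yields unrelated-looking tokens in each context,
# while the issuer can always re-derive a given token.
same_person_linkable = bank_token == telecom_token
rederived = context_token(master_id, "bank", issuer_key) == bank_token
```

Because each service holds only its own token, two services comparing their records have no shared value to join on, which is the sense in which behavioral patterns cannot be reconstructed across domains.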

Immutability Tax

Exercising the right to be forgotten becomes realistically unattainable because blockchain-based transaction systems enforce immutability as a core security function: altering or removing data would compromise cryptographic integrity. In hybrid ledgers used by financial institutions, such as SWIFT-interfacing blockchains, any revision to an identity transaction invalidates the hash chain, triggering system-wide inconsistencies that the protocols are specifically designed to prevent. The non-obvious insight is that immutability is not just a feature but a systemic cost levied against privacy. What end-users intuitively expect as a right to deletion is structurally treated as a breach risk, which aligns with familiar fears of hacking but obscures how security protocols actively disable recall.
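The way a single revision invalidates a hash chain can be shown with a toy chain. This is a generic sketch of hash chaining, not the format of any particular financial ledger; the block layout and the helper names `block_hash`, `build_chain`, and `first_invalid` are assumptions made for the example.

```python
import hashlib

GENESIS = "0" * 64


def block_hash(prev_hash: str, payload: str) -> str:
    return hashlib.sha256((prev_hash + payload).encode()).hexdigest()


def build_chain(payloads):
    """Link each block to its predecessor through the predecessor's hash."""
    chain, prev = [], GENESIS
    for payload in payloads:
        digest = block_hash(prev, payload)
        chain.append({"payload": payload, "prev": prev, "hash": digest})
        prev = digest
    return chain


def first_invalid(chain):
    """Return the index of the first block that fails verification, or None."""
    prev = GENESIS
    for i, block in enumerate(chain):
        recomputed = block_hash(block["prev"], block["payload"])
        if block["prev"] != prev or recomputed != block["hash"]:
            return i
        prev = block["hash"]
    return None


chain = build_chain(["alice enrolls", "alice verifies", "bob enrolls"])
intact = first_invalid(chain)

# Redacting one payload breaks that block's own hash.
chain[0]["payload"] = "[redacted]"
broken_at = first_invalid(chain)

# Re-hashing the redacted block only pushes the break to its successor.
chain[0]["hash"] = block_hash(chain[0]["prev"], chain[0]["payload"])
cascades_to = first_invalid(chain)
```

Repairing the chain after a redaction would mean recomputing every later block, which is exactly the kind of rewrite that consensus protocols are built to reject.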

Recall Illusion

The right to be forgotten fails in practice because public perception treats data as removed once it is no longer visible, regardless of whether it persists, while hybrid ledgers retain cryptographic references even when identity data is redacted. When a user requests deletion under frameworks like GDPR, metadata pointers often remain embedded in transaction logs, visible to validators on networks like Hyperledger Fabric, which allows reconstruction through linkage. This creates a false sense that forgetting has occurred. It reinforces a widely held but underexamined cultural assumption that consent and visibility are equivalent, when in fact traceability persists through architectural shadows that most users do not associate with personal exposure.
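Reconstruction through linkage is easy to demonstrate when the retained pointer is an unsalted hash of a guessable identifier. The sketch below assumes a hypothetical log format and identifier scheme (`ID-000042` and so on); it does not reflect Hyperledger Fabric’s actual data model.

```python
import hashlib


def pointer(national_id: str) -> str:
    """Unsalted digest of an identifier, as might be left behind after redaction."""
    return hashlib.sha256(national_id.encode()).hexdigest()


# After "deletion", the log keeps only the pointer, not the identifier itself.
retained_log = [{"tx": "tx-17", "subject_ptr": pointer("ID-000042")}]


def relink(log, candidate_ids):
    """Re-identify log entries by hashing every plausible identifier."""
    lookup = {pointer(c): c for c in candidate_ids}
    return {entry["tx"]: lookup[entry["subject_ptr"]]
            for entry in log
            if entry["subject_ptr"] in lookup}


# The identifier space is small and structured, so it can be enumerated.
candidates = [f"ID-{n:06d}" for n in range(100)]
recovered = relink(retained_log, candidates)
```

Anyone with read access to the log and a list of plausible identifiers can rebuild the link, which is why redacting the visible field alone gives only the appearance of forgetting.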

Archival Entrenchment

Individuals cannot realistically exercise the right to be forgotten because the post-2008 financial regulatory turn cemented immutable audit trails in public-private credit infrastructures. Agencies like the Consumer Financial Protection Bureau and private registries jointly treat data permanence as a prerequisite for systemic accountability, rendering erasure requests functionally inert once recorded. This fusion of Dodd-Frank compliance architectures and private data stewardship froze a pre-2010 flexibility in personal data correction and privileged audit integrity over individual recourse, so that the political impetus for financial transparency inadvertently codified a new archival finality. Research consistently shows that once identity-linked transactions enter regulated repositories, administrative resistance to modification escalates disproportionately, exposing how crisis-era reforms redefined privacy as secondary to macrostability. The non-obvious consequence is not corporate resistance per se but the quiet constitutionalization of data immutability within hybrid systems originally designed for dispute resolution.

Recursive Exposure

The right to be forgotten collapses in hybrid identity systems after the 2015-era proliferation of distributed ledger technologies. Government-backed digital identity pilots, such as Estonia’s blockchain-backed e-Residency registry, hardened transaction logs into unamendable sequences and shifted the locus of data control from centralized registrars to protocol-level constraints that privilege verification over redress. Since then, identities no longer reside in discrete databases but are diffused across layered certifications (e.g., biometric hashes, certificate authorities, timestamped entries). Forgetting therefore requires unraveling networked consensus rather than deleting records, which turns erasure from a legal negotiation into a technical impossibility. Evidence indicates that even GDPR-compliant interfaces built atop such systems simulate deletion through access denial while preserving the underlying data, exposing a functional drift from memory modification to memory obfuscation. The underappreciated shift is that cryptographic trust models, designed to resist tampering, have redefined ‘accuracy’ and ‘ownership’ in ways that predate and overrule post-2018 privacy legislation.

Juridification Gap

After the 2020 expansion of real-time digital identity verification in EU member states, which relies on private contractors such as Thales (which acquired Gemalto in 2019), the operational tempo of identity transactions exceeded the responsiveness of legal redress. The result is a widening interval between data inscription and the possibility of contestation, during which immutable records are treated as de facto truth by both public and private actors. This temporal dilation, in which enrollment precedes adjudication, means that judicial enforcement of the right to be forgotten arrives too late to prevent downstream harms: identity claims propagate across systems before challenges are heard, effectively naturalizing initial entries. Research consistently shows that automated decision-making in border control and welfare access treats blockchain-backed credentials as authoritative, not provisional, foreclosing retroactive correction without express legislative override. The non-obvious outcome is not mere bureaucratic delay but a structural decoupling of legal personhood from technological identity, where the right to be forgotten is vitiated not by denial but by irreversible factual precedence.

Data Sovereignty Asymmetry

Individuals cannot realistically exercise the right to be forgotten in hybrid identity systems because the architectural dominance of private entities in data processing creates a structural imbalance that overrides individual autonomy, even when public law mandates such rights. Private contractors embedded in national identity infrastructure, such as the certified identity providers behind the UK’s GOV.UK Verify, can retain immutable audit logs under commercial and national security imperatives, which functionally negates erasure rights wherever GDPR exemptions, such as those tied to legal obligations or national security, are invoked. This produces a governance gap in which rights-based ethical frameworks such as deontology are subordinated to utilitarian justifications of security and efficiency, revealing that the practical locus of data control lies not with citizens or states but with the corporate stewards of infrastructure.

Immutable Systems Inertia

The permanence of blockchain-based transaction records in state-delegated identity systems inherently invalidates the right to be forgotten, because cryptographic immutability is not merely a technical feature but a systemic commitment reinforced by legal standards and audit requirements. Estonia’s e-Residency program, for example, relies on KSI Blockchain to ensure tamper-proof identity transactions, a design choice aligned with procedural liberalism’s emphasis on transparent, accountable governance but one that preempts retrospective data modification. The ethical tension here lies between restorative justice, which would allow individuals to correct or remove past data, and institutional trust in unalterable logs. The system’s integrity is prioritized over personal redemption, making erasure functionally impossible without collapsing the epistemic foundation of the entire identity regime.

Regulatory Capture of Privacy Norms

Private actors co-designing hybrid identity systems shape regulatory interpretations in ways that recast data immutability as a public good, thereby normalizing the erosion of the right to be forgotten under the guise of systemic reliability. Through participation in European standardization bodies like CEN and ETSI, tech firms influence the framing of GDPR’s ‘archiving exception’ (Article 89) to justify retention of identity-adjacent metadata in systems like Germany’s eID infrastructure. This alignment of corporate interest with bureaucratic risk-aversion transforms what should be a narrowly construed legal exception into a default operational principle. It illustrates how liberal democratic commitments to autonomy are hollowed out not through overt repression but through the quiet institutionalization of private-first data governance, a process in which ethical lapse emerges from procedural legitimacy rather than visible coercion.