Workplace Health Apps: Wellness Boon or Privacy Trap?

Analysis reveals 6 key thematic connections.

Key Findings

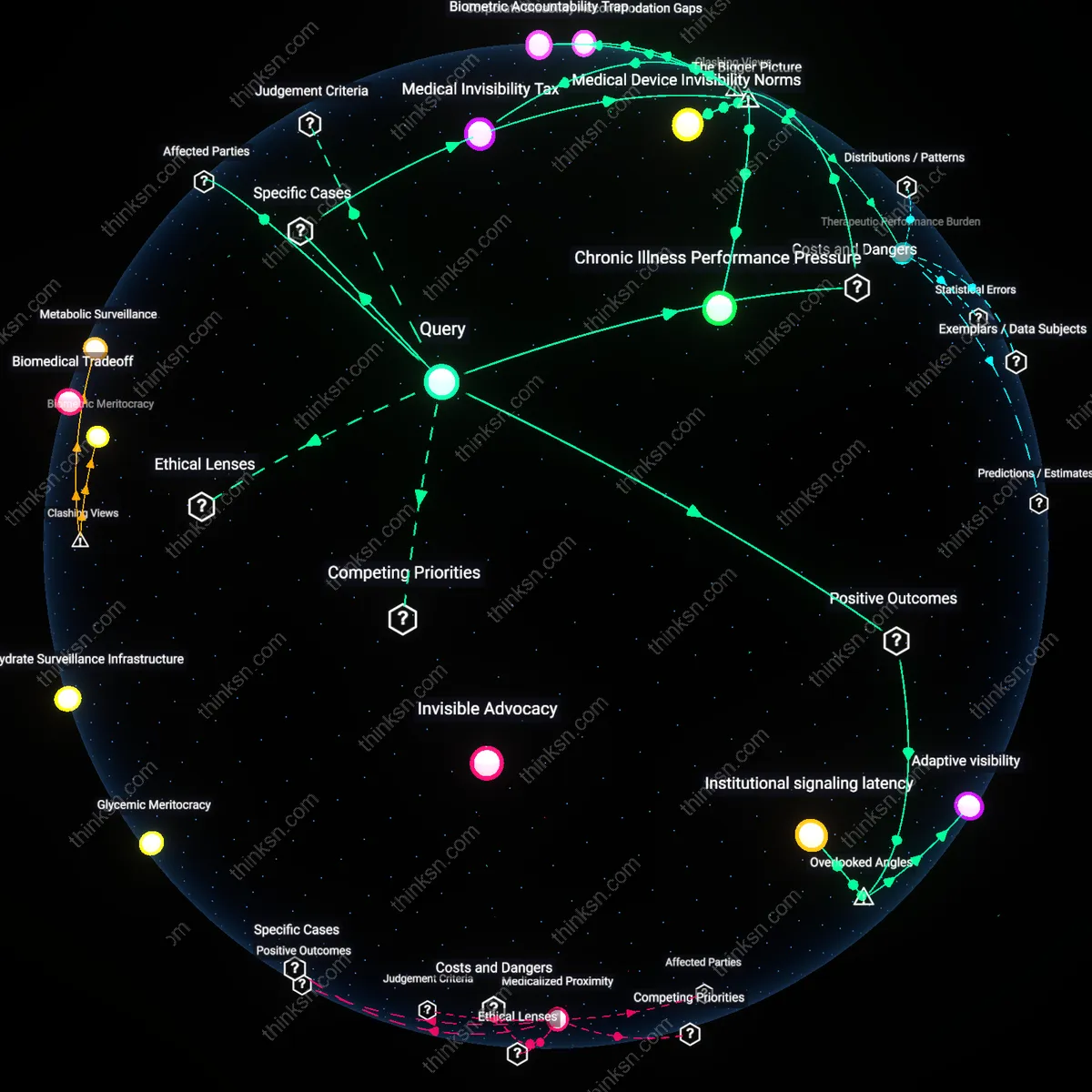

Surveillance Coercion

Yes, wellness incentives serve to justify dangerous power imbalances: employees cannot meaningfully consent to health monitoring when participation affects their insurance premiums or job security, so what is presented as personal choice is in practice a coercive environment. Employers use financial and employment-based pressure to push adoption of health-monitoring apps, making refusal functionally punitive despite nominal opt-out policies. The mechanism operates through workplace policy and benefit design. HR departments align wellness programs with cost-shifting strategies, normalizing continuous biometric surveillance under the banner of corporate health. What’s underappreciated is that this is not passive data collection but an active system of behavioral conditioning: workers learn to self-censor or perform wellness to avoid penalties, replicating surveillance logic in their everyday routines.

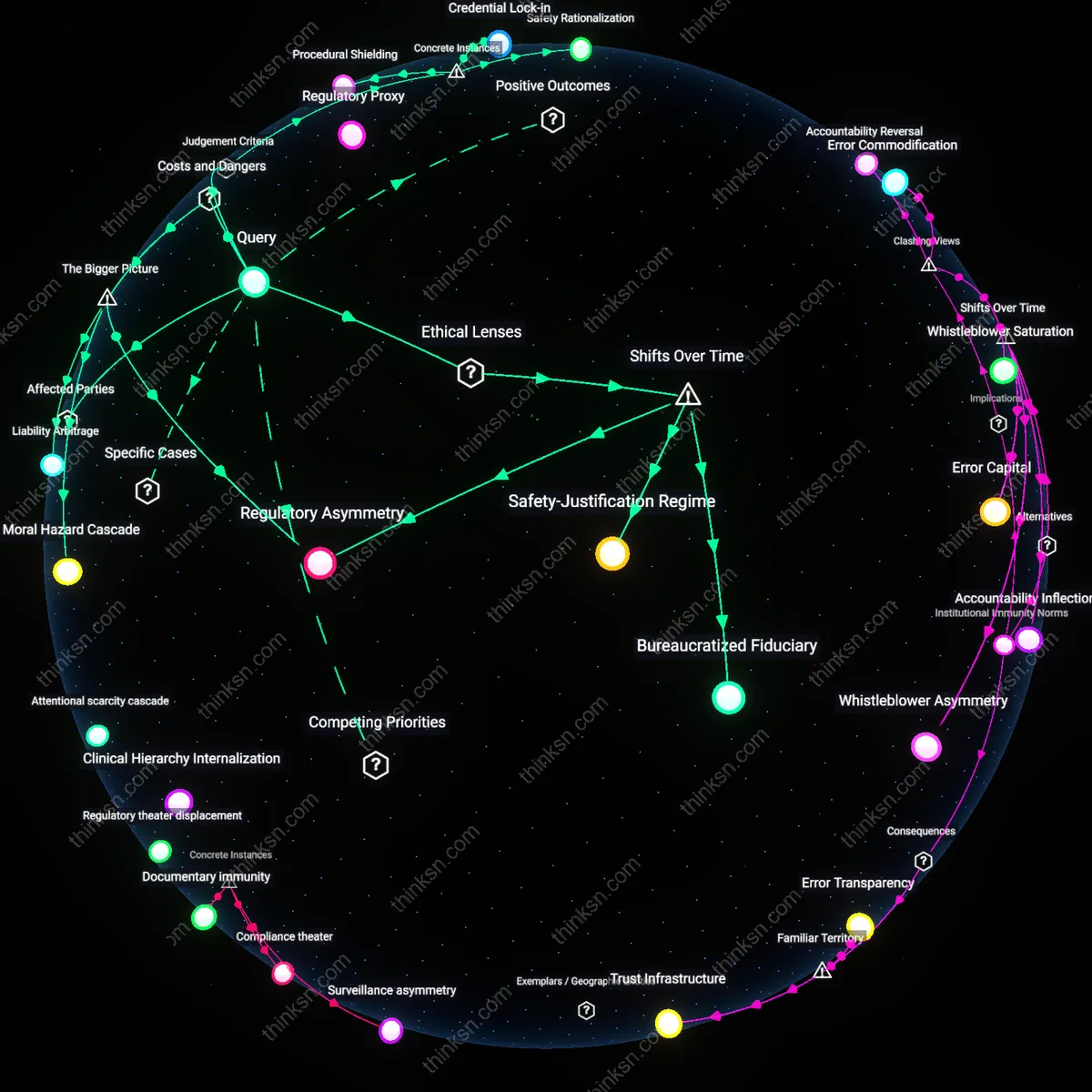

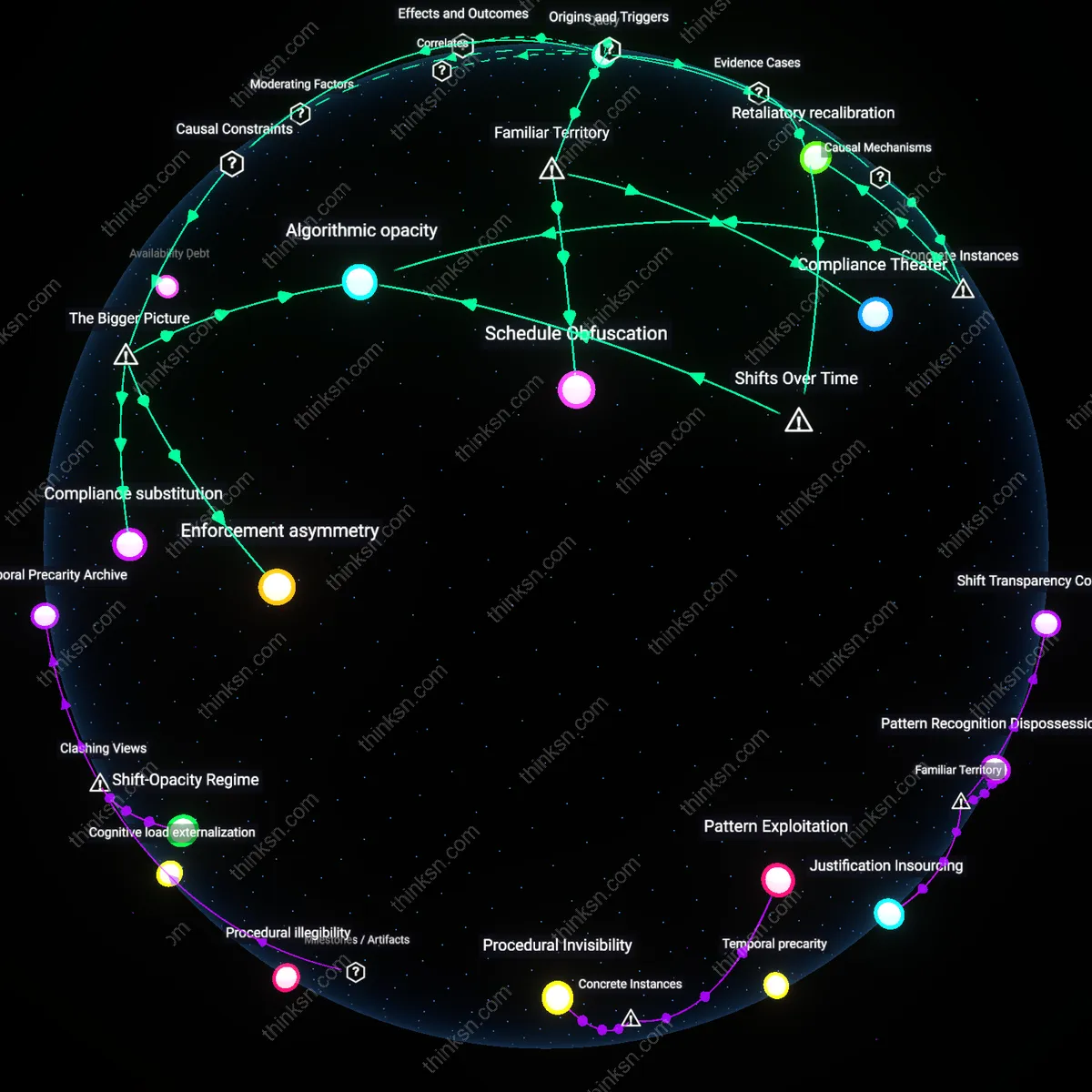

Data Exploitation Pipeline

Yes, wellness incentives serve to justify dangerous power imbalances: employer-mandated health apps feed personal biometric and behavioral data into third-party systems controlled by insurance brokers, wellness vendors, and data aggregators, with weak auditing or accountability. These apps, such as those offered by Fitbit or Virgin Pulse, transmit sensitive health data to cloud servers where it is repurposed for risk profiling. Even anonymized data supports predictive modeling that can influence how workers are categorized. The system runs on contractual partnerships between employers and health-technology firms, and vague terms of service let data flows exceed the original scope of consent. The underappreciated risk is that this creates a de facto health surveillance infrastructure largely outside HIPAA protections, because many wellness vendors are neither covered entities nor business associates under that law. Within it, data can later be mined or merged with other corporate datasets for operational decisions such as staffing or promotions.

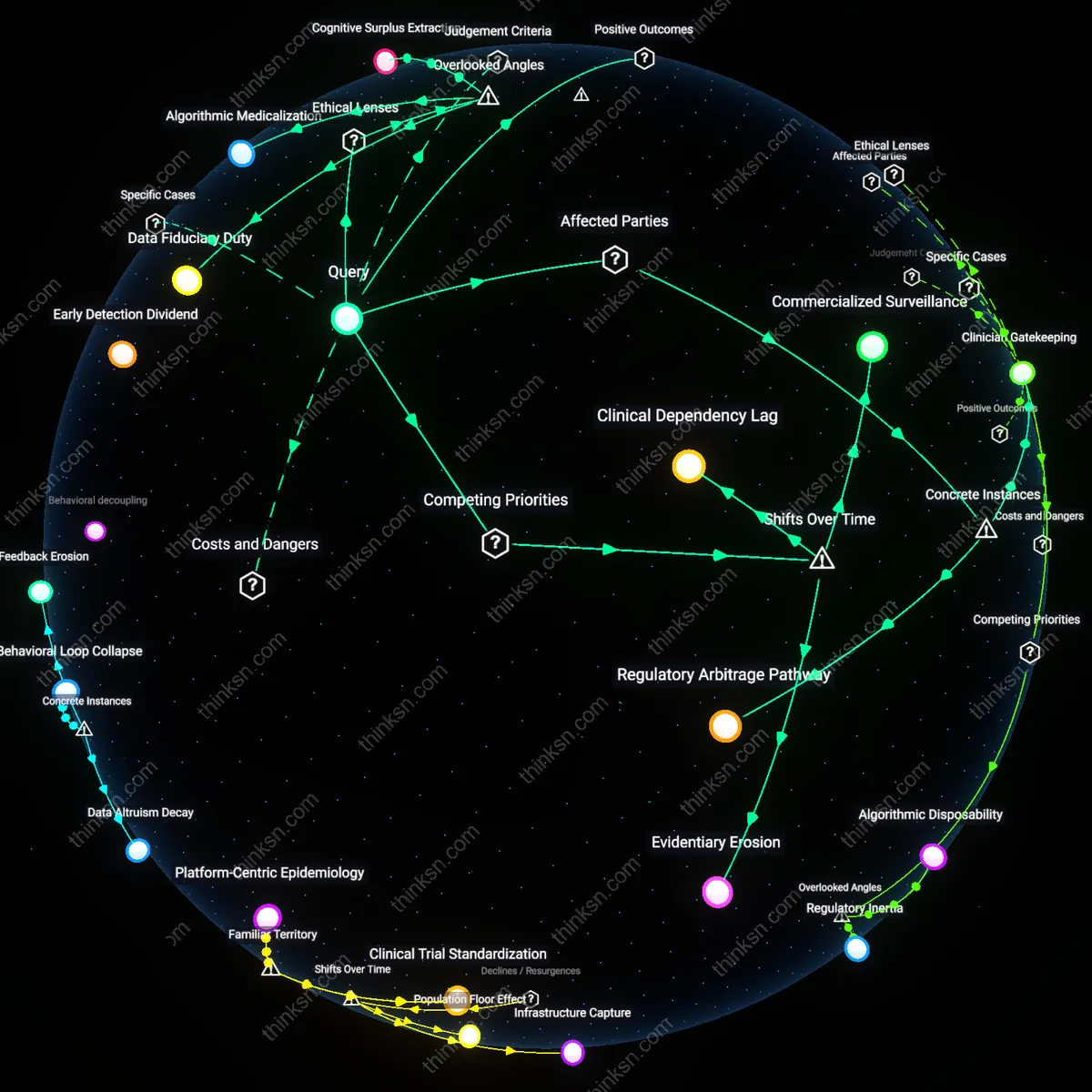

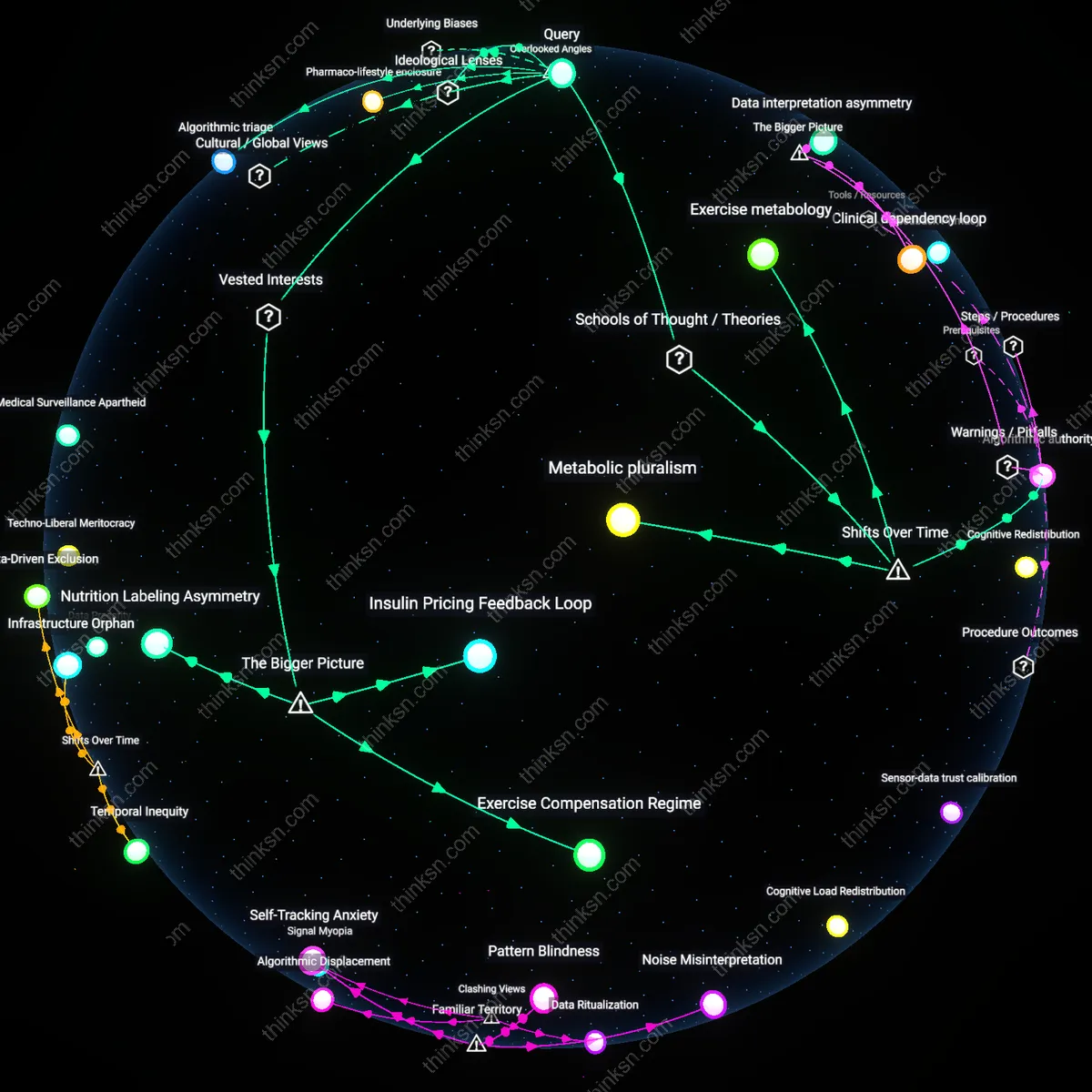

Inequity Enforcement

Yes, wellness incentives serve to justify dangerous power imbalances: they systematically disadvantage workers with chronic conditions, disabilities, or limited access to healthcare, penalizing them for health outcomes beyond their control. When metrics such as step count, sleep duration, or weight are tied to incentives, employees with mobility impairments or irregular work hours cannot meet the benchmarks even while actively managing their health. This operates through standardized app algorithms that treat deviation from normative health patterns as noncompliance, and those judgments feed into performance-adjacent evaluations. The underappreciated reality is that these apps don’t measure health. They measure conformity to able-bodied, schedule-stable norms, turning wellness programs into tools that enforce biological privilege and deepen structural inequities under the guise of neutrality.

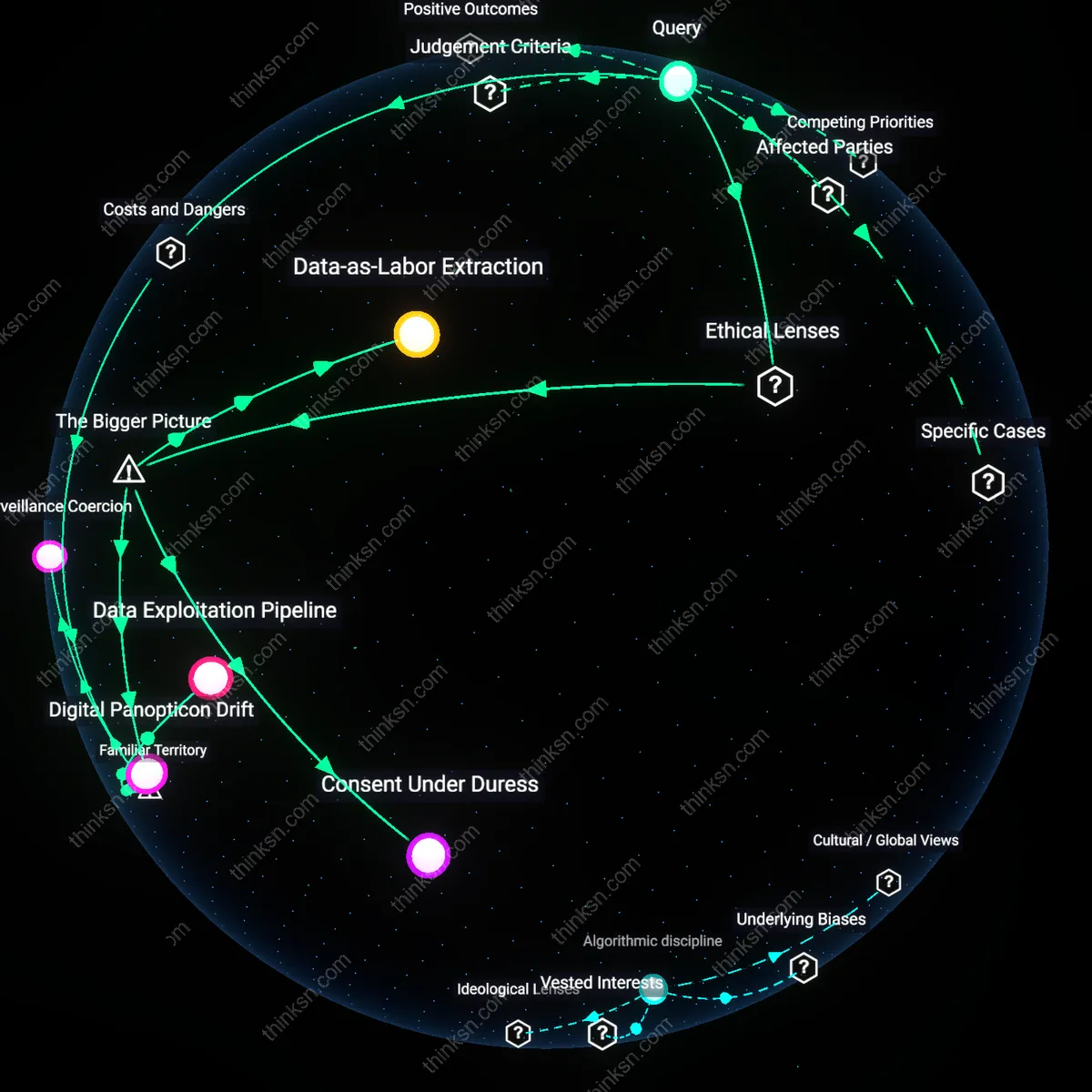

Data-as-Labor Extraction

Yes, wellness incentives normalize data-as-labor extraction: corporate health programs repurpose employee biometrics as productive inputs for actuarial risk modeling, a process enabled by labor’s diminished bargaining power under neoliberal employment relations. Employers, acting as both data collectors and workplace authorities, exploit structural asymmetries to recast public health goals as corporate efficiency tools, transforming voluntary wellness into a covert production function. Framing participation as personal responsibility systematically obscures this shift. It masks how aggregated biometric data lowers insurance costs not through individual care but through actuarial stratification at scale.

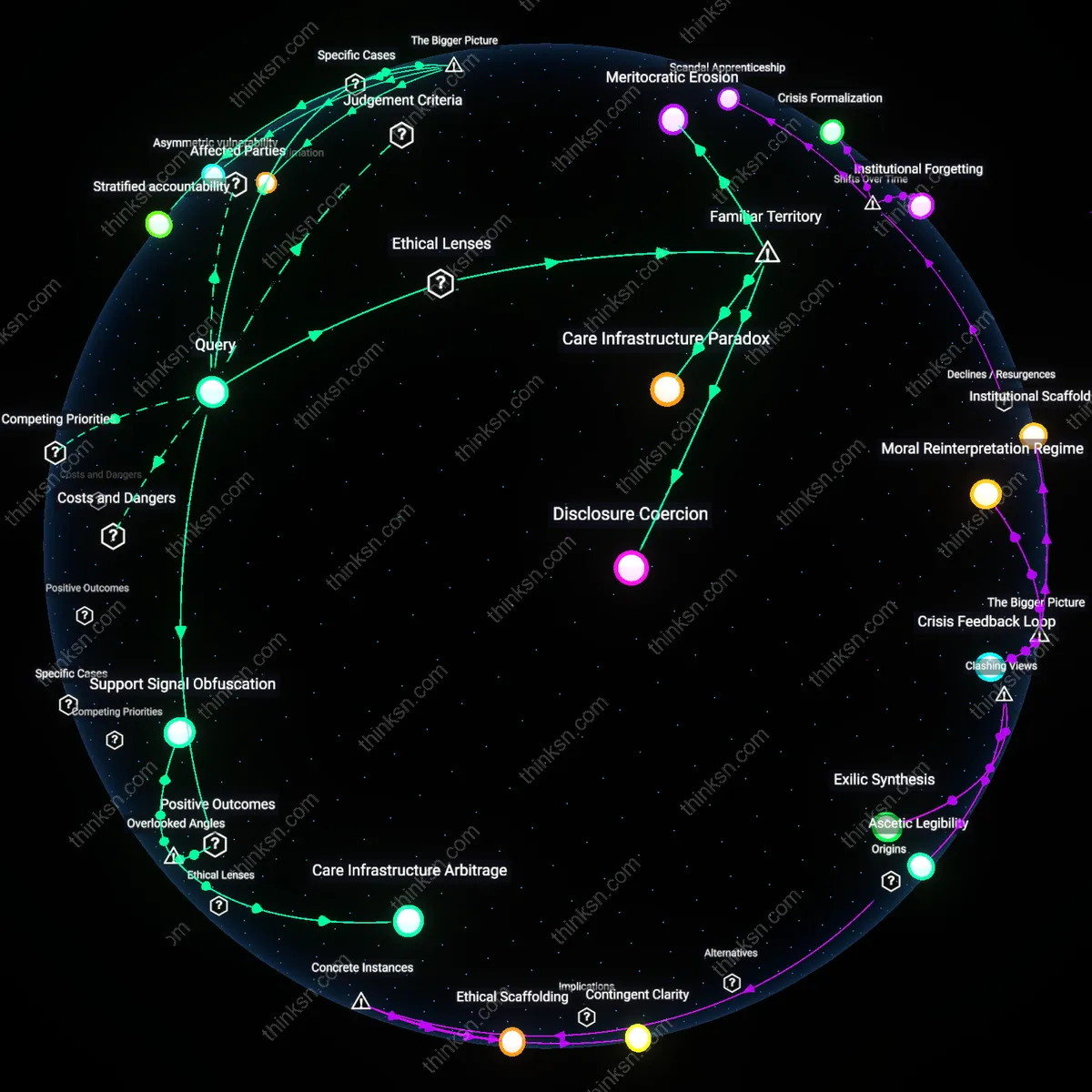

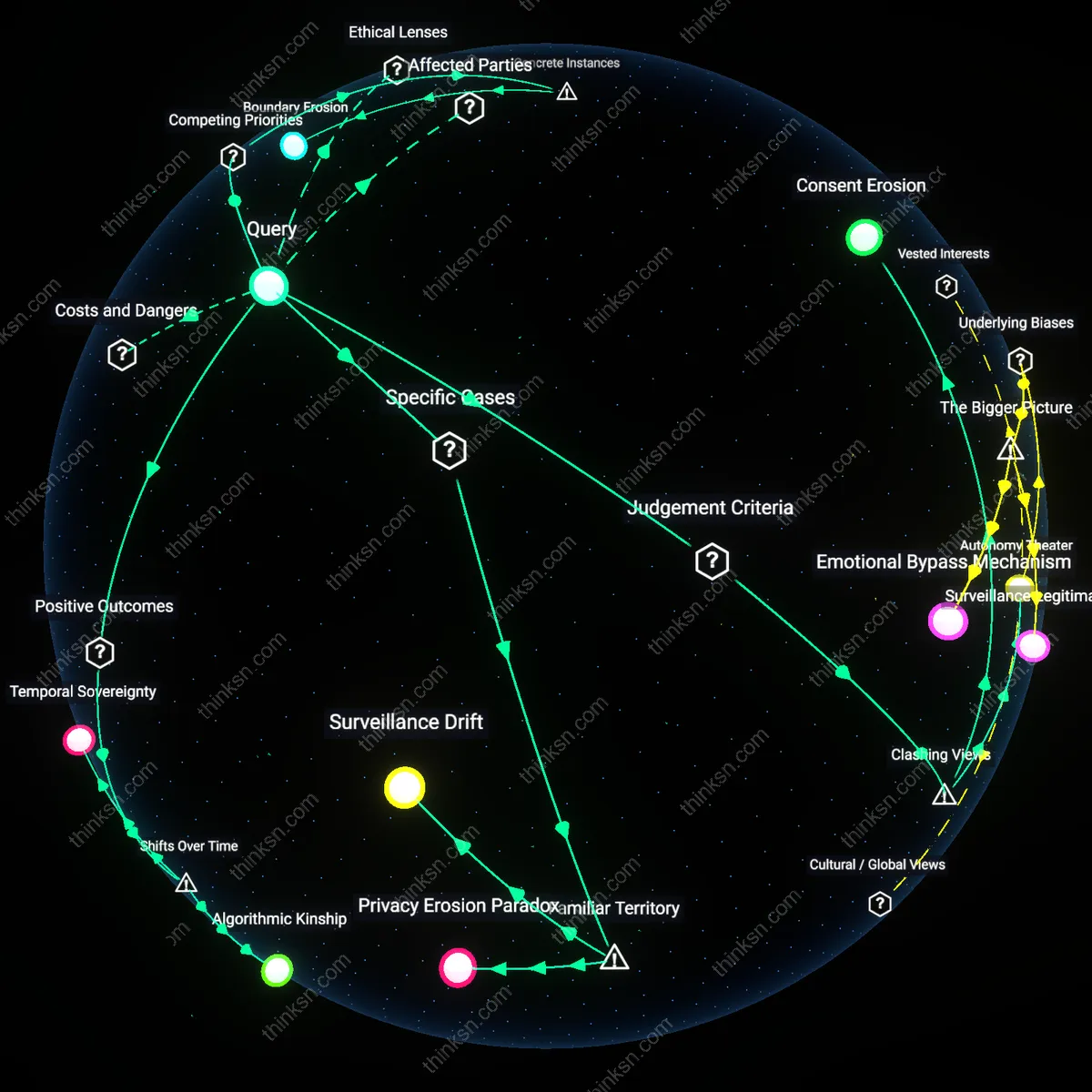

Consent Under Duress

No, wellness incentives cannot justify the power imbalance, because effectively mandatory health-monitoring apps produce consent under duress. That condition is legitimized by HIPAA exceptions and by ERISA-governed self-insured plans, which together permit employers to access aggregated, but potentially re-identifiable, health data. Because the U.S. legal framework permits financial penalties for non-participation in wellness programs, ostensibly voluntary engagement becomes a coercive economic calculation, particularly for low-wage workers. This shows how legal permissibility, rather than ethical justification, becomes the operative standard. It lets employers shift health risk management onto employees while retaining unilateral control over data governance.

Digital Panopticon Drift

No, the power imbalance cannot be justified, because it enables digital panopticon drift: continuous health monitoring normalizes anticipatory compliance through algorithmic surveillance embedded in workplace wellness ecosystems. Once employers deploy apps that track sleep, activity, or stress, they contribute to a broader sociotechnical trajectory in which health becomes a metricized performance domain. That domain is policed not by overt discipline but by ambient feedback loops tied to insurance premiums or promotion eligibility. Public-private partnerships that subsidize corporate wellness platforms through CDC or NIH innovation grants accelerate this dynamic. They unintentionally validate surveillance as preventive care and thereby institutionalize behavioral control under the banner of health optimization.