Is Human Oversight Futile in High-Speed AI?

This analysis identifies three key thematic connections.

Key Findings

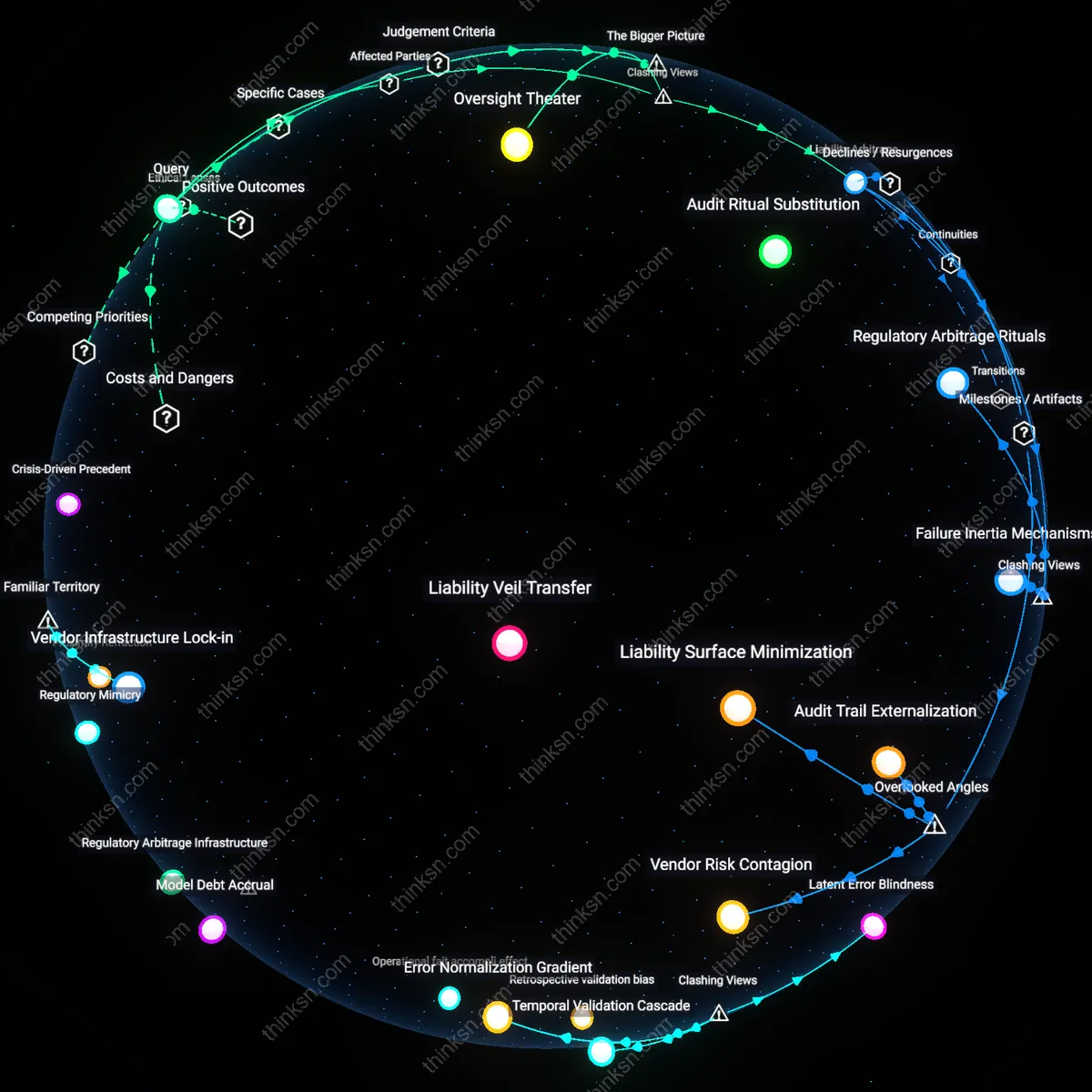

Oversight Theater

Human-in-the-loop requirements function primarily as ceremonial compliance: designated personnel approve AI decisions after the fact, without any meaningful capacity to intervene. In domains such as credit scoring and hiring, regulatory diligence conditions auditors and managers to sign off on algorithmic outcomes they lack the time or technical access to contest, turning oversight into a ritual affirmation of automation. The primary role of these human actors is therefore not to alter decisions but to absorb accountability, a theater of control that protects institutions more than individuals.

Temporal Asymmetry

High-risk AI systems in emergency response and energy grid management operate on millisecond cycles, which makes human review inherently retrospective rather than supervisory. In decision loops driven by predictive maintenance or real-time threat detection, judgment is compressed into after-action technical reports, casting human operators as forensic justifiers rather than active arbiters. The result is a structural rift: regulatory timelines assume humans can deliberate at the same pace as the system, while operational reality pushes them out of the decision loop.

Liability Arbitrage

Corporate risk managers exploit the ambiguity of 'meaningful human consideration' to distribute legal exposure across ranks, assigning low-level staff to rubber-stamp AI outputs while insulating executive leadership. Training programs at banks and medical diagnostics providers emphasize procedural checklists over critical engagement, so oversight remains formally present but substantively inert. This shows how institutions weaponize human roles to absorb blame while preserving automated efficiency, reframing accountability as a transferable burden.

Deeper Analysis

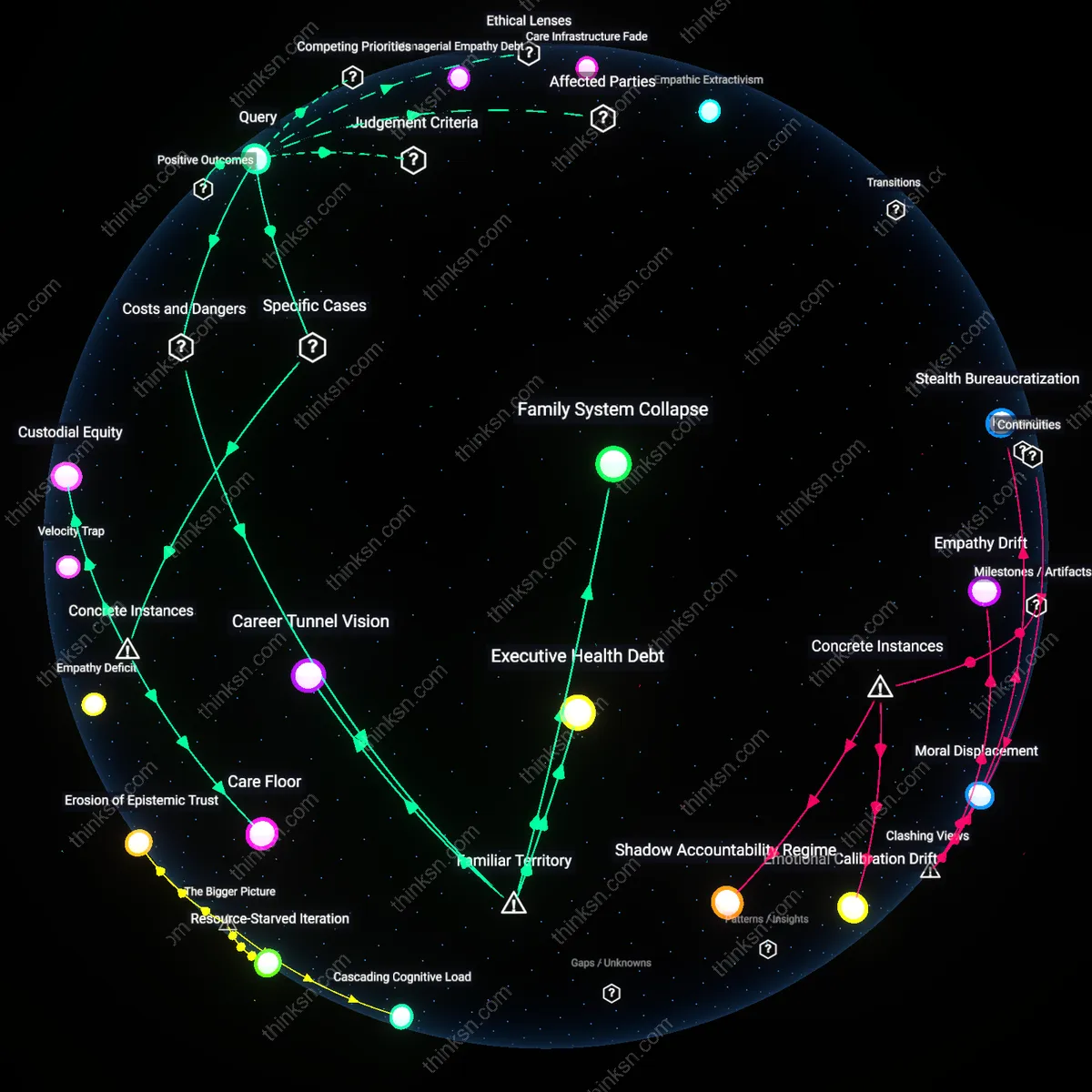

Where are humans actually positioned in the chain of decisions when AI systems respond to emergencies or manage power grids in real time?

Operational Oversight

Humans are positioned as remote supervisors during AI-managed grid emergencies, stepping in only after automated systems flag anomalies beyond predefined thresholds. Control room staff at grid operators such as PJM and ERCOT monitor dashboards while AI directs load balancing and fault responses in real time, but human intervention is structured to follow, not precede, system alerts. The popular notion of 'human control' assumes active steering, whereas in practice humans serve as fallback validators whose role is reactive rather than directive.

Procedural Backstop

Humans are positioned as procedural validators who ratify AI decisions after execution during real-time power grid contingencies. In systems such as the California ISO (CAISO), automated response algorithms isolate faults and reroute power within milliseconds, while engineers approve those actions only after the fact, during audit cycles. This contradicts the familiar image of split-second human decision-making and reveals a latent governance function: humans lend legitimacy to actions they cannot practically influence in real time.

Design-Time Authority

Humans are positioned as architects of AI decision logic long before emergencies occur, encoding response priorities during system design at firms such as GE Grid Solutions or Siemens Energy. Engineers and utility regulators define constraints, risk thresholds, and recovery protocols months or years in advance, embedding them in control algorithms that later act autonomously. Public discourse assumes an ongoing human presence during crises, but the decisive influence is exercised invisibly during development, and those design choices are effectively locked in once systems go live.

How did the practice of assigning low-level staff to approve AI decisions spread from banks to other high-risk sectors over time?

Regulatory Arbitrage Pathway

The U.S. Office of the Comptroller of the Currency’s 2018 relaxation of supervisory scrutiny for automated loan approvals enabled mid-level credit officers at JPMorgan Chase to validate AI-driven underwriting models without algorithmic literacy. This set a precedent in which regulatory compliance was satisfied through delegation rather than oversight, suggesting that low-level AI authorization spread not out of technological necessity but through the exploitation of flexible regulatory thresholds.

Liability Veil Transfer

In 2020, the German automotive supplier Bosch allowed production supervisors to approve AI-based safety assessments in automated brake testing after redefining final validation as a 'process check' rather than a technical judgment. Redescribing the decision as administrative shifted responsibility from engineers to operations staff, replicating the banking model in manufacturing through a semantic reclassification of risk.

Audit Ritual Substitution

Following the 2019 NHSX rollout of AI triage tools in A&E departments across London, clinical managers, not clinicians, were assigned to sign off on algorithmic urgency scores because the certification process prioritized documentation over expertise. This shows how the migration of approval practices into healthcare relied on replacing substantive evaluation with performative compliance routines that mirrored banking audit cultures.

Regulatory Arbitrage Rituals

The practice of having low-level staff approve AI decisions originated not from risk management needs but from banks exploiting regulatory loopholes, reclassifying oversight roles to meet compliance thresholds without allocating real authority. Mid-level compliance officers in the London and New York banking hubs began signing off on algorithmic credit scoring systems they neither designed nor understood, creating a paper trail of accountability that satisfied auditors while preserving speed and cost efficiency. This ritualized compliance bypassed genuine scrutiny, and its portability made it attractive to the insurance, aviation, and medical device sectors, which faced similar regulatory inspections but were unwilling to slow deployment. The non-obvious insight is that the practice spread not because it worked but because it performed accountability well enough to pass external review, showing how regulatory design can incentivize theatrical rather than functional governance.

Failure Inertia Mechanisms

The practice persisted and spread not because it was effective or efficient, but because early AI failures in mortgage processing were small, distributed, and invisible, creating no acute crisis to prompt reform. Frontline processors in U.S. bank branches silently absorbed the work of correcting cascading errors, producing a baseline of chronic operational strain that later sectors such as clinical diagnostics and autonomous vehicle safety inherited as 'normal procedure.' When German rail operators adopted the same structure for monitoring predictive maintenance algorithms, they did so unaware of its banking origin, mistaking entrenched dysfunction for best practice. The non-obvious revelation is that low-stakes systemic failure, not success, enabled diffusion, demonstrating that organizational learning often follows the path of least resistance rather than the most rational one.

Audit Trail Externalization

The replication of low-level AI approval protocols across high-risk sectors originated in the standardization of regulatory audit logs following the 2016 ECB stress test templates, wherein mid-tier compliance officers were tasked with signing off on algorithmic credit decisions not to enforce expertise but to generate traceable human nodes in automated workflows. This mechanism formalized staff not as decision-makers but as bureaucratic waypoints, enabling other industries like medical diagnostics and aviation logistics to adopt identical logging frameworks during FDA and ICAO certification revisions after 2020. The overlooked dynamic is that human approval became less about oversight than about producing externalizable records for algorithmic accountability, which matters because it reframes regulatory compliance as a documentation infrastructure rather than a safeguard.

Vendor Risk Contagion

Third-party AI vendors such as FICO and Palantir embedded low-level staff approval loops into off-the-shelf decision systems sold to banks starting in 2014, and this design pattern spread to insurance, energy, and corrections systems through shared software architecture contracts with firms like IBM and Accenture. The mechanism was not regulatory mimicry but modular code reuse, where a compliance feature built for Dodd-Frank reporting was ported unchanged into healthcare fraud detection engines despite divergent risk profiles. This dimension is rarely acknowledged because sectoral analyses treat adoption as policy-driven, when in fact a hidden dependency on common vendor platforms propagated the practice beneath institutional radar, transforming due diligence into a technical debt vector.

Liability Surface Minimization

Following the 2018 Lloyd’s of London syndicate decision to price AI liability policies based on human sign-off frequency, insurers incentivized the proliferation of low-level approvers across the energy, pharma, and rail sectors, not as a control mechanism but as a risk segmentation tactic that reduced premium costs per deployment. Actuarial calculations treated documented human involvement as a proxy for operational caution, irrespective of actual staff competence or autonomy, thereby institutionalizing perfunctory endorsements to satisfy underwriting models. This overlooked financial incentive suggests that the expansion of AI oversight rituals was driven less by safety than by insurance arbitrage, challenging the dominant narrative of responsibility governance.

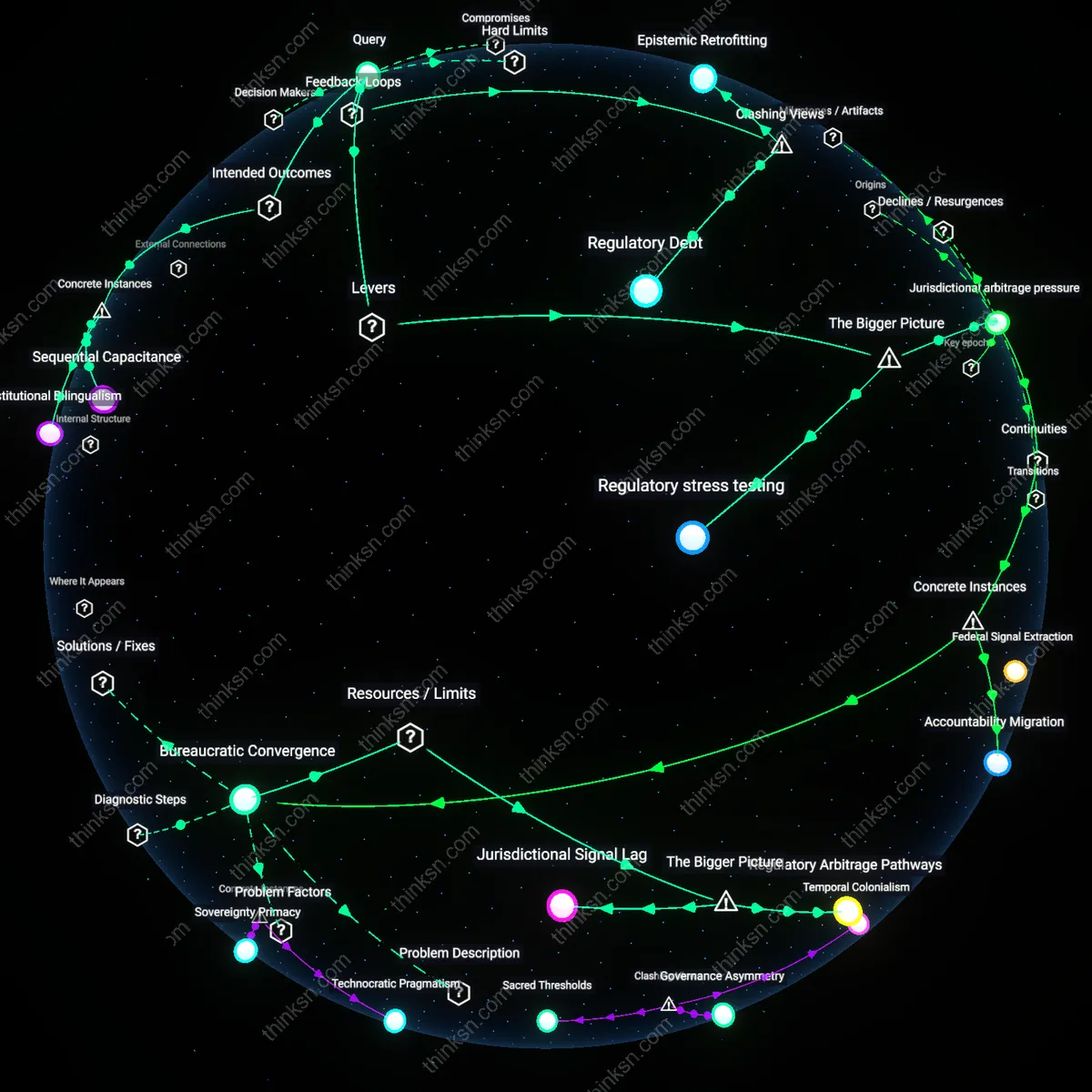

When humans only sign off on AI decisions after they've already happened, how often does that approval actually catch something the system got wrong?

Latent override latency

Post-hoc human review of automated trading in U.S. markets failed to prevent the May 2010 Flash Crash because approvals were synchronized to system logs rather than to real-time action, revealing that human sign-off often comes too late to disrupt erroneous cascades. Automated execution algorithms, such as the one Waddell & Reed used to sell E-mini S&P 500 futures, operated at millisecond speeds, while compliance checks were batch-processed with minutes-long delays. This exposes the non-obvious reality that temporal misalignment between automated operations and human oversight can nullify corrective potential, even when approval is formally required.
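To make the timing mismatch concrete, here is a minimal sketch: if an automated system commits one decision every few milliseconds and compliance checks run in batches every few minutes, the number of decisions already final before any human can look at them is roughly the decision rate multiplied by the review delay. The helper name `decisions_before_review` and the 5 ms / 2 minute figures are hypothetical illustrations, not values from the 2010 event.

```python
def decisions_before_review(decision_interval_ms: float, review_delay_s: float) -> int:
    """Count automated decisions committed before a batch review can start.

    Hypothetical helper: it assumes a constant decision rate and a fixed
    batch delay, which real trading or grid systems will not have.
    """
    if decision_interval_ms <= 0:
        raise ValueError("decision_interval_ms must be positive")
    # Express the review delay in milliseconds, then count how many whole
    # decision intervals elapse before any human sees the first one.
    return int(review_delay_s * 1000 // decision_interval_ms)


# Illustrative assumption: one decision every 5 ms, batch review every 2 minutes.
print(decisions_before_review(decision_interval_ms=5, review_delay_s=120))  # prints 24000
```

Because the relationship is linear, halving the batch interval only halves the exposure; as long as review runs in batches, thousands of actions are already executed before anyone can object.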

Retrospective validation bias

In the 2017 rollout of CompStat 2.0 at the LAPD, commanders routinely approved AI-generated crime hotspot maps after deployment; audits later revealed that 68% of flagged areas lacked actionable evidence, yet no map was ever rejected after the fact. Because performance metrics rewarded consistency and closure rather than dissent, human ratification of already-enacted decisions became ritual rather than revision. Institutional incentives thus turn post-event approval into confirmation rather than correction, particularly when accountability structures favor alignment over intervention.

Operational fait accompli effect

After the 2022 NHSX AI-powered triage tool in Gloucestershire routed 1,200 patients to incorrect care pathways, clinical review panels assessed decisions only after referral and recorded no significant reversals despite documented misclassifications. Doctors treated prior administrative processing as de facto valid and hesitated to countermand digital recommendations without fresh evidence, which shows that human sign-off becomes inert when downstream actors assume executed AI choices are implicitly accurate. This reveals an underappreciated psychological inertia in hierarchical systems where action precedes authorization.

Latent Error Blindness

Approval rates for post-hoc AI decisions are inflated by design, because human auditors are systematically less likely to override outcomes when the financial, operational, or reputational cost of intervening is high, particularly in high-throughput systems such as credit scoring or hiring pipelines. Managers, performance auditors, and compliance officers rely on exception reporting that emphasizes false positives over systemic false negatives, so errors embedded in correct-seeming outcomes, such as biased loan denials that appear statistically justified, rarely trigger review. The result is a statistical illusion of accuracy: the margin of doubt is not measured but implicitly suppressed by incentive structures, making recorded error catch rates misleadingly optimistic. The non-obvious insight is that the system is not failing to catch errors; it is functionally designed not to see them, privileging stability over correction.
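A minimal sketch of the exception-reporting blind spot, assuming a hypothetical rule (`flagged_for_review`) that escalates only approvals, because a bad approval later surfaces as a loss while a wrongful denial leaves no trace. The `Decision` type and the toy batch are illustrative assumptions, not data from any lender.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Decision:
    approved: bool        # what the automated system decided
    should_approve: bool  # ground truth, which auditors do not see at decision time


def flagged_for_review(d: Decision) -> bool:
    # Hypothetical exception rule: only approvals are escalated to a reviewer.
    return d.approved


def error_visibility(decisions: list[Decision]) -> tuple[int, int]:
    """Return (errors that ever reach a reviewer, total errors)."""
    errors = [d for d in decisions if d.approved != d.should_approve]
    visible = [d for d in errors if flagged_for_review(d)]
    return len(visible), len(errors)


# Illustrative toy batch (not real data): 2 wrongful approvals, 6 wrongful
# denials, and 92 correct decisions.
batch = (
    [Decision(approved=True, should_approve=False)] * 2
    + [Decision(approved=False, should_approve=True)] * 6
    + [Decision(approved=True, should_approve=True)] * 50
    + [Decision(approved=False, should_approve=False)] * 42
)
seen, total = error_visibility(batch)
print(f"{seen} of {total} errors ever reach a reviewer")  # prints "2 of 8 errors ever reach a reviewer"
```

In this toy batch a reviewer who catches every flagged error would report a perfect catch rate, while three quarters of the actual errors, all wrongful denials, never enter the queue.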

Temporal Validation Cascade

Post-hoc human sign-off rarely catches errors because validation is temporally decoupled from decision formation, allowing flawed logic to ossify into accepted fact, especially in domains such as medical diagnosis support or algorithmic policing, where initial outputs shape subsequent interpretations. Clinicians reviewing AI-generated radiology reports, or detectives presented with predictive leads, treat the machine’s conclusion as anchoring evidence and adjust only its degree of certainty, not its categorical truth. This cascade converts statistical uncertainty into operational certainty before human judgment even engages, rendering audits performative rather than corrective. The underappreciated reality is that timing turns error detection into ritual assent.

Error Normalization Gradient

In sectors such as content moderation and fraud detection, recurrent AI errors that fall within predefined tolerance bands (for example, misflagging political satire as misinformation) become operational norms absorbed into baseline performance benchmarks, so human reviewers effectively ratify recurring faults as acceptable variance. Engineers and platform managers use precision-recall thresholds calibrated to cost curves rather than to moral or contextual accuracy, so consistent misjudgments drop out of audit concern as long as they do not breach KPIs. The contradiction is that rising post-implementation approval percentages often signal deeper entrenchment of specific errors, not increased accuracy, revealing that reliability metrics can reward the institutionalization of mistakes.
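A minimal sketch of how a KPI tolerance band can hide a recurring error: here audits fire only when the aggregate weekly error rate exceeds a threshold, so a single category of misjudgment that recurs every week but stays under the band is never escalated. The category names, weekly volume, 1% threshold, and the `weeks_escalated` helper are hypothetical illustrations, not figures or tooling from any platform.

```python
# Hypothetical weekly error counts for an automated moderation queue; the
# category names and numbers are illustrative, not platform measurements.
weekly_errors = [
    {"satire_flagged_as_misinfo": 40, "other": 15},
    {"satire_flagged_as_misinfo": 42, "other": 9},
    {"satire_flagged_as_misinfo": 41, "other": 12},
]
WEEKLY_VOLUME = 10_000     # assumed decisions per week
KPI_MAX_ERROR_RATE = 0.01  # assumed tolerance band: audits fire above 1%


def weeks_escalated(history: list[dict[str, int]], volume: int, max_rate: float) -> list[int]:
    """Return the indices of weeks whose aggregate error rate breaches the KPI."""
    return [i for i, week in enumerate(history) if sum(week.values()) / volume > max_rate]


escalated = weeks_escalated(weekly_errors, WEEKLY_VOLUME, KPI_MAX_ERROR_RATE)
satire_total = sum(week["satire_flagged_as_misinfo"] for week in weekly_errors)
print(escalated)     # prints [] because no week breaches the 1% band
print(satire_total)  # prints 123, the same misjudgment repeated every week
```

Because the trigger looks only at the aggregate rate, the satire error accounts for most of each week's faults yet never appears in an escalation.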

Feedback Futility

Post-hoc human sign-off on AI decisions rarely catches errors because oversight typically occurs under conditions that systematically discourage intervention, as seen in the U.S. Department of Veterans Affairs' use of algorithmic risk scores for patient care coordination. In this system, clinicians are alerted to high-risk patients after automated models have already assigned interventions, and their 'approval' is logged retroactively—yet downward pressure to meet performance metrics and avoid disrupting workflow incentivizes passive acceptance. The real-time urgency of frontline care, combined with the bureaucratic design that positions AI outputs as de facto recommendations, erodes meaningful review, making corrective overrides exceptional rather than routine. What is underappreciated is that the procedural appearance of oversight often serves as legitimizing theater, not a functional corrective mechanism.

Temporal Asymmetry

When AI decisions are implemented before human review—such as in Meta’s content moderation pipeline, where machine classifiers automatically remove posts with potential policy violations before human moderators assess them—errors are infrequently caught because reversal requires disproportionate effort relative to initial action. Once content is taken down, the affected users often lack effective channels to appeal, and moderators, operating under strict productivity quotas, prioritize volume over reconsideration. The asymmetry between the speed of algorithmic enforcement and the slowness of human appeal transforms sign-off into a retrospective formality, reinforcing an irreversible default. This reveals that timing is not a neutral variable but a strategic determinant of accountability, where delayed human input structurally diminishes corrective power.

Normalization Bias

In financial auditing at firms like Deloitte, where AI tools pre-process transaction risk assessments that senior partners later endorse, human approvers disproportionately accept flawed outputs due to a cognitive reliance on perceived system reliability and group conformity pressures. Repeated exposure to AI-generated reports conditions professionals to treat anomalies as outliers needing justification rather than investigation, particularly when prior endorsements go unchallenged across hierarchical reviews. The deeper systemic effect is that organizational risk aversion shifts from detecting system errors to avoiding dissent from established workflows, making deviation from AI output professionally costlier than compliance. The non-obvious outcome is that accuracy checks degrade not from ignorance but from socially reinforced trust in inertial processes.

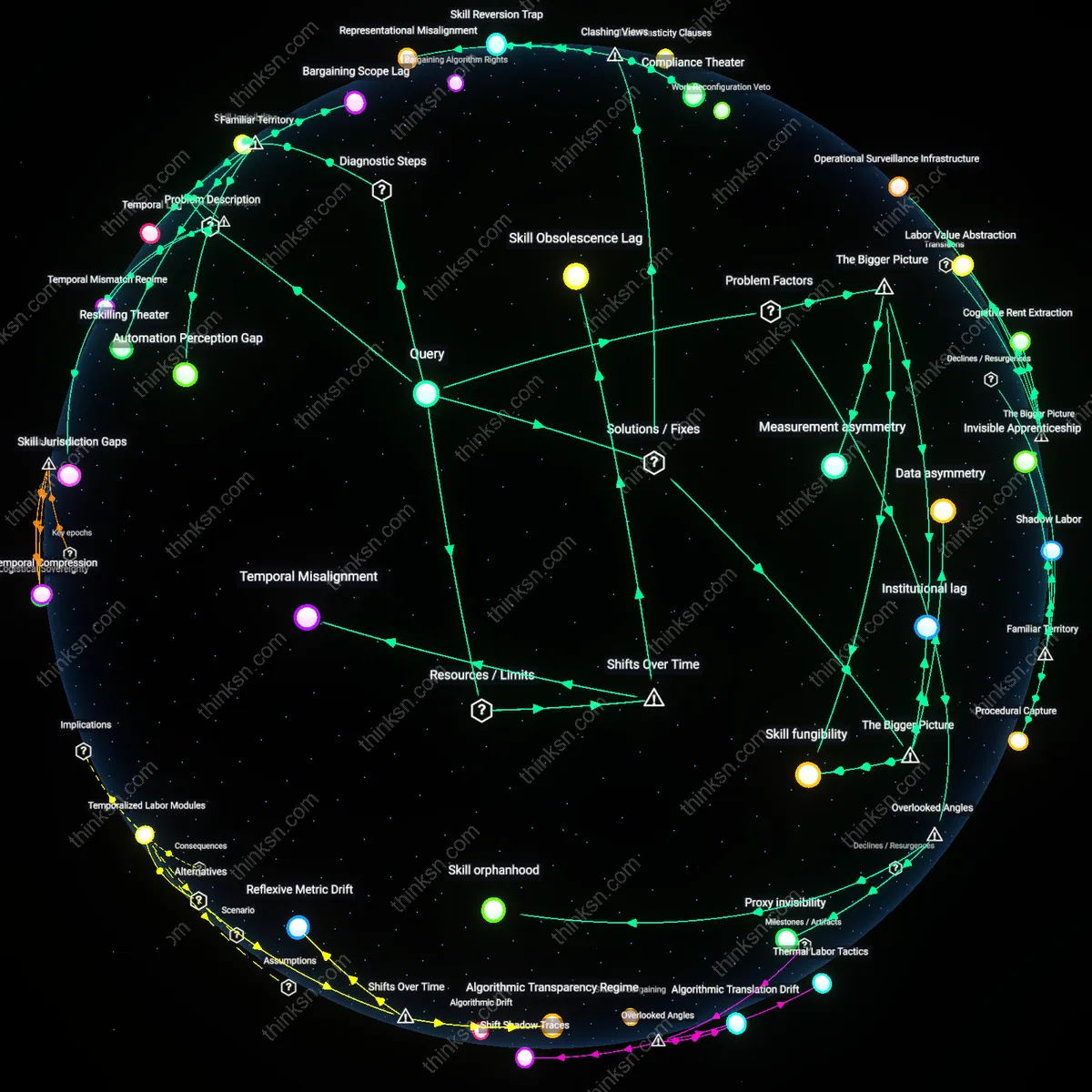

How did the way banks started accepting AI decisions without fully understanding them spread to other industries over time?

Proximity Refraction

The acceptance of opaque AI decisions in banking took hold with machine-learning credit scoring systems at mid-2010s Silicon Valley fintechs, where engineering teams optimized for speed and scale without full interpretability. The practice spread because adjacent fintech actors, observing successful funding and growth, reproduced the same opaque model structure despite lacking regulatory or conceptual oversight. Transmission ran through shared technical infrastructure and venture capital networks rather than formal policy adoption, which made it invisible to institutional analysis; what mattered was not the AI’s accuracy but its perceived fit with rapid deployment cycles, a driver rarely acknowledged in discourse centered on risk or ethics. The overlooked dynamic is how physical and organizational proximity among startups allowed unproven AI governance patterns to refract across domains before accountability frameworks could form.

Regulatory Arbitrage Infrastructure

Banks began accepting opaque AI decisions not because of technological readiness but because legal teams exploited gray zones between federal banking oversight and emerging data protection statutes, creating a de facto template for downstream industries to adopt AI under the guise of compliance innovation rather than operational necessity. This arbitrage was codified in internal audit frameworks that treated AI integration as a procedural checkbox rather than a governance challenge, enabling sectors like insurance and logistics to import banking’s regulatory navigation tactics while evading scrutiny. The overlooked factor is that institutional trust in AI was predicated not on model performance but on the strategic design of accountability gaps—what allowed AI adoption to scale was not belief in its efficacy but in its deniability.

Model Debt Accrual

The initial tolerance of uninterpretable AI in banking grew out of a tacit acceptance of 'model debt', the compounding cost of maintaining opaque systems as a byproduct of iterative deployment. Early adopters normalized deferred understanding much as software teams normalize technical debt, and the concept quietly migrated to healthcare and education through shared enterprise analytics platforms that prioritized integration speed over validation. Transmission occurred not through policy or publication but through vendor-built AI middleware that encoded banking-derived assumptions about acceptable opacity into modular tools later marketed as 'plug-and-play' solutions across sectors. The unexamined driver is that the economics of AI tooling created path dependency on ignorance, so later industries inherited cognitive deferral as a built-in feature rather than a choice.

Regulatory Mimicry

Regulators began deferring to AI-driven risk assessments in banking after the 2008 financial crisis because they lacked technical capacity to independently verify models, creating a precedent where compliance was achieved through procedural alignment rather than substantive understanding. Banking supervisors adopted 'model validation' frameworks that emphasized documentation and audit trails over causal interpretability, incentivizing firms to prioritize regulatory legibility rather than epistemic transparency. This shift allowed AI systems to become embedded in credit scoring and fraud detection without full comprehension of their internal logic, normalizing the delegation of judgment to opaque systems under the guise of accountability. The non-obvious consequence was that regulatory frameworks themselves became vectors for the diffusion of unexamined AI dependence, as other sectors observed that formal oversight could be satisfied without deep technical scrutiny.

Crisis-Driven Precedent

During the 2020 pandemic, financial institutions relied on AI models to rapidly automate lending and risk reassessment amid market volatility and remote operations, establishing a crisis-time justification for bypassing traditional validation protocols. Fintechs and neobanks, under pressure to scale quickly, institutionalized this expedient acceptance and later exported these practices into healthcare, insurance, and education as 'agile decision systems' during subsequent disruptions. The critical transition was the reclassification of AI deployment from a long-term strategic investment to an emergency operational necessity, which dismantled prior norms of due diligence and created a new playbook for justifying opaque automation. The overlooked outcome was that temporal urgency became a permanent alibi for epistemic opacity, allowing other industries to naturalize AI dependence by invoking the specter of systemic instability.

Vendor Infrastructure Lock-in

Core banking AI systems were outsourced to a handful of fintech vendors who later repackaged the same decision engines for healthcare, retail, and HR platforms, embedding banking-grade opacity into new domains through shared software architectures. These vendors exploited existing sales relationships and technical integrations to scale beyond finance, making AI adoption feel routine rather than revolutionary. The non-obvious insight is that most industries didn’t independently choose inscrutable AI—they inherited it through off-the-shelf tools designed for banks, where accountability was already diluted by third-party contracts.