Is High Consistency Enough for Legal AI Trust?

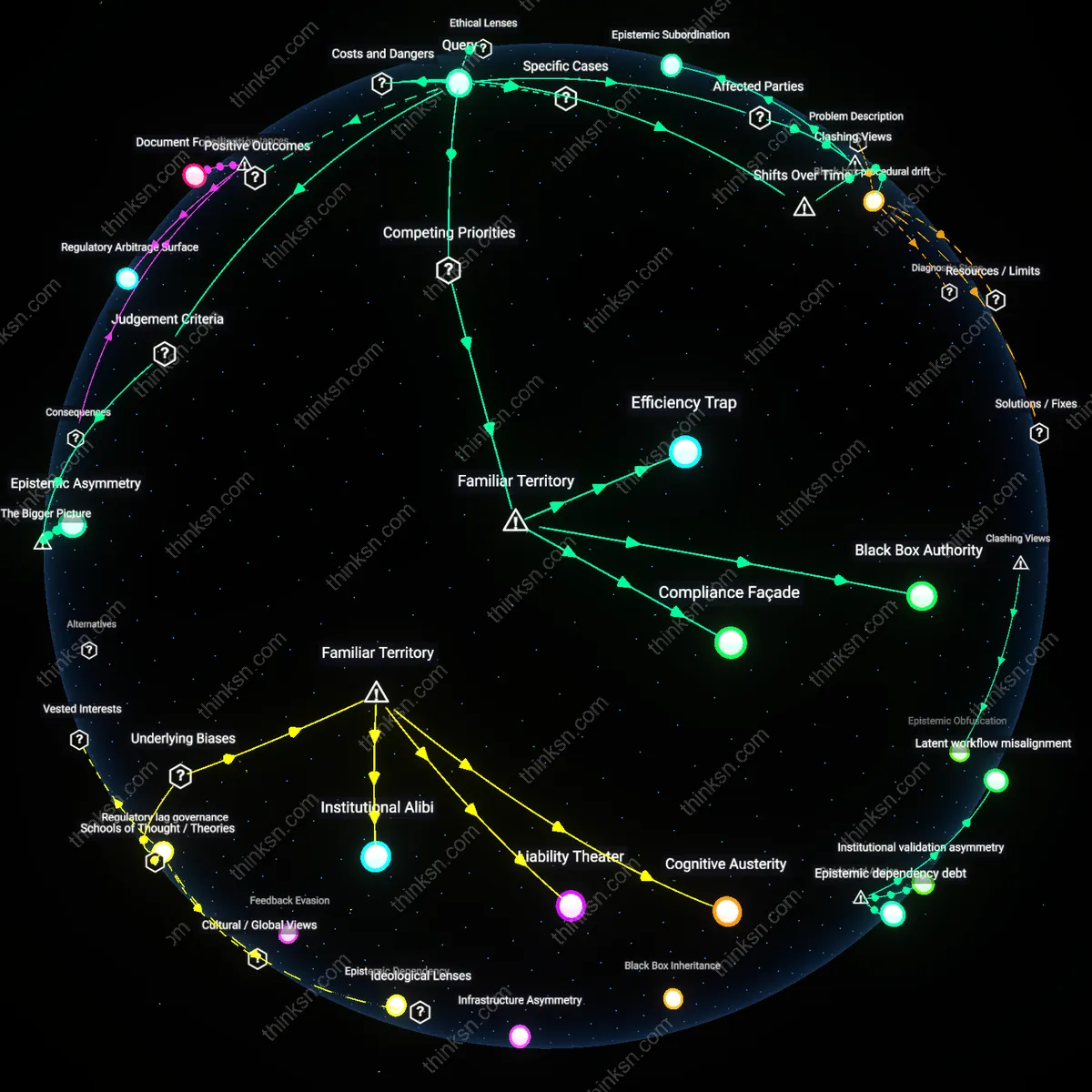

Analysis reveals 8 key thematic connections.

Key Findings

Provisional Accountability

Corporate legal teams should not trust AI contract-analysis tools because doing so transfers adjudicative responsibility to opaque systems while leaving liability with human actors, creating a rupture in accountability. Legal departments at firms like JPMorgan or Pfizer rely on AI tools such as Kira or Luminance to accelerate due diligence, yet remain solely liable under bar rules and regulatory frameworks when errors occur, meaning lawyers bear professional risk for outcomes they cannot fully explain or anticipate. This dynamic institutionalizes a form of provisional accountability, where obligations are fixed on humans but execution is delegated to systems whose logic is inaccessible, undermining the foundational legal principle that responsibility requires comprehension. The non-obvious consequence is not just inefficiency or error, but the normalization of decision-making regimes that absolve no one while explaining nothing.

Epistemic Subordination

The pressure to adopt AI contract tools reflects not a choice between efficiency and due process but a structural shift that subordinates junior lawyers’ interpretive labor to machine outputs, reshaping professional development within firms. At global law firms like Allen & Overy or Skadden, first- and second-year associates are increasingly barred from line-by-line contract review in favor of validating AI-generated summaries, eroding their ability to build intuitive legal reasoning through exposure to nuance and exception. This creates epistemic subordination, where emerging lawyers are systematically denied access to the raw material of expertise, rendering them dependent on machine judgments they cannot critique or refine. The overlooked consequence is not merely compromised tools, but a generational degradation of legal cognition that threatens the profession’s long-term capacity for autonomous judgment.

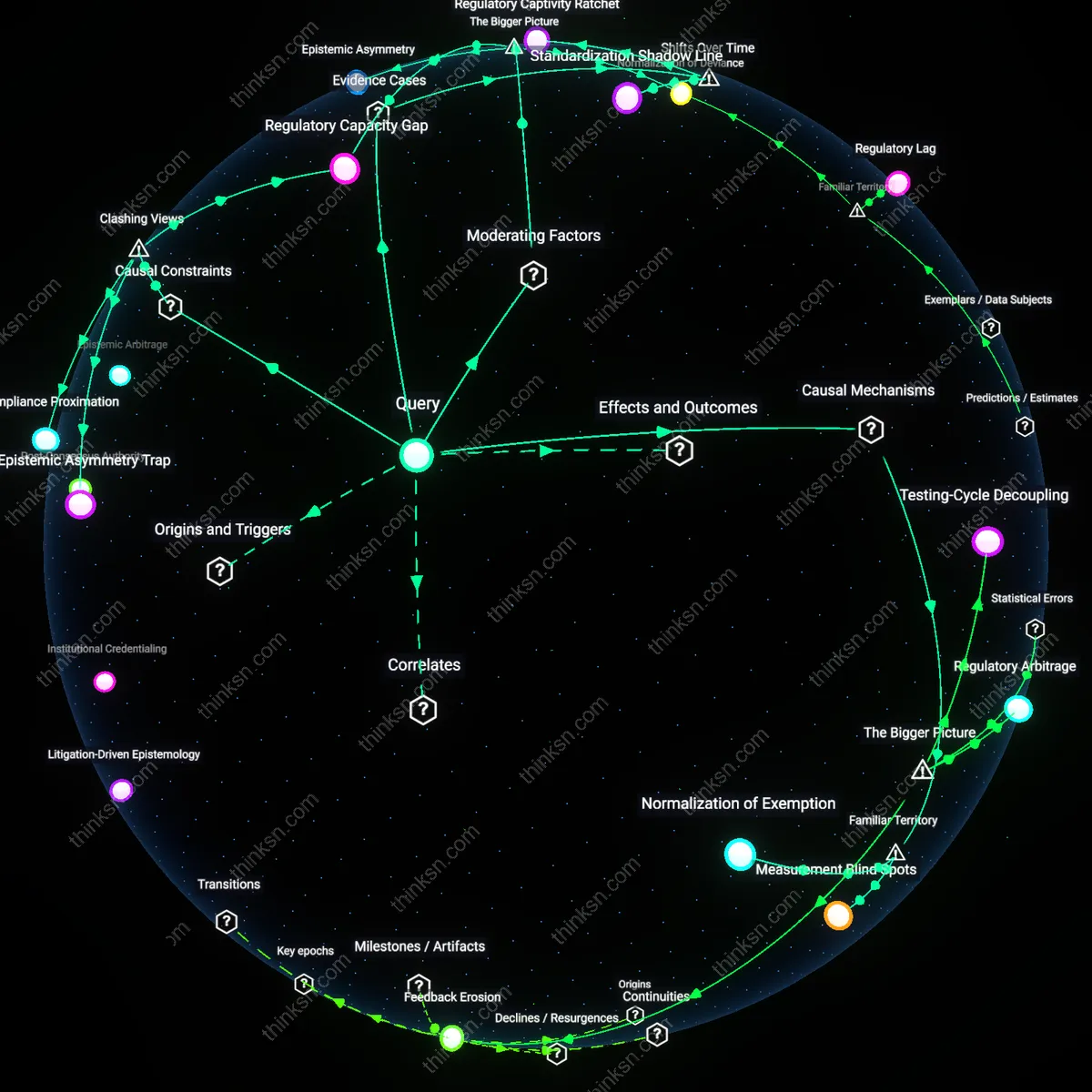

Compliance Debt

Corporate legal teams should not trust AI contract-analysis tools with high consistency but low transparency, because prolonged reliance on opaque AI systems creates a systemic backlog of unexamined contractual obligations that accumulates as future legal vulnerability. This occurs when legal departments, under corporate pressure to scale operations, prioritize speed in contract review to clear workloads yet forgo understanding the logic behind AI-generated outputs. The mechanism operates through centralized legal operations in multinational firms, particularly in jurisdictions like Delaware or London, where enforcement scrutiny intensifies post-signing, revealing that present efficiency gains are offset by hidden long-term compliance risks. What is underappreciated is that the lack of transparency doesn’t merely obscure AI reasoning; it systematically displaces accountability into future dispute resolution cycles, where contractual ambiguities reemerge as material liabilities.

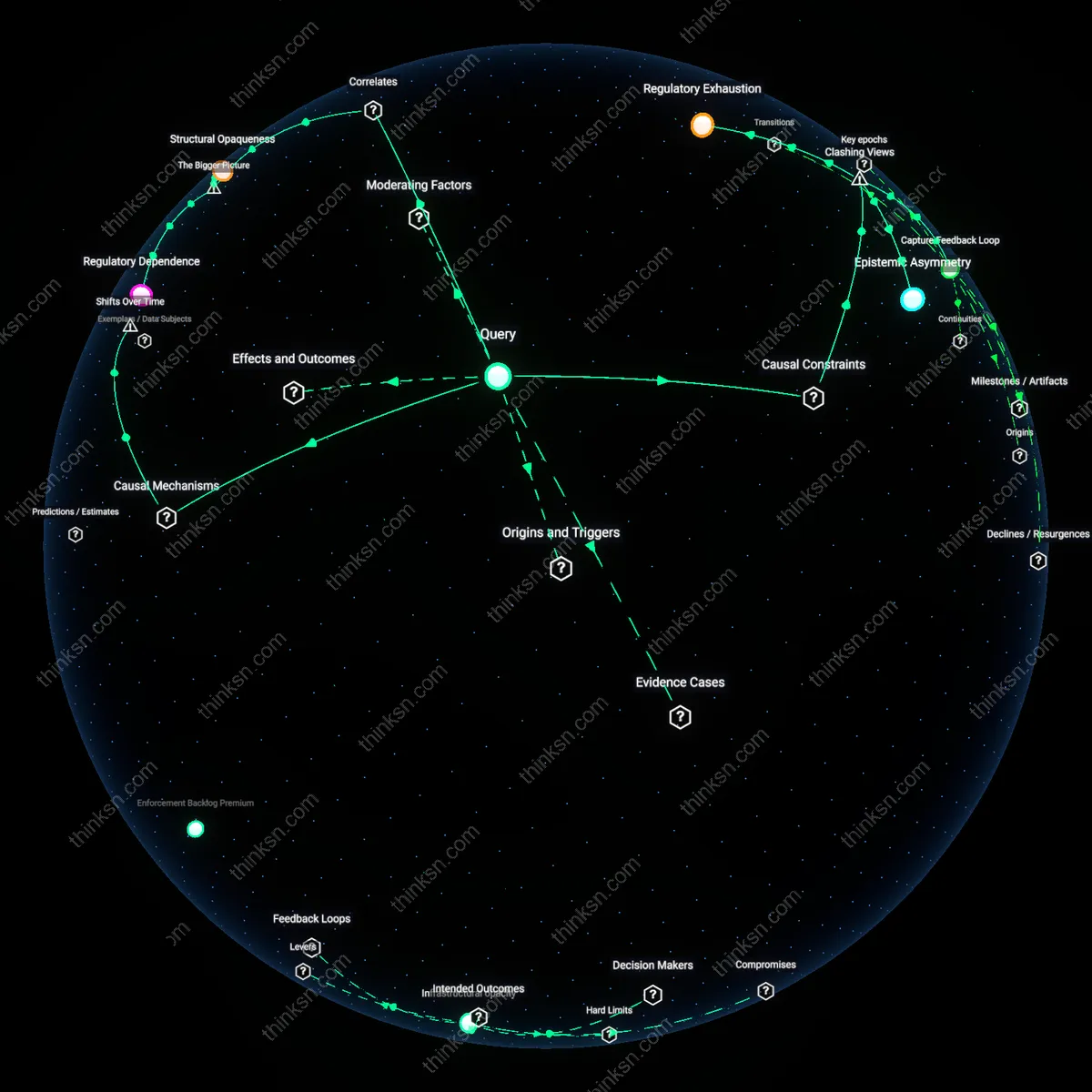

Epistemic Asymmetry

Corporate legal teams should not trust AI contract-analysis tools with high consistency but low transparency because the resulting information imbalance entrenches power within technical vendors rather than legal professionals, undermining due process as a functional norm. This dynamic unfolds when in-house counsel, dependent on proprietary algorithms from firms like Luminance or Ironclad, lose the ability to challenge or reinterpret analytical conclusions, effectively ceding interpretive authority to third-party developers operating under trade secrecy laws. The systemic consequence is visible in global corporations where legal teams must now defend decisions made by AI systems they cannot reconstruct—a condition that erases their role as independent fiduciaries. The non-obvious insight is that even with high output consistency, the inability to audit the basis of analysis shifts epistemic control from law departments to software providers, creating a structural dependency masquerading as automation.

Black-Box Procedural Drift

Corporate legal teams should not trust AI contract-analysis tools because their historical shift from rule-based systems to opaque machine learning models has embedded hidden procedural distortions in contract interpretation. Unlike early expert systems that codified explicit legal logic and left audit trails, modern AI tools trained on proprietary datasets use pattern recognition that bypasses doctrinal reasoning, causing legal processes to covertly adapt to algorithmic tendencies rather than jurisprudential consistency. This drift is dangerous not because errors are frequent, but because the outputs mimic due process while eroding its foundations over time. The non-obvious risk is that legal teams begin to treat output stability as validation, ignoring that consistency without explainability reinforces silent deviations from precedent, especially in jurisdictions with evolving contract norms.

Efficiency Trap

Corporate legal teams should not trust AI contract-analysis tools with high consistency but low transparency because prioritizing speed over interpretability risks normalizing flawed decisions that evade scrutiny. Large law firms and in-house legal departments adopt these tools to reduce review time by 40–60%, feeding standardized outputs into high-volume workflows at Fortune 500 companies, but the lack of explainability in models like those from Kira or Luminance means errors propagate silently through contract pipelines, privileging operational velocity at the expense of auditability. The non-obvious risk is that due process is eroded not through malice or negligence, but through seamless integration into familiar, efficiency-driven routines that lawyers equate with professionalism.

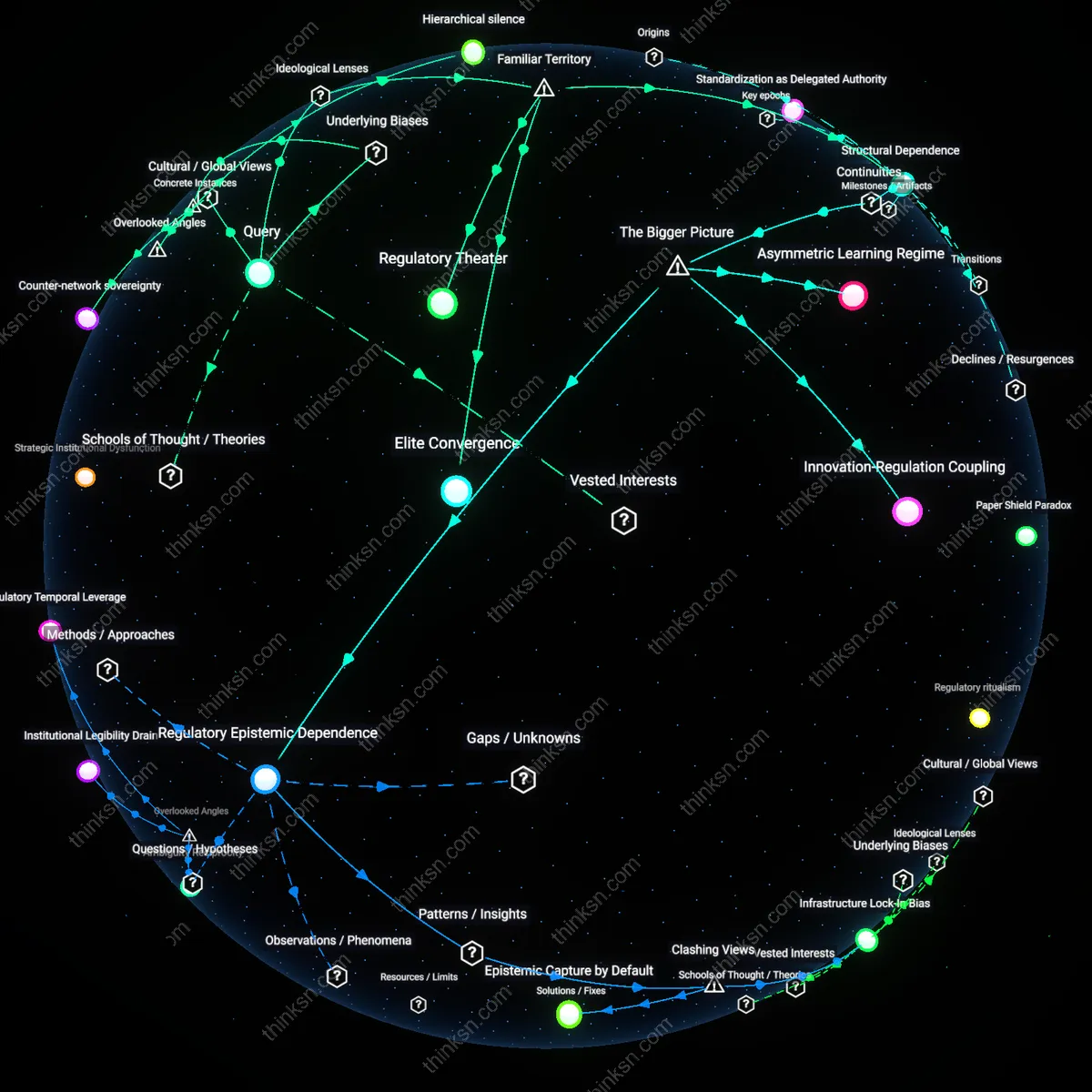

Black Box Authority

Corporate legal teams should trust AI contract-analysis tools only conditionally, because the opacity of proprietary algorithms transfers interpretive control from trained attorneys to unseen engineering teams operating within tech vendors like IBM or Litera. These tools function as inscrutable validators, where contractual meaning is increasingly certified not by legal reasoning but by statistical alignment to historical training data shaped by Silicon Valley norms and licensing patterns. The underappreciated shift is that lawyers, accustomed to citing precedent and jurisdiction, now defer to systems whose 'logic' cannot be challenged in court—transforming legal judgment into a compliance ritual anchored in technological credibility rather than doctrinal consistency.

Compliance Façade

Corporate legal teams should avoid full reliance on AI contract-analysis tools because the appearance of rigorous, consistent review masks the erosion of accountability expected in regulated industries such as healthcare and finance. Tools like DocuSign Insight or Thomson Reuters’ AI reviewers generate audit logs and scoring metrics that mimic legal diligence, satisfying internal governance checklists while obscuring the absence of human reasoning behind risk flags. The overlooked irony is that in seeking to meet compliance standards, legal departments may actually deepen exposure—since regulators expect judgment, not just justification—turning due process into a performative ritual calibrated for internal optics over genuine oversight.