Do Chatbots Free Consultants or Steal Their Future?

Analysis reveals 5 key thematic connections.

Key Findings

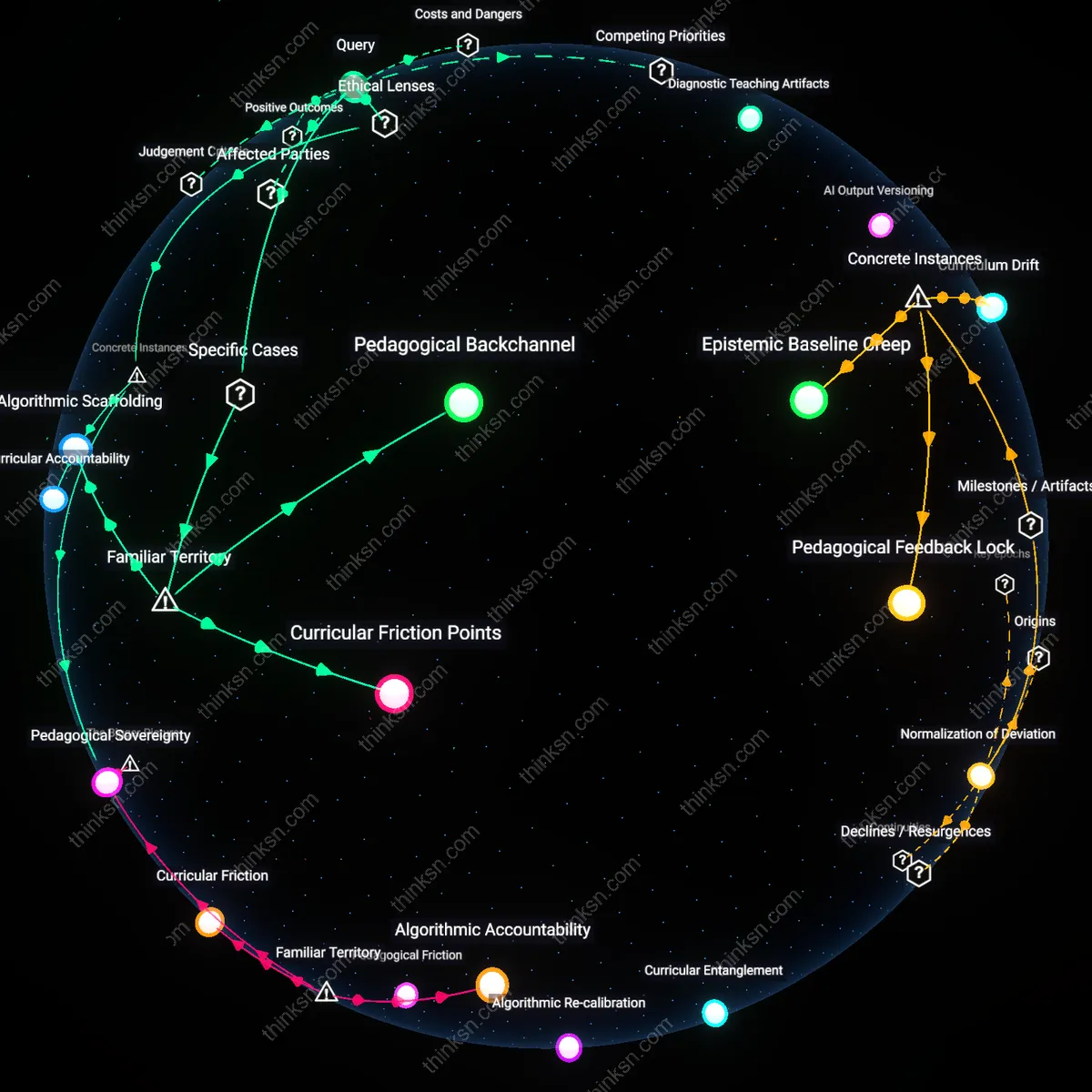

Adaptive Capacity

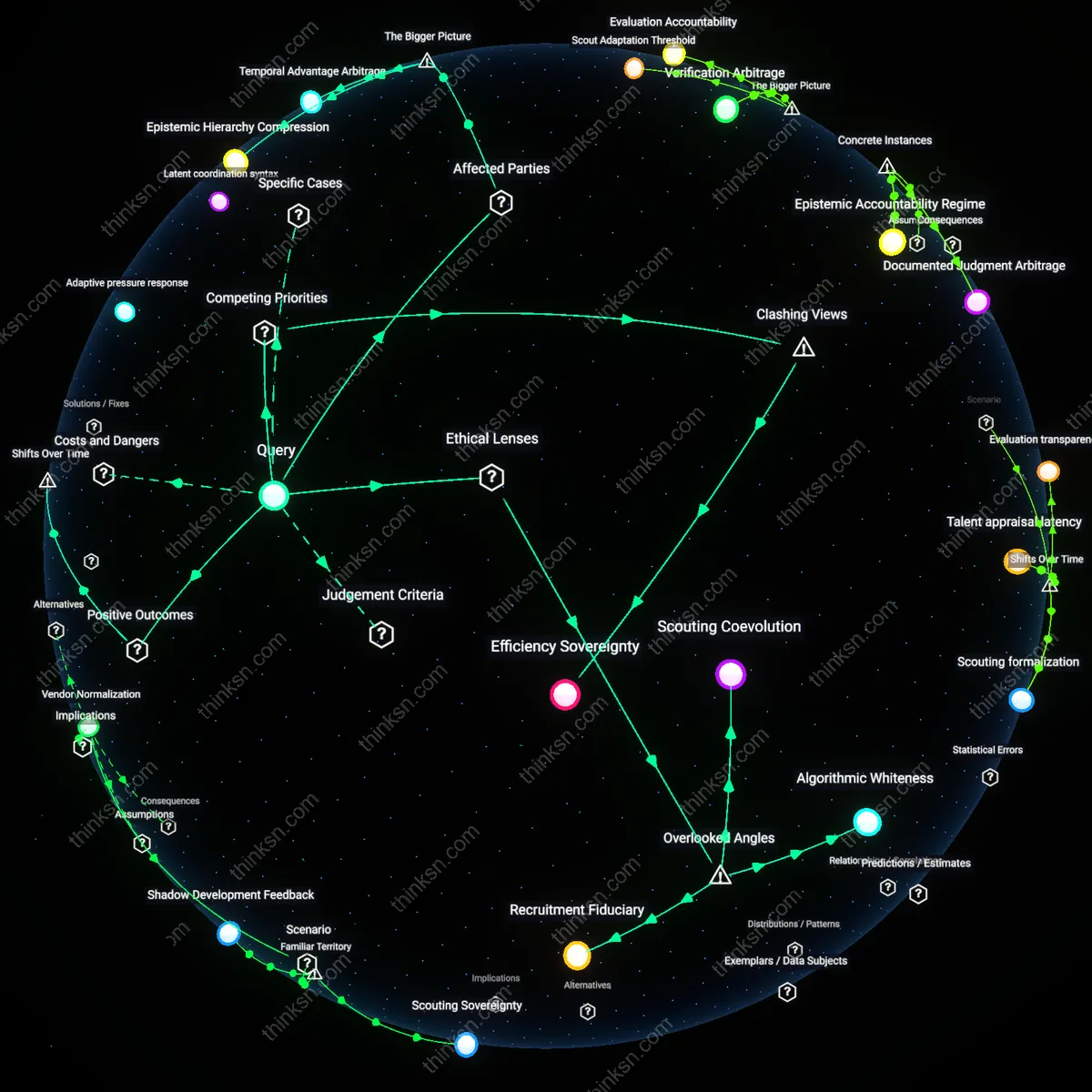

Consultants who develop AI-interaction design skills strengthen their adaptive capacity as service automation spreads. This lets them reshape client engagement models before market pressure forces reactive change. The driver is the growing dominance of AI chatbots at the first point of client contact, particularly in sectors such as financial advisory and healthcare consulting. Firms such as Deloitte and Accenture now embed conversational AI into their service delivery stacks, which pressures human consultants to add value upstream, in experience architecture, rather than downstream, in information retrieval. The non-obvious consequence is that staying relevant in high-stakes advisory requires pre-emptive skill diversification rather than specialization. AI-augmented client expectations are redrawing the boundary between "routine" and "strategic" interaction.

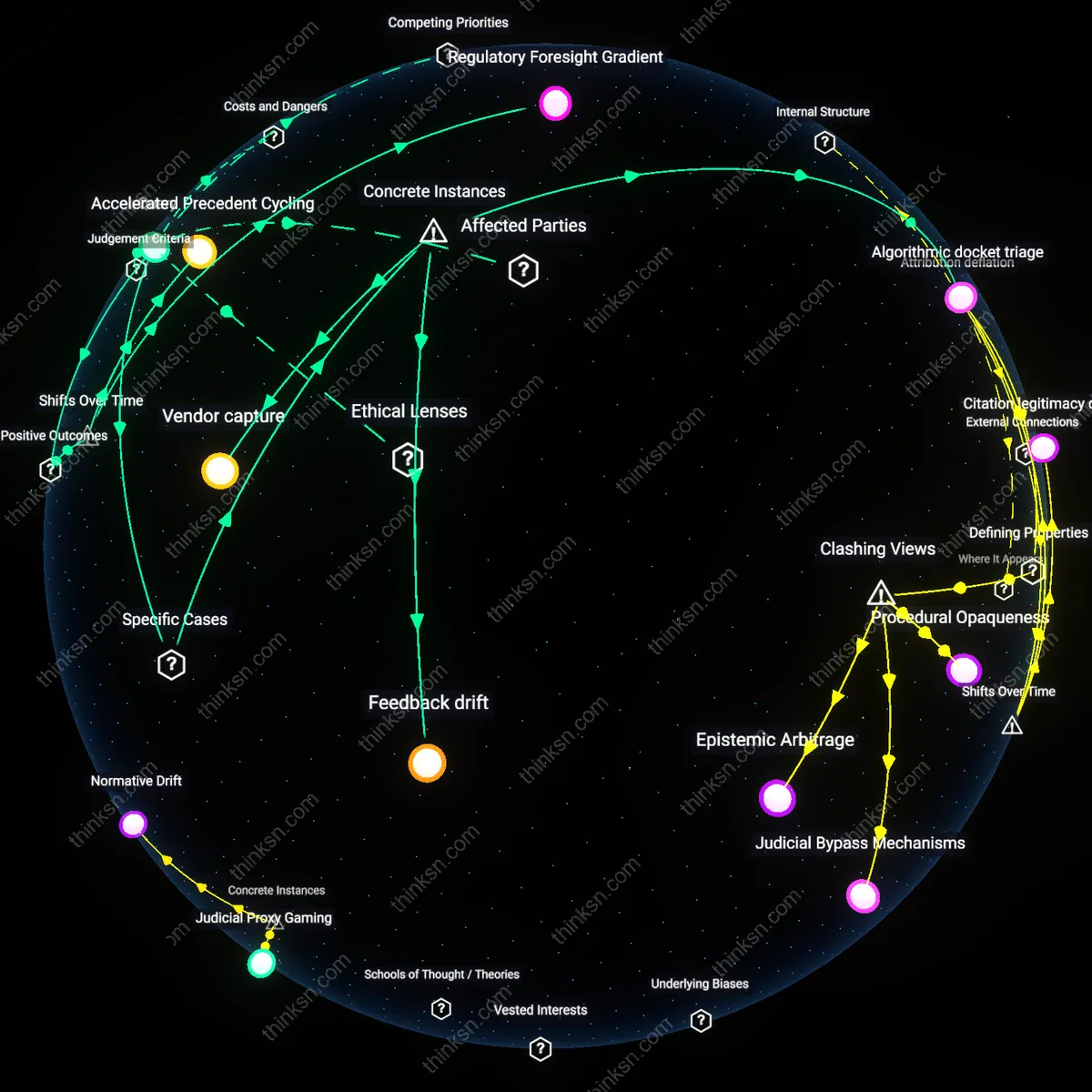

Strategic Gatekeeping

Remaining focused on high-stakes advisory work lets consultants reinforce their role as strategic gatekeepers. In this role, they interpret and legitimize AI-generated insights for executive decision-makers in regulated industries such as pharmaceuticals and defense contracting. In these domains, AI chatbots handle inquiry triage, but final risk assessments require human validation under compliance frameworks (e.g., FDA 21 CFR Part 11 or the DoD Risk Management Framework, RMF). This creates structural demand for consultants who can bridge algorithmic output and institutional accountability. The underappreciated dynamic is that AI proliferation increases, rather than reduces, the need for socially authorized intermediaries. Because organizational risk tolerance lags behind technological capability, the consultant's authority acts as a systemic stabilizer.

Advisory Obsolescence

Consultants who prioritize high-stakes advisory work while relegating AI-interaction design to technical staff accelerate their own functional erosion. Elite strategic advice increasingly depends on proprietary data pipelines curated through direct AI-client engagement. Senior advisors who are not immersed in AI interface dynamics therefore lose access to the raw, unfiltered behavioral signals that reveal emerging client needs. Their recommendations end up rationalized after the fact rather than accurate in anticipation. Consulting firms rarely acknowledge this danger, because status within them is tied to boardroom presence rather than data intimacy.

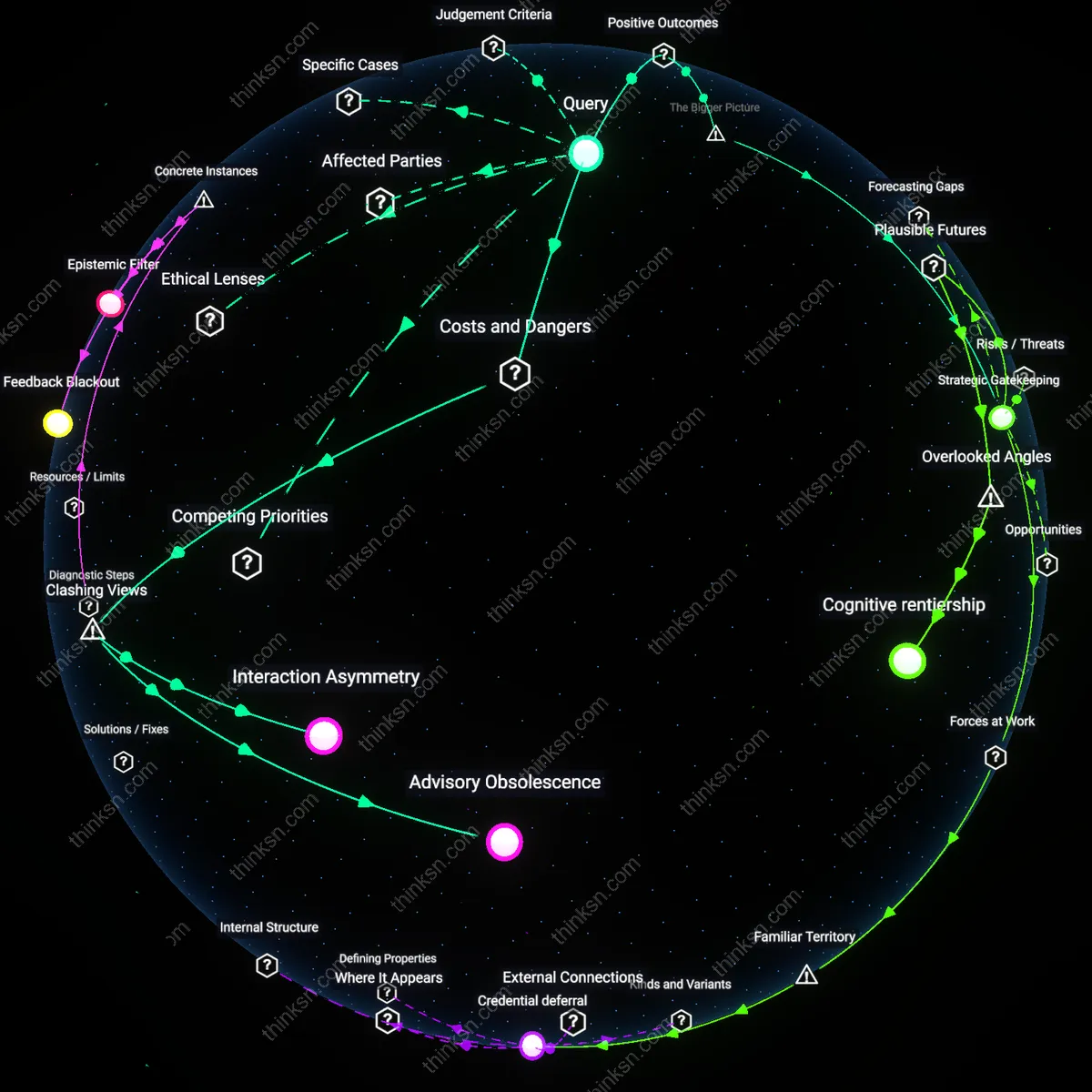

Interaction Asymmetry

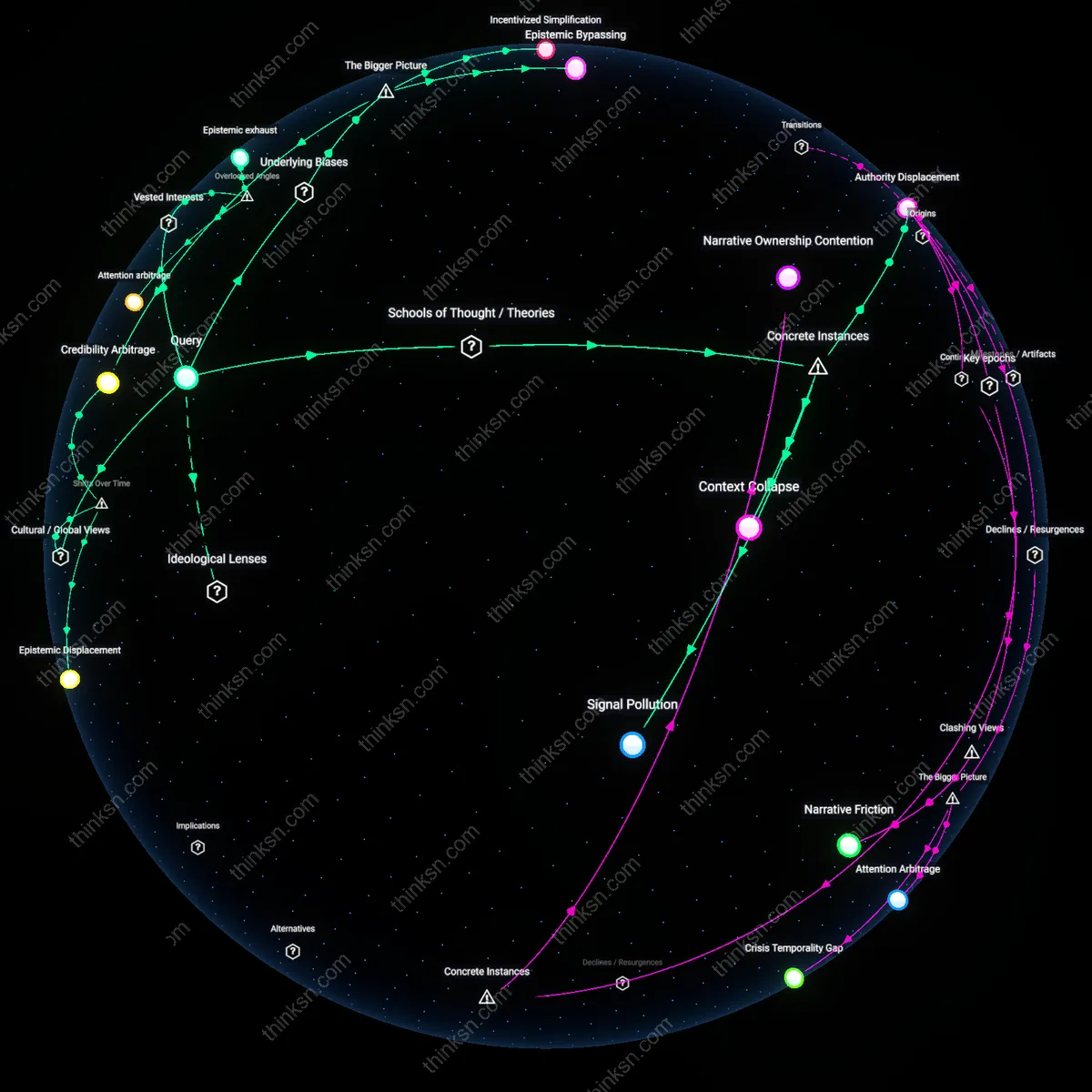

Firms that treat AI chatbots as mere handlers of routine inquiries blind themselves to a strategic asymmetry: clients increasingly shape their expectations and decision logic through frictionless AI interactions. The real cost lies not in labor savings but in the gradual decoupling of consultant perception from client cognition. As clients learn to articulate their needs in algorithmically legible ways, consultants who do not master this new dialect of inquiry risk becoming interpreters of secondhand insights within systems they no longer influence. Few recognize this reversal as active degradation rather than passive displacement.

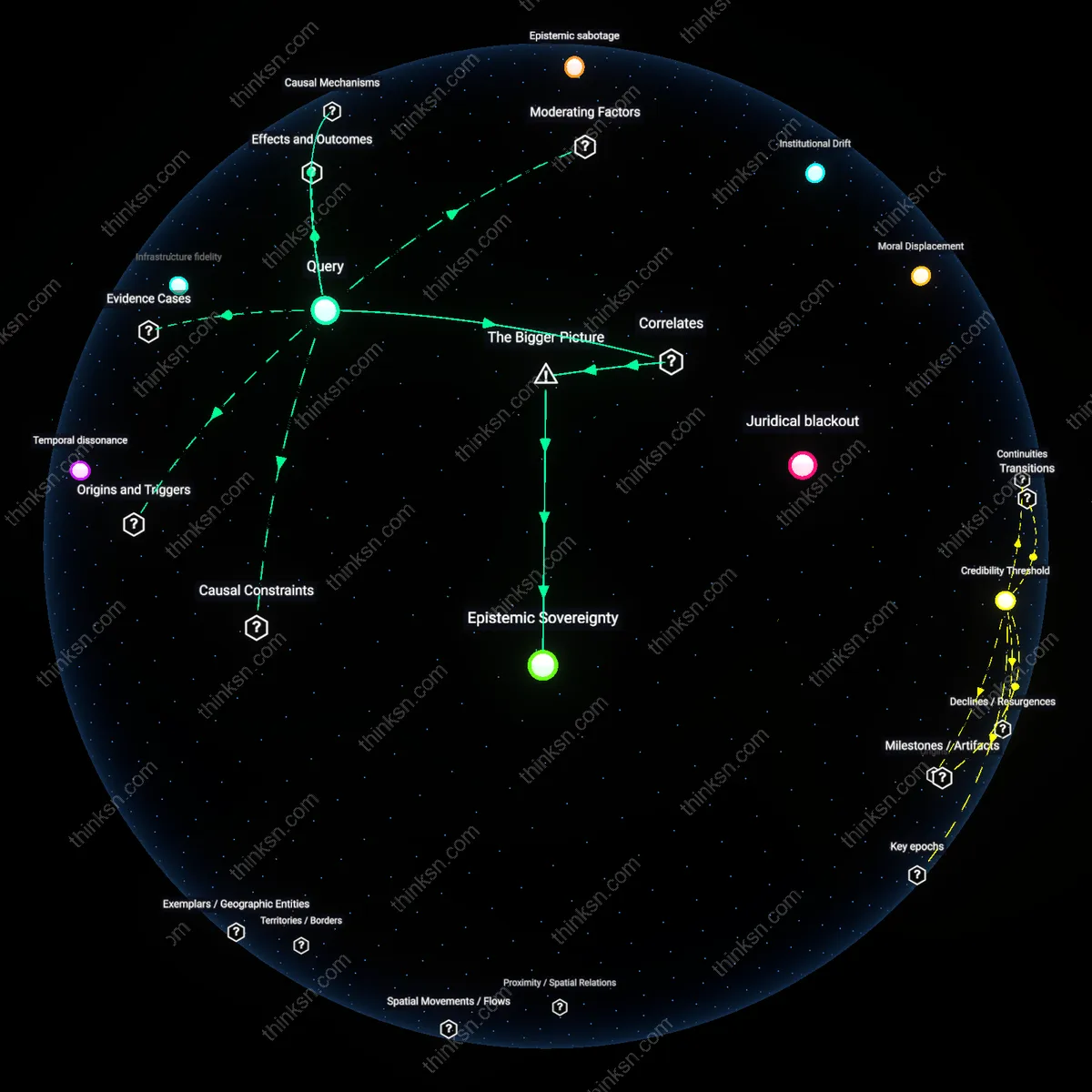

Behavioral Leakage

Prioritizing advisory roles over AI-interaction design quietly cedes epistemic control to the platform engineers and data scientists who decide how client behaviors are parsed, weighted, and funneled into decision models. The danger is not malfunctioning chatbots but correctly functioning ones that normalize shallow client expressions and purge ambiguity before it reaches human consultants. This silent editing of reality creates systemic blind spots in which complex, emotionally fraught, or ethically ambiguous issues are smoothed into operational noise. The risk stays invisible to those who assume high-stakes advice remains untainted by upstream interaction design.