AI or Advocacy? Mid-Career Lawyers Choose Their Future

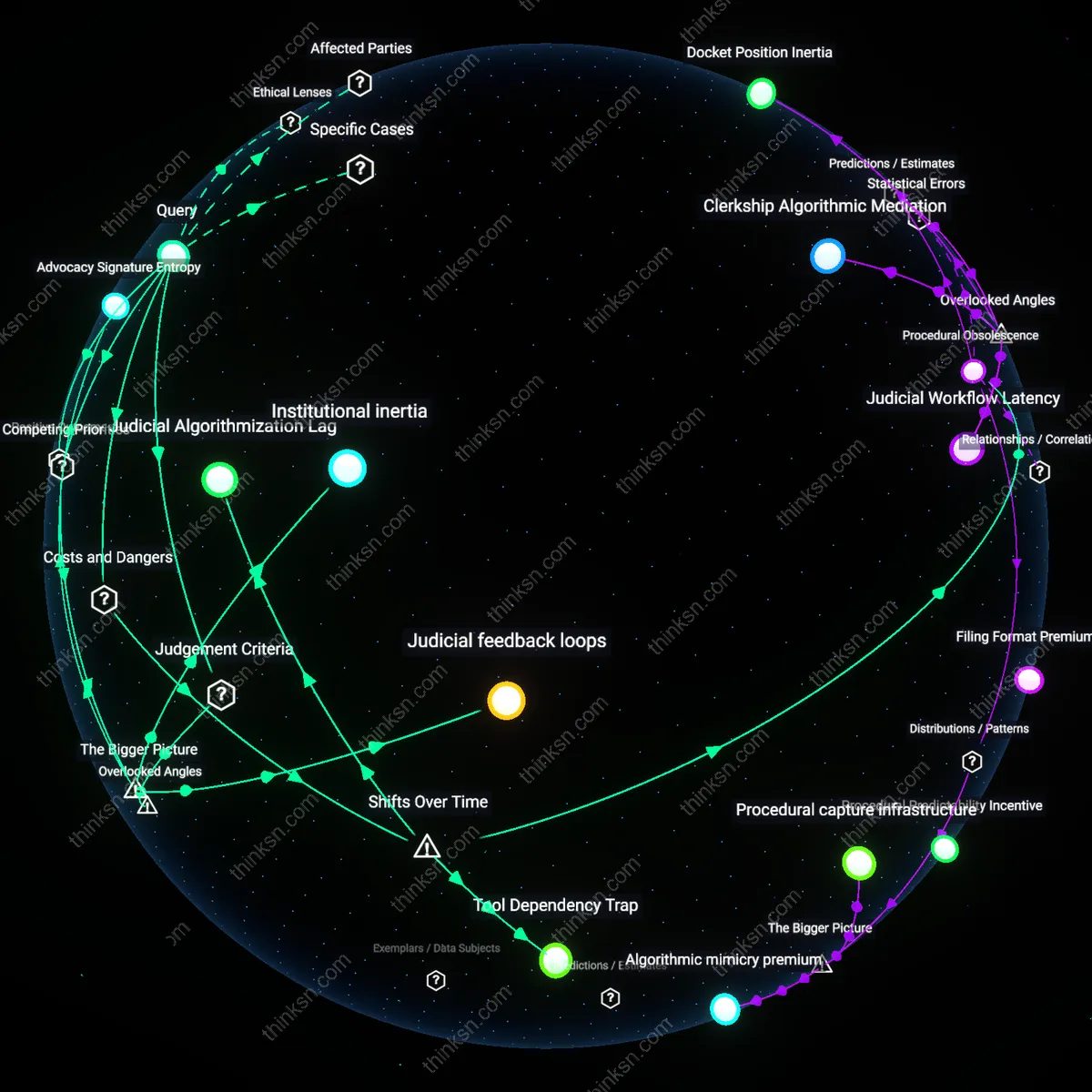

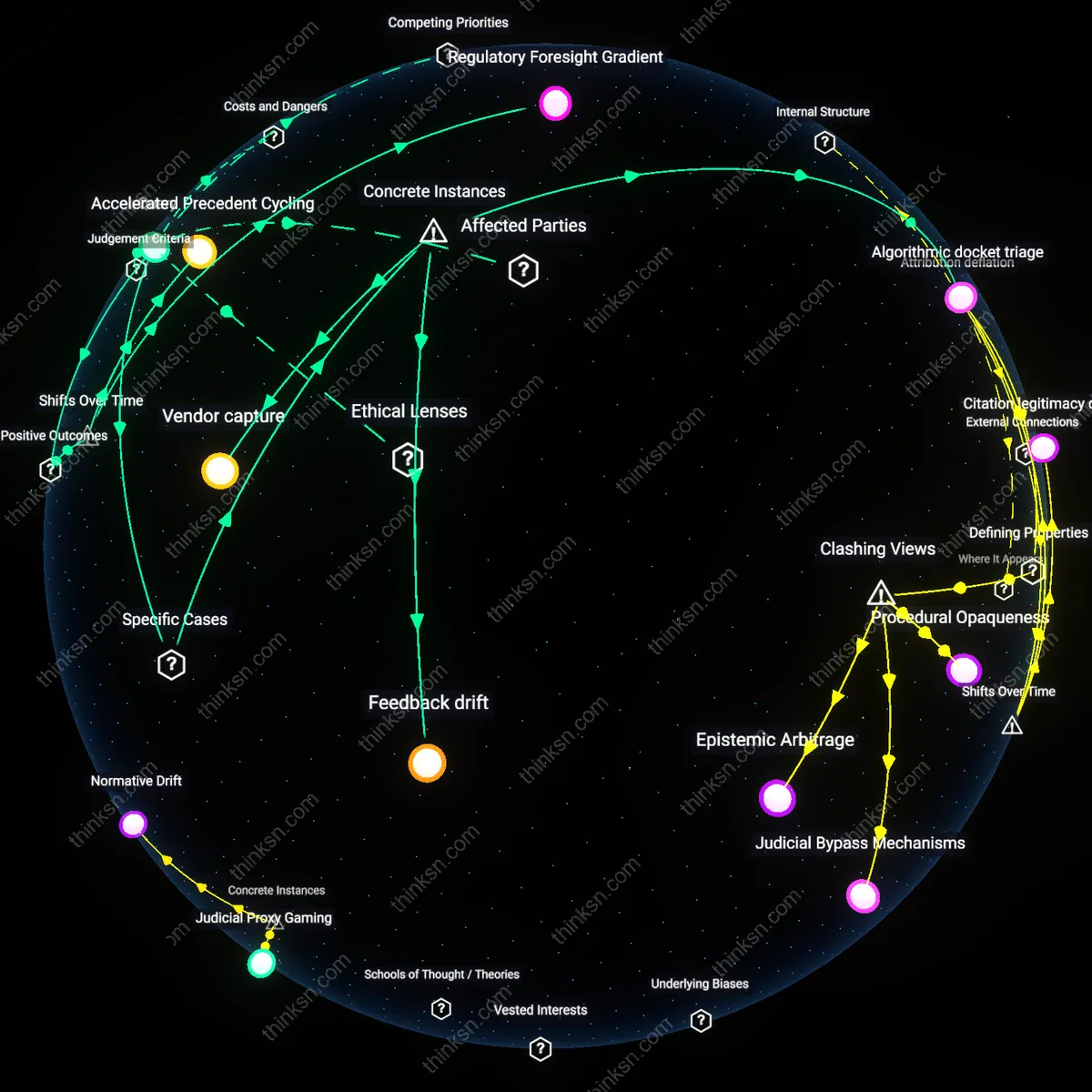

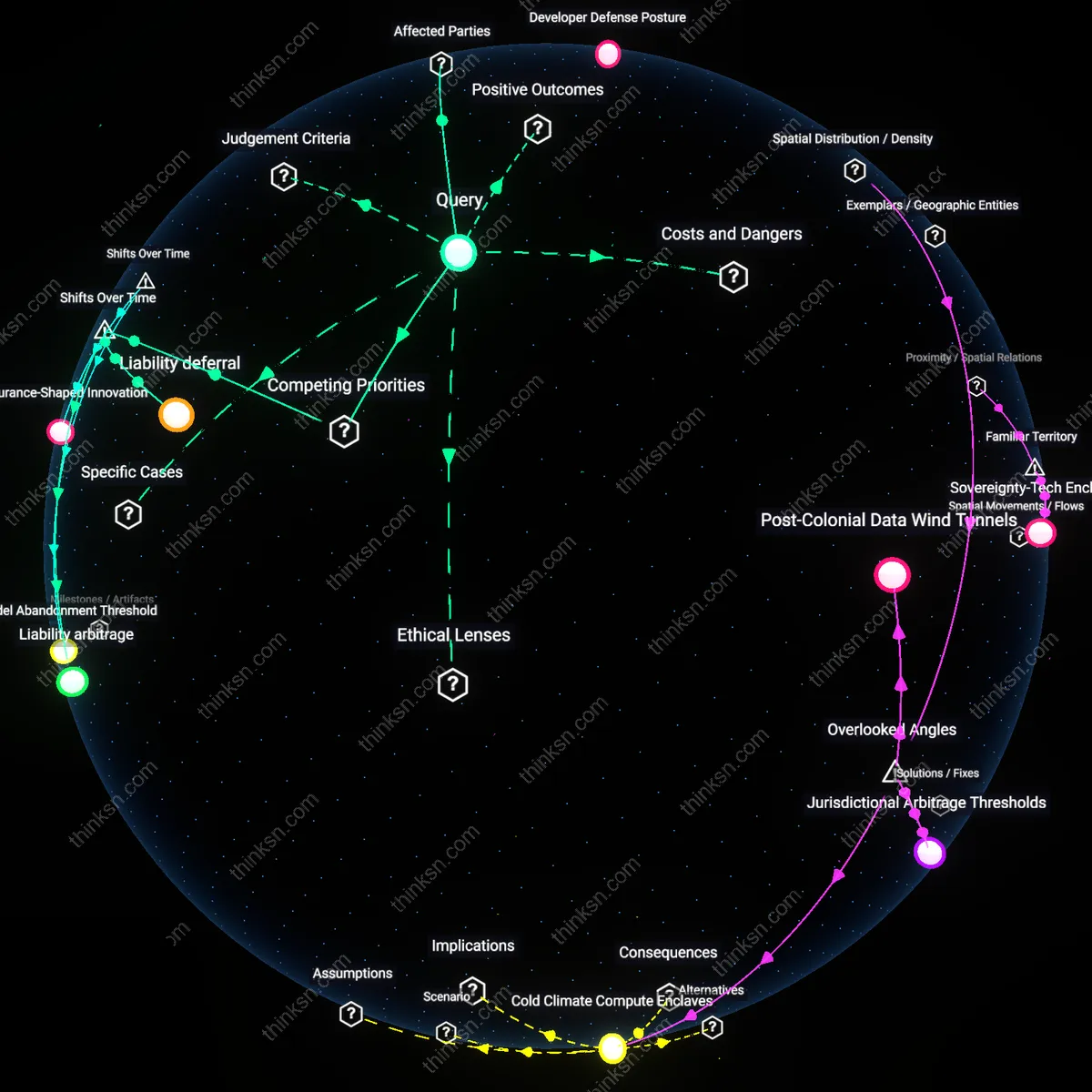

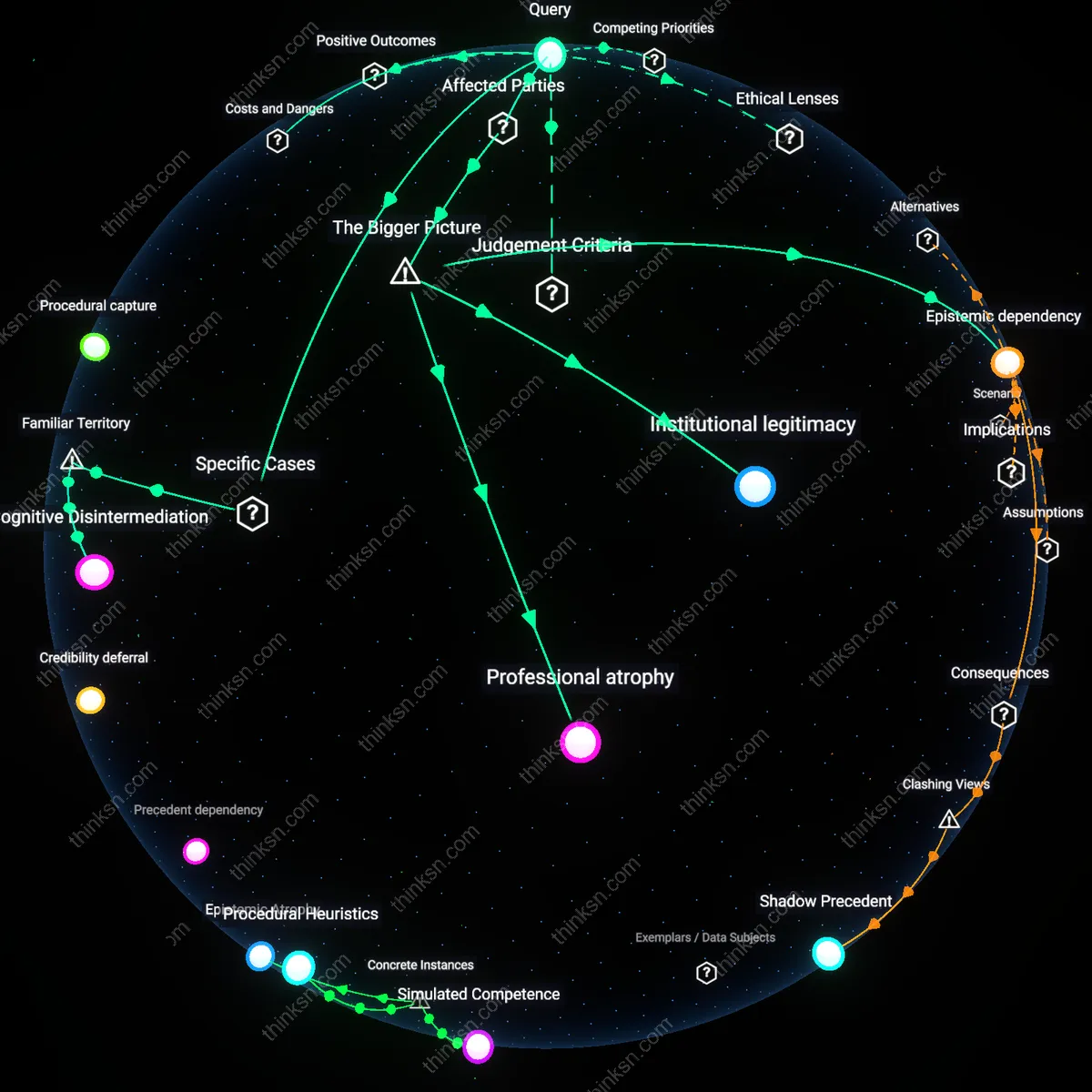

Analysis reveals 8 key thematic connections.

Key Findings

Litigation Ecosystem Lag

A mid-career lawyer should prioritize courtroom advocacy skills over AI tools because the inertia of procedural rules and institutional adoption in state trial courts creates a temporally protected niche for human performance that AI cannot yet exploit. The pace at which local civil procedure, evidentiary rulings, and judge-specific courtroom norms evolve is governed by judicial calendars, court funding cycles, and bar association lobbying—not by technological feasibility—meaning that AI integration lags years behind capability, especially outside federal or well-resourced jurisdictions. This delay establishes a de facto moratorium on automation in trial-level litigation, where outcome-controlling discretion resides with judges accustomed to analog workflows. The overlooked dynamic is that automation risk is not a function of AI’s technical ceiling but of the lowest common denominator in legal *implementation infrastructure*, a reality that flips the usual narrative equating sophistication with resilience.

Advocacy Signature Entropy

Courtroom advocacy remains defensible against automation only to the extent that the lawyer’s personal style produces unpredictable, narratively complex performances that resist algorithmic replication in real-time judicial decision environments, the quality that appellate courts retrospectively condense into 'credibility differential' in reversal rationales. Judicial memory for oral argument turns less on doctrine than on affective anchoring, such as tone shifts during rebuttal or strategic silence, which are hard to codify yet can be decisive in close cases. AI, bound to pattern optimization, routinely flattens these anomalies. High-entropy advocates, those who systematize improvisation, are therefore not obsolete but *counter-optimized*, a category invisible to automation risk models that assume uniform skill distribution. The overlooked dependency is that courts automate inputs, not outcomes, meaning irregular human behavior can be a structural hedge if deliberately cultivated beyond rote 'charisma' or 'style' into procedural friction.

Epistemic Dependence

Investing in AI tools offers stronger protection against automation because law firms that adopt predictive discovery and motion-drafting platforms such as Casetext or Harvey create epistemic dependence: judges, clerks, and opposing counsel come to rely on the uniformity and citation density these systems produce. Advocacy that deviates from algorithmically structured argumentation then appears not just disadvantageous but professionally deviant. This shift reframes competence as alignment with machine-generated norms, rendering even the most persuasive courtroom performers obsolete if they resist integration. The counterintuitive result is not that AI imitates lawyers, but that the legal field reshapes its epistemology around AI output, privileging structural conformity over performative skill.

Procedural Obsolescence

A mid-career lawyer who prioritizes courtroom advocacy over AI tools accelerates their own procedural obsolescence. Advocacy skills, once the cornerstone of legal defense, are becoming structurally incompatible with the automated workflows now governing discovery, motion screening, and case triage. In jurisdictions like the Southern District of New York, AI-assisted docket management has reduced the weight of oral argument in pre-trial phases since 2021. This shift erodes the historical premium on live persuasion and replaces it with algorithmic predictability. The transition is not merely technological but institutional: courts are now optimizing for speed and data coherence rather than adversarial drama, making the advocate’s craft increasingly residual despite its symbolic centrality.

Tool Dependency Trap

Investing in AI tools now exposes mid-career lawyers to a tool dependency trap. The AI systems rolled out between 2020 and 2023 by firms like Ross Intelligence and LexisNexis are designed not for standalone utility but to lock users into proprietary data ecosystems controlled by legal tech conglomerates. This marks a break from the 20th-century model, in which research tools augmented independent judgment. Today’s AI instead embeds opaque risk models that subtly redefine legal strategy around vendor-generated benchmarks. The result is a hidden dependency that compromises both autonomy and long-term adaptability, as vendors like Thomson Reuters increasingly influence what counts as 'reasonable' legal analysis.

Judicial Algorithmization Lag

Focusing on courtroom advocacy underestimates the damage caused by the judicial algorithmization lag: the period since 2018 during which judges have been slow to understand AI evidence but fast to accept its outputs. This creates a dangerous gap in which automated risk assessments in bail, sentencing, and custody are admitted with minimal scrutiny despite documented racial biases in tools like COMPAS. Because the transition from human judgment to algorithmic deference has occurred without commensurate procedural safeguards, lawyers skilled in traditional rhetoric are ill-equipped to challenge the technical opacity that now shapes case outcomes. Advocacy is thereby revealed not as obsolete but as dangerously ceremonial.

Institutional Inertia

A mid-career lawyer who prioritizes courtroom advocacy over AI tools will be better protected from automation because legal institutions systematically favor precedent and human discretion, which slows the integration of automated decision-making in trial settings. State-level court systems, particularly in common law jurisdictions like the U.S., rely on procedural formalism and judicial gatekeeping that privilege experienced advocates who can navigate ambiguity and deploy persuasive rhetoric, conditions that resist algorithmic substitution. This creates a protective buffer for litigators who refine interpersonal and improvisational skills, revealing that the legal profession’s resistance to technological displacement is not due to technical limitations but to institutional inertia rooted in procedural legitimacy.

Judicial Feedback Loops

Courtroom advocacy remains a resilient skill against automation because appellate outcomes—which shape trial strategy—depend on interpretive reasoning that is socially validated through judicial hierarchies, not computational accuracy. When trial lawyers frame arguments that anticipate how higher courts will interpret doctrinal nuance and public policy, they participate in feedback loops where rulings reinforce the value of narrative reasoning over pattern recognition, thereby preserving demand for human advocates. This dynamic reveals that the endurance of courtroom skills is not a lag in technological progress but a function of judicial feedback loops that reward strategic anticipation of human, not machine, cognition.