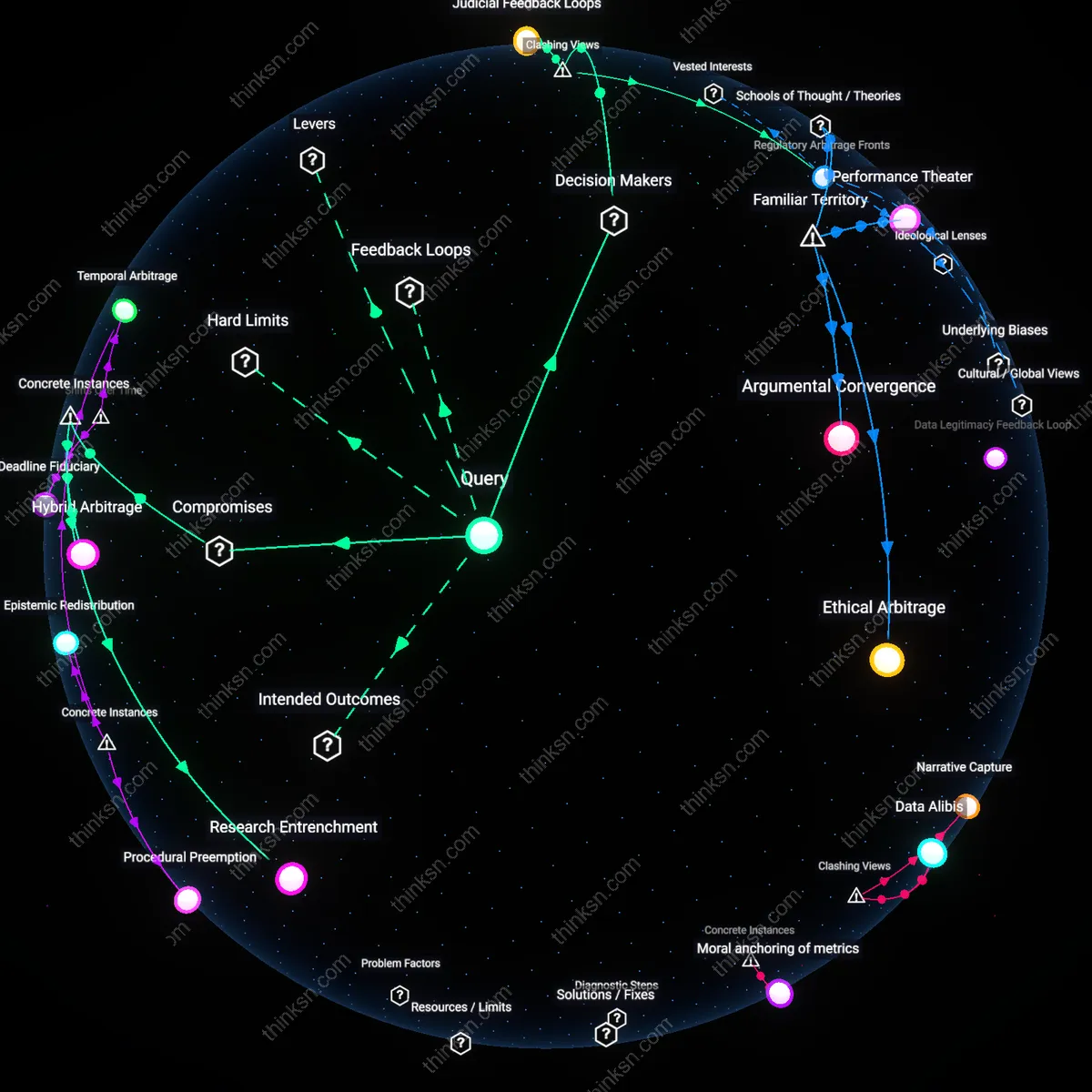

Narrative Capture

Moral narratives in recent corporate cases have diminished judicial reliance on raw data by reframing statistical evidence as ethically suspect or dehumanizing, particularly in post-2008 financial litigation. Under public and media pressure to hold institutions accountable in human terms, judges increasingly treat datasets, such as algorithmic risk assessments or corporate audit trails, not as neutral tools but as artifacts of systemic moral failure. This shift, visible in rulings from the Southern District of New York in cases against banks like Wells Fargo and Lehman-linked entities, reveals a growing preference for testimonial coherence over probabilistic reasoning: data is discounted when it conflicts with compelling stories of harm. The non-obvious result is that transparency in data can backfire, making corporations seem more culpable not for what the numbers show, but for their perceived coldness in deploying them.

Data Alibis

Judges have intensified their scrutiny of data in corporate cases not to override moral narratives but to expose their instrumentalization, especially since the rise of ESG-related litigation after 2020. In cases like those involving ExxonMobil or Volkswagen, courts have treated corporate-submitted data not as objective evidence but as performative alibis masquerading as accountability, dismissing sustainability metrics or emissions reports as moral theater. This skepticism has led to greater reliance on third-party or adversarially validated datasets, such as satellite emissions tracking or whistleblower analytics, which are framed as resistant to narrative manipulation. The underappreciated dynamic is that moral storytelling has not displaced data. Instead, it has triggered a judicial arms race to authenticate data as a counter-narrative force, revealing data’s evolving role as a tool to unmask strategic morality.

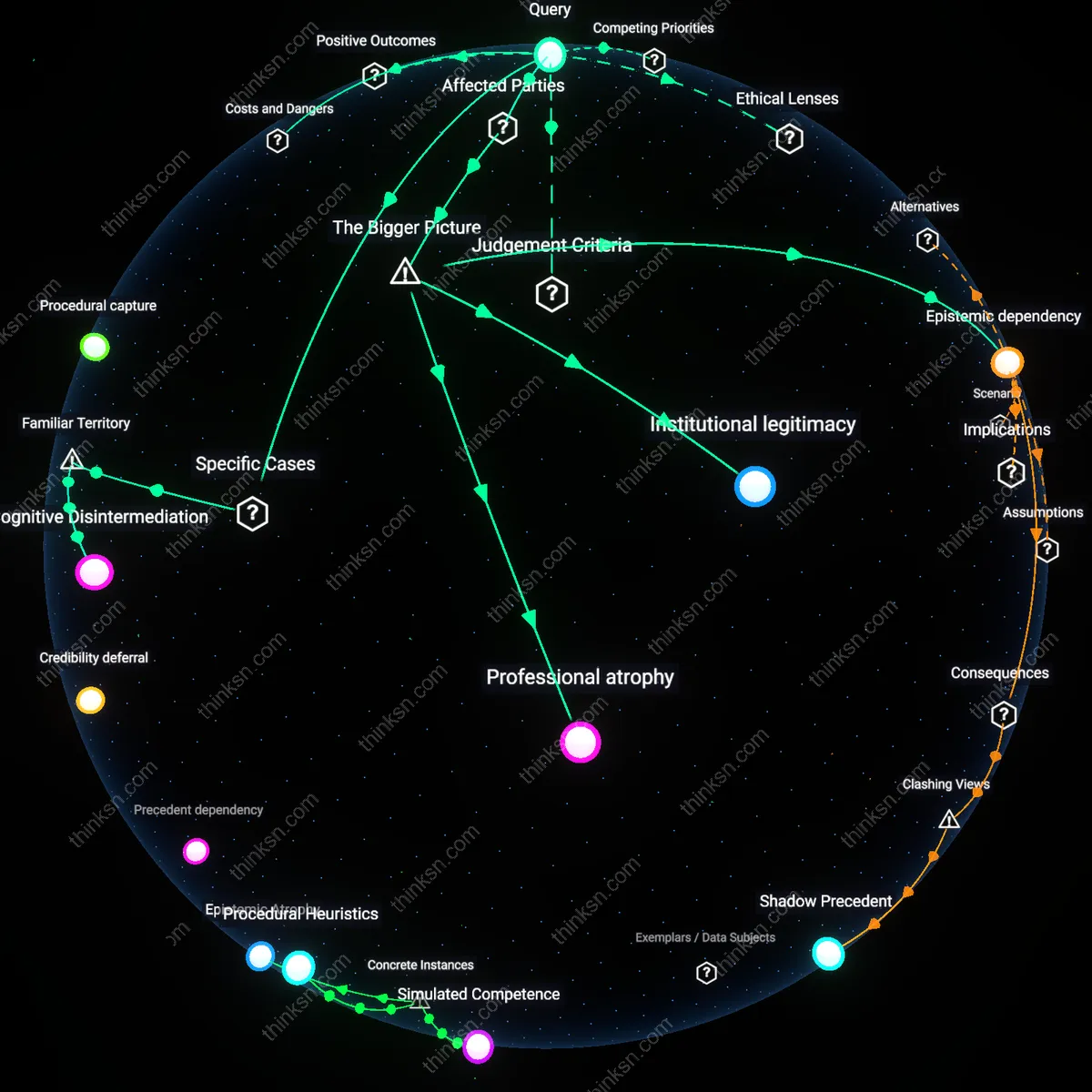

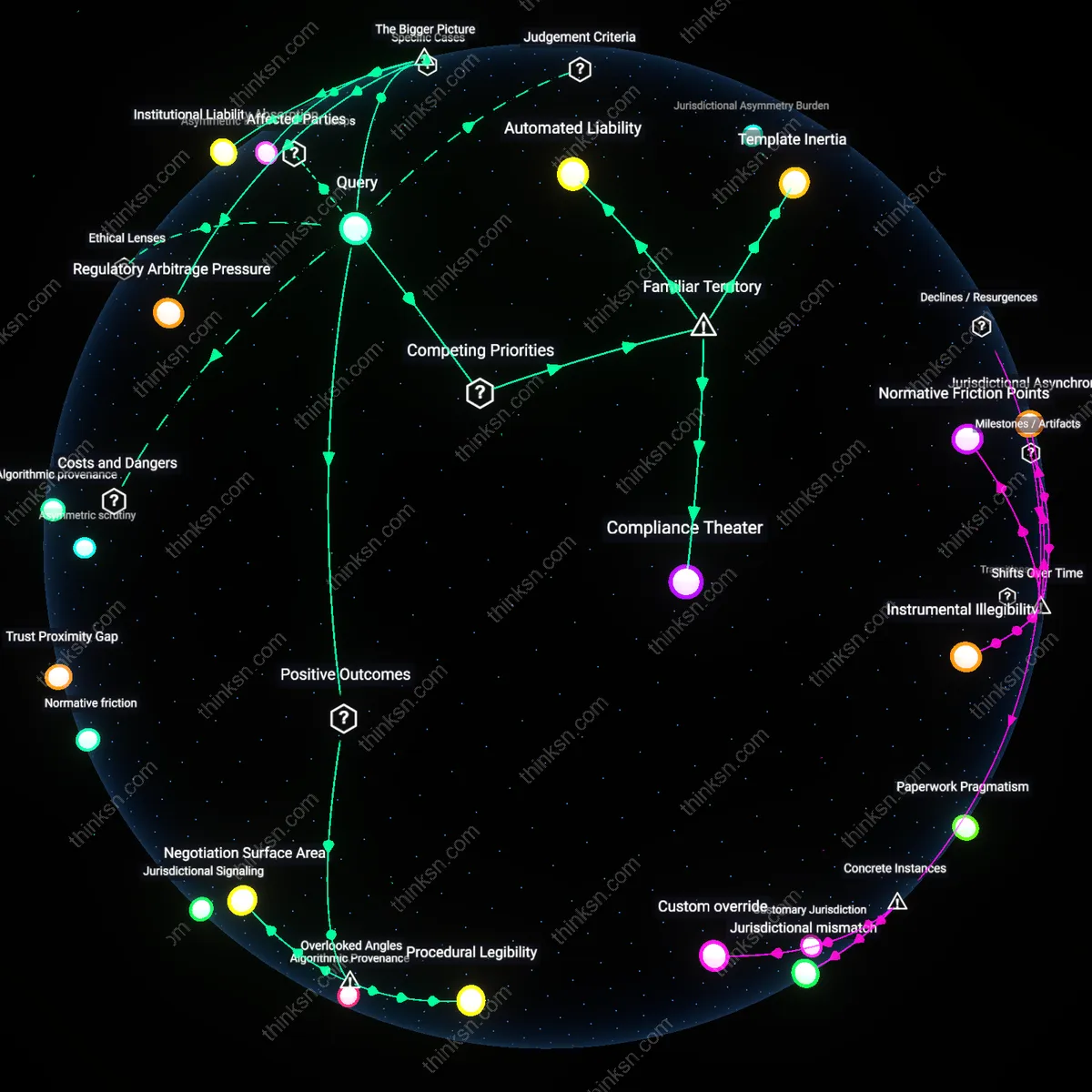

Temporal Legibility

The judicial preference for moral narratives over data is not a rejection of quantification but a recalibration around time-bound intelligibility, evident in bankruptcy and antitrust decisions such as those involving Purdue Pharma or Amazon from 2015 onward. Judges treat longitudinal data as unreliable not due to inaccuracy but because it complicates clear moral periodization—courts need narrative closure, which data often defers by showing gradual, systemic causation. As a result, judges selectively truncate data series to align with legally recognized moments of harm or decision-making, rendering data dependent on narrative chronologies rather than the reverse. The overlooked insight is that data has not lost authority, but its legitimacy is now contingent on fitting within judicially constructed moral timelines, revealing a hidden regime of temporal legibility shaping evidentiary weight.

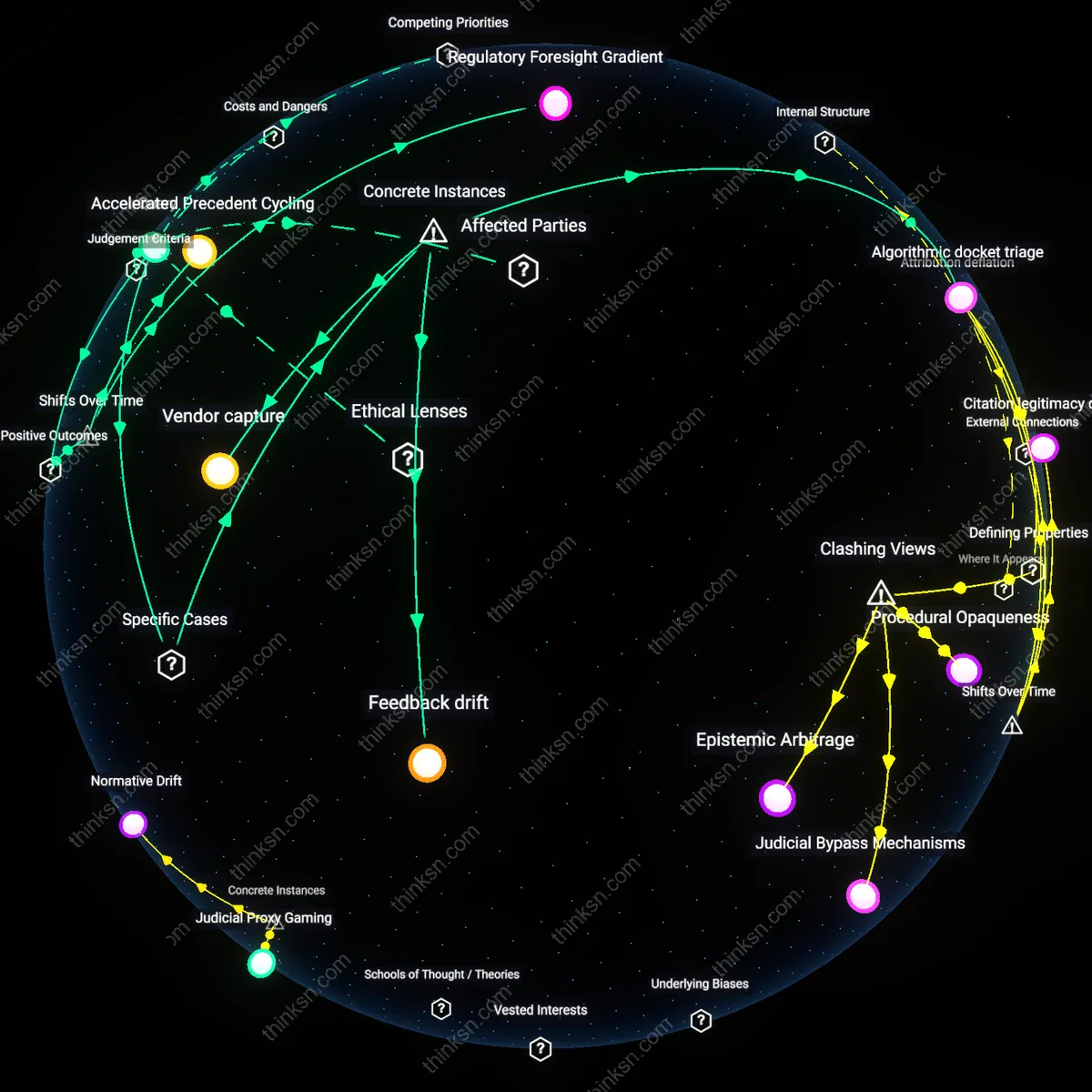

Judicial Epistemic Recalibration

Judges increasingly treat corporate moral narratives as evidence of systemic risk, which reorients their reliance on data toward predictive validity rather than retrospective accuracy. Faced with high-profile cases like Volkswagen’s emissions scandal or Facebook’s privacy violations, judges now interpret corporate admissions of ethical failure, and not just numerical discrepancies, as markers of institutionalized deception, prompting heightened scrutiny of audited datasets. This shift operates through courtroom practices in federal district courts and regulatory tribunals, where moral framing by prosecutors has activated judicial skepticism toward sanitized data submissions, particularly when those submissions conflict with the organization's documented conduct. The non-obvious consequence is that data is no longer assessed neutrally but filtered through a credibility cascade initiated by moral narrative, so that evidentiary weight varies with perceived corporate character.

Regulatory Narrative Capture

Moral narratives in corporate misconduct cases have enabled regulatory agencies to reposition themselves as moral interpreters, thereby influencing judicial data reliance through institutional intermediation. When entities like the SEC or DOJ frame enforcement actions around fiduciary betrayal or environmental harm—as seen in the Purdue Pharma or Enron cases—they embed qualitative judgments into settlement records, which courts then cite as authoritative context for data evaluation. This channel works through administrative precedent formation, where judicial deference to agency narrative authority converts moral claims into structural assumptions about data reliability. The underappreciated dynamic is that judges outsource epistemic labor to regulators who, in turn, weaponize morality to shape how quantitative evidence is contextualized and weighted downstream.

Data Legitimacy Feedback Loop

Public moral outrage channeled through media and legislative demands after corporate scandals has forced courts to treat data as politically embedded, not technically autonomous, thereby redefining evidentiary standards. In cases like Wells Fargo’s fake accounts or Boeing’s 737 MAX approvals, sustained public discourse framing data manipulation as moral collapse pressured appellate courts to question the neutrality of internal corporate metrics, even when those metrics were statistically sound. This operates through judicial responsiveness to democratic accountability pressures, particularly where judges are elected or subject to political confirmation and perceived legitimacy depends on alignment with societal expectations of justice. The overlooked mechanism is that moral narratives do not just supplement data. They trigger a recursive process in which judicial skepticism generates demand for new forms of data, which in turn require further moral validation, cementing an interdependent cycle.

Moral Anchoring of Metrics

In the Enron prosecutions that followed the company's 2001 collapse, the courts ultimately relied not on raw financial data alone but on how prosecutors framed those figures as evidence of deliberate deceit by senior executives, revealing that data only gains judicial traction when embedded in a narrative of moral betrayal. The mechanisms of accounting fraud were complex, but the decisive turn came when spreadsheets and off-balance-sheet entities were presented not as technicalities but as tools of greed and deception, thus activating judicial willingness to treat quantifiable anomalies as legal facts. This shows that judicial reliance on data has not increased autonomously: its admissibility and persuasive force depend on moral narratives that render abstract numbers as symptoms of ethical collapse, a continuity with older equity doctrines in which conscience shaped the interpretation of evidence. The underappreciated point is that data never speaks for itself in court; it must be morally contextualized to become actionable.

Moralized Quantification

Judges increasingly treat corporate data as suspect testimony that must be morally contextualized. The shift accelerated after the 2008 financial crisis, when forensic audits exposed systematic manipulation of risk metrics at firms such as AIG, echoing the earlier Enron collapse and revealing that data was not neutral but shaped by ethical failures. Courts responded by incorporating narrative evidence of corporate culture into their weighing of evidence, making numbers legible only through moral framing. The mechanism operates through judicial adoption of SEC remedial reports as interpretive lenses for statistical evidence, privileging consistency with reform narratives over mathematical precision. What is underappreciated is that data has not been rejected but morally conditioned, transforming quantification into a form of accountability theater rather than objective proof.

Temporal Evidentiary Burden

Courts now impose an asymmetric timeline on corporate data validity: past data is treated as inherently compromised by moral failure, while future compliance data is granted provisional credibility. This shift crystallized in post-Dodd-Frank settlements involving Citigroup and Bank of America, which established monitoring periods as evidentiary bridges, and the resulting temporal bifurcation institutionalizes moral rehabilitation as a prerequisite for data trust. The system functions through court-appointed monitors whose reports validate subsequent datasets, making the admissibility of numbers contingent on narrative arcs of reform. What remains underrecognized is that this timeline reorders evidentiary logic, replacing static reliability with phased moral progression as the foundation of data admissibility.