Smart Speakers: Convenience vs. Biometric Risk?

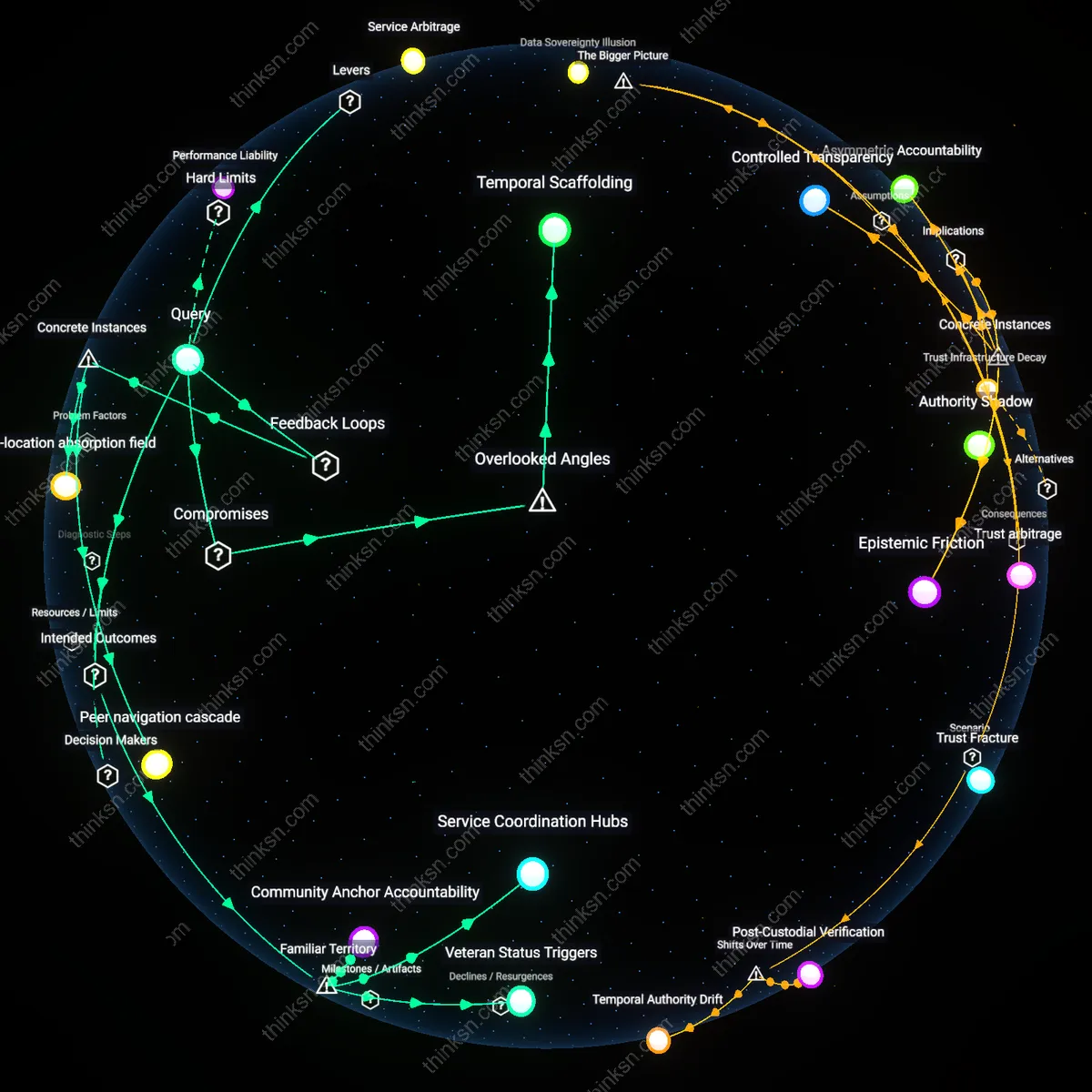

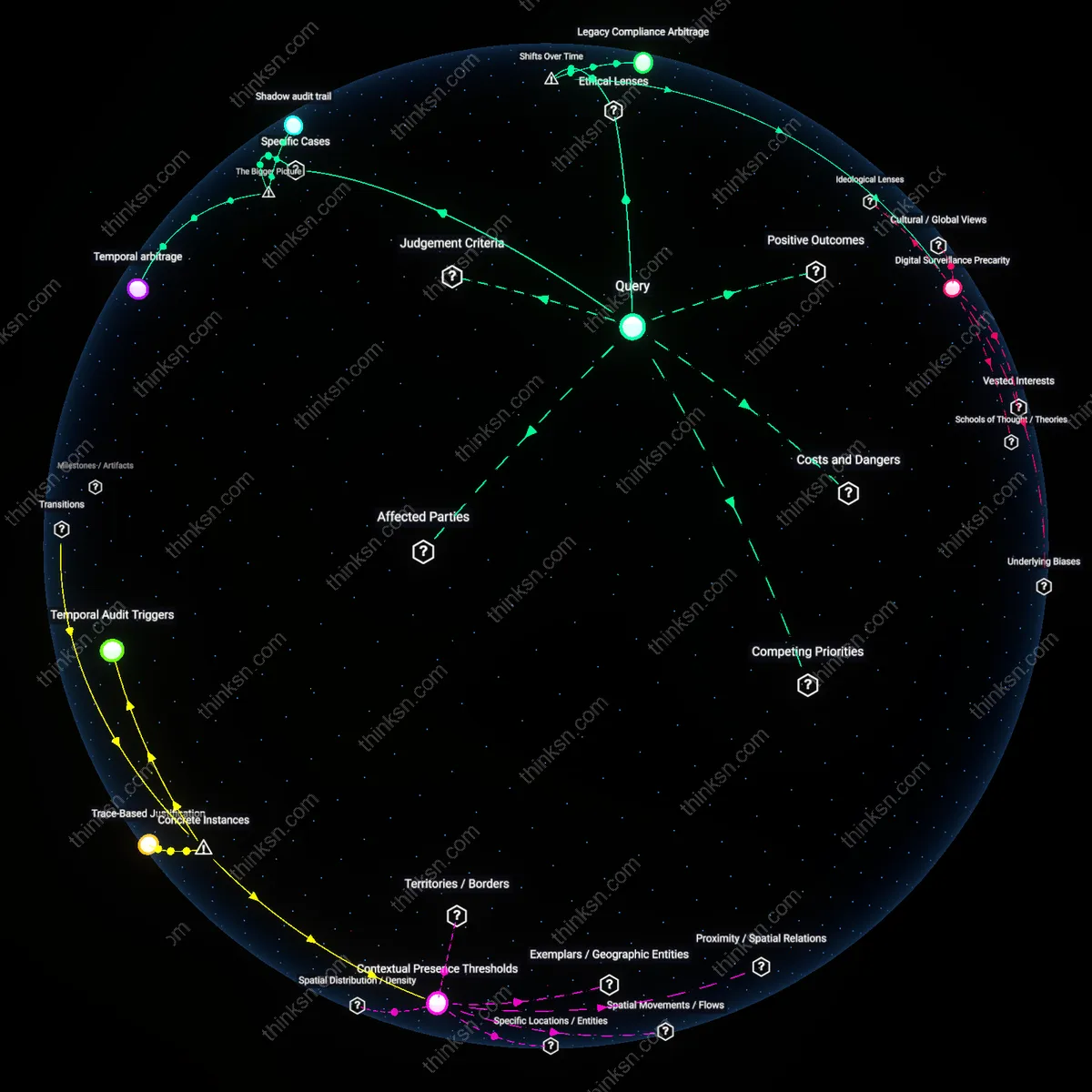

Analysis reveals 7 key thematic connections.

Key Findings

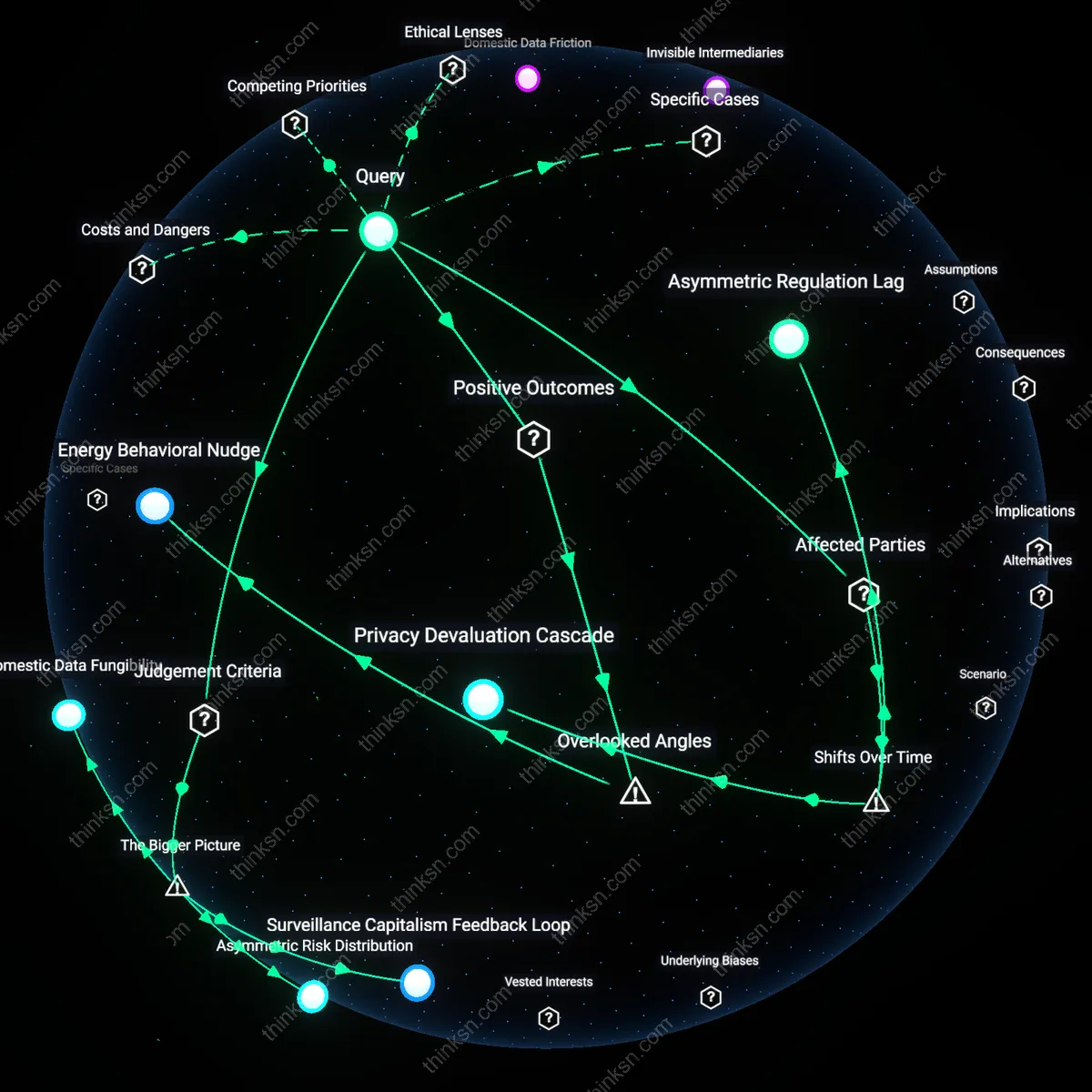

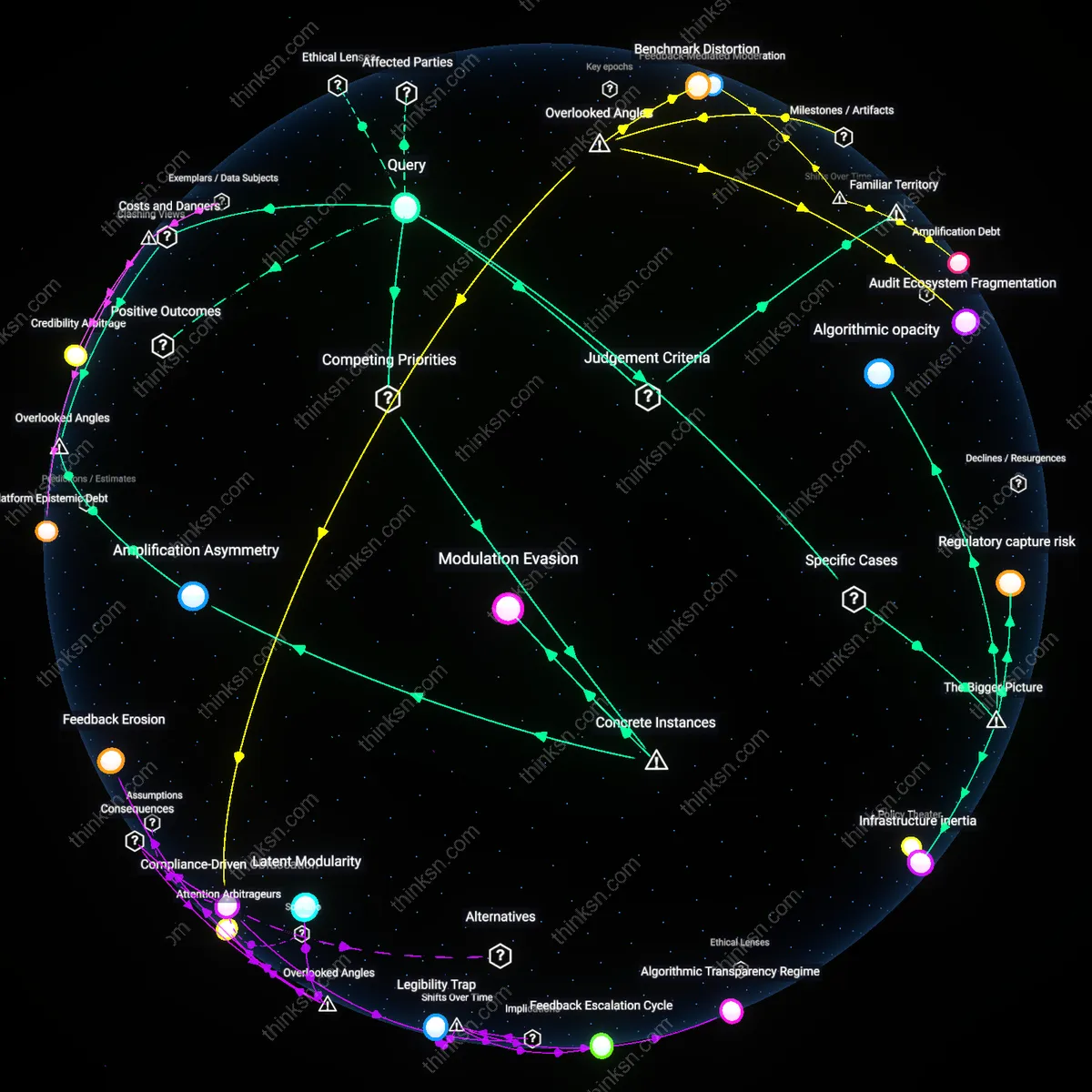

Privacy Devaluation Cascade

For early adopters, the convenience of voice-controlled smart-home speakers now outweighs biometric risks because the normalization of ambient data collection between 2015 and 2020 shifted consumer expectations. Through iterative design and free-tier services, tech companies embedded voice assistants into daily routines, conditioning households to trade the uniqueness of their voices for hands-free efficiency. This transition eroded pre-2010 norms under which biometric data was confined to high-security contexts such as border control or banking. The routine domestication of voiceprints has desensitized users to downstream surveillance possibilities, particularly urban millennials who prioritize accessibility over data sovereignty.

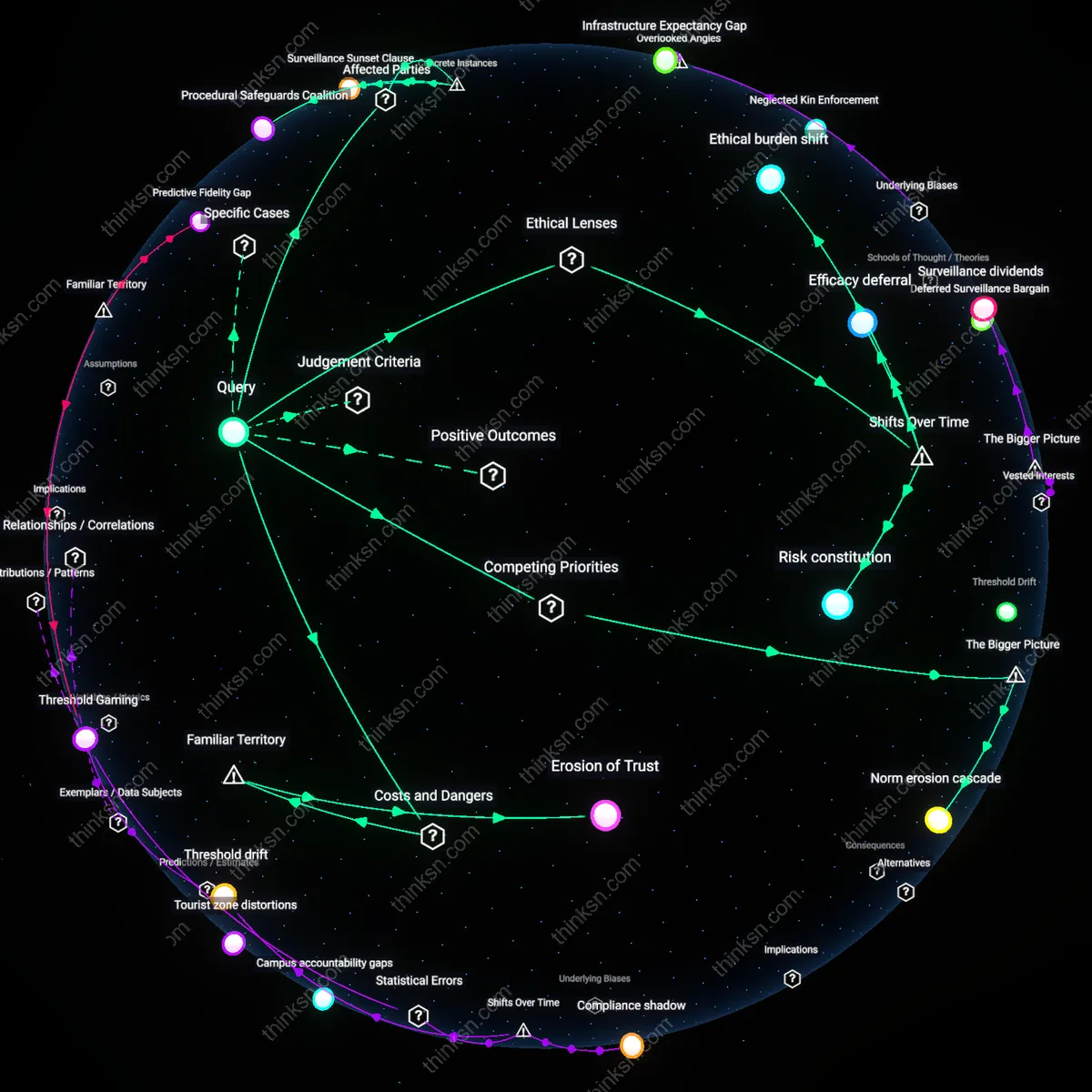

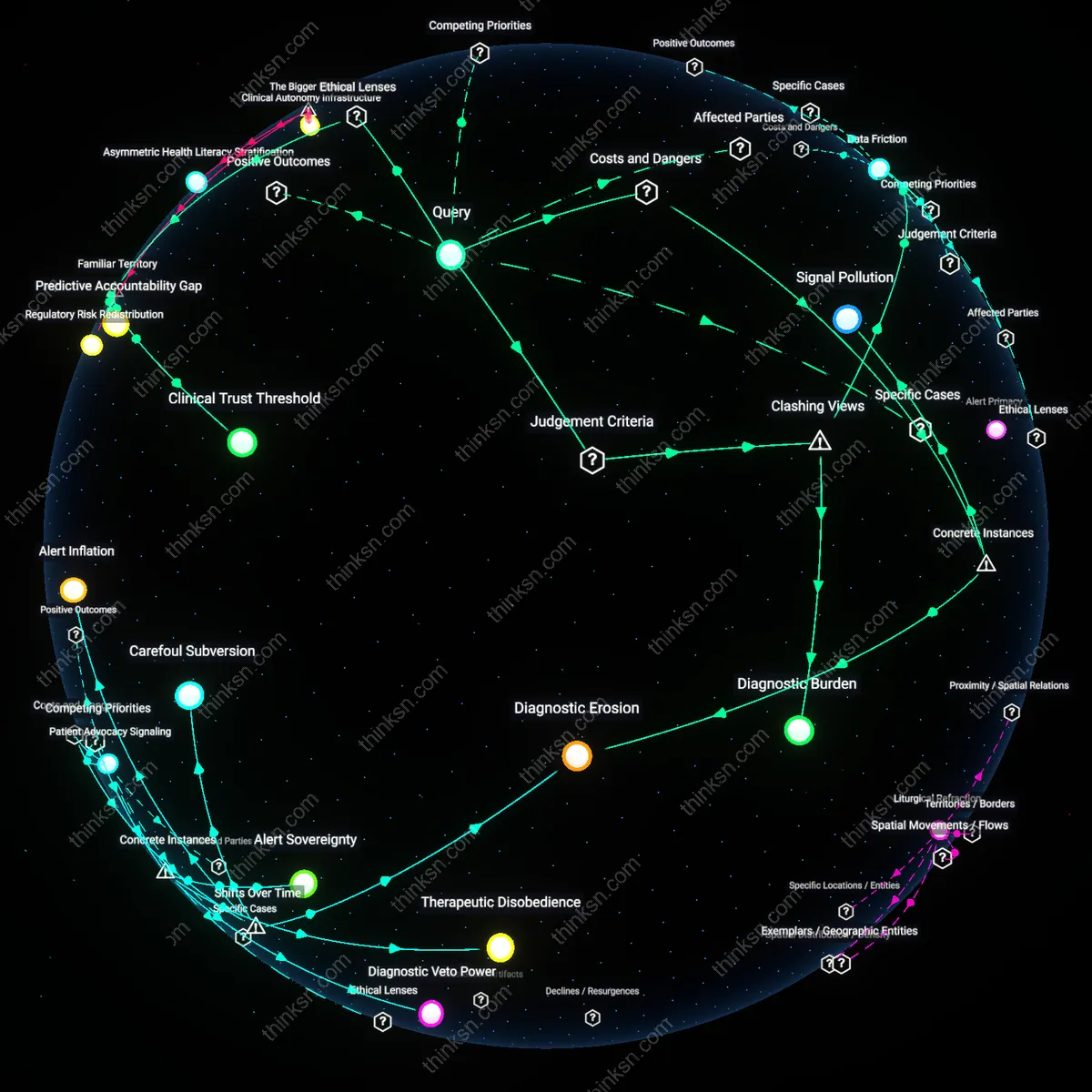

Institutional Trust Arbitrage

For elderly and disabled populations, the utility of voice assistants outweighs biometric risks because of a post-2018 pivot in healthcare delivery, in which Medicaid waivers and aging-in-place programs began subsidizing smart speakers as assistive devices. This shift transferred responsibility for data governance from centralized health institutions to consumer tech firms, creating a new dependency on Amazon or Google for medical reminders and emergency calls. The non-obvious effect is that vulnerable groups, historically protected by HIPAA-bound providers, now expose voice biomarkers through devices subject to weaker regulatory oversight, because they trust a familiar brand more than they scrutinize an obscure privacy policy.

Asymmetric Regulation Lag

For low-income renters, the risks of biometric data misuse now outweigh convenience because of a 2020–2023 surge in landlord-deployed smart speaker pilots, in which property management firms in cities like Phoenix and Detroit began installing voice-enabled units as 'amenities' without informed consent. This shift turns homes into unregulated data extraction zones: tenant agreements rarely account for continuous voice monitoring, and eviction laws give tenants who refuse no recourse. The overlooked dynamic is that what was once a consumer choice marketed to homeowners has become a coercive, top-down surveillance layer in rental housing, exposing marginalized groups to predictive policing algorithms trained on vocal stress markers.

Surveillance Capitalism Feedback Loop

The risks outweigh the convenience because corporate profit models systematically incentivize continuous voice data collection, which erodes user autonomy. Platforms like Amazon Alexa refine their advertising and service ecosystems by monetizing biometric voiceprints through third-party data brokers, creating a self-reinforcing cycle in which convenience is subsidized by covert surveillance. The cycle is sustained by asymmetrical power in digital markets, which normalizes consent-through-use. This dynamic is underappreciated because users perceive voice interactions as discrete events, not as cumulative behavioral training inputs for predictive algorithms operated by ecosystems beyond the speaker itself, such as smart city planning or insurance risk profiling. The real driver is not the device but the infrastructural integration of voice data into automated decision-making networks that predate and outlast individual moments of consent.

Asymmetric Risk Distribution

The convenience does not outweigh the risks for marginalized populations, who bear disproportionate harm from biometric misuse while gaining little from adoption. They face greater exposure to law enforcement voiceprint databases (e.g., the FBI’s Voice Biometric System) and have less recourse when voice data is weaponized in housing or employment discrimination, while hands-free control often fails to address their more pressing needs, such as energy affordability or physical accessibility. This imbalance is enabled by regulatory frameworks that treat biometric data as personal property rather than collective civil infrastructure, privileging innovation speed over societal resilience. The overlooked consequence is that voice tech amplifies structural inequity not through intent but through differential impact, scaled by existing state and corporate surveillance architectures.

Domestic Data Fungibility

The convenience does not outweigh the risks because voice data captured in private homes is routinely repurposed for non-domestic functions. Recordings from Google Nest devices are used to train large language models deployed in customer service automation, meaning intimate vocal patterns shape profit-generating AI systems far removed from household routines. This happens through contractual fine print that grants platform owners perpetual, irrevocable data rights, so domestic speech becomes industrial training fuel by legal rather than technical means. The underappreciated reality is that the home has become a data extraction site not because of hacking or breaches, but through legitimate, user-agreed data flows that blur the boundary between personal expression and commercial raw material.

Energy Behavioral Nudge

In Germany’s energy-cooperative housing blocks, voice-controlled thermostats linked to real-time grid demand data have reduced household energy consumption by passively guiding residents toward off-peak usage through vocal feedback. The system works by delaying responses during high-load periods with phrases like 'I’ll adjust that in 20 minutes when electricity is greener,' making abstract grid dynamics personally salient. The overlooked mechanism is voice as a temporal mediation layer: conversational pacing inherently introduces response lag that, when strategically timed, reframes waiting as environmental responsibility and subtly reshapes user expectations of instant gratification in energy use.
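
The deferral logic described above can be illustrated with a minimal sketch. Everything in it is an assumption made for illustration rather than a description of any deployed system: the normalized `grid_load` signal, the `HIGH_LOAD_THRESHOLD` cutoff, the `ThermostatReply` type, and the function name are all hypothetical. Only the 20-minute delay and the wording of the deferred reply are taken from the example phrase in the text.

```python
from dataclasses import dataclass

# Hypothetical cutoff: share of grid capacity above which requests are deferred.
HIGH_LOAD_THRESHOLD = 0.8
# Deferral window in minutes; mirrors the "20 minutes" in the example phrase.
DEFER_MINUTES = 20


@dataclass
class ThermostatReply:
    apply_now: bool     # True if the setpoint change is applied immediately
    delay_minutes: int  # How long the change is postponed (0 if applied now)
    spoken_text: str    # What the assistant says back to the resident


def respond_to_setpoint_request(grid_load: float, target_celsius: float) -> ThermostatReply:
    """Apply a temperature change now, or defer it while grid load is high.

    grid_load is an assumed normalized demand signal between 0 and 1.
    """
    if not 0.0 <= grid_load <= 1.0:
        raise ValueError("grid_load must be between 0 and 1")

    if grid_load >= HIGH_LOAD_THRESHOLD:
        # High-load period: postpone the change and explain the delay aloud.
        return ThermostatReply(
            apply_now=False,
            delay_minutes=DEFER_MINUTES,
            spoken_text=f"I'll adjust that in {DEFER_MINUTES} minutes when electricity is greener.",
        )

    # Normal load: apply the change right away.
    return ThermostatReply(
        apply_now=True,
        delay_minutes=0,
        spoken_text=f"Setting the temperature to {target_celsius:g} degrees now.",
    )
```

Calling `respond_to_setpoint_request(0.9, 21)` under these assumptions yields a deferred reply, while a load of `0.3` applies the change at once. A fixed threshold is the simplest possible policy; a real system could just as plausibly scale the delay with load or with a forecast of when demand will fall.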