Is an Audit Needed When Algorithms Rank Small Businesses?

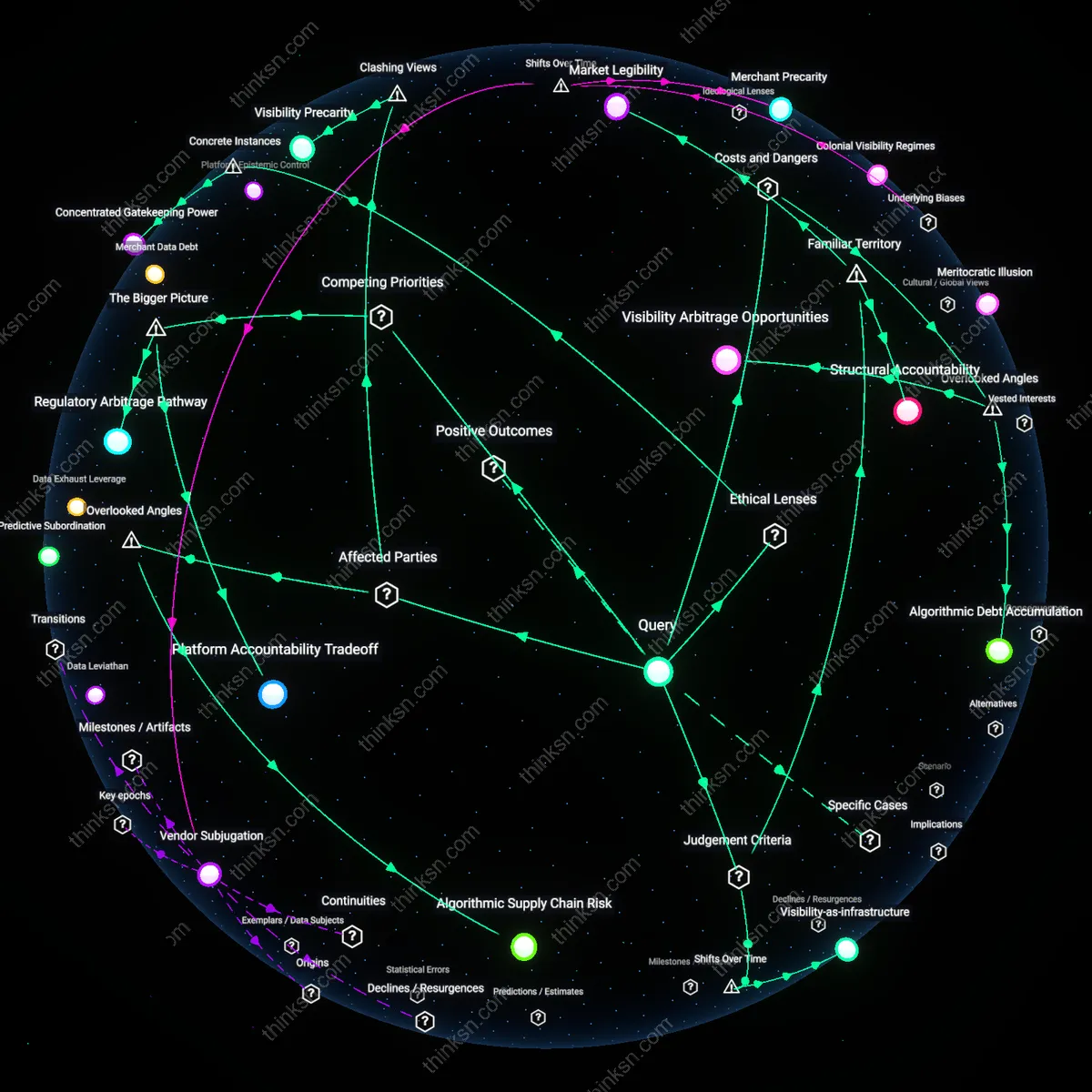

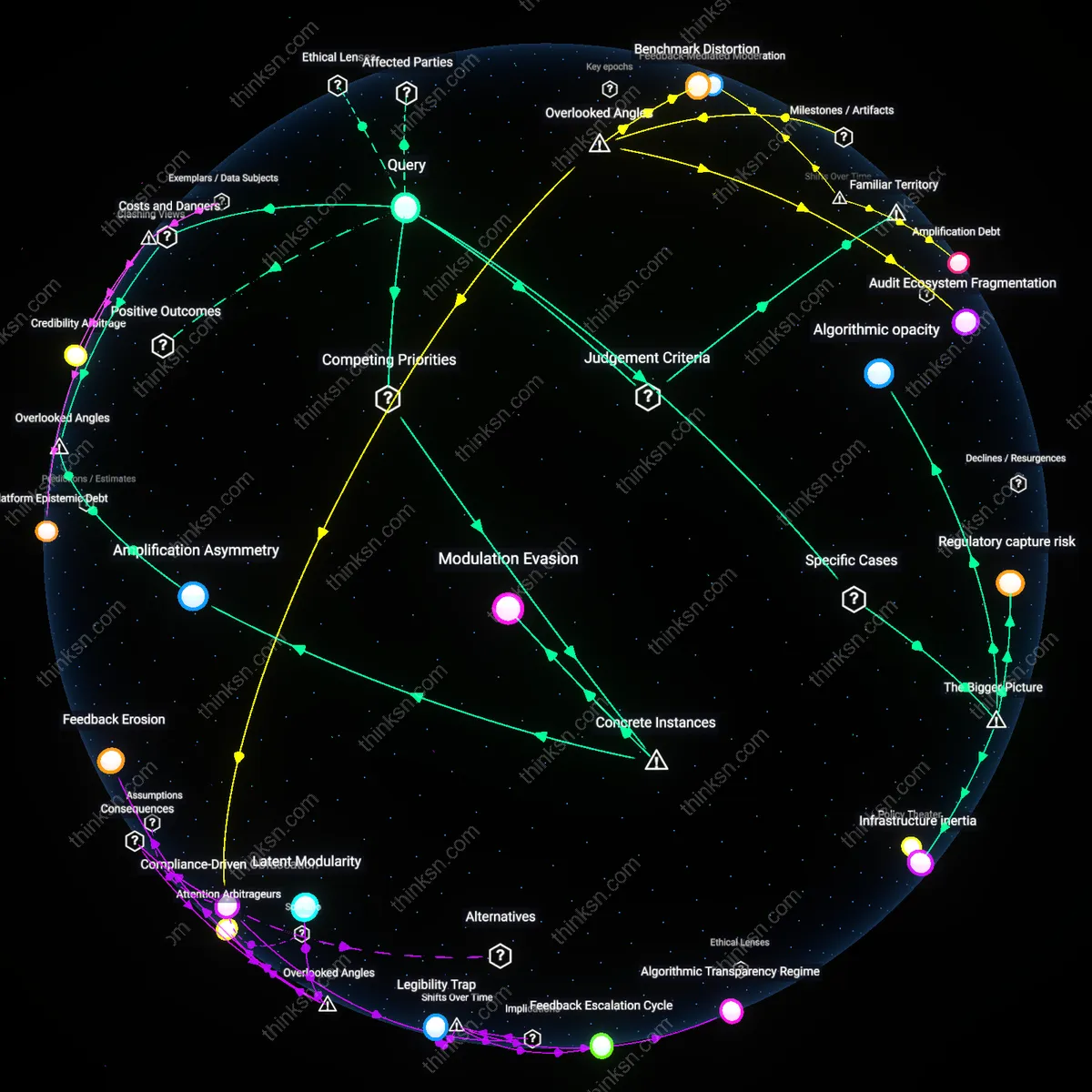

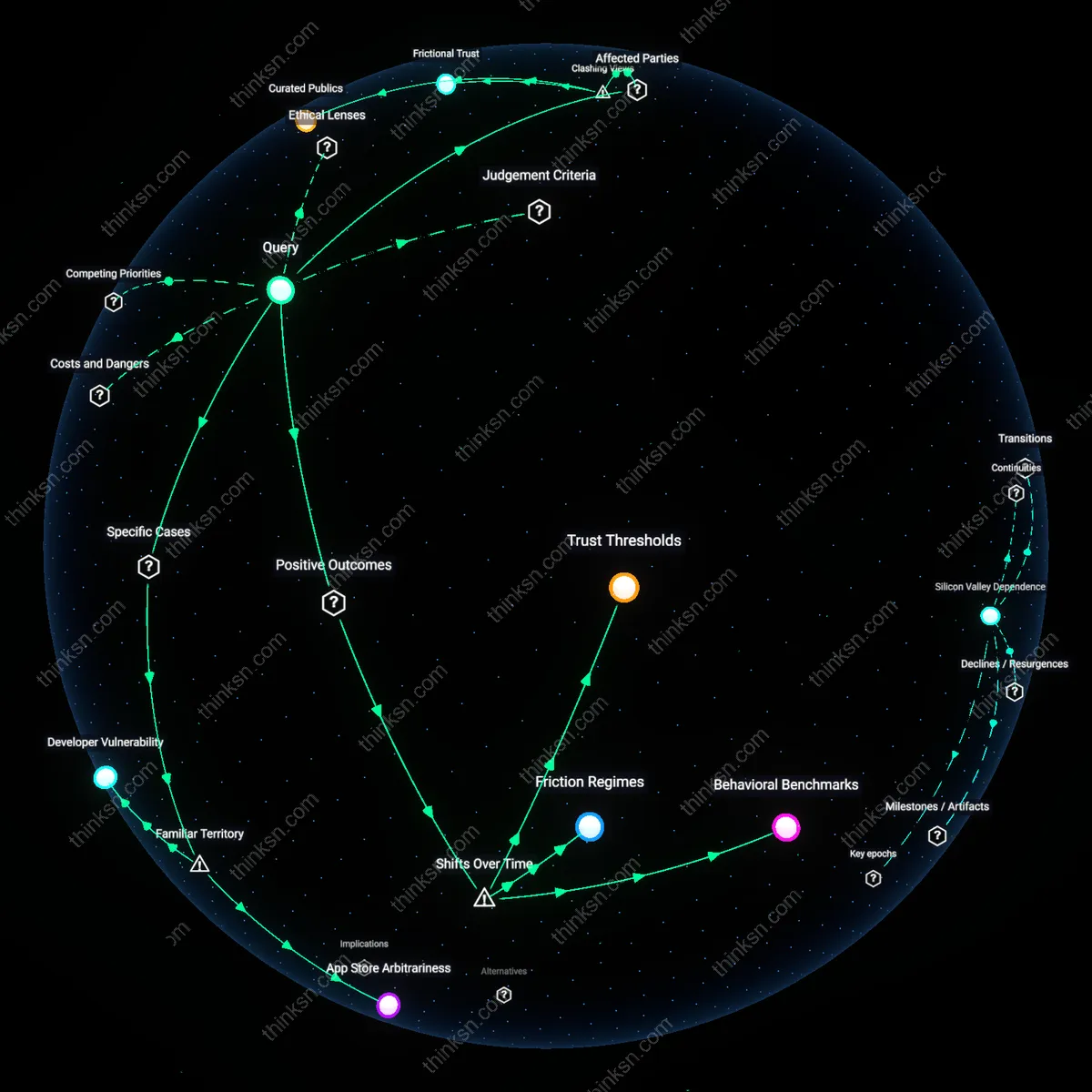

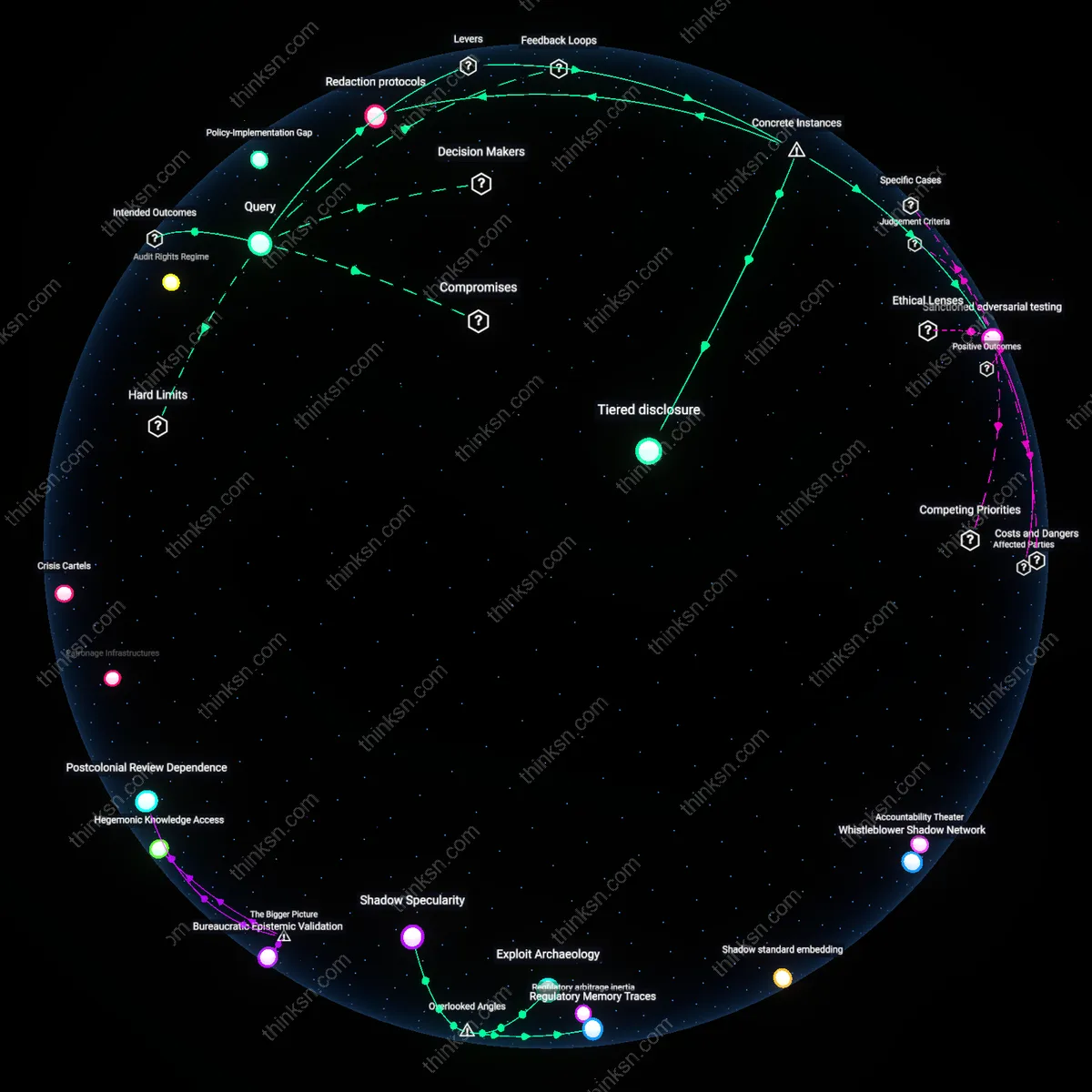

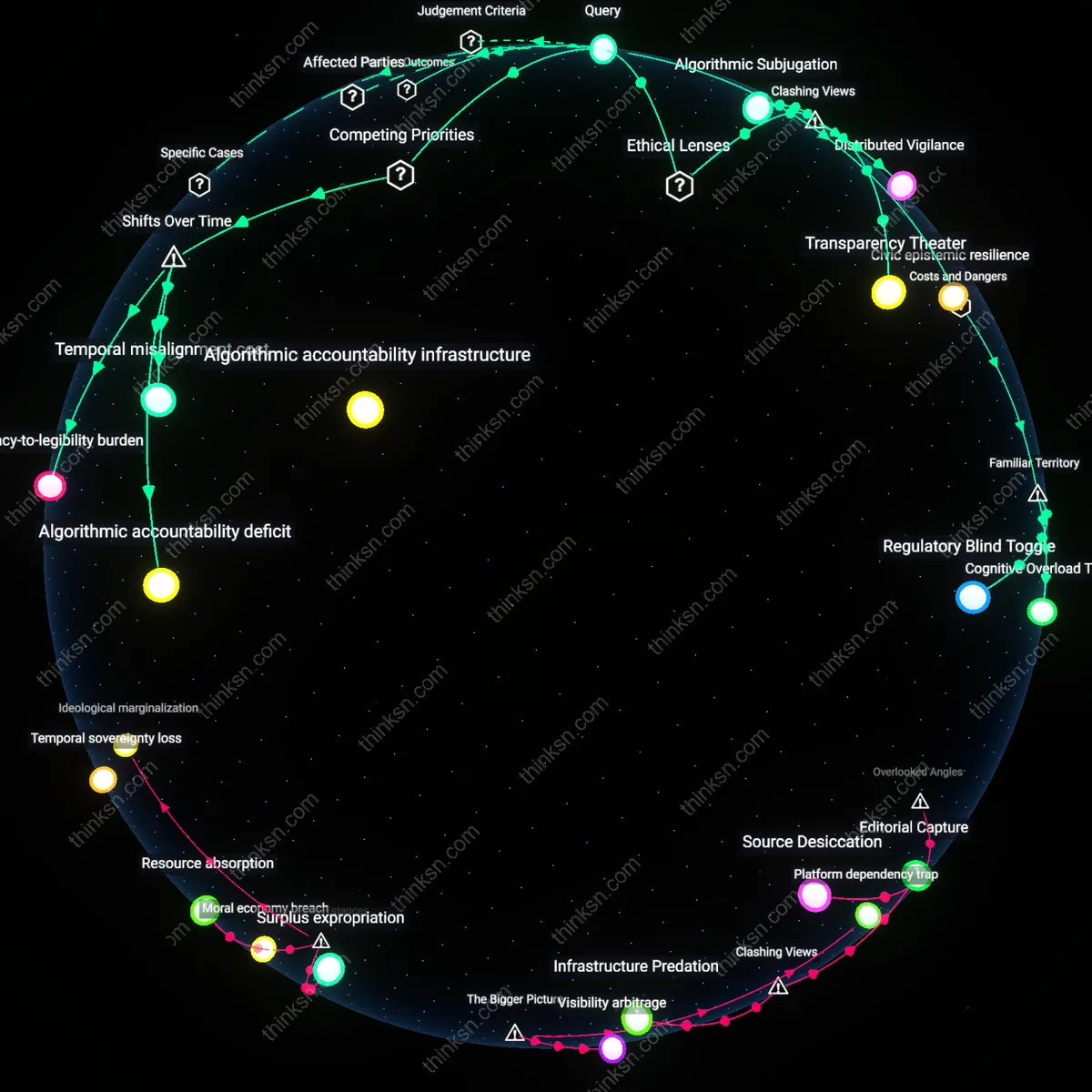

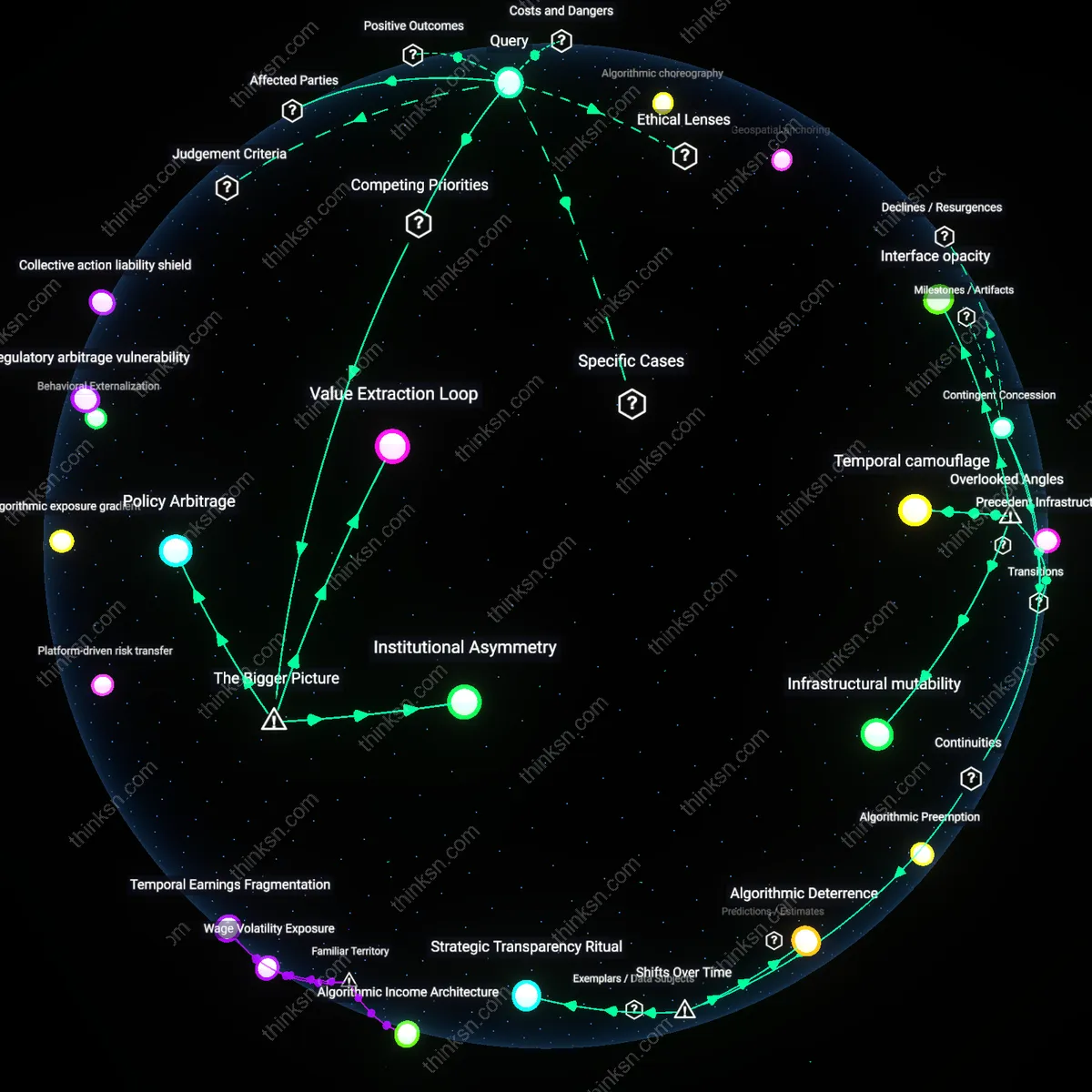

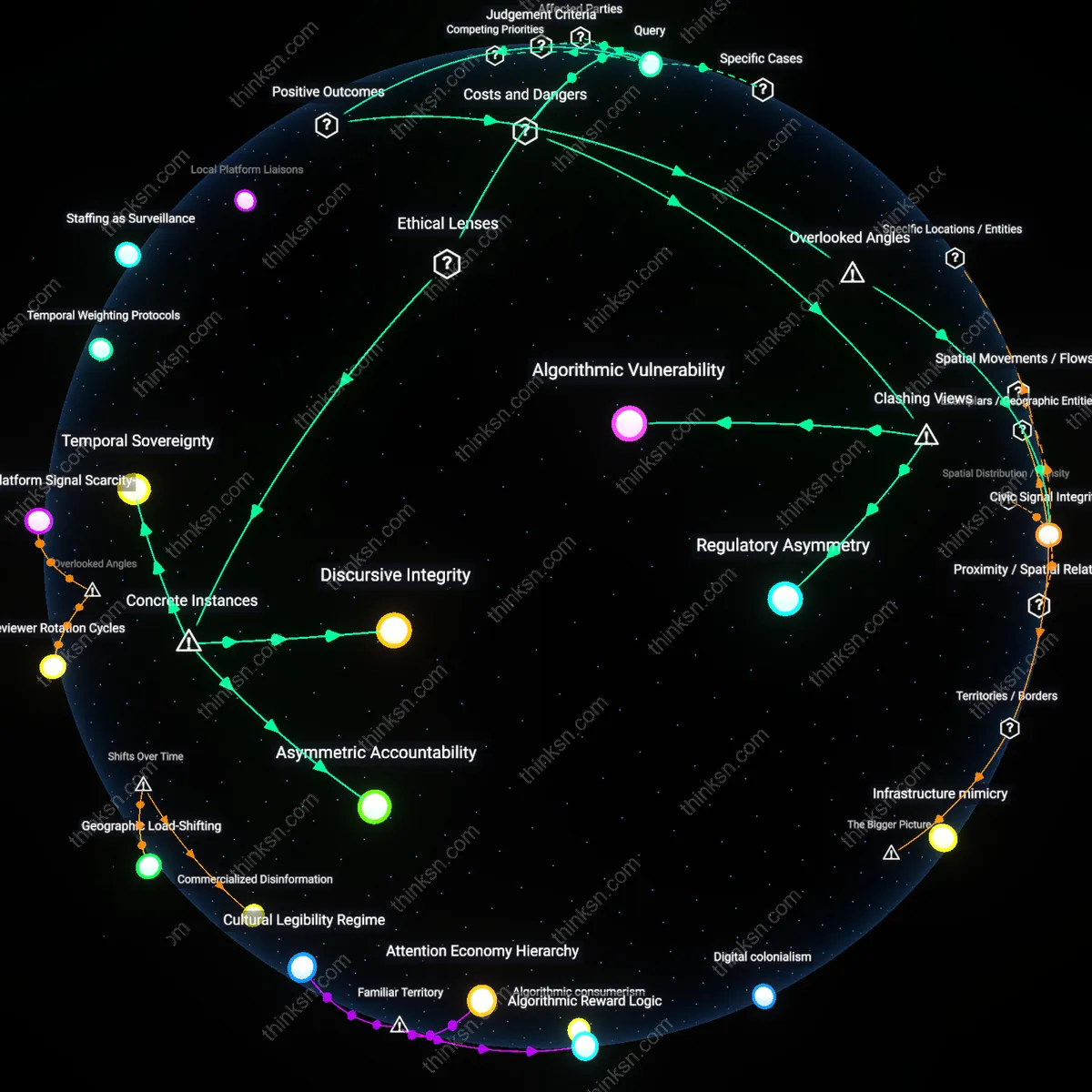

Analysis reveals 13 key thematic connections.

Key Findings

Platform Epistemic Power

Independent audits should be mandated because they challenge the epistemic monopoly digital platforms hold over the criteria that determine small business visibility, a control that operates through proprietary algorithmic logic which no external party can currently validate. This power allows platforms like Google or Meta to define what counts as 'relevant' or 'fair' visibility without empirical scrutiny, effectively making them sole arbiters of commercial discoverability for millions of small enterprises. The non-obvious dimension is not just bias or opacity, but the deeper authority platforms exercise in shaping knowledge about market access—a form of epistemic control that insulates their decision-making from democratic or regulatory challenge, rendering fairness a self-verified claim rather than an accountable outcome.

Algorithmic Supply Chain Risk

Mandating independent audits is necessary because the algorithms governing small business visibility function as critical nodes in decentralized digital supply chains, where disruptions in visibility directly impact inventory turnover, staffing, and vendor contracts for downstream service providers such as local manufacturers and delivery fleets. Without auditability, there is no way to anticipate or mitigate ripple effects when platforms suddenly alter ranking logic, which can collapse micro-economies anchored around platform-dependent businesses in regions like the Rust Belt or India’s tier-2 cities. The overlooked dynamic is that algorithmic decisions are not isolated visibility events but operational shocks in extended economic networks—turning platform algorithms into unregulated infrastructure with systemic risk profiles akin to power grids or transportation networks.

Merchant Data Labor

Independent audits must be mandated because small businesses unknowingly perform unpaid data labor that trains the very algorithms determining their market survival, yet they are denied access to the performance metrics derived from their own behavioral inputs. When platforms like Amazon or Yelp refine visibility algorithms using small merchants’ listing interactions, customer queries, and sales patterns, they extract value from these micro-contributions without reciprocal transparency. The unseen mechanism is a one-sided data economy where merchants subsidize platform intelligence while remaining blind to the rules of their own exposure—transforming fairness not into a design goal but into a perpetually deferred negotiation between capital and invisible labor.

Visibility Precarity

Yes, audits are necessary, not because algorithms are inherently unfair, but because small businesses’ dependence on platform-mediated visibility creates a condition of perpetual precarity that audits could expose even if they do not correct it. Unlike traditional market access mechanisms such as physical retail placement or broadcast advertising, algorithmic visibility operates without notice or appeal: a minor parameter shift in a system like Facebook’s feed ranking (formerly known as EdgeRank) or Amazon’s A9 search engine can collapse revenue overnight without disclosure. Small businesses typically lack both the data access and the technical fluency to diagnose or challenge such changes. Audits are therefore less a fix than a form of witnessing: an act that reveals systemic vulnerability rather than resolving it, and that shifts the focus from fairness as equity to fairness as existential security.

Algorithmic Accountability Gap

Independent audits must be mandated because the shift from human-curated markets to automated visibility systems has dissolved traditional oversight pathways, leaving small businesses exposed to opaque ranking logics controlled by private platforms. In the early 2000s, platforms like Amazon and Google relied on hybrid models in which human editors influenced content placement. The rise of machine learning-driven personalization after 2015 replaced those models with autonomous ranking systems that are neither transparent nor contestable, creating a structural absence of redress. This transition has produced an accountability gap in which platform operators assume quasi-regulatory power over market access while evading public or judicial scrutiny. The non-obvious insight is that fairness is no longer a managerial choice but a systemic vulnerability embedded in the delegation of economic gatekeeping to unmonitored algorithms.

Visibility-as-Infrastructure

Independent audits should be required because digital visibility has shifted from being a promotional privilege to a foundational economic utility, akin to electricity or broadband, in the wake of the 2020–2023 platformization of local commerce. During the pandemic, small businesses across the U.S. and EU migrated en masse to platforms like Yelp, Instagram, and Google Maps, where algorithmic curation—once secondary to direct customer outreach—became the primary vector of survival. This transformation repositioned platforms as de facto public utilities controlling access to customers, a role historically filled by municipal directories or public advertising boards. The underappreciated consequence is that algorithmic bias now replicates the systemic exclusion once associated with redlining or media deserts, not by intent but by design inertia within privately owned infrastructure governing public economic life.

Market Legibility

Yes, independent audits should be required because algorithmic opacity distorts small businesses' ability to understand and navigate platform markets, undermining fair competition. When visibility on platforms like Google or Amazon depends on undisclosed ranking mechanisms, small firms cannot make informed decisions about marketing, inventory, or pricing—effectively making market access arbitrary. The core mechanism is informational asymmetry between platforms and businesses, where the platform holds proprietary knowledge that directly determines economic outcomes. What’s underappreciated is that fairness here isn’t just about bias or discrimination, but about whether the rules of participation are legible enough to enable rational economic action.

Structural Accountability

Yes, independent audits should be required because dominant platforms function as de facto public utilities in the digital economy, and concentrated algorithmic control without oversight risks systemic discrimination against small enterprises. Platforms like Facebook or Amazon wield outsized influence over which businesses succeed or fail—not through overt policy but through automated ranking, recommendation, and ad-targeting systems. The audit acts as a governance mechanism to align private algorithmic power with public interest, much like financial audits align corporate behavior with investor and regulatory expectations. The non-obvious insight is that fairness here depends less on individual algorithmic accuracy and more on institutional checks that prevent entrenched power from silently shaping market outcomes.

Algorithmic Debt Accumulation

Requiring independent audits for platform algorithms risks codifying snapshot evaluations that fail to account for the continuous, incremental changes in algorithmic behavior driven by real-time feedback loops. Platforms like Amazon or Google continually adjust ranking and visibility systems in response to user behavior, ad revenue incentives, and A/B testing, creating a dynamic system in which an audit can become obsolete within days. The overlooked danger is not audit evasion but the illusion of oversight: by certifying one version of an algorithm at one moment, the audit masks the compounding divergence from fairness over time, allowing platforms to accumulate 'algorithmic debt' in which small, individually insignificant deviations coalesce into systemic bias. This undermines the premise of periodic audits, because compliance becomes a point-in-time performance rather than a sustained standard.
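
The compounding described here can be made concrete with a little arithmetic. The following is a minimal Python sketch, not a description of any real platform: the function name `days_until_breach`, the exposure metric (small-business share of top results, in basis points), and every number are hypothetical assumptions chosen for illustration. It shows how daily changes that each pass a per-change review can still carry the system past the tolerance an audit certified.

```python
from typing import Optional


def days_until_breach(
    audited_share_bp: int,
    daily_drift_bp: int,
    per_change_tolerance_bp: int,
    audit_tolerance_bp: int,
    max_days: int = 365,
) -> Optional[int]:
    """Return the first day on which cumulative drift exceeds the audit tolerance.

    All quantities are hypothetical and expressed in basis points
    (1 bp = 0.01 percentage points); integers keep the arithmetic exact.
    Returns None if the tolerance is never exceeded within max_days.
    """
    # Each daily change must look harmless on its own, as the text assumes.
    if abs(daily_drift_bp) > per_change_tolerance_bp:
        raise ValueError("daily drift would be flagged by per-change review")

    share_bp = audited_share_bp
    for day in range(1, max_days + 1):
        share_bp += daily_drift_bp  # one small, individually acceptable change
        if abs(share_bp - audited_share_bp) > audit_tolerance_bp:
            return day
    return None


# Assumed scenario: the audit certifies a 30.00% small-business exposure share
# and tolerates a deviation of 5.00 points; each daily tweak moves the share
# by 0.10 points, well under the assumed 0.25-point per-change limit.
breach_day = days_until_breach(
    audited_share_bp=3000,
    daily_drift_bp=-10,
    per_change_tolerance_bp=25,
    audit_tolerance_bp=500,
)
print(breach_day)
```

Under these assumed numbers the certified tolerance is first exceeded on day 51, although no single day's change came near the per-change limit. An audit repeated once a year would certify day 0 and see nothing of what followed.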

Visibility Arbitrage Opportunities

Mandating audits based on transparency criteria unintentionally creates new vectors for strategic manipulation by dominant platforms, which can exploit limitations in audit design to obscure harmful effects under the guise of compliance. For instance, an audit might verify that a small business’s content isn’t demoted due to commercial conflict (e.g., favoring Amazon’s private label), yet a platform could instead degrade visibility through latency throttling: delaying content indexing by milliseconds that compound across layers of delivery infrastructure, imperceptible in audit logs but crippling in practice. These non-decisions, hidden in the physical and temporal infrastructure of content distribution, are rarely included in audit frameworks focused on code or ranking weights. The overlooked dimension is that fairness is not only computed but also engineered through timing, location, and network priority, variables that evade software-level audits and allow platforms to maintain control while appearing compliant.

Platform Accountability Tradeoff

Mandating independent audits of algorithms that govern small business visibility on digital platforms would compromise proprietary technology protections, undermining platform competitiveness in global markets. Digital platforms like Amazon, Google, and Meta treat their algorithms as trade secrets critical to maintaining strategic advantage, and enforced transparency through independent audits creates legal and operational risks that disincentivize innovation. The tension arises not from a lack of public interest in fairness but from a structural reality: regulatory scrutiny in one domain (market equity for small businesses) directly undercuts the corporate interest in IP protection, so that gains in accountability can produce losses in commercial autonomy. This tradeoff is reinforced by intellectual property regimes and capital market expectations, making transparency a zero-sum concession rather than a scalable governance tool.

Regulatory Arbitrage Pathway

Requiring algorithmic audits for small business fairness on digital platforms will shift enforcement outcomes to jurisdictions with weaker regulatory capacity, enabling dominant platforms to re-centralize control through compliance migration. U.S.- and EU-based platforms facing stringent audit mandates may relocate algorithmic operations or data processing to countries with looser digital governance, a move already observable in cloud infrastructure placement and AI model training locations. This dynamic transforms audit requirements into de facto barriers that favor large firms able to navigate jurisdictional complexity, while small businesses—especially those dependent on local markets—lose visibility precisely where oversight is weakest. The consequence is not reduced control but reallocated control, sustained by transnational legal asymmetries and investment flows that reward regulatory avoidance.

Concentrated Gatekeeping Power

Small businesses on Amazon Marketplace faced existential threats during the 2019 suspension wave, when thousands of third-party seller accounts were algorithmically deactivated for suspected policy violations, many without clear justification or a route to appeal. The economic impact on Chinese exporters reportedly prompted the Chinese government to intervene diplomatically. This incident, rooted in Amazon’s opaque enforcement algorithms, illustrates how concentrated private control over visibility functions as economic gatekeeping under neoliberal platform governance, where market access depends on unaccountable technical systems. Viewed through Foucault’s account of disciplinary power, the incident suggests that audits are not just oversight tools but potential counterweights to the sovereign-like authority exercised by platforms. The underappreciated point is that mandatory audits could introduce heteronomous regulation into a domain designed for unilateral corporate discipline, thereby rebalancing power in digital market ecosystems.