Is Legal AI Undermining Lawyer Accountability Without Reasoning?

The analysis identifies seven key thematic connections.

Key Findings

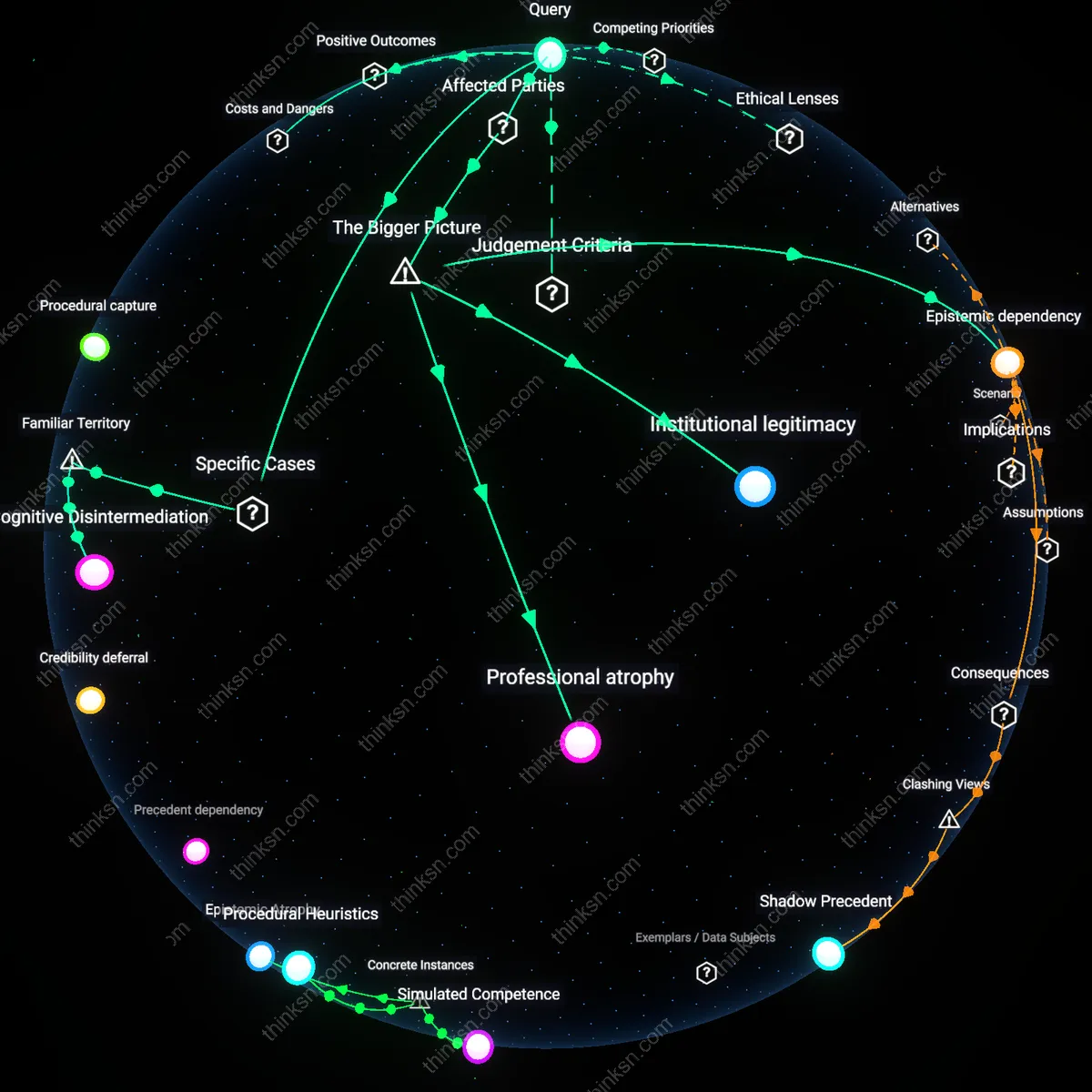

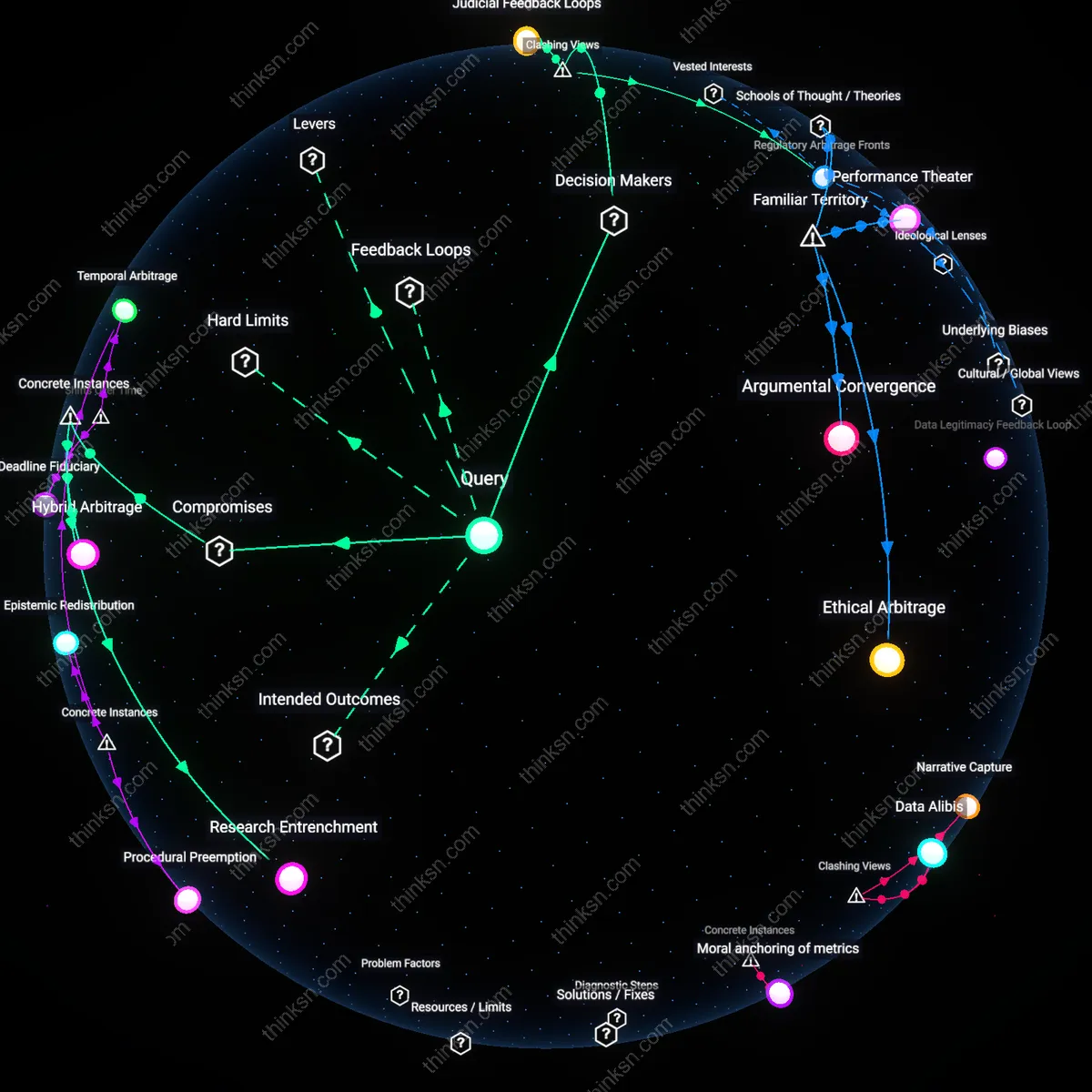

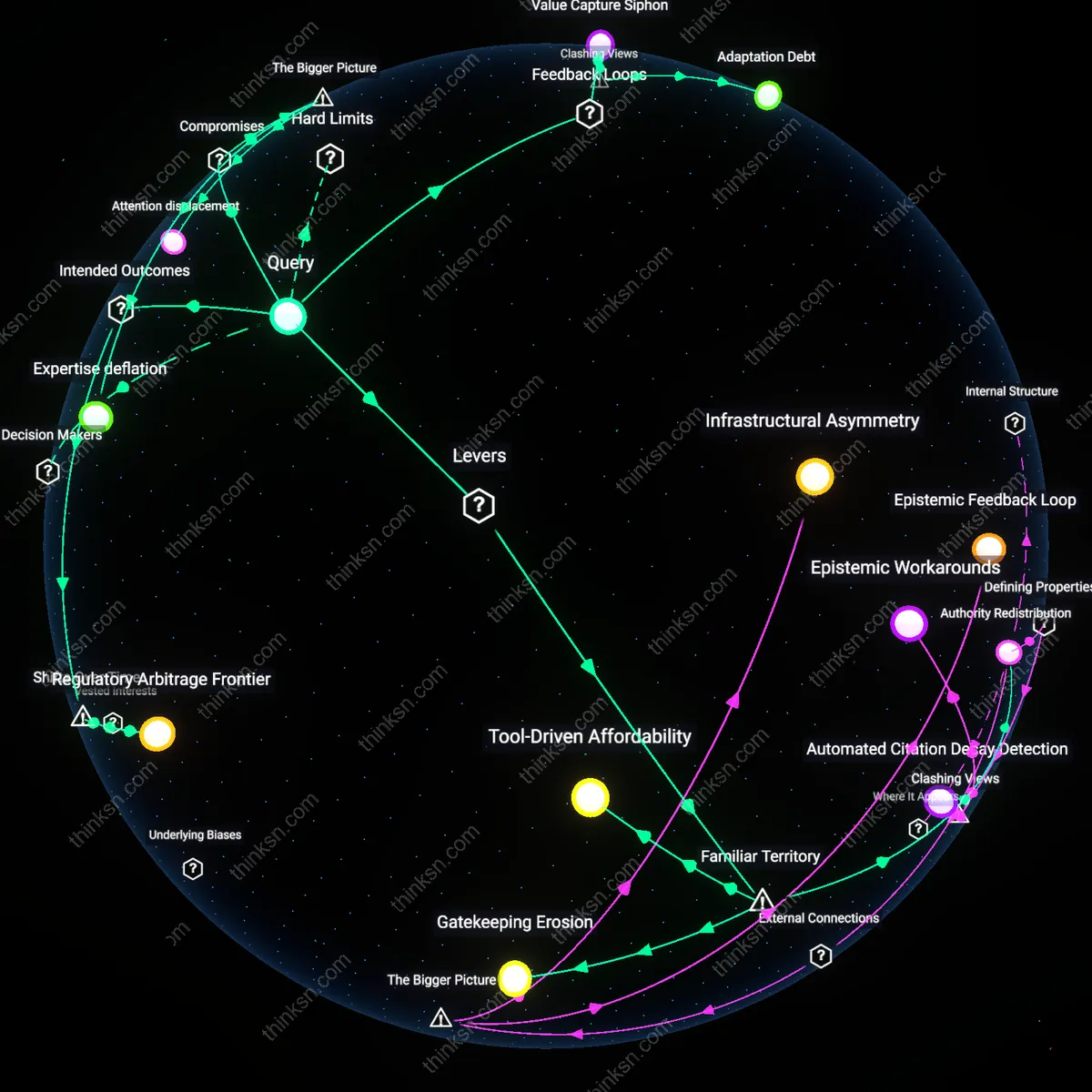

Epistemic dependency

Relying on unexplained AI-generated legal citations compromises a lawyer's duty to understand the law because it shifts interpretive labor from legal professionals to opaque computational systems. In effect, it outsources doctrinal reasoning to algorithms whose logic remains inaccessible even to the attorneys who submit the arguments. Lawyers have traditionally served as both gatekeepers and translators of precedent. Inserting a non-reviewable cognitive intermediary between established case law and courtroom advocacy breaks that chain of accountability. The non-obvious consequence is not merely error propagation but the normalization of decisions grounded in authority without comprehension. This is a systemic drift toward functional legal literalism that erodes professional autonomy.

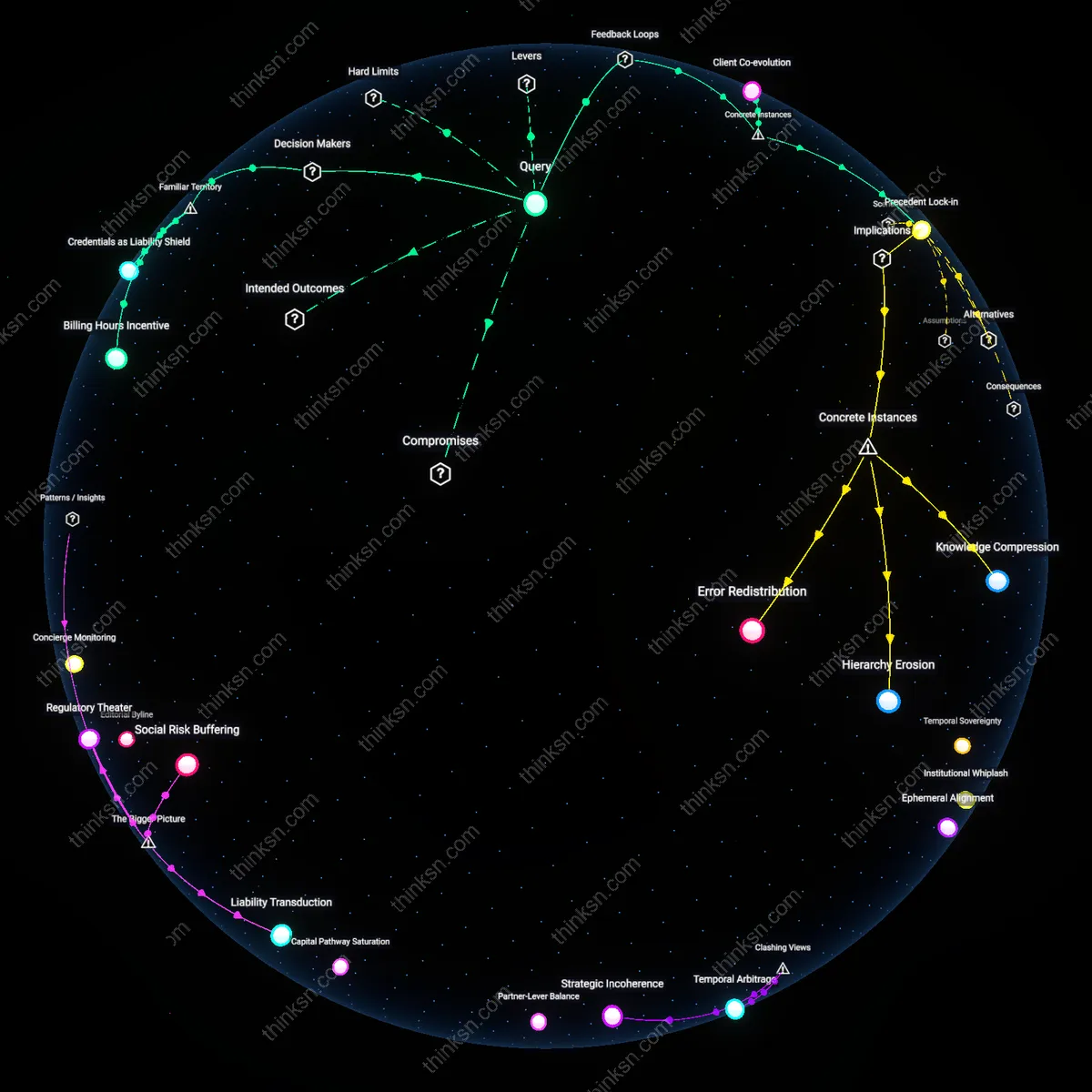

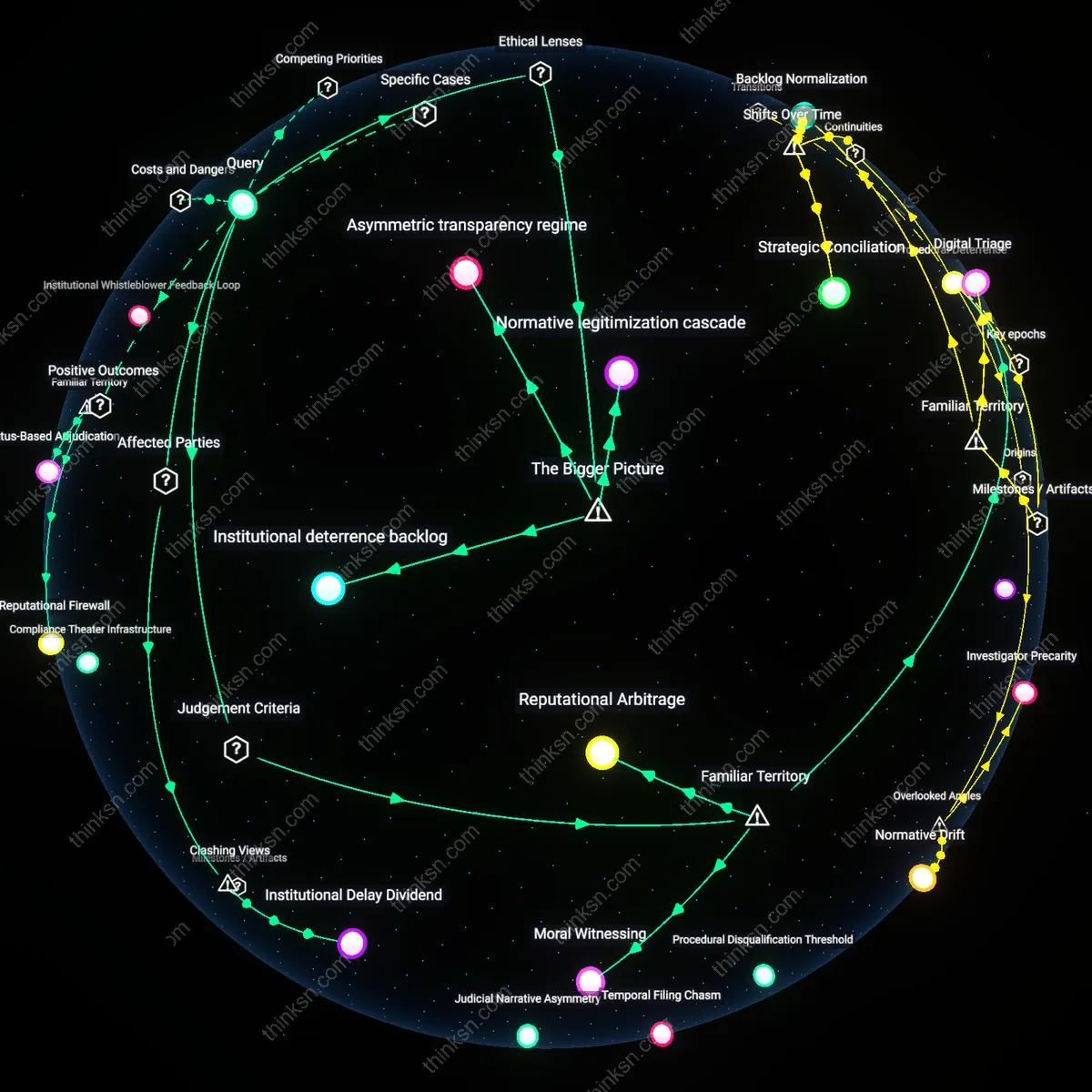

Institutional legitimacy

The use of unexplained but accurate AI-generated citations risks weakening public trust in judicial outcomes by decoupling formal correctness from demonstrable human oversight. The risk is greatest when marginalized litigants challenge rulings derived from sources they cannot verify or contest. Courts, bar associations, and appellate systems become complicit in sustaining a facade of procedural rigor while depending on tools whose underlying processes evade scrutiny by judges, opposing counsel, or affected parties. The underappreciated dynamic is that legal authority depends not only on citation accuracy but also on a visible human chain of reasoning. When that chain breaks, the legitimacy of the entire adjudicative structure becomes vulnerable to claims of algorithmic disenfranchisement.

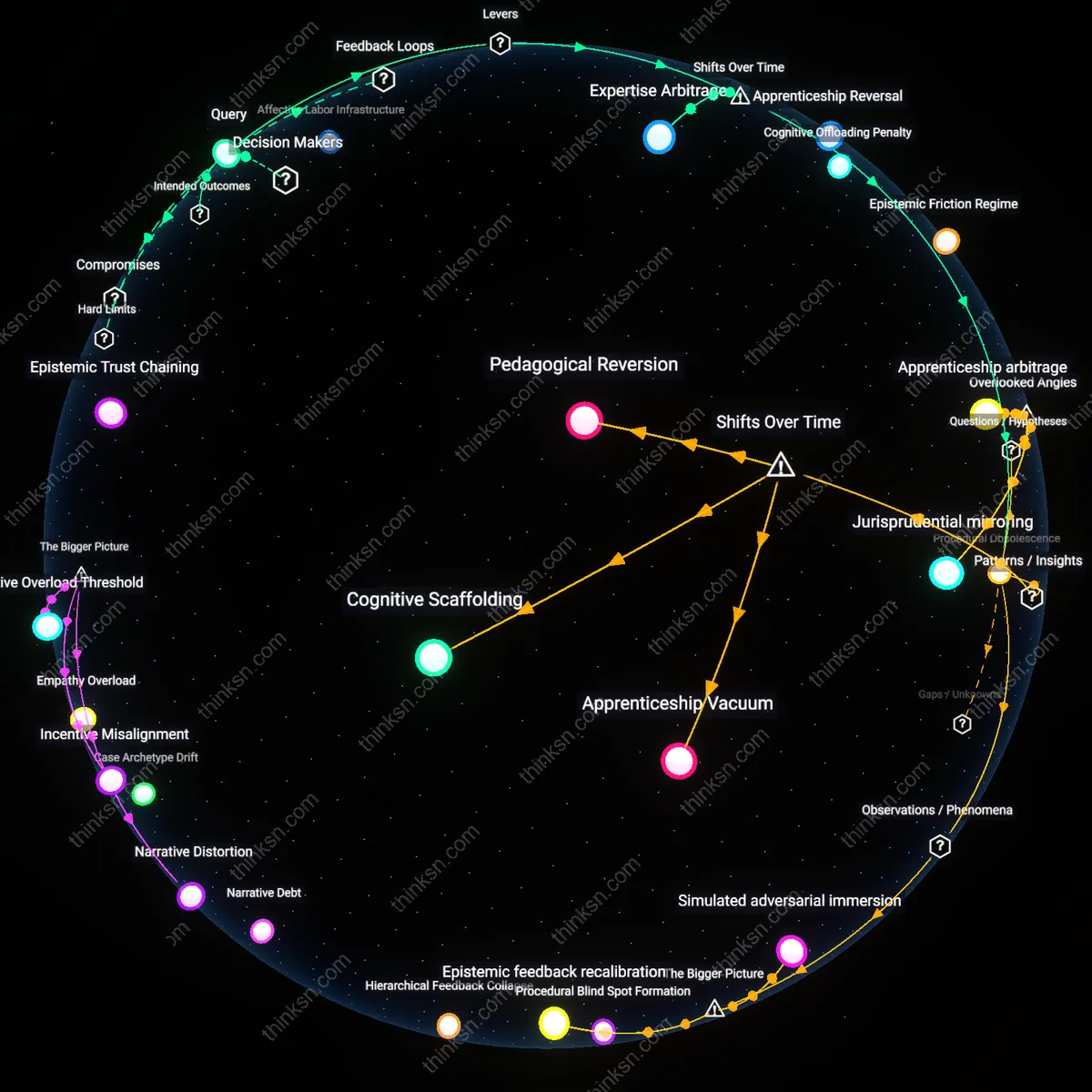

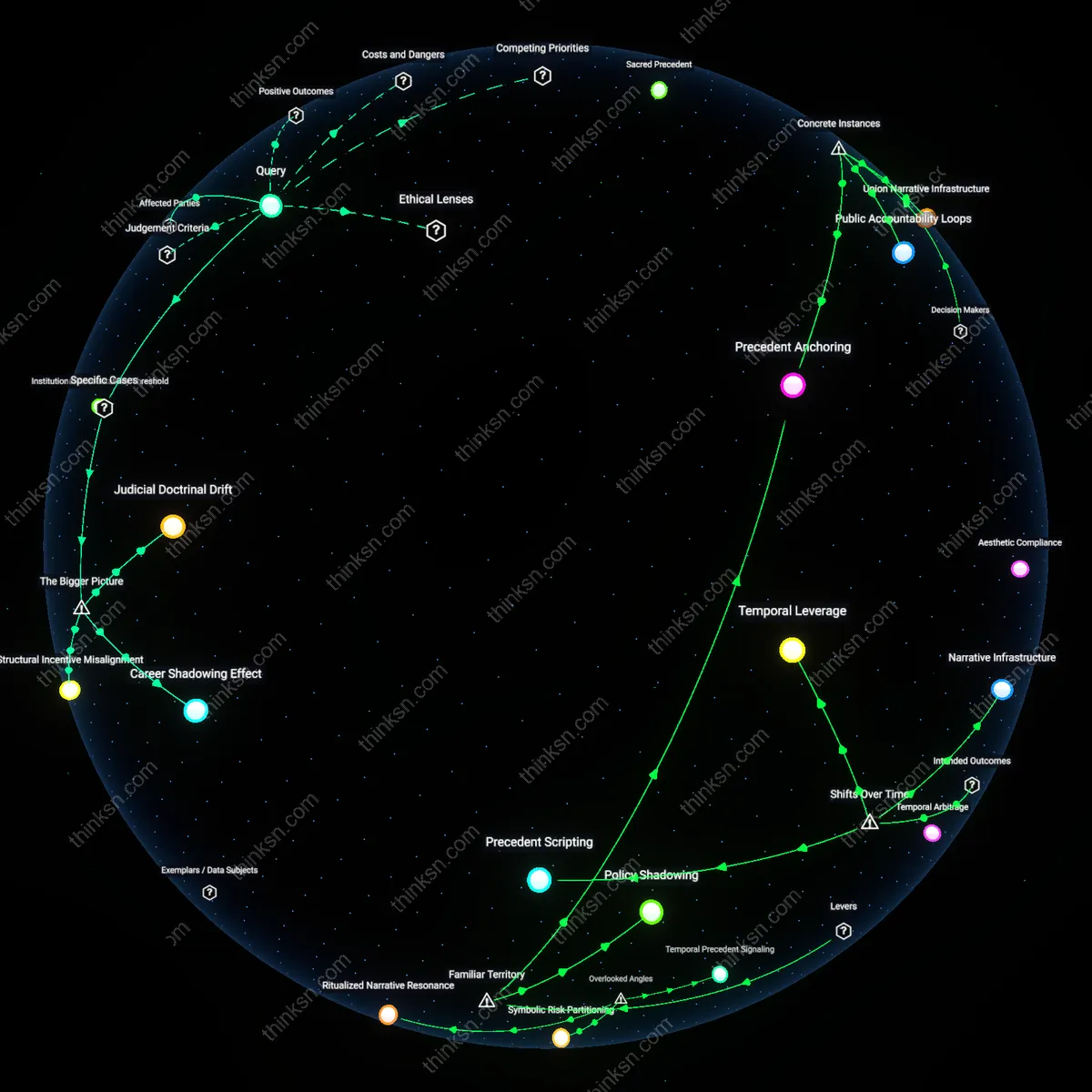

Professional atrophy

Routine dependence on AI to generate accurate but unexplained legal citations erodes the developmental pathway for junior attorneys and law students. Through manual research, they build the doctrinal intuition and pattern recognition essential to strategic lawyering. Law firms and legal educators, under pressure to increase efficiency and reduce costs, inadvertently subsidize a system that prioritizes speed over mastery, replacing formative learning with black-box shortcuts. The less obvious consequence is a long-term degradation of collective legal expertise. The citations are not wrong; rather, the profession stops reproducing the deep cognitive infrastructure that enables adaptation during legal crises or novel constitutional challenges.

Epistemic atrophy

Relying on accurate but unexplained AI-generated legal citations erodes a lawyer's capacity to independently validate doctrinal coherence over time. Repeated dependence on opaque citations bypasses the cognitive effort of tracing judicial reasoning. This weakens the lawyer's internal schema for legal principles, especially in complex or evolving areas such as constitutional interpretation or statutory construction. The slow loss of analytical fitness is rarely visible in any single instance. It surfaces systemically as reduced resilience during unforeseen legal crises, such as novel constitutional challenges or jurisdictional conflicts, where analogical reasoning from first principles becomes essential. The danger lies not in citation inaccuracy but in the imperceptible atrophy of professional judgment. This dynamic goes overlooked because performance metrics track case outcomes rather than cognitive readiness.

Procedural capture

The integration of unexplained but accurate AI citations shifts gatekeeping power from legal professionals to the people who design and configure AI workflows. These include paralegal training coordinators and firm technology officers, who select which models are used and how. Often lacking doctrinal expertise, these actors inadvertently shape legal reasoning by deciding which datasets, citation styles, or jurisdictional filters the AI outputs prioritize. One example is favoring Westlaw-based corpora over grassroots legal aid materials in public defense workflows. This quiet transfer of influence undermines the lawyer's duty institutionally as well as cognitively, embedding normative legal choices in back-end configurations that remain invisible during courtroom advocacy. The risk is not misuse but correct use of a system whose values are misaligned with equitable representation. The profession obscures this dimension by focusing on individual ethical compliance rather than structural epistemic governance.

Cognitive disintermediation

Relying on AI-generated citations without understanding their basis erodes a lawyer's interpretive engagement with legal doctrine. Consider a firm that adopts a tool such as ROSS Intelligence, as BakerHostetler did. An attorney there who accepts its output as functional truth no longer traces precedent through its judicial context, and citation retrieval takes the place of legal reasoning. Responsibility shifts from comprehension to validation, a mechanistic check that cannot replicate doctrinal mastery. The underappreciated risk is not inaccuracy but the quiet replacement of legal thinking with dependence on a system presumed competent. What feels like efficiency masks a hollowing of professional judgment.

Institutional scaffolding

Courts such as the Southern District of New York risk accepting AI-vetted briefs without questioning the origin of their citations, reinforcing reliance through procedural normalization. Suppose judges such as Lorna Schofield, or their clerks, do not challenge ROSS- or Casetext-generated references. They then validate a system in which accuracy substitutes for legal narrative. The operative mechanism is institutional trust in output consistency, not verification of reasoning chains. What is overlooked is that the courtroom, meant to test understanding, then functions as a passive certification body for AI-mediated legal claims. Explanation becomes optional as long as the citation lands correctly.