Do AI Clauses in Union Contracts Fail to Protect Partially Automated Jobs?

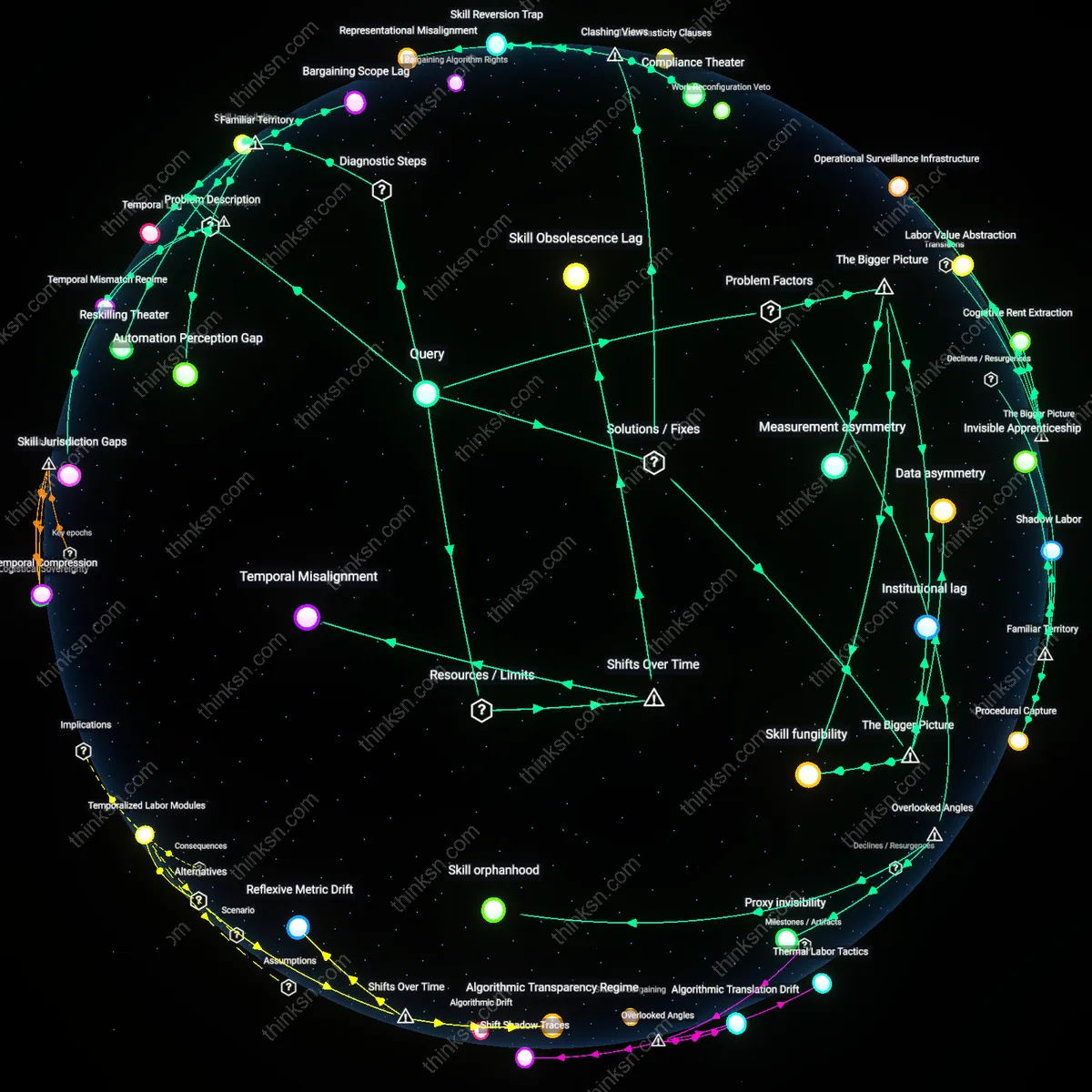

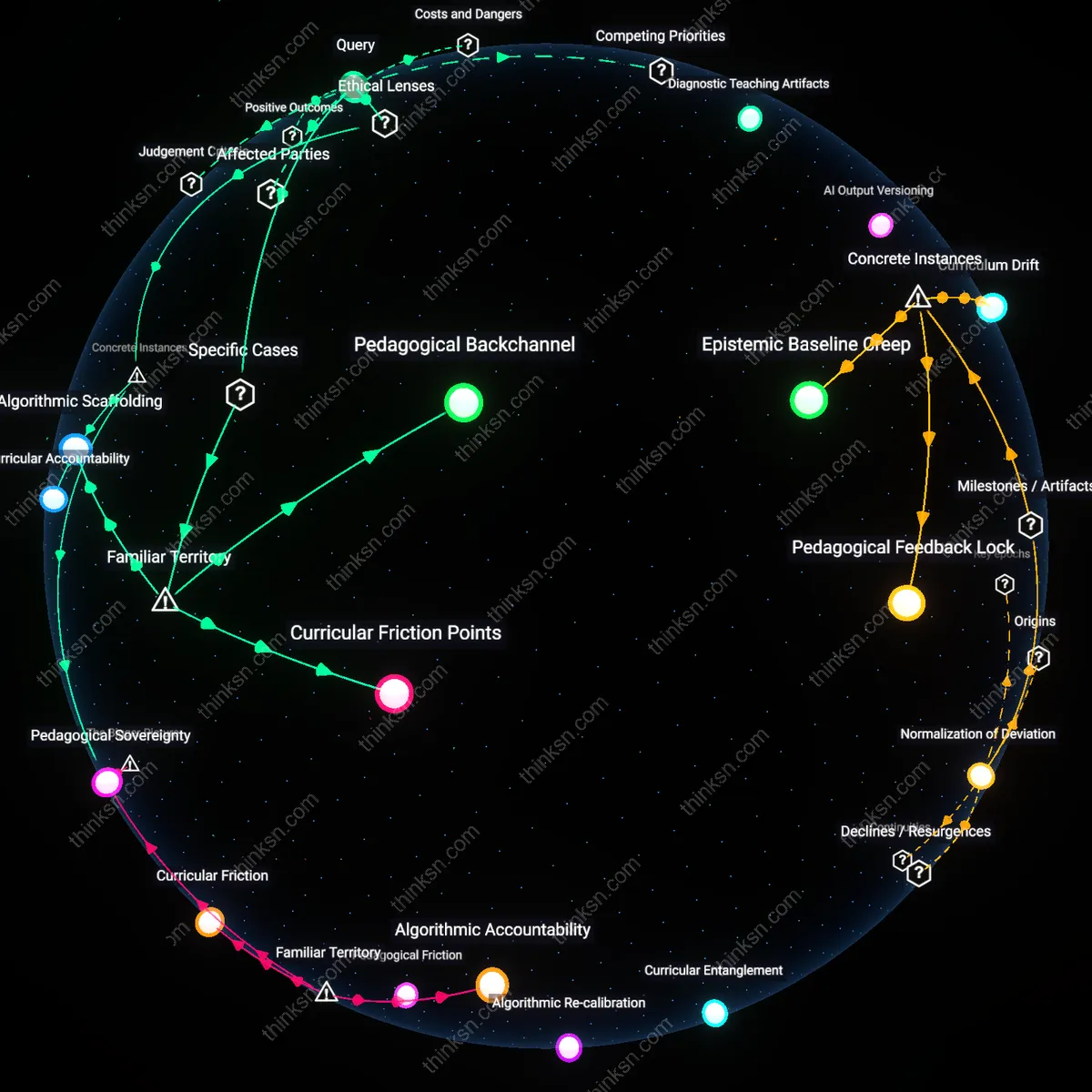

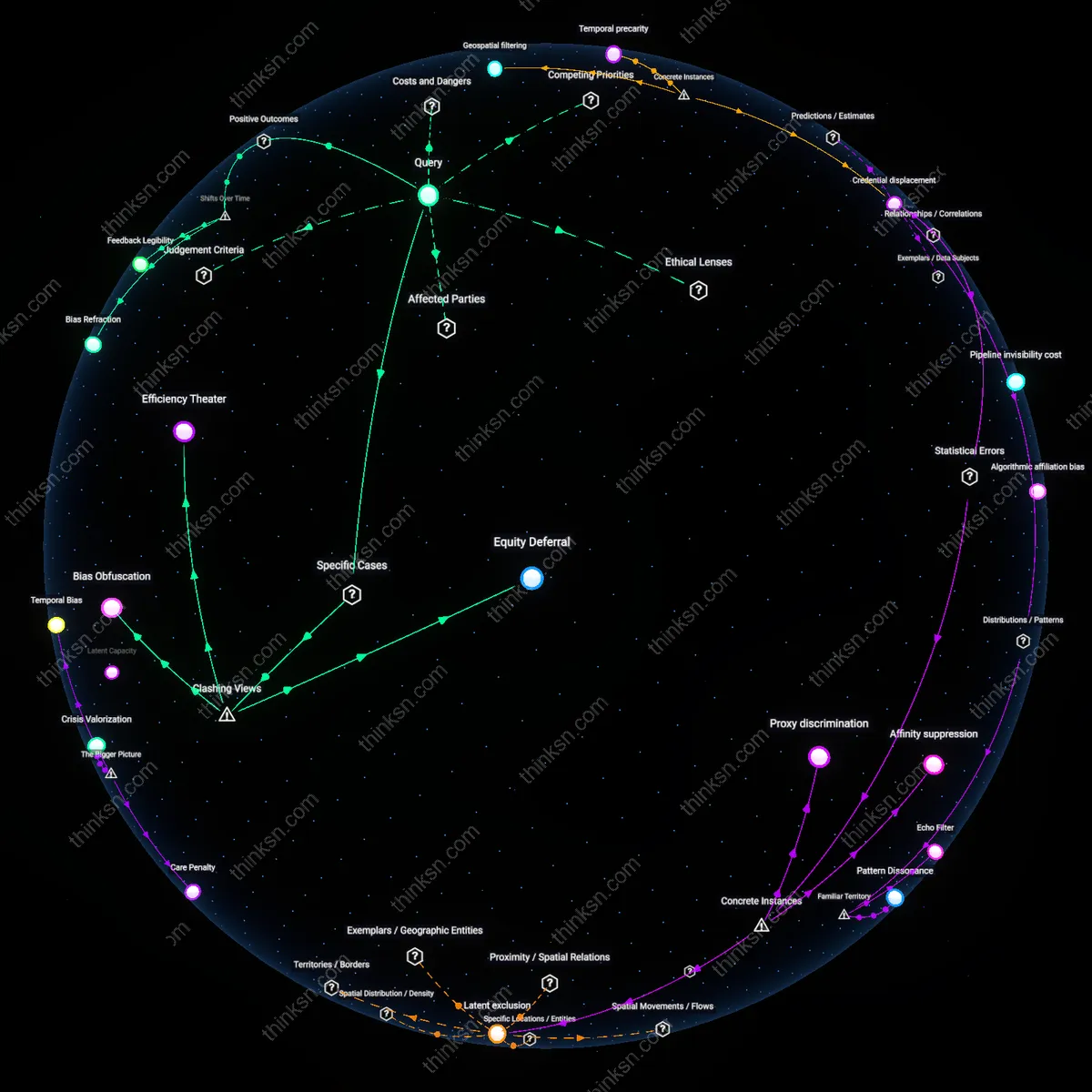

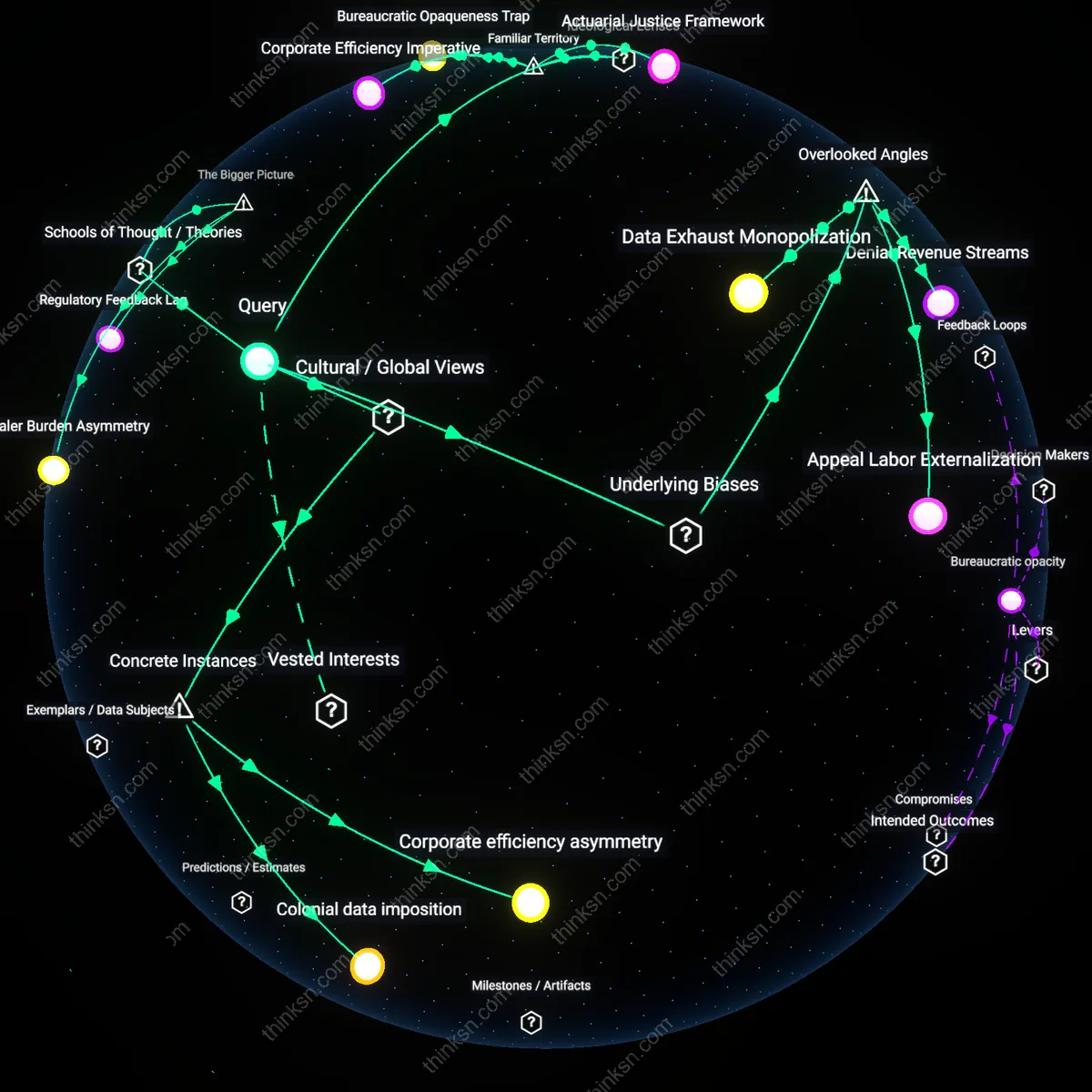

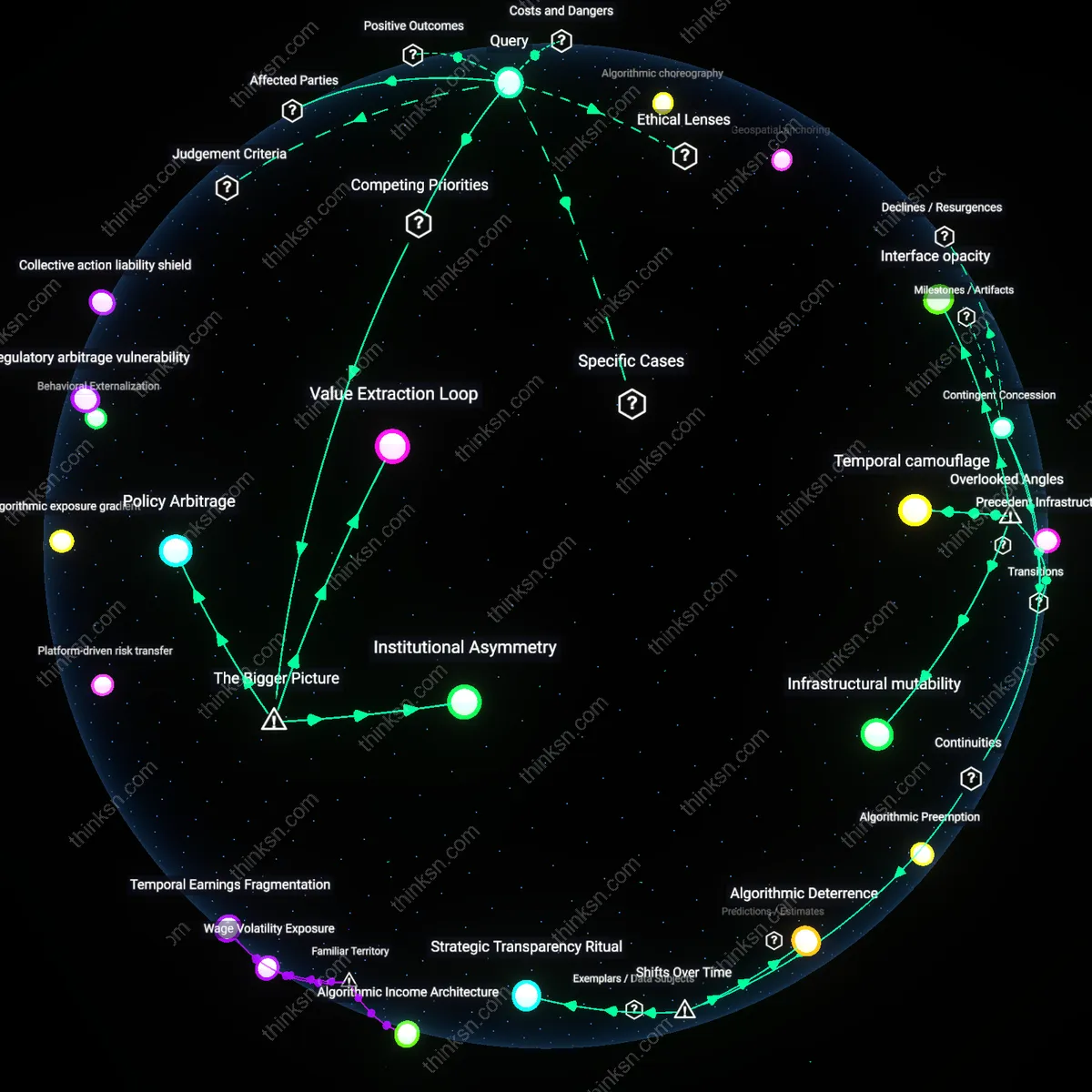

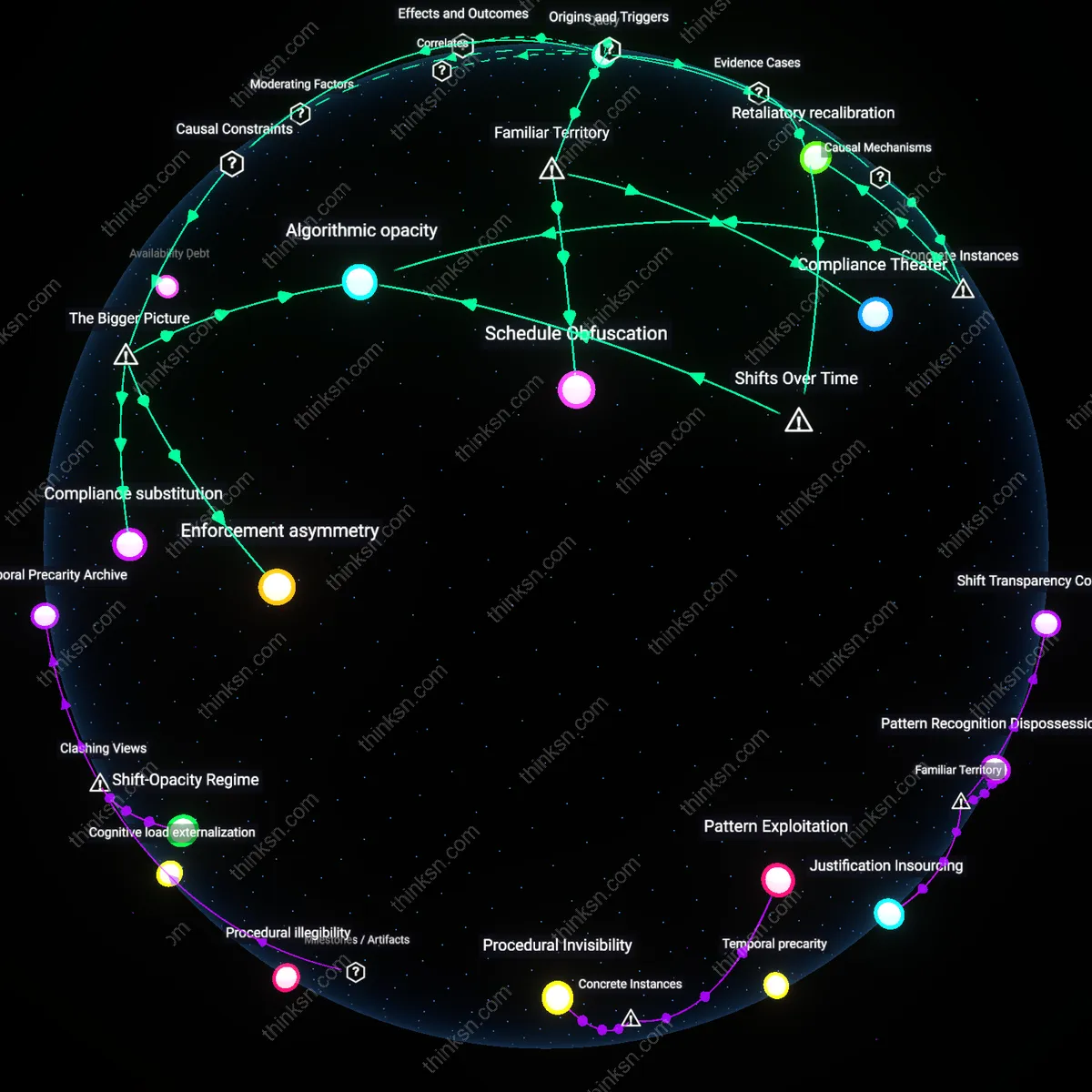

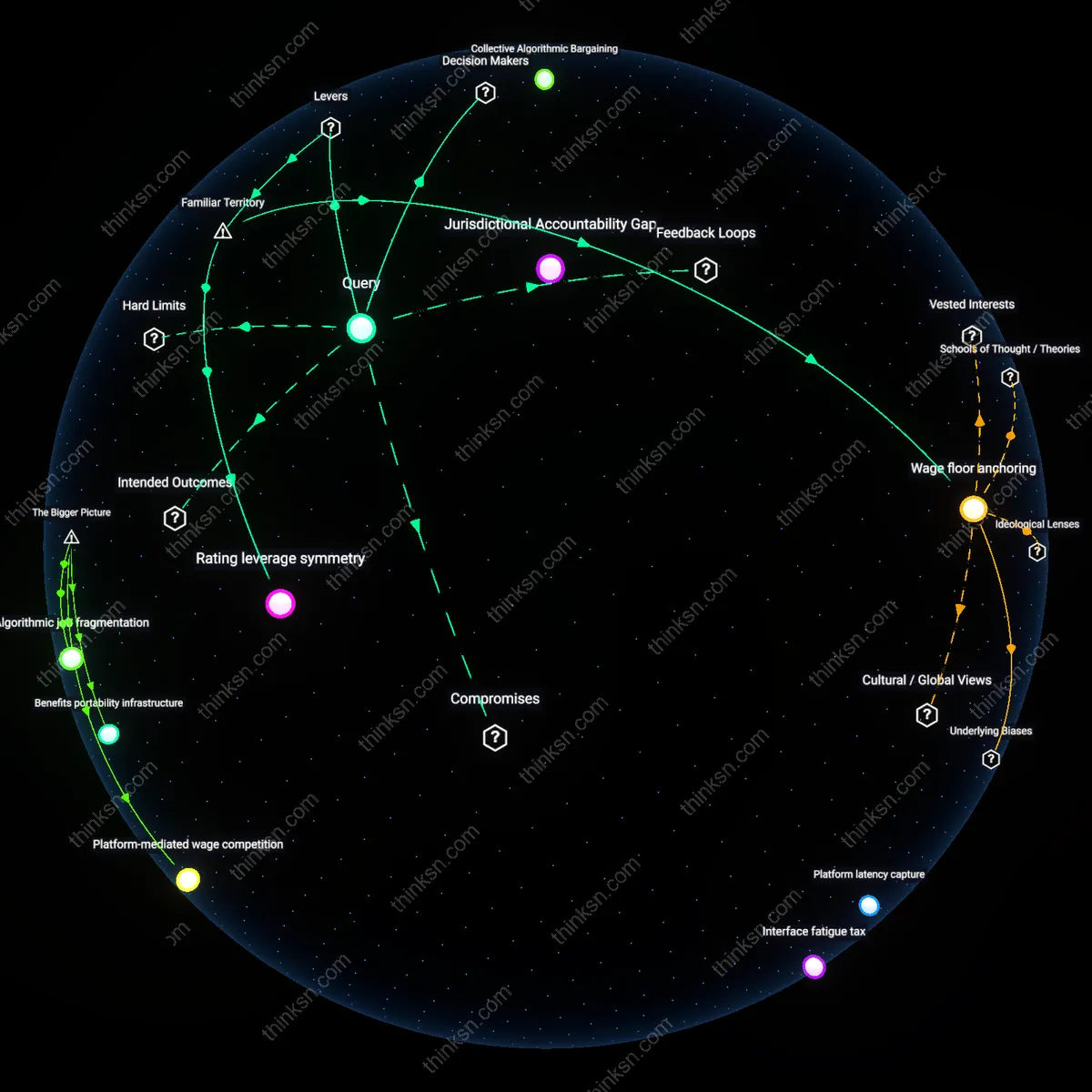

Analysis reveals 20 key thematic connections.

Key Findings

Procedural Obsolescence

AI impact clauses fail to protect partially automated workers because they assume static job boundaries, but continuous algorithmic refinement in sectors like warehouse logistics erodes the procedural coherence of human tasks—what remains 'human-performed' is fragmented and redefined mid-contract cycle, rendering past bargained safeguards irrelevant. This occurs because AI integration is treated as incremental rather than transformative, leaving union oversight confined to initial automation thresholds without mechanisms to audit evolving task allocation. The overlooked dynamic is that automation's procedural drift outpaces collective agreement timelines, undermining protections meant for discrete, stable roles. Most analyses focus on displacement or wages, missing how the erosion of procedural integrity destabilizes the very definition of the job.

Skill Jurisdiction Gaps

Union-represented workers in industries like transit and manufacturing lose protective leverage when AI offloads components of their labor to remote or centralized systems. Skill claims in collective agreements are geographically and institutionally bounded, yet algorithmic control shifts decision-making to offsite command centers staffed by personnel outside the bargaining unit. As a result, workers must develop cross-system diagnostic abilities to maintain relevance, but these skills fall outside traditional training pipelines and are rarely codified in apprenticeships. The critical but hidden dependency is that skill legitimacy in collective agreements presumes physical, local mastery, while AI undermines this premise by relocating operational expertise offsite. This turns skill development into a jurisdictional contest across space and bargaining units.

Temporal Mismatch Regime

Collective agreements prescribe fixed review periods for technology impacts, but machine learning systems in industrial settings can retrain and alter workflow dependencies within weeks, making negotiated monitoring cycles too slow to capture shifts in partial automation. Workers respond by cultivating anticipatory technical fluency, learning to predict system updates from a kind of digital body language such as interface changes or error logs, but this emergent skill set operates outside formal recognition and is rarely compensated. The underappreciated factor is that the real-time velocity of AI iteration introduces a new temporal regime that bypasses labor’s institutional pacing and requires skills that are reactive and speculative rather than certified or credentialed.

Institutional Lag

Collective bargaining agreements fail to protect partially automated workers because labor contract frameworks evolve slower than technological deployment, leaving hybrid roles—where humans work alongside AI systems without full job elimination—outside traditional displacement clauses. Unions and employers typically negotiate around discrete outcomes like layoffs or retraining triggered by automation, but these mechanisms assume clear thresholds of replacement rather than incremental encroachment by AI tools into human tasks. This mismatch between legal-formal timelines and technological-organizational timelines reflects a systemic inertia in industrial relations institutions, particularly in sectors like warehousing and call centers where AI-mediated supervision and performance tracking subtly reconfigure work without triggering contractual safeguards. The underappreciated reality is that partial automation erodes job quality without crossing the formal thresholds that activate existing protections, rendering collective agreements functionally obsolete in the face of gradual technologically driven work transformation.

Measurement Asymmetry

AI impact clauses fail because the performance metrics used to assess worker productivity are increasingly generated and controlled by proprietary AI systems that obscure the causal relationship between automation and labor degradation. Employers in logistics, retail, and customer service now deploy algorithmic management platforms that adjust task allocation, pacing, and evaluation in real time, but these systems rarely disclose how AI inputs affect human workload or decision-making authority—making it impossible for unions to isolate and negotiate against AI-specific harms. Without auditable, transparent data that links AI interventions to changes in working conditions, collective agreements cannot specify enforceable limits on algorithmic interference, particularly when automation is partial and interwoven with human labor. The non-obvious consequence is that the opacity of AI systems functions as a structural barrier to contractual codification, disabling traditional bargaining leverage even when workforce disruption is evident but not attributable through conventional evidence channels.

Skill Fungibility

Workers in partially automated jobs face eroded bargaining power because employers redefine skill value around adaptability to AI interfaces rather than domain expertise, shifting the basis of labor negotiation from accumulated knowledge to real-time system compliance. In manufacturing and healthcare, for instance, technicians and nurses are increasingly evaluated not on clinical judgment or craftsmanship but on their responsiveness to AI-generated alerts and protocols, privileging rapid iteration over deep skill mastery. This reconfiguration of skill legitimacy undermines the premise of many collective agreements—that experience and seniority correlate with autonomy and protection—because AI-integrated workflows standardize decision paths and compress learning curves, making workers more interchangeable. The overlooked dynamic is that skill strategies now favor cognitive flexibility and digital literacy over institutional memory, weakening the collective identity and solidarity that traditionally sustain union leverage in bargaining processes.

Temporal Misalignment

AI impact clauses fail because they are negotiated at fixed intervals that do not match the rapid deployment cycles of machine learning systems. In warehouses like those operated by Amazon in Phoenix and Leipzig, algorithmic workflows are updated biweekly, which renders contractual safeguards obsolete before they are implemented. This discrepancy between labor agreement timelines and technical iteration rates means worker protections are reactive rather than anticipatory, a factor rarely addressed in labor policy discussions that assume stable technological baselines. The non-obvious insight is that the timing of governance, not just its content, determines effectiveness, which makes temporal misalignment a critical failure point hidden beneath surface-level clause inadequacy.
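The scale of this mismatch can be made concrete with simple arithmetic. The sketch below is illustrative only: the biweekly update interval comes from the passage above, while the three-year contract term (approximated as 156 weeks) and the function name `updates_per_cycle` are assumptions introduced for the example, not figures from any specific agreement.

```python
def updates_per_cycle(cycle_weeks: int, update_interval_weeks: int) -> int:
    """Count how many system updates land within one bargaining or review cycle."""
    if update_interval_weeks <= 0:
        raise ValueError("update interval must be positive")
    return cycle_weeks // update_interval_weeks


# Assumption: a three-year contract term, approximated as 156 weeks.
CONTRACT_WEEKS = 156
# From the text: algorithmic workflows are updated biweekly.
UPDATE_INTERVAL_WEEKS = 2

print(updates_per_cycle(CONTRACT_WEEKS, UPDATE_INTERVAL_WEEKS))  # 78
```

Under these assumed numbers, a clause negotiated once would face dozens of workflow revisions before the next bargaining round, which is the sense in which the timing of governance, and not only its content, matters.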

Proxy Invisibility

Partially automated workers are harmed not by full displacement but by AI systems that invisibly reconfigure their supervisors’ decision-making, such as when delivery drivers’ workload adjustments stem from algorithmic route optimizations fed to human dispatchers who believe they are making autonomous judgments. These AI-influenced management decisions evade traditional co-determination rights because the technology operates as a hidden proxy rather than a named actor, slipping beneath thresholds for worker consultation defined in agreements that only trigger on direct automation exposure. This dynamic is overlooked because labor frameworks assume visible technological agents, not decision-support intermediaries, making proxy invisibility a concealed driver of eroded agency.

Skill Orphanhood

Workers whose roles combine automated and manual tasks develop hybrid competencies; fuel truck operators, for example, manage AI-guided routing while maintaining interpersonal client relations. These workers become skill orphans when training programs focus exclusively on either digital upskilling or traditional vocational retention, leaving mid-level integrators unsupported. Evidence indicates this group is less likely to qualify for reskilling initiatives tied to full job replacement, and their specific expertise in human-AI coordination is rarely codified in labor standards. The result is a stealth devaluation of intermediary proficiencies, a phenomenon missed in most policy designs that categorize workers as either 'at risk' or 'future-proof' and thus fail to recognize skill orphanhood as a structural consequence of transitional automation.

Temporal Lag

AI impact clauses fail because they respond to automation as a future event rather than an ongoing reconfiguration of tasks. Collective bargaining agreements typically negotiate around discrete technological displacements—like the introduction of a new robotic system in a factory—whereas in reality, AI incrementally alters the content of work through tools like scheduling algorithms, performance monitoring, and automated decision support embedded across service, logistics, and administrative roles. Because unions and employers alike treat automation as a bounded future shock requiring one-time renegotiation, the continuous task-level changes that erode worker autonomy and skill utilization go unaddressed. This misalignment renders clauses inert in the face of partial automation, where jobs are transformed but not eliminated—evading the triggers written into contracts. What goes unnoticed is that the timing of collective action is mismatched to the pace of technological creep, making provisions feel outdated even when freshly agreed.

Skill Invisibility

AI impact clauses overlook the erosion of tacit knowledge that occurs when decision logic is embedded in opaque backend systems, leaving workers unable to assert control over processes they once managed through experience. In sectors like warehousing or call centers, AI systems now determine routing, pacing, and escalation, substituting for human judgment with little transparency. Yet these changes rarely count as 'automation' in legal or contractual terms because human workers remain on the payroll. The continued presence of workers masks how core competencies are hollowed out, as real-time decision-making shifts to algorithms trained on aggregated worker behavior. The consequence is that skill degradation occurs without visible replacement, making it politically and conceptually difficult to defend training or wage protections under current language, which presumes that automation manifests as job loss rather than judgment loss.

Bargaining Scope Lag

AI impact clauses often fail because they are negotiated around existing job classifications that do not account for partial automation, leaving workers whose tasks are hybrid—human and algorithmic—without clear representation. Collective agreements typically define roles by historical workflows, and unions negotiate protections based on full-role displacement, not task-level erosion caused by AI integration into specific functions like scheduling, performance monitoring, or customer routing. This creates a mismatch where workers remain formally employed but experience de-skilling, intensified pace, or loss of autonomy due to embedded AI systems not recognized as altering their position under the contract. The non-obvious insight is that the failure is not in the clause's intent but in the foundational categories of labor organization, which predate and resist granularity at the level of task decomposition.

Automation Perception Gap

Workers interacting with AI systems often do not recognize their tools as 'automation' that alters job security or bargaining leverage, especially when interfaces resemble routine software updates rather than visible robotic replacement. This perceptual blind spot prevents them from activating or demanding protections embedded in AI impact clauses, which assume awareness of technological change as a discrete, reportable event. In warehouses, call centers, and delivery logistics—sites of heavy algorithmic management—employees may attribute performance pressure to managerial policy rather than AI-driven optimization, obscuring the root cause of workload changes. The underappreciated dynamic is that familiar terminology like 'system update' or 'new dashboard' masks transformative automation, rendering protective clauses inert because the trigger for their invocation is cognitively invisible.

Reskilling Theater

Collective agreements increasingly respond to AI risks by mandating 'upskilling' or 'reskilling' programs, but these often prioritize symbolic training in digital literacy over strategic mastery of AI-adjacent skills like data annotation, prompt engineering, or audit techniques that would enable workers to contest or influence automation decisions. Unions accept these offerings as concessions, while employers fulfill obligations through low-cost, high-participation workshops that do not transfer meaningful control or technical fluency. What these programs actually produce is not worker empowerment but the institutionalization of skill development as a substitute for power redistribution. That substitution obscures the fact that partial automation alters who defines work quality, pace, and evaluation, functions now embedded in algorithms that workers cannot challenge.

Representational Misalignment

Mandatory AI impact negotiations in collective bargaining fail because unions are legally bound to represent all members equally, which prevents targeted advocacy for partially automated workers whose roles are interpreted differently by management. This structural constraint forces labor representatives to dilute technical counterproposals in favor of broader job security assurances, which erases the specific vulnerabilities of hybrid roles. Evidence indicates that collective agreements in auto manufacturing and warehousing treat automation-related changes as enterprise-wide issues, even when only subsets of workers experience algorithmic management integration, thereby submerging the risks specific to those subsets beneath general productivity clauses. The non-obvious reality is that democratic representation within union structures becomes a liability when automation creates divergent interests among workers performing similar but algorithmically differentiated tasks.

Skill Reversion Trap

Workers respond to inadequate AI safeguards by deliberately downgrading their technical proficiencies to avoid reassignment into high-surveillance automated roles, a defensive strategy that undermines both individual career resilience and union leverage in upskilling negotiations. Research consistently shows that long-haul truckers and pharmacy technicians, facing algorithmic performance tracking, avoid certification in AI-adjacent systems to remain in unmonitored traditional roles, creating a cascading disinvestment in digital fluency across unionized sectors. The dominant view assumes upskilling as the default worker response, but friction emerges when skill development is perceived not as advancement but as a pathway to diminished autonomy, revealing a hidden economy of resistance through deliberate obsolescence.

Compliance Theater

AI impact clauses are routinely implemented through check-the-box impact assessments that mirror public sector templates, such as those adapted from New York City’s Local Law 144. These assessments fail in private unionized settings because auditors validate procedural adherence rather than operational equity, allowing employers to satisfy bargaining requirements without altering AI’s real-world effects on workers. Because enforcement relies on negotiated grievance processes that require workers to self-identify algorithmic harm, which is often invisible or locked behind data that workers cannot access, the system rewards symbolic compliance over functional redress. The non-obvious insight is that rigorous documentation can function not as a safeguard but as a legitimizing ritual, masking ongoing degradation in job quality through formally 'verified' but substantively inert audits.

Data Asymmetry

Mandating worker-accessible AI performance dashboards within bargaining units would compel employers to disclose the system-level productivity metrics that currently underwrite managerial decisions without worker input. The measure matters because, in manufacturing and call centers, partial automation relies on continuous data flows that workers generate but cannot interpret or challenge. Such transparency removes a key enabler of asymmetric adjustment, in which firms optimize around labor cost reduction using AI-generated insights while workers lack the baseline data to negotiate mitigation. The core imbalance thus lies not in the technology itself but in the controlled circulation of operational intelligence that shapes workplace power.

Temporal Misalignment

AI impact clauses fail because they are negotiated during stable labor cycles, not during the accelerated, incremental deployment phases of AI systems that erode job scopes over time. Collective bargaining historically responds to discrete technological disruptions—such as the introduction of CNC machines in auto plants in the 1980s—with defined retraining or severance terms, but today’s AI integrates quietly through software updates and data pipeline shifts that reconfigure tasks without triggering clause activation. This creates a lag where protections are tied to job elimination events, not the gradual devaluation of human labor within persisting roles, leaving partially automated workers—like loan underwriters using AI-scored risk assessments—without recourse despite diminished autonomy. The non-obvious insight is that the erosion of work occurs between bargaining cycles, rendering static agreements obsolete before they are tested.

Skill Obsolescence Lag

Workers in partially automated roles face protection gaps because AI clauses assume skill depreciation follows a predictable arc, but machine learning models now outpace retraining programs by continuously learning from worker inputs in real time. In warehouses using AI-coordinated logistics, for instance, pickers must adapt daily to shifting inventory algorithms that optimize paths and quotas, yet union training funds and curricula are locked into annual accreditation cycles governed by regional community colleges. This mismatch means that by the time upskilling occurs, the required competencies—such as interpreting dynamic AI-generated workloads—have already shifted, privileging firms that control algorithmic infrastructure. The historically significant shift is from episodic technological change to continuous algorithmic evolution, which turns skill development into a moving target.