Why Do Smart Homes Hide Law Enforcement Data Requests?

Analysis reveals 11 key thematic connections.

Key Findings

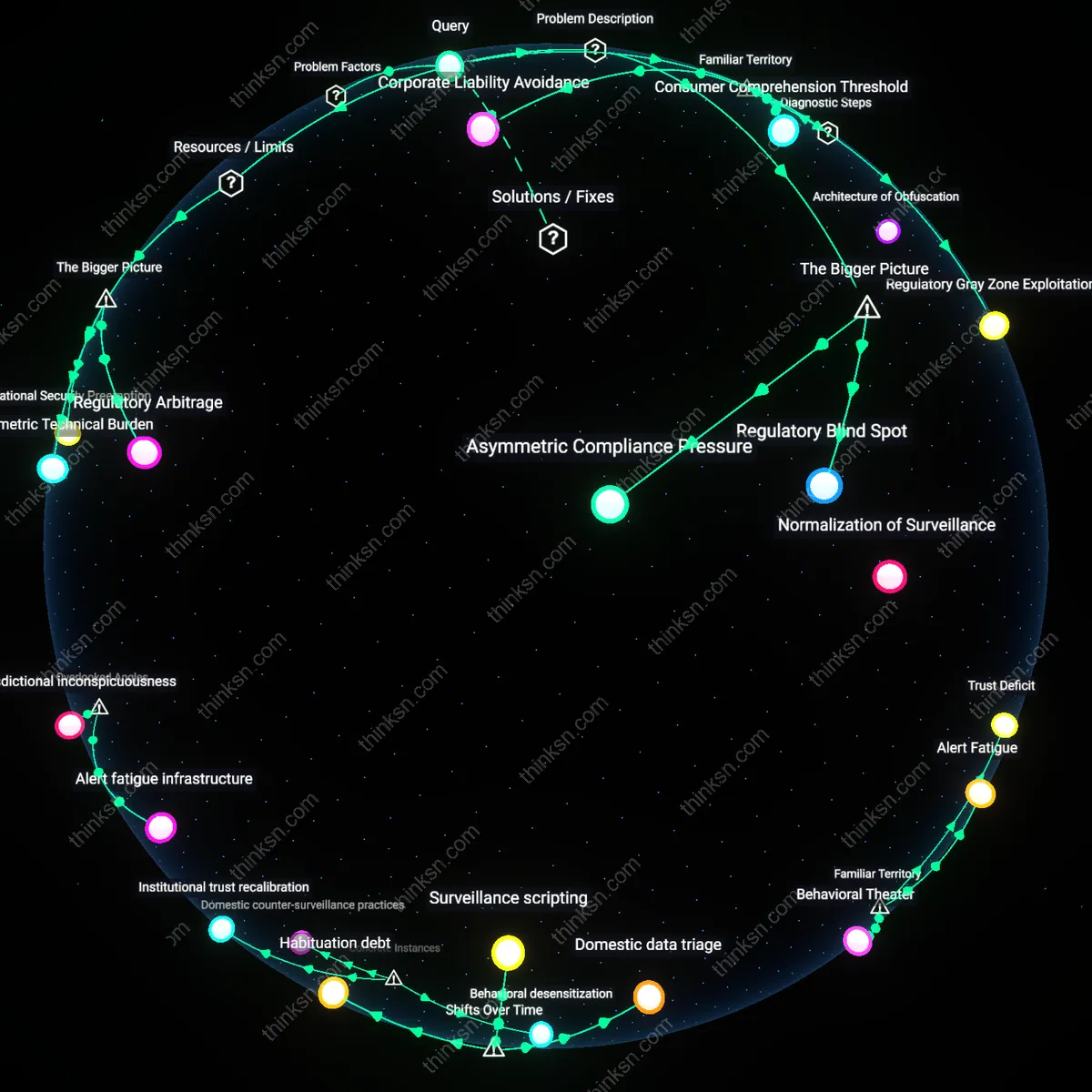

Regulatory Blind Spot

Privacy regulations focus on consumer transparency at the point of purchase but exclude governmental data-sharing contingencies, because consumer-protection oversight bodies lack authority over national security or law enforcement demands. This omission persists because agencies like the FBI or NSA operate under legal authorities such as National Security Letters or pen register statutes, which can prohibit service providers from disclosing specific data requests. Even if a company wanted to notify an affected user, federal law can block it. The non-obvious consequence is that notice-and-consent frameworks are structurally incapable of covering these access points, not due to corporate evasion alone, but because the legal regime governing surveillance actively decouples transparency from accountability.

Asymmetric Compliance Pressure

Device manufacturers prioritize regulatory compliance with commercial data protection laws like GDPR or CCPA, which emphasize disclosure of data collection and third-party sharing for marketing purposes, but do not require disclosure of compelled government access. As a result, legal teams optimize consent forms for auditability under these frameworks, systematically omitting low-probability, high-sensitivity scenarios like law enforcement interception that carry legal penalties for disclosure. The underappreciated dynamic is that compliance efficiency incentivizes narrowing notice content to only those disclosures that reduce litigation risk, not those that reflect actual data vulnerability.

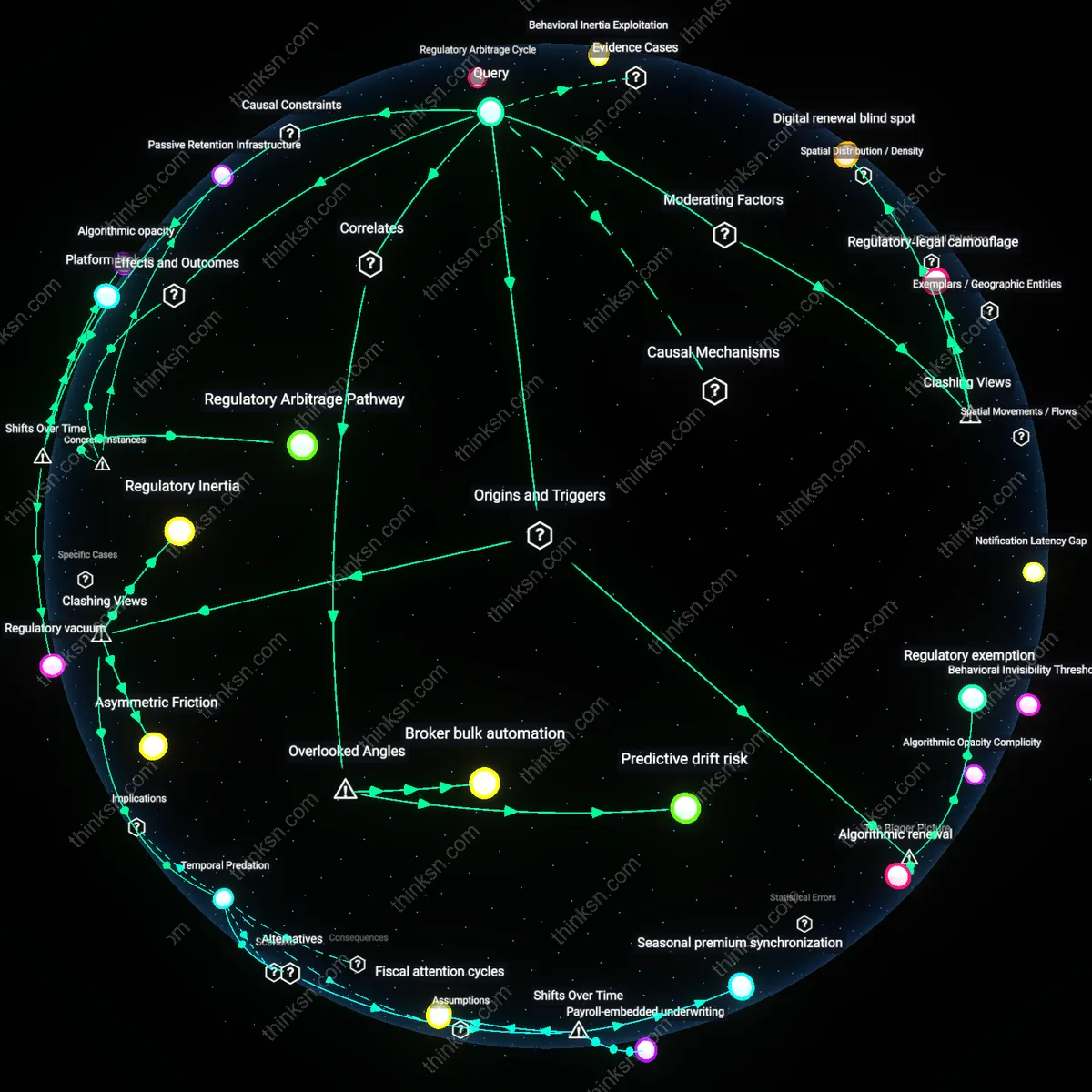

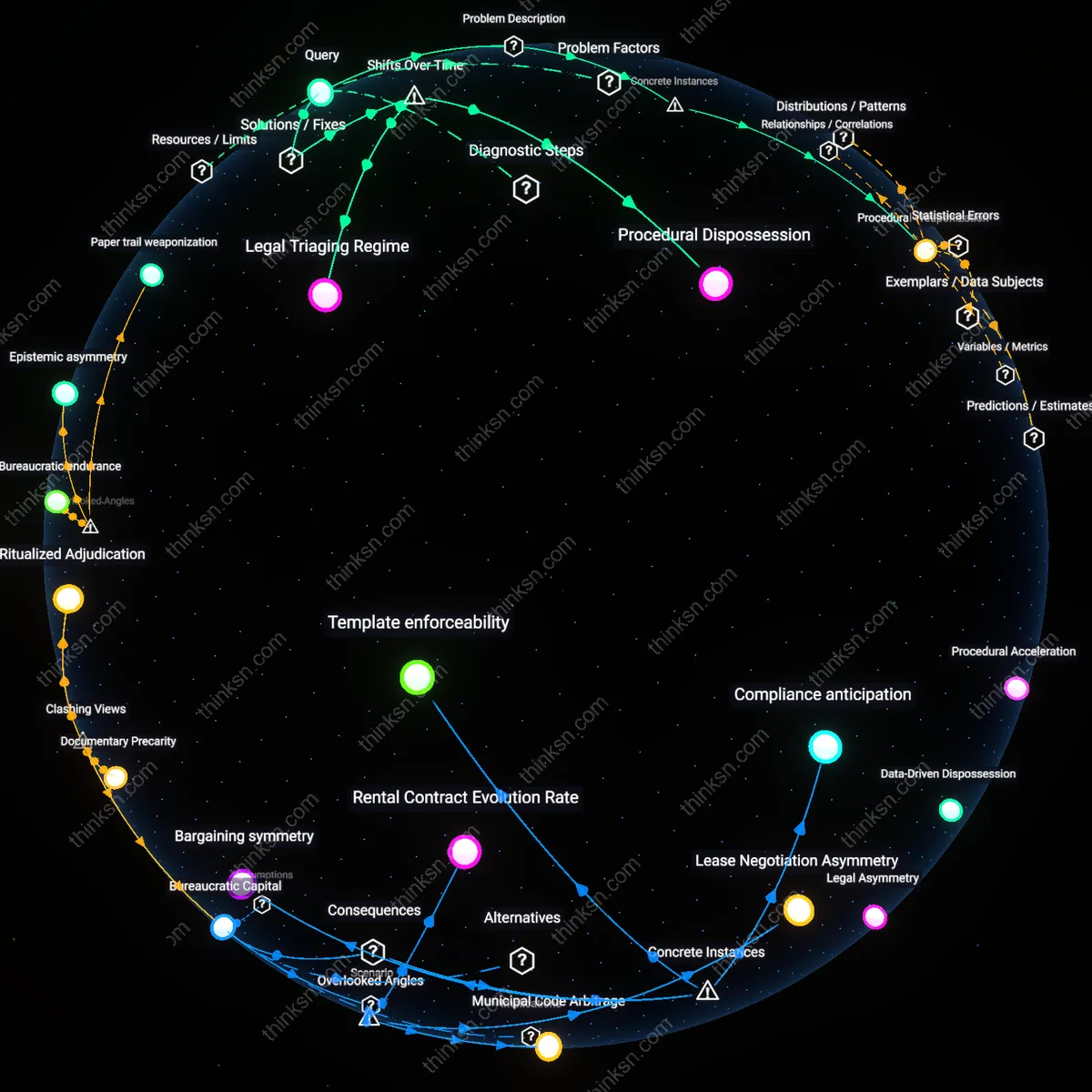

Regulatory Lag

Data privacy regulations have failed to keep pace with the proliferation of smart-home devices, leaving law enforcement data access loopholes unaddressed in notice-and-consent frameworks. After the 2010s consumer IoT boom, regulators continued relying on web-era consent paradigms designed for websites, not embedded sensors. The result was a misalignment in which agencies like the FBI could obtain data under the third-party doctrine with minimal oversight. This gap emerged not from deliberate omission but from the slow institutional evolution of legal norms compared to rapid hardware scaling, which shows that legacy compliance templates cannot capture real-time surveillance risks in physical domestic spaces.

Architecture of Obfuscation

The modular design of smart-home ecosystems centralized data governance in cloud platforms post-2014, enabling manufacturers like Amazon and Google to externalize legal obligations by routing device data through opaque backend infrastructures. As the backend-as-a-service model matured, data access pathways for law enforcement were embedded in non-disclosable operational processes, such as internal compliance tickets or the handling of National Security Letters, and were shielded from end-user visibility by design. This technical decentralization of accountability masked state access beneath layers of contractual and jurisdictional complexity, making transparency structurally infeasible rather than merely optional.

Normalization of Surveillance

Following the post-9/11 expansion of digital surveillance, which became broadly accepted during the 2000s, law enforcement’s access to personal data became an assumed operational baseline, which consumer tech firms internalized when designing smart-home services from 2015 onward. Instead of treating state data requests as exceptional events requiring disclosure, companies adopted a default posture of compliance, seen in automatic adherence to pen register orders or data-sharing with fusion centers, because the legal risk of resisting was perceived as greater than the risk of user backlash. This shift redefined privacy not as user autonomy but as corporate risk management, producing consent frameworks that systematically erase the state as a data actor.

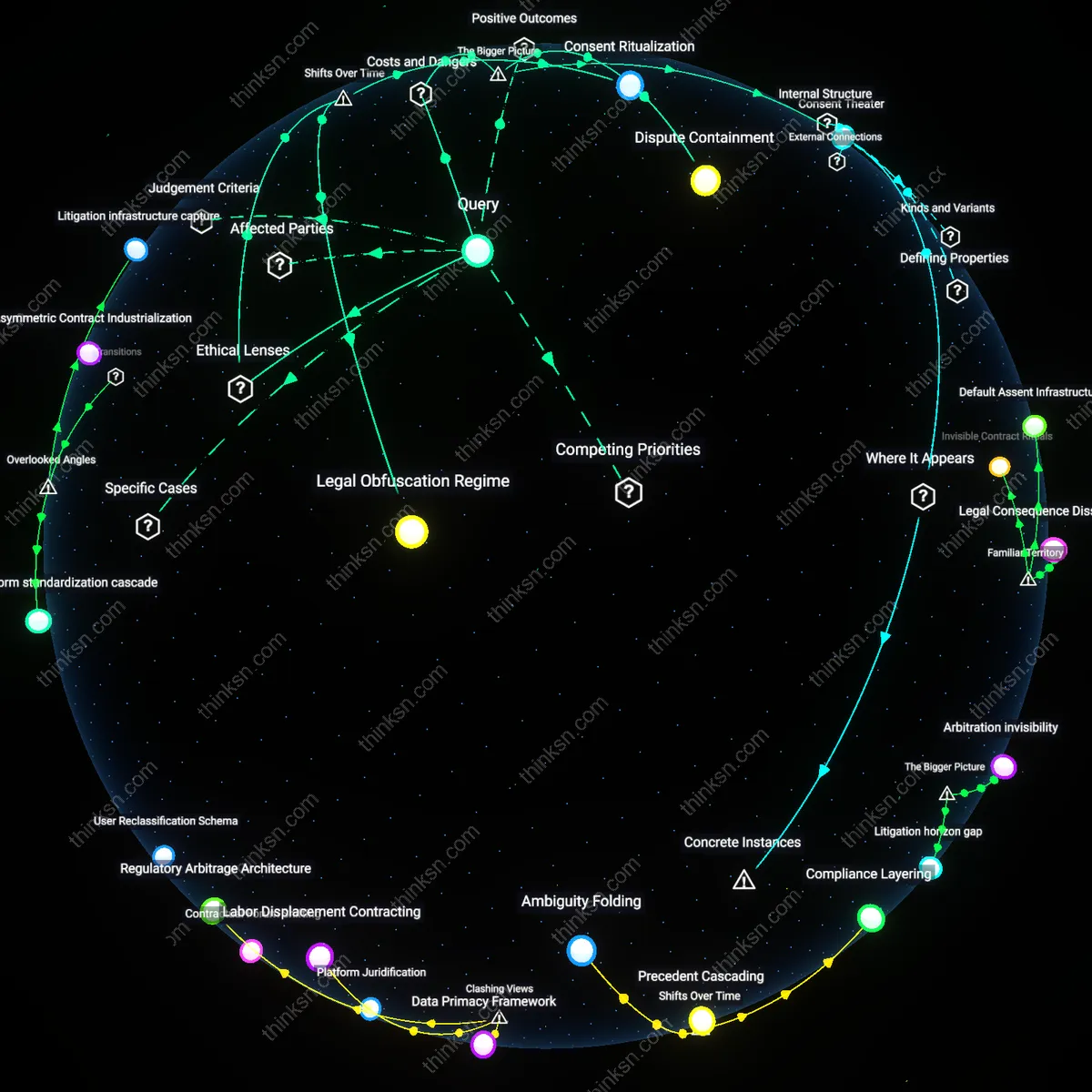

Corporate Liability Avoidance

Smart-home companies omit law enforcement data access disclosures to preempt legal exposure. These firms operate under ambiguous regulatory interpretations of the Electronic Communications Privacy Act, where revealing cooperation pathways could establish precedent for third-party access rights or incite class-action litigation over privacy violations. By maintaining strategic silence, they avoid affirming any duty to notify users and preserve maximum legal flexibility. The non-obvious insight is that omitting the disclosure is not just opacity; it is a deliberate litigation risk calculus embedded in product documentation.

Consumer Comprehension Threshold

Notice-and-consent forms exclude law enforcement data access because including it would exceed users’ cognitive tolerance for complexity. Interface designers at firms like Amazon and Google routinely prioritize scannable, actionable summaries, knowing that adding legally rare but emotionally charged scenarios—such as government surveillance—triggers disproportionate anxiety while offering minimal behavioral utility. The underappreciated reality is that simplicity isn’t a flaw in the model but a designed threshold, calibrated to sustain perceived usability over exhaustive transparency.

Regulatory Gray Zone Exploitation

Manufacturers omit disclosure of law enforcement access because federal privacy frameworks do not explicitly mandate it for device-level data. In the U.S., agencies can compel data production through National Security Letters or administrative subpoenas, and companies exploit gaps between sector-specific laws like ECPA and emerging IoT contexts to avoid affirmative disclosures. The key insight is that the absence isn’t accidental—it reflects deliberate navigation of fragmented jurisdictional oversight where compliance is defined by what is required, not what is ethically expected.

Regulatory Arbitrage

Manufacturers omit disclosure about law enforcement data access because privacy regulations like the FTC Act and state laws focus on consumer-facing transparency, not government data requests, allowing companies to treat such disclosures as legally optional. This regulatory gap is actively exploited by device makers who prioritize compliance with minimum disclosure standards to avoid liability while maximizing operational flexibility, particularly in jurisdictions where National Security Letters or gag orders preempt transparency. The non-obvious consequence is that companies are not merely passive actors following mandates but are strategically using fragmented oversight to minimize public scrutiny of government collaboration, which undermines informed consent as a functional norm.

Asymmetric Technical Burden

Smart-home firms exclude law enforcement data access from notices because implementing dynamic, legally accurate disclosures across millions of distributed devices would require real-time updates, legal interpretation infrastructure, and user interface redesigns that exceed typical development cycles and firmware constraints. Most manufacturers operate under tight hardware margins and rely on centralized cloud logic, meaning localized transparency mechanisms are technically infeasible without systemic reengineering. The underappreciated reality is that technical limitations are not incidental but structural—device architecture itself becomes a barrier to disclosure, privileging backend control over edge-level accountability.

National Security Preemption

Disclosures about law enforcement data access are omitted because federal mechanisms like National Security Letters and FISA orders legally prohibit companies from revealing specific surveillance activities, creating a downstream effect where notice-and-consent frameworks cannot reflect compelled data sharing. This legal blackout binds device makers—particularly those in the U.S. or serving U.S. markets—even when they would otherwise disclose, embedding silence into their privacy architectures. The systemic insight is that the notice model fails not due to corporate negligence alone, but because national security regimes actively disable transparency at the policy level, making consumer consent structurally unattainable in high-risk data contexts.