Is Your Heartbeat Worth Personalized Fitness Over Privacy?

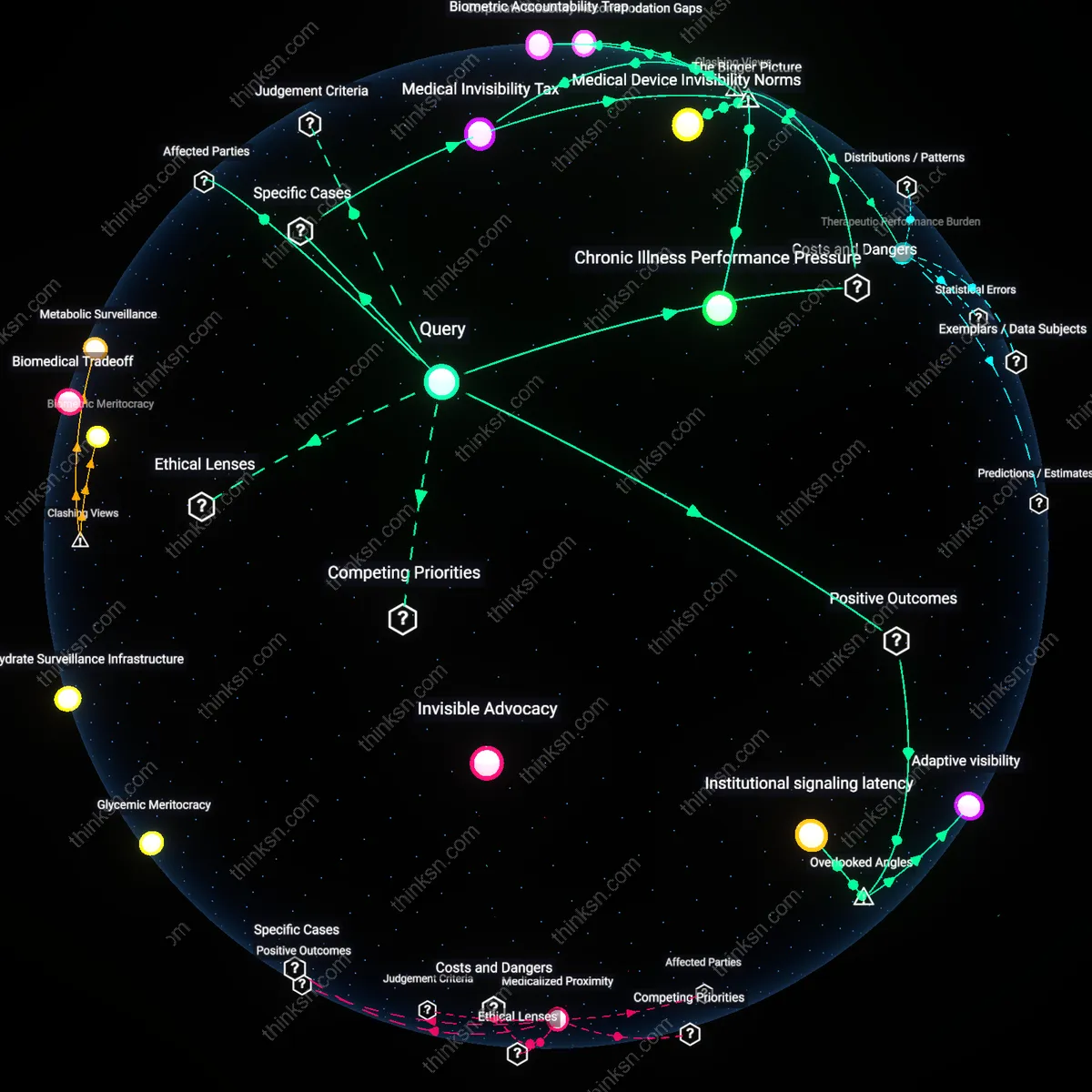

Analysis reveals 10 key thematic connections.

Key Findings

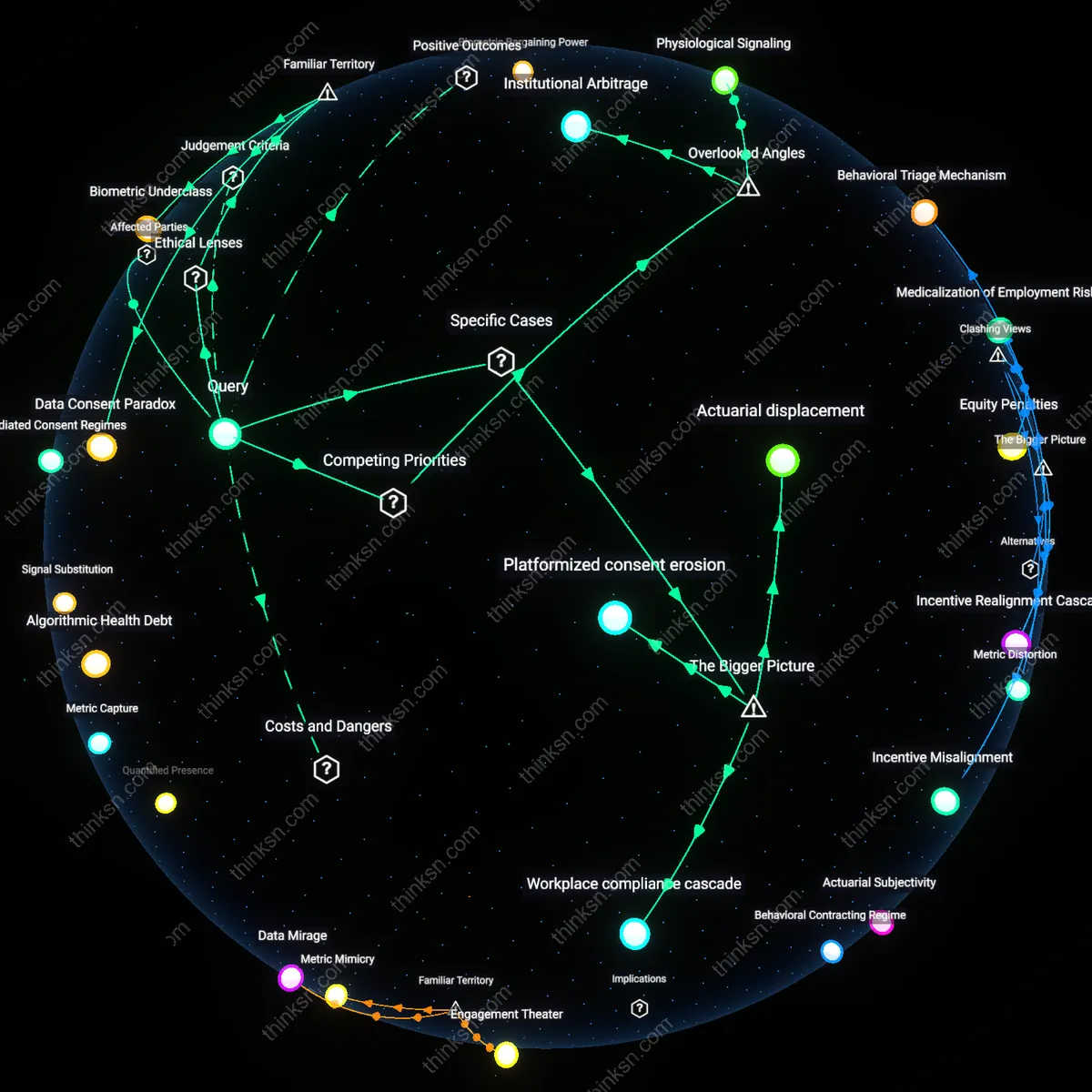

Biometric Exploitation Nexus

Insurers in the United States have used wearable-derived heart-rate data, aggregated through employer wellness programs such as those built on Omada Health partnerships, to adjust premiums under the ACA’s wellness-incentive rules. This shifts the burden of data exposure onto low-income workers who cannot opt out without penalty. It also reveals that the profits of personalization rest on institutionalized health surveillance, in which marginalized groups subsidize systemic efficiency.

Algorithmic Health Debt

In 2018, Fitbit data was used in a Canadian personal injury lawsuit to challenge a plaintiff’s claim of chronic fatigue. Insurance assessors weaponized elevated resting heart rates recorded during the alleged disability period to deny compensation. The case shows how personalized baselines become forensic tools that turn past physiological norms into legal liabilities, a burden that falls hardest on those without the resources to contest algorithmic interpretations.

Mediated Consent Regimes

The Royal Free London NHS Foundation Trust’s data-sharing agreement with Google DeepMind, which became public in 2016, gave the company access to roughly 1.6 million patient records to develop Streams, an app for detecting acute kidney injury. Public trust collapsed when it emerged that patients had not been adequately informed, and in 2017 the UK Information Commissioner’s Office ruled that the trust had failed to comply with data protection law. The episode demonstrates that the consent architecture of public-private health collaborations normalizes passive data surrender under perceived medical authority.

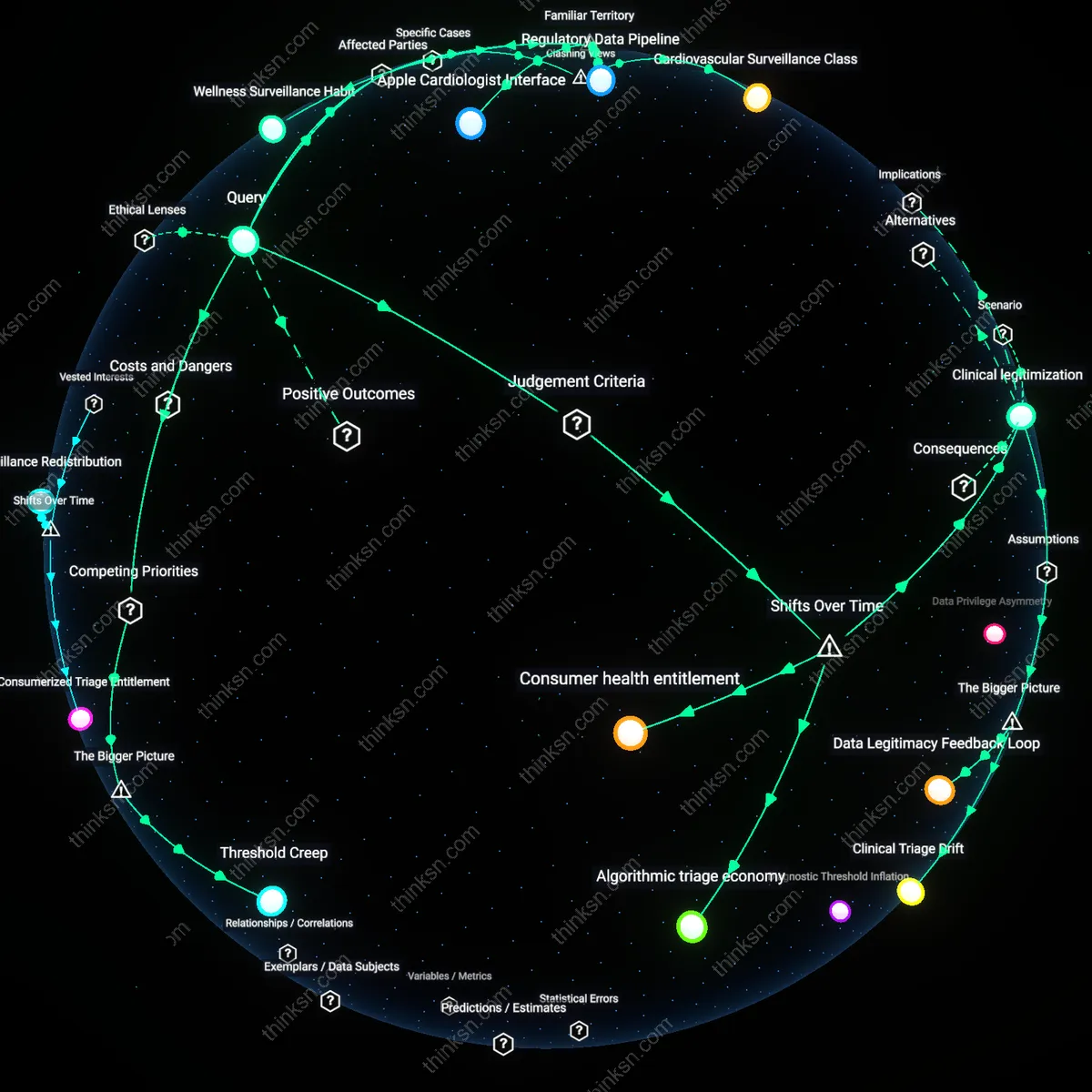

Institutional Arbitrage

The benefit of personalized fitness recommendations from shared heart-rate data outweighs the risk of health discrimination only when data flows stay within regulated clinical ecosystems. The overlooked reality is that consumer wearables enable institutional arbitrage: technology firms can route biometric data through jurisdictions with weak health protections before reselling it to actors in highly regulated ones. This creates a backdoor for discrimination that bypasses traditional healthcare safeguards. The non-obvious insight is that harm is determined less by the sensitivity of the data itself than by legal fragmentation, with jurisdictional gaps serving as transfer mechanisms.

Physiological Signaling

The risk of health discrimination from shared heart-rate data outweighs its fitness benefits because heart-rate variability can serve as a covert signal of mental health status, one that machine learning models can detect even when users report no psychological symptoms. This form of physiological signaling is poorly addressed by current disability and mental health laws, which largely assume that disclosure is verbal and intentional, so it becomes a hidden vector for bias in hiring or insurance. The underappreciated factor is that biometrics can expose conditions before clinical diagnosis, moving discrimination into a pre-symptomatic, legally invisible zone.

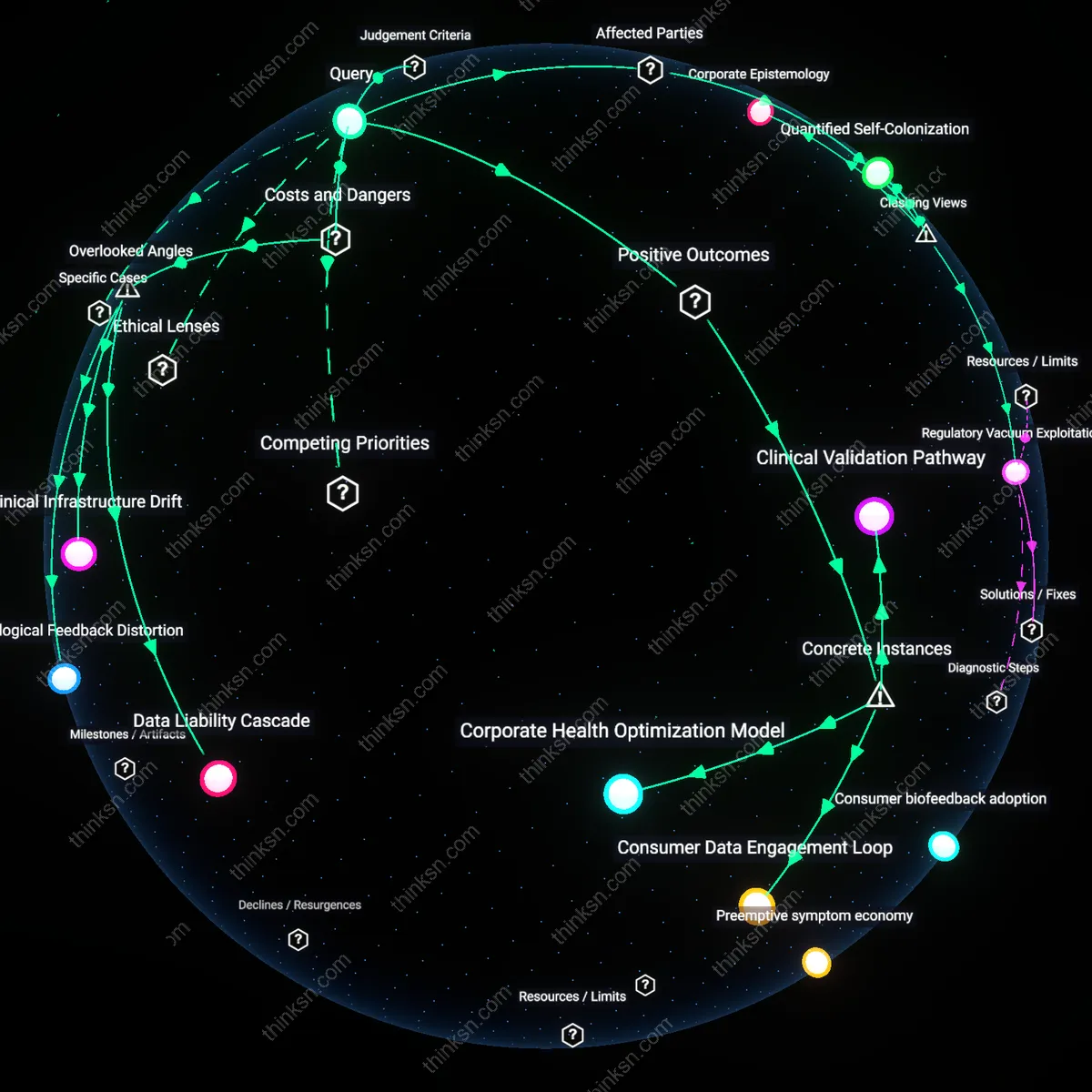

Data Consent Paradox

Yes, the risk outweighs the benefit, because users routinely surrender heart-rate data under an illusion of control, trusting fitness platforms to act as ethical stewards. Meanwhile, privacy policies and terms of service shaped by libertarian-leaning data liberalism allow downstream sharing with insurers and employers without a meaningful opt-out. HIPAA protects health data in name, but it applies to covered entities such as providers and health plans and generally does not reach wearable-tech firms; this false sovereignty over personal data enables health discrimination under color of consent. The non-obvious truth is that the familiar 'privacy settings' users adjust create an ethical fig leaf, not a barrier.

Biometric Underclass

No, the benefit does not outweigh the risk, because normalizing shared biometric data in employment and insurance contexts, such as corporate wellness incentives or premium discounts, creates de facto health stratification. This process is legitimized by neoliberal policy doctrines that frame health as an individual responsibility. Employers and insurers, acting as informal regulators, use heart-rate trends to infer productivity or risk and penalize those with 'suboptimal' metrics even in the absence of diagnosed illness. The unspoken consequence, hidden behind the familiar promise of 'personalized fitness,' is the emergence of a biometric underclass excluded from opportunities on the basis of predictive proxies.

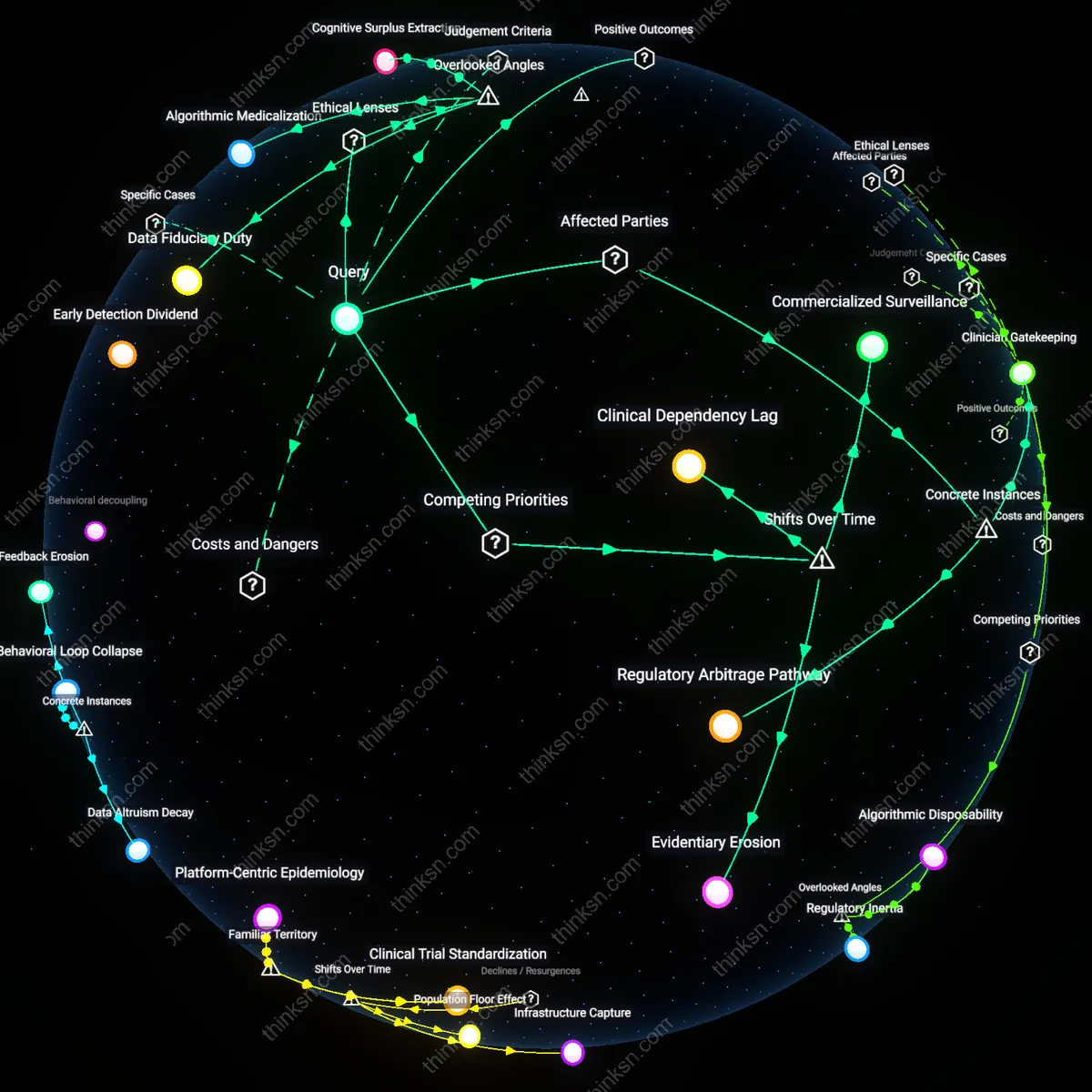

Actuarial Displacement

Personalized fitness recommendations from shared heart-rate data do not outweigh the risk of health discrimination because U.S. insurers, including large carriers such as UnitedHealth Group, can use aggregated biometric data to reclassify pre-symptomatic individuals as high-risk, shifting the financial burden onto those most vulnerable to algorithmic misattribution. The mechanism operates through private insurance markets in which risk pooling depends on asymmetric data access, letting carriers treat real-time physiological signals as proxies for preexisting conditions despite the absence of any clinical diagnosis. The significance lies in the quiet transformation of preventive health tools into instruments of financial exclusion, masked as wellness innovation. What remains underappreciated is how data originally collected for individual benefit is structurally repurposed to undermine collective risk solidarity.

Workplace Compliance Cascade

The risk of health discrimination from shared heart-rate data exceeds its benefit because corporate wellness programs, such as those offered by large employers like Amazon or Walmart, can use biometric benchmarks to impose financial penalties on employees who fail to meet algorithmically generated fitness thresholds. These programs are enabled by the Affordable Care Act’s wellness incentive provisions, which allow rewards or penalties worth up to 30% of the total cost of employee-only coverage (up to 50% for tobacco-related programs). The result is a compliance cascade in which biometric data justifies coercive workplace health governance. The systemic dynamic stems from the privatization of public health responsibility onto employers, who outsource risk management to third-party platforms such as Vitality and thereby embed surveillance in compensation structures. The non-obvious effect is that personalization becomes a tool of norm enforcement rather than care, privileging behavioral conformity over medical individuality.

Platformized Consent Erosion

The benefit of personalized fitness recommendations does not outweigh the risk of health discrimination because data from wearable devices such as Fitbit or Apple Watch is routinely aggregated by health platforms such as Google Fit and Apple Health and can be passed on to data brokers and health-analytics firms without granular user control, enabling downstream profiling in employment and lending. This happens through opaque data supply chains in which initial consent to health tracking becomes functionally irreversible once the data enters multi-tenant cloud ecosystems controlled by firms with conflicting commercial incentives. The systemic pressure comes from the absence of comprehensive federal data privacy legislation in the United States, which lets secondary uses of biometric data proliferate under the legal guise of a 'user agreement'. What is overlooked is that personalization depends on surveillance infrastructures that systematically degrade autonomy, turning informed consent into a procedural fiction.