AI Audits vs Deep Regulations: A Senior Accountant's Dilemma?

Analysis reveals 7 key thematic connections.

Key Findings

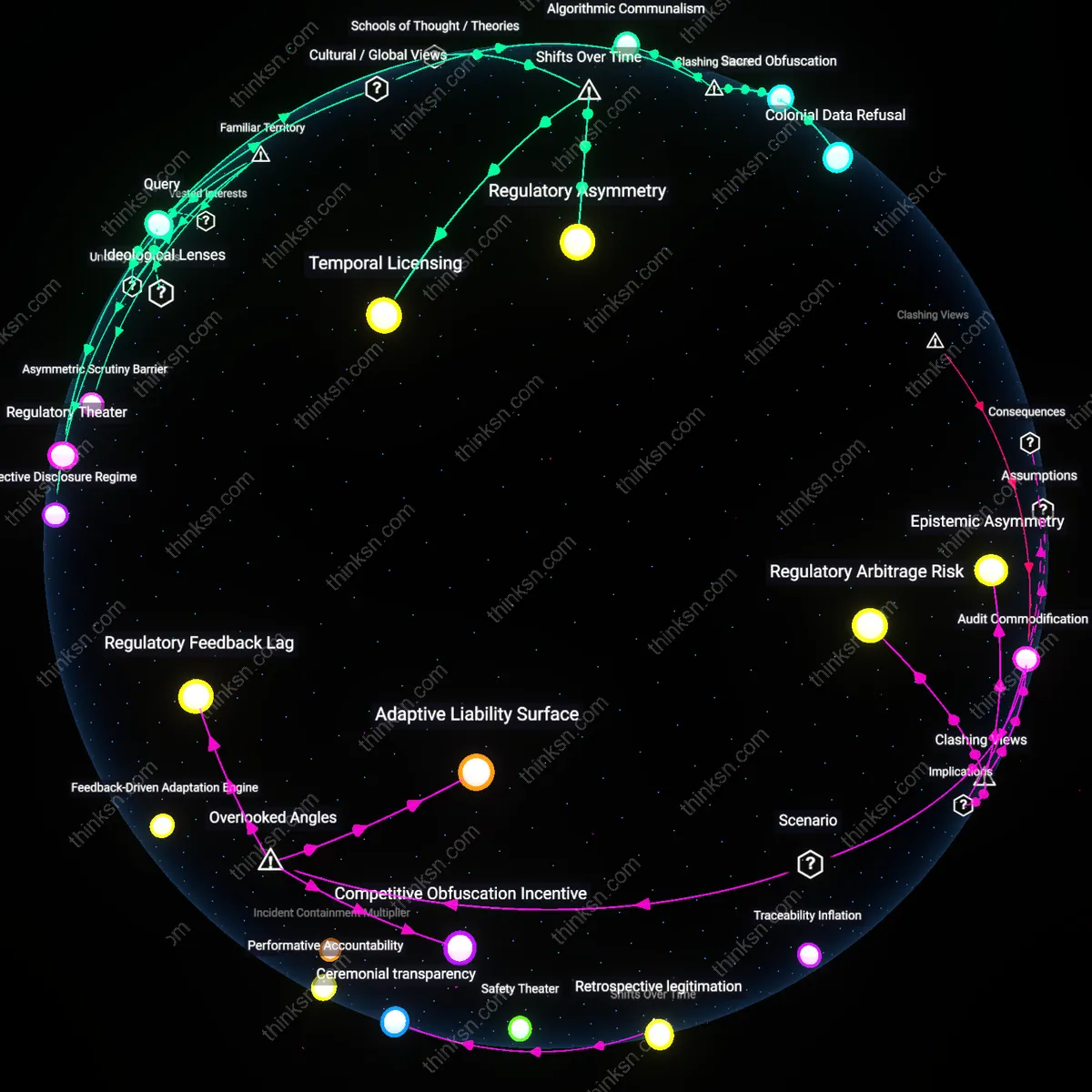

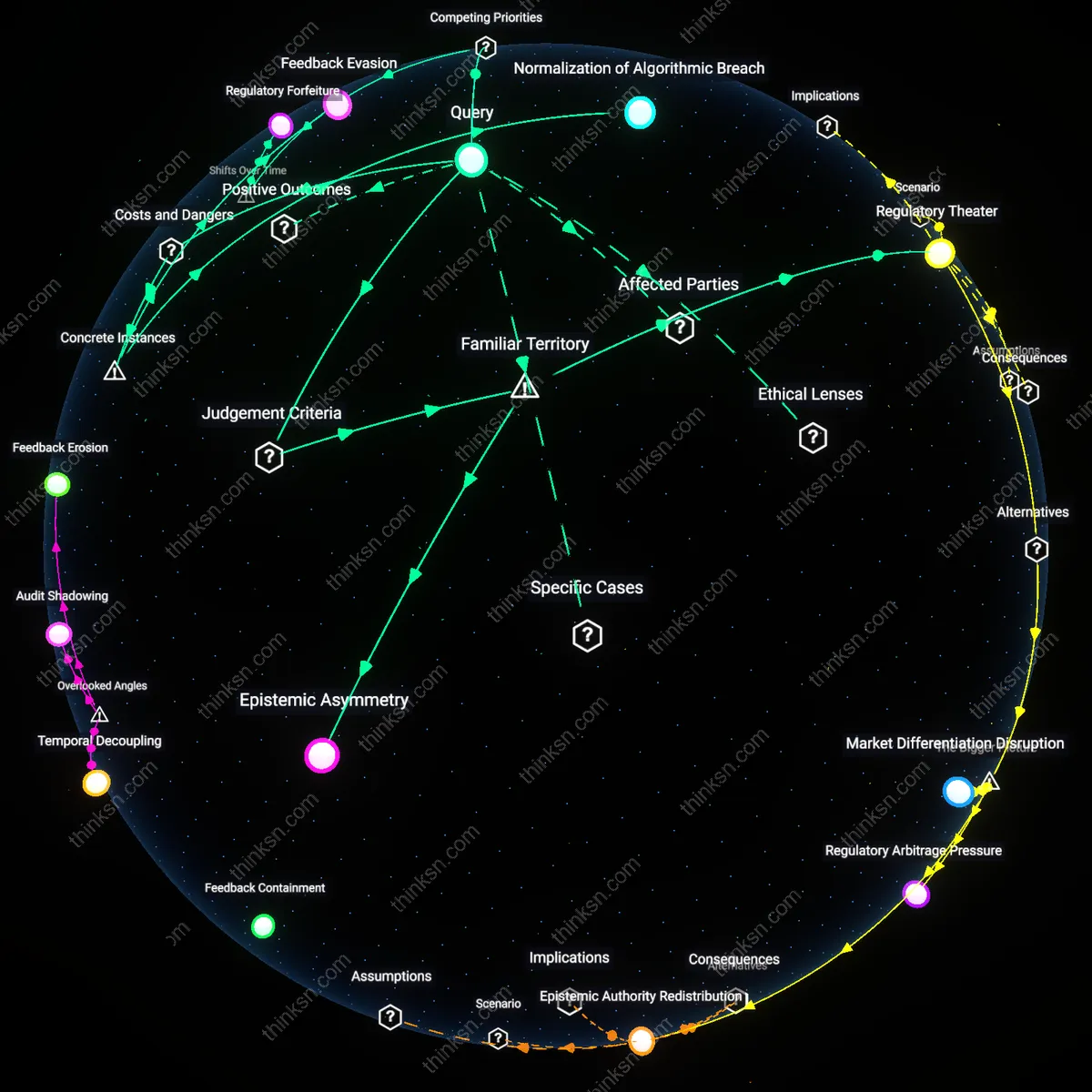

Compliance Fracture

Senior accountants must codify jurisdictional regulatory variances into AI training datasets as non-negotiable input constraints, not post-hoc validations. This forces AI tools to operate within legally defined boundaries by design: regional audit logic, such as SEC versus EBA capital treatment rules, is embedded in the model architecture rather than applied as an external check. The mechanism shifts compliance from a review layer to a computational substrate. The underappreciated reality is that AI adoption disparities across organizations are not delays to overcome but active sources of regulatory divergence. Dismissing them as systemic noise obscures how automation entrenches, rather than resolves, interpretation gaps.
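To make the contrast between an input constraint and a post-hoc validation concrete, here is a minimal sketch in Python. Everything in it is an illustrative assumption: the `AuditRecord` fields, the `RULES` table, the jurisdiction tags, and especially the numeric thresholds, which are placeholders and not actual SEC or EBA requirements. The idea is only that a record enters the training set if its label agrees with the codified rule for its jurisdiction, and that a jurisdiction with no codified rule stops the pipeline instead of passing through silently.

```python
from dataclasses import dataclass
from typing import Callable, Dict, List


@dataclass(frozen=True)
class AuditRecord:
    jurisdiction: str      # hypothetical tag, e.g. "US" or "EU"
    capital_ratio: float   # illustrative feature
    label: str             # "compliant" or "non_compliant"


# Hypothetical rule table. The thresholds are placeholders for illustration,
# not real regulatory values.
RULES: Dict[str, Callable[[AuditRecord], bool]] = {
    "US": lambda r: r.capital_ratio >= 0.08,
    "EU": lambda r: r.capital_ratio >= 0.105,
}


def build_training_set(records: List[AuditRecord]) -> List[AuditRecord]:
    """Admit only records whose label agrees with their jurisdiction's rule."""
    admitted = []
    for record in records:
        rule = RULES.get(record.jurisdiction)
        if rule is None:
            # No codified rule: refuse to train rather than guess.
            raise ValueError(
                f"no codified rule for jurisdiction {record.jurisdiction!r}"
            )
        expected = "compliant" if rule(record) else "non_compliant"
        if record.label == expected:
            admitted.append(record)
    return admitted
```

Under these assumptions, the same capital ratio can be labeled differently depending on the jurisdiction tag, and any example that contradicts the codified rule never reaches the model. A post-hoc design would instead train on everything and check predictions afterward.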

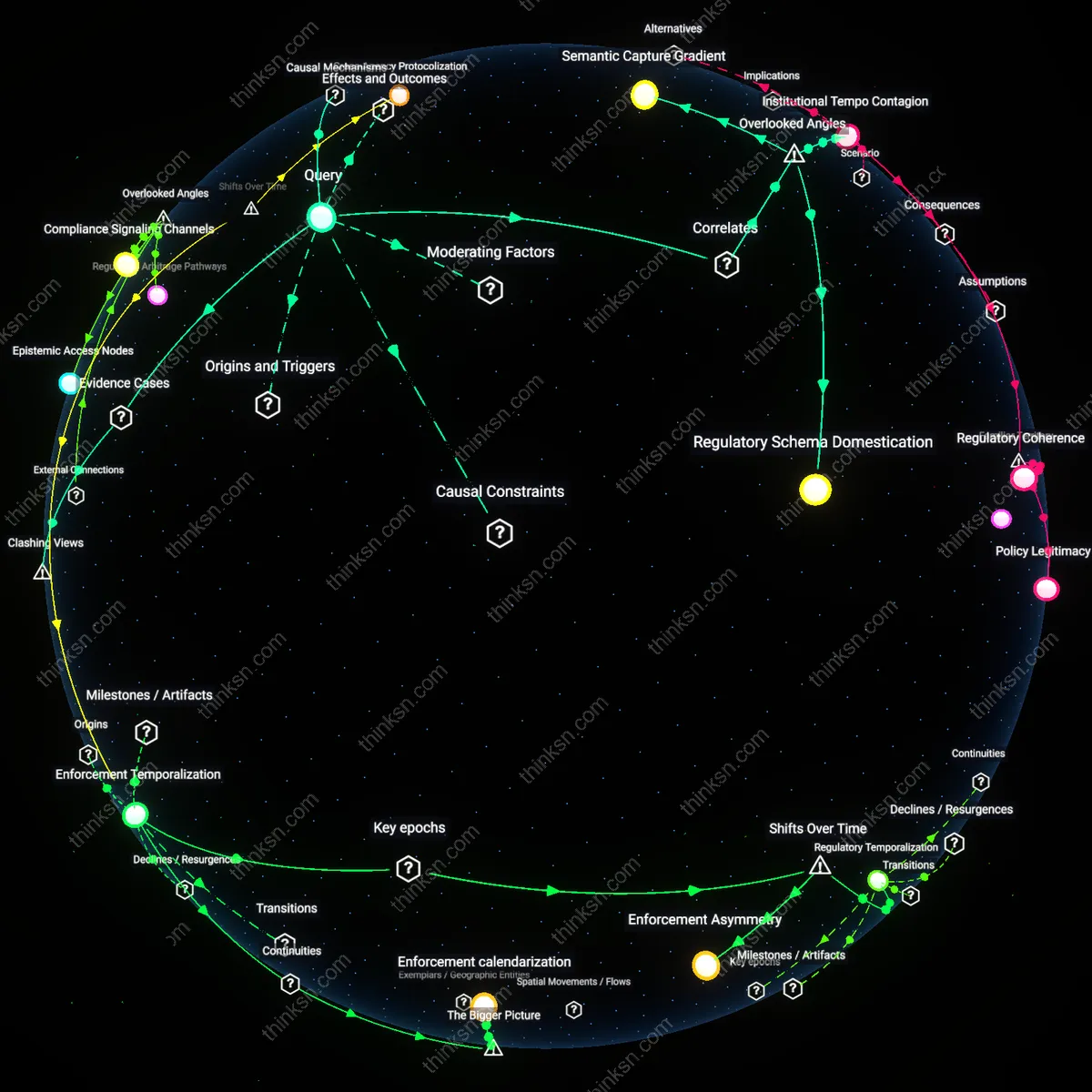

Regulatory Buffer Zones

Senior accountants at the U.S. Securities and Exchange Commission (SEC) preserved regulatory expertise during early AI audit experiments by mandating human-led review layers around AI-generated risk flags. In the 2021 pilot with Kira Systems' machine learning contract analysis tool, for example, legal interpretation remained with veteran staff. This created a functional division between automated pattern detection and human regulatory judgment, allowing AI integration without ceding interpretive authority. The non-obvious insight is that resistance to full automation was not a technical limitation but a deliberate institutional safeguard ensuring AI served, rather than supplanted, domain mastery.
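The division of labor described above, where the model detects patterns and a human holds interpretive authority, can be sketched as a simple review gate. This is a hypothetical illustration, not a description of any SEC or Kira Systems workflow: `RiskFlag`, `Status`, `human_review`, and `reportable` are names invented for the example. The point is that a flag's model score alone never makes it reportable; only a named human reviewer can confirm or dismiss it.

```python
from dataclasses import dataclass
from enum import Enum
from typing import List, Optional


class Status(Enum):
    PENDING_REVIEW = "pending_review"
    CONFIRMED = "confirmed"
    DISMISSED = "dismissed"


@dataclass
class RiskFlag:
    document_id: str
    model_score: float                      # output of the (hypothetical) AI tool
    status: Status = Status.PENDING_REVIEW  # every flag starts unreviewed
    reviewer: Optional[str] = None


def human_review(flag: RiskFlag, reviewer: str, confirm: bool) -> RiskFlag:
    """Record a human decision; the model score is advisory only."""
    if not reviewer:
        raise ValueError("a named human reviewer is required")
    flag.status = Status.CONFIRMED if confirm else Status.DISMISSED
    flag.reviewer = reviewer
    return flag


def reportable(flags: List[RiskFlag]) -> List[RiskFlag]:
    """Only human-confirmed flags leave the review layer."""
    return [f for f in flags if f.status is Status.CONFIRMED]
```

In this sketch a flag with a very high score stays out of `reportable` until someone signs off on it, which is one way to keep automated detection from turning into automated judgment.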

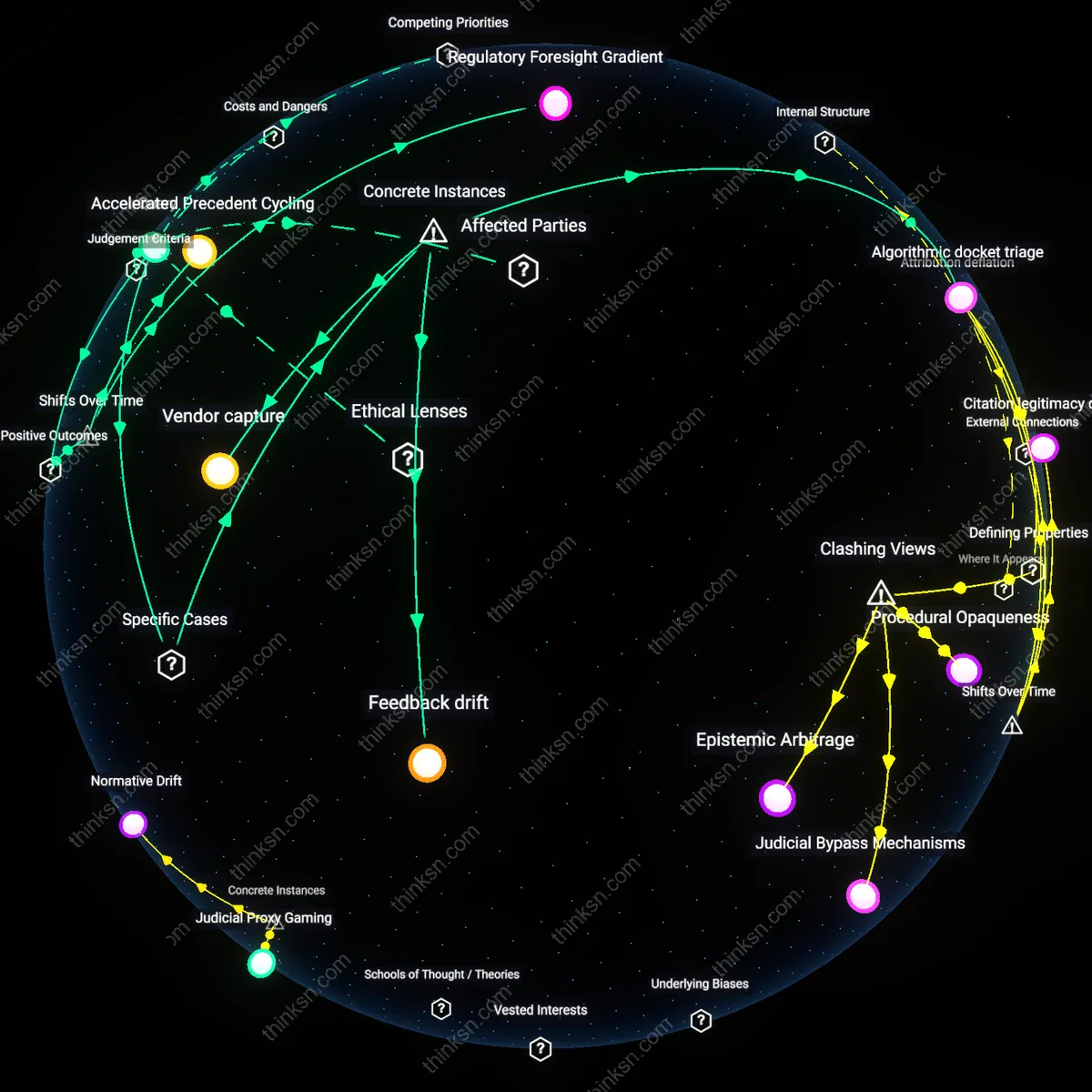

Expertise Arbitrage

In 2023, Deloitte’s rollout of AI-augmented audit workflows across its EU practices relied on a cadre of hybrid professionals, ‘AI-audit liaisons’, trained in both International Financial Reporting Standards (IFRS) and data model validation, as exemplified by the Frankfurt Audit Innovation Hub. These individuals translated between AI development teams and regulatory compliance officers, ensuring tools adhered to local audit customs even when central algorithms were standardized. The overlooked dynamic here is that expertise preservation occurred not through institutional inertia but through active role creation that arbitraged across technical and regulatory domains.

Asymmetric Governance

When PwC implemented AI audit assistants in its Japanese branch starting in 2022, it retained a centralized team of veteran tax specialists from the Tokyo Financial Instruments Bureau to oversee model outputs, despite the global AI platform being managed from London. This created a local veto point where regulatory non-compliance could halt deployment, demonstrating how uneven adoption enabled niche retention of institutional memory. The critical insight is that asymmetry in decision authority — not uniform tool access — became the vehicle for preserving deep regulatory knowledge in high-compliance environments.

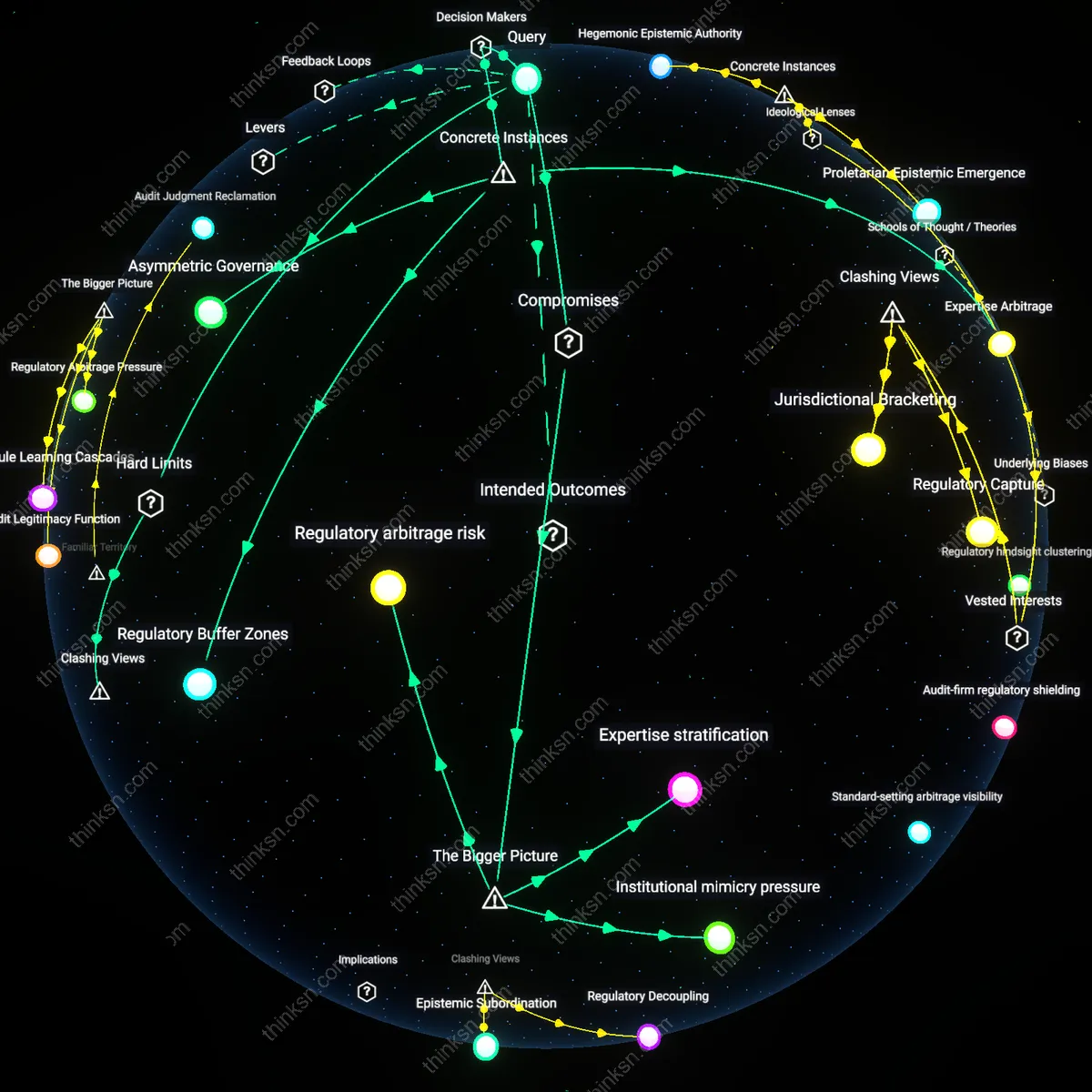

Regulatory Arbitrage Risk

Senior accountants must prioritize harmonizing internal AI-augmented audit protocols with jurisdiction-specific regulatory expectations to prevent misalignment that could be exploited as regulatory arbitrage. As AI tools automate compliance checks, inconsistent adoption across firms creates variation in enforcement visibility: some organizations appear more compliant because of automation, while others maintain deeper interpretive expertise through manual processes. This divergence creates regulatory arbitrage risk, where institutions gain competitive advantage not through higher compliance quality but through differential presentation, driven by regulators' uneven capacity to assess AI-mediated outputs. The significance lies in how automation asymmetries reshape enforcement dynamics, privileging speed over interpretive fidelity in ways that are invisible without cross-institutional comparison.

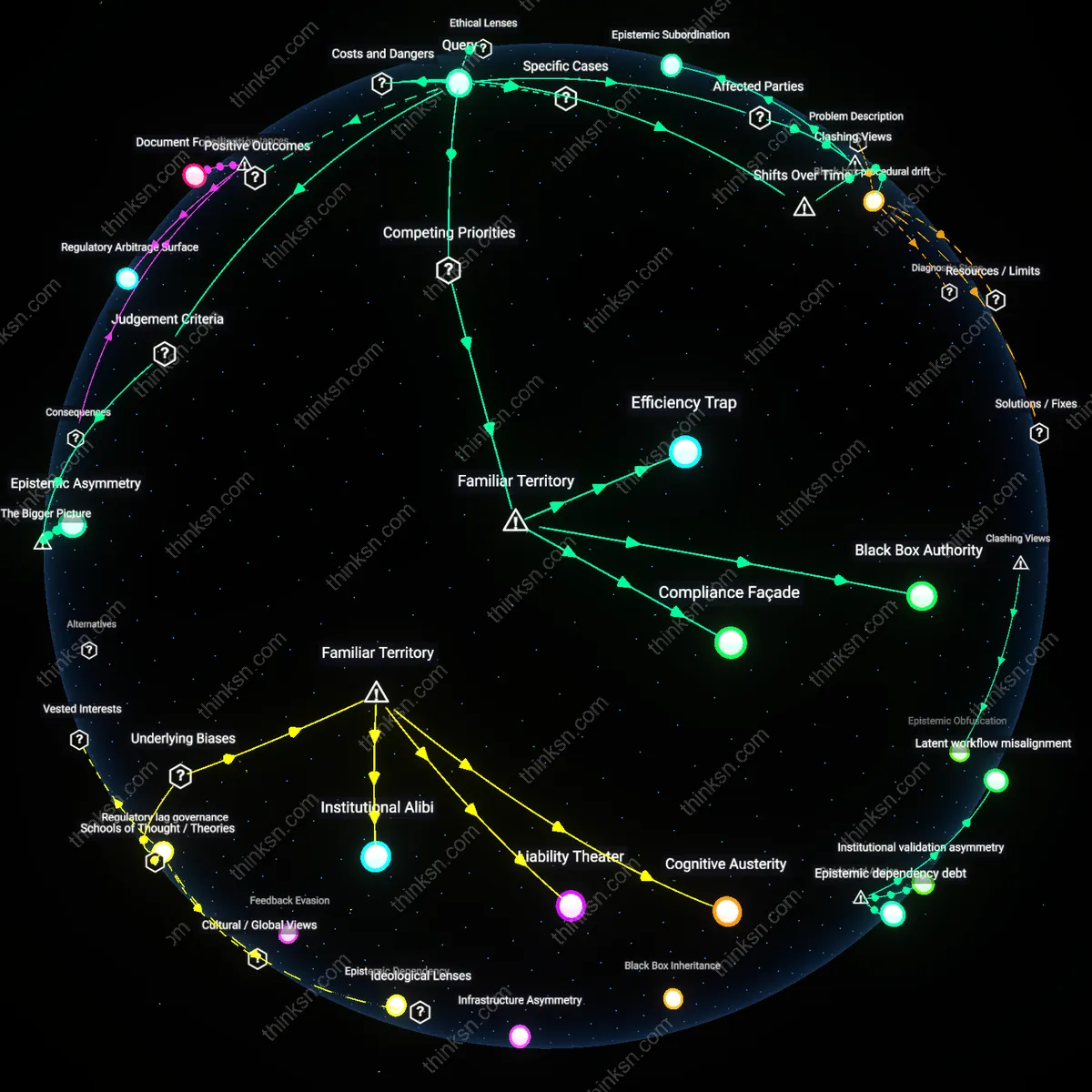

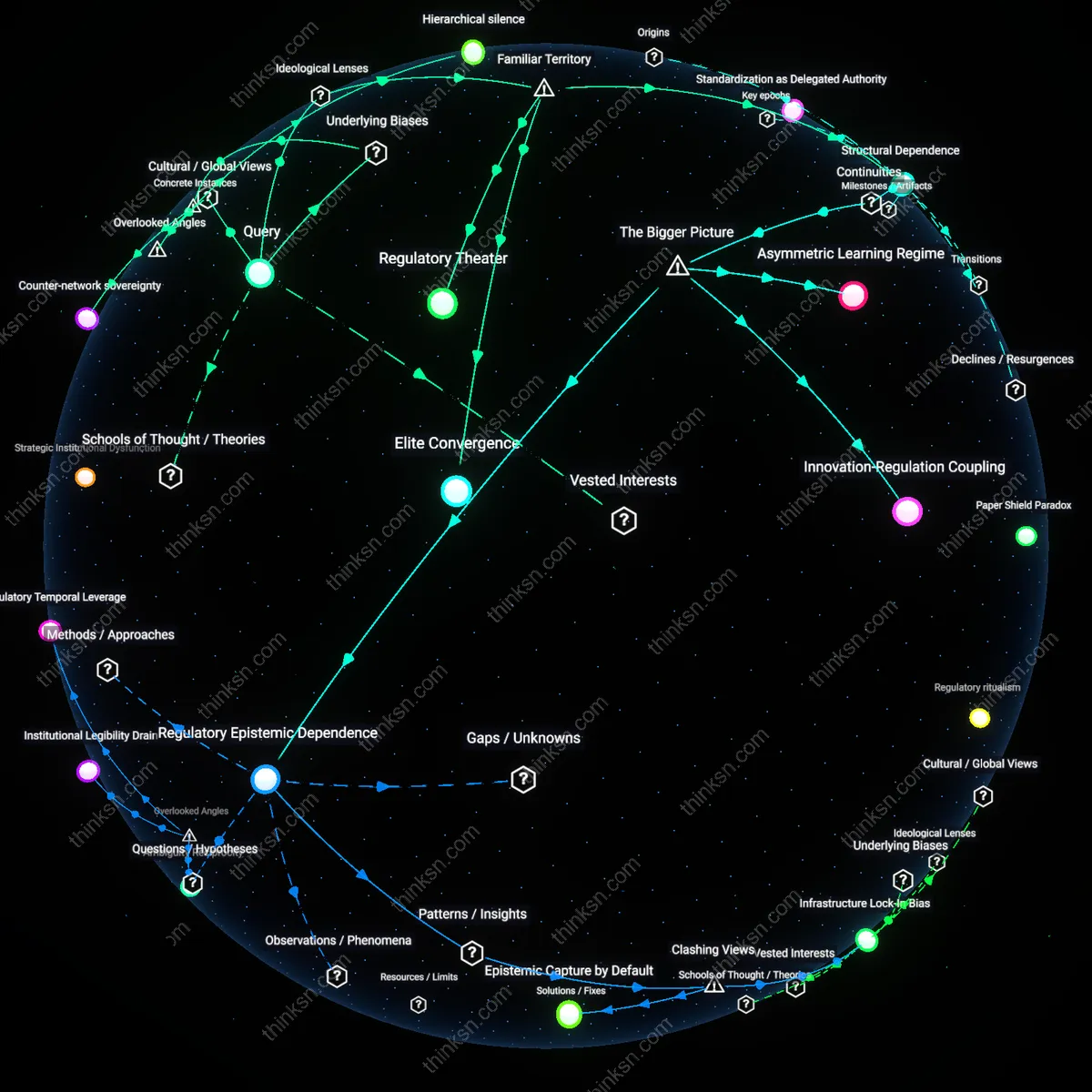

Expertise Stratification

Senior accountants preserve regulatory expertise by assigning AI tools to junior teams for routine validation while reserving high-discretion interpretive tasks for seasoned professionals, thereby maintaining institutional knowledge through hierarchical task partitioning. This works because AI adoption is uneven not just between organizations but within them, creating a dual-track workflow where efficiency-driven automation coexists with judgment-intensive legacy practices. The mechanism reflects expertise stratification, in which seniority stands in for accumulated judgment under automation pressure. It is sustained by client liability concerns and audit accountability structures that ultimately rest on human sign-off. This reveals how professional hierarchy functions as a risk-containment system when technological adoption outpaces regulatory codification.
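The dual-track workflow above amounts to a routing rule, which the following sketch makes explicit. The 1-to-5 discretion scale, the `SENIOR_THRESHOLD` cut-off, and the track names are all assumptions made for illustration; a real firm would define its own criteria. What the sketch preserves from the text is that routine work goes to the AI-assisted junior track, interpretive work goes to senior staff, and sign-off is human on both tracks.

```python
from dataclasses import dataclass
from typing import Dict


@dataclass(frozen=True)
class AuditTask:
    name: str
    discretion: int  # hypothetical scale: 1 (routine) to 5 (highly interpretive)


SENIOR_THRESHOLD = 3  # assumed cut-off between the two tracks


def route(task: AuditTask) -> Dict[str, str]:
    """Partition tasks by discretion level; sign-off is human on both tracks."""
    if not 1 <= task.discretion <= 5:
        raise ValueError("discretion must be between 1 and 5")
    if task.discretion >= SENIOR_THRESHOLD:
        track = "senior_interpretive"
    else:
        track = "junior_ai_assisted"
    return {"task": task.name, "track": track, "sign_off": "human"}
```

For example, `route(AuditTask("bank reconciliation tie-out", 1))` lands on the junior AI-assisted track, while a task scored 4 for discretion is reserved for senior review.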

Institutional Mimicry Pressure

Senior accountants sustain regulatory depth by selectively adopting AI features that mirror the procedural forms of established audit frameworks, even if the underlying analytics differ, allowing firms to demonstrate modernity without overhauling expertise-based workflows. This mimicry is driven by institutional pressures from auditors, clients, and standard-setters who reward symbolic alignment with innovation while retaining traditional audit logics in practice. The resulting dynamic—where AI tools are adapted to fit pre-existing interpretive norms rather than transform them—produces institutional mimicry pressure, a stabilizing force that curbs technological disruption by embedding new tools within older epistemic cultures. This shows how legitimacy-seeking behavior in professional services can decouple technological change from operational transformation.