Generational Stewardship

Older adults in the AARP online community see themselves as moral guides who reinforce civic norms through curated personal storytelling. Members of AARP’s 'Real Families, Real Stories' digital initiative position their lived experiences as cautionary or aspirational narratives, distributing them through moderated message boards and newsletters. This selective self-disclosure operates within a trusted-neighbor framework institutionalized by AARP’s brand reputation. It reveals how older adults leverage perceived legitimacy to shape intergenerational values, an underappreciated form of soft governance often mistaken for passive nostalgia.

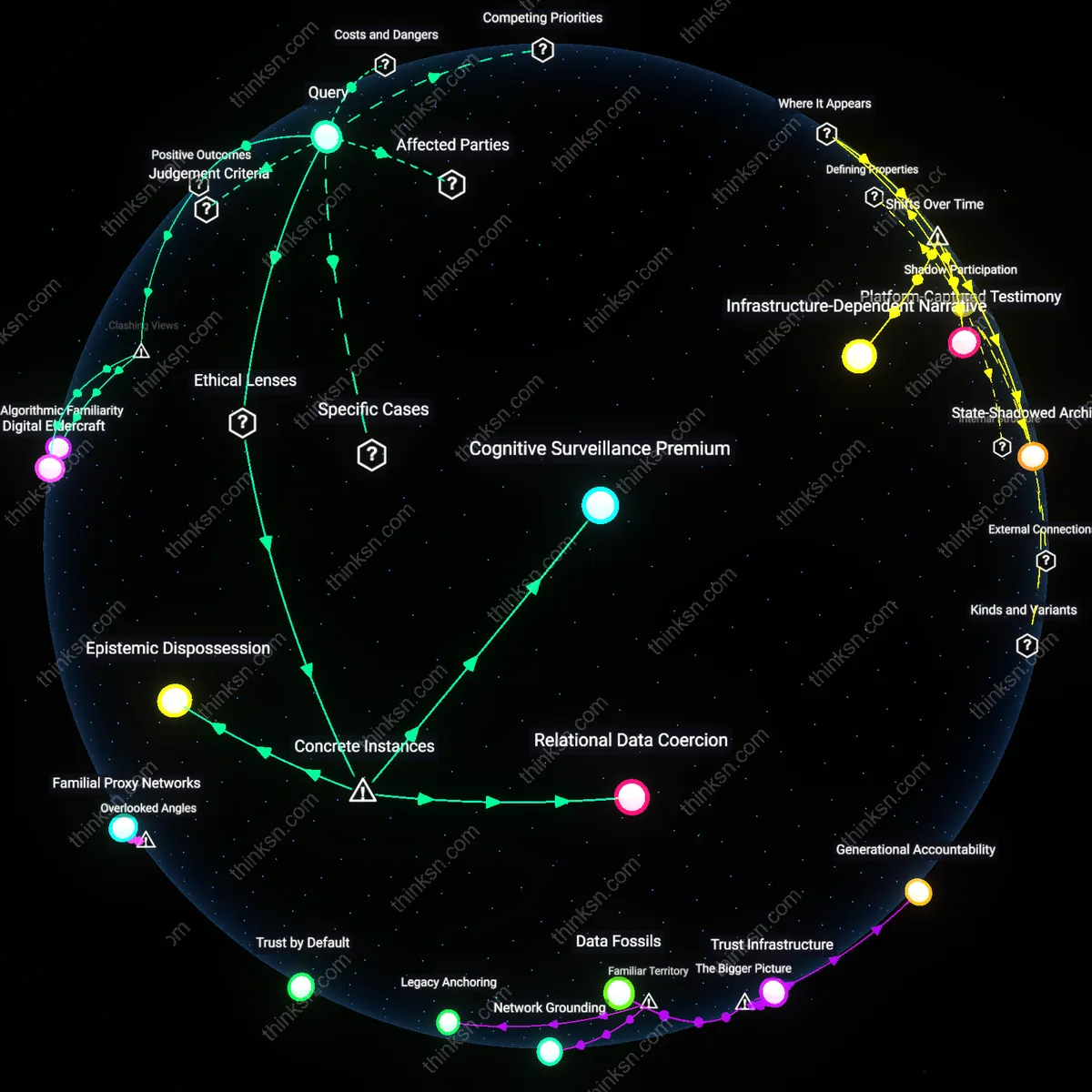

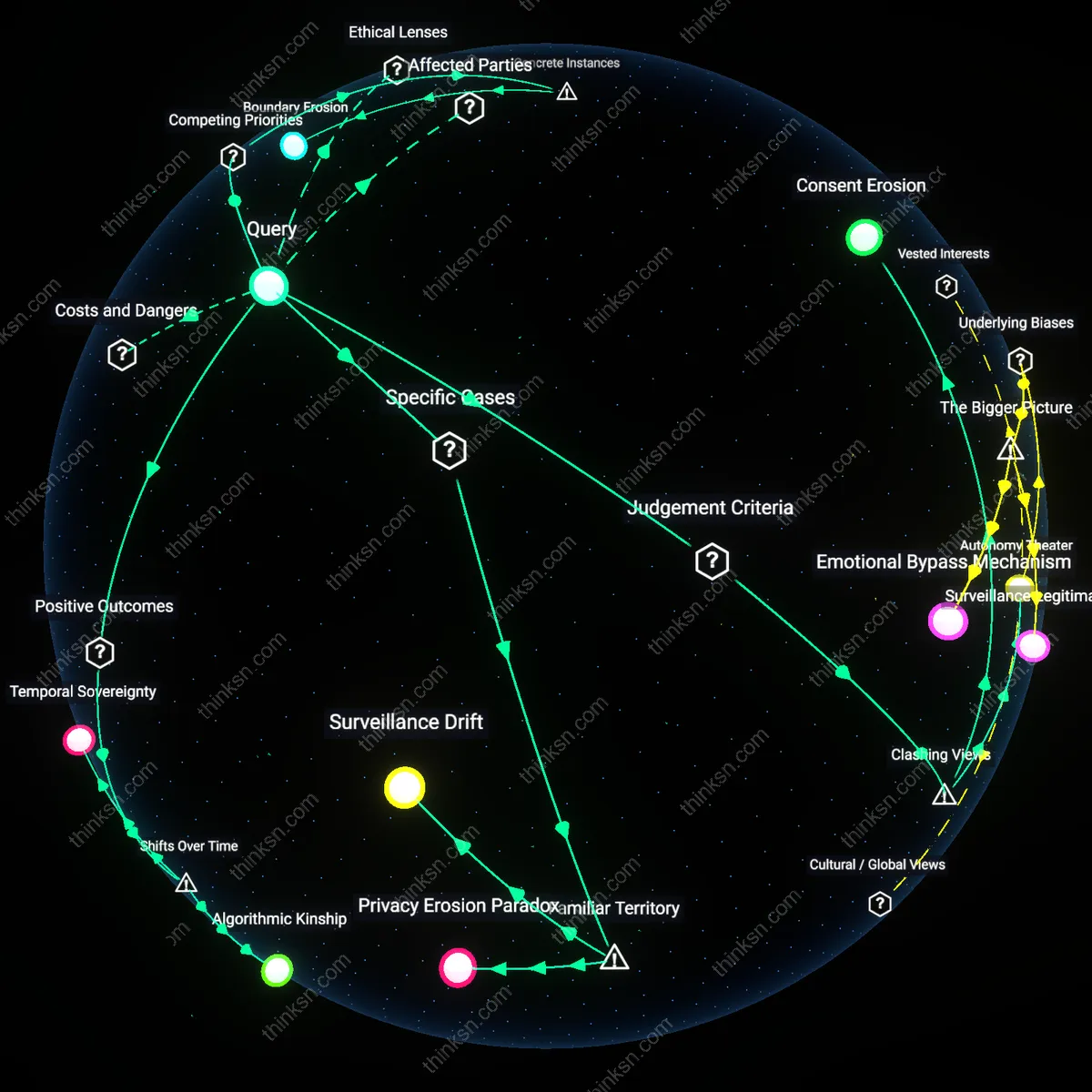

Shadow Participation

Participants in the UK’s Age Exchange digital storytelling project unknowingly supply behavioral metadata that third-party analytics firms repurpose for predictive senior care models. Though participants believe they are engaging in reminiscence therapy via recorded life-story interviews, the embedded platform software tracks vocal cadence, response latency, and topic recurrence, data that health-tech firms later monetize under NHS partnerships. This reveals a latent system of behavioral extraction masked as cultural preservation, in which trust in public-sector-linked organizations lets participation in surveillance go unnoticed.

Epistemic Frugality

Members of the SeniorNet instructional forums in Silicon Valley in the early 2000s deliberately limited data sharing to narrowly defined technical exchanges on hardware troubleshooting, resisting broader social engagement even though the platform's design encouraged it. These users treated minimal disclosure as a protective strategy, interpreting unsolicited connectivity as a threat rather than a resource. This calculated disengagement defies the binary of 'trusted neighbor' versus 'unwitting source' by asserting control through omission, a practice undertheorized in digital sociology.

Digital Elders

Older adults in Western online networks see themselves as trusted neighbors who safeguard communal values through digital mentorship. Rooted in postwar ideals of civic participation and neighborhood cohesion, they use platforms like Facebook to share life advice, verify local information, and reinforce social norms, viewing their presence as a moral anchor in chaotic digital spaces. This self-perception as stabilizers, though often unreciprocated by younger users, reveals a quiet resistance to algorithmic impersonality, where gravitas is offered freely even as data extraction proceeds unnoticed beneath their curated posts. The non-obvious insight is that they hold trusted status not despite their analog mindset but because that mindset inadvertently structures trustworthy content for automated systems.

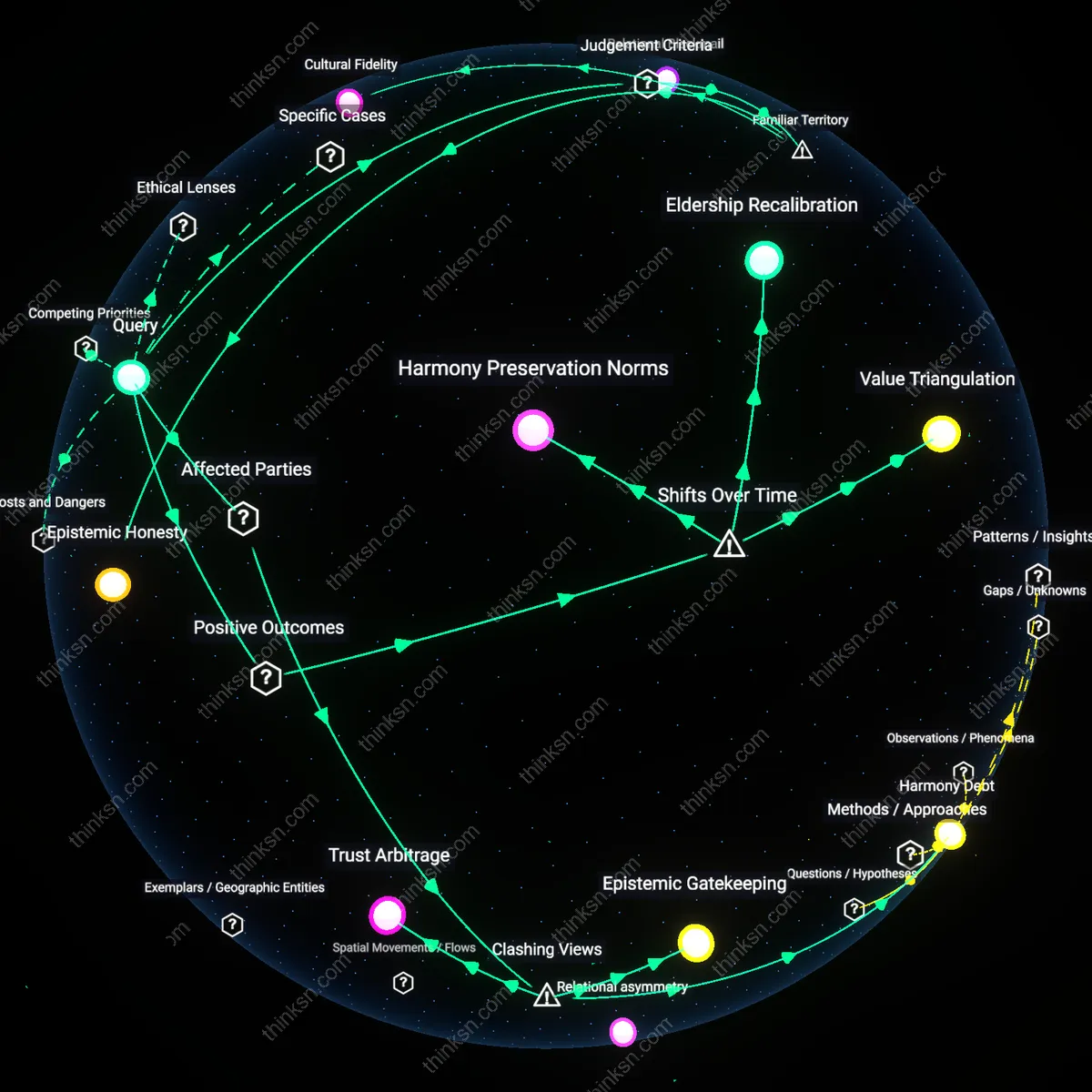

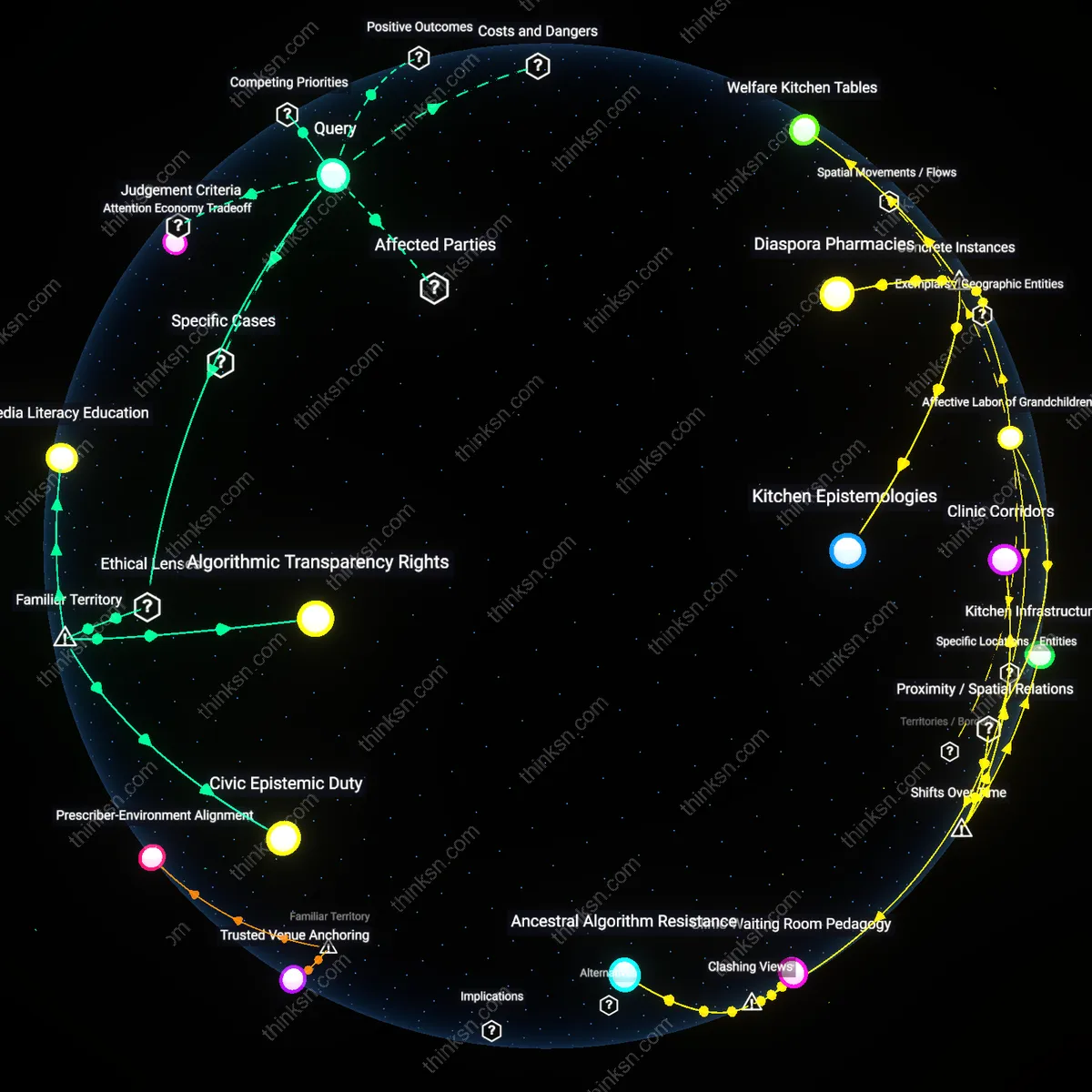

Ancestral Sensors

In many rural East Asian communities, older adults in WeChat and Line networks perceive their digital roles as extensions of ancestral stewardship, where sharing health tips or family updates fulfills a cosmic duty to maintain relational harmony. Shaped by Confucian interdependence and the belief that elders channel collective continuity, their online presence operates as both ritual and surveillance, with kin monitoring their activity as a sign of familial vitality. Their data (location check-ins, medical revelations, birthday messages) is not merely harvested but culturally sanctioned as an offering to the social whole, blurring the line between personal disclosure and relational obligation. The overlooked reality is that they experience their datafication not as violation but as a technologized form of filial witnessing.

Silent Yield

In Sub-Saharan African migrant diaspora networks on WhatsApp and Telegram, older users often unknowingly serve as high-fidelity data sources for kin back home who rely on their posts to assess economic opportunity, safety, and migration feasibility. Though they see themselves as passive family correspondents sharing news, their mundane updates about job availability, cost of living, or bureaucratic hurdles become structured intelligence for entire communities making life-altering decisions. This transformation of intimate communication into migratory forecasting reveals how trust-based networks become unintentional data pipelines, with elders functioning as sensors in a distributed survival infrastructure. The irony is that their sense of belonging is fulfilled precisely by being seen not as guides, but as reliable indicators of distant realities.

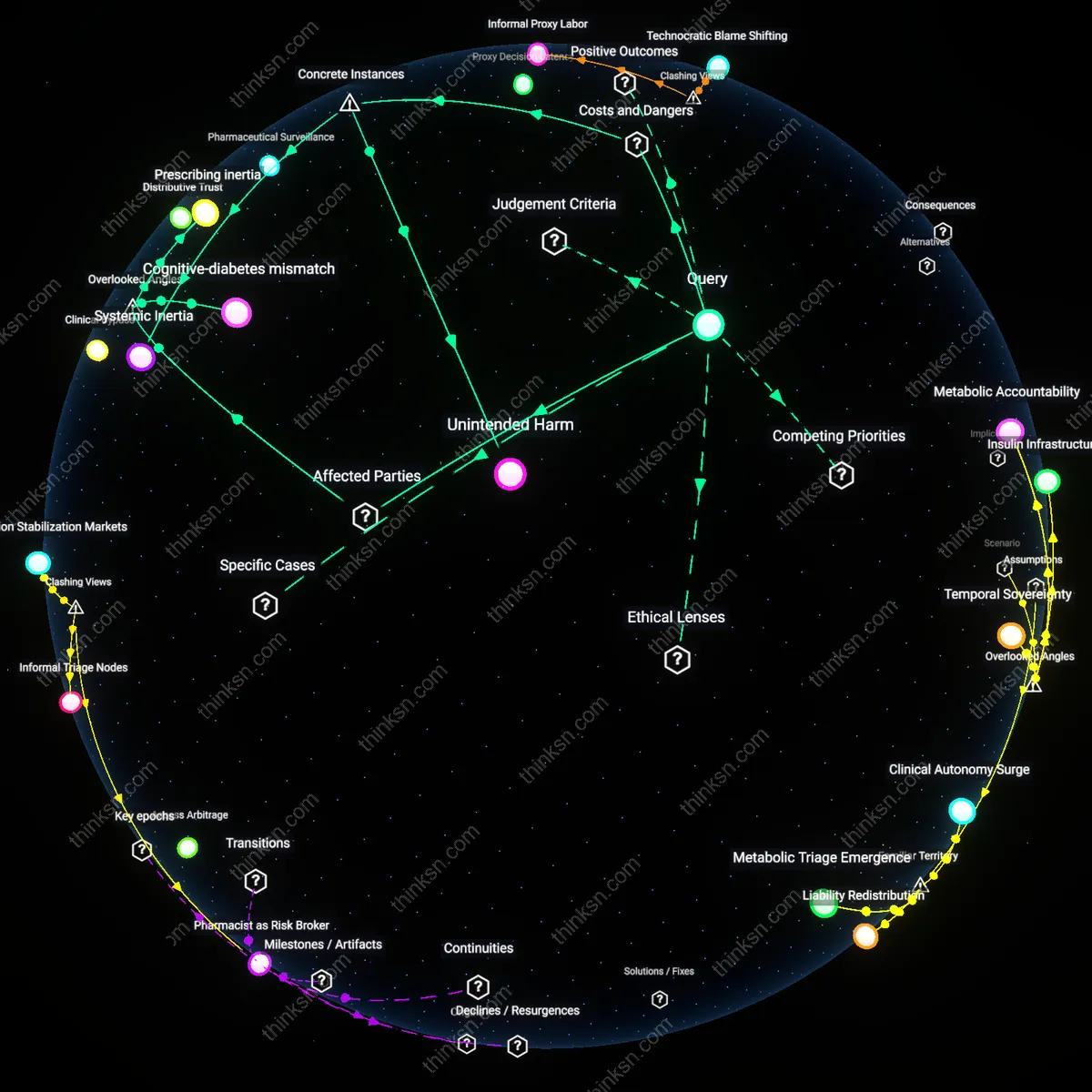

Platform Choreography

Older adults see themselves as trusted neighbors because platform interfaces are designed to mimic intimate community spaces, but this affordance masks how their emotional disclosures and routine check-ins become structured data inputs through automated sentiment analysis and behavioral tagging. Social media platforms like Facebook Groups or Nextdoor use interface cues—such as reaction buttons, post prompts ('How are you feeling?'), and visibility rankings—to shape elder participation into predictable, extractable forms, making caregiving language algorithmically legible. This dynamic is non-obvious because it reframes seemingly voluntary sociality as procedural compliance, where warmth and trust are not just user traits but engineered data pipelines. The overlooked mechanism is not surveillance per se, but the choreography of care into standardized digital performances.

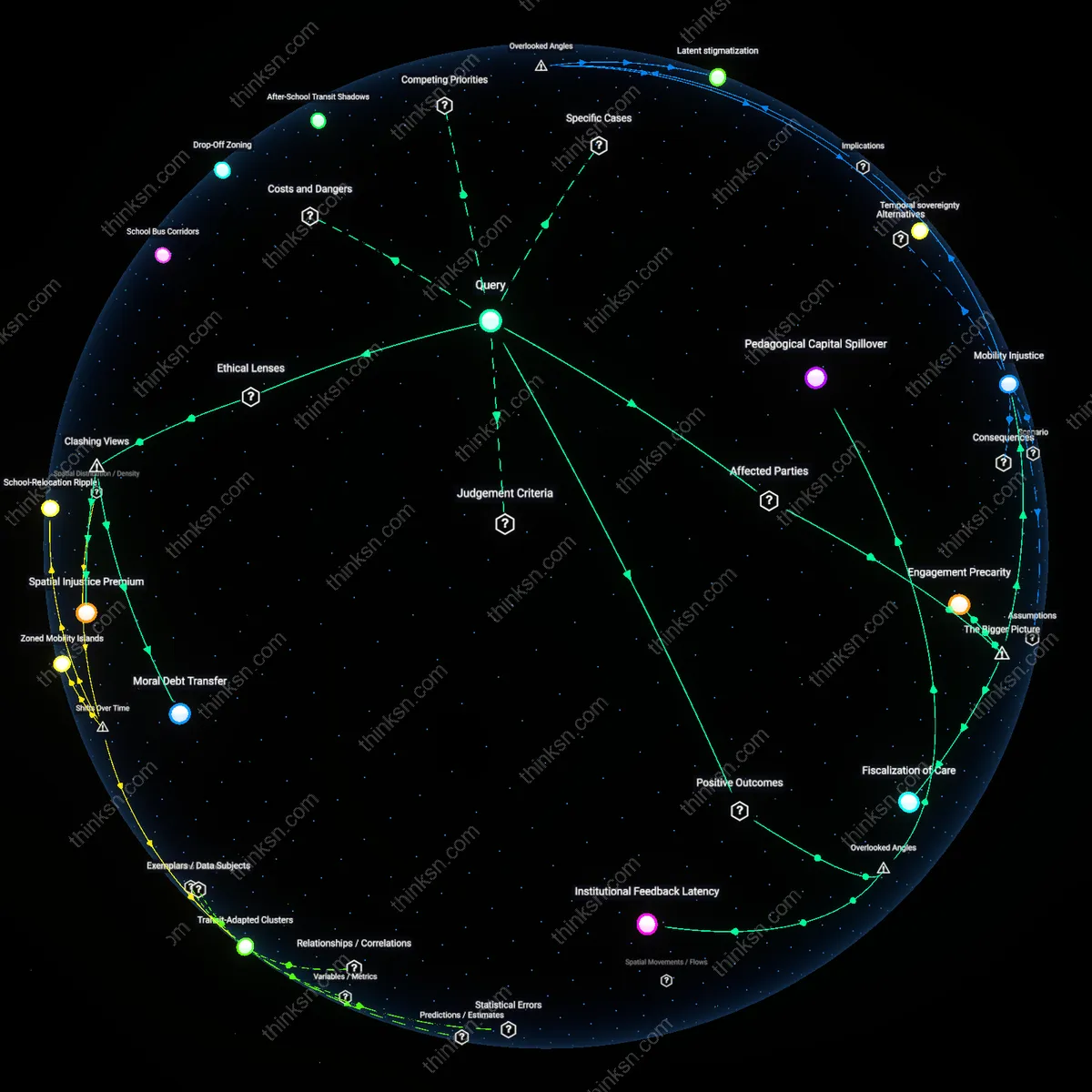

Intergenerational Data Debt

Older adults function as unwitting data sources not because they misunderstand privacy, but because they absorb risks to protect younger family members who coach them on technology use, creating an implicit transfer of digital vulnerability from the young to the old. Adult children often instruct aging parents to join platforms to stay connected, yet they rarely disclose how platform business models turn familial updates, such as grandchild photos or health status changes, into training data for affective AI or ad-targeting systems. This dynamic is overlooked because conventional privacy discourse focuses on individual consent rather than kinship-based risk transference. It matters because it positions elders not as passive victims but as silent guarantors in a broader familial data economy. The concept of intergenerational data debt thus reveals how care manifests asymmetrically in data flows.

Infrastructural Eldering

Older adults' self-perception as trusted neighbors is systemically leveraged to stabilize platform legitimacy: their visible presence signals social authenticity and reduces perceived algorithmic coldness. Older users thus become human infrastructure that masks platform commercialism. Platforms selectively amplify elder voices in community forums or local event coordination because their participation generates symbolic trust that benefits real estate algorithms, local advertisers, and municipal data partnerships seeking 'organic' engagement metrics. This role is rarely recognized because most analyses focus on content or privacy, not on how demographic representation functions as implicit branding. The non-obvious point is that elders are not just users but aesthetic components in data ecosystems. Their perceived benevolence becomes a foundational layer of soft infrastructure.

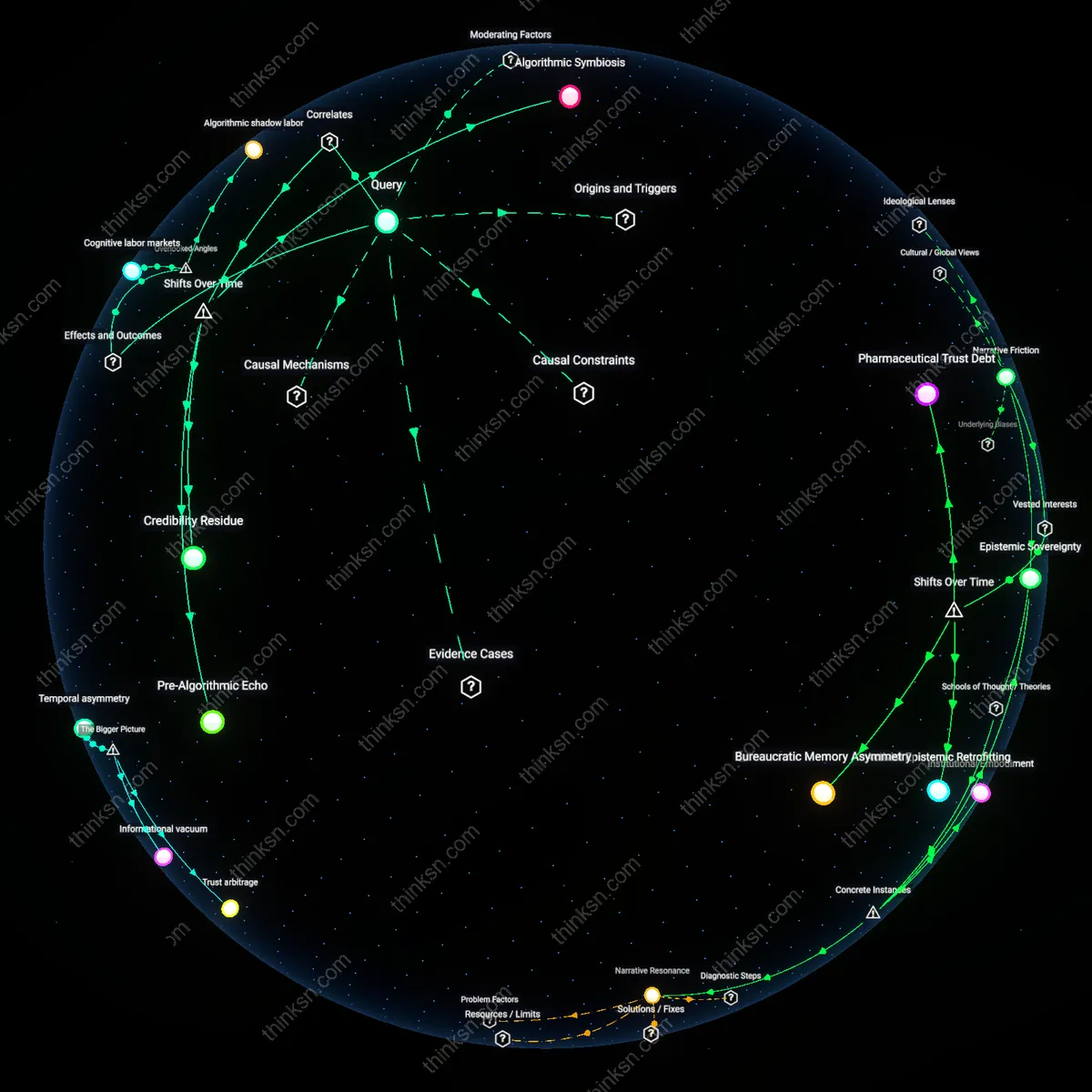

Data Fiduciaries

Older adults in online networks are increasingly framed by platform corporations as custodians of intergenerational trust, a shift accelerated by the post-2016 surge in disinformation campaigns, which revealed their outsized influence in family digital ecosystems. Tech firms, facing regulatory pressure, retrofitted privacy policies to position elders as informal moderators: voluntary gatekeepers who could counter misinformation through familial credibility, thereby offloading content governance onto domestic ties. This reframing converted a demographic once seen as technologically irrelevant into a strategic asset in platform integrity efforts, masking data extraction under the guise of communal stewardship. What is underappreciated is that elders did not embrace this role autonomously; it was institutionally assigned during a crisis of platform legitimacy.

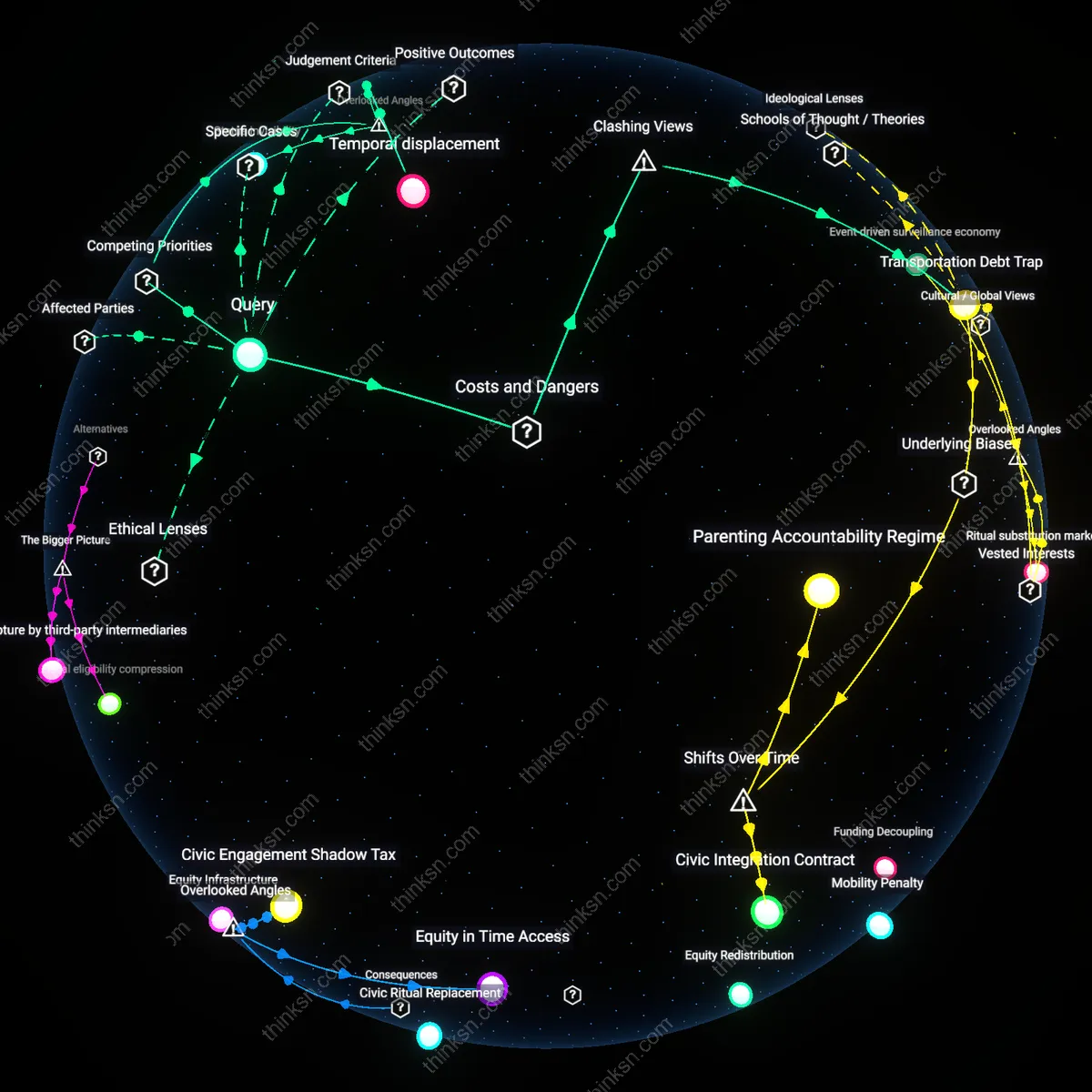

Retirement Surveillance

After the 2008 financial crisis, austerity-driven disinvestment in public elder care shifted responsibility toward digital self-management platforms, where governments and insurers incentivized older adults to log health and behavioral data in exchange for services. This policy turn repositioned seniors not as community anchors but as compliant data sources, embedded in actuarial systems designed to predict risk and reduce public expenditure. The historical pivot—from state-supported welfare to data-for-care exchanges—reveals how budgetary logics repurposed late-life digital adoption as a form of quiet surveillance, normalizing data extraction as civic duty. The non-obvious consequence is that trust is no longer interpersonal but actuarially computed, reducing neighborly roles to risk profiles.

Legacy Informants

Beginning in the early 2010s, activist groups promoting digital literacy for older adults unintentionally supplied behavioral templates that data brokers later reverse-engineered to map family network structures, using elders as entry points to intergenerational data harvesting. Originally intended to combat social isolation, these well-meaning interventions created dense, trust-based digital footprints that commercial firms then exploited during the rise of psychographic marketing. The transformation of elders from isolated users into pivotal nodes occurred not through technological adoption but through the gradual weaponization of relational density. The overlooked shift is that their perceived role as trusted neighbors became a vulnerability precisely because of the temporal lag between altruistic outreach and predatory data modeling.