Are Fitness Trackers Selling Your Data to Insurers?

Analysis reveals 5 key thematic connections.

Key Findings

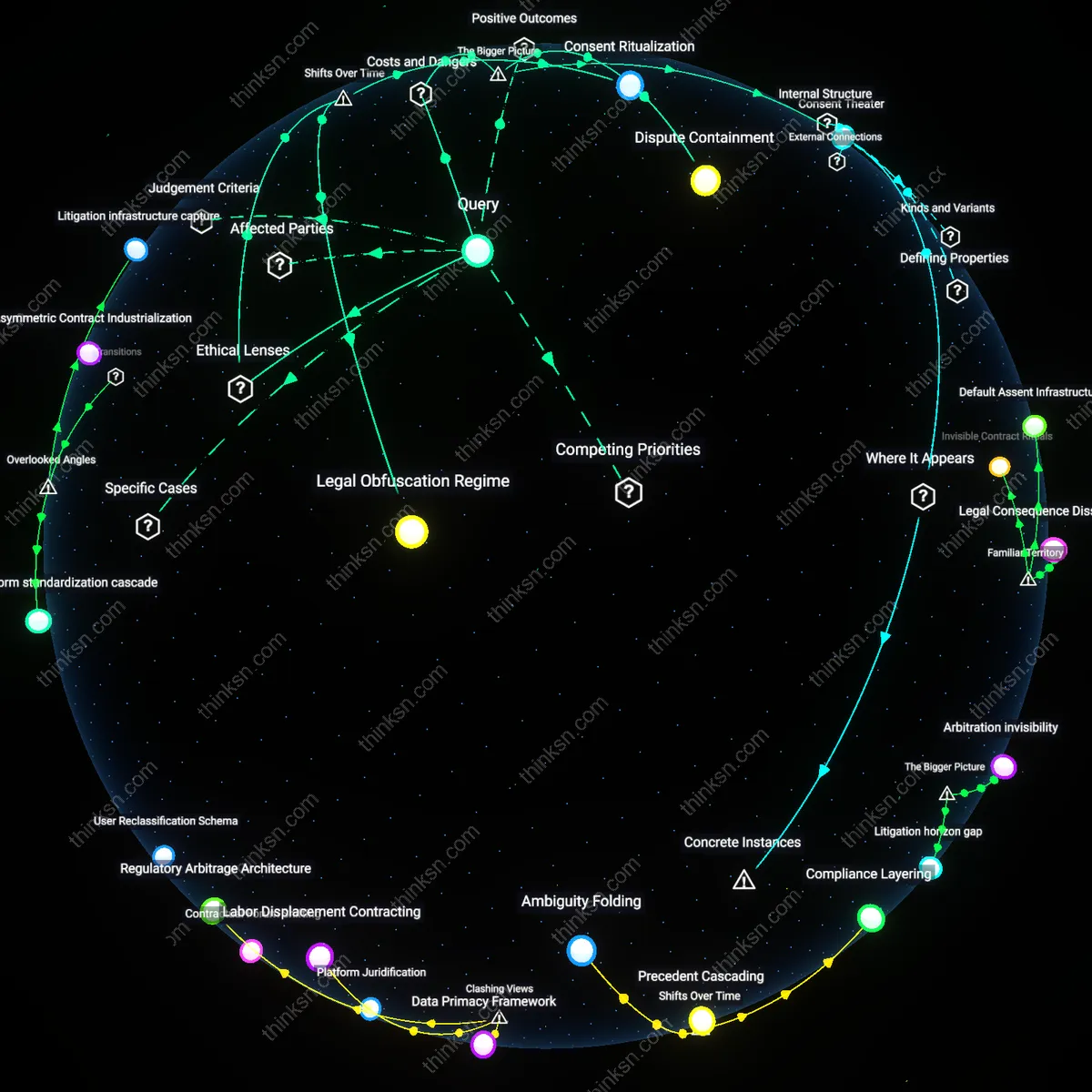

Regulatory Mimicry

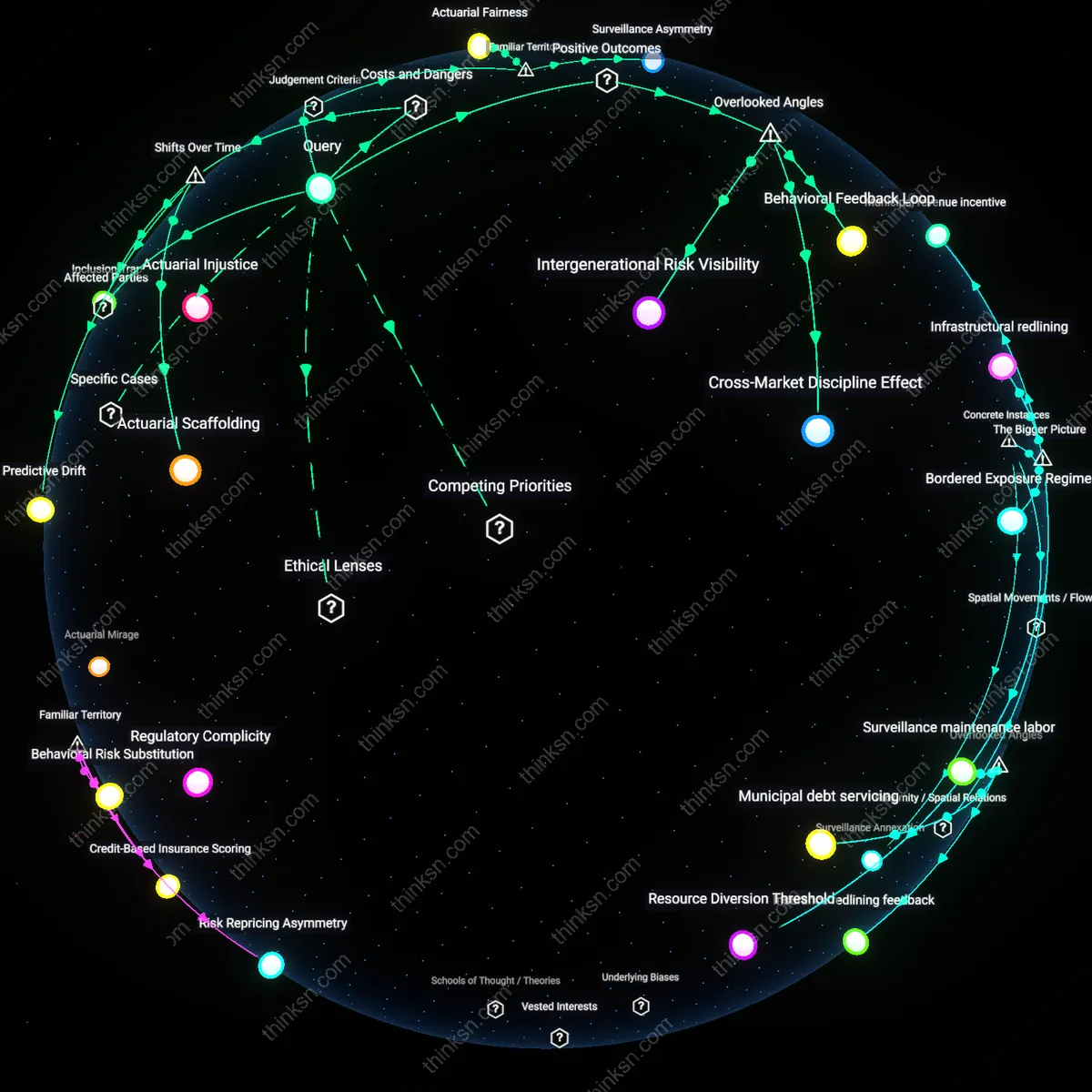

The consent illusion in fitness tracking persists not due to deception but because regulatory compliance performs transparency without enabling user control. Companies like Fitbit and Garmin adopt HIPAA-like consent interfaces—pop-ups, toggles, layered notices—not to inform, but to mimic healthcare accountability structures while operating outside them. This theatrical adherence allows wearable firms to signal ethical data handling while legally permitting data sharing with partners such as Vitality Group and AIA, who then funnel behavioral scores into insurance underwriting. The dissonance is that users interpret consent as a shield, when it functions as a ritualized disclaimer that legitimizes downstream data flows in jurisdictions where no enforceable ownership right exists.

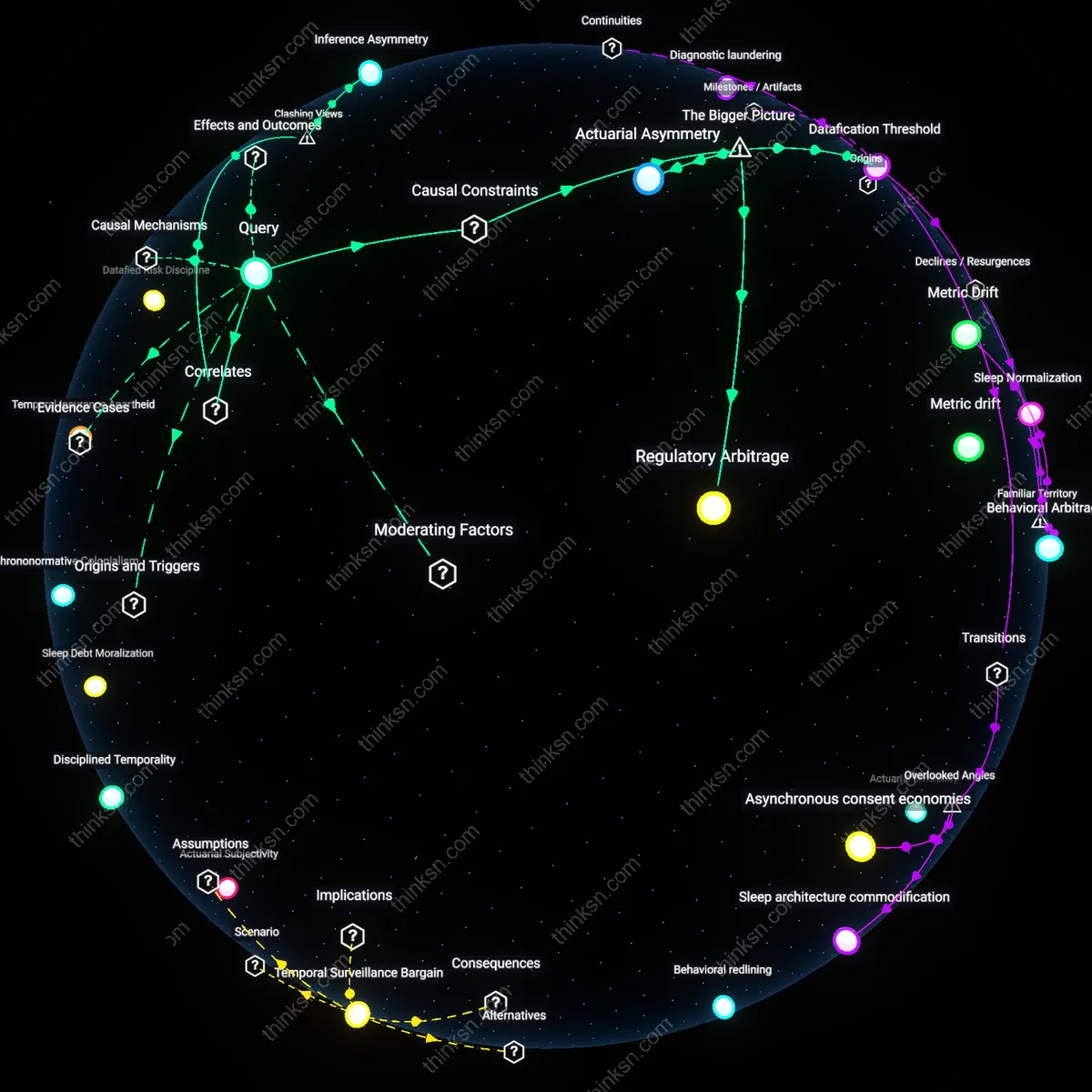

Inference Asymmetry

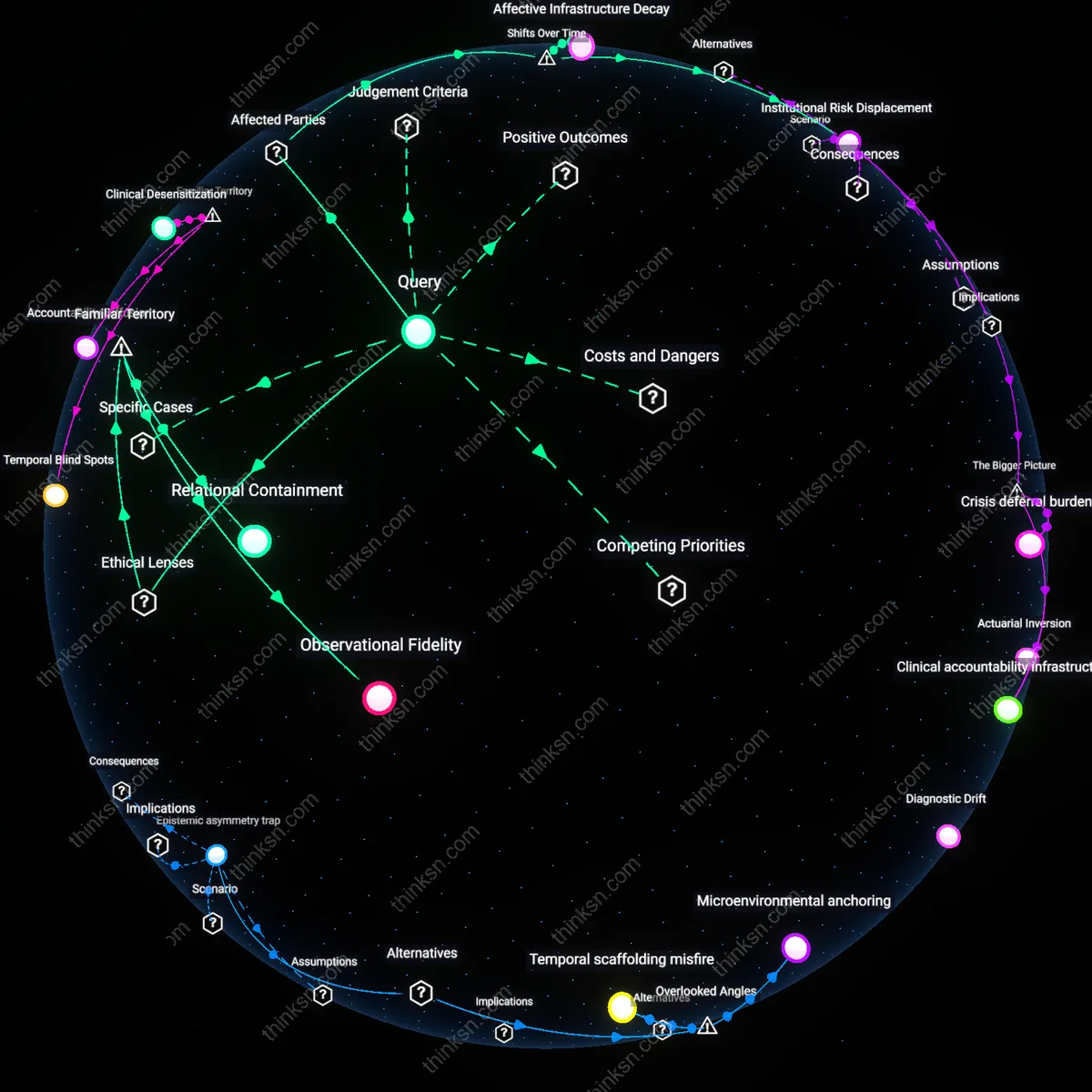

Users misunderstand data risks not because they ignore consent terms, but because insurers derive value from relational inferences that cannot be disclosed in any meaningful consent form. For example, a decline in nighttime movement paired with weekend social inactivity may signal depression, a condition insurers cannot directly inquire about under anti-discrimination laws—but can indirectly infer from 'lifestyle consistency' scores sold by companies like Aetna’s subsidiary Coherent Data Insights. The sale does not hinge on raw data or user permission, but on algorithmically generated behavioral typologies that evade categorization as health data. The obscured reality is that consent governs data transfer, not interpretive authority, letting third parties legally sidestep disclosure requirements while producing actionable health surrogates.

Datafication Threshold

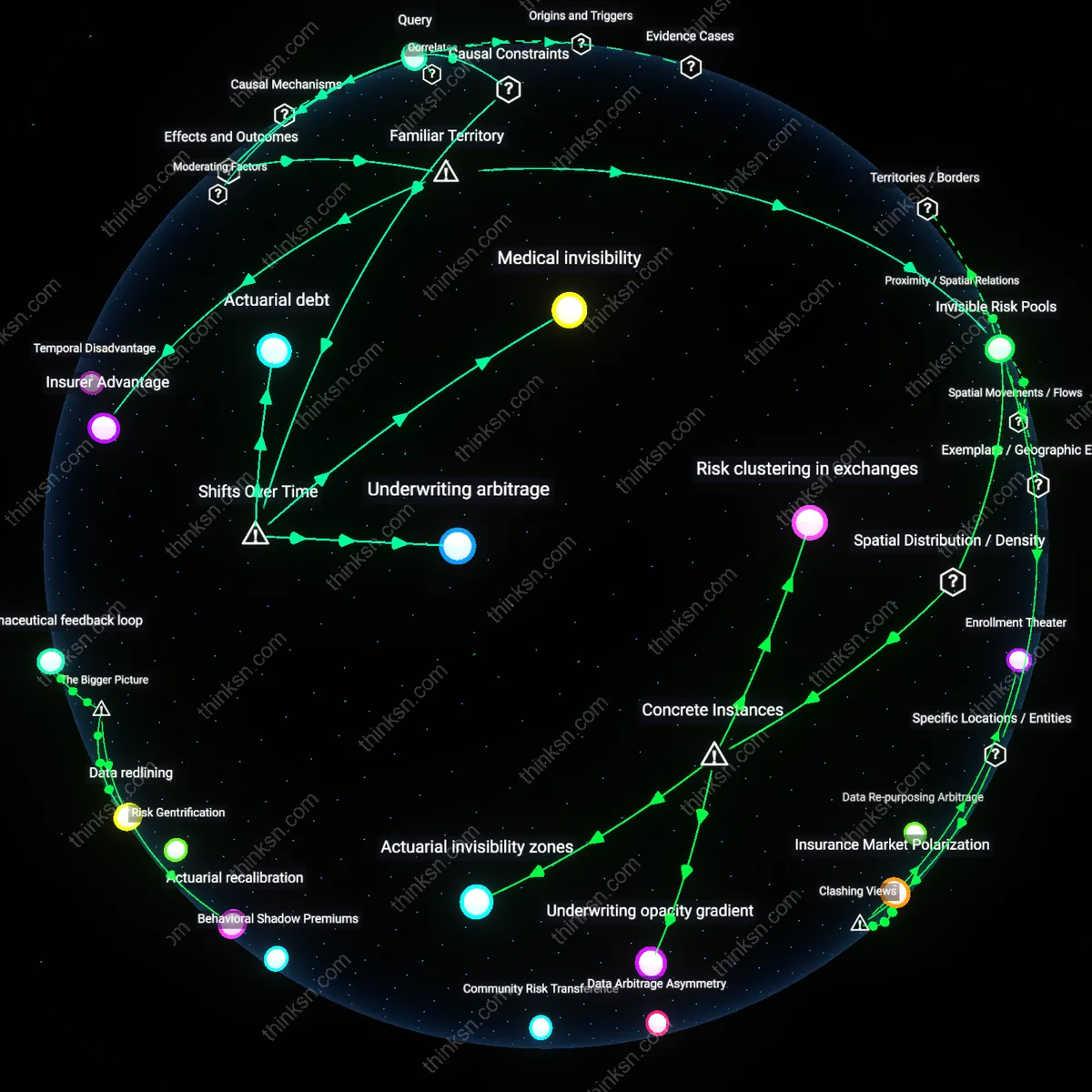

The 'consent fiction' in fitness trackers enables the sale of relational data to insurers only because users must first cross a datafication threshold, where raw movement and behavioral signals are transformed into actuarially relevant proxies through proprietary algorithms. This conversion is a mandatory bottleneck controlled by tracker manufacturers and data brokers, who define what constitutes 'inactivity' or 'irregular sleep' in ways that align with insurance risk models. Without this translation of lived experience into quantified, standardized behavioral markers, insurers cannot operationally use the data—making the technical and epistemological shift, not consent, the true enabler of data monetization. The non-obvious significance is that regulatory focus on consent rituals distracts from the opaque calibration of these metrics, which occurs outside public scrutiny.

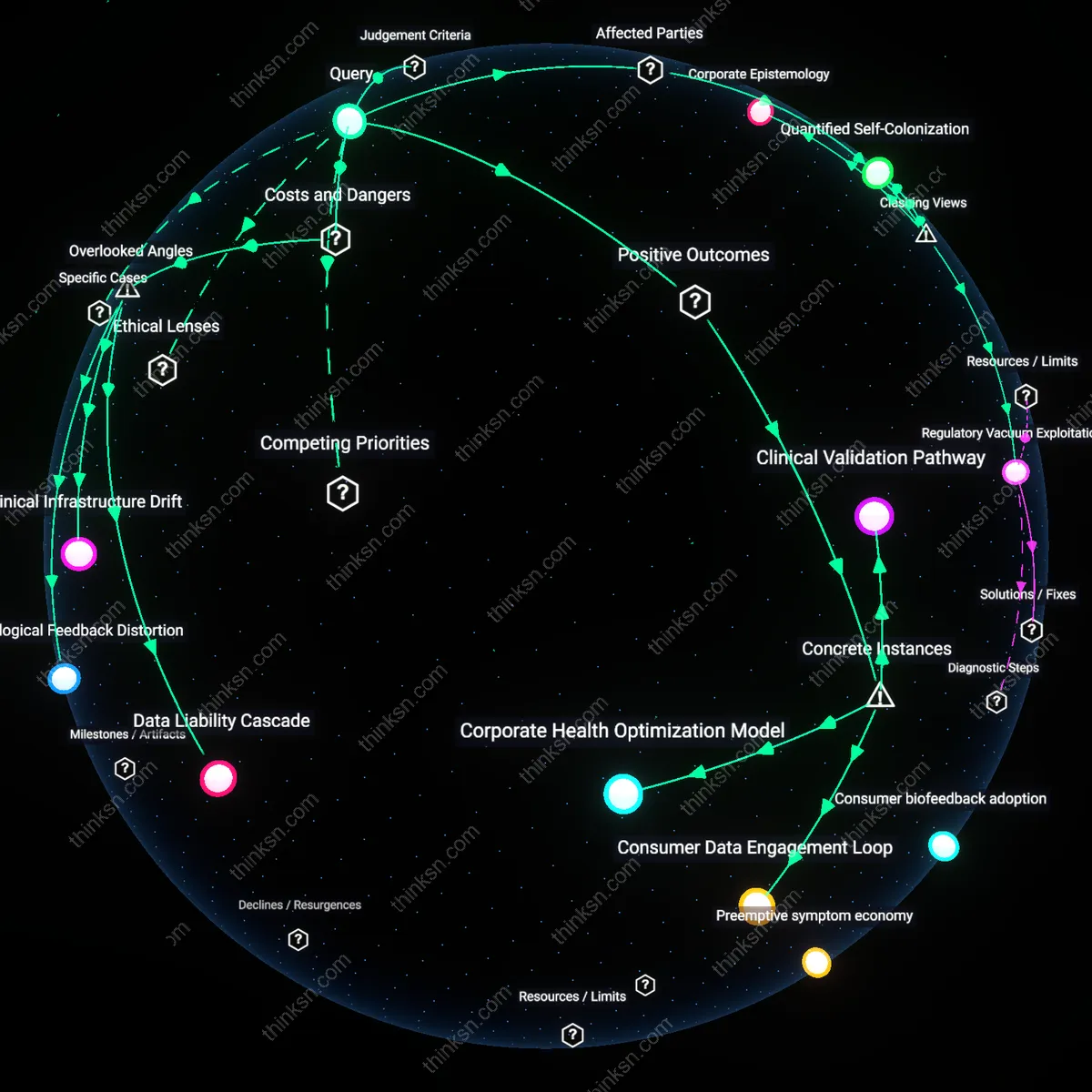

Regulatory Arbitrage

The 'consent fiction' persists because the sale of fitness tracker data to insurers is structurally enabled by jurisdictional misalignment between consumer protection laws and insurance regulation, a constraint without which data brokers could not legally transfer risk profiles. For example, while HIPAA restricts health data in clinical settings, fitness data falls under the weaker FTC-enforced 'consumer information' framework, allowing firms like Fitbit or Whoop to bypass medical privacy safeguards. This regulatory gap acts as a mandatory prerequisite for the causal chain from data collection to underwriting—what matters is not user ignorance but the deliberate institutional fragmentation that permits parallel data economies. The underappreciated reality is that reforming consent forms will not stop data exploitation if the underlying legal segmentation remains intact.

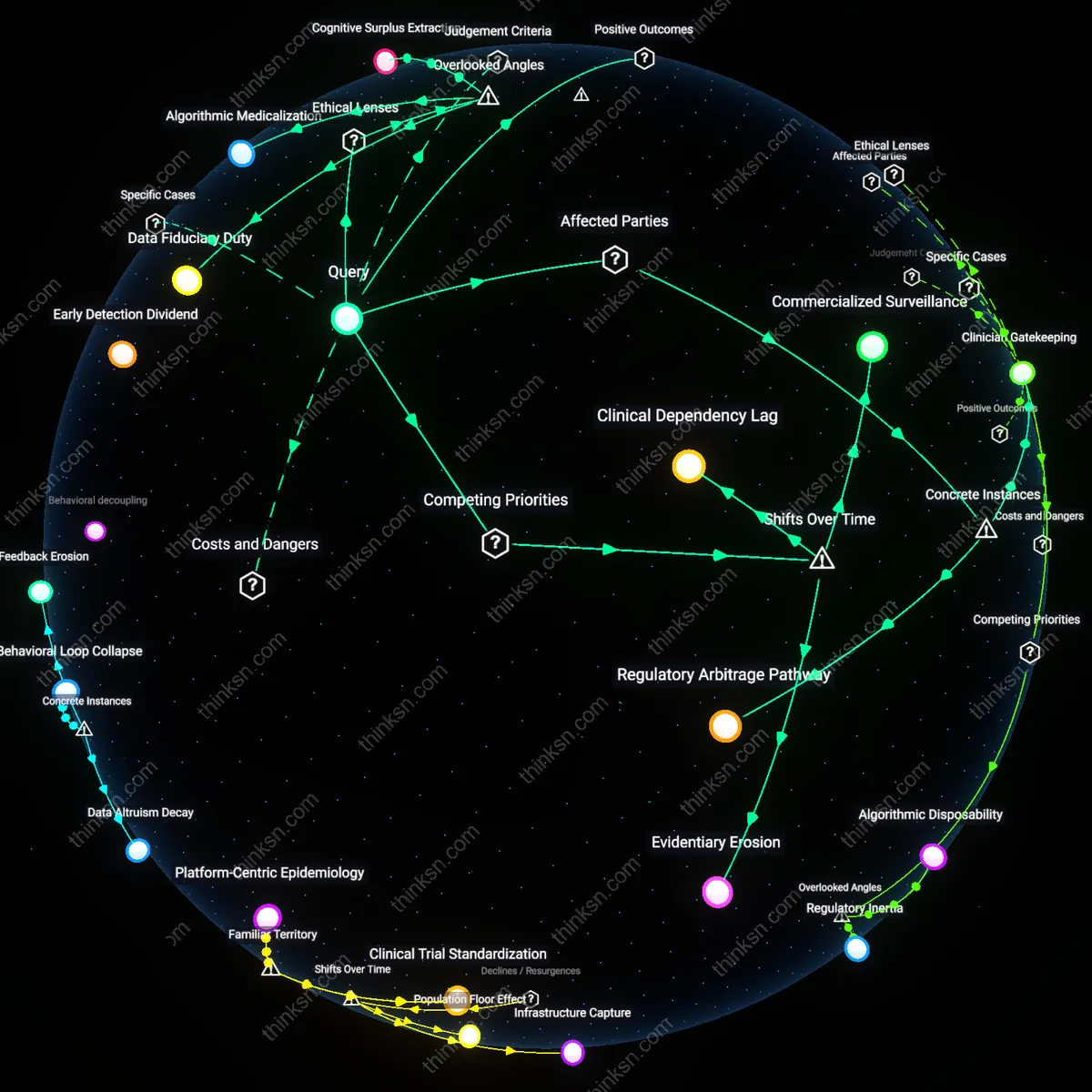

Actuarial Asymmetry

The 'consent fiction' obscures how relational data from fitness trackers feeds into insurance pricing only because insurers rely on asymmetric actuarial models that treat population-level correlations as individual risk certainties, a precondition that converts ambiguous behavioral patterns into policy decisions. These models, developed by firms like Optum or LexisNexis Risk Solutions, assume that nighttime movements detected by wearables indicate chronic conditions—even when no clinical diagnosis exists—making the statistical inference, not the data itself, the operational bottleneck. The real concealment lies in how users are misled into believing their data is interpreted contextually, when in fact it is flattened into actuarial categories divorced from personal narrative. This reveals that the systemic pressure to reduce uncertainty favors algorithmic over medical logic, normalizing preemptive risk categorization.