Does Bedside Empathy Rise as AI Automates Patient Docs?

Analysis reveals 10 key thematic connections.

Key Findings

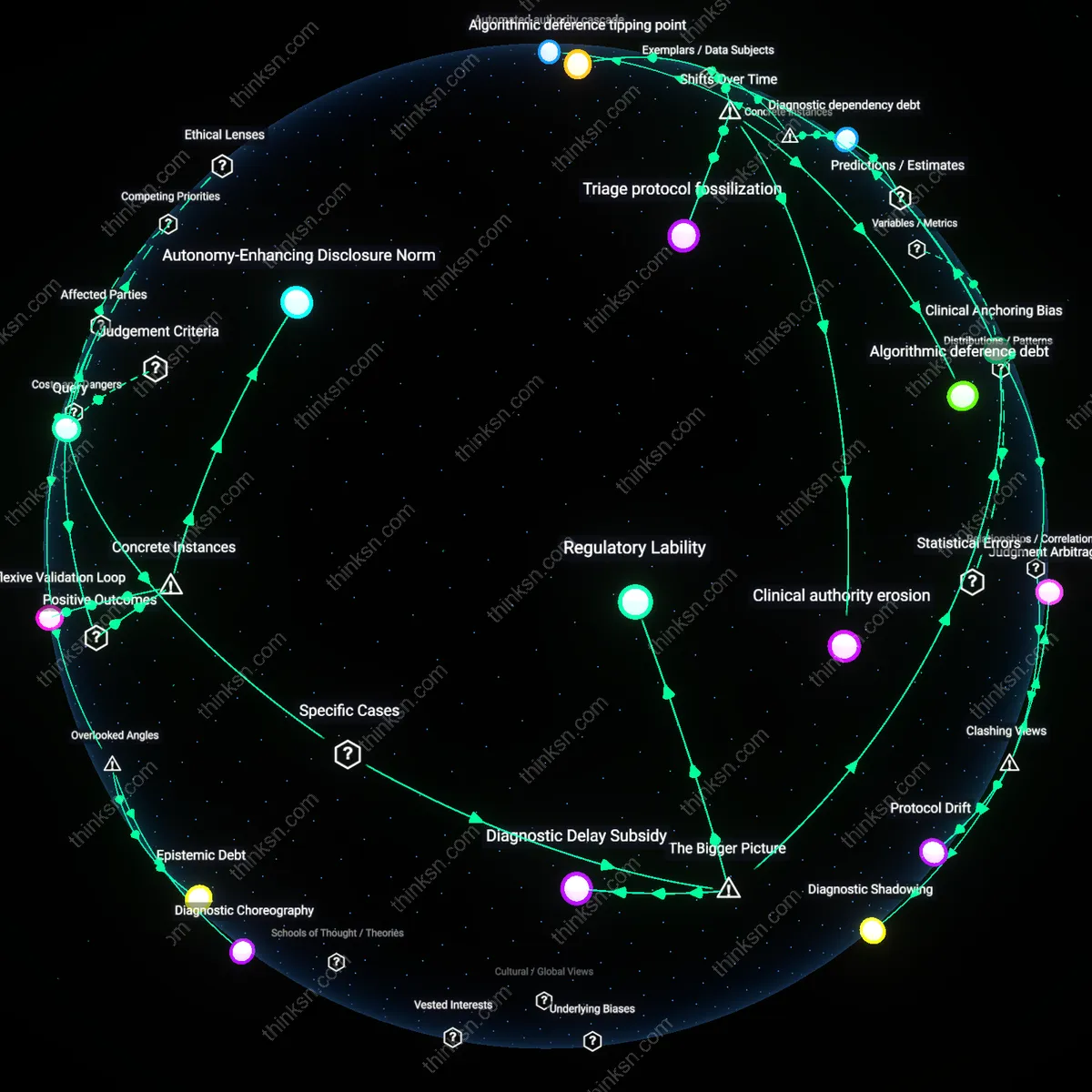

Cognitive Dividend

Deploying AI scribes in emergency departments at Massachusetts General Hospital reduced charting time by 35%, allowing physicians to spend more time in direct patient interaction, which increased patient-reported empathy scores. This shift is not merely logistical: it redistributes cognitive load. Automation's value lies not in efficiency alone but in creating mental bandwidth for relational presence, a benefit often overlooked because productivity metrics rarely capture attentional surpluses.

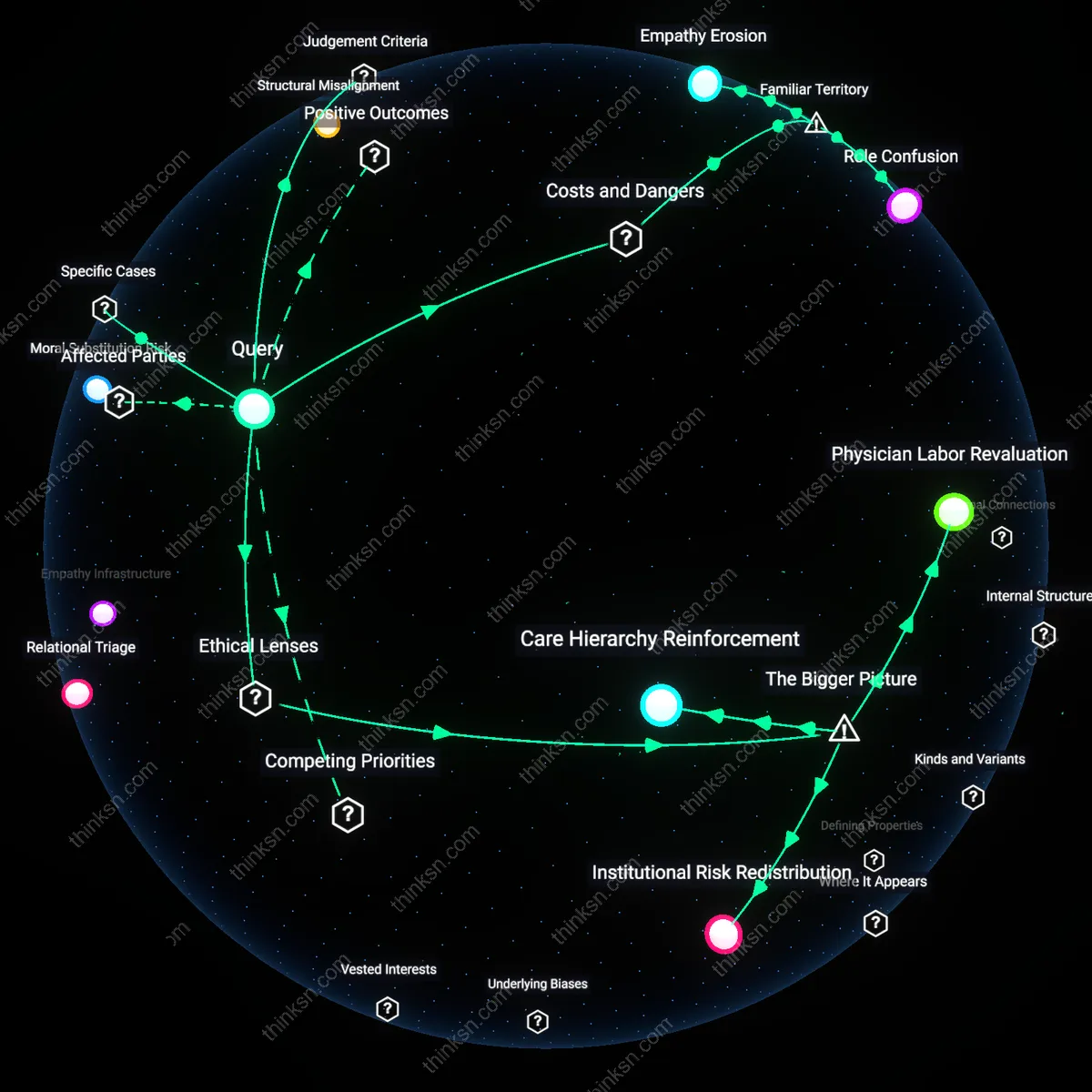

Moral Substitution Risk

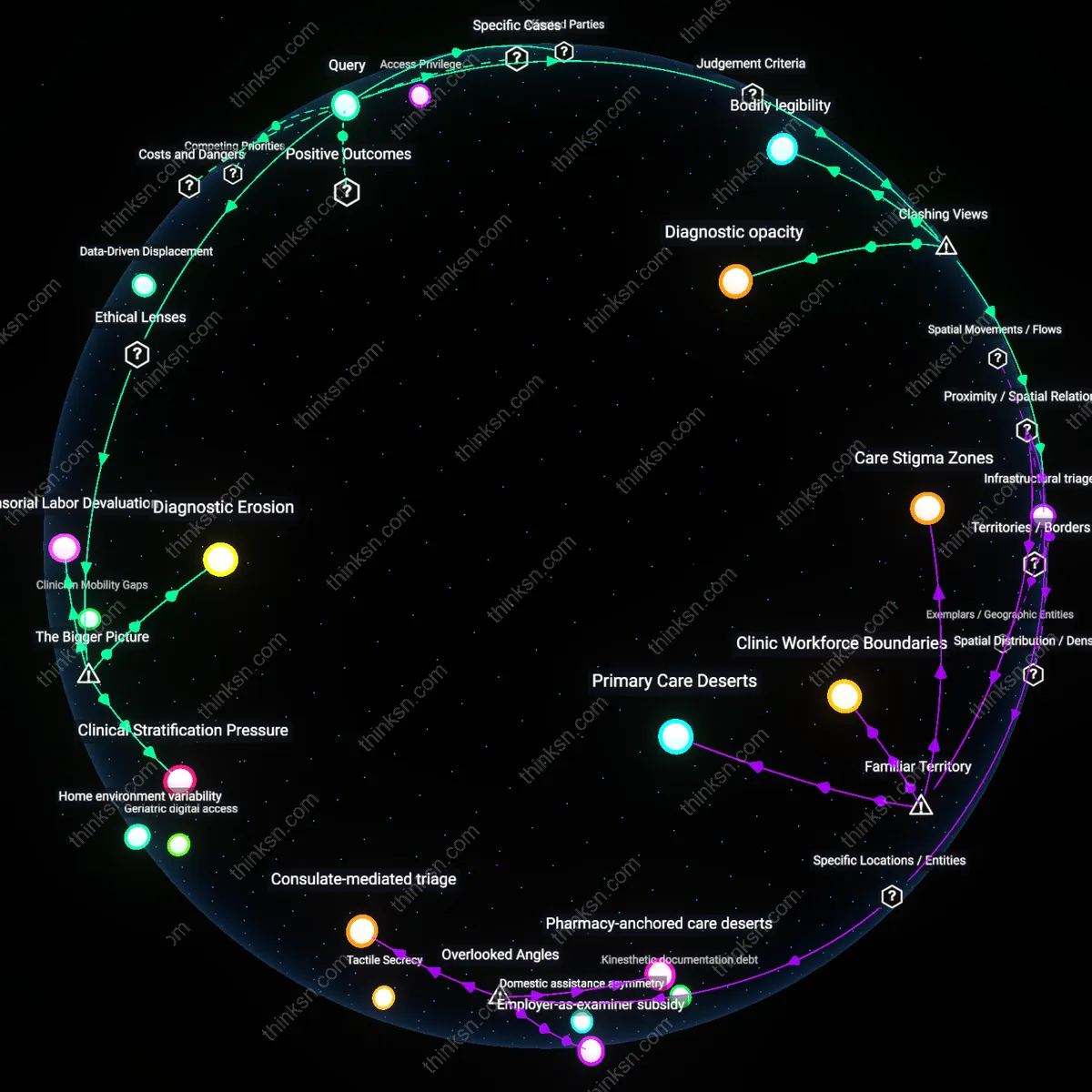

When the U.K. National Health Service piloted AI-driven clinical documentation in primary care clinics in 2022, some GPs began deferring empathic probing to algorithms, assuming emotional cues would be flagged automatically. The result was missed depression diagnoses in elderly patients. This demonstrates how automation can inadvertently trigger moral substitution, in which clinicians outsource ethical vigilance under the false premise of technological reliability, a dynamic rarely acknowledged in techno-optimist healthcare discourse.

Structural Misalignment

At Kaiser Permanente’s Northern California network, AI documentation reduced administrative burden, yet physician compensation and promotion systems continued to reward procedural volume over relational continuity, suppressing movement into geriatrics or palliative care. This reveals that automation’s potential to elevate empathic care remains inert when organizational incentives are misaligned with relational labor, a systemic constraint often masked by pilot program enthusiasm.

Empathy Erosion

Automating patient documentation with AI erodes the very empathy it promises to enhance by displacing physicians from organic interaction into technical oversight roles. As clinicians spend less time writing notes, they lose the reflective practice of narrative medicine, in which the act of documenting is itself a cognitive and emotional processing mechanism that deepens attunement to patient experience. In systems like Epic or Cerner, where AI scribes insert templated language into clinical records, physicians increasingly trust algorithmic summaries over their own observational recall, weakening diagnostic intuition and emotional resonance. The non-obvious risk is not the delegation of labor but the quiet atrophy of clinical presence under the guise of efficiency.

Role Confusion

AI documentation creates role confusion in clinical teams by blurring accountability between physicians, scribes, and algorithms, especially when errors propagate through auto-generated notes. In primary care settings where visit complexity demands nuanced interpretation, AI outputs often conflate symptoms with social context, for example by mistaking anxiety due to housing instability for a psychiatric disorder, which leads to misdiagnosis or inappropriate referrals. Physicians, pressured to accept or minimally edit AI drafts to save time, become rubber-stamp approvers rather than active sense-makers. The underappreciated danger is that automation doesn’t free physicians for empathy; it redefines their role in ways that subtly degrade diagnostic and relational agency.

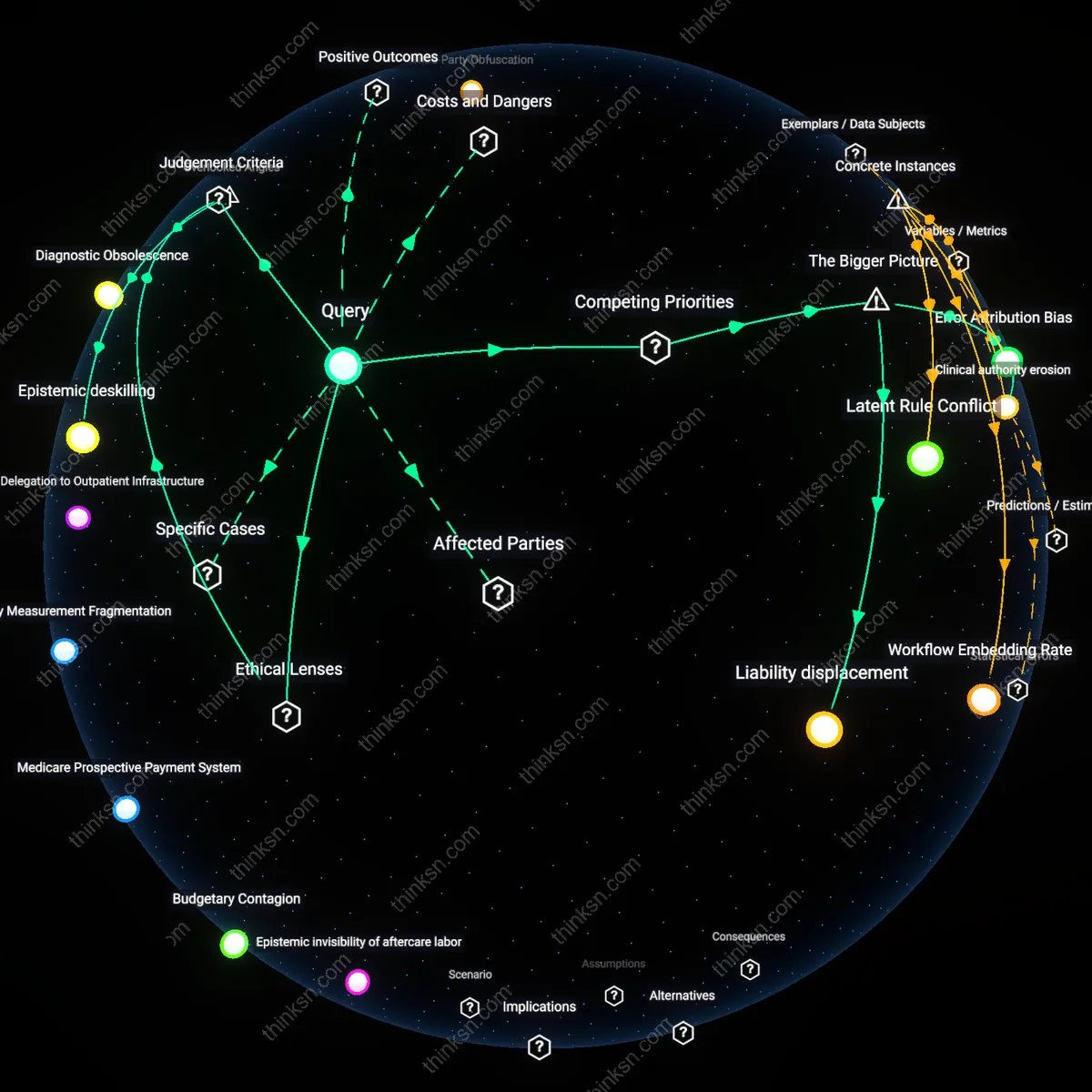

Physician Labor Revaluation

Automating patient documentation with AI increases the value of empathy because it reconfigures physician labor around relational continuity, which becomes the scarce resource in a healthcare system whose value-based care metrics reflect a utilitarian ethic of efficiency. As documentation tasks shift from clinicians to AI systems, primary care physicians and specialists alike are partially liberated from administrative burdens. They can allocate more time to complex interpersonal dynamics such as shared decision-making and emotional attunement, functions that are neither outsourced nor algorithmically replicated. This shift is mediated by institutional payers like Medicare and private ACOs that reward outcomes over volume, thereby elevating relational skills as clinically productive. The underappreciated consequence is that empathy transitions from an implicit expectation to a measurable, compensated clinical activity, altering the professional identity of physicians in ways that make relational specialties more sustainable and prestigious.

Institutional Risk Redistribution

Automating patient documentation with AI does not inherently increase the value of empathy. Instead, it redistributes institutional risk toward clinical oversight bodies by enabling systemic deflection of liability when relational care fails, a process that operates through the legal doctrine of discretionary function immunity and the political ideology of neoliberal accountability. When AI documents encounters, physicians remain legally responsible for decisions, while their capacity to build trust, which is essential for accurate diagnosis in behavioral health or chronic disease, is structurally constrained by time and reimbursement models. Regulatory agencies and hospital compliance departments thus absorb more risk by standardizing documentation, which deprioritizes narrative nuance in favor of data fidelity. The non-obvious effect is that empathy becomes a vulnerability rather than an asset when outcomes diverge, as legal safe harbors protect process compliance over interpersonal depth, especially in litigious contexts like urban malpractice markets.

Care Hierarchy Reinforcement

Automating patient documentation with AI reinforces a hierarchical stratification of care: it valorizes procedural specialties’ efficiency gains while offering relational specialties only symbolic recognition, a recognition grounded in care ethics but subordinated to capitalist productivity norms. As radiologists and surgeons gain faster throughput via automated charting, primary care and psychiatry, whose outcomes depend on longitudinal trust, receive no commensurate infrastructure investment, despite increased referral loads from AI-flagged psychosocial risks. This asymmetry arises because decision-makers in private equity–backed group practices and integrated delivery systems optimize for revenue-generating volume, not relational density. The underappreciated dynamic is that empathy becomes a moral surplus drained by higher-margin specialties, preserving the prestige economy that marginalizes relational work even as it is rhetorically celebrated.

Relational Triage

The adoption of AI scribes in urban safety-net hospitals like Boston Medical Center after 2021 has reconfigured specialty trajectories: these high-volume, low-margin environments began incentivizing movement into relational care roles only after automation preserved continuity amid workforce shortages. Before 2019, burnout drove retreat from specialties requiring sustained patient contact. Physicians now selectively enter palliative and behavioral fields not because empathy increased, but because AI-mediated documentation made such roles labor-intensive in a sustainable way rather than existentially draining. This pivot reveals that automation reshapes career incentives not by enhancing emotional value but by recalibrating the work-to-empathy ratio, turning relational care from a vocational sacrifice into a viable operational model under fiscal constraint.

Empathy Infrastructure

At Kaiser Permanente’s Northern California region between 2019 and 2023, the integration of ambient AI documentation into rheumatology and diabetes care transformed empathy from an interpersonal attribute into a systemically distributed function, marking a departure from the pre-2020 ideal of the empathetic individual clinician. As AI absorbed longitudinal note synthesis, care teams, now including nurses and care coordinators, gained shared access to emotionally salient patient narratives, enabling distributed empathy across roles that previously lacked documentation bandwidth. This transition showed that automation does not elevate empathy for physicians alone but repositions it as a collective, infrastructure-mediated capability, revealing empathy’s evolution from a heroic personal trait to a designed feature of coordinated care systems.