Is a Unified Digital Portfolio Worth the Risk of Algorithmic Bias?

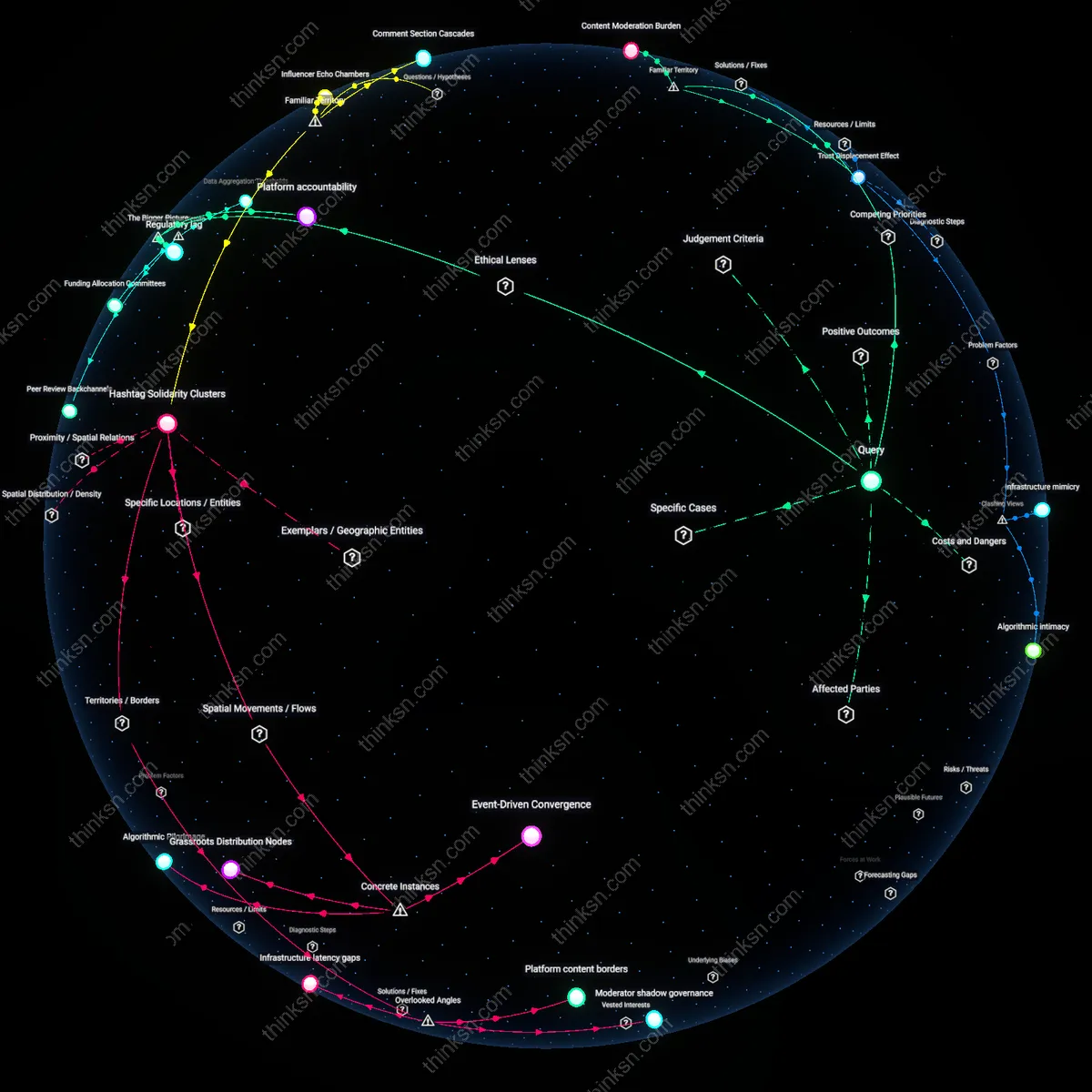

Analysis reveals 9 key thematic connections.

Key Findings

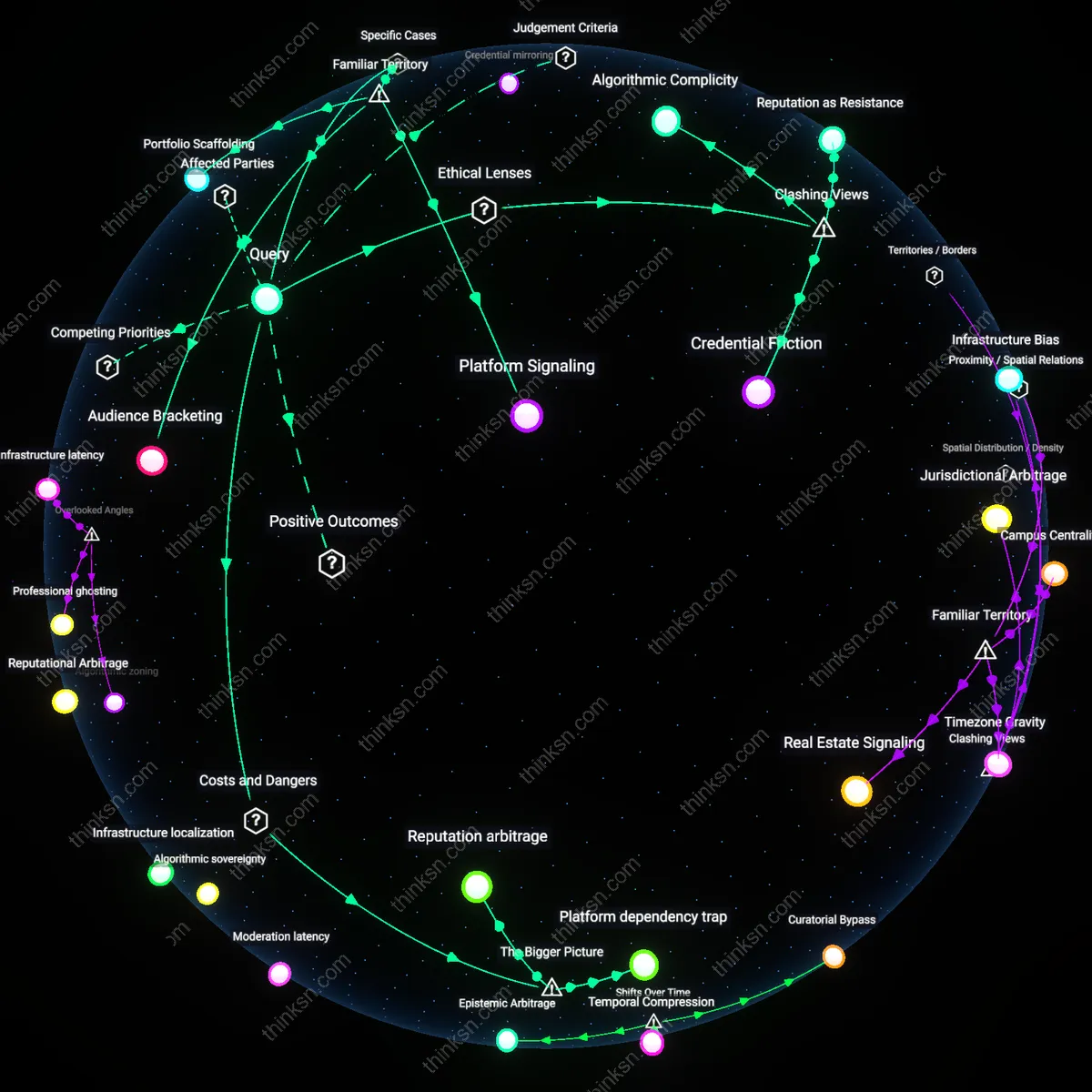

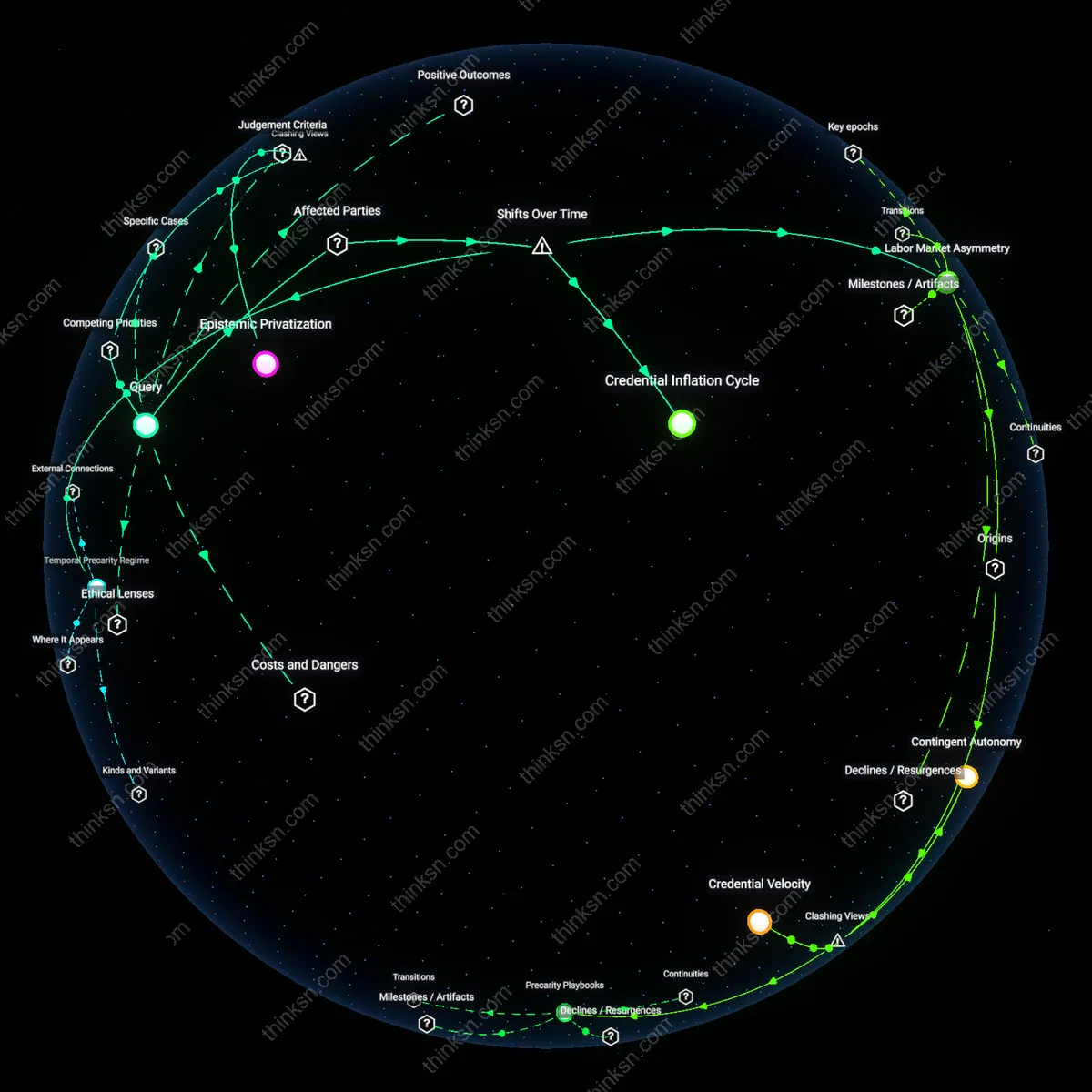

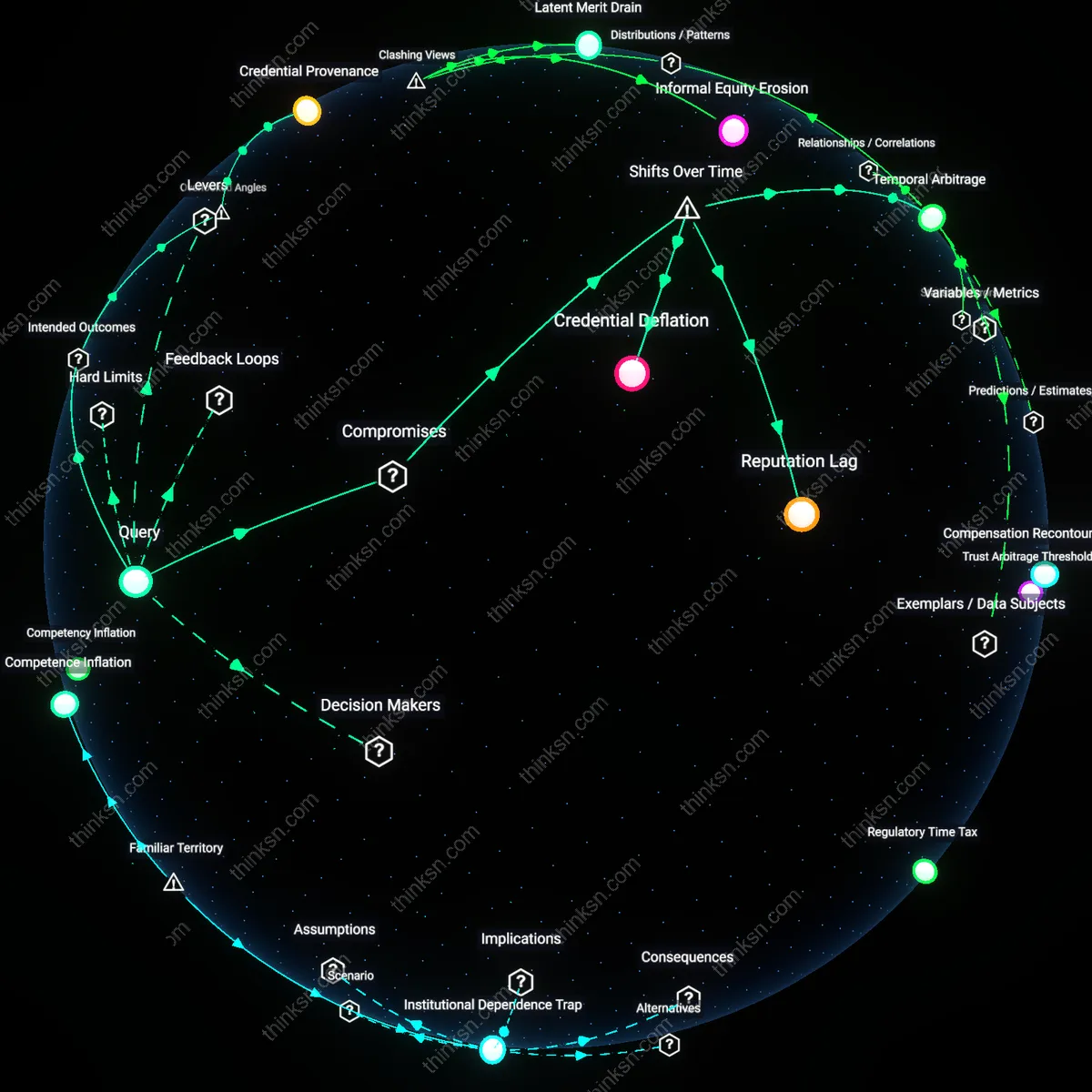

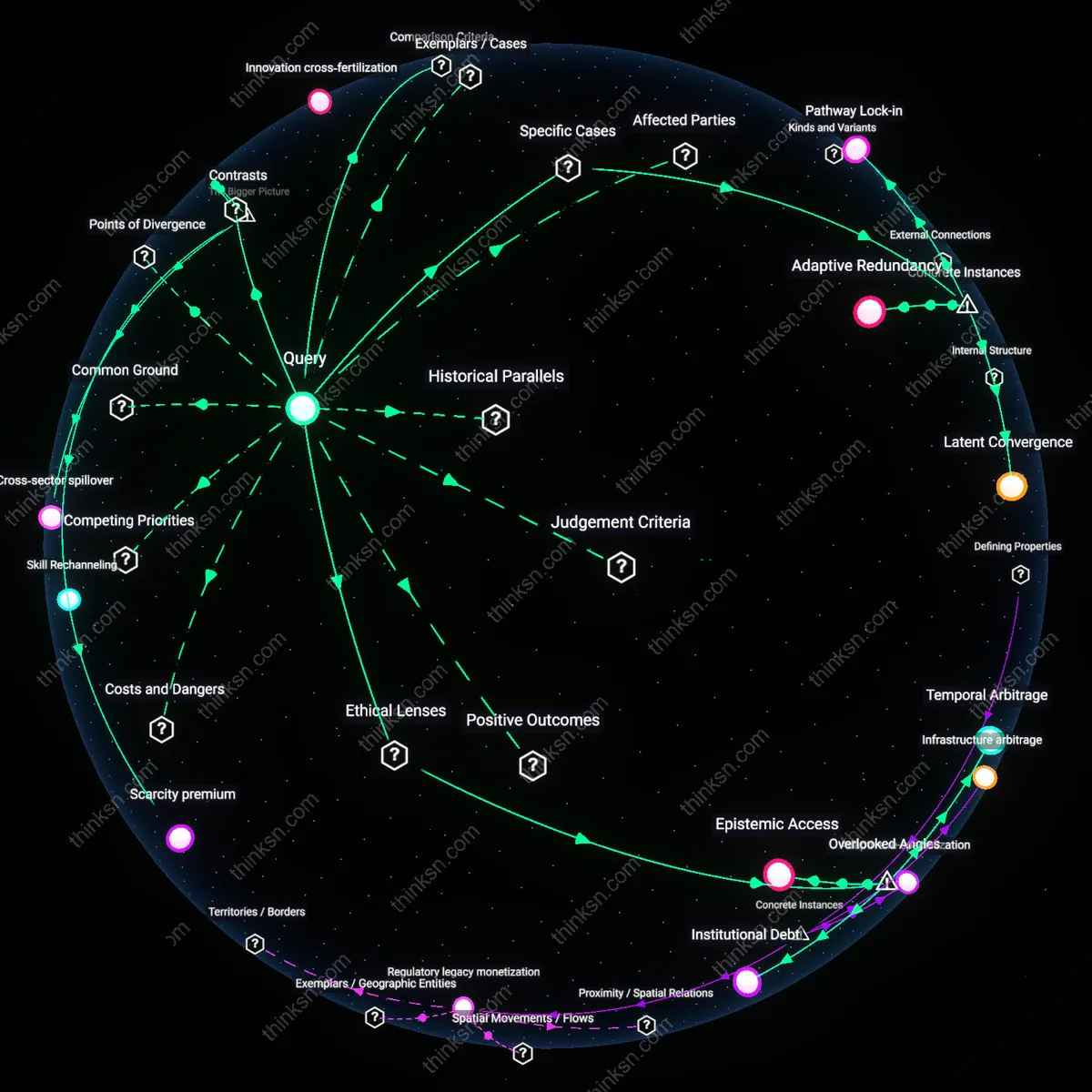

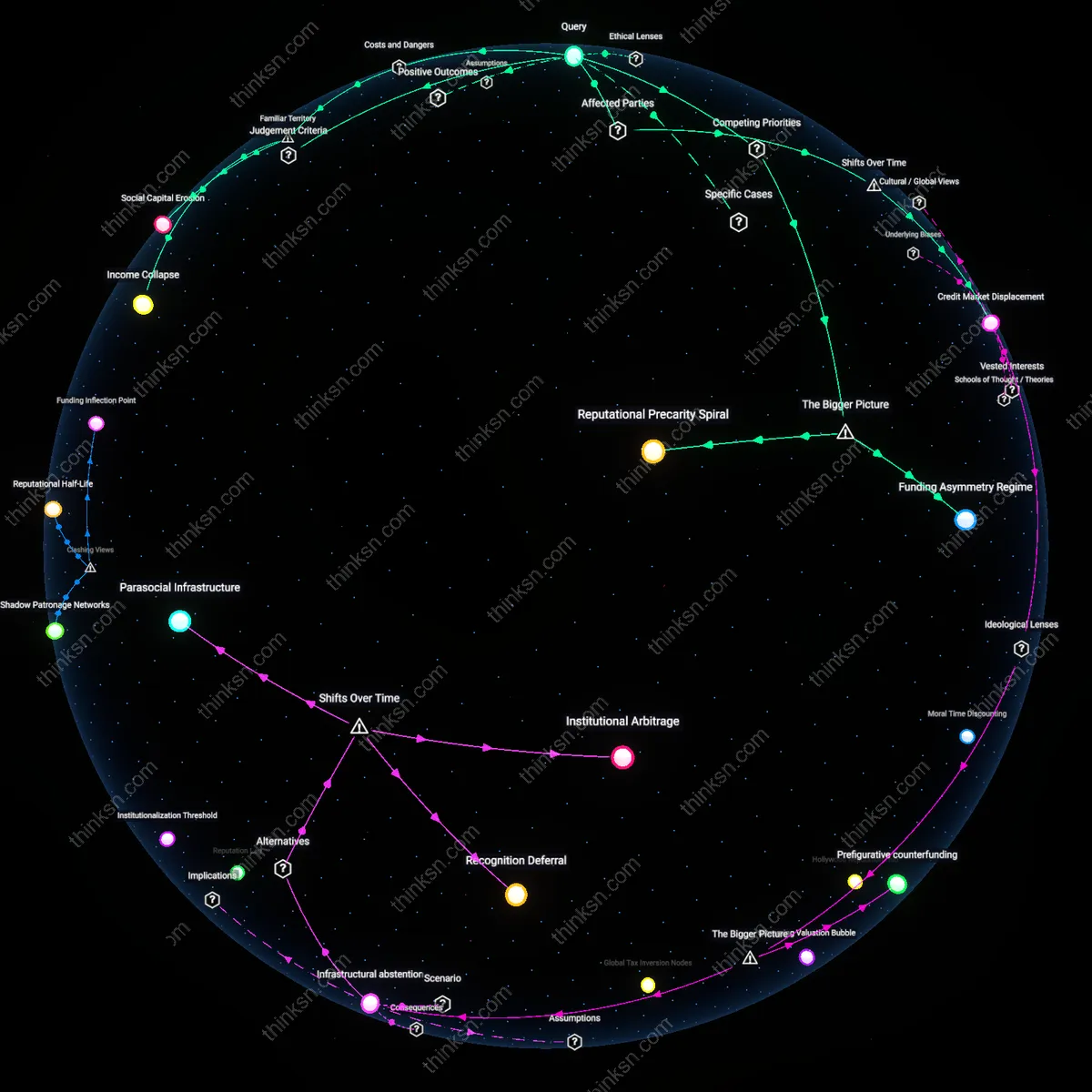

Algorithmic Overexposure

Maintaining a unified digital portfolio increases the risk of algorithmic overexposure, where visibility becomes a liability rather than an asset. Platforms like LinkedIn or GitHub prioritize content through engagement-driven algorithms that amplify certain behaviors, such as frequent posting or controversial takes, while suppressing nuanced or context-dependent work. This creates a systemic incentive for professionals to conform to algorithmically rewarded performance, and it exposes them to scrutiny when past content is stripped of context during shifts in cultural or political norms. The non-obvious danger is not misuse of data but the platform’s active reshaping of professional identity over time: contributions that were acceptable when published resurface at scale during moments of social change, triggering reputational damage the individual cannot control.

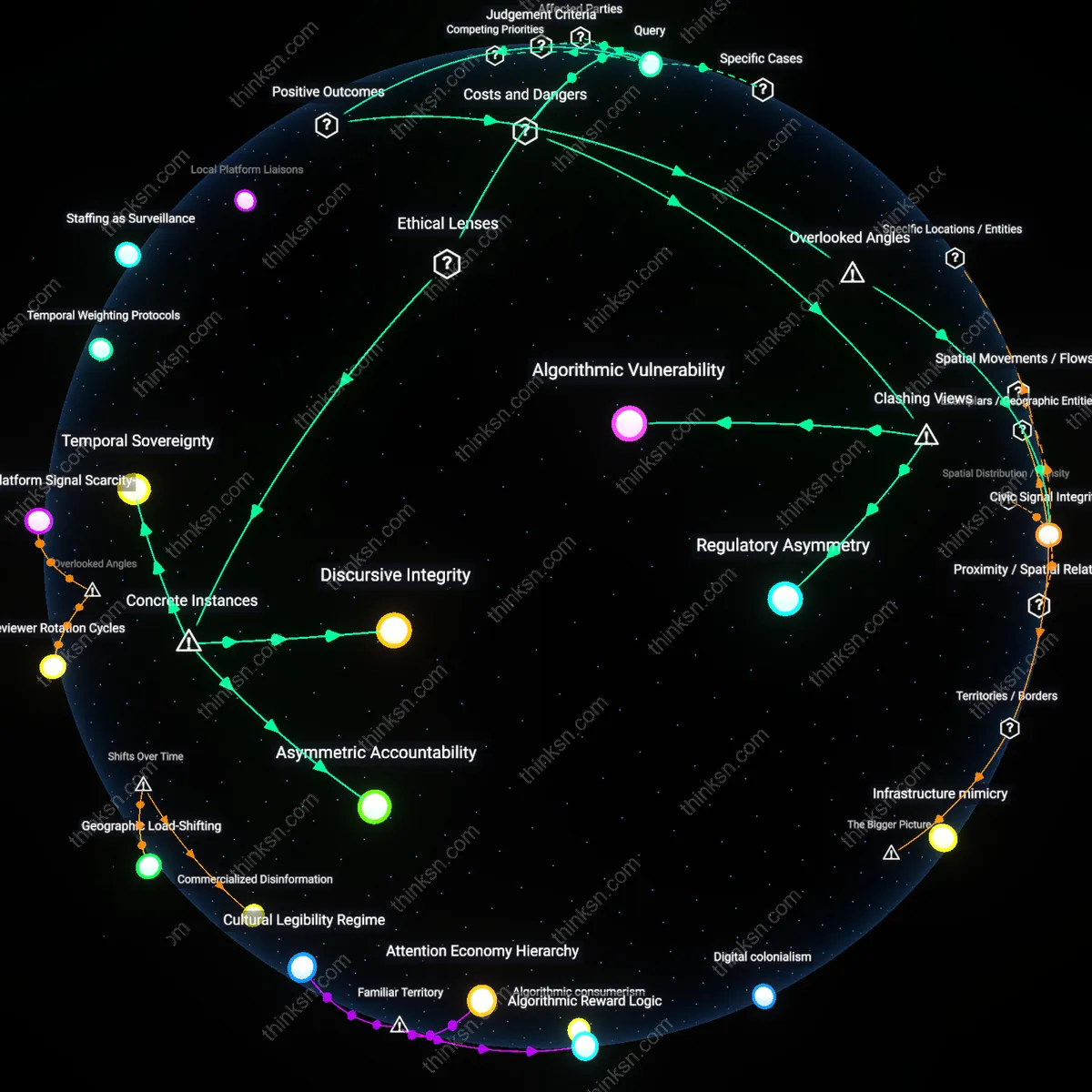

Platform Dependency Trap

Professionals who centralize their digital presence on commercial platforms become subject to a platform dependency trap, where career mobility depends on infrastructure not designed for individual resilience. Large companies like Meta and Google, and even niche networks like Behance, optimize for platform retention and data extraction, not professional integrity or long-term reputation management. When these platforms alter interface logic, deprecate features, or change visibility rules, as seen in repeated Facebook algorithm shifts, entire portfolios can be rendered inert or mischaracterized without user consent. The overlooked systemic risk is that professionals invest labor into ecosystems whose core incentives are misaligned with personal career sustainability, leaving their reputations vulnerable not just to bias but to silent obsolescence.

Reputation Arbitrage

The pursuit of career advancement through a unified digital portfolio enables third-party actors to exploit reputation arbitrage, where perceived professional value is manipulated through algorithmic gaming. Recruiters, competitors, or automated bots can weaponize inconsistencies or context gaps in a consolidated digital trail—such as a years-old blog post or informal comment—to discredit a professional in high-stakes environments like job transitions or promotions. Because platforms lack accountability for downstream consequences of content resurfacing, the damage occurs within opaque feedback loops involving HR analytics tools, AI screening, and social validation metrics. The underappreciated systemic mechanism is that reputation is no longer managed by the individual but is instead negotiated through invisible markets where algorithms and external actors assign value based on partial, decontextualized signals.

Algorithmic Complicity

Professionals should refuse to optimize their digital portfolios for algorithmic visibility, because compliance constitutes tacit endorsement of platforms' ethically opaque ranking systems rooted in utilitarian data aggregation. When self-promotion requires alignment with engagement-driven algorithms, individual actors are embedded within a consequentialist framework that prioritizes reach over truth. They become ethically complicit in the amplification of bias, for example when LinkedIn influencers inadvertently reinforce gendered job recommendations through reactive content shaping. The non-obvious consequence is that reputation management under these conditions does not mitigate risk but reproduces it, normalizing structural discrimination as professional common sense.

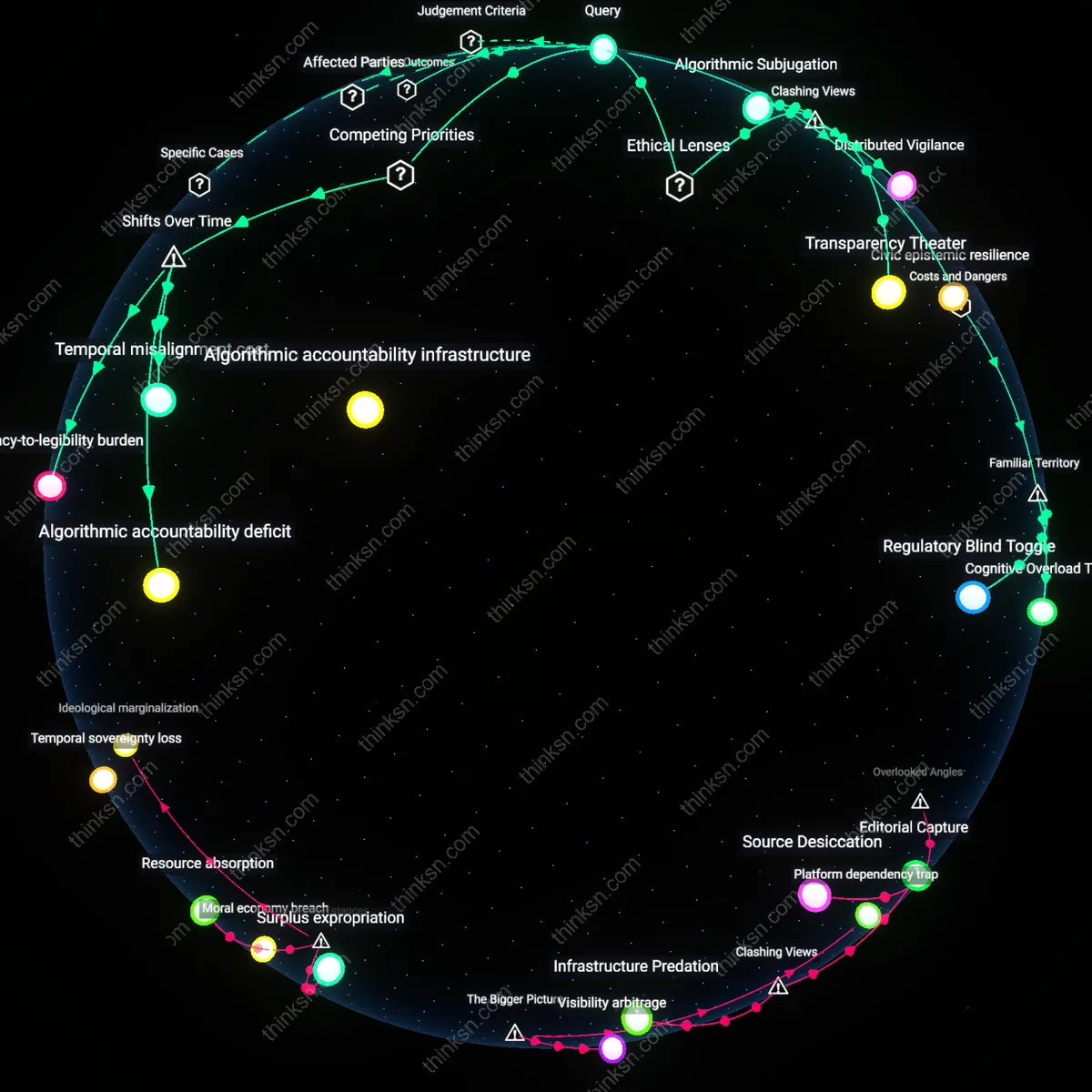

Reputation as Resistance

Strategic under-exposure—deliberately withholding content or obscuring expertise across platforms—can be a form of professional dissent against the neoliberal expectation of perpetual visibility enforced by Silicon Valley–style meritocracy. In fields like investigative journalism or human rights law, practitioners who limit their digital footprints to avoid algorithmic misrepresentation are not committing career suicide but enacting a deontological duty to protect sources or vulnerable communities, even at reputational cost. This reframes the absence of a polished portfolio not as a weakness but as a testimonial act, exposing how algorithmic reputation systems punish those who refuse to perform identity for surveillance capitalism.

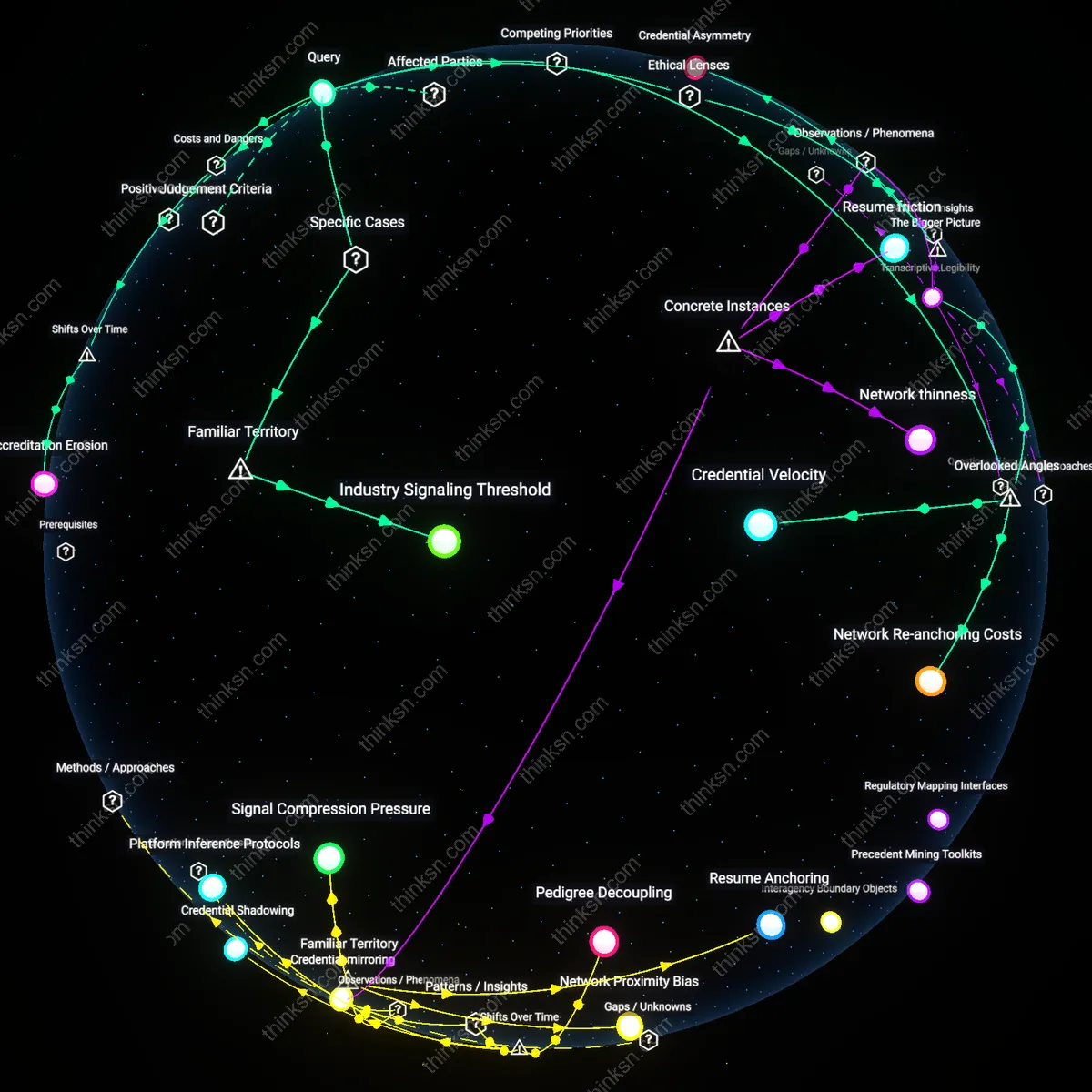

Credential Friction

Professionals can weaponize credential misalignment, maintaining deliberately inconsistent or outdated information across platforms, to disrupt the predictive logic of algorithms designed to categorize expertise, forcing human review over automated sorting. This practice, seen in fields like academic philosophy where scholars withhold publications from ResearchGate to protest its commercialization of knowledge, inserts a Rawlsian 'veil of ignorance' into hiring pipelines by making algorithmic shortcuts fail. The underappreciated insight is that these professionals do not try to avoid platform bias through optimization; they deliberately provoke algorithmic failure, using friction as leverage to demand equitable evaluation in systems that otherwise privilege legibility to machines over merit.

Platform Signaling

Professionals can mitigate reputational risk from algorithmic bias by curating visible engagement metrics that align with elite industry benchmarks. Artists on Instagram, for instance, selectively showcase high-engagement posts—likes, shares, and saves—to simulate algorithmic favor, signaling credibility to galleries and collectors even when their content is algorithmically buried. This works because platforms serve as de facto credentialing systems, where surface metrics substitute for traditional reputation markers, making perceived popularity more influential than actual reach. What’s underappreciated is that professionals aren’t just sharing work—they’re signaling adherence to platform-specific norms of success, which increasingly define gatekeeping.

Audience Bracketing

Professionals reduce exposure to algorithmic bias by segmenting their digital presence across platform-specific audiences with divergent expectations. Academics, for example, maintain tightly controlled CVs on institutional websites while using Academia.edu or ResearchGate to distribute preprints under open-access visibility rules shaped by algorithmic discovery. These platforms categorize knowledge differently—search ranking versus peer recognition—exposing scholars to distinct reputational risks. The non-obvious insight is that by bracketing audiences, professionals don’t just diversify exposure; they exploit the inconsistency of algorithmic valuation between platforms to isolate and protect core reputations.

Portfolio Scaffolding

Designers on Behance counteract algorithmic unpredictability by embedding their work within narrative structures—case studies, process logs, client testimonials—that platforms cannot easily parse or re-rank without distorting meaning. Firms like Pentagram use this scaffolding technique to ensure interpretive control over how projects are received, even when visibility fluctuates. The mechanism relies on making content resistant to algorithmic simplification, preserving professional intent across recombinant feeds. The overlooked dimension is that the portfolio becomes less a container of work than a structured defense against reductive platform logic, where context becomes armor.