Is Consumer Consent Mere Formality in Opaque Data Deals?

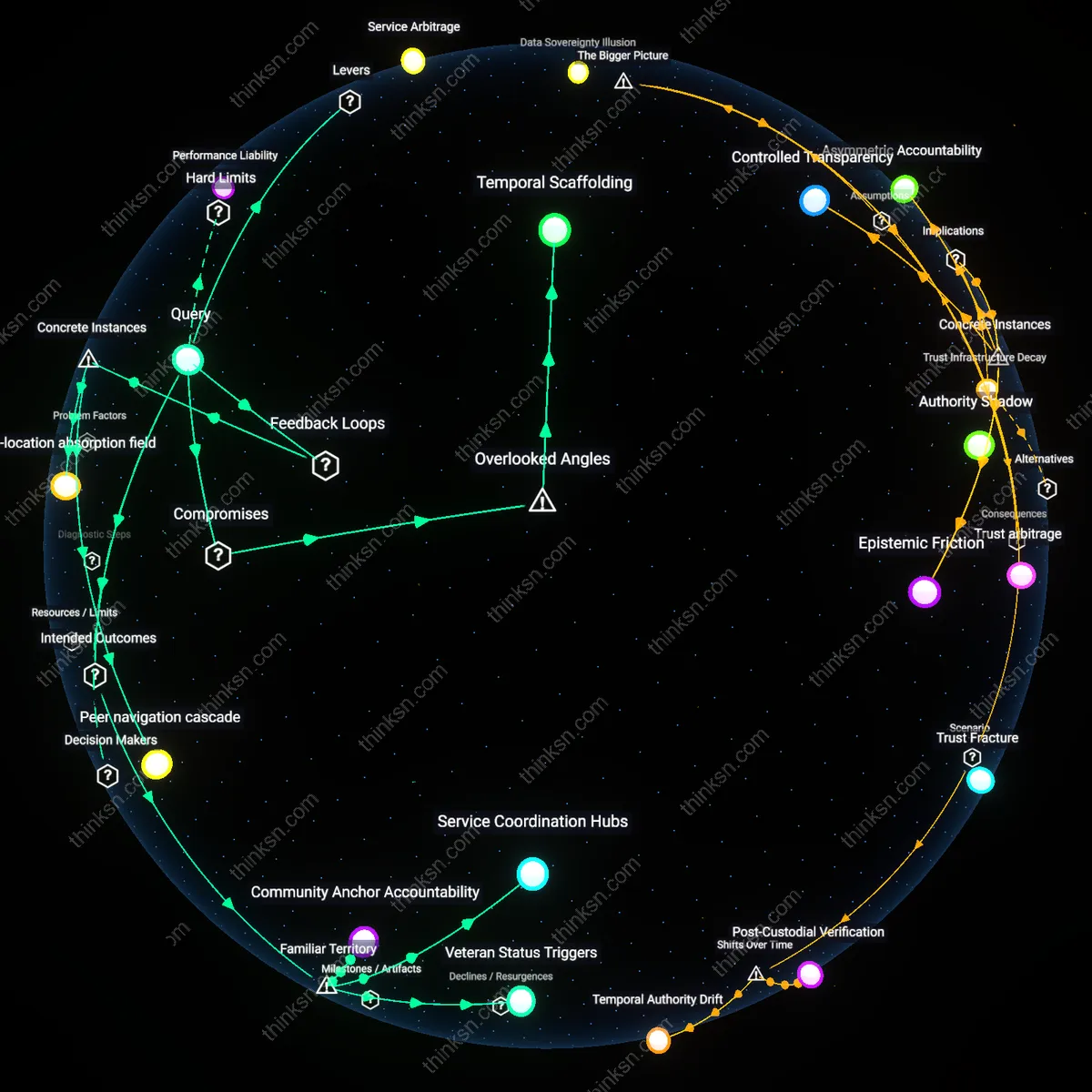

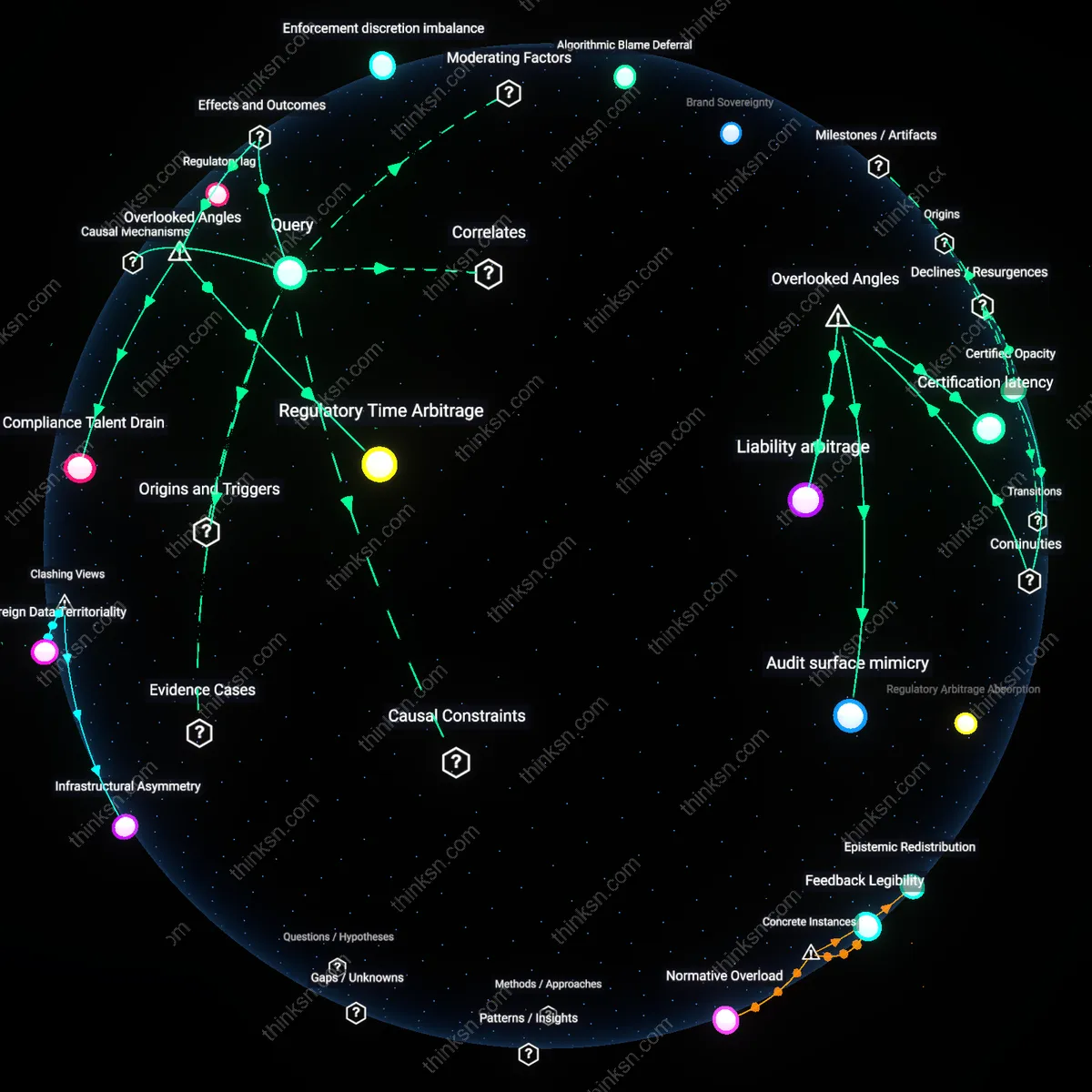

Analysis reveals 5 key thematic connections.

Key Findings

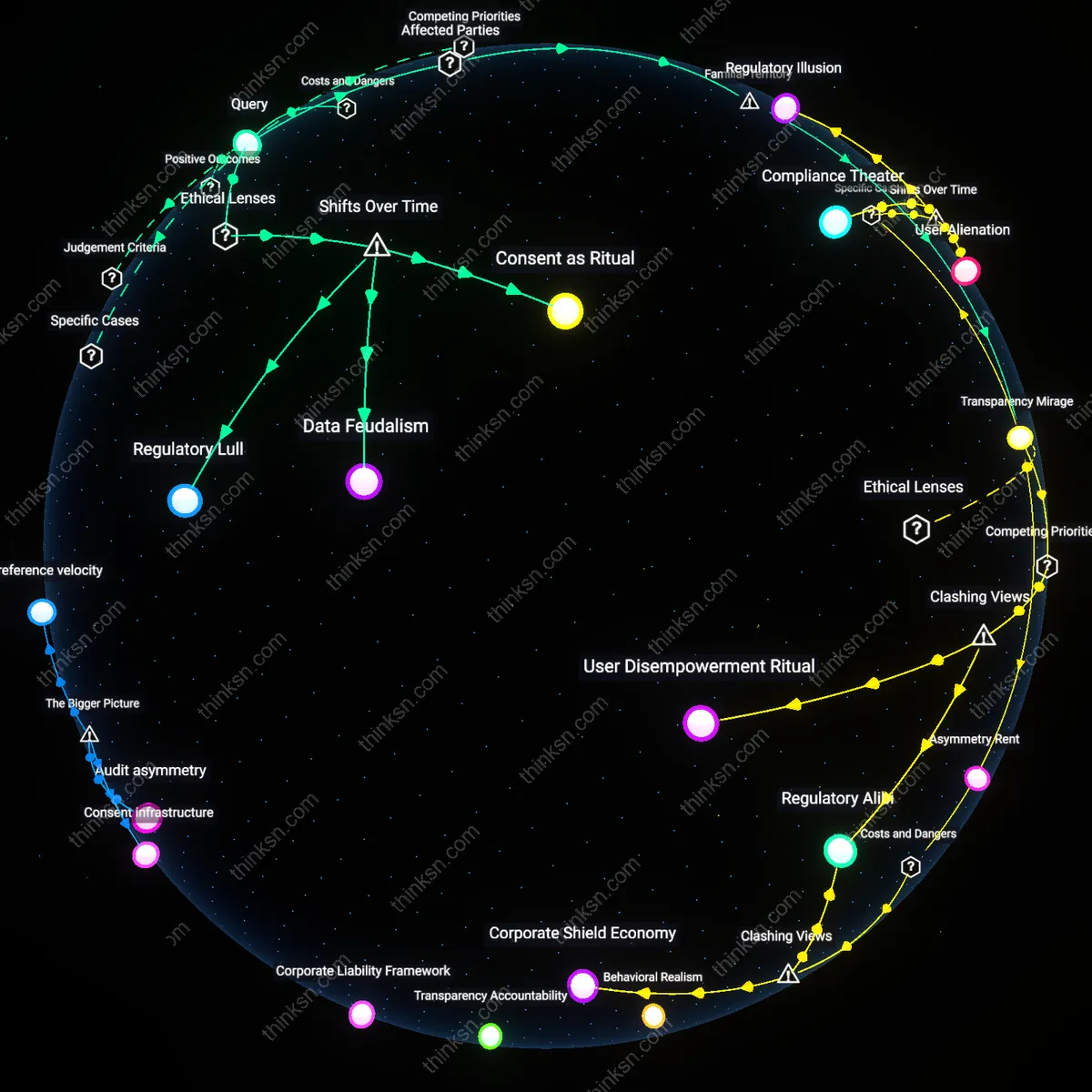

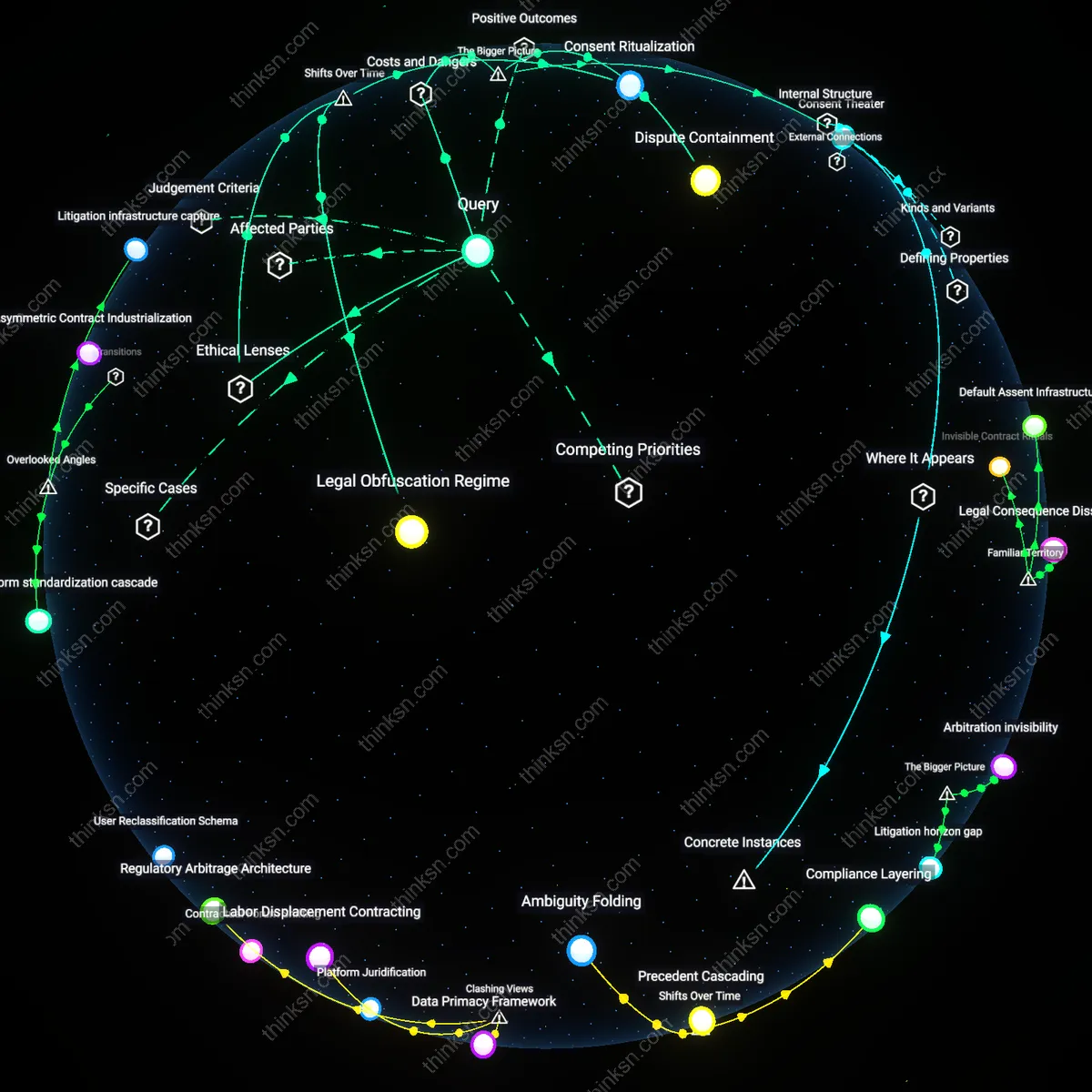

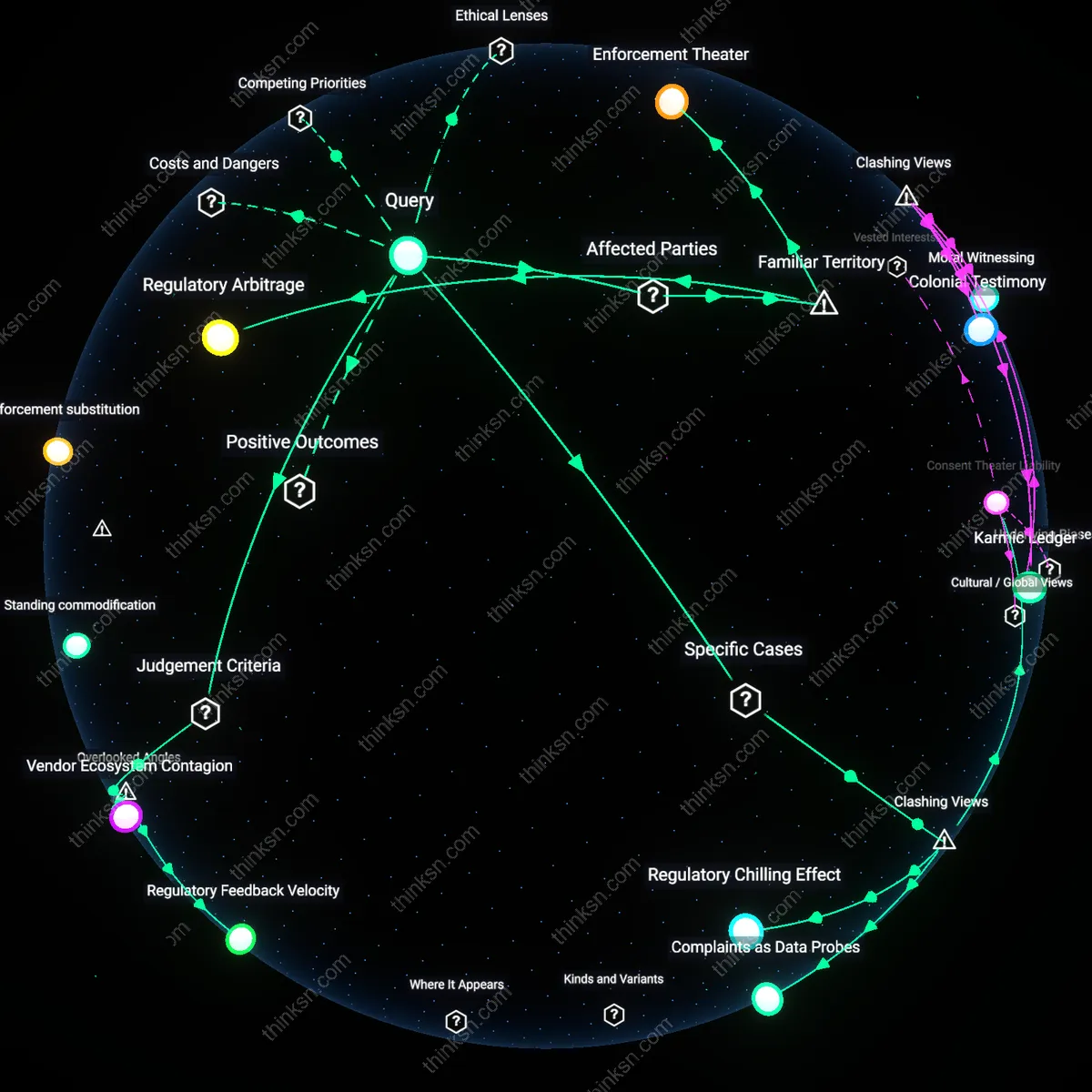

Transparency Mirage

Consumer consent loses substantive meaning when volume is substituted for clarity, turning disclosure into obfuscation. People commonly equate long privacy notices with thoroughness, trusting that visible text equals informed consent. This reflex is cultivated by institutions like banks or health apps that use length as a proxy for legitimacy. In reality, systems like Apple's App Tracking Transparency or hospital HIPAA notices can use linguistic density and legal formatting to simulate honesty while avoiding actionable clarity, relying on the cognitive illusion that visibility equals understanding. The underappreciated truth here is that transparency, as currently implemented, often serves not the user but the auditor (the lawyer or regulator), making the public performance of disclosure more important than its comprehension.

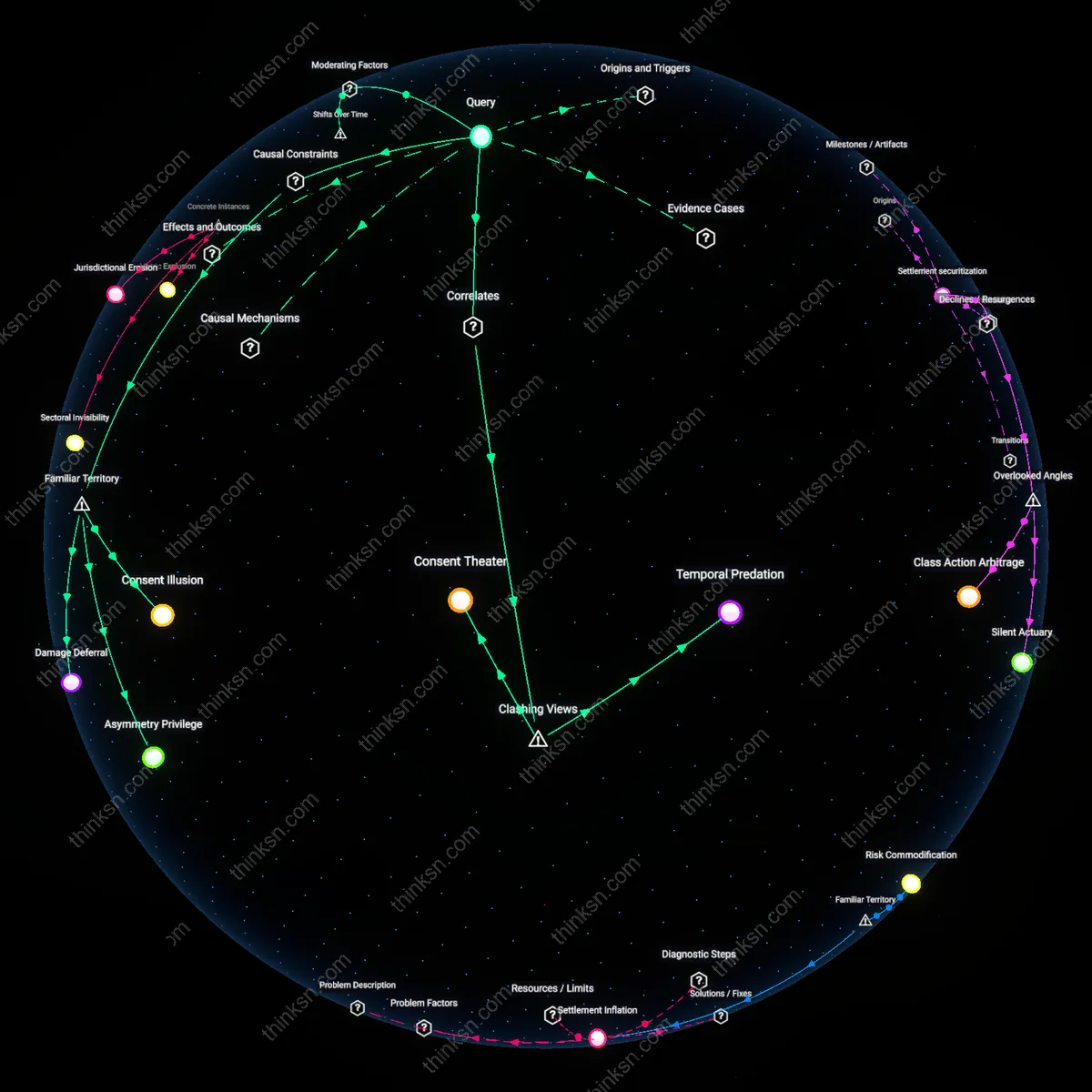

Consent Obsolescence

Facebook's 2013 acquisition of Onavo, whose VPN app Onavo Protect funneled user data back to Facebook for competitive intelligence, rendered consumer consent meaningless: users were unaware that their activity outside Facebook's ecosystem was being monitored and exploited. The case exposes how data-sharing agreements buried in vague privacy policies enable surveillance functions that were never explicitly authorized. It also reveals that when transparency fails, consent becomes a retroactive justification rather than a prior condition, allowing platforms to redefine data use boundaries unilaterally. The mechanism, embedding data harvesting within ostensibly protective software, exploits user trust and technical opacity, creating a feedback loop in which the consent forms accepted at download become structurally incapable of governing actual data use. The underappreciated reality is that consent in such cases does not merely degrade; it becomes functionally obsolete before it is even given.

Consent as Ritual

Consumer consent loses substantive meaning when data-sharing agreements lack transparency because it has evolved into a ceremonial compliance mechanism rather than a functional exercise in autonomy. The clearest example is the post-1990s shift toward clickwrap agreements under U.S. common law interpretations that equate a click with informed agreement. This transformation, accelerated by the OECD's 1998 reaffirmation of its Privacy Guidelines (first adopted in 1980) and their harmonization into corporate practice, institutionalized a model in which users, intermediaries, and regulators accept ritualized acceptance (scrolling and clicking) as evidence of consent, regardless of comprehension. The mechanism operates through private ordering in digital contracts, displacing earlier 1970s-era assumptions of meaningful disclosure under the Fair Information Practice Principles and making the ritual itself, rather than its content, the unit of compliance. What is underappreciated is that this ritual does not fail transparency; it *replaces* it, producing legitimacy without intelligibility.

Data Feudalism

Consumer consent loses substantive meaning when data-sharing agreements lack transparency because, since the mid-2000s platformization of the internet, user data has been systematically treated as a lordly prerogative rather than a personal right. The arrangement echoes feudal hierarchies in which access to services depends on submission to opaque tributary obligations. Under this regime, dominant platforms like Facebook and Google act as digital sovereigns who define the terms of data tenure, supported by legal developments such as the 2006 shift in how Section 230 was interpreted and the 2018 narrowing of standing by U.S. courts in data misuse cases. The mechanism is the privatization of public rights through end-user license agreements rewritten as edicts, transforming users into vassals whose 'consent' is structurally coerced by service dependency. The underappreciated consequence is that transparency is not absent but inverted, visible only to the lord and not to the serf, which marks a departure from Enlightenment-era contractual reciprocity.

Regulatory Lull

Consumer consent loses substantive meaning when data-sharing agreements lack transparency because of the deregulatory momentum of 1996–2004, particularly the U.S. retreat from enforcing the FTC's consent decree model for online privacy. That retreat allowed corporate data practices to crystallize into norms that later regulation struggled to unwind, even after the 2013 Snowden revelations. In this interval, the notion of 'notice and choice' was hollowed out by industry self-regulation frameworks, which were codified in soft law guidance and later absorbed into GDPR's 'transparency' requirements in diluted form, preserving proceduralism over power redistribution. The mechanism is the strategic delay of substantive oversight during a formative technological phase, enabling a path dependency in which disclosure became a compliance checkbox rather than a democratic safeguard. The underappreciated insight is that the loss of meaning in consent was not gradual but engineered through a precise historical window in which regulatory inaction became institutionalized.