Regulatory Risks of Relying on Industry Compliance Data?

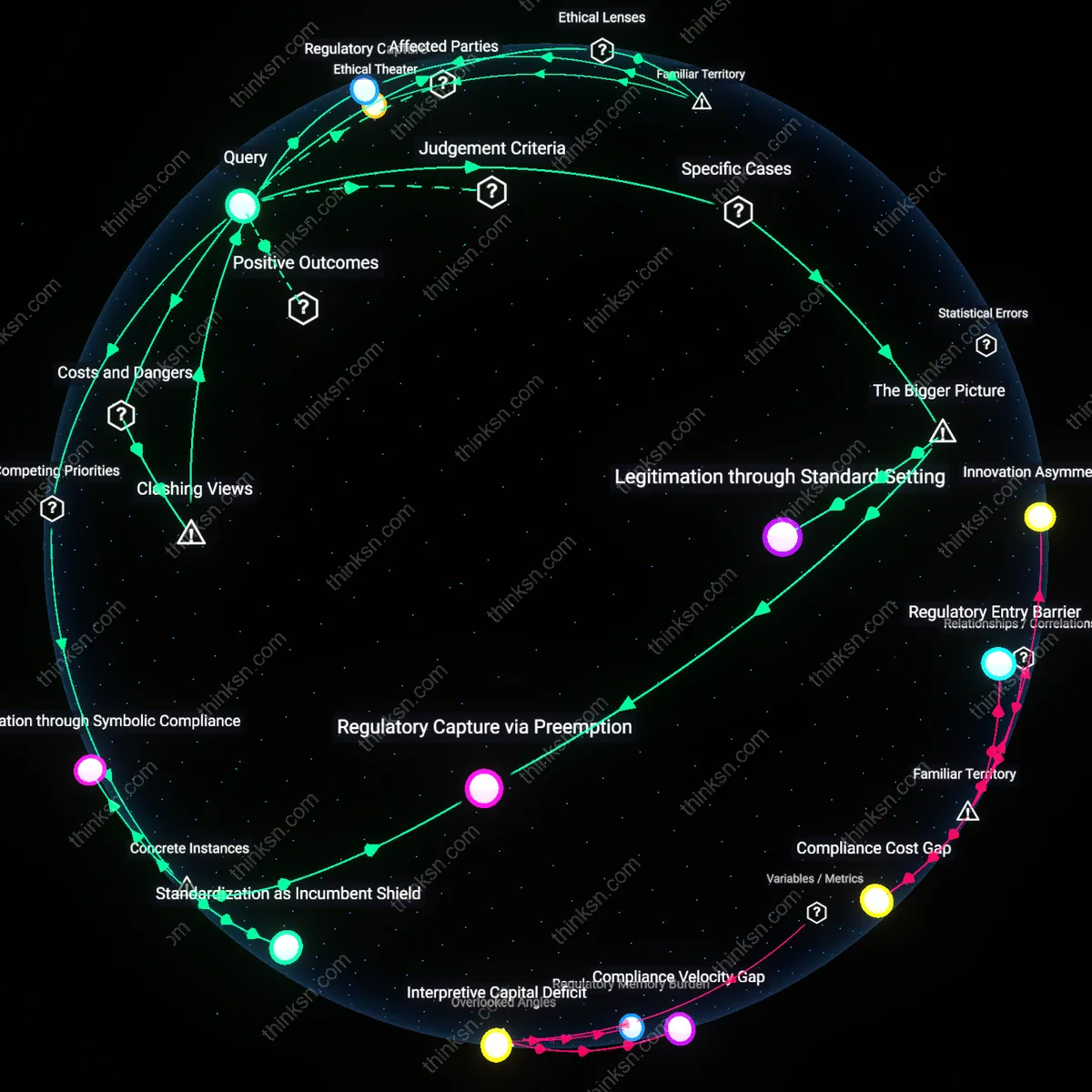

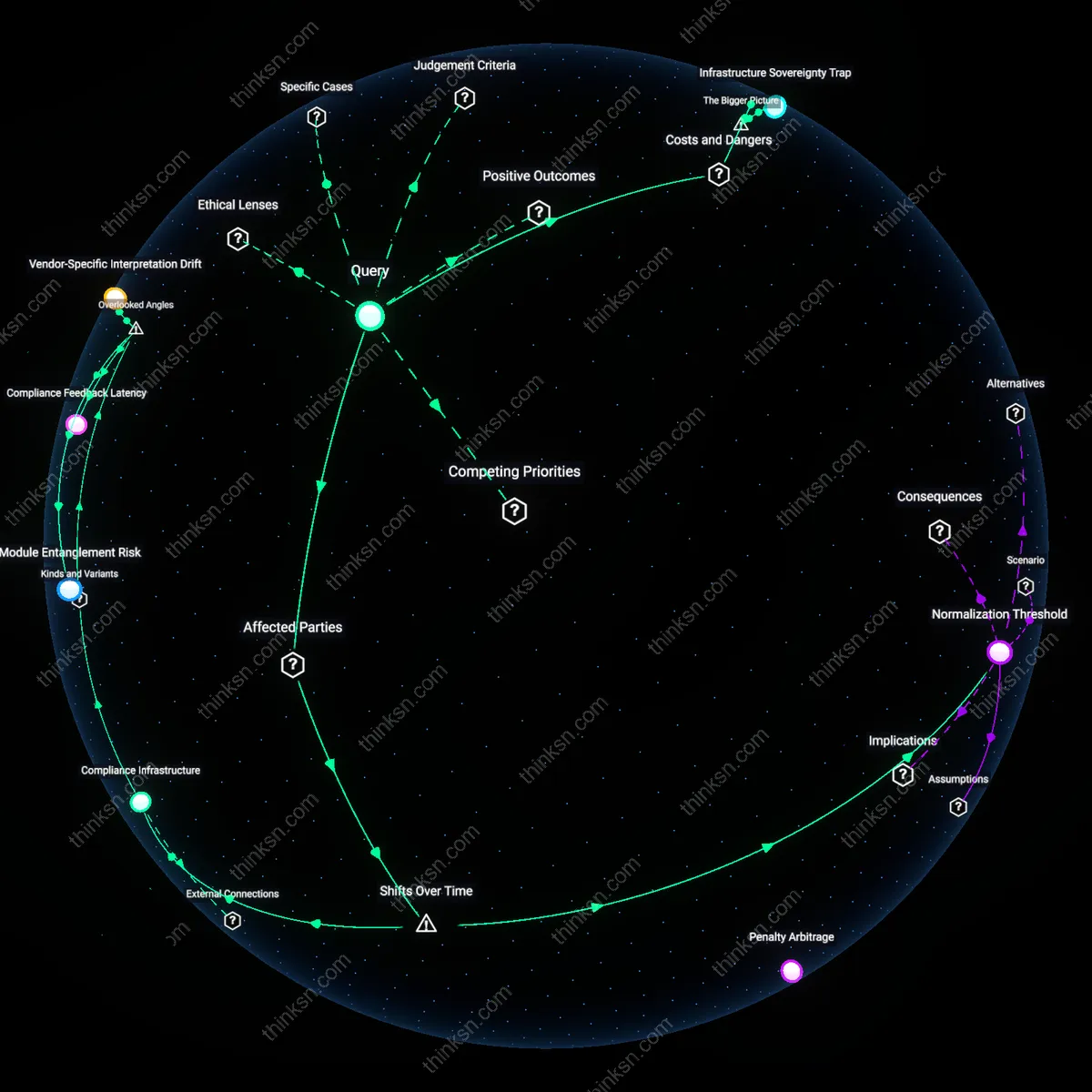

Analysis reveals 7 key thematic connections.

Key Findings

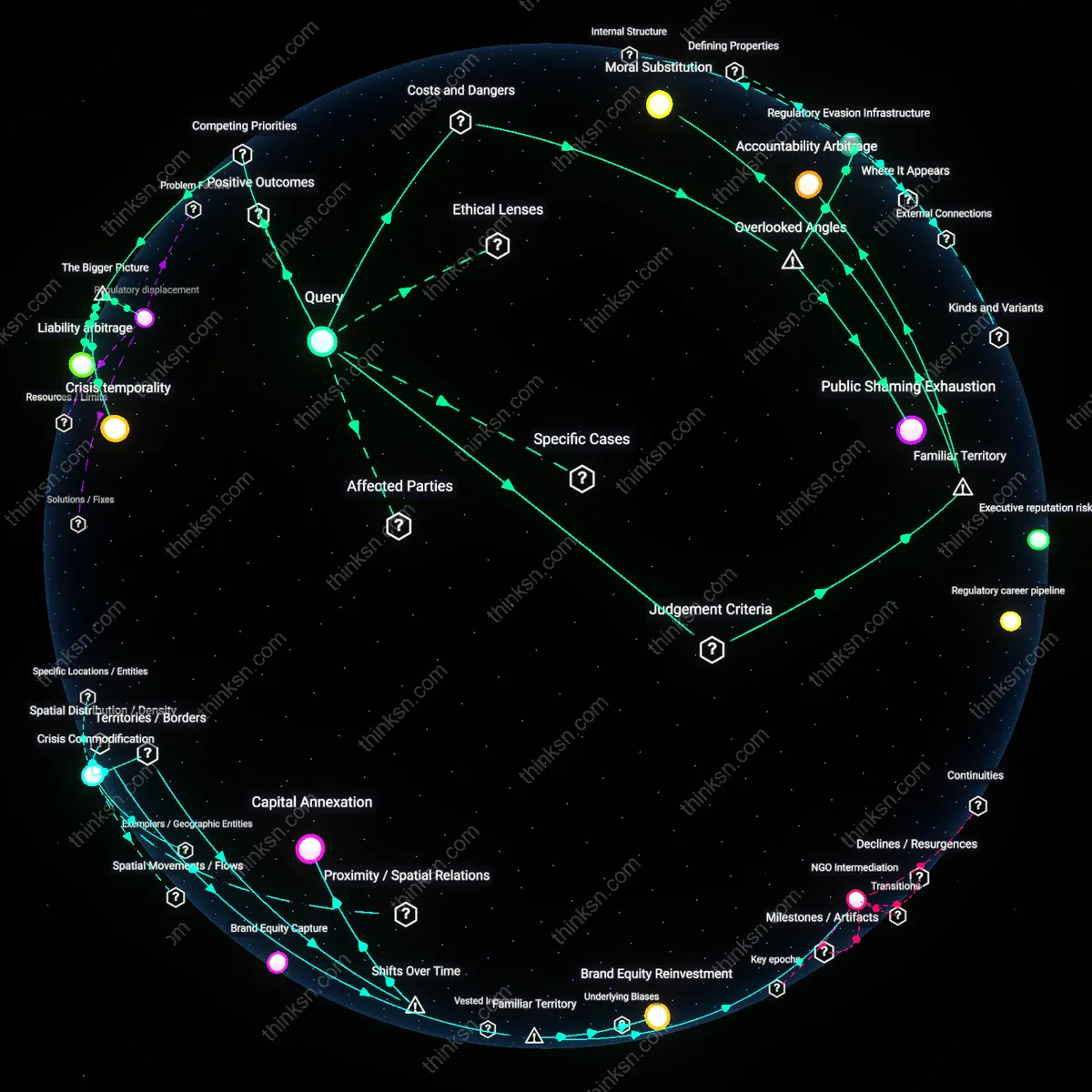

Regulatory Capture Nexus

During the 1990s, the U.S. FDA’s reliance on tobacco industry-generated data enabled Philip Morris and Brown & Williamson to define nicotine’s pharmacological profile, effectively outsourcing the scientific basis of regulation to the regulated. This asymmetric dependence allowed the companies to control the evidentiary thresholds for addiction, delaying meaningful oversight until internal documents disclosed by whistleblowers revealed deliberate product manipulation. The episode demonstrates how data ownership translates into de facto rule-making authority. The non-obvious insight is that the crisis of credibility stemmed not from overt corruption but from the legitimate procedural act of data submission, which became a covert policy lever.

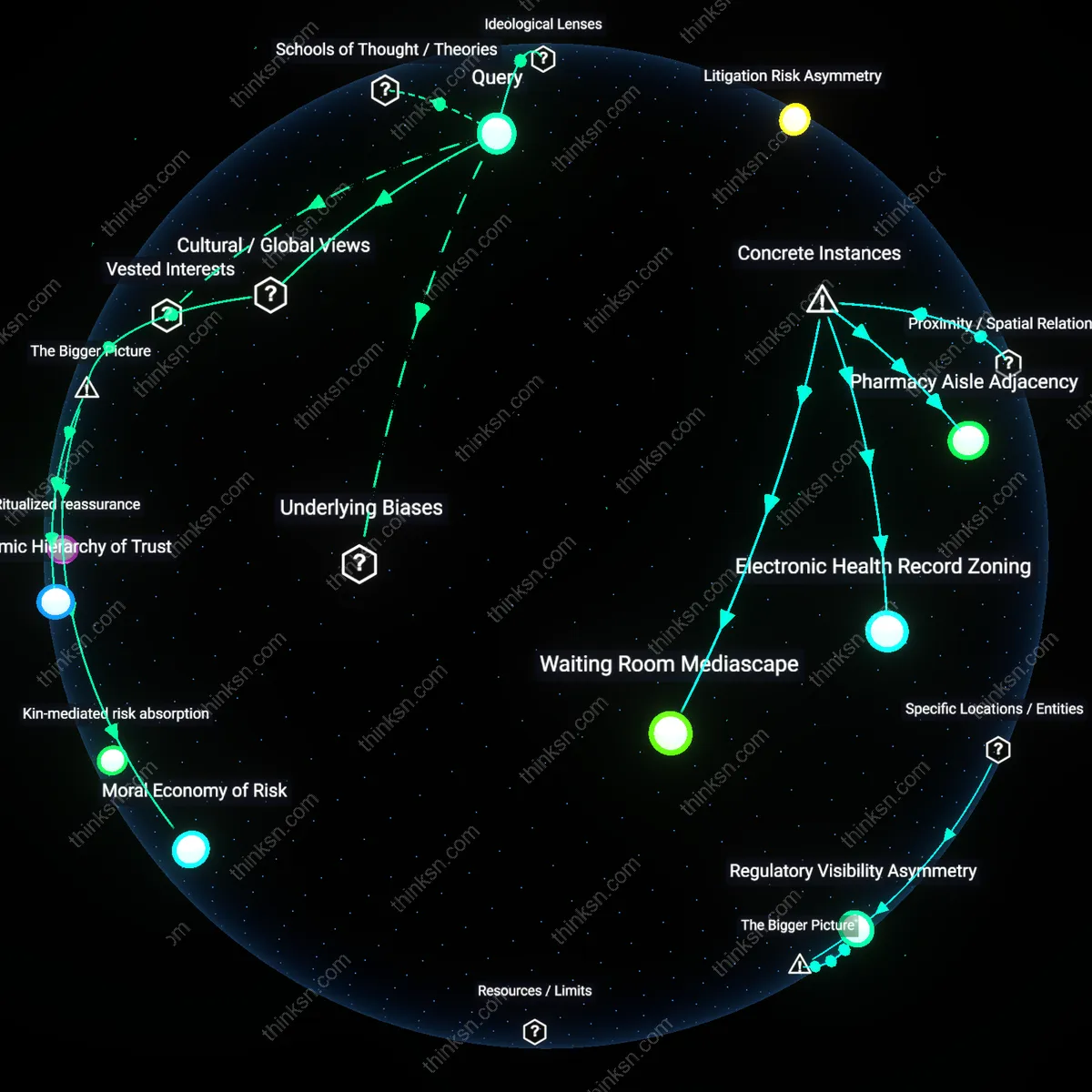

Epistemic Bureaucratic Drift

During REACH authorization cycles, the European Chemicals Agency (ECHA) depended on dioxin toxicity assessments supplied almost exclusively by chemical conglomerates such as Bayer and Dow. This dependence led to the institutional normalization of industry-derived uncertainty margins, which systematically underweighted independent ecotoxicological findings. The result was a feedback loop in which only methodologies aligned with corporate models were deemed ‘robust,’ elevating procedural consistency over biological plausibility. The overlooked mechanism is that regulatory legitimacy becomes reflexively tied to data formats that industry alone can produce at scale, which excludes epistemically divergent science from decision-making.

Standardization Asymmetry

In Bhopal, India, post-1984 regulatory frameworks for Union Carbide’s pesticide production relied on safety benchmarks and emissions data produced wholly by the company itself, a practice later adopted by state inspectors in Maharashtra. As a result, corporate thresholds were codified into law despite known local morbidity patterns. This institutionalization of proprietary metrics as public benchmarks persisted even after the disaster, not because of malice but because no independent data infrastructure existed to counter it. The core revelation is that regulatory dependence begins not with data submission but with the absence of parallel public knowledge systems capable of contesting it.

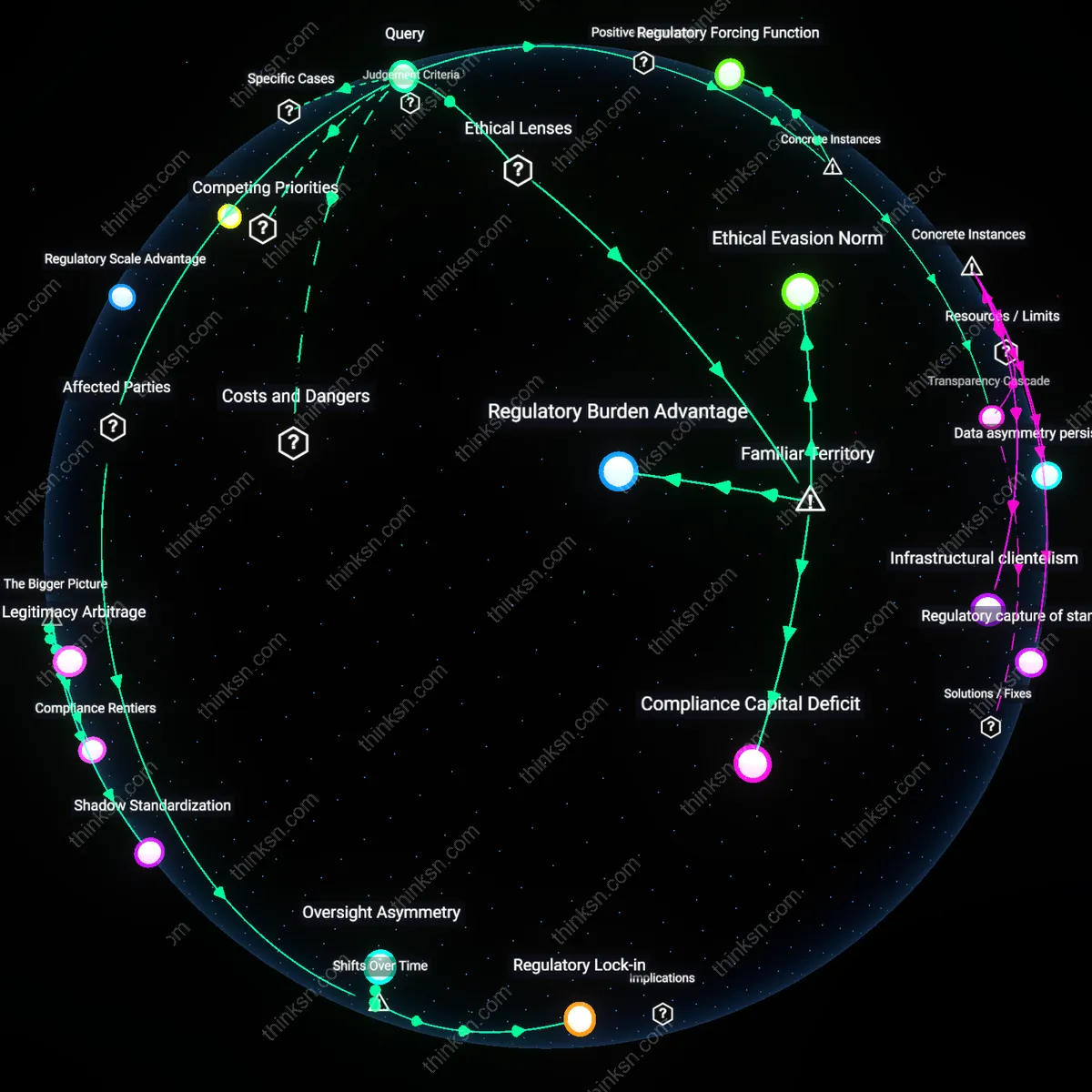

Path Dependency Lock-in

The adoption in the 1970s of industry-standard toxicity testing frameworks such as Good Laboratory Practice (GLP) normalized proprietary data as regulatory currency, displacing community health observations and independent science in environmental rulemaking. Over time, this created a feedback loop in which only data produced under industry-controlled conditions were deemed 'reliable,' effectively excluding alternative methodologies and marginalized exposure reports from shaping policy. The shift reveals that the institutional memory of agencies like the EPA became encoded with corporate epistemologies, making systemic reform technically unfeasible without dismantling decades of methodological alignment.

Normalization of Corporate Epistemology

Following the rise of neoliberal governance in the 1990s, regulatory bodies increasingly outsourced risk modeling and data analysis to private consultants and industry-affiliated scientists, transforming what counted as 'evidence' in public health decisions. This shift redefined scientific legitimacy around reproducibility under commercial protocols rather than public accountability, embedding profit-constrained research designs into legal thresholds for harm. The underappreciated effect is that public health advocacy now must speak in industrially licensed terms—producing a silent doctrinal revolution where corporate logic became the baseline syntax of regulation.

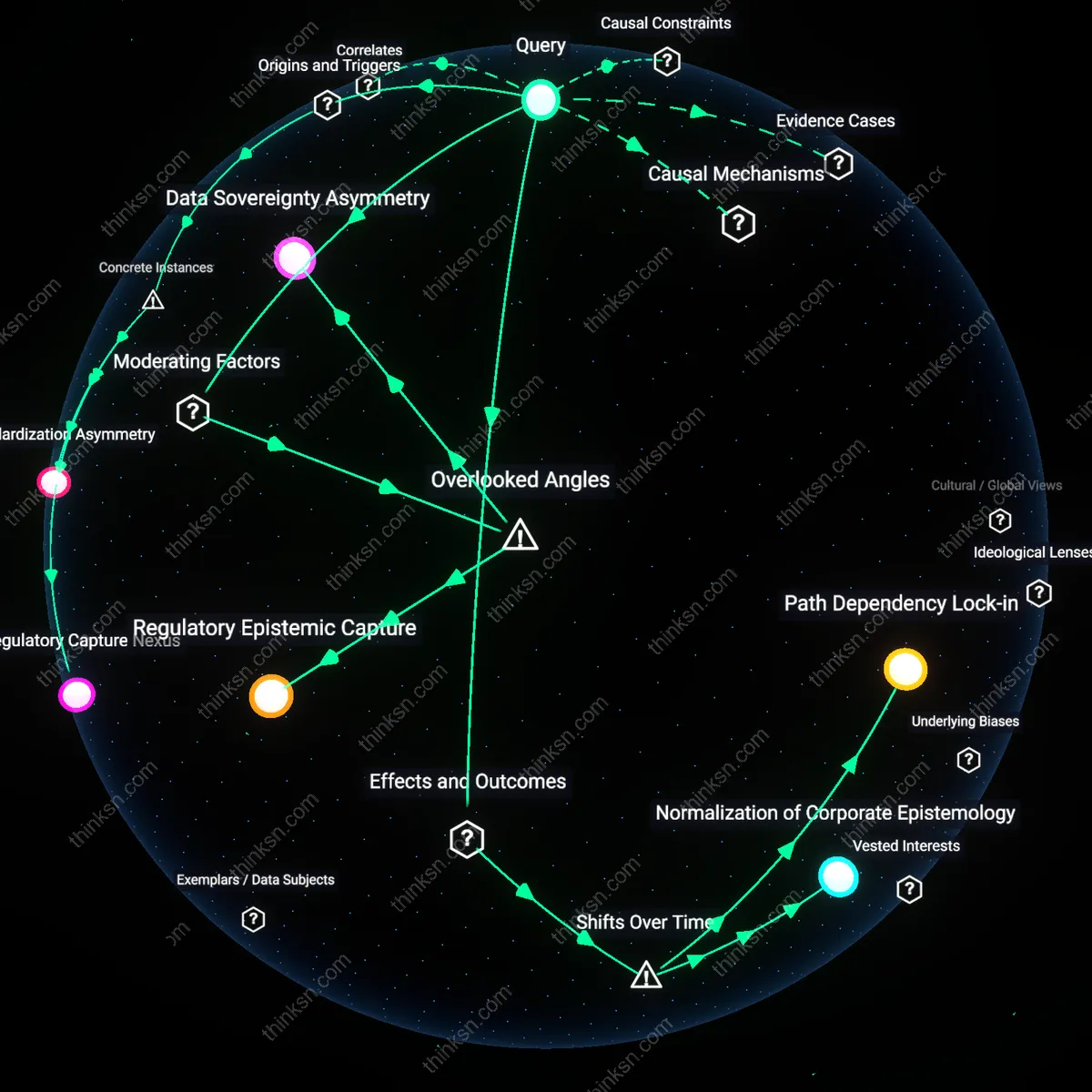

Regulatory Epistemic Capture

Dependence on industry-generated safety data entrenches a cognitive monopoly in which regulators adopt the industry’s evidentiary standards as the default, not because those standards are scientifically superior but because they structurally displace alternative forms of evidence. This occurs when agencies like the EPA or FDA rely on proprietary toxicology studies or clinical trial datasets that only well-resourced firms can produce, effectively excluding community-based health observations and ecological monitoring from decision-making. The non-obvious mechanism is epistemic homogenization: what counts as 'valid' science becomes aligned with corporate protocols not through overt coercion but through resource asymmetry and institutional habit. This shifts the regulatory mindset itself, making it systematically blind to non-industry epistemologies even when they signal emerging public health risks.

Data Sovereignty Asymmetry

When regulatory bodies depend on industry-held raw data, such as chemical exposure datasets or real-world patient monitoring, without securing enforceable rights to independently reanalyze or archive it, corporations gain de facto sovereignty over the evidentiary foundation of regulation. This dynamic plays out in cases like pesticide approvals, where manufacturers provide summary conclusions but withhold the underlying field data, preventing regulators from verifying claims or detecting latent patterns of harm. The overlooked condition is that data ownership, not just data quality, determines regulatory autonomy. The result is a silent veto power: firms can indirectly block reassessments by controlling access, thus preserving standards that favor commercial continuity over precautionary health interventions.