Should You Trust Voice Assistants That Learn Too Well?

This analysis identifies six key thematic connections.

Key Findings

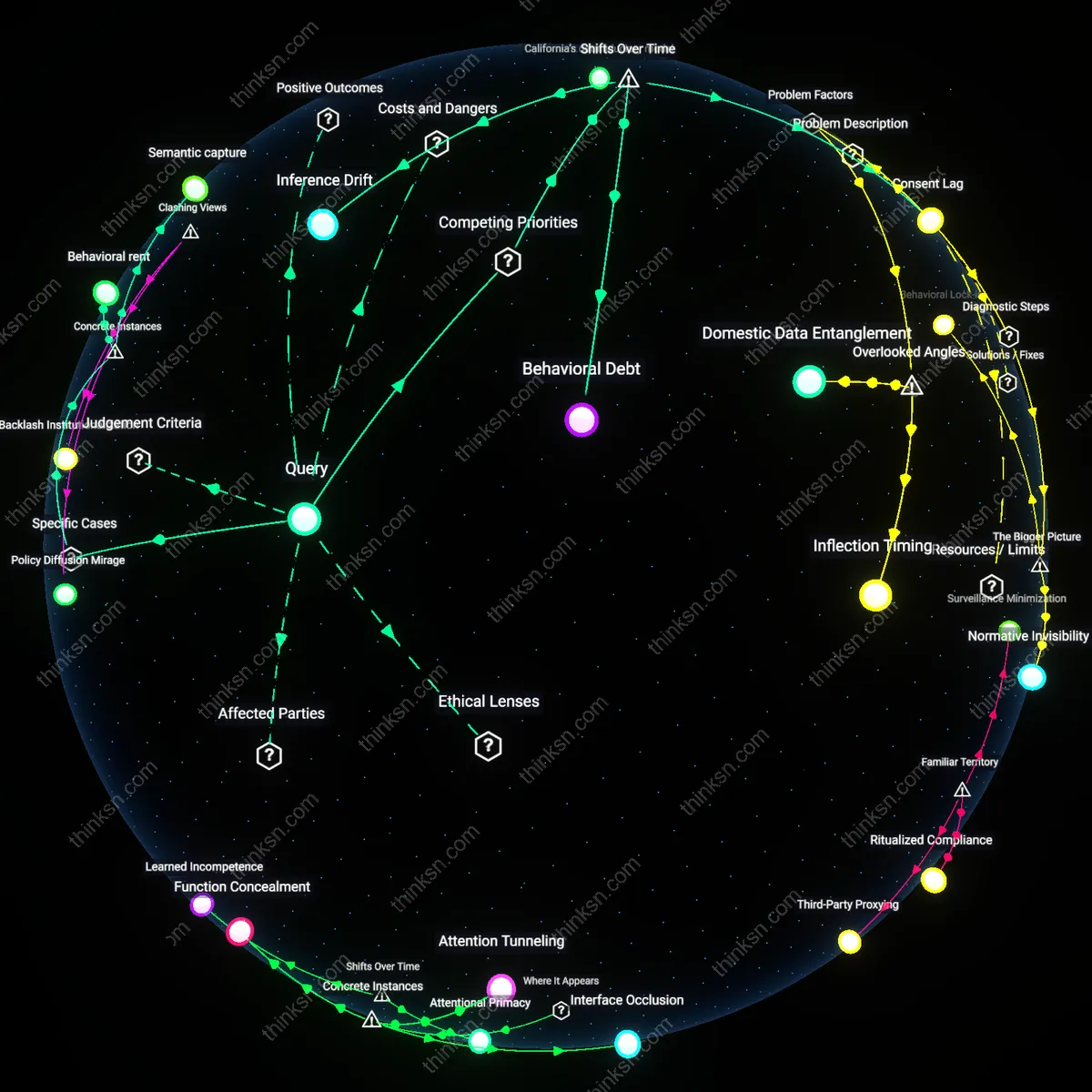

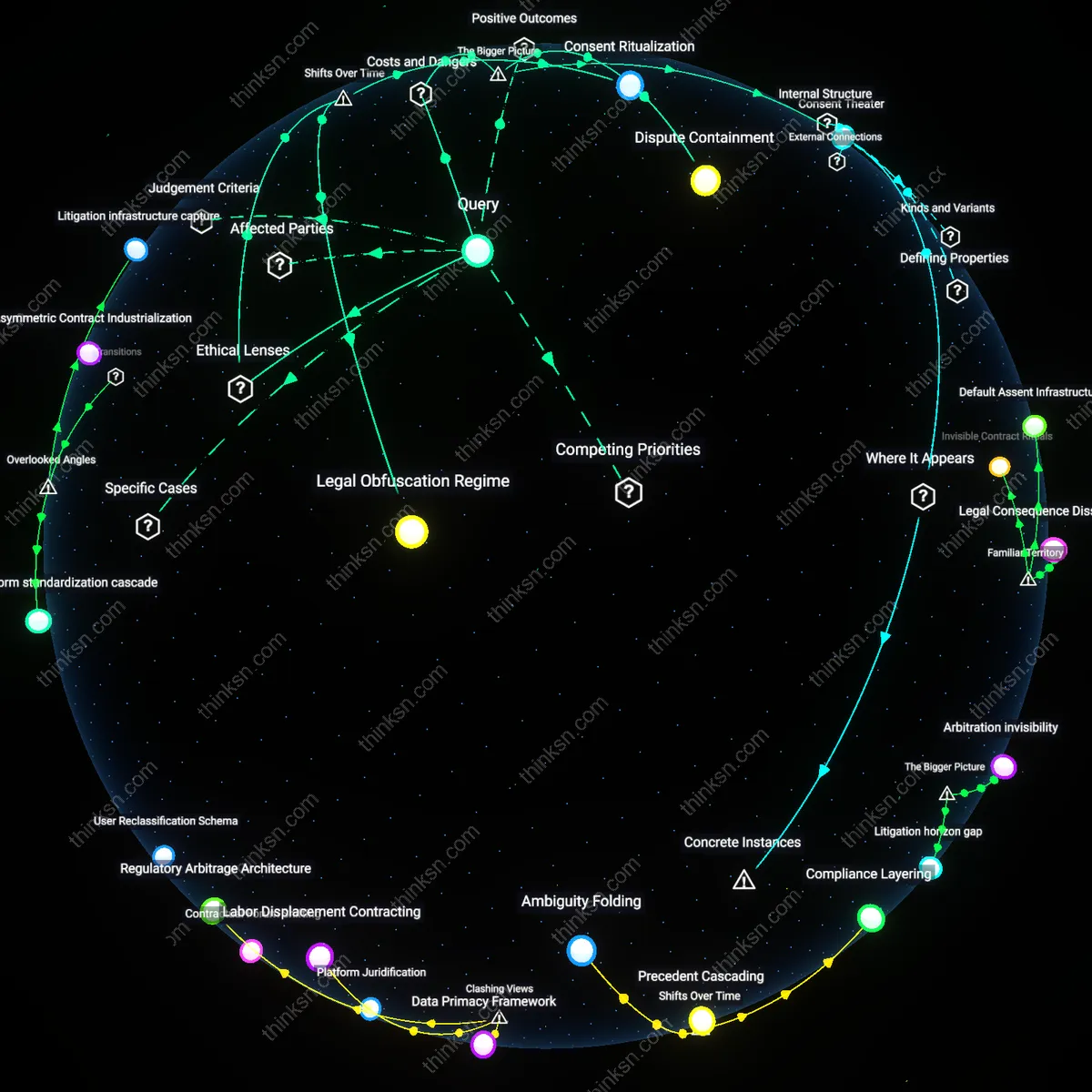

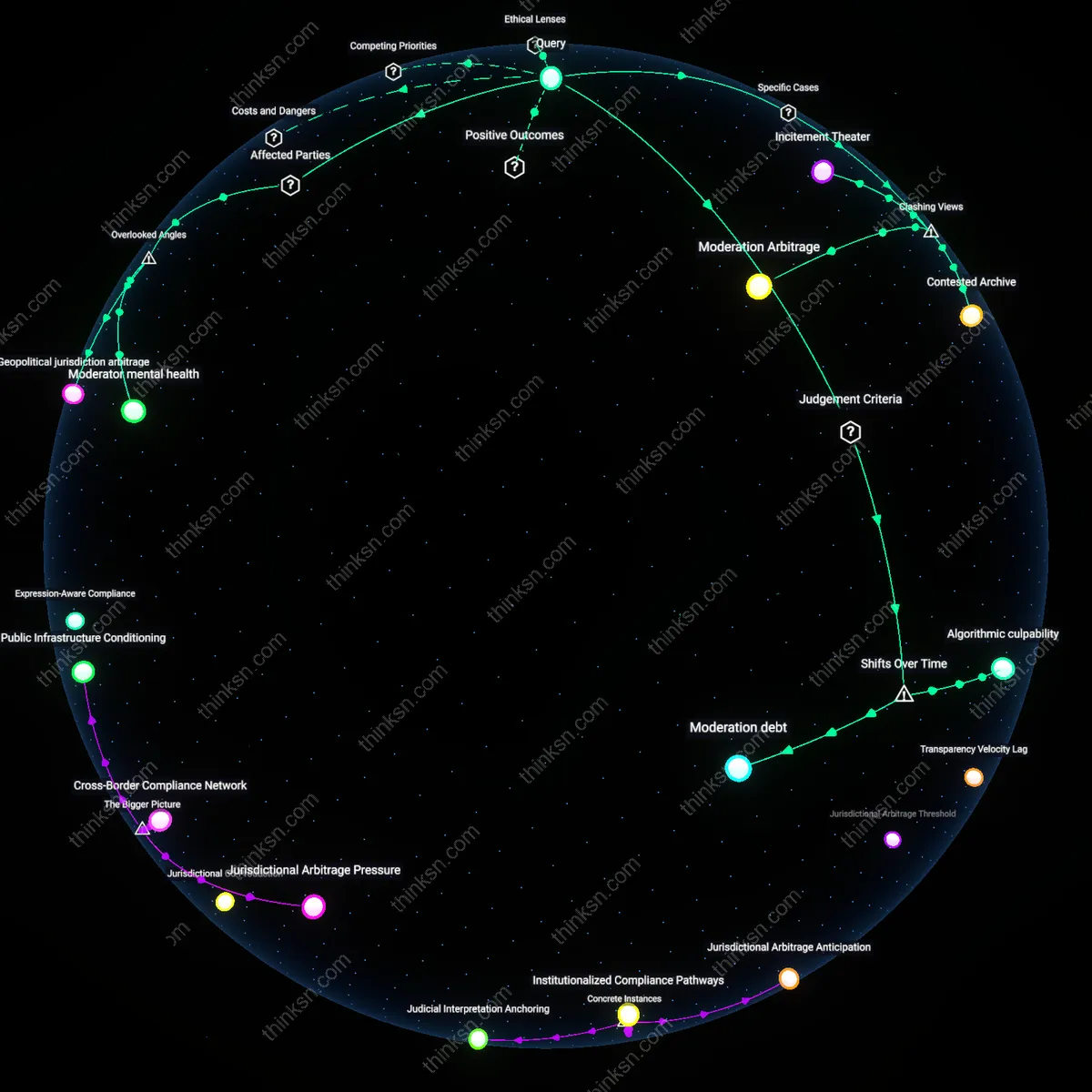

Behavioral Debt

Consumers should adopt voice assistants only if they accept early privacy erosion in exchange for functional personalization. In the 2010s, corporate platforms collected data in discrete episodes. After 2020 they shifted to continuous behavioral inference, embedding user behavior into predictive models that compound over time. This creates a deferred cost, behavioral debt: initial convenience locks users into systems that increasingly anticipate and shape their choices while offering minimal opt-out mechanisms. This lock-in is a non-obvious consequence of how machine learning pipelines evolved from episodic analysis to always-on adaptation.

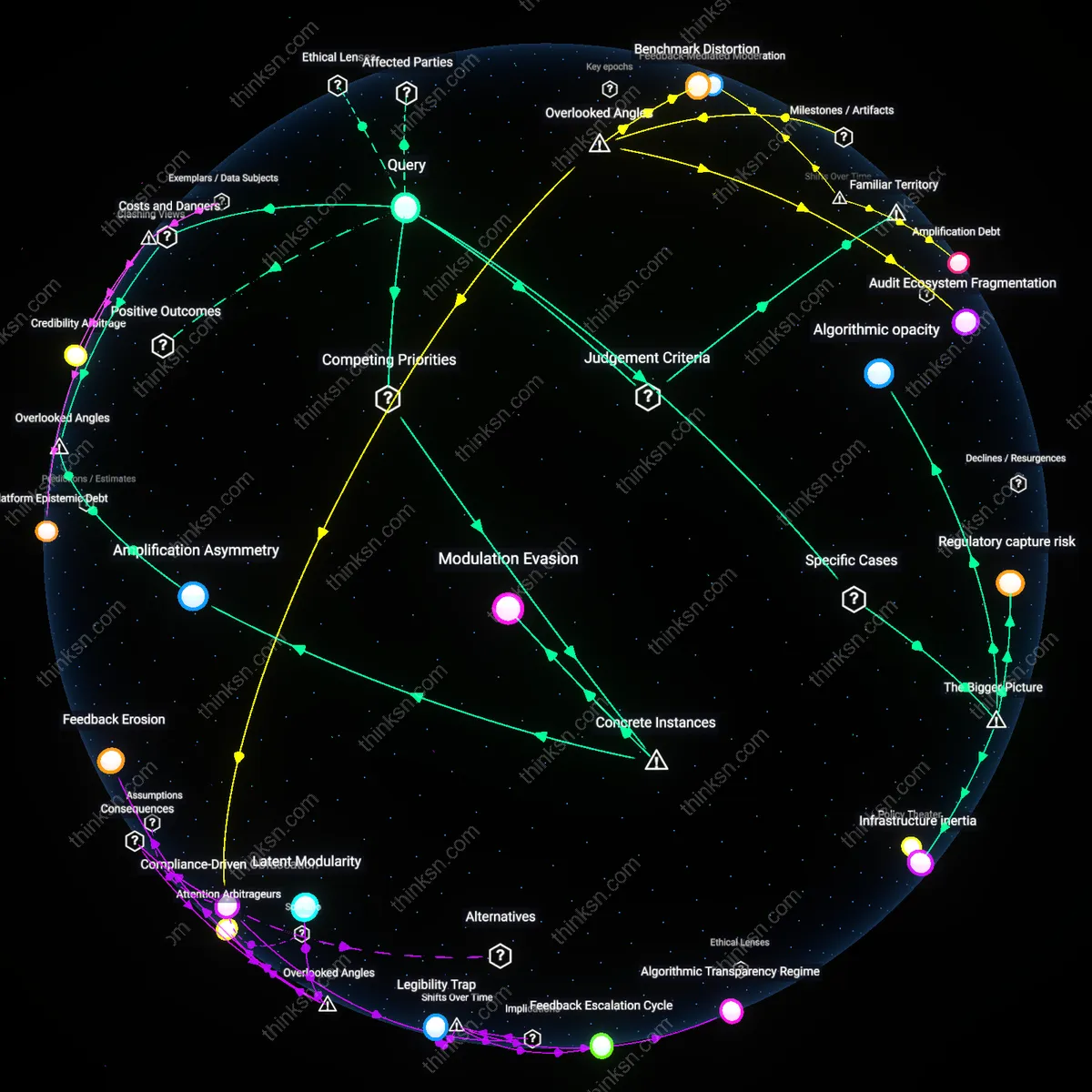

Inference Drift

Consumers ought to resist unregulated voice assistants. After 2016, the shift from rule-based automation to deep learning models detached algorithmic outcomes from user intent, allowing platforms to infer sensitive attributes, such as emotional state or health conditions, from mundane speech patterns. This inference drift turns voice data into a proxy for private traits that users never consented to disclose. It also shows how the erosion of transparency became systemic once the scale of training data outstripped human interpretability in model development.

Consent Lag

Consumers should treat voice assistant adoption as a time-bound decision. Between the early and late 2010s, voice assistants moved from standalone devices to interconnected smart ecosystems, and consent shifted from informed agreement to ongoing participation in opaque data flows. As voice platforms became central nodes in home networks, users’ ability to withdraw meaningful consent diminished, because their automated routines came to depend on these platforms. The result is consent lag: the growing delay between evolving data practices and users’ capacity to understand or object to them.

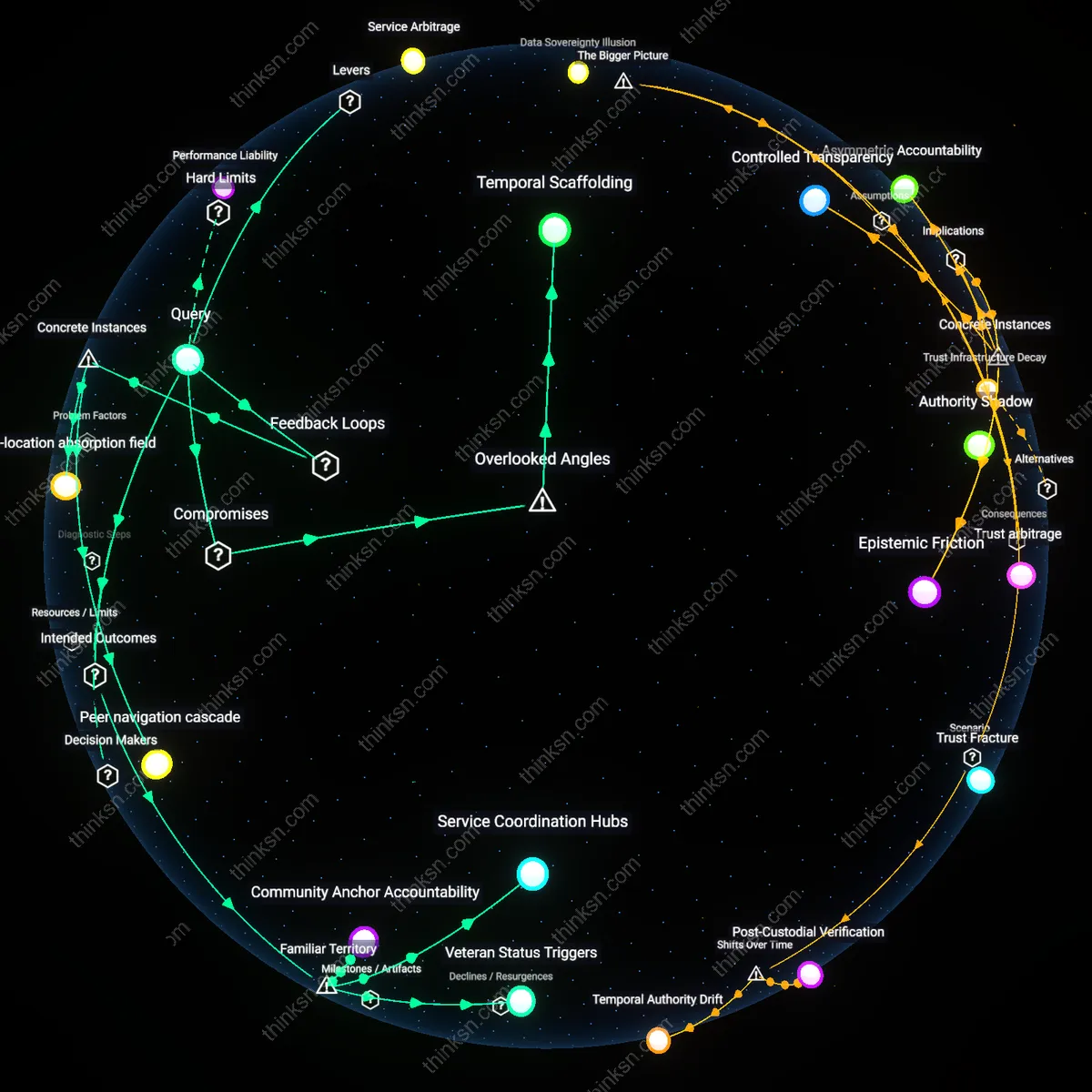

Behavioral Rent

Consumers should assess voice-activated assistants through the lens of behavioral rent, as demonstrated by Amazon’s Alexa, which monetizes routine interaction patterns through third-party skill prioritization. Amazon extracts value not from direct user payments but by steering user behavior toward preferred services, for example by promoting Amazon-owned or partnered skills in response to generic requests such as 'order toilet paper.' This creates an invisible tollgate on habitual use: user data trains algorithms that then steer future behavior. The primary cost is therefore not privacy loss per se but long-term behavioral enclosure and reduced autonomy. The non-obvious insight is that algorithmic opacity is not just a shield for vendors; it actively enables the commodification of user habits as a revenue stream.

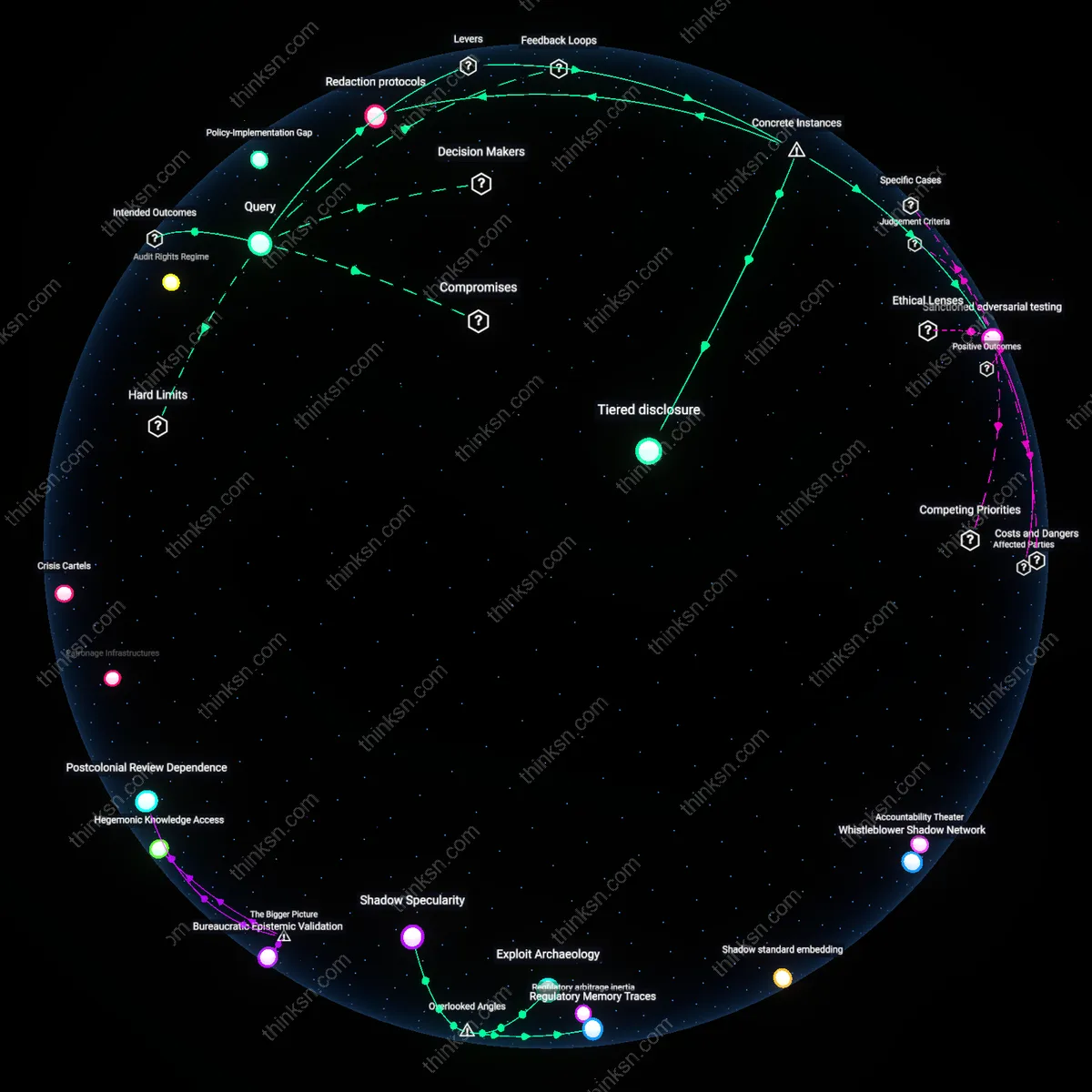

Institutional Fallback

Consumers should condition their trust in voice assistants on the strength of institutional fallbacks, as shown by the European Data Protection Board’s enforcement actions against Google Assistant in 2021 over insufficient just-in-time consent disclosures. The French data regulator CNIL, acting under EDPB coordination, mandated changes to how user data is processed during the retention of voice recordings because Google could not prove that users meaningfully understood how their queries trained backend models. Consumer decision-making therefore depends not only on personal risk tolerance but also on enforceable regulatory infrastructure that can correct behavioral learning systems after deployment. Opacity gaps are mitigated not by individual vigilance but by third-party accountability mechanisms with investigative jurisdiction.

Semantic Capture

Consumers should recognize that voice assistants may induce semantic capture, as occurred when United States federal agencies used Microsoft’s Cortana in internal pilots from 2019 to 2021. The assistant subtly reshaped employees’ understanding of procedural queries, for instance redefining 'urgent request' to align with the system’s response thresholds rather than existing policy codes. Over time, staff adjusted their wording and reporting categories to match the assistant’s interpretive logic, effectively adapting human judgment to machine inference. Low algorithmic transparency thus enables a quiet inversion of control, in which the need to anticipate system responses distorts human communication itself. The deeper risk is not data extraction but linguistic assimilation.