Is suspending accounts for hate speech without review silencing free expression?

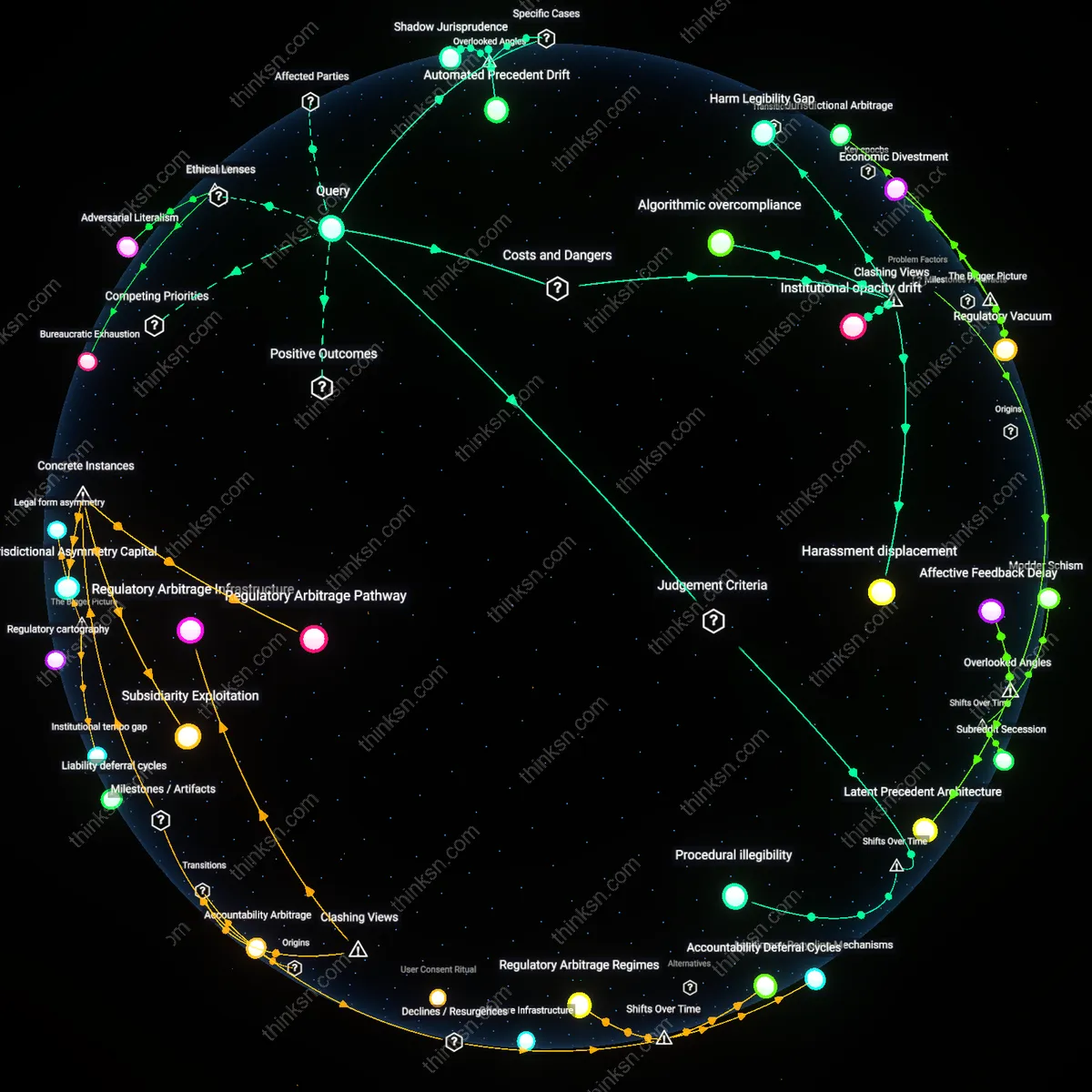

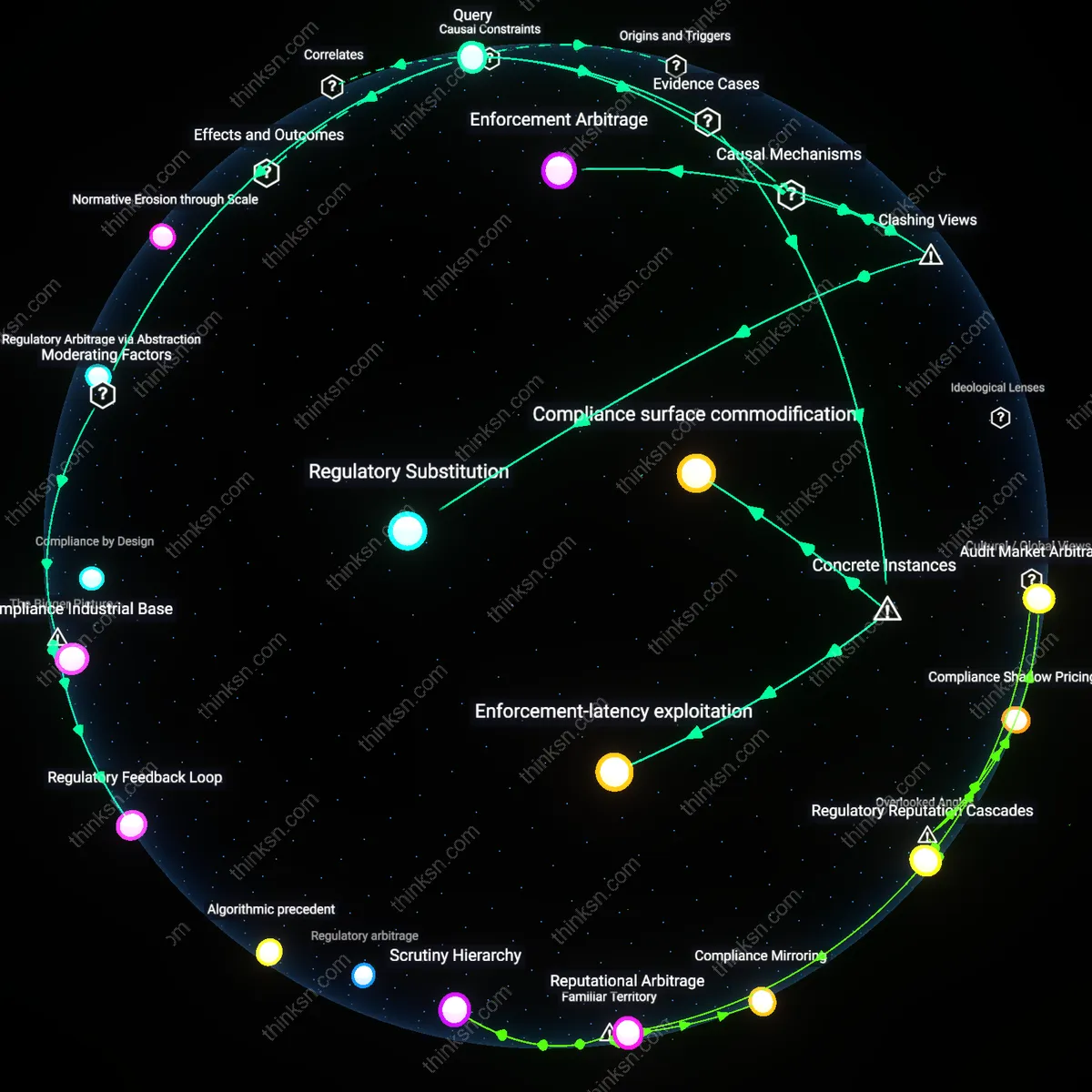

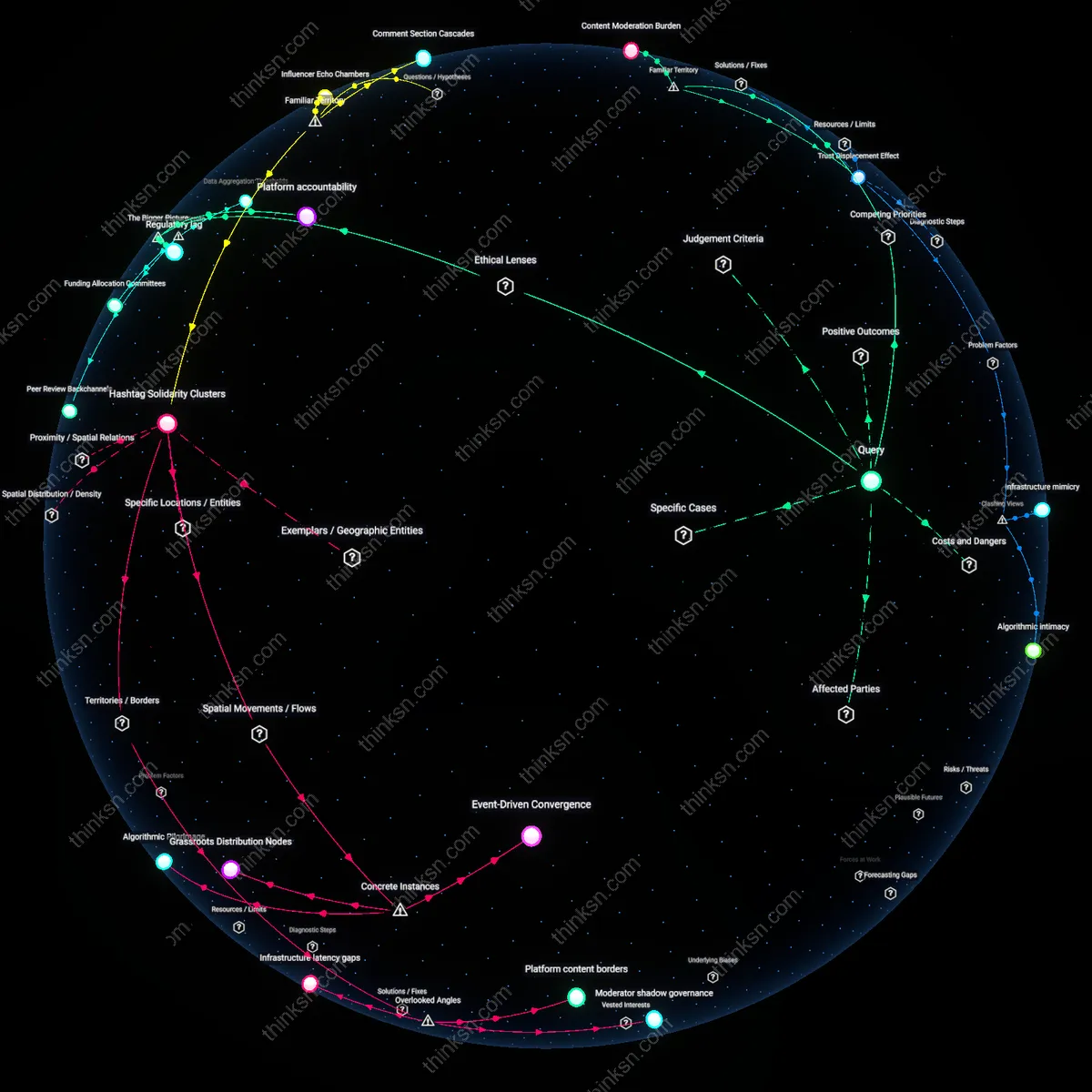

Analysis reveals 12 key thematic connections.

Key Findings

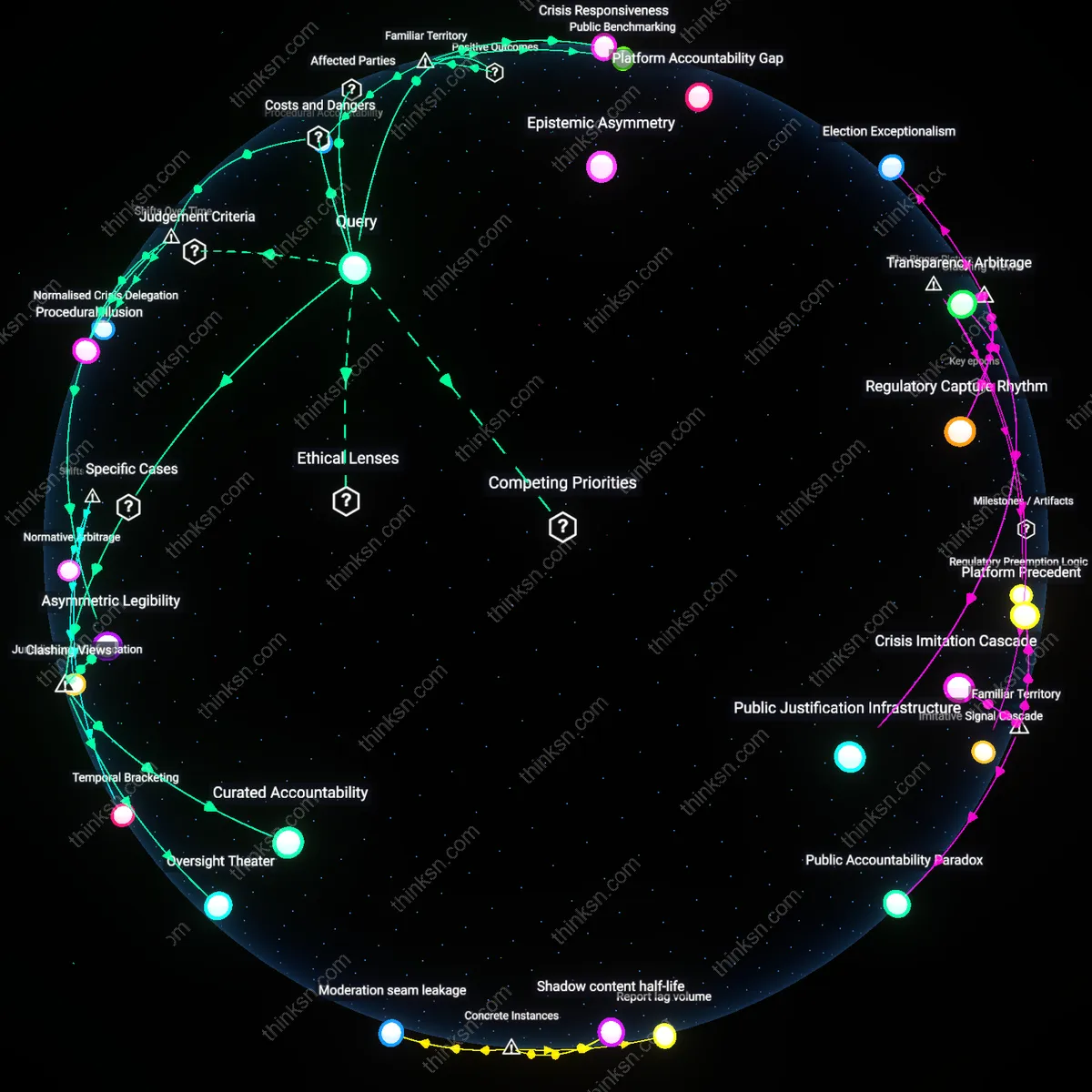

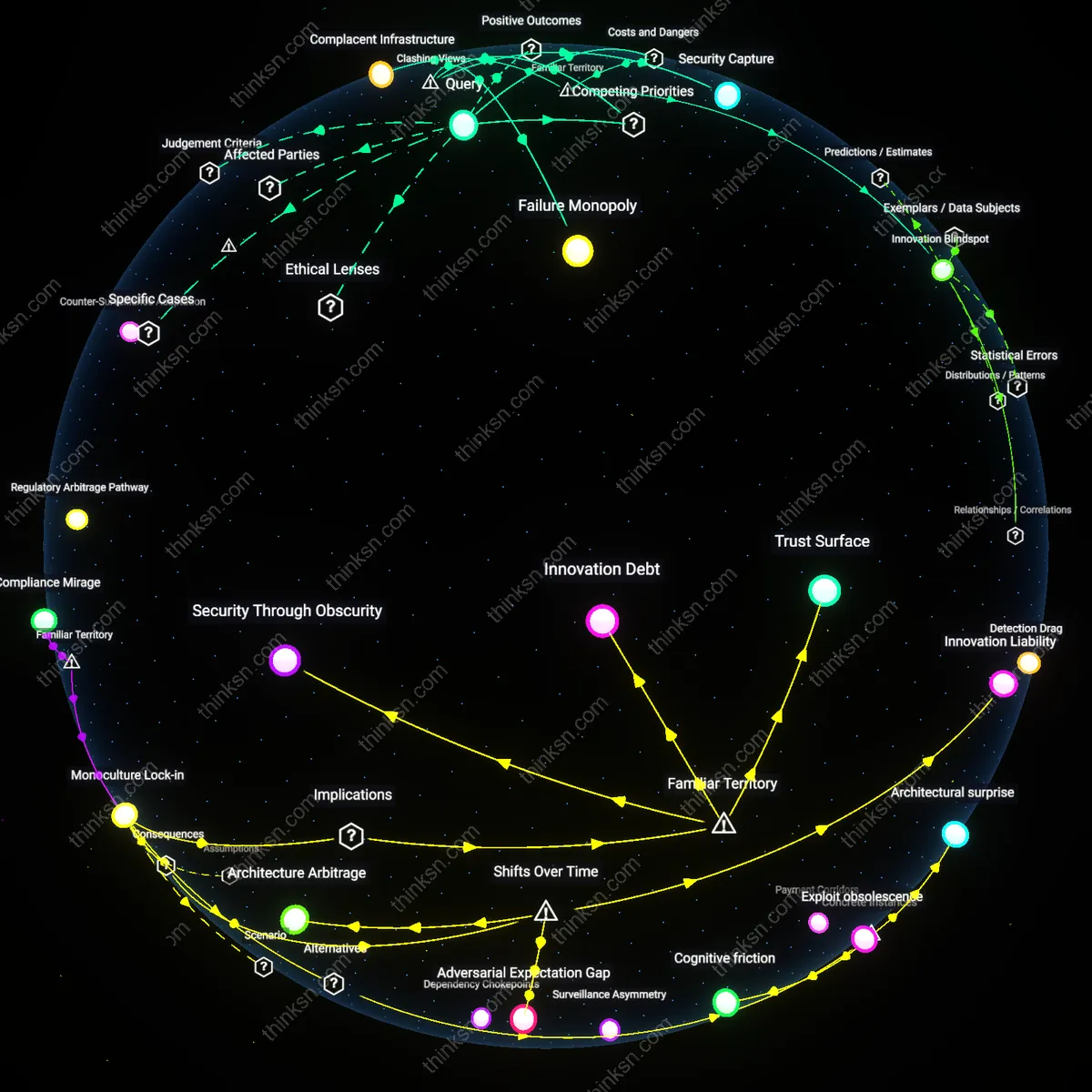

Risk spillover

Payment processors that suspend accounts for hate‑speech reports without transparent adjudication shift financial risk onto merchants, consumers, and downstream partners, violating the principle of equitable risk sharing that underpins market efficiency. When a merchant’s account is halted, the processor stops settling that merchant’s transactions, leaving the merchant to absorb unexpected liabilities and forcing consumers to wait or cancel orders. These hidden burden shifts create a spillover effect that harms the wider payment ecosystem, especially small businesses that cannot absorb such shocks. Because the market implicitly assumes participants bear their own costs, the processor’s opaque suspensions distort that assumption, undermining economic justice for innocent third parties.

Epistemic‑filter risk

The use of proprietary, opaque algorithms to flag hate speech before account suspension introduces an epistemic‑filter risk that erodes user trust and heightens the chilling effect on speech. Without public disclosure of the criteria or a verifiable audit trail, merchants cannot verify whether their content triggers the filter or adjust their behavior accordingly, leading to over‑caution in legitimate expression. This epistemic uncertainty also perpetuates a cycle where users fear misclassification, reducing engagement and hampering platform innovation. Because procedural justice requires users to understand the rationale behind punitive actions, the lack of transparency fails that core principle, making the practice ethically unsound.

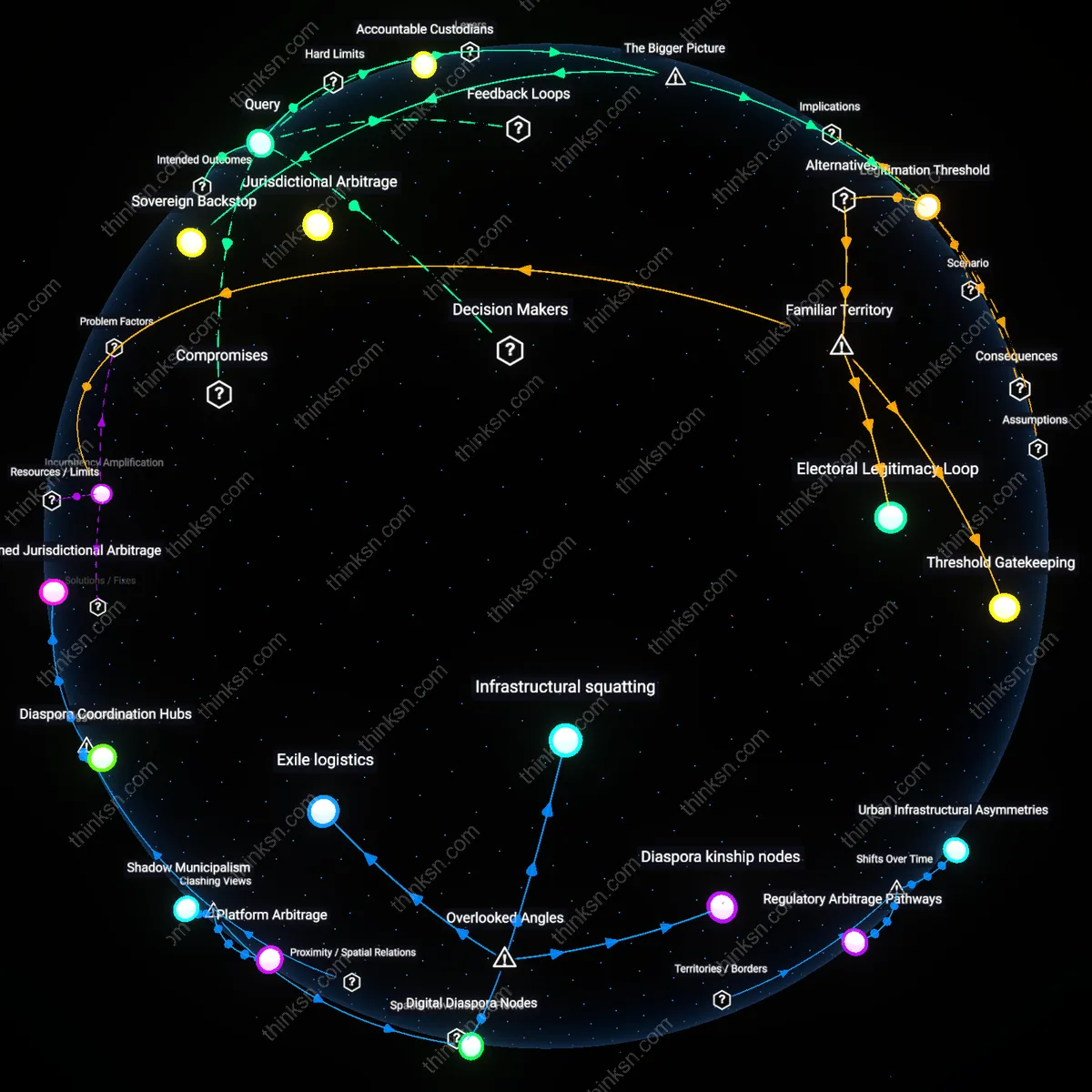

Gatekeeper virtue

If payment processors enforce account suspension for hate‑speech reports as a first‑line defense against financial abuse, and provide a transparent, evidence‑based policy plus a responsive appeal mechanism, the action can be ethically defensible under a public‑safety duty. Such processors can quickly halt the circulation of funds to actors who promote hate speech, preventing further harm to victims and protecting the integrity of the financial system. The policy’s transparency and appeal mechanism ensure that suspended parties can contest decisions, aligning the processor’s duty to prevent harm with the autonomy of the affected users. This balance highlights the neglected role of payment gateways as guardians of societal welfare, demonstrating that safeguards can coexist with expressive freedom when grounded in clear, accountable procedures.

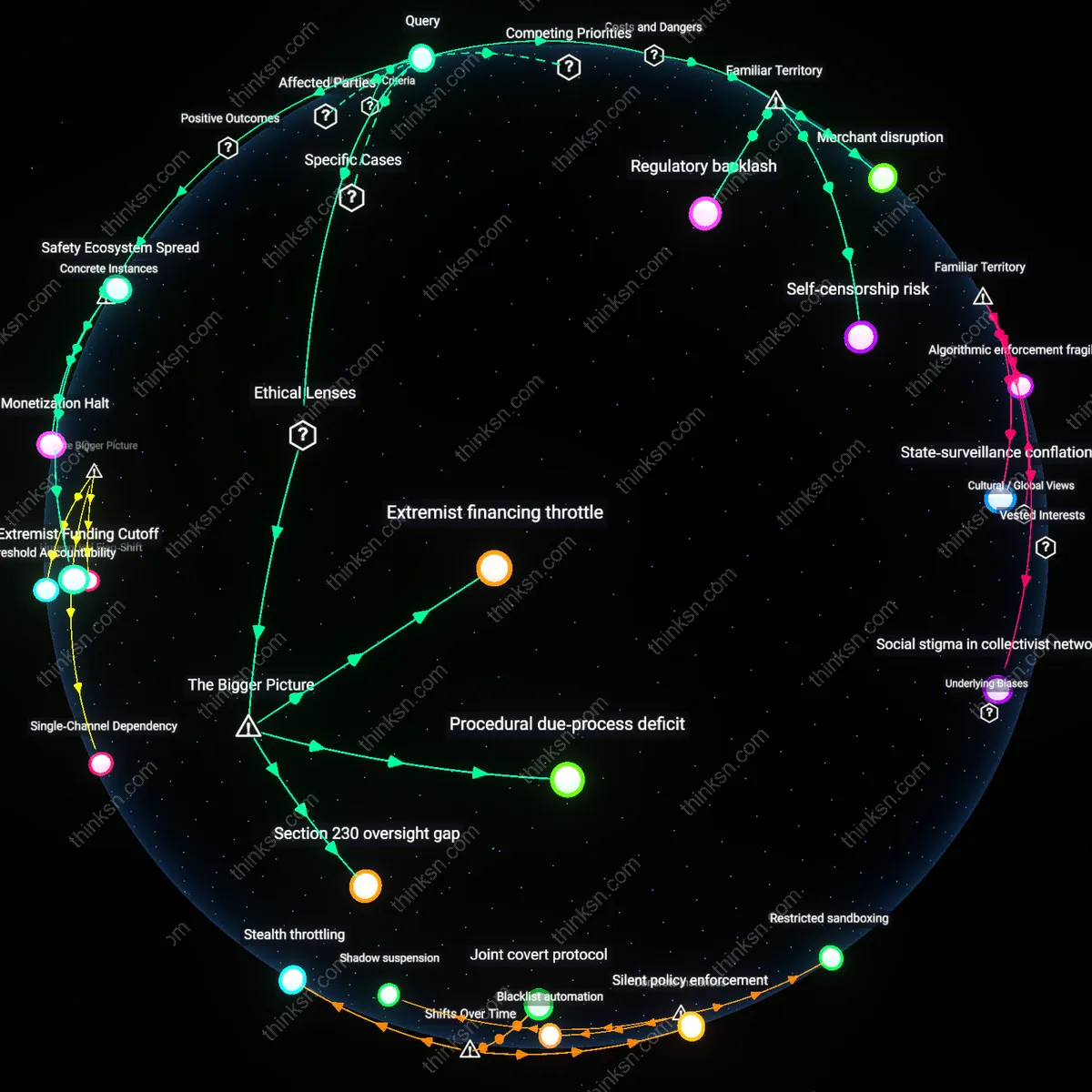

Extremist Funding Cutoff

PayPal suspended the accounts of the neo‑Nation Social Club (NSC) in 2015 after the group was identified as a white‑supremacist organization that used PayPal to purchase extremist literature and propaganda. By cutting off the funding channel that enabled the club to disseminate hate speech, the payment processor directly reduced the group's reach and influence online. The action demonstrates that suspending accounts without transparent adjudication can be ethically defensible when it serves a clear public‑safety goal, as the protection of vulnerable communities outweighs concerns about the chilling effect. This case illustrates how a single suspension can dismantle a funding pipeline that otherwise fuels hate speech.

Monetization Halt

Stripe blocked the merchant account of the online forum "Satanic Hellfire", a site that hosted extremist propaganda, in 2018 after the company detected policy‑violating content. The suspension halted the forum’s ability to collect payments from its subscribers and advertisers, which effectively ended its revenue stream and forced the site to shut down. By preventing monetization of hate speech, Stripe’s action achieved a constructive outcome that curbed the spread of extremist content, showing that suspensions can have positive societal effects even without detailed public adjudication. It highlights the role of payment processors in limiting the economic viability of hate‑speech platforms.

Safety Ecosystem Spread

PayPal’s 2015 policy to suspend accounts belonging to extremist organizations influenced European regulators to embed anti‑hate clauses in the Digital Services Act, prompting processors such as Square and Adyen to adopt stricter verification protocols. The resulting policy diffusion created a broader ecosystem of digital safety measures that extends beyond any single processor, preventing hate‑speech platforms from finding a foothold across multiple services. This chain of influence demonstrates that a suspension, even without transparent adjudication, can catalyze systemic change that mitigates the chilling effect on speech by reinforcing overall platform accountability. The positive utility lies in the ripple effect that strengthens societal protection against extremist content.

Merchant disruption

No, the practice is not ethically defensible because it imposes sudden, costly economic disruption on small merchants who rely on continuous payment processing, undermining their survival. Payment processors such as Stripe and Square often suspend accounts flagged in hate‑speech reports, cutting off revenue streams for restaurants, online retailers, and gig workers who have no alternative payment channel. This abrupt loss of liquidity threatens job security and competitiveness, highlighting how the economic cost to vulnerable businesses can outweigh the benefits of suppressing hateful content.

Self‑censorship risk

No, the practice is not ethically defensible because a lack of transparent adjudication fosters widespread self‑censorship, especially among politically marginalized communities, effectively chilling essential political discourse. Users who share strong, minority‑aligned viewpoints fear that their accounts may be suspended without clear justification, prompting them to dilute or erase content that could be deemed controversial. The resulting suppression of civic engagement erodes democratic deliberation, illustrating that the chilling effect overrides the moral imperative to limit hateful speech.

Regulatory backlash

No, the practice is not ethically defensible because opaque suspensions open payment processors to civil‑rights litigation and regulatory fines, imposing significant legal costs that outweigh the benefit of curbing hate speech. When processors like PayPal refuse to disclose decision criteria, affected users can file claims invoking the First Amendment and Equal Protection, compelling costly courtroom battles; regulators such as the FTC may issue cease‑and‑desist orders for violating non‑discrimination requirements. The resulting financial and reputational risks demonstrate that the legal burden of opaque suspensions can surpass any deterrent effect on hate propaganda.

Extremist financing throttle

Payment processors serve as de facto financial gatekeepers for extremist networks, so suspending accounts in response to hate‑speech reports aligns with a utilitarian calculus that prioritizes public safety over ambiguous free‑speech claims. However, the absence of transparent adjudication erodes the legitimacy of that safety net and risks a chilling effect on legitimate commerce.

Procedural due‑process deficit

Payment processors violate the deontological duty to procedural justice by suspending accounts without transparent adjudication, thereby inducing a chilling effect that conflicts with the moral imperative to uphold free speech. By assuming the role of gatekeepers yet lacking formal adjudicative safeguards, these processors hand over the power to censor to opaque corporate discretion. Such opacity contravenes the principle that liberty requires clear, predictable limits, and thus erodes trust among users and civil‑society advocates.

Section 230 oversight gap

Section 230 confers immunity on payment processors as non‑content providers, but suspending accounts without transparent adjudication sidesteps the statutory protection of free speech and introduces a regulatory blind spot. Because Section 230’s intent is to balance the economic ecosystem with user expression, opaque enforcement delegitimizes the very balance it seeks to preserve. Consequently, marginalized voices are disproportionately exposed to arbitrary suspensions, intensifying the chilling effect on the pluralist public sphere.