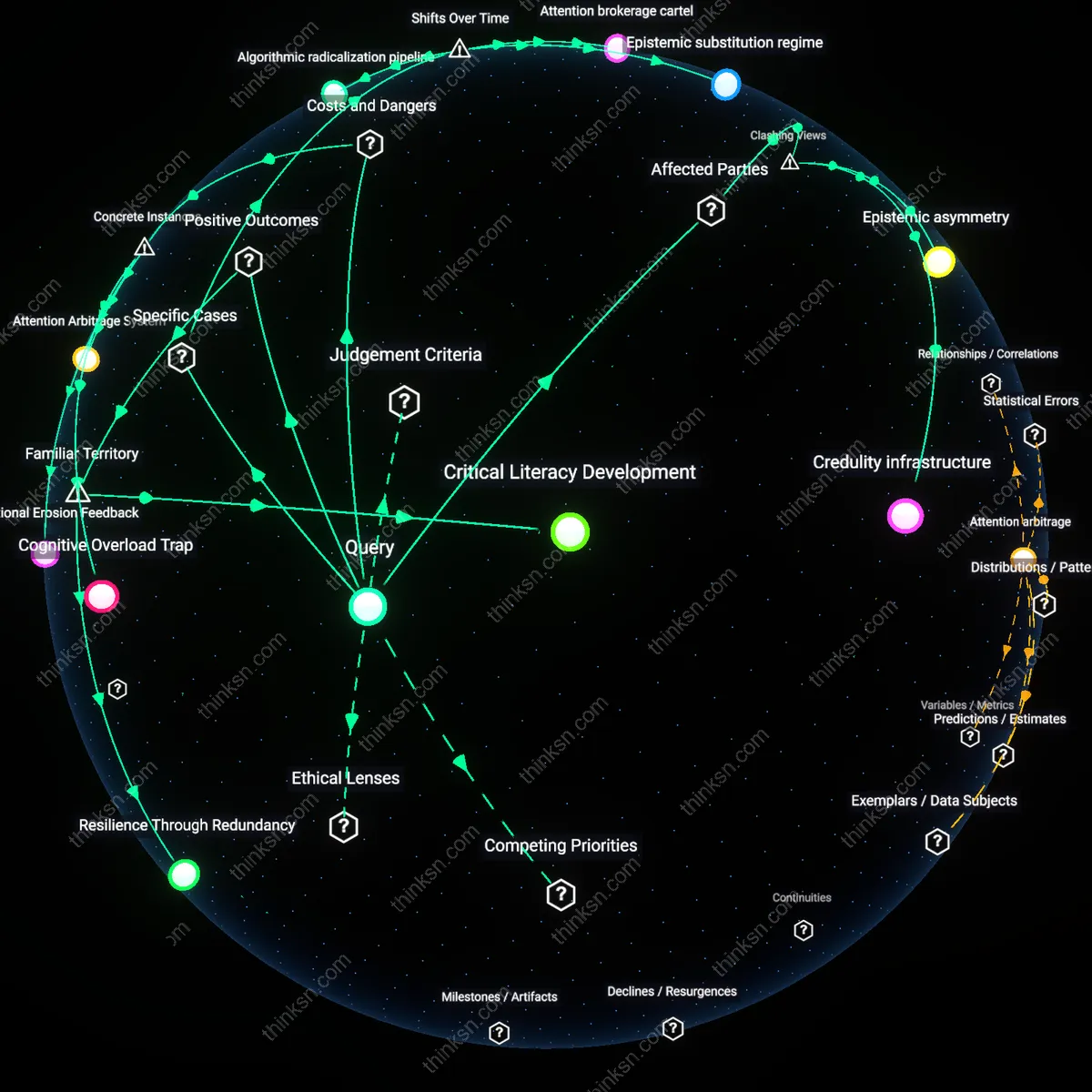

AI Literacy Training

By adopting the Poynter Institute's AI-Media Literacy Curriculum, Washington Post staff can actively interrogate AI headlines through hands-on exercises that expose algorithmic bias and common sensationalist patterns. The curriculum pairs actual GPT-3-generated headlines with a peer-review board that evaluates their plausibility, encouraging reporters to ask why particular phrasing was chosen and whether it matches the source material. The underappreciated effect is that the same analytic framework later serves as a template when journalists assess unfamiliar AI output, turning training into an ongoing investigative habit.

Label Transparency

By applying the EU AI Act's content-labeling requirements at Rai News, the news service of Italy's national broadcaster, newsroom workers see a conspicuous “AI-generated” header on every machine-generated headline, and that header triggers a procedural verification step. Rai News has built the EU guidelines into its content management system: an automated tag appears in article feeds and on the newsroom's editorial dashboard, and the editor must add a brief note on how the sources were verified. The surprising effect is that the label shifts skepticism from the content to the process, making humility a default step in the workflow.
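The label-plus-verification step can be pictured as a publishing gate in the CMS. This is a minimal Python sketch under assumed names: the `Article` record, its fields, `render_headline`, and the `can_publish` rule are hypothetical illustrations, not Rai News's actual CMS schema or wording taken from the EU AI Act.

```python
from dataclasses import dataclass

AI_LABEL = "AI-generated"


@dataclass
class Article:
    """Hypothetical CMS record; the field names are illustrative, not Rai News's schema."""
    headline: str
    ai_generated: bool
    verification_note: str = ""


def render_headline(article: Article) -> str:
    # Prefix the visible label to every AI-generated headline in feeds and dashboards.
    if article.ai_generated:
        return f"[{AI_LABEL}] {article.headline}"
    return article.headline


def can_publish(article: Article) -> bool:
    # AI-generated items stay blocked until the editor records a non-empty
    # note describing how the sources were verified.
    if not article.ai_generated:
        return True
    return bool(article.verification_note.strip())


draft = Article("Rome approves new metro line", ai_generated=True)
print(render_headline(draft))  # [AI-generated] Rome approves new metro line
print(can_publish(draft))      # False
draft.verification_note = "Checked against the city council's press release"
print(can_publish(draft))      # True
```

Putting the check in the publish path, rather than in a style guide, is what turns the label into a procedural step.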

API Moderation Flagging

By integrating OpenAI's Moderation API into the Associated Press's CMS, reporters can instantly receive per-category risk scores and a simple flag for headlines that touch on harmful-content categories such as hate, harassment, or violence, prompting them to dig deeper. Because the Moderation API classifies harmful content rather than estimating whether text is machine-generated, the AI-likelihood percentage and supplementary confidence metrics on the AP's internal dashboard would have to come from a separate detection model; together, these signals let editors check context within a few clicks, turning an autonomous AI tool into a prompt for questions. What is underappreciated is that logging every score alongside the threshold the newsroom applies embeds an audit trail directly into the workflow, making each headline's origin traceable and its handling accountable.
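The Moderation API returns, for each input, a boolean `flagged` plus per-category `category_scores` for harmful content; it does not score how "AI-like" a headline is. The Python sketch below assumes the response from the `/v1/moderations` endpoint has already been fetched and parsed into a dict, and shows one way a CMS might turn it into a dashboard flag that doubles as an audit record. `build_flag_record`, `REVIEW_THRESHOLD`, and the record fields are hypothetical newsroom choices, not part of the API, and the sample scores are made up.

```python
import json
from datetime import datetime, timezone

# Hypothetical newsroom threshold, not an OpenAI default.
REVIEW_THRESHOLD = 0.5


def build_flag_record(headline: str, moderation_response: dict,
                      threshold: float = REVIEW_THRESHOLD) -> dict:
    """Turn a parsed Moderation API response into a dashboard and audit record."""
    result = moderation_response["results"][0]
    scores = result["category_scores"]
    # The highest-scoring category gives editors a one-line summary.
    top_category = max(scores, key=scores.get)
    over = sorted(name for name, score in scores.items() if score >= threshold)
    return {
        "headline": headline,
        "model": moderation_response.get("model"),
        "api_flagged": result["flagged"],
        "top_category": top_category,
        "top_score": scores[top_category],
        # Storing the threshold with the scores makes the decision auditable later.
        "threshold": threshold,
        "categories_over_threshold": over,
        "needs_review": result["flagged"] or bool(over),
        "logged_at": datetime.now(timezone.utc).isoformat(),
    }


# Illustrative response shape with made-up scores (not real API output).
sample = {
    "id": "modr-example",
    "model": "omni-moderation-latest",
    "results": [{
        "flagged": False,
        "categories": {"hate": False, "violence": False},
        "category_scores": {"hate": 0.02, "violence": 0.61},
    }],
}
record = build_flag_record("Protesters clash with police downtown", sample)
print(json.dumps(record, indent=2))
```

Using a newsroom threshold in addition to the API's own `flagged` value lets editors tighten review without waiting for the provider to change its defaults.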

AI Literacy Apprenticeship

Require every worker's mandatory occupational safety training to include a literacy module on AI-generated headlines, turning compliance training into practice in frontline media scrutiny. The module would embed short, scenario-based lessons in which workers dissect AI headlines for bias and factual accuracy, delivered within the same OSHA or HR compliance frameworks that already mandate checklists and refresher courses, so it reuses established instructor-led or e-learning infrastructure. The approach builds on the 2000–2010 shift from manual apprenticeship to digitally certified skill sets: it makes skepticism a regulated safety component rather than an optional soft skill, a connection often overlooked in discussions of media literacy.

Browser Verification Extension

Integrate a lightweight AI-verification plug-in into the corporate web browser that automatically flags and annotates AI headlines employees encounter on internal and external sites. The plug-in draws on the browser-extension ecosystem that grew after the 2010s shift from isolated news sites to single-login corporate intranets, providing real-time source trails and bias alerts within the employee's workflow. By building on existing IT security policies and the move to unified knowledge portals, it turns passive consumption into active interrogation, a nuance of the browser-extension era that media-criticism strategies rarely highlight.
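A real extension would be written in JavaScript against the browser's extension APIs; the Python sketch below only illustrates the annotation logic such a plug-in might run on each page. The `SOURCE_REGISTRY`, its placeholder domains and notes, and the `ai_disclosed` input (assumed to be read from a publisher's on-page label) are all hypothetical.

```python
from typing import Optional
from urllib.parse import urlparse

# Hypothetical registry maintained by IT; domains and notes are placeholders.
SOURCE_REGISTRY = {
    "intranet.example.com": "Internal portal: check the author field for AI disclosure",
    "news.example.org": "External outlet: labels AI-assisted stories",
}


def lookup_source(host: str) -> Optional[str]:
    # Walk up the domain so "a.news.example.org" matches "news.example.org",
    # stopping before the bare top-level domain.
    parts = host.split(".")
    for i in range(len(parts) - 1):
        candidate = ".".join(parts[i:])
        if candidate in SOURCE_REGISTRY:
            return SOURCE_REGISTRY[candidate]
    return None


def annotate(url: str, headline: str, ai_disclosed: bool) -> dict:
    host = (urlparse(url).hostname or "").lower()
    note = lookup_source(host)
    alerts = []
    if note is None:
        alerts.append("Unknown source: trace the original reporting")
    if ai_disclosed:
        alerts.append("Publisher marks this headline as AI-generated")
    return {"headline": headline, "source": host, "source_note": note, "alerts": alerts}


print(annotate("https://a.news.example.org/story/42", "Markets rally", ai_disclosed=True))
```

Keeping the registry under IT control lets the plug-in follow existing security policy rather than each employee's judgment about which sites to trust.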

AI Transparency Standard

Mandate that any AI system used for headline generation produce an audit trail and source metadata, enforced by a new federal AI Transparency Regulation. This builds on the 2016 election-interference turning point, which exposed how algorithmically amplified misinformation spreads and created legislative impetus for source accountability; the rule would be implemented by the FTC and FCC adopting AI content-disclosure requirements under which headlines display provenance logos and link to verifiable data feeds. The regulatory shift from opaque algorithmic black boxes to publicly auditable models suggests that workers question headlines more readily when the system itself is obliged to be transparent, turning passive trust into active verification.
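"Verifiable data feeds" can be made concrete with a content hash: the generator records a digest of the source text it used, and anyone can re-fetch the source and check that it matches. This Python sketch assumes SHA-256 over UTF-8 text; the `ProvenanceRecord` fields, `make_record`, and `verify` are hypothetical and are not drawn from any FTC or FCC rule.

```python
import hashlib
import json
from dataclasses import asdict, dataclass


def sha256_hex(text: str) -> str:
    return hashlib.sha256(text.encode("utf-8")).hexdigest()


@dataclass(frozen=True)
class ProvenanceRecord:
    """Hypothetical disclosure record; field names are illustrative only."""
    headline: str
    model_id: str
    source_url: str
    source_digest: str  # SHA-256 of the source text the headline was generated from


def make_record(headline: str, model_id: str, source_url: str, source_text: str) -> ProvenanceRecord:
    return ProvenanceRecord(headline, model_id, source_url, sha256_hex(source_text))


def verify(record: ProvenanceRecord, fetched_source_text: str) -> bool:
    # A reader re-fetches the source and checks it is the same text the model saw.
    return sha256_hex(fetched_source_text) == record.source_digest


rec = make_record("Council passes budget", "headline-model-v2",
                  "https://example.gov/minutes", "Minutes of the 3 March session ...")
print(json.dumps(asdict(rec), indent=2))
print(verify(rec, "Minutes of the 3 March session ..."))  # True
print(verify(rec, "Minutes of the 3 March session (edited)"))  # False
```

A hash proves only that the source is unchanged, not that the headline summarizes it fairly; that judgment still falls to the worker.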

Learning‑cycle loop

Embedding media-literacy modules in knowledge workers' standard continuing-learning path creates a reinforcing loop: each training cycle strengthens critical appraisal of AI headlines, which reduces acceptance of misleading content and reinforces new informational norms that circulate within teams. HR, L&D, and executive leadership must tie these modules to performance metrics so the skill carries over into real headline evaluation. The underappreciated factor is that existing continuing professional development (CPD) frameworks already provide a stable conduit for skill diffusion, so the initiative works within an established balancing loop, the corporate learning budget, while scaling the reinforcing effect.

Peer‑challenge loop

Monthly peer-review contests in which employees critique AI headlines on a gamified platform create a peer-challenge loop: social rewards normalize scrutiny and cultivate a reinforcing minority of skeptics who spread warning signals across the company. Managers and internal communications departments run the contests, offering badges and leaderboards, while peer networking sessions turn competitive findings into broader informational norms. The balancing loop of novelty fatigue is countered by tying contest outcomes to professional development credits, which sustains engagement.

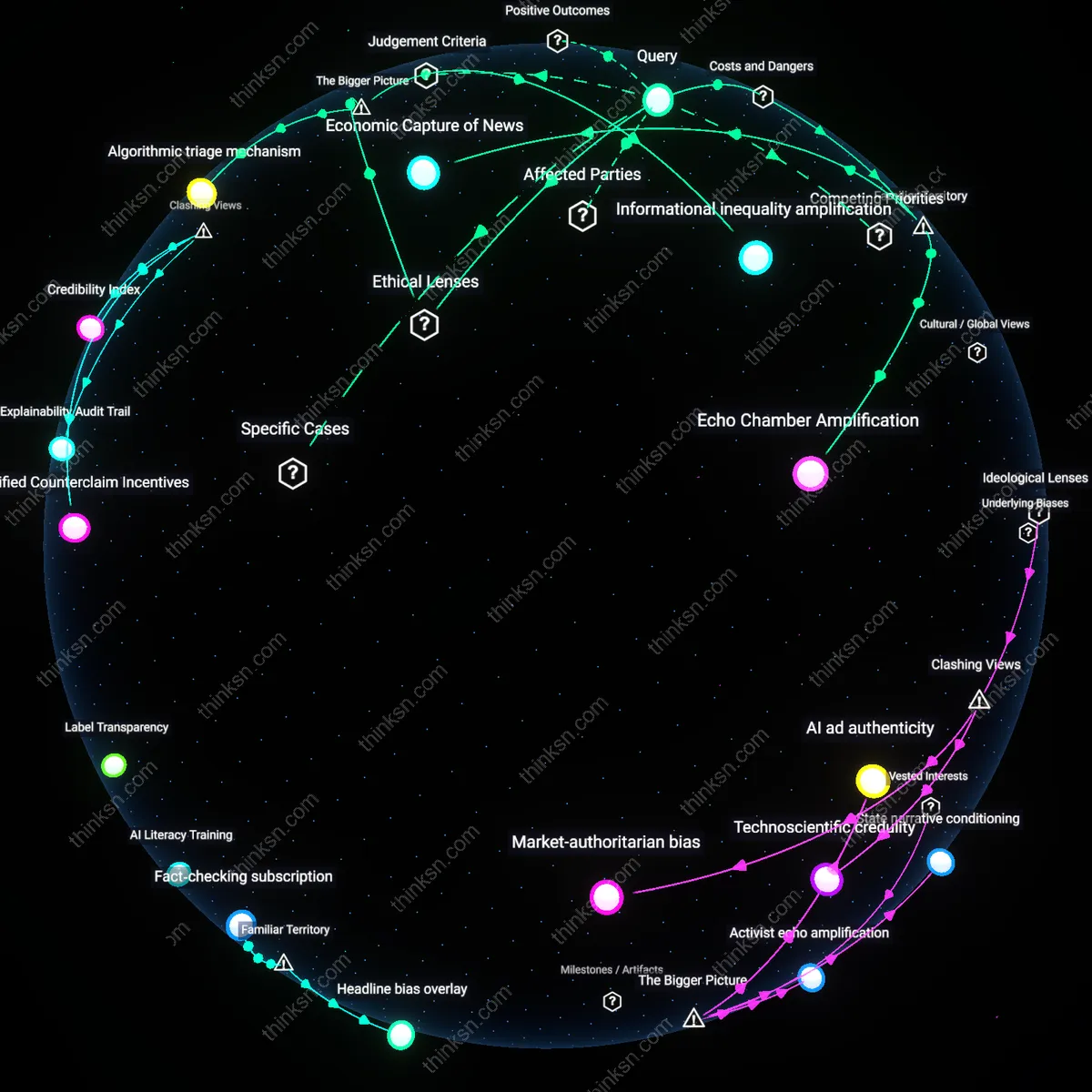

Explainability Audit Trail

Corporate AI governance boards must require every generative AI headline to carry a machine-readable explainability audit trail that workers can edit. The audit trail records the model version, the data lineage, and the minimal justification the model produced, and it is surfaced in the internal collaboration platform where employees draft reports. Because workers must consult this metadata before trusting a headline, they develop a habit of questioning rather than accepting, and the ability to edit the audit trail lets them send corrections back upstream. This structural requirement contrasts with the prevailing view that only training improves critical reading, suggesting that mandated transparency can change behavior as well.
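An audit trail that workers can edit and that still works as an audit trail needs every edit to be recorded rather than overwritten. The Python sketch below assumes that design: edits are appended to a history before the value changes, and only some fields are editable. `AuditTrail`, `Edit`, `EDITABLE_FIELDS`, and the sample values are hypothetical illustrations, not a standard schema.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone
from typing import List

# Hypothetical policy: workers may correct these fields; model version and lineage stay fixed.
EDITABLE_FIELDS = ("headline", "justification")


@dataclass
class Edit:
    editor: str
    field_name: str
    old_value: str
    new_value: str
    reason: str
    at: str


@dataclass
class AuditTrail:
    """Hypothetical explainability record attached to one generated headline."""
    headline: str
    model_version: str
    data_lineage: List[str]
    justification: str
    history: List[Edit] = field(default_factory=list)

    def edit(self, editor: str, field_name: str, new_value: str, reason: str) -> None:
        if field_name not in EDITABLE_FIELDS:
            raise ValueError(f"{field_name!r} is not editable")
        old_value = getattr(self, field_name)
        # Append to the history before changing the value, so the original is never lost.
        self.history.append(Edit(editor, field_name, old_value, new_value, reason,
                                 datetime.now(timezone.utc).isoformat()))
        setattr(self, field_name, new_value)

    def upstream_report(self) -> List[str]:
        # One line per correction, for the team that maintains the model.
        return [f"{e.editor} changed {e.field_name}: {e.reason}" for e in self.history]


trail = AuditTrail(
    headline="Stocks plunge on rate fears",
    model_version="headline-model-v3",
    data_lineage=["wire:markets-0412"],
    justification="Summarized the lead paragraph",
)
trail.edit("j.doe", "headline", "Stocks dip on rate fears", "Source describes a modest decline")
print(trail.headline)
print(trail.upstream_report())
```

The `upstream_report` output is the "communicate errors back upstream" channel: each line names who corrected what and why.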

Gamified Counterclaim Incentives

Performance committees should implement a points-based incentive scheme that rewards employees who expose factual inaccuracies in AI-generated headlines. An internal verification task force confirms each detection, and points translate into quarterly bonus adjustments and public recognition on a leaderboard. By tying skepticism to tangible rewards, the scheme leads workers to see critical analysis as a competitive advantage rather than a procedural duty. This challenges the intuitive notion that informal policy nudges are enough, suggesting that economic incentives can sustain skepticism over time.
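The scoring rules can be made explicit in a few lines. This Python sketch assumes two severity levels, credits only task-force-verified detections, counts each employee once per headline, and maps points to a capped bonus percentage; the point values, bonus rate, cap, and all names are hypothetical, since the scheme above does not specify them.

```python
from collections import Counter
from dataclasses import dataclass
from typing import List, Tuple

# Hypothetical point values and bonus terms; the scheme does not specify any.
POINTS = {"minor": 1, "major": 3}
BONUS_PCT_PER_POINT = 0.5
BONUS_CAP_PCT = 5.0


@dataclass
class Detection:
    employee: str
    headline_id: str
    severity: str   # "minor" or "major"
    verified: bool  # set by the verification task force


def tally(detections: List[Detection]) -> Counter:
    scores: Counter = Counter()
    seen = set()
    for d in detections:
        key = (d.employee, d.headline_id)
        # Only verified reports count, and each employee is credited once per headline.
        if not d.verified or key in seen:
            continue
        seen.add(key)
        scores[d.employee] += POINTS[d.severity]
    return scores


def leaderboard(scores: Counter) -> List[Tuple[str, int]]:
    # Highest score first; ties are broken alphabetically for a stable display.
    return sorted(scores.items(), key=lambda kv: (-kv[1], kv[0]))


def bonus_adjustment_pct(points: int) -> float:
    # Linear bonus with a cap, so the incentive cannot dominate pay.
    return min(points * BONUS_PCT_PER_POINT, BONUS_CAP_PCT)


reports = [
    Detection("ana", "h1", "major", True),
    Detection("ana", "h1", "major", True),   # duplicate report, ignored
    Detection("ben", "h2", "minor", True),
    Detection("ben", "h3", "major", False),  # rejected by the task force
    Detection("cy", "h4", "minor", True),
]
board = leaderboard(tally(reports))
print(board)  # [('ana', 3), ('ben', 1), ('cy', 1)]
print([(name, bonus_adjustment_pct(pts)) for name, pts in board])
```

The cap is a deliberate design choice: without it, the scheme could reward volume of reports over their quality.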

Credibility Index

Labor union representatives must negotiate with AI vendors to institutionalize a worker-verified credibility index that rates headlines on a public transparency dashboard. Unions convene worker panels to audit each headline, scoring it on timeliness, precision, and source diversity, while vendors grant access to internal logs so the scores can be validated. The published index pressures vendors to adjust their headline-generation parameters promptly and signals to workers that scrutiny carries institutional weight. This challenges the assumption that vendors alone can verify AI content, emphasizing collective accountability.
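One simple way to turn panel scores into a single index is to average each dimension across panelists and then take a weighted sum. This Python sketch assumes a 0-10 scale and equal weights; both, along with `credibility_index` and the sample scores, are hypothetical, since the actual scale and weighting would be part of the union-vendor negotiation.

```python
from statistics import mean
from typing import Dict, List

DIMENSIONS = ("timeliness", "precision", "source_diversity")
# Hypothetical equal weights; the real weighting would be negotiated.
WEIGHTS = {"timeliness": 1 / 3, "precision": 1 / 3, "source_diversity": 1 / 3}


def credibility_index(panel_scores: List[Dict[str, float]]) -> Dict[str, float]:
    """Average each dimension across panelists (0-10 scale), then combine with WEIGHTS."""
    if not panel_scores:
        raise ValueError("at least one panelist score is required")
    result = {d: float(mean(s[d] for s in panel_scores)) for d in DIMENSIONS}
    result["index"] = sum(WEIGHTS[d] * result[d] for d in DIMENSIONS)
    return result


panel = [
    {"timeliness": 8, "precision": 6, "source_diversity": 4},
    {"timeliness": 6, "precision": 8, "source_diversity": 2},
]
print(credibility_index(panel))
```

Publishing the per-dimension averages alongside the combined index shows vendors which parameter to adjust, for example that source diversity, not timeliness, is dragging a headline down.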

Headline bias overlay

Integrate a real‑time AI‑driven fact‑check overlay in newsroom email clients to let reporters spot headline bias instantly.

The overlay scans incoming headlines and flags linguistic patterns that correlate with sensationalism or misrepresentation, prompting the journalist to re-evaluate before forwarding. Reporters, editors, and the IT department collaborate to calibrate the algorithm so it reflects editorial standards while remaining responsive. The upside is speed, since feedback is instant, but the trade-off is a growing dependence on an automated signal that may subtly erode independent scrutiny.
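The pattern-flagging step can be sketched with regular expressions. This is a hand-written heuristic standing in for the AI-driven model the overlay describes, not a trained classifier: the pattern families, the phrases in them, and `flag_headline` are hypothetical, and calibrating them is exactly the job the paragraph assigns to reporters, editors, and IT.

```python
import re
from typing import Dict, List

# Hypothetical pattern list: a hand-written heuristic standing in for a trained model.
PATTERNS = {
    "superlative": re.compile(r"\b(worst|best|biggest|most shocking)\b", re.IGNORECASE),
    "clickbait_phrase": re.compile(
        r"\b(you won't believe|what happened next|this one trick)\b", re.IGNORECASE),
    "absolute_claim": re.compile(r"\b(always|never|everyone|no one)\b", re.IGNORECASE),
    "loaded_verb": re.compile(r"\b(slams?|destroys?|blasts?)\b", re.IGNORECASE),
    "excess_punctuation": re.compile(r"[!?]{2,}"),
}


def flag_headline(headline: str, min_hits: int = 1) -> Dict[str, object]:
    # Collect every pattern family that fires; editors tune min_hits and the patterns.
    hits: List[str] = sorted(
        name for name, pattern in PATTERNS.items() if pattern.search(headline))
    return {"headline": headline, "hits": hits, "flagged": len(hits) >= min_hits}


for h in ["You won't believe what this senator said!!",
          "Senator slams budget plan",
          "City council approves 2025 budget"]:
    print(flag_headline(h))
```

Returning the names of the patterns that fired, rather than a bare score, keeps the reporter's attention on the wording itself, which partly offsets the dependence risk noted above.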

Fact‑checking subscription

Provide all workers with a low-cost fact-checking subscription, paid up front, whose automated summaries highlight the most contested facts and so reduce uncritical acceptance of AI headlines.

Workers receive daily digests that summarize findings from reputable fact-checking services and automatically tag headlines that conflict with established evidence. The finance team negotiates a tiered subscription with providers such as Snopes or PolitiFact, while the IT department embeds the summaries in existing collaboration tools. The method strengthens headline scrutiny but creates a recurring budget commitment, illustrating the tension between fiscal savings and the sustained investment that rigorous scrutiny requires.
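The automatic tagging step needs some way to match a headline against fact-checked claims. The Python sketch below uses plain token overlap (Jaccard similarity) as a deliberately crude stand-in; a production system would need more robust matching, and many fact-checkers publish structured schema.org `ClaimReview` markup that could serve as a cleaner input. The digest format, rating labels, threshold, provider names, and all function names are hypothetical.

```python
import re
from dataclasses import dataclass
from typing import List, Set

# Hypothetical digest format; ratings vary by provider and would need mapping.
CONTESTED_RATINGS = {"false", "mostly false", "mixed"}
STOPWORDS = {"the", "a", "an", "of", "to", "in", "on", "and", "is", "for", "with"}


@dataclass
class FactCheck:
    claim: str
    rating: str
    provider: str


def tokens(text: str) -> Set[str]:
    return {w for w in re.findall(r"[a-z0-9']+", text.lower()) if w not in STOPWORDS}


def jaccard(a: Set[str], b: Set[str]) -> float:
    union = a | b
    return len(a & b) / len(union) if union else 0.0


def tag_headline(headline: str, checks: List[FactCheck], threshold: float = 0.4) -> List[FactCheck]:
    """Return contested fact-checks whose claim overlaps the headline, best match first."""
    head = tokens(headline)
    scored = []
    for check in checks:
        if check.rating.lower() not in CONTESTED_RATINGS:
            continue
        score = jaccard(head, tokens(check.claim))
        if score >= threshold:
            scored.append((score, check))
    scored.sort(key=lambda pair: pair[0], reverse=True)
    return [check for _, check in scored]


digest = [
    FactCheck("Vaccine X causes memory loss", "false", "provider-a"),
    FactCheck("City budget doubles transit spending", "true", "provider-b"),
]
for match in tag_headline("New study: vaccine X causes memory loss in adults", digest):
    print(f"Contested ({match.rating}, {match.provider}): {match.claim}")
```

Only claims rated as contested are considered, so a headline that merely repeats a verified claim is left untagged.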