Is YouTube Educating or Distracting Your Teen?

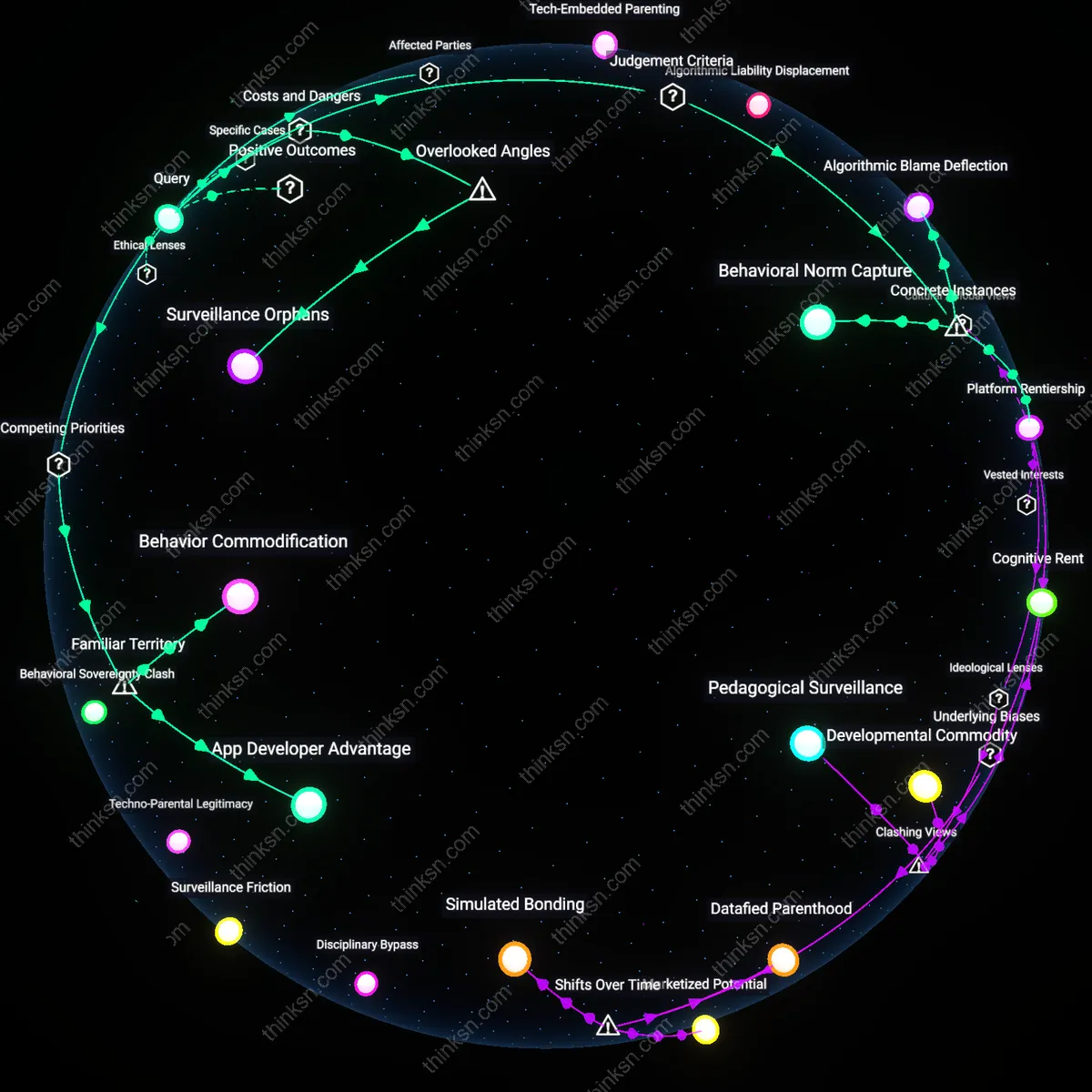

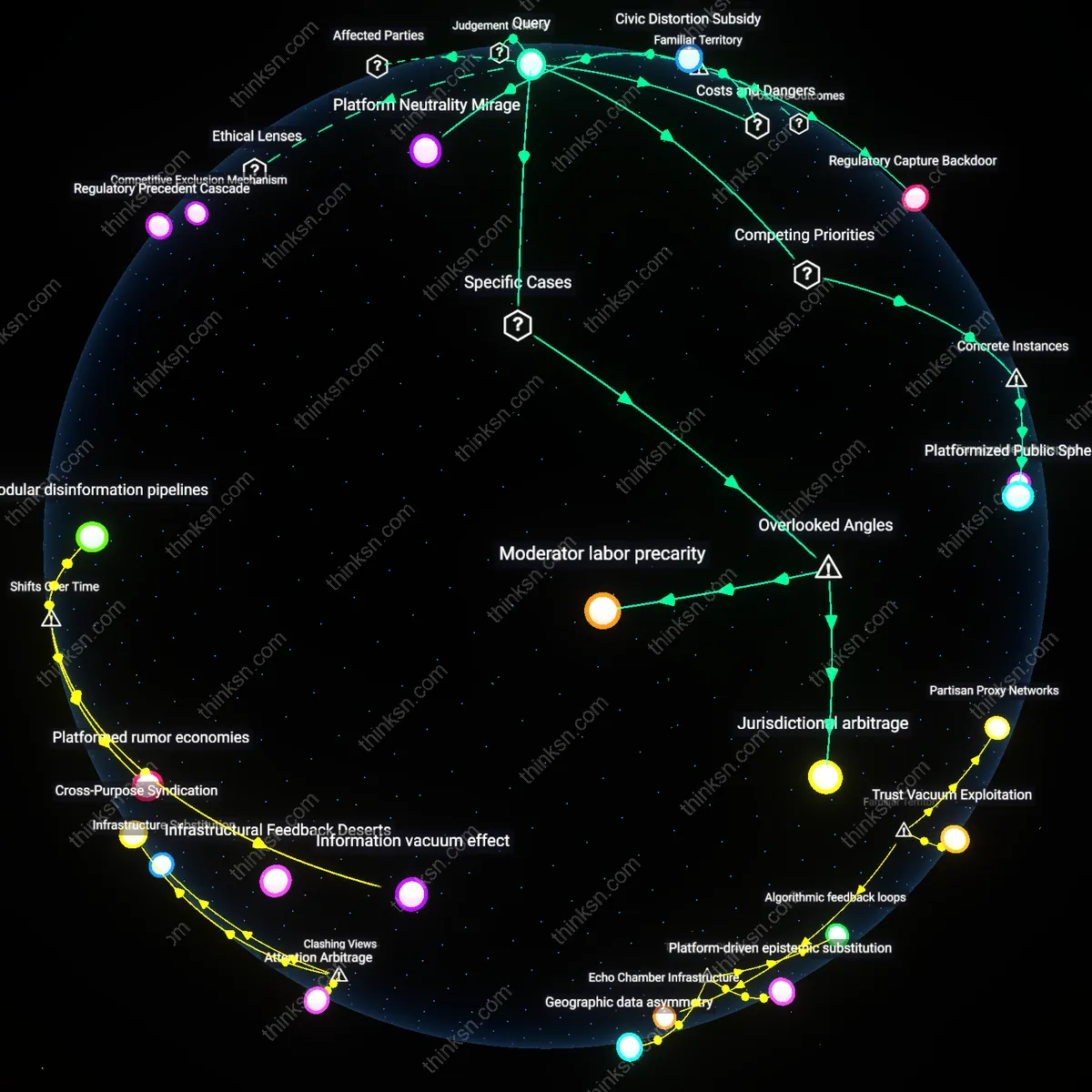

Analysis reveals 8 key thematic connections.

Key Findings

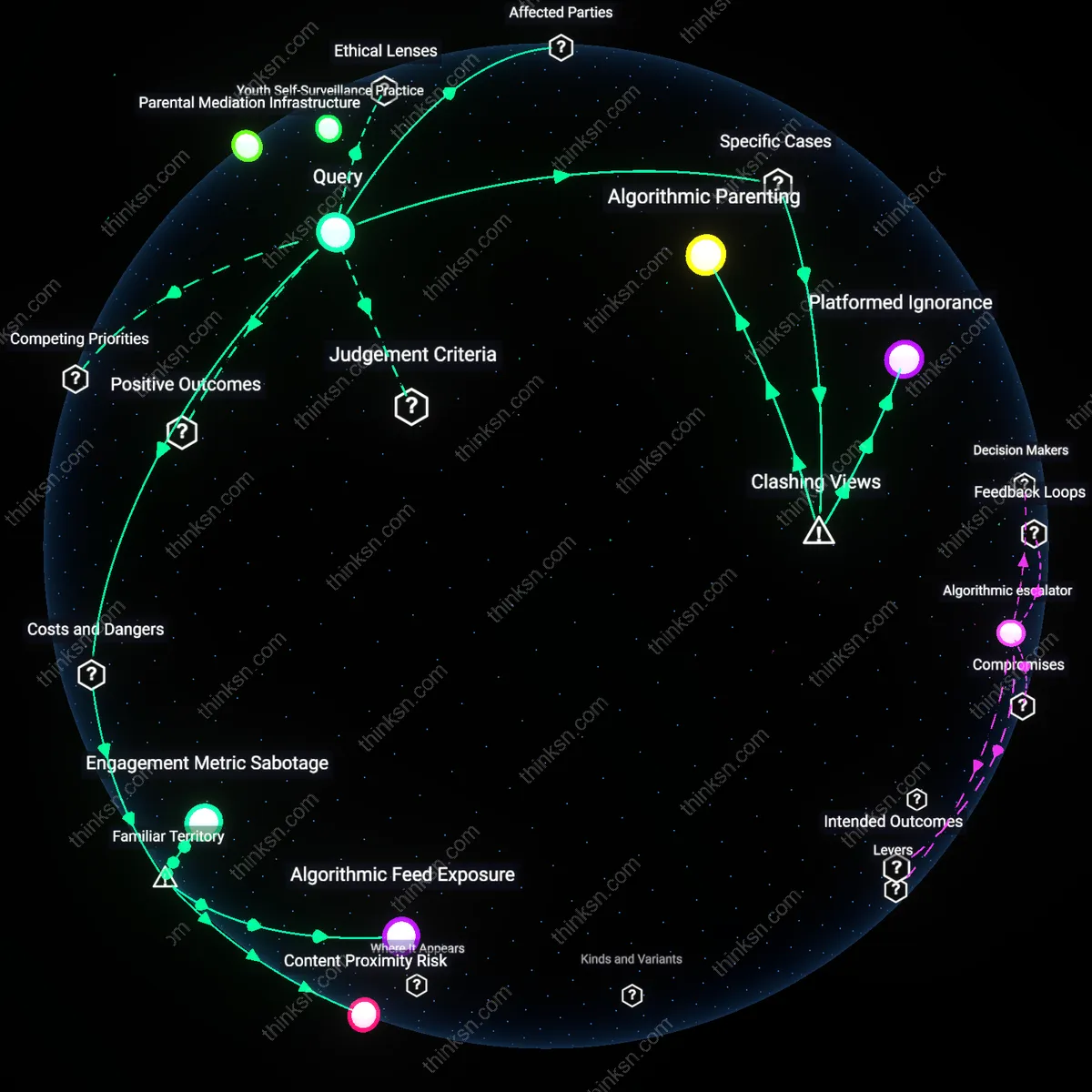

Parental Mediation Infrastructure

A parent can counterbalance YouTube’s algorithmic risks by institutionalizing co-viewing routines supported by school-led digital literacy programs, as seen in the Oslo Municipal Education Office’s 2021 parental engagement initiative in Norway, where educators trained parents to use shared watch sessions with students as structured reflection points. This approach shifts parental oversight from surveillance to pedagogical collaboration, leveraging public education institutions as mediators between algorithmic systems and family dynamics. The non-obvious insight is that effective parental control does not require technical blocking but can emerge through socially embedded, school-facilitated routines that reposition parents as interpretive guides rather than gatekeepers.

Algorithmic Friction Design

Parents gain leverage over YouTube’s sensationalist algorithms when their child uses child-oriented services such as Kiddle or YouTube Kids, which were explicitly engineered with friction mechanisms, as demonstrated by Google’s 2017 redesign of YouTube Kids to disable autoplay by default and limit related content recommendations. This structural intervention disrupts the seamless escalation path from educational to extreme content by inserting deliberate pauses and choice constraints into the user journey. The underappreciated reality is that algorithmic influence can be mitigated not only through vigilance but also through interfaces deliberately made less seamless, which reduce engagement-driven drift and put the viewer’s own choices ahead of retention metrics.

Youth Self-Surveillance Practice

Teenagers themselves can become active regulators of algorithmic exposure when equipped with reflexive media tools, as evidenced by students at Mission High School in San Francisco who, in 2019, participated in a digital citizenship curriculum that taught them to audit their own YouTube watch histories and retrain recommendation engines using intentional search and deletion patterns. This flips the power dynamic by treating algorithmic feedback loops as malleable rather than deterministic, turning self-tracking into a form of resistance. The overlooked insight is that algorithmic resilience emerges not just from external oversight but from fostering youth-led interrogation of recommendation logic, making self-surveillance a critical component of digital agency.
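The watch-history audit described above can be approximated with a short script. The following is a minimal sketch, not the curriculum's actual method. It assumes the history has been exported as a JSON list (for example via Google Takeout) in which each entry carries a `subtitles` list whose first item names the channel. Those field names, the sample data, and the helper `channel_counts` are assumptions made for illustration.

```python
from collections import Counter

# Hypothetical sample mimicking the assumed export layout (a list of entries).
# With a real export, load the file instead:
#   import json
#   with open("watch-history.json", encoding="utf-8") as f:
#       entries = json.load(f)
sample_history = [
    {"title": "Watched Example video A", "subtitles": [{"name": "Example History Channel"}]},
    {"title": "Watched Example video B", "subtitles": [{"name": "Example History Channel"}]},
    {"title": "Watched Example video C", "subtitles": [{"name": "Example Drama Channel"}]},
    {"title": "Watched a video that has been removed"},  # entry without channel info
]


def channel_counts(entries):
    """Count watched videos per channel; entries lacking channel info count as 'unknown'."""
    counts = Counter()
    for entry in entries:
        subtitles = entry.get("subtitles") or []
        channel = subtitles[0].get("name", "unknown") if subtitles else "unknown"
        counts[channel] += 1
    return counts


# Print channels from most to least watched so dominant sources stand out.
for channel, views in channel_counts(sample_history).most_common():
    print(f"{views:3d}  {channel}")
```

A teen could run this on their own export, notice which channels dominate, and then decide which entries to delete or which topics to search for deliberately.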

Algorithmic Feed Exposure

Disable autoplay to cut the primary vector of compulsive content escalation. Autoplay removes decision points for teens: after each video, YouTube’s system queues the next one automatically, and that next video can be more extreme or emotionally charged than the last. The mechanism runs on engagement-optimized machine learning models trained on attention retention metrics, which makes passive continuation more likely than active disengagement. Most parents recognize autoplay as a feature but fail to see it as the central architectural lever that sustains addictive viewing patterns even when the initial content is educational.

Content Proximity Risk

Restrict search to pre-approved channels, because both search results and recommendations can surface sensationalist content that merely shares metadata with what a teen was looking for. YouTube’s algorithm clusters videos not only by topic but also by engagement patterns, so a teen watching a history documentary might be funneled toward extremist revisionist content because the two share keywords and high watch-time signals. This associative drag operates out of sight, linking benign and ideologically charged topics whose audiences behave similarly. While parents often monitor specific videos, they overlook how semantic adjacency in YouTube’s recommendation graph generates risk even from legitimate educational starting points.
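The idea of restricting results to pre-approved channels can be illustrated with a small allowlist filter. This is only a sketch under stated assumptions: it does not call any YouTube API, the result dictionaries with `title` and `channel` keys are a hypothetical shape, and the channel names and the function `filter_to_approved` are invented for the example.

```python
# Hypothetical allowlist a family might agree on; the names are placeholders.
APPROVED_CHANNELS = {"Example History Channel", "Example Science Channel"}


def filter_to_approved(results, approved=APPROVED_CHANNELS):
    """Keep only results whose channel name is on the allowlist (case-insensitive)."""
    approved_normalized = {name.strip().casefold() for name in approved}
    return [
        r for r in results
        if r.get("channel", "").strip().casefold() in approved_normalized
    ]


# Hypothetical search results: one approved, one sensationalist, one approved
# but written with different capitalization.
sample_results = [
    {"title": "The Fall of Rome", "channel": "Example History Channel"},
    {"title": "What THEY won't tell you about Rome", "channel": "Example Outrage Channel"},
    {"title": "How volcanoes work", "channel": "example science channel"},
]

for result in filter_to_approved(sample_results):
    print(result["title"])
```

Matching on channel name is a simplification. A stable channel identifier would be more robust, since display names can be changed or imitated.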

Engagement Metric Sabotage

Use browser extensions to hide like counts and view metrics, because visible popularity signals reshape how teens judge a video’s credibility. When teenagers see high view counts or viral indicators on emotionally charged videos, they unconsciously treat popularity as a proxy for truth or relevance, a cognitive shortcut that YouTube’s interface design exploits. This distortion works through social proof mechanisms embedded in the platform’s display logic. Parents typically focus on content filtering but neglect how metric visibility alters judgment itself, promoting algorithmic conformity over critical assessment.

Algorithmic Parenting

Parents can counter YouTube’s sensationalist algorithms by actively co-viewing and narrating content alongside their teenagers, transforming passive consumption into guided engagement. In households where parents regularly sit with teens while they watch YouTube, such as those observed in Common Sense Media’s 2022 family case studies, the adult’s real-time commentary interrupts the otherwise seamless flow from one recommended video to the next by inserting deliberate cognitive friction. The practice also takes advantage of the fact that YouTube cannot tell who is actually sitting in front of a signed-in account: what a parent and teen choose to watch, skip, or search for together is recorded as that account’s behavior and feeds back into its recommendations. The non-obvious insight is that parenting here is less a boundary-setting act than a deliberate interference in the algorithmic feedback loop itself, which reframes supervision as a technical intervention rather than a behavioral restriction.

Platformed Ignorance

The real risk for parents isn’t exposure to harmful videos but the illusion of control provided by YouTube’s 'Supervised Mode,' which suggests that algorithmic influence can be filtered out without changing how a teen actually uses the platform. Families relying on this feature, such as those documented in Mozilla’s 2023 'Regretted Watching' study, often experience increased passive viewing because the tool reinforces trust in automated safety while doing little to decouple recommendations from engagement-driven logic. This creates 'Platformed Ignorance,' where parental vigilance is satisfied by interface illusions while teens drift deeper into curated rabbit holes masked as age-appropriate. The counterintuitive finding is that safety tools may amplify risk by displacing critical media literacy with misplaced faith in corporate-designed oversight.