Public Statements and Platform Censorship: State Control or Political Influence?

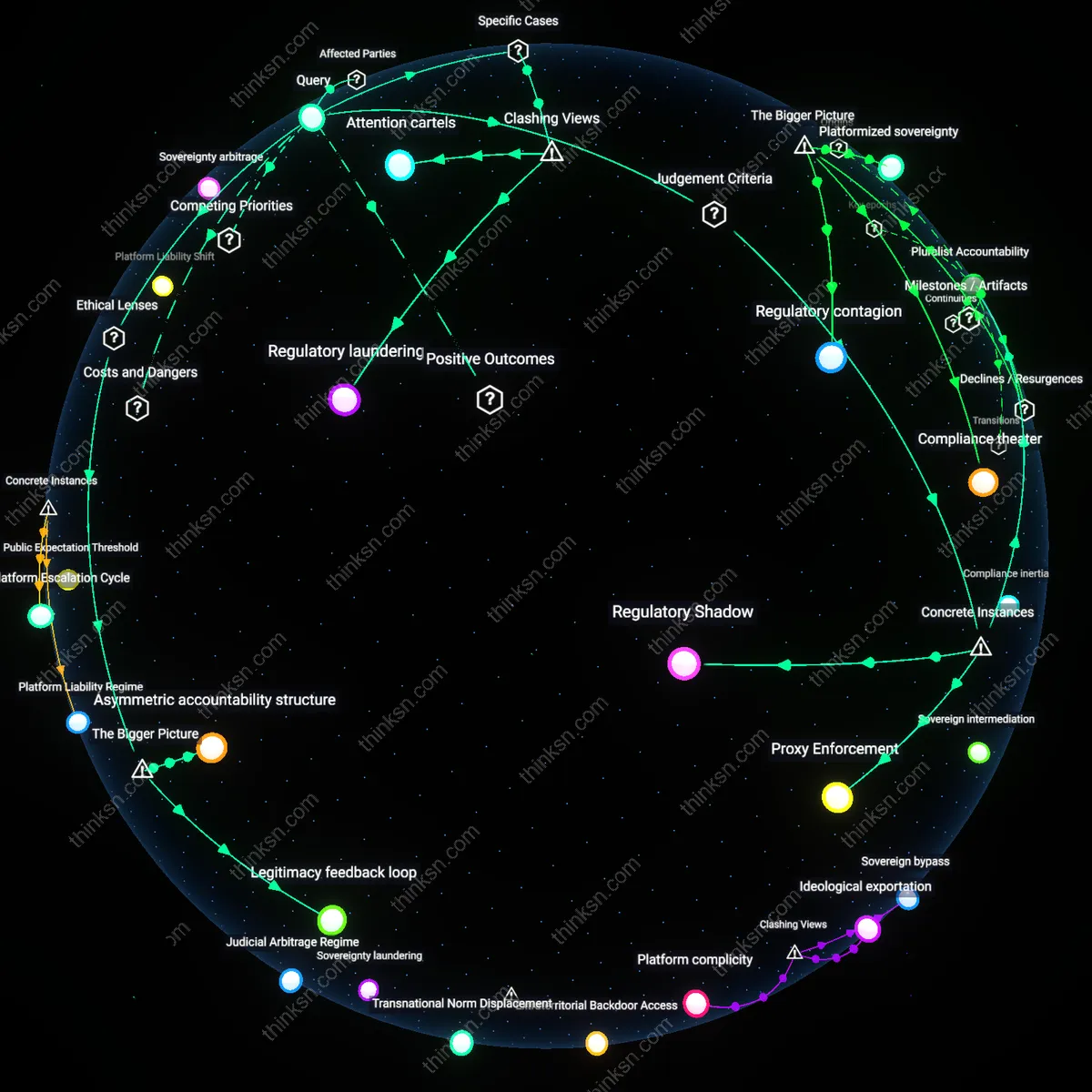

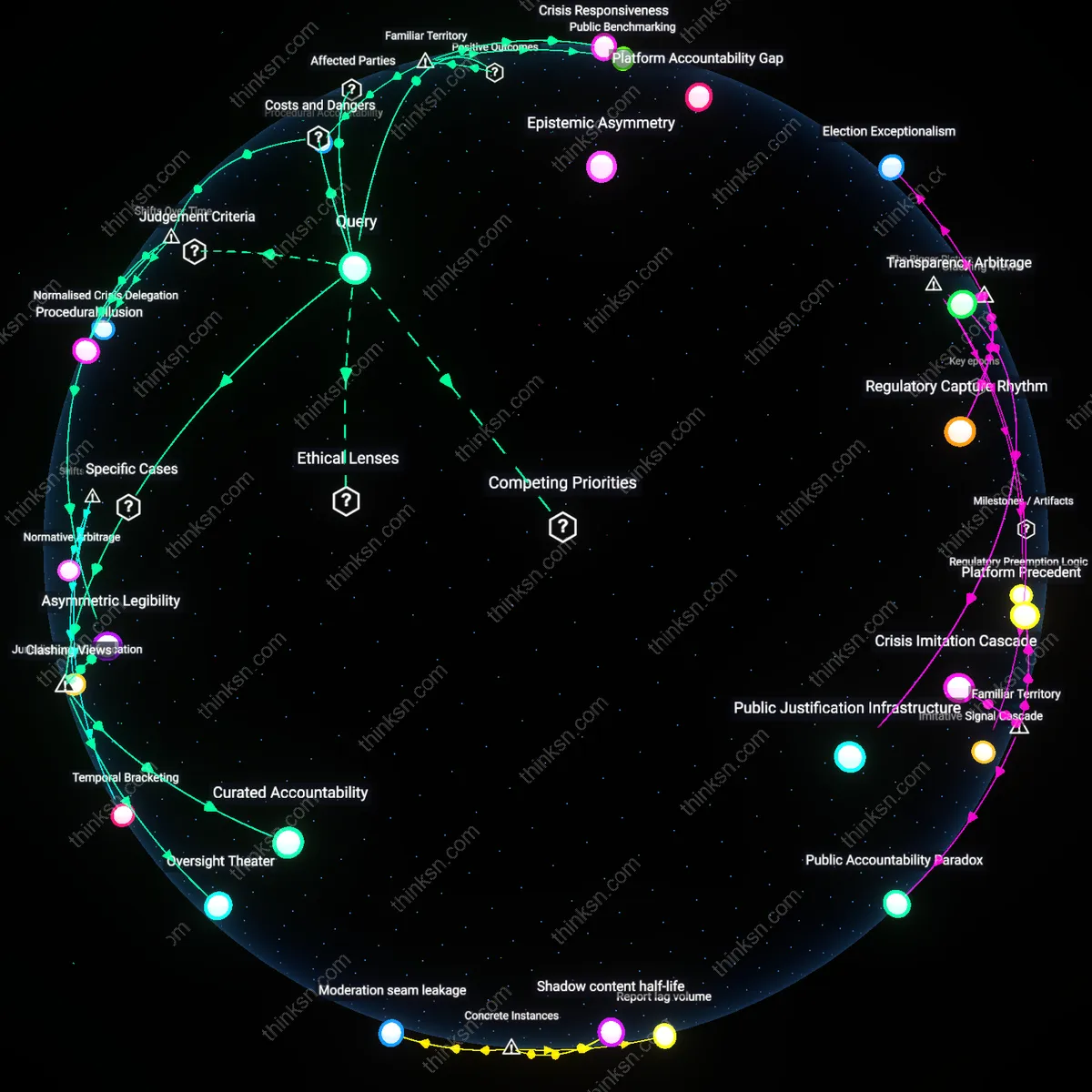

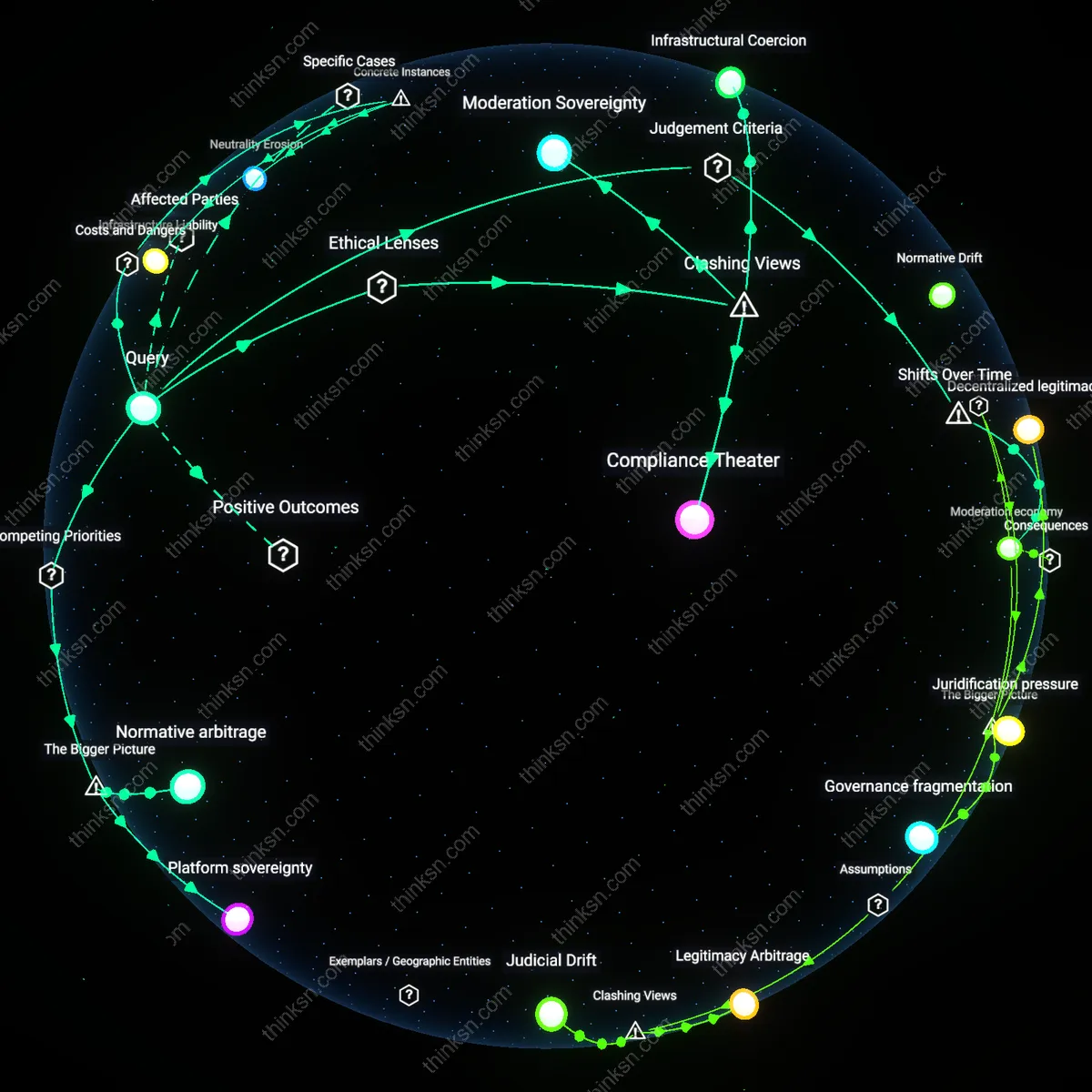

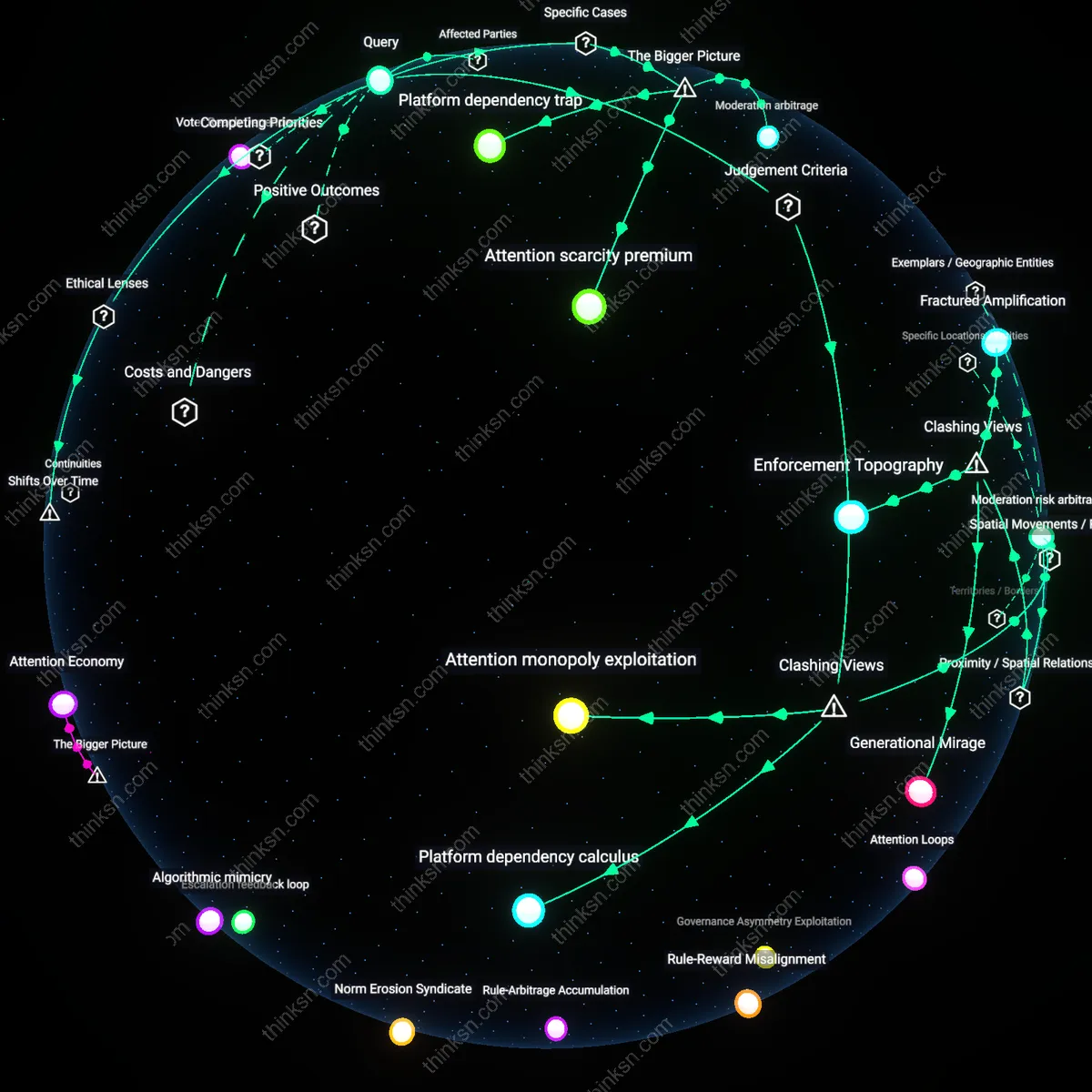

Analysis reveals 9 key thematic connections.

Key Findings

Algorithmic liability deferral

Governments censor indirectly by pressuring platforms to remove content, which shifts legal and reputational risk onto private firms and lets states achieve regulatory outcomes without acting directly. The mechanism works because platforms depend on government cooperation for market access and regulatory goodwill. This dependence is particularly evident in countries like India and Indonesia, where internet regulations enable broad takedown demands backed by the threat of blocking or service suspension. What is often overlooked is that platforms do not merely comply; they proactively over-enforce removals to preempt future penalties, effectively building state preferences into their automated moderation systems. This turns political pressure into a self-sustaining technical architecture, so censorship persists even without new directives.

Sovereignty arbitrage

Indirect government pressure can be read as legitimate political influence when it is understood as a tactical adaptation by smaller or emerging states seeking to project regulatory power in digital spaces they cannot technically control. Countries like Vietnam or Turkey use economic levers, such as data localization laws or access to their advertising markets, to compel platform compliance, approximating the enforcement reach of more powerful states without comparable surveillance infrastructure. The underappreciated dynamic is that these governments are not merely reacting: they strategically exploit platforms' dependence on local user bases, turning content moderation into a bargaining chip in broader digital sovereignty negotiations. Seen this way, accusations of censorship can be reframed as asymmetric regulatory competition within a fragmented global internet.

Regulatory shadow

Indirect government pressure constitutes censorship when officials shape platform content removals through channels that preserve plausible deniability and escape public scrutiny. India offers an example: in early 2021, during the farmers' protests, the Ministry of Electronics and Information Technology issued blocking orders to Twitter under Section 69A of the IT Act, requiring the company to withhold accounts and tweets under threat of penalty. Because such orders are kept confidential, they reveal a non-transparent governance mechanism that functions as de facto prior restraint.

Pluralist accountability

When democratic institutions work with platforms to enforce legally defined speech boundaries, such influence can serve as legitimate political oversight. Germany's Network Enforcement Act (NetzDG) is an example: it requires large platforms such as Facebook to remove manifestly unlawful content, including criminal hate speech, within 24 hours of a complaint. In this model, the state upholds civic equality and public order through transparent, rule-based demands, showing how legal interoperability between governments and platforms can reinforce democratic legitimacy rather than suppress dissent.

Proxy enforcement

U.S. federal pressure on social media companies to moderate misinformation during the 2020 election, such as FBI briefings to Twitter and Facebook about foreign disinformation campaigns, shows how intelligence agencies can steer content policy indirectly through national security rationales. These briefings form a backstage channel of influence in which platforms comply preemptively to keep their access to government threat intelligence, reflecting a systemic shift toward covert operational dependency that bypasses public scrutiny.

Legitimacy feedback loop

Indirect government pressure amounts to legitimate political influence when it comes from democratically accountable institutions responding to verified public harms, such as disinformation that undermines electoral integrity or incitement to violence during civil unrest. In liberal democracies, bodies like parliamentary oversight committees or independent advisory councils exert pressure on platforms within a framework of proportionality and rule-of-law norms, so their actions can be justified by theories of procedural legitimacy and republican civic virtue. The non-obvious insight is that sustained public trust in governance depends not only on formal laws but also on informal coordination that signals responsiveness. Pressure of this kind functions not as suppression but as a feedback mechanism that aligns private platform governance with collectively endorsed social values.

Asymmetric accountability structure

Indirect government pressure becomes a tool of systemic censorship when it exploits the unequal power and accountability between state actors and private platforms, particularly in hybrid regimes where judicial independence is compromised. In countries like India or Turkey, executive branches use financial audits, licensing threats, or coordinated online mobs to signal disfavored content to platforms, which then remove material to maintain market access—effectively outsourcing censorship while preserving a façade of corporate autonomy. The underappreciated reality is that this arrangement institutionalizes speech control through plausible deniability, where the state avoids direct responsibility while cultivating a norm of submission among intermediaries, reinforcing authoritarian resilience through private compliance.

Regulatory laundering

Indirect government pressure on platforms to remove content functions as a deliberate workaround to constitutional constraints. During election cycles, agencies such as the U.S. Department of Homeland Security have signaled content-removal preferences to social media companies, exploiting platforms' dependence on state cooperation for threat intelligence and thereby outsourcing takedown decisions to private actors in a way that avoids First Amendment scrutiny. This mechanism turns content governance into a covert compliance system that mimics censorship while preserving formal deniability, showing how legal boundaries incentivize the state to act through intermediaries rather than upon them. The non-obvious insight is not that governments influence platforms, but that they systematically create dependency structures that mask coercion as coordination.

Attention cartels

When governments indirectly pressure platforms to remove content, the result is less about suppressing speech and more about reshaping attention economies in favor of state-aligned narratives. In India, for example, takedown directives from the Ministry of Electronics and Information Technology can function as de facto priority queues for platform moderators, amplifying government-defined urgency while marginalizing unlisted but equally urgent crises. This dynamic suggests that influence operates less through prohibition than through attention arbitrage, in which the state uses its role as a primary signal generator to set the platform's operational rhythm. The counterintuitive finding is that political power is exercised not by silencing voices but by monopolizing the perception of what counts as a threat.