Who Loses When Congress Trusts Industry on AI Liability?

This analysis identifies five key thematic connections.

Key Findings

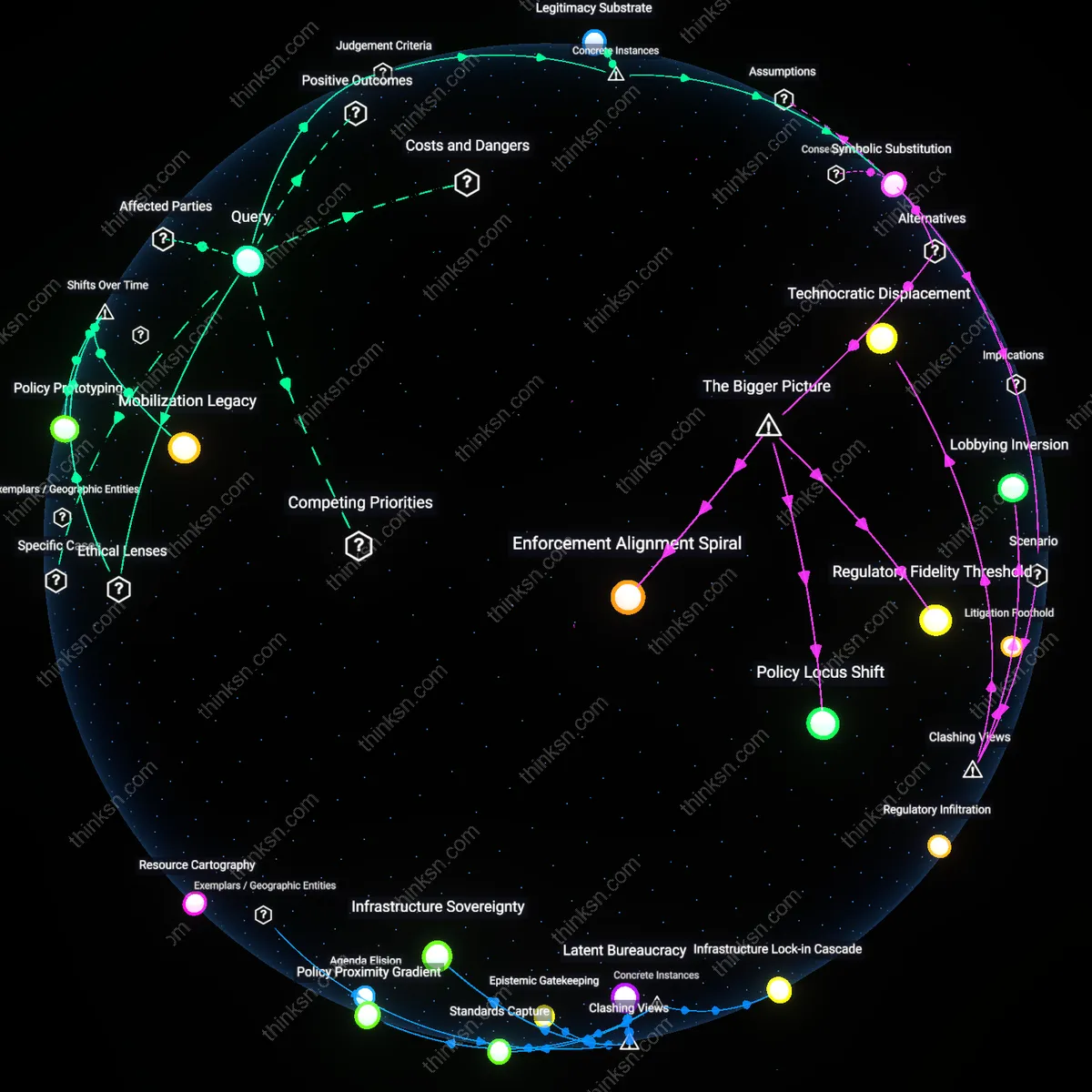

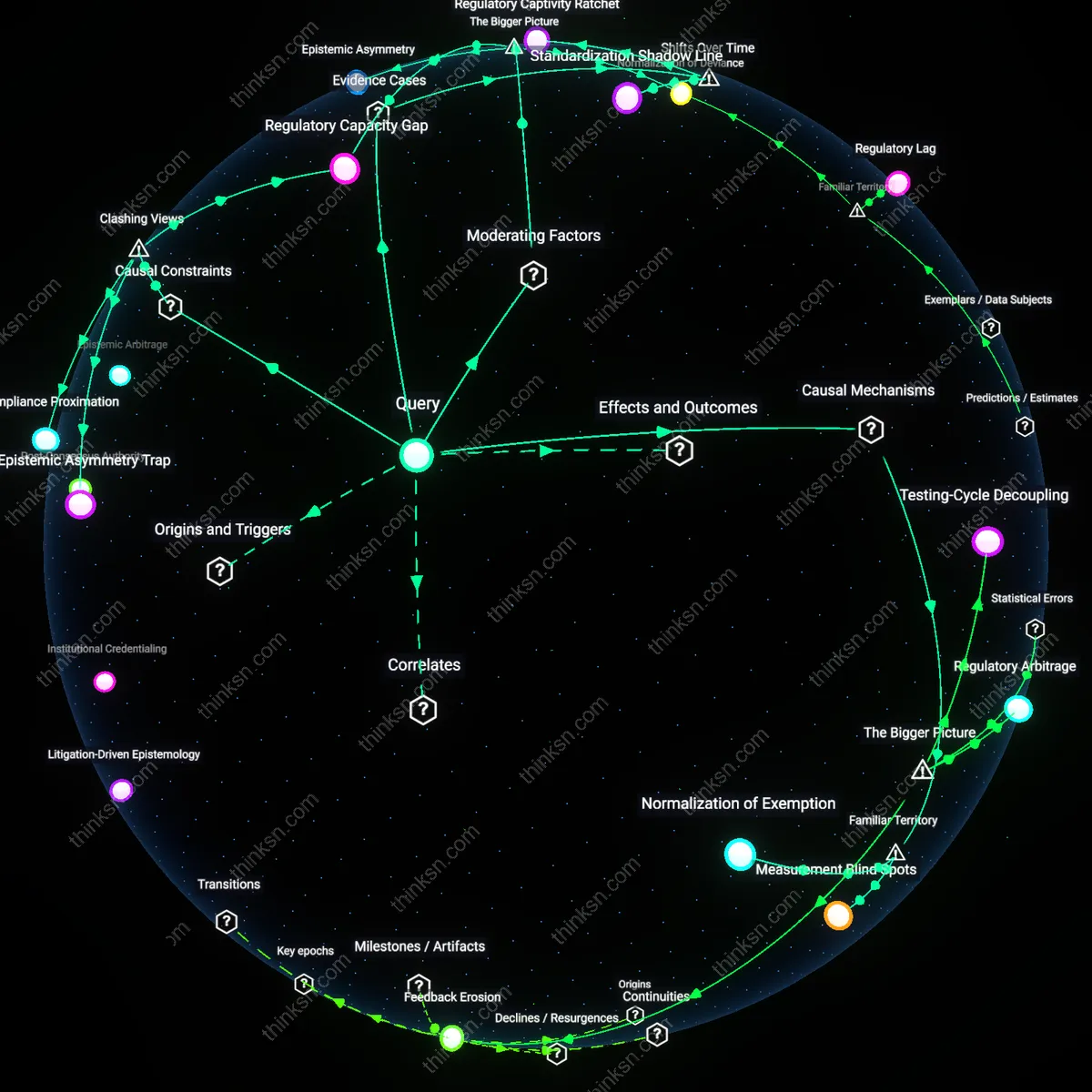

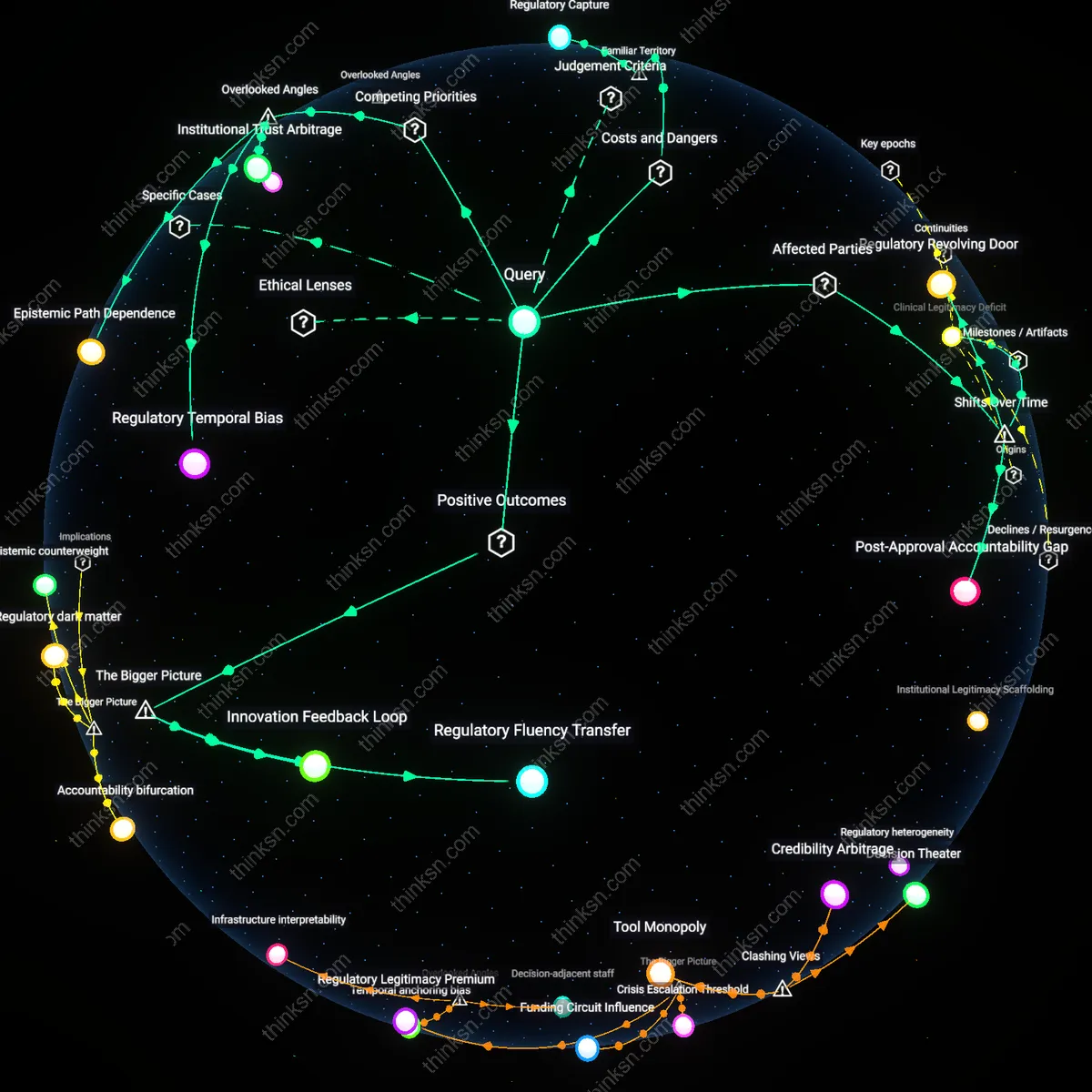

Regulatory Preemption Regime

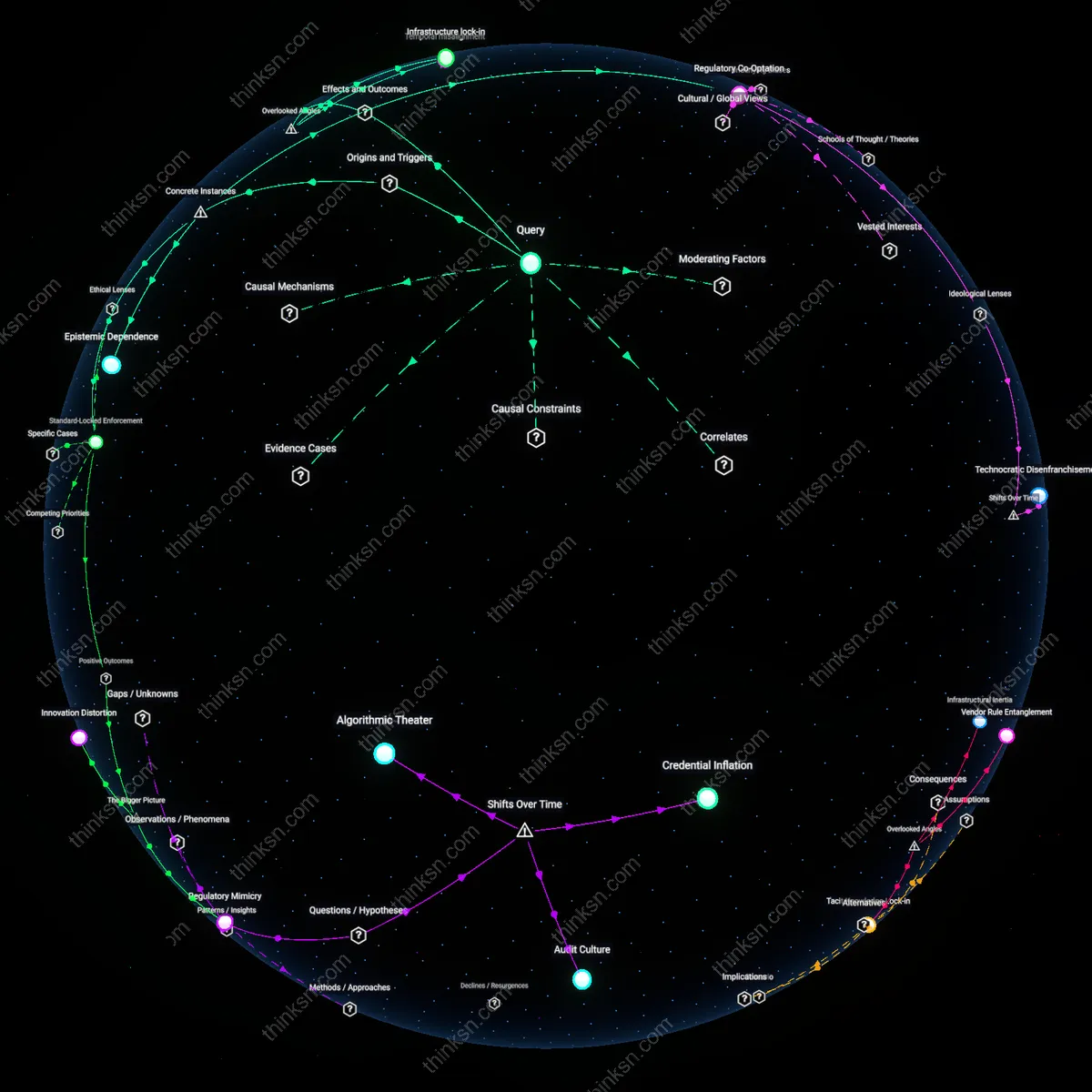

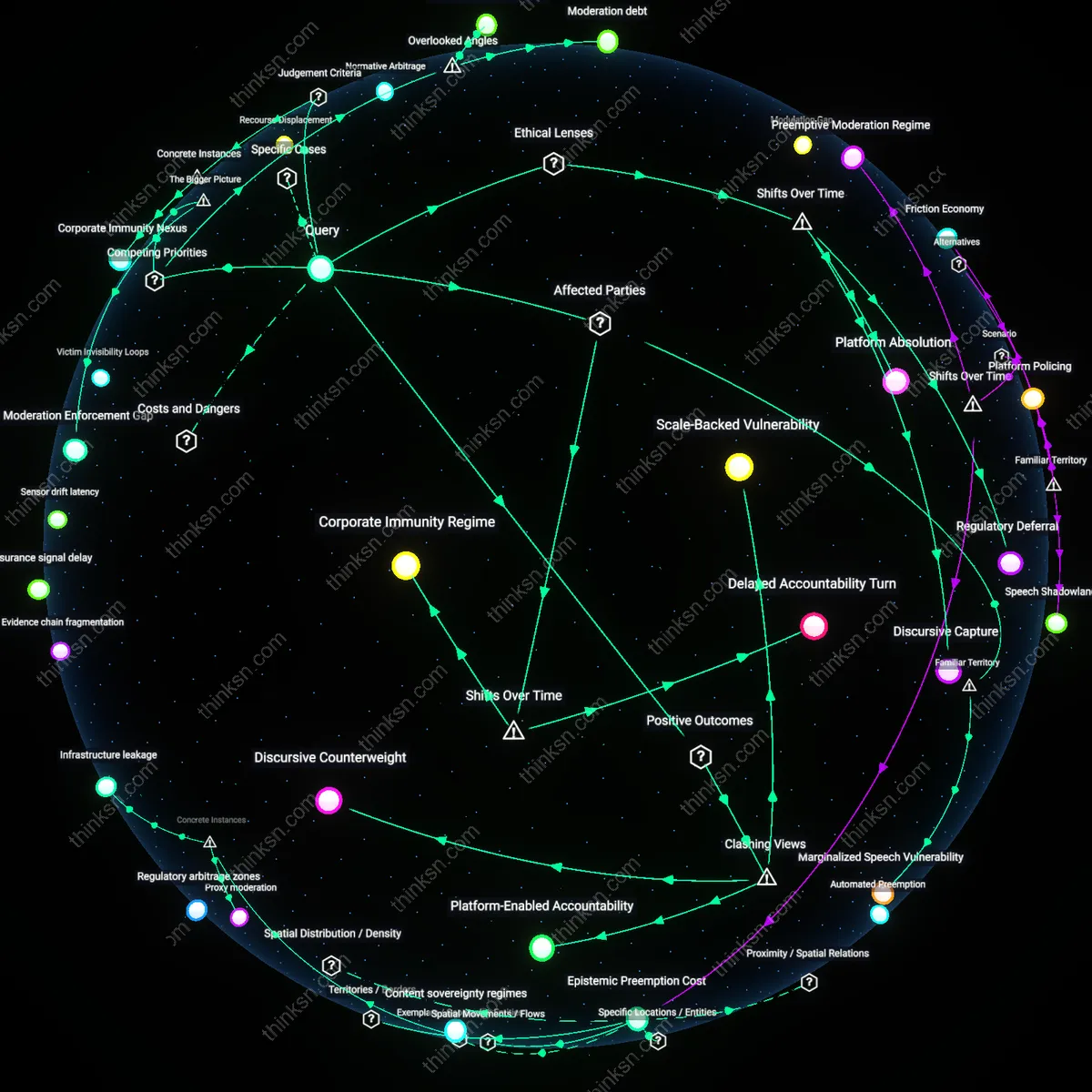

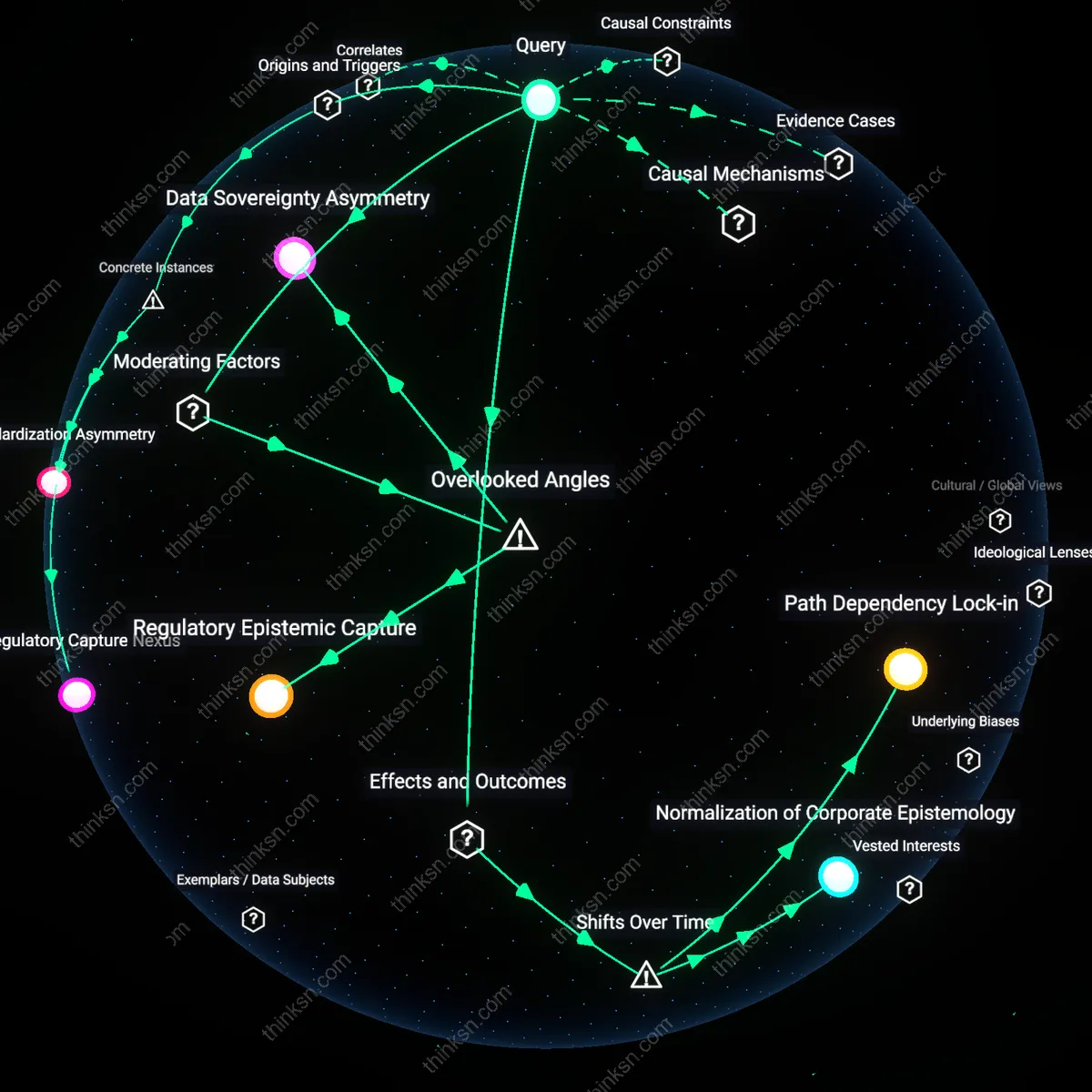

Congress relies on industry standards in AI liability legislation because federal agencies and legislative drafters increasingly treat private standard-setting bodies as de facto rulemakers, which reduces the need for direct statutory mandates. This shift solidified in the 2010s as AI was integrated into critical infrastructure. It replaced prescriptive regulation with governance deferred to technical consensus, embedding corporate influence into liability thresholds. The move from public rulemaking to reliance on bodies such as IEEE and ISO, whose committees draw heavily on participants from firms like Google and IBM, institutionalizes industry norms as legal baselines, so that courts rarely look beyond those standards when assessing liability. This transition, evident in the FAA's reliance on RTCA guidance for automated systems and NHTSA's consultation with SAE on self-driving vehicles, reflects a post-2008 turn toward risk-based, industry-led regulation that insulates developers from negligence claims even when harms occur. The underappreciated consequence is not weaker enforcement per se but the systemic displacement of democratic oversight by procedural legitimacy derived from technical expertise, which redefines 'reasonable care' in ways that preclude novel legal theories.

Remedial Asymmetry

Congress integrates industry standards into AI liability legislation to preserve innovation incentives, a priority that emerged distinctly after the 2016 AI policy pivot, when NSF and OSTP framed regulatory caution as a strategic liability in U.S.–China technology competition. This geopolitical framing turned due diligence benchmarks from public safety tools into instruments of national competitiveness, so that standards became shields against tort claims rather than minimum safeguards. After NIST, directed by the National AI Initiative Act of 2020, released its AI Risk Management Framework in January 2023, compliance with this non-binding framework and related standards began to function as de facto evidence of due care in court, altering the evidentiary burden on plaintiffs. The shift from post-harm compensation models (prevalent in 1990s product liability) to forward-looking 'risk management conformity' shows how legal redress has become structurally diminished: not through explicit immunity, but through a temporal inversion in which future-oriented prudence negates past harm. The overlooked dynamic is that victims now face a 'conformity defense', not codified in law but normalized through judicial deference, which makes a successful claim depend on proving not only injury but also deviation from consensus-based, industry-curated processes.

Regulatory Expediency

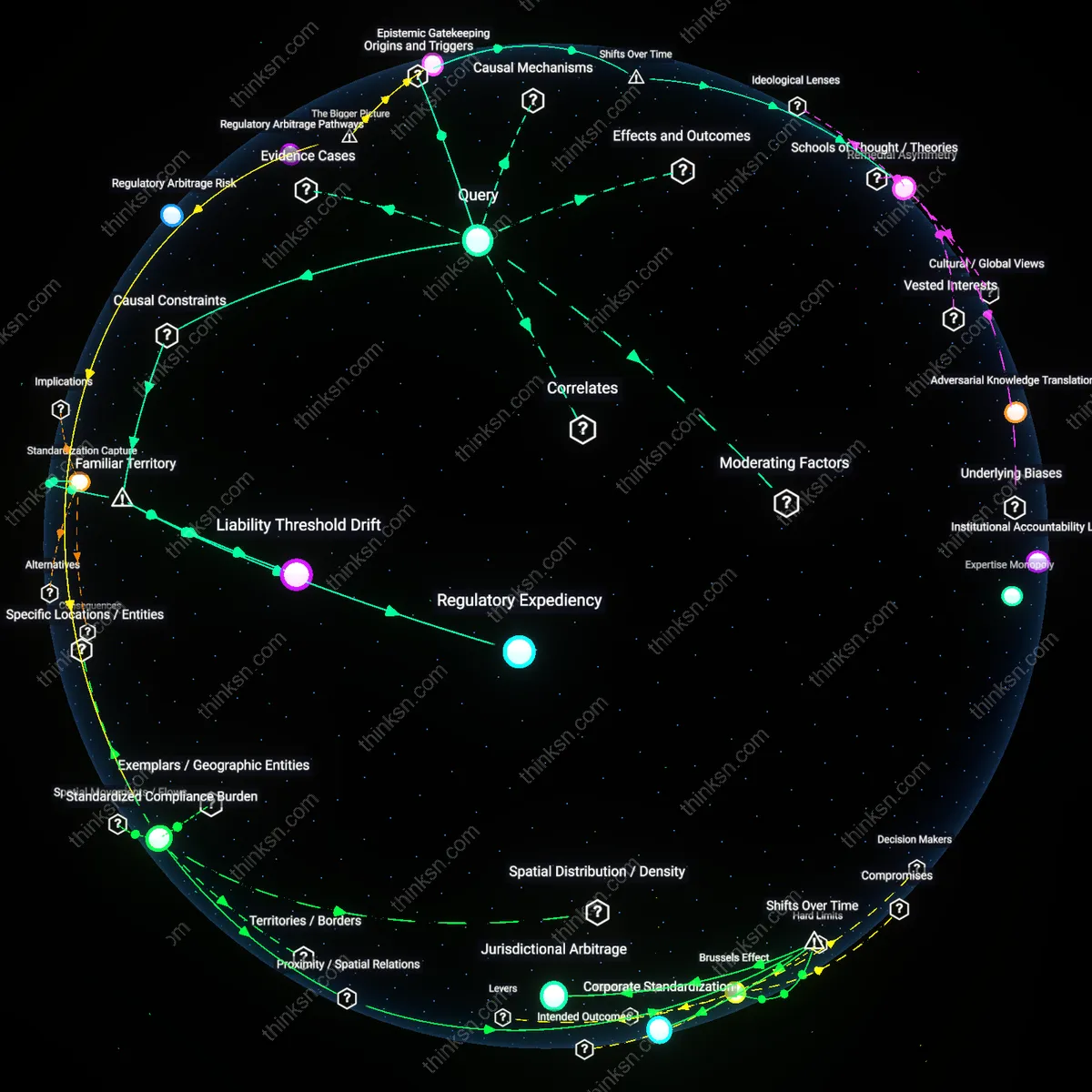

Congress adopts industry standards in AI liability legislation because it lacks the technical capacity to define safety and performance benchmarks independently. Committees such as the House Committee on Science, Space, and Technology depend on specifications already developed by private bodies such as IEEE, or by federal agencies such as NIST working with industry, to avoid delays and technical inaccuracies, and legislation then embeds these standards into law through incorporation by reference. This reliance spares Congress from building in-house expertise or engaging in protracted rulemaking, but it also means that the baseline for legal accountability is set by processes heavily shaped by the very firms deploying AI systems. That makes it harder for victims to challenge harms as 'non-compliant' when compliance itself is measured, after the fact, against industry-defined norms.

Liability Threshold Drift

Victims struggle to obtain legal redress because industry standards, once codified into law, redefine what counts as 'reasonable' AI behavior, raising the bar for proving negligence. Courts traditionally apply the 'reasonable person' standard, but in AI cases this has shifted toward a 'reasonable algorithm' standard anchored in prevailing industry practice, as seen in rulings that cite ISO/IEC standards. Judicial deference to norms set by technology firms and industry-backed bodies, such as the Partnership on AI or Meta's internal AI review boards, makes deviation from standard practice, rather than the harm itself, the legal focal point. The effect is to immunize companies that follow flawed but widely adopted protocols and to obscure victims' claims that rest on moral or functional wrongs outside technical compliance.

Standardization Capture

Industry participation in standard-setting bodies functions as a de facto lobbying mechanism that preempts congressional oversight and shapes liability exposure before legislation is drafted. Groups such as the Information Technology Industry Council (ITI) and member firms of NIST's AI Safety Institute Consortium help author technical specifications, including through ANSI-accredited processes, ensuring that their interests are embedded in standards that law later adopts. Because Congress treats these standards as neutral and scientifically grounded, the resulting legislation inherits built-in assumptions that minimize corporate risk, such as treating model transparency as voluntary. Non-compliance then becomes both rare and difficult to prove, narrowing the legal pathways available to injured parties who lack access to internal validation data or audit rights.