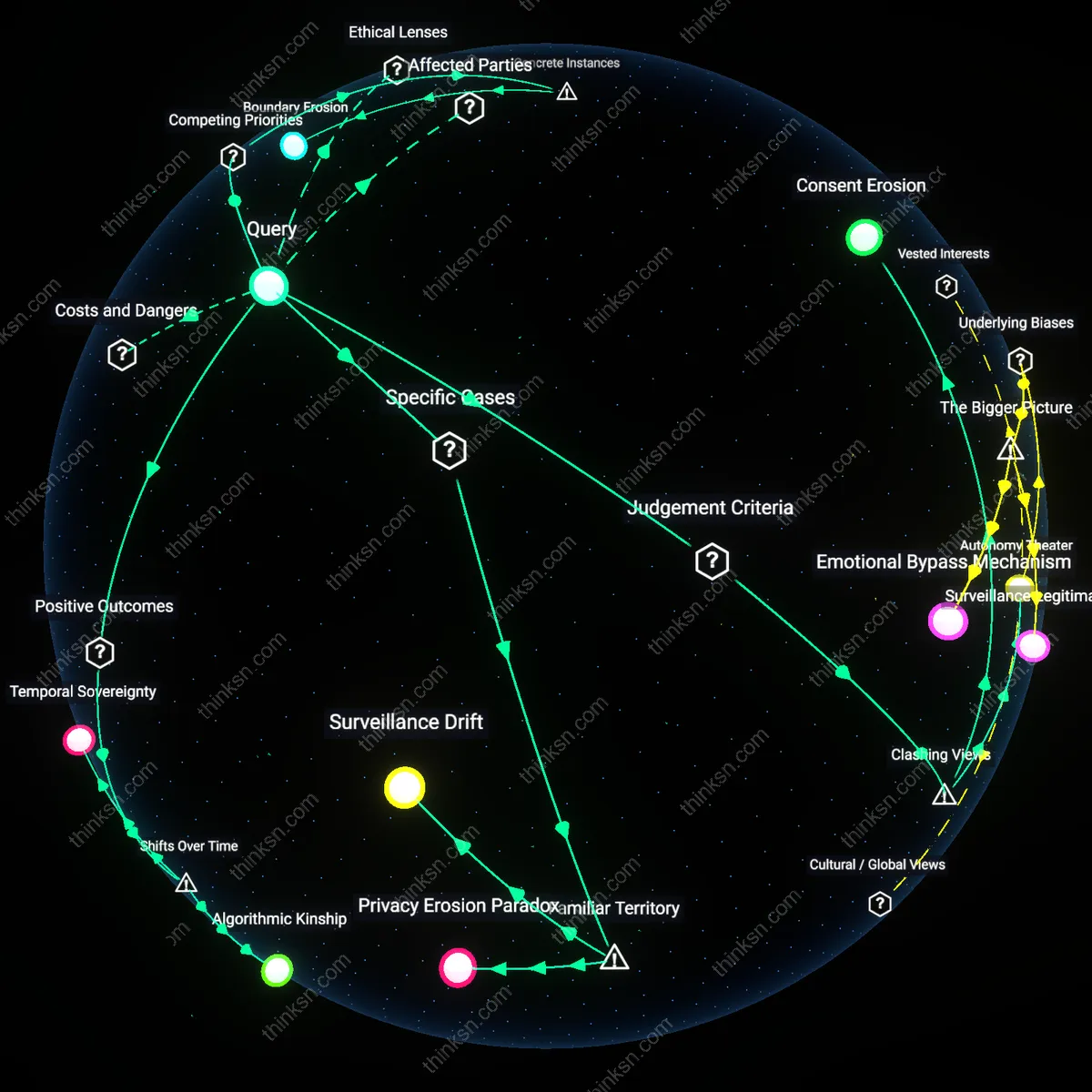

Is Employee Biometric Data Consent Just an Illusion of Privacy?

Analysis reveals 12 key thematic connections.

Key Findings

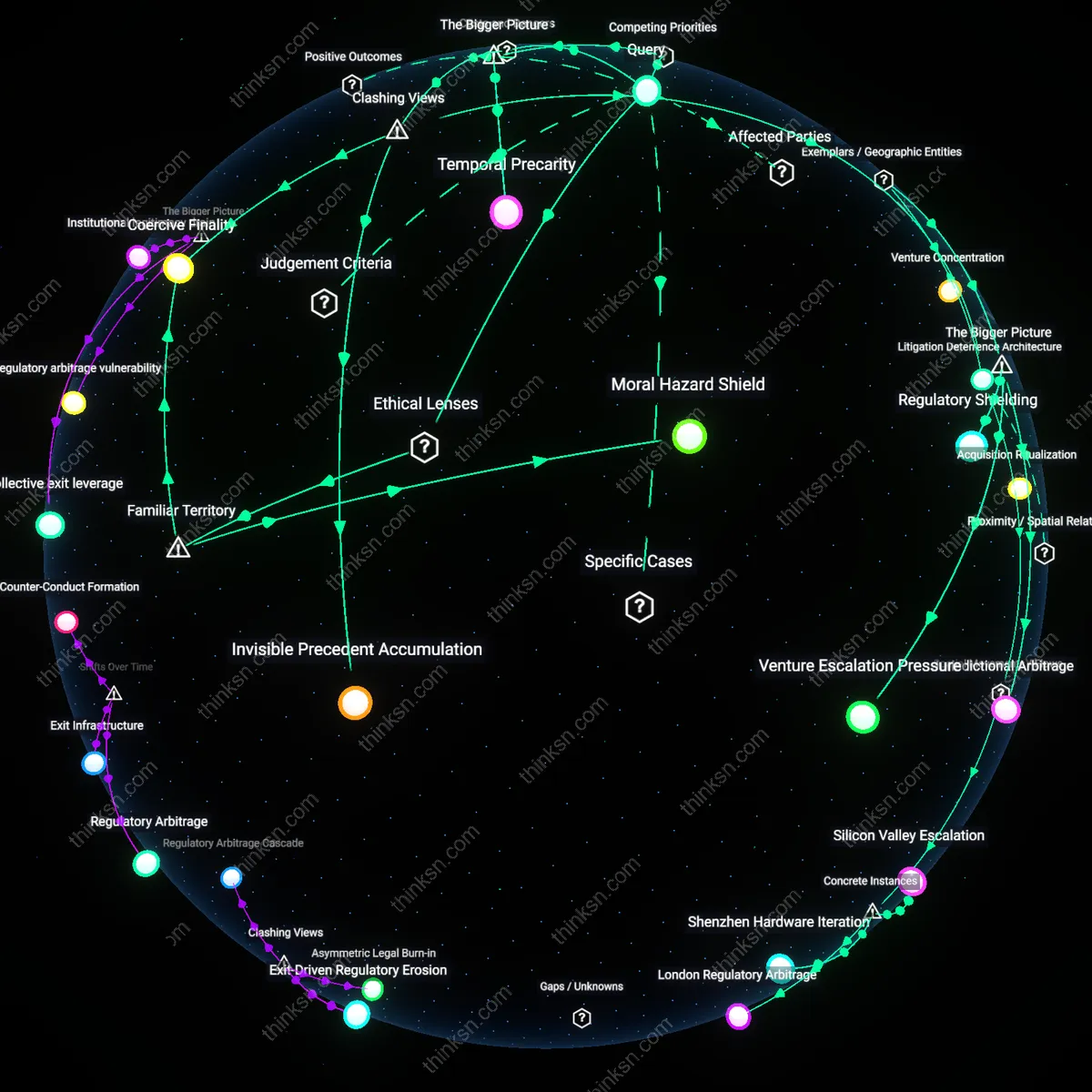

Consent As Theater

Requiring employee consent for biometric monitoring sustains the appearance of autonomy while preserving managerial control because workers understand refusal risks job loss. The mechanism operates through at-will employment regimes—common in the U.S.—where legal protections against retaliation are weak or unenforceable in practice, rendering consent performative. This is significant because it reveals how procedural safeguards like informed consent become symbolic rituals rather than actual limits on power, a dynamic routinely obscured by corporate ethics policies.

Data Coercion Regime

Consent to biometric monitoring functions as economic duress even when outright termination is not at stake: employees weigh potential discipline or missed promotions against the loss of privacy. The condition enabling this is workers’ asymmetrical dependence on wages in high-unemployment sectors such as warehousing or call centers, where surveillance is increasingly common. This exposes how the market’s structural pressure, rather than overt command, shapes decisions. It contradicts the individualistic logic of consent in a way often ignored in legislative debates that focus on transparency over equity.

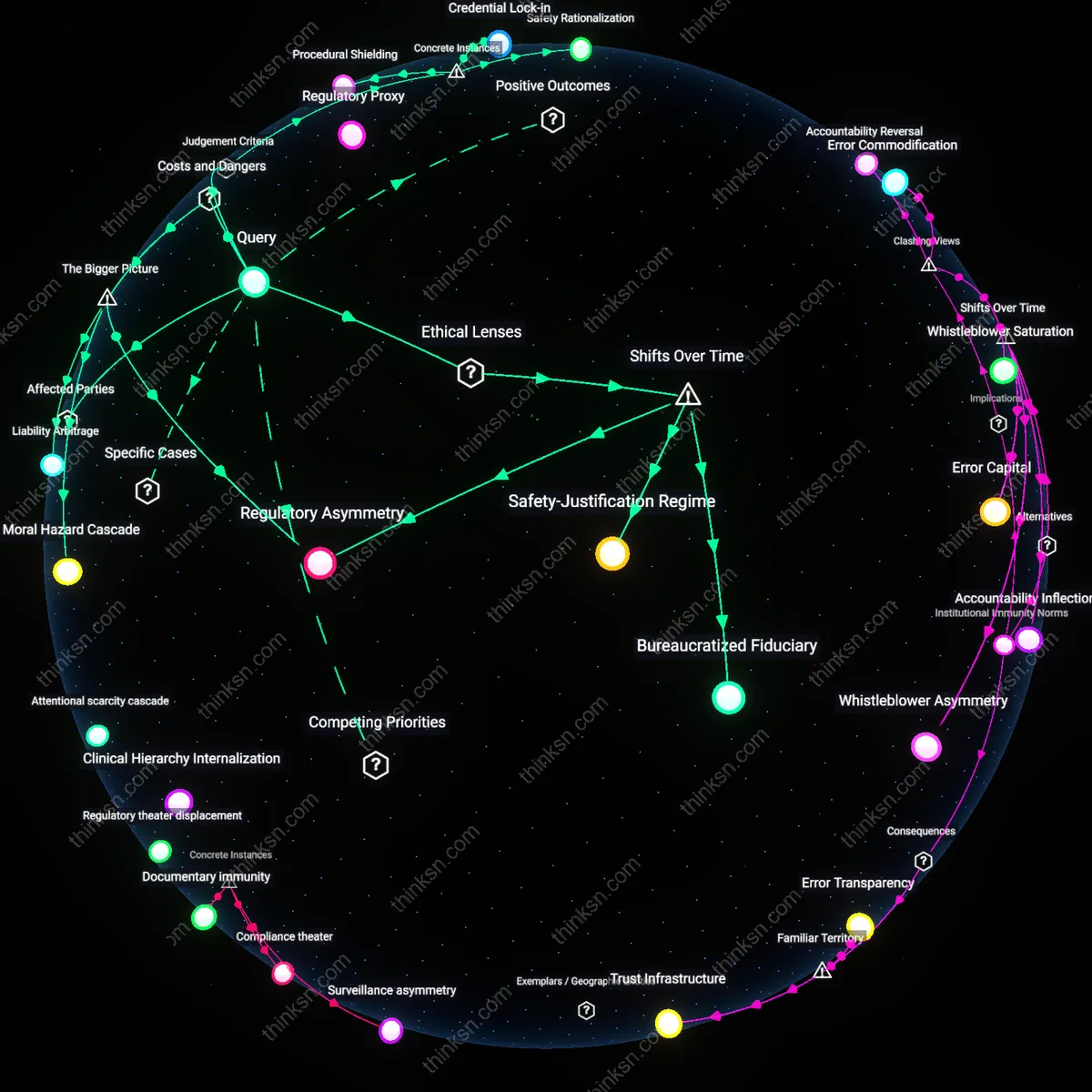

Performance Ratchet

Consent to biometric monitoring locks workers into a feedback loop in which data normalizes ever-tighter productivity benchmarks, making past performance the baseline for future expectations. The system operates via algorithmic management platforms, like those used by Amazon or Uber, that translate movement, response time, or idle moments into quantified metrics. What’s underappreciated is that consent isn’t just compromised at enrollment; it enables a dynamic that erodes autonomy over time, and the erosion feels inevitable because it proceeds incrementally beneath regulatory thresholds.

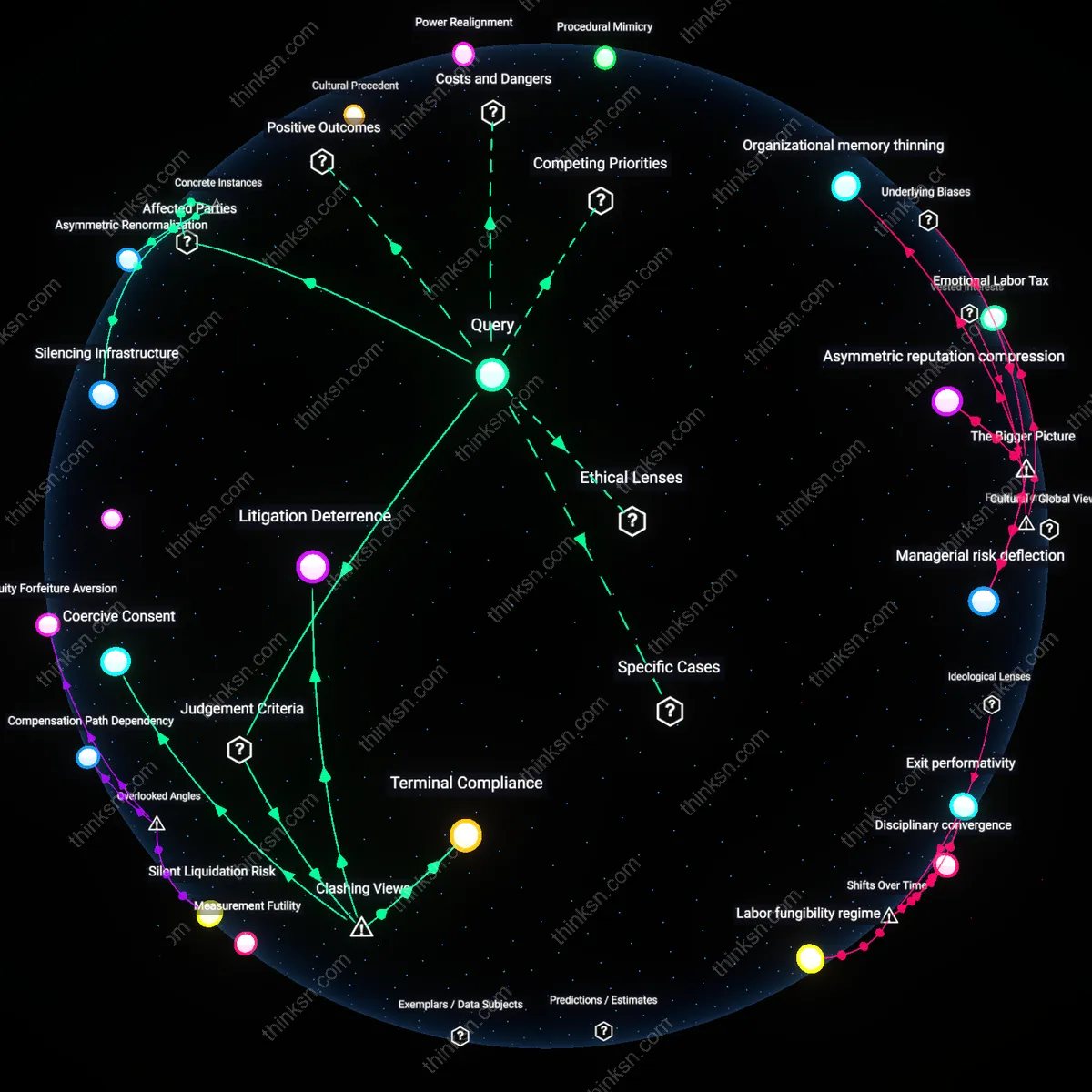

Coerced Consent

Requiring employee consent for biometric monitoring reinforces coercive workplace control by exploiting job insecurity, making 'consent' a perfunctory ritual rather than a voluntary choice. Workers in low-wage or high-surveillance sectors, such as warehouse logistics or call centers, routinely sign consent forms not because they agree with monitoring but because refusal risks demotion, discipline, or termination—mechanisms enforced through asymmetrical power in at-will employment systems. This renders consent a procedural shield for employers, masking coercion under legal compliance, which sustains the illusion of ethical oversight while deepening systemic vulnerability. The non-obvious reality is that consent frameworks, designed to protect autonomy, become tools of subordination when applied under duress.

Surveillance Theater

Mandating consent for biometric monitoring functions less to protect workers than to absolve employers of liability, creating a performance of ethical governance that distracts from unchecked data exploitation. In tech-driven firms using AI-powered productivity scoring, managers deploy consent forms as alibis for invasive tracking, even as employees have no real capacity to negotiate terms—an outcome amplified in gig platforms where work is contingent and algorithmic management is opaque. This dynamic institutionalizes a theater of accountability, where procedural checklists replace substantive protections, allowing corporations to claim transparency while entrenching power asymmetries. The underappreciated danger is that compliance rituals become substitutes for justice, legitimizing surveillance under the guise of choice.

Data Coercion Nexus

Biometric consent requirements embed a feedback loop in which job precarity directly fuels involuntary data extraction, transforming HR policies into conduits of structural exploitation. In healthcare and manufacturing, where shift assignments and promotions rely on algorithmic performance metrics derived from biometric data, employees 'consent' not to affirm privacy preferences but to survive economically, knowing that those who refuse risk being passed over for opportunities. This system normalizes data extraction as a condition of labor participation, weaponizing consent to automate discrimination under neutral administrative procedures. The overlooked mechanism is how consent, framed as individual agency, becomes a systemic lever for extracting intimate data at scale through the quiet threat of professional exclusion.

Consent Asymmetry

Requiring employee consent for biometric monitoring obscures a structural coercion that deepened after the 1980s shift from collective to individualized labor contracts. As the collective bargaining framework built under the New Deal eroded, individual at-will arrangements came to govern more of the workforce, transforming consent from a mutual agreement into a transaction in which workers must surrender autonomy to preserve income. This mechanism operates through private workplace governance replacing public regulatory oversight, making refusal economically unviable even if technically permitted. The non-obvious outcome is that formal consent reproduces power asymmetries while simulating ethical legitimacy, revealing how legal forms adapt to maintain control without overt coercion.

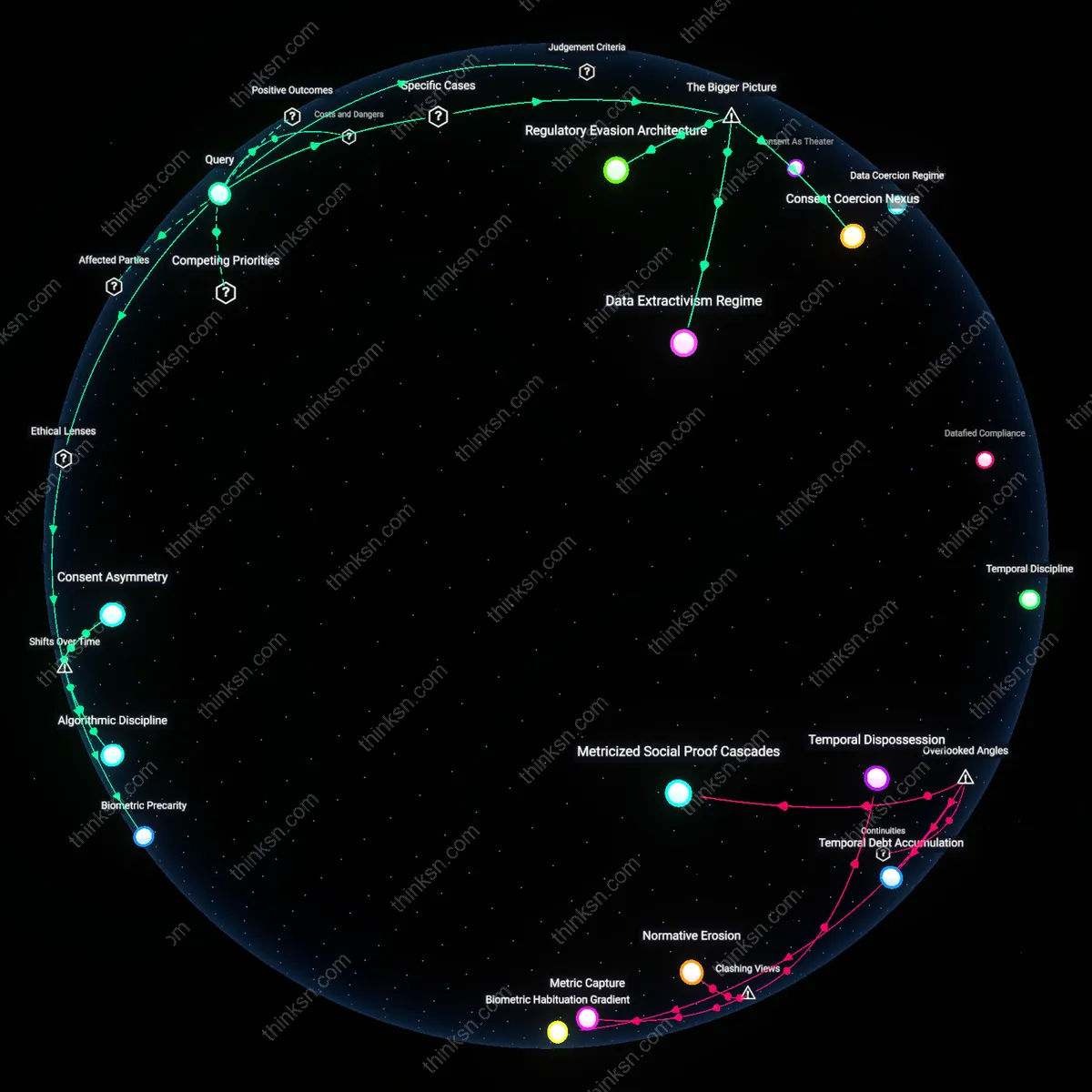

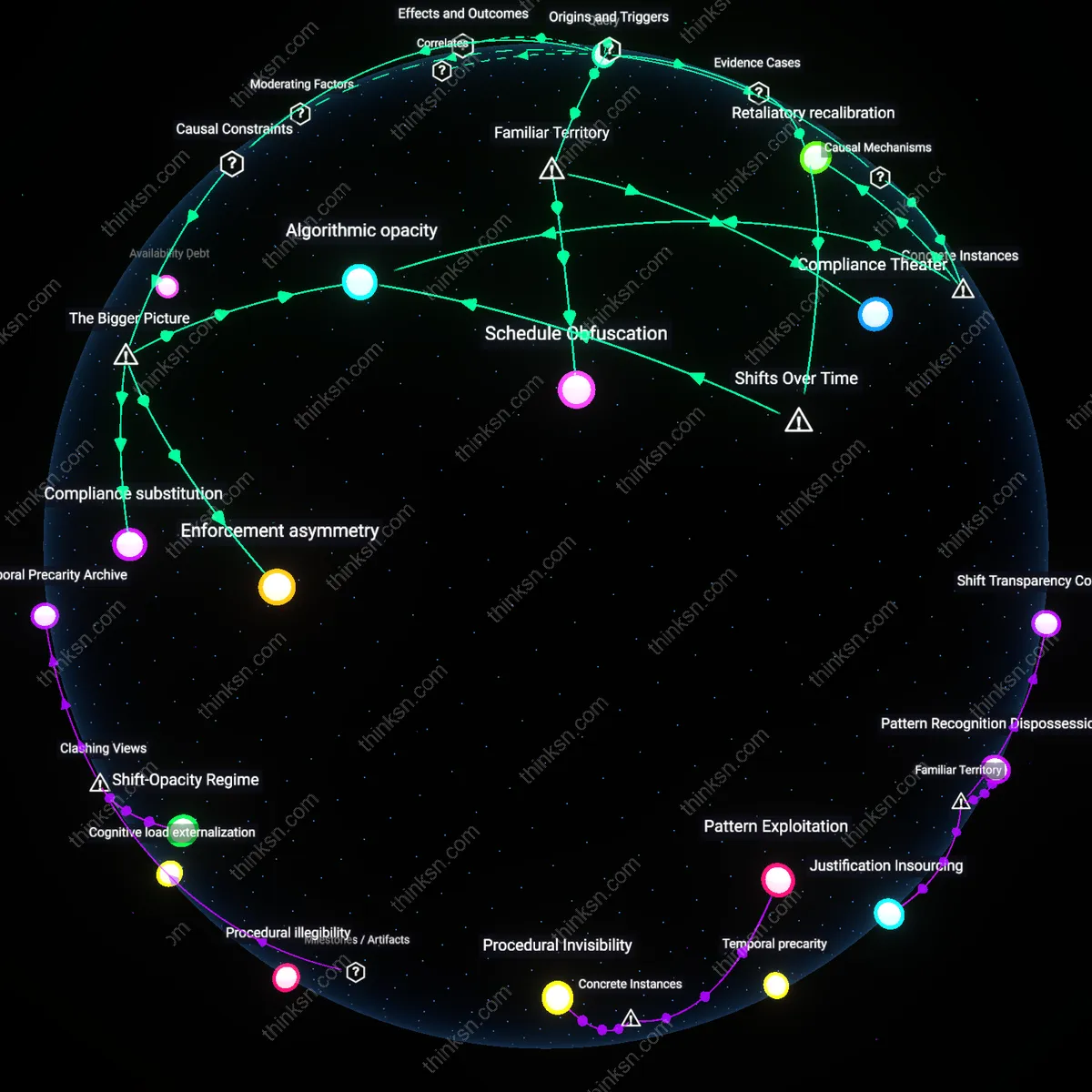

Algorithmic Discipline

The normalization of biometric monitoring under purported consent intensified during the platform economy boom of the 2010s, when datafication carried productivity tracking from industrial sites into service and gig work. Employers now leverage the 'voluntary' opt-in structure to embed surveillance into app-based workflows, where continuous performance metrics determine job allocation and retention. This shift operates through digital labor platforms that bypass traditional employment relations, using behavioral nudges rather than direct orders to enforce compliance. The overlooked consequence is that consent becomes a ritualized entry point into algorithmic discipline: withdrawing it leads to deactivation rather than formal termination, which evades labor law thresholds while deepening dependency.

Biometric Precarity

The legitimization of biometric consent gained traction after 9/11 through securitization logics that migrated from borders to workplaces, especially in transportation, logistics, and government contracting. What was once exceptional—state-mandated biometric screening for national security—became routinized in private sector hiring and attendance systems under the guise of safety and efficiency. This transition operates through public-private surveillance partnerships that reframe consent as civic duty, embedding it in onboarding rituals where refusal signals suspicion. The underappreciated effect is that job security becomes contingent on biometric legibility, producing a new class of labor precarity tied not to skill or contract but to bodily compliance.

Consent Coercion Nexus

Requiring employee consent for biometric monitoring in Amazon warehouse operations creates a false equivalence between voluntary agreement and survival-driven compliance, because workers face algorithmic productivity tracking that penalizes deviations in real time. Frontline employees, aware that sustained low efficiency scores can trigger automated performance warnings or termination, feel compelled to consent even when uncomfortable—making consent a procedural formality rather than a meaningful choice. This effect is amplified by the closed-loop nature of Amazon’s human capital management system, where refusal risks immediate operational marginalization, revealing how workplace power asymmetries are codified into technical governance structures. The non-obvious insight is that consent mechanisms, when embedded in high-surveillance environments, can deepen rather than mitigate coercion by giving legal cover to extractive practices.

Data Extractivism Regime

Inffecto Robotics’ deployment of biometric fatigue monitoring in Indian gig delivery hubs functions not as a safety measure but as a labor control technology, because the data collected is used to refine workload allocation and reduce rest time allowances. Workers must consent to facial and blink tracking under the threat of being deprioritized for high-value delivery routes—a soft sanction with immediate income consequences. This operates through platform-mediated wage optimization systems that treat biometric feedback as a tuning mechanism for maximizing labor output per rupee, situating consent within a transnational infrastructure of value extraction. The overlooked dynamic is that biometric consent in globally distributed gig economies becomes a gateway for externalizing human limitations as algorithmic inefficiencies, normalizing data harvesting as a cost of job retention.

Regulatory Evasion Architecture

Illinois workers at Walgreens distribution centers who signed biometric timeclock consent forms under the state’s Biometric Information Privacy Act (BIPA) were later denied redress when their data was shared with third-party analytics firms, exposing how legal compliance substitutes for genuine worker agency. The existence of written consent allowed the company to claim adherence to BIPA while insulating itself from liability, even though employees had no power to negotiate terms or opt out without risking discipline. This functions through a legal-technical interface in which corporate risk management strategies exploit the form of consent over its substance, leveraging jurisdictional loopholes and fragmented labor protections. The underrecognized outcome is that consent becomes a procedural shield for employers, transforming regulatory safeguards into tools that reinforce dependency and limit collective resistance.