Monitoring Loyalty: Where Workplace Security Meets Political Expression?

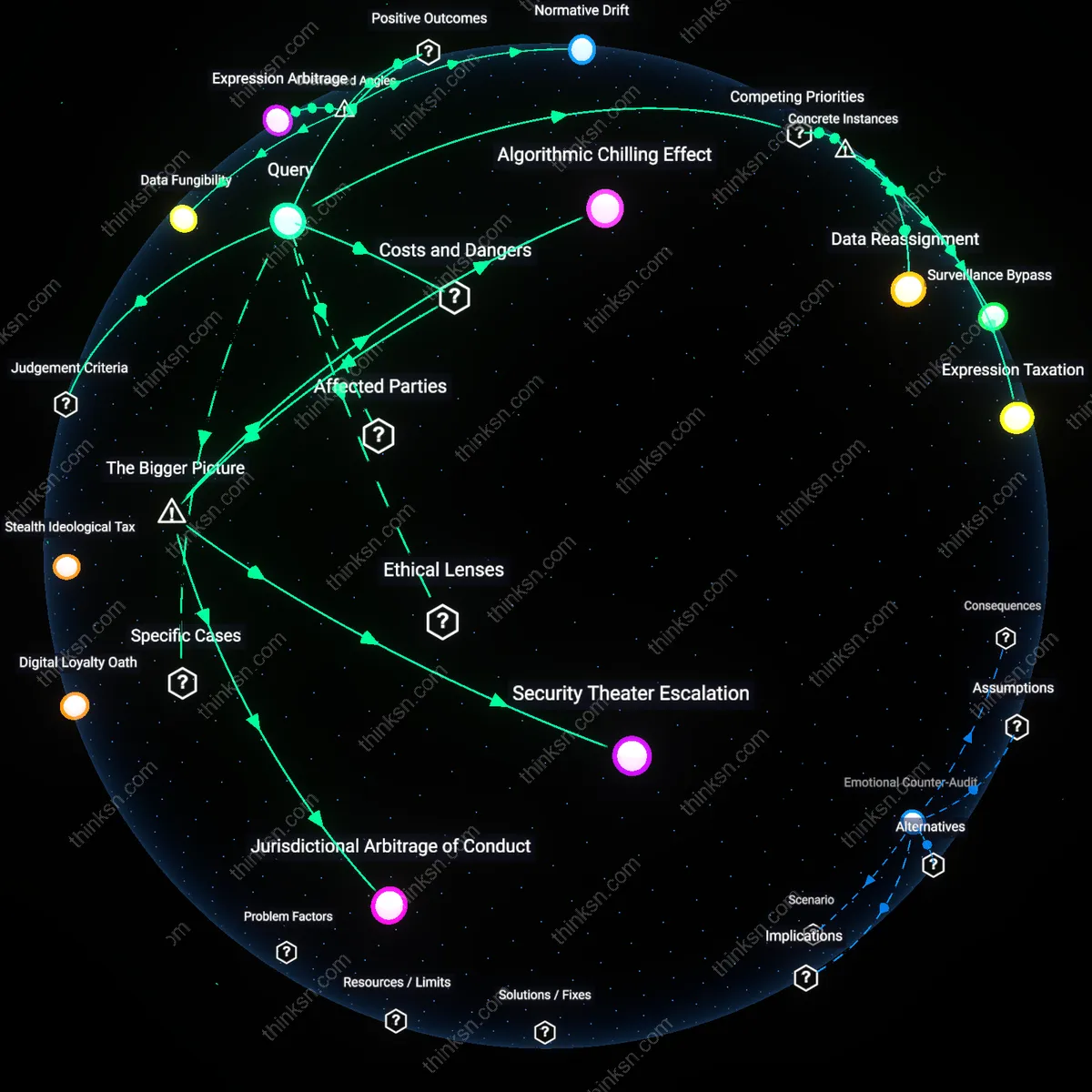

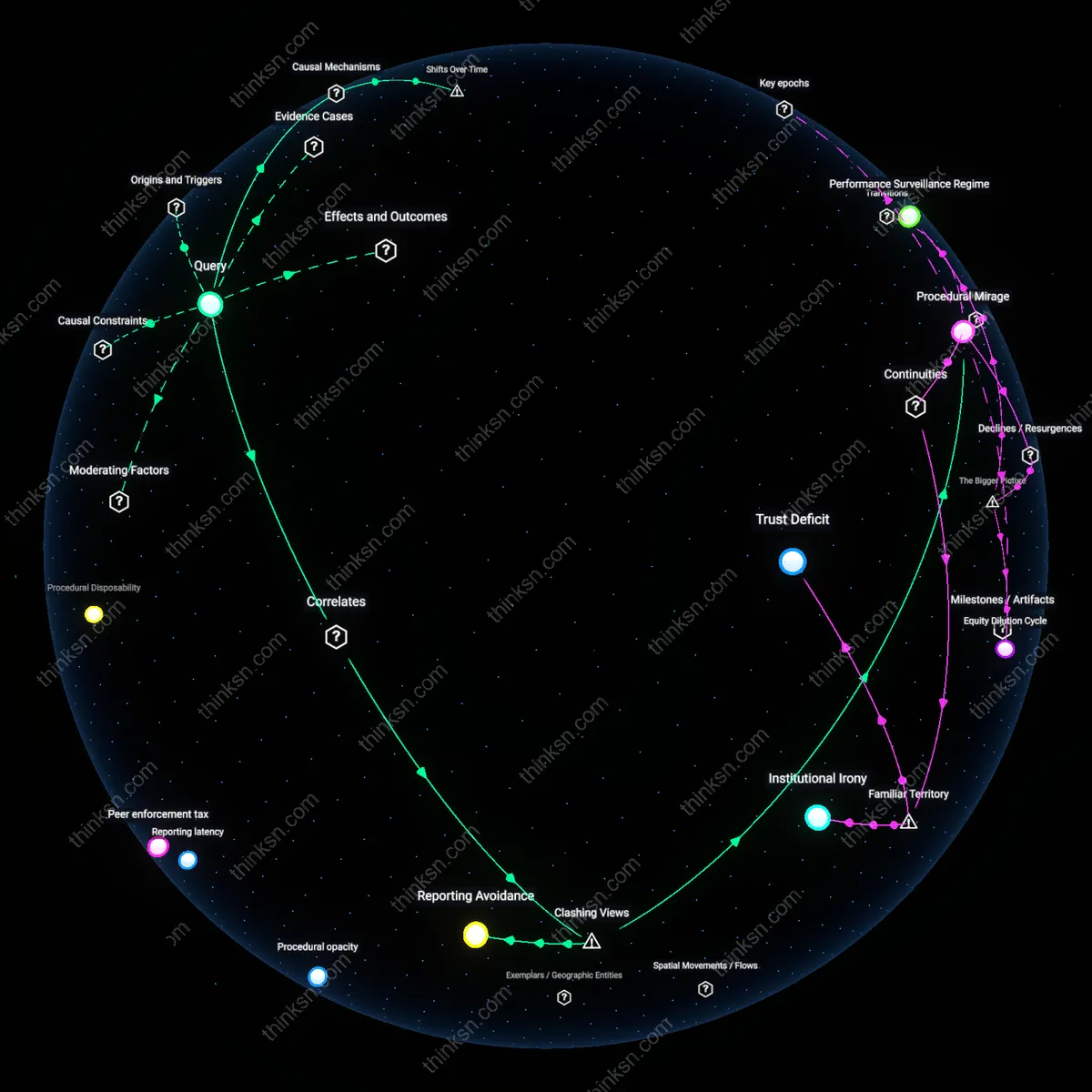

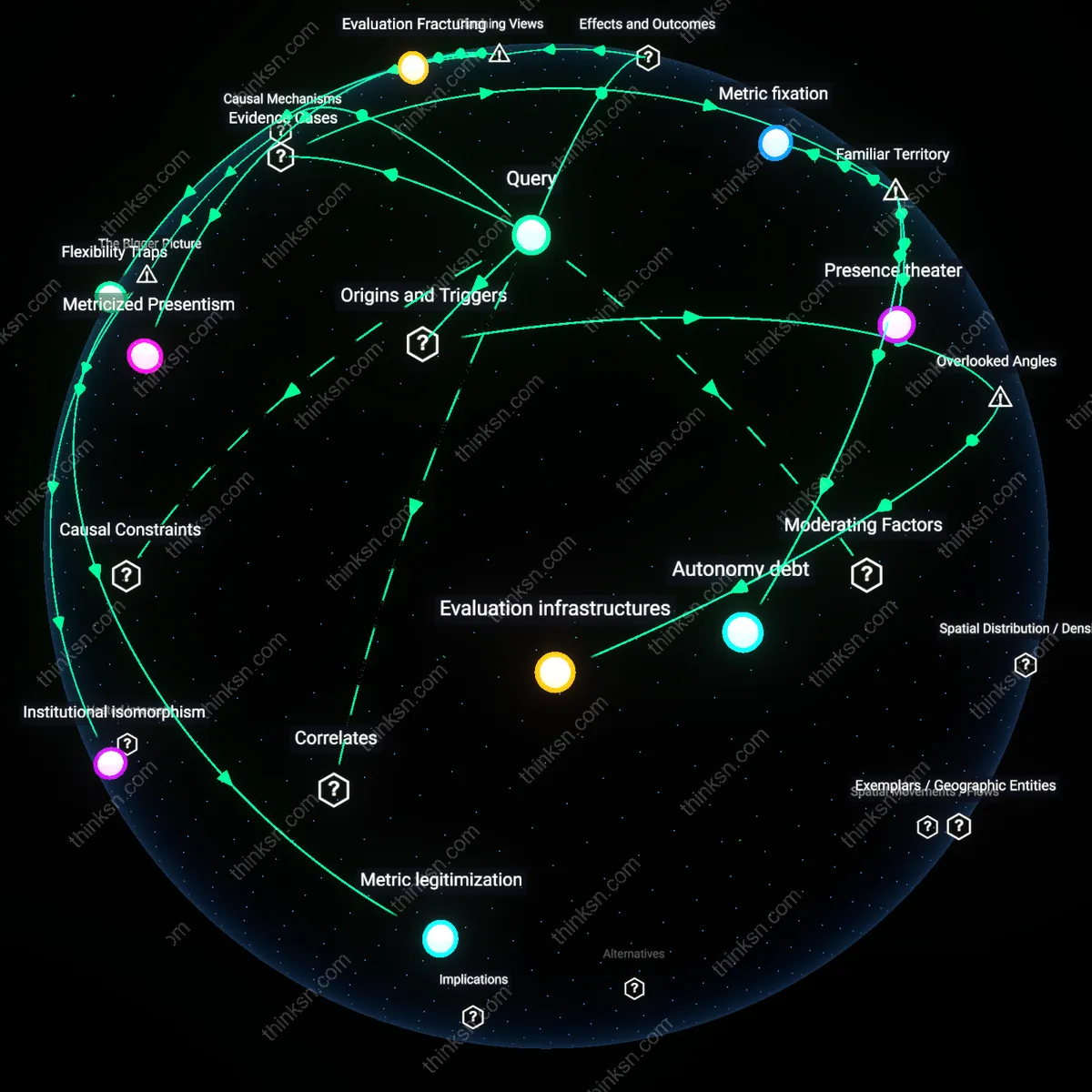

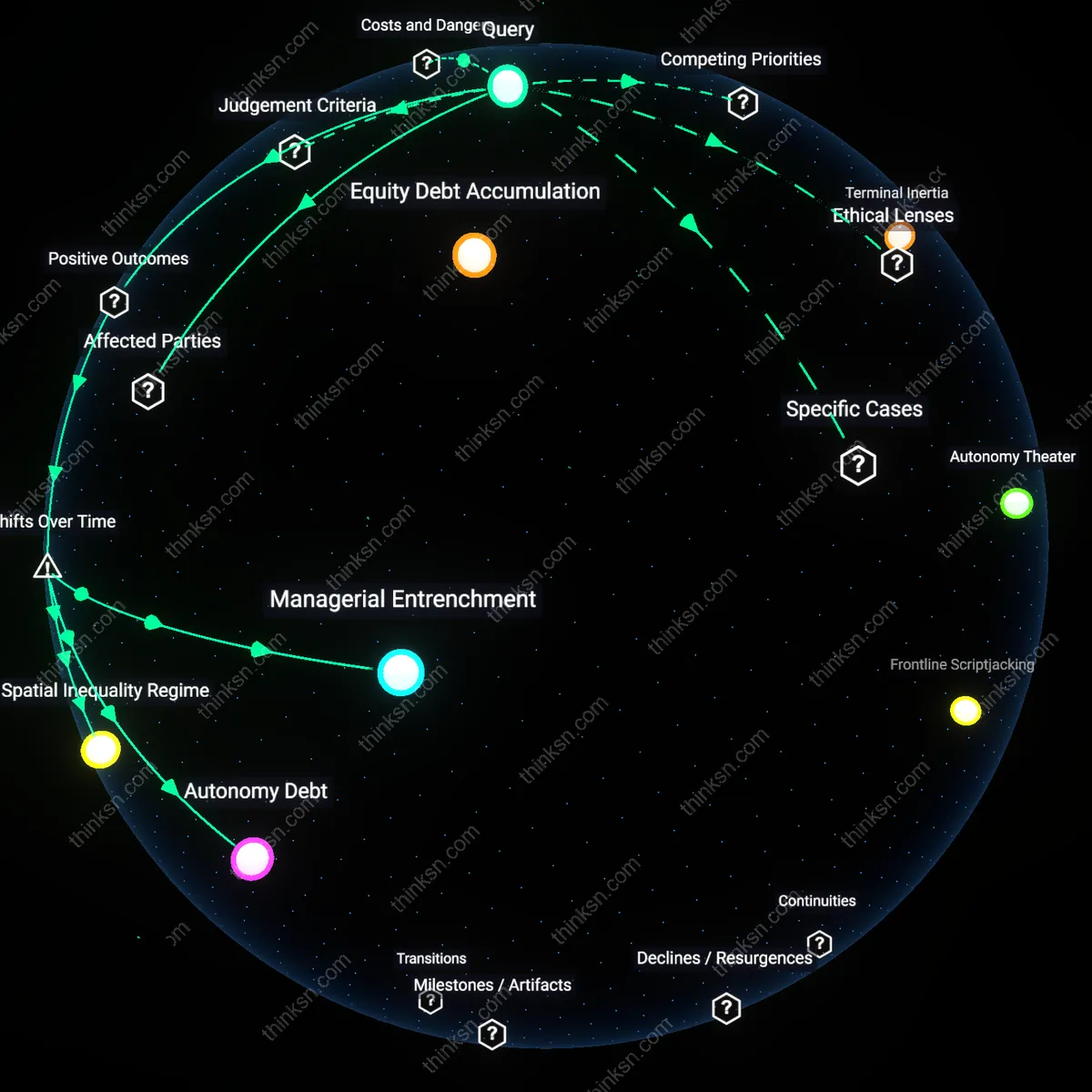

Analysis reveals 12 key thematic connections.

Key Findings

Digital Loyalty Oath

AI-driven behavioral monitoring by employers functions as a digital loyalty oath, where the expectation of political neutrality becomes a covert demand for ideological conformity. Corporations deploy algorithmic surveillance to flag language or associations deemed disruptive, not necessarily illegal, converting vague risk thresholds into operational mandates enforced through HR workflows and data pipelines. This reveals a normative shift in which organizational loyalty overrides the principle of personal autonomy. The shift is particularly insidious because it operates preemptively: employees self-censor not because of stated rules but because of the opaque logic of predictive systems. The non-obvious consequence is that political expression is no longer challenged as dissent but managed as a cybersecurity-like threat vector, aligning workplace compliance with post-9/11 security paradigms.

Stealth Ideological Tax

The use of AI to monitor employee behavior imposes a stealth ideological tax, where workers implicitly pay for employment stability by suppressing politically charged speech, even outside work hours. Employers justify this through the economic yardstick of brand protection and investor confidence, leveraging machine learning systems trained on social media to infer affiliations that may alienate customer bases or trigger controversy. What remains underappreciated is that this tax is regressive—disproportionately affecting lower-wage workers without communications teams or legal shields—turning personal expression into a privilege of seniority. The familiar frame of 'company culture fit' masks this as soft management, when in practice it enforces a hidden ideological equilibrium.

Behavioral Containment Field

AI-driven monitoring constructs a behavioral containment field, where the practical yardstick of operational continuity legitimizes the erosion of democratic expression within private workplaces. Drawing on counterinsurgency logic adapted from military intelligence, firms treat employee sentiment as a terrain to be mapped and stabilized, using natural language processing to detect 'radicalization pathways' in communications. This reframes political speech not as a right but as a precursor to disruption, aligning corporate governance with national security epistemologies. The underappreciated reality is that these systems don’t merely respond to threats—they produce the normative baseline against which all expression is measured, making dissent statistically deviant.
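
The claim that a monitoring system produces its own baseline can be illustrated with a minimal sketch. It assumes a hypothetical per-employee score (messages per 100 that touch political topics) and a simple z-score rule; the team data, the `flagged` helper, and the 1.8 threshold are all invented for illustration and do not describe any real product.

```python
from statistics import mean, pstdev

def deviance_scores(rates):
    """Z-score of each person's rate against the group's own mean and spread."""
    values = list(rates.values())
    mu = mean(values)
    sigma = pstdev(values)
    if sigma == 0:
        # Everyone behaves identically, so nobody deviates.
        return {person: 0.0 for person in rates}
    return {person: (rate - mu) / sigma for person, rate in rates.items()}

def flagged(rates, threshold=1.8):
    """People whose rate lies more than `threshold` deviations from the group mean."""
    scores = deviance_scores(rates)
    return sorted(p for p, z in scores.items() if abs(z) > threshold)

# Hypothetical data: political-topic messages per 100 messages sent.
quiet_team = {"a": 0, "b": 1, "c": 0, "d": 1, "e": 6}
talkative_team = {"a": 5, "b": 6, "c": 7, "d": 5, "e": 6}

print(flagged(quiet_team))      # "e" stands out against a silent baseline
print(flagged(talkative_team))  # the same rate of 6 is unremarkable here
```

In this toy setup the rule never refers to any fixed standard of acceptable speech. The same behavior is flagged or ignored depending only on how restrained everyone else already is, which is the sense in which the baseline is produced rather than discovered.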

Data Fungibility

AI-driven behavioral monitoring enables employers to repurpose security-collected data for talent development by identifying patterns in employee collaboration and communication that correlate with innovation, thereby transforming surveillance outputs into organizational learning inputs. This occurs when HR analytics teams cross-reference monitored metadata—such as meeting frequency, response latency, and network centrality—with performance outcomes, revealing previously invisible pathways for upskilling and team design. The overlooked dynamic is that data collected for control becomes structurally fungible, serving generative purposes once decoupled from disciplinary intent, which challenges the zero-sum framing of privacy versus security.
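
A minimal sketch of the cross-referencing step described above, assuming a hypothetical list of who-messaged-whom pairs and hypothetical performance ratings. It computes degree centrality (one simple form of network centrality) and its Pearson correlation with the ratings; the names, data, and choice of metric are assumptions for illustration, not a description of any actual HR analytics pipeline.

```python
from collections import defaultdict
from statistics import mean, pstdev

def degree_centrality(edges):
    """Fraction of the other people each person has exchanged messages with."""
    neighbors = defaultdict(set)
    for a, b in edges:
        if a == b:
            continue  # ignore self-loops
        neighbors[a].add(b)
        neighbors[b].add(a)
    n = len(neighbors)
    if n < 2:
        return {person: 0.0 for person in neighbors}
    return {person: len(ns) / (n - 1) for person, ns in neighbors.items()}

def pearson(xs, ys):
    """Pearson correlation coefficient; returns 0.0 if either series is constant."""
    mx, my = mean(xs), mean(ys)
    sx, sy = pstdev(xs), pstdev(ys)
    if sx == 0 or sy == 0:
        return 0.0
    cov = mean([(x - mx) * (y - my) for x, y in zip(xs, ys)])
    return cov / (sx * sy)

# Hypothetical communication metadata and performance ratings.
edges = [("ana", "ben"), ("ana", "cy"), ("ana", "dee"), ("ben", "cy")]
performance = {"ana": 4.5, "ben": 3.5, "cy": 3.5, "dee": 2.0}

centrality = degree_centrality(edges)
people = sorted(centrality)
r = pearson([centrality[p] for p in people], [performance[p] for p in people])
print(centrality)
print(round(r, 2))
```

Only metadata enters the calculation: no message content is read. That is what makes the same monitored records usable for both disciplinary and developmental purposes.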

Expression Arbitrage

Employees in politically sensitive industries use AI monitoring as a signal to calibrate and strategically relocate their political expression to off-platform, voice-mediated, or humor-coded forms of communication, thereby increasing the resilience of informal dissent networks. This adaptive behavior emerges in hybrid workplaces where algorithmic detection thresholds are known to favor text-based sentiment analysis, creating arbitrage opportunities in modality choice. The non-obvious consequence is that surveillance pressure doesn’t suppress expression but reshapes its linguistic and technical form, revealing expression as a dynamically allocable resource rather than a fixed right.

Normative Drift

Persistent behavioral monitoring shifts the baseline of acceptable political silence, not through coercion but through the gradual recalibration of peer expectations in monitored environments, where employees internalize observed restraint as social norm. In knowledge firms with high information transparency—such as consulting or tech—this normative drift manifests as self-censorship clustered around high-visibility digital channels, which then migrates unrecorded political discourse into physical or ephemeral digital spaces. The overlooked mechanism is that AI monitoring alters group-level expressive norms not by enforcement but by visibility, transforming privacy into a collectively produced artifact rather than an individual guarantee.

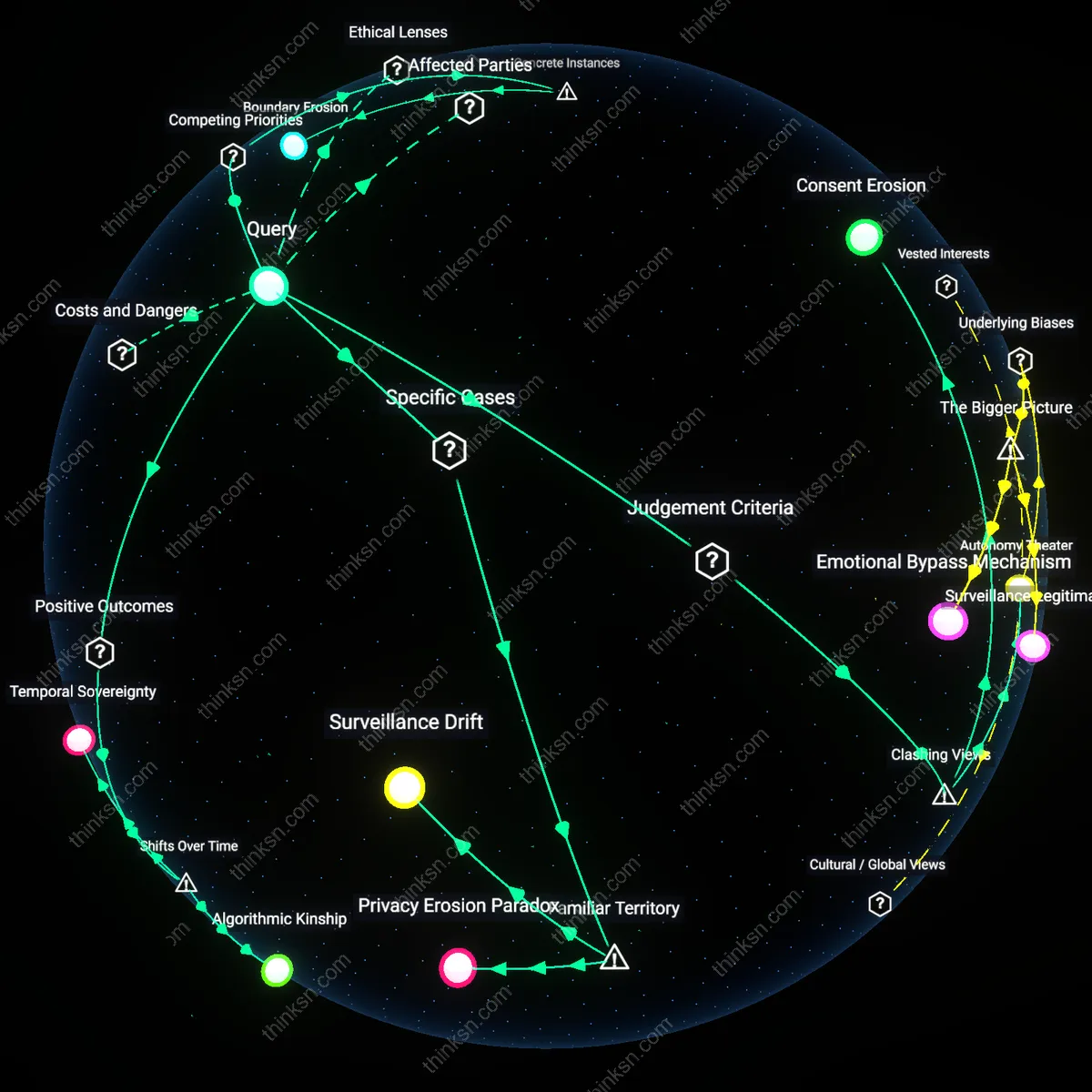

Algorithmic Chilling Effect

AI-driven behavioral monitoring suppresses employees' political expression by amplifying perceived surveillance risks, dissuading dissent even in legally protected contexts. When employers deploy pattern-recognition algorithms to flag 'risky' behavior—such as participation in protests or social media activity—employees internalize ambiguity about what constitutes a career liability, leading to preemptive self-censorship. This operates through the structural coupling of human resources risk management protocols with opaque machine-learning models trained on historically biased disciplinary data, creating a feedback loop where political neutrality becomes a de facto employment requirement. The non-obvious consequence is not overt punishment but the erosion of associative freedom through anticipatory compliance, driven not by policy but by the uncertainty engineered into monitoring systems.

Security Theater Escalation

The adoption of AI monitoring reflects organizational theater in which security procedures are expanded to signal control, regardless of actual threat reduction, displacing accountability onto employees’ personal conduct. Corporations, particularly in finance and tech sectors, face shareholder and regulatory pressure to demonstrate proactive threat mitigation after high-profile insider threat incidents, incentivizing the deployment of surveillance tools that create visible compliance artifacts. This dynamic emerges from the misalignment between diffuse, low-probability risks (e.g., radicalization) and the high visibility of surveillance expenditures, leading firms to prioritize performative security over substantive assessment of political expression as a non-threat. The underappreciated systemic cost is the inflation of threat ontology—where normal political variance is absorbed into security frameworks—fuelling a recursive demand for deeper monitoring.

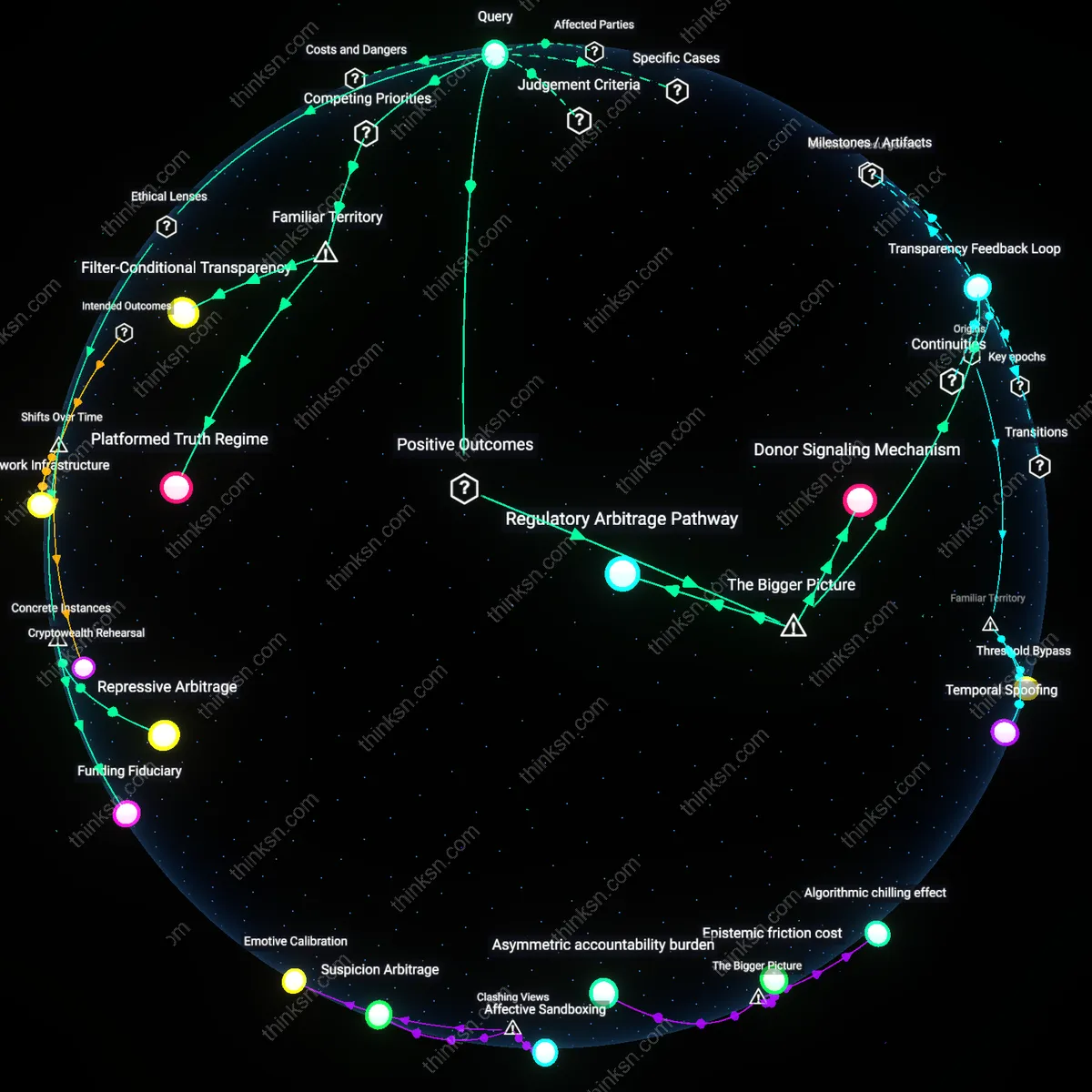

Jurisdictional Arbitrage of Conduct

Employers use AI monitoring to enforce conduct standards that circumvent legal protections by shifting regulation from public to private governance domains, where constitutional rights do not apply. Multinational corporations, especially those operating across jurisdictions with divergent speech laws (e.g., U.S. vs. EU vs. Southeast Asia), deploy centralized behavioral analytics to standardize employee conduct under internal codes of 'professionalism' or 'brand safety,' effectively overriding local legal allowances for political speech. This operates through the consolidation of compliance infrastructure in global HR platforms that treat political expression as a reputational risk, not a civic right, enabling firms to preemptively suppress speech that could trigger regulatory friction in any single market. The overlooked consequence is the quiet privatization of political liberty, where non-state actors impose transnational behavioral norms without democratic oversight.

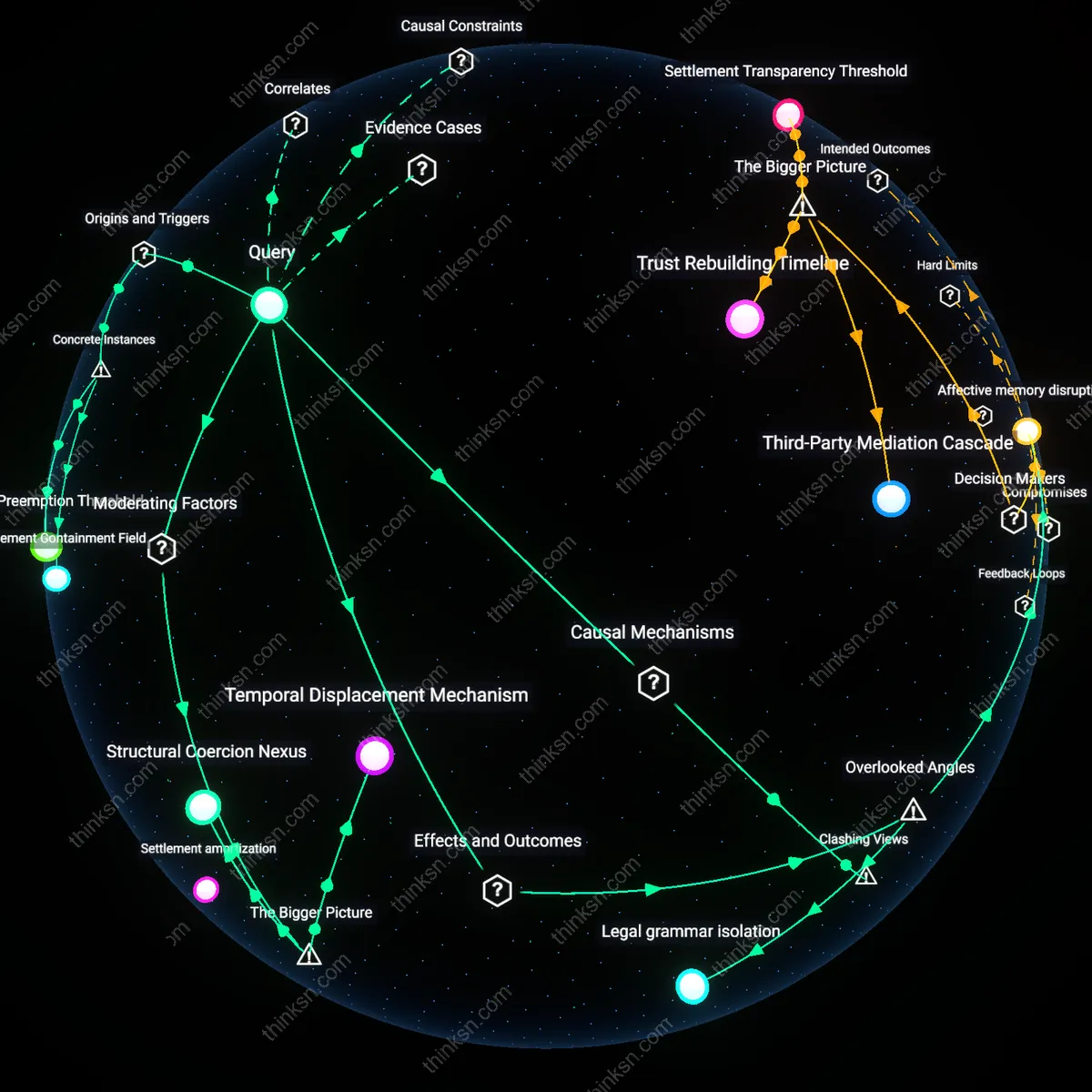

Surveillance Bypass

The deployment of AI-driven keystroke and sentiment analysis by Amazon warehouse supervisors in Baltimore in 2022 reveals that automated monitoring systems are designed not to prevent external security threats but to circumvent unionization efforts under the guise of operational integrity. The mechanism flags language patterns associated with labor organizing as 'security risks,' thereby redefining political expression as a breach of conduct and systematically suppressing dissent before it can formalize. What is underappreciated is that the security infrastructure is aimed less at external threats than at anticipatory gatekeeping of internal ideological alignment.

Expression Taxation

Hewlett-Packard’s use of AI-powered webcam monitoring in its China-based customer service divisions from 2019 to 2021 shows that facial recognition systems penalize employees for displaying insufficient 'positive affect' during client calls, effectively requiring the constant performance of apolitical emotional states. This system converts political passivity into a job requirement: any deviation, such as subdued expression during politically tense periods like the Hong Kong protests, triggers performance warnings. Security is thus recast as emotional compliance, and political neutrality becomes a monitored labor output rather than a personal right.

Data Reassignment

The rollout of Microsoft’s Workplace Analytics suite in the European branch offices of Unilever in 2020 enabled the repurposing of email metadata and calendar patterns to infer employee affiliations with internal advocacy groups, such as those pushing for climate action or racial equity. Although the tool was introduced under cybersecurity protocols for data leakage prevention, its behavioral clustering algorithms reclassified participation in DEI strategy meetings as 'non-core role activity,' effectively demoting politically engaged employees through algorithmic sidelining. What is rarely acknowledged is that behavioral data harvested for security becomes a covert mechanism for marginalizing dissent under performance optimization frameworks.