Faster Data Services or Risky Personal Info? Weighing the Trade-offs

Analysis reveals 5 key thematic connections.

Key Findings

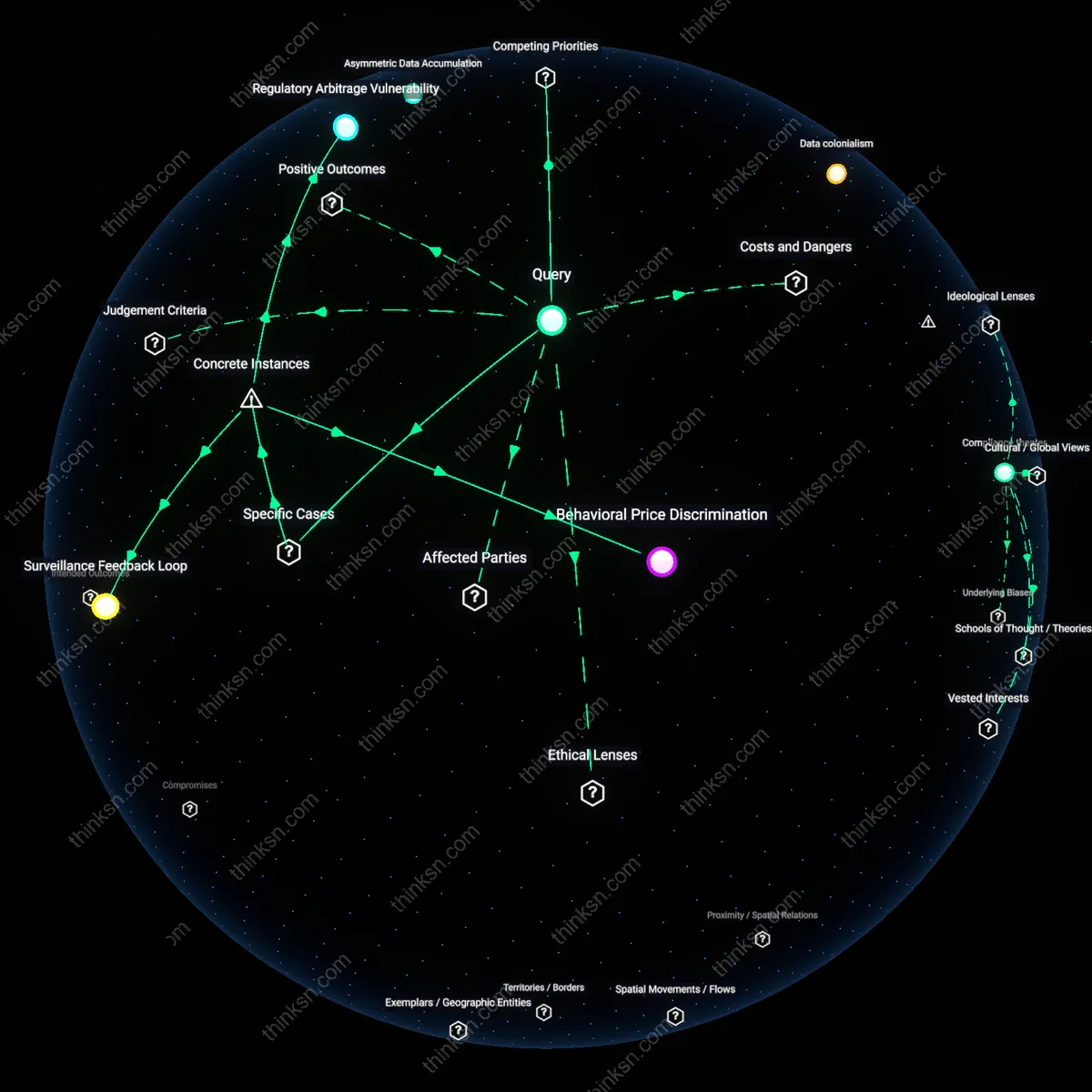

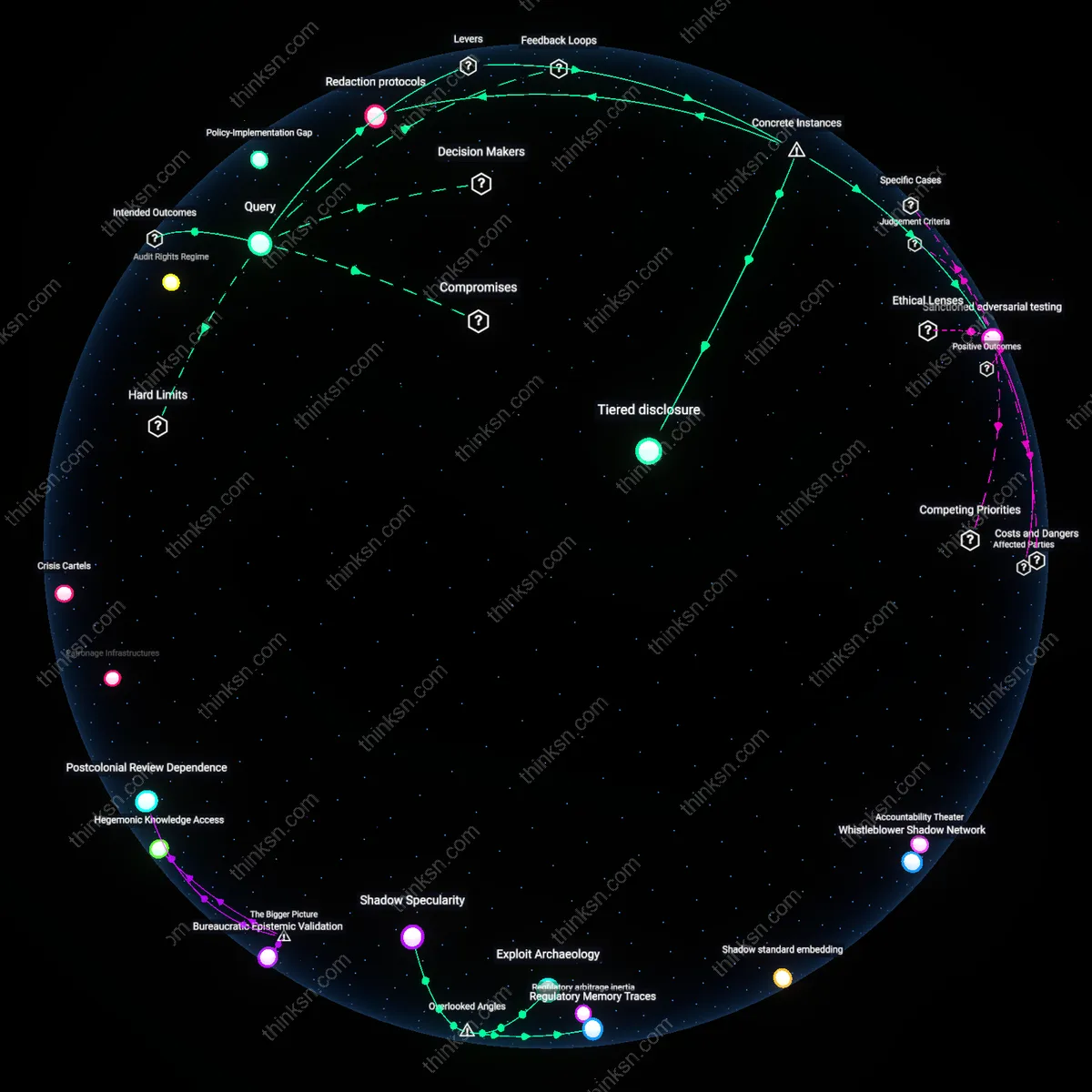

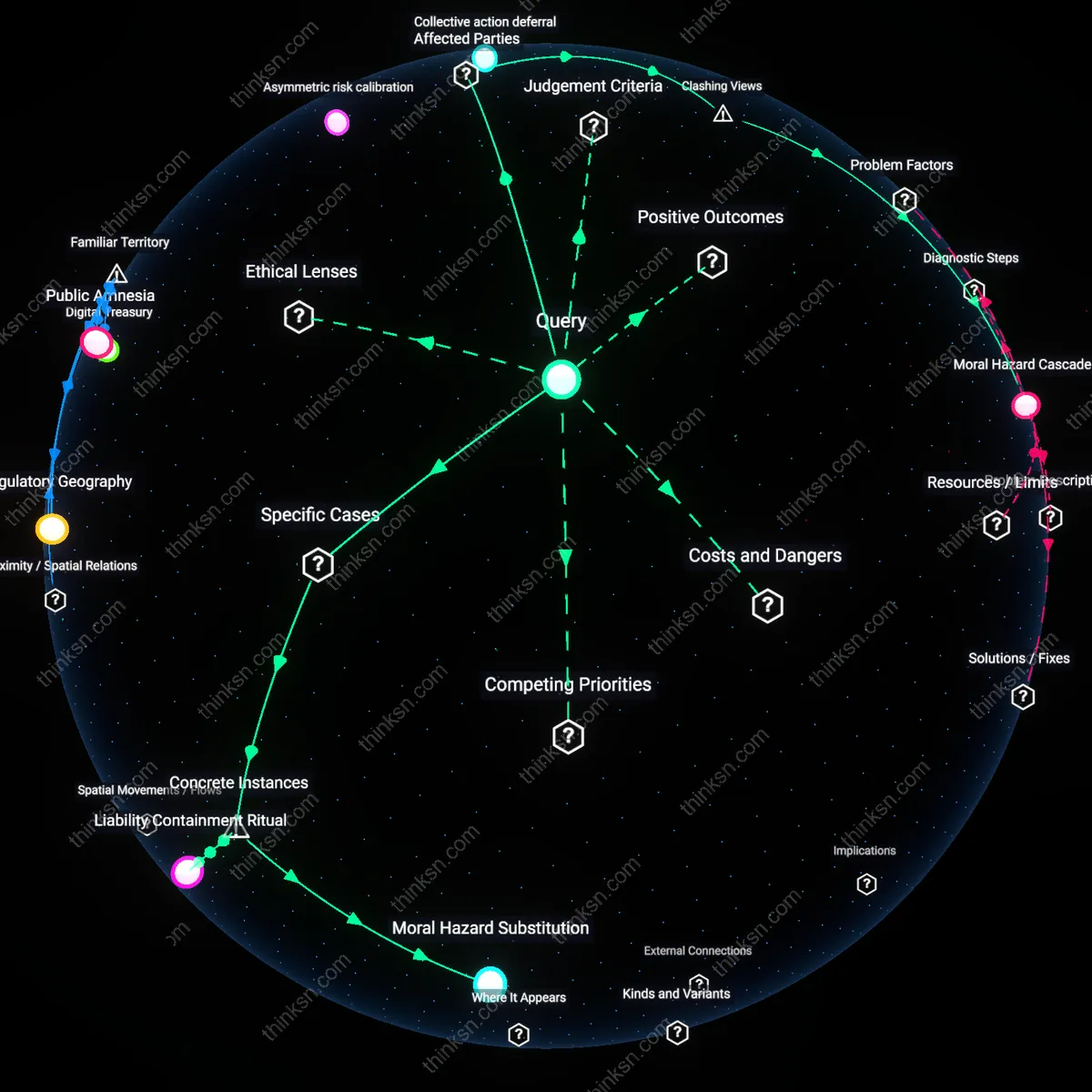

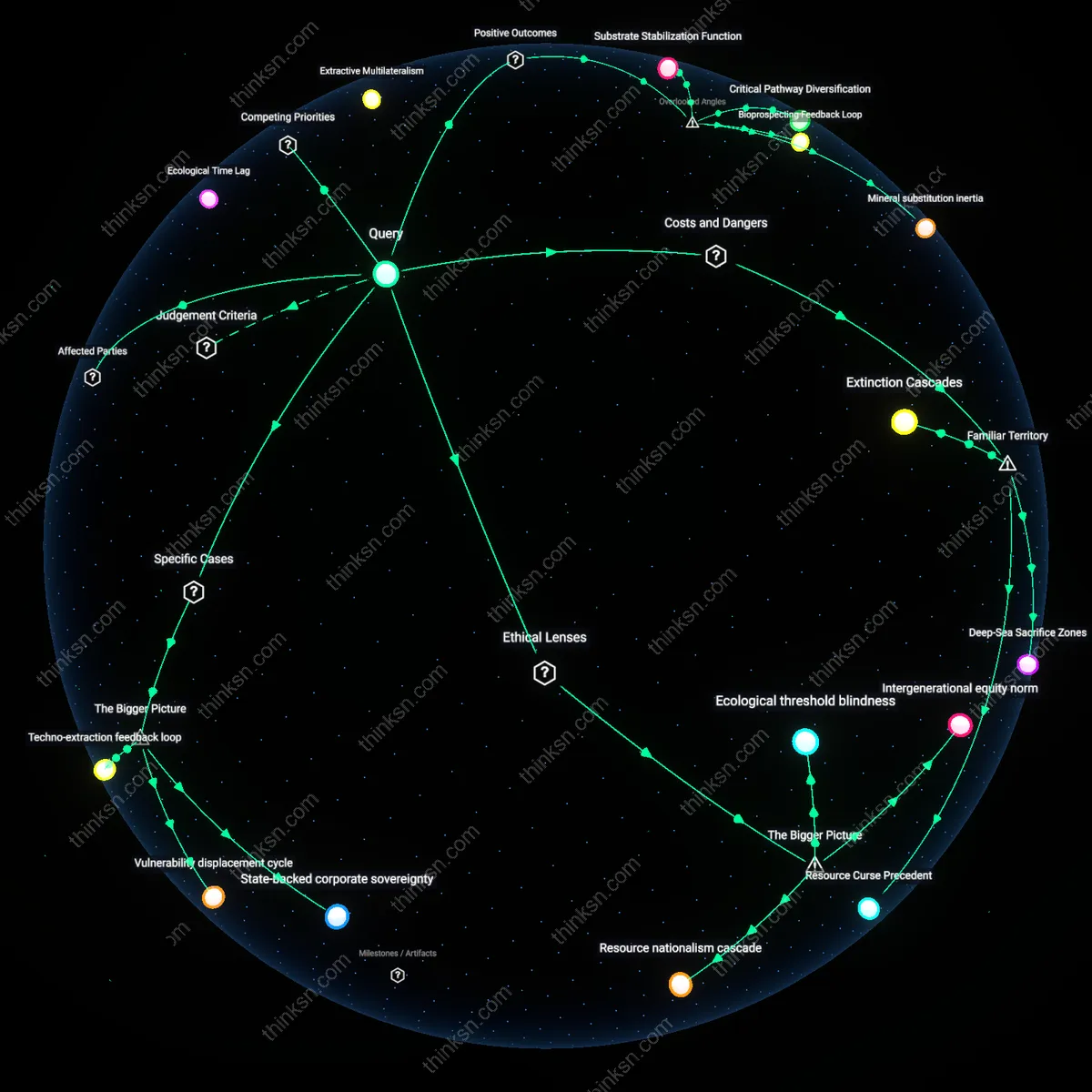

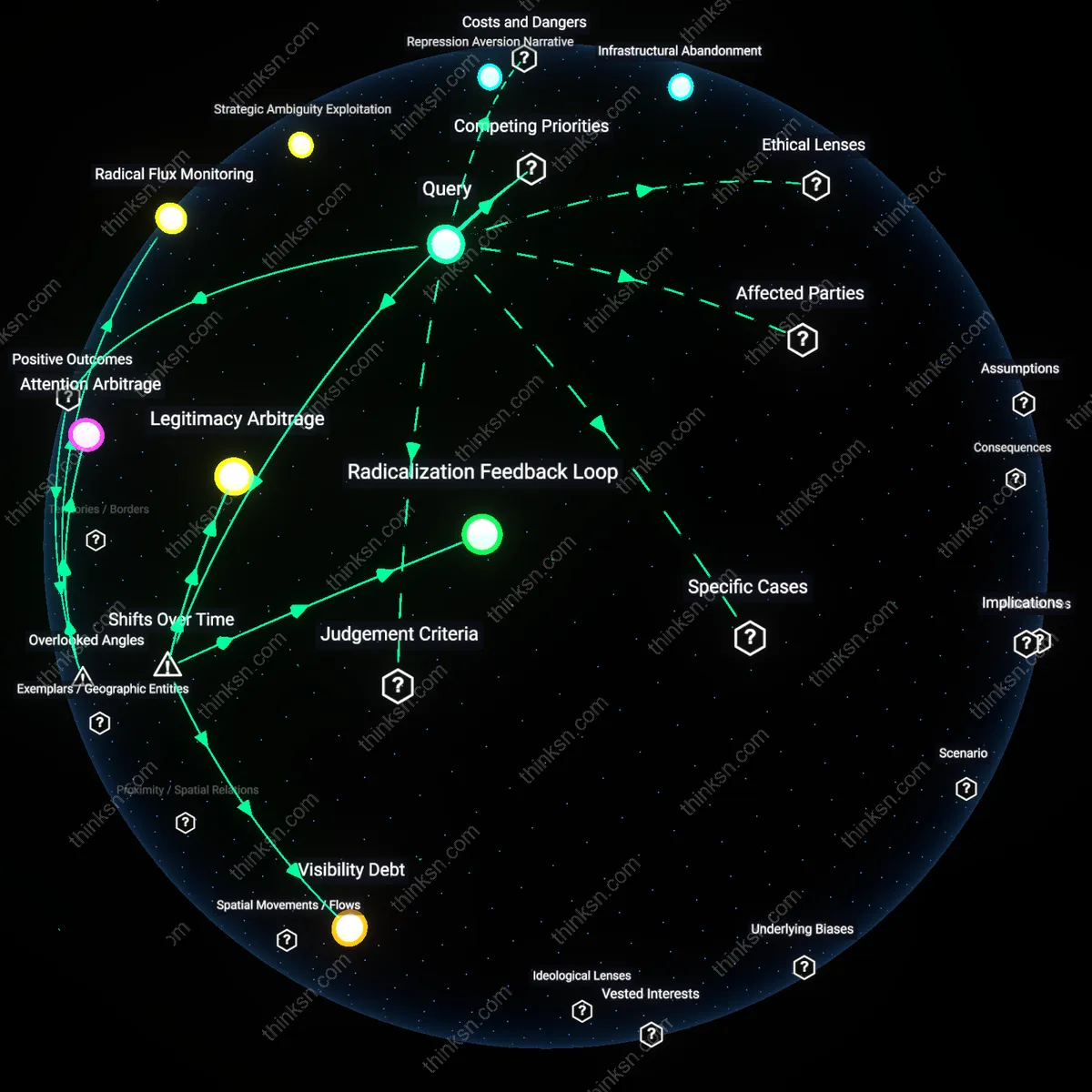

Regulatory Arbitrage Incentive

Consumers cannot meaningfully balance service speed against the risk of data misuse, because platform operators exploit jurisdictional fragmentation to minimize compliance costs while maximizing data extraction. A race to the bottom in privacy regulation, among states and nations competing for tech investment, reinforces this dynamic. Tech firms route data processing through regions with weaker enforcement (Ireland for US multinationals, Singapore for cross-Asia platforms), which lets them deploy services faster without equivalent accountability and shifts the burden of risk onto individuals who lack legal recourse or transparency. The result is a systemic bias in which consumer 'choice' is illusory: the options are pre-shaped by corporate legal engineering rather than by market or ethical equilibrium. The underappreciated force here is not consumer behavior but the structural advantage that lightly regulated zones give platforms in scaling globally while avoiding unified oversight.

Asymmetric Data Accumulation

The trade-off between speed and privacy is inherently skewed. Data-mining platforms accelerate their services not through isolated data points but through continuous, ambient surveillance of user behavior, and that data becomes effectively irreversible once it is aggregated into proprietary behavioral models. Platforms like Amazon or TikTok refine their recommendation engines by bundling explicit actions (purchases, clicks) with implicit signals (dwell time, scroll speed). This creates feedback loops that deepen dependency on faster personalization while making opt-outs costly in time and convenience. The accumulation operates silently, beyond any momentary act of consent, and embeds surveillance into the usability of the service itself. The overlooked mechanism is that speed becomes addictive not because it improves isolated transactions, but because the system learns to anticipate needs before users articulate them. Protecting one's privacy then carries a hidden cost: the time and cognitive effort of disengaging from a service that already predicts what the user wants.

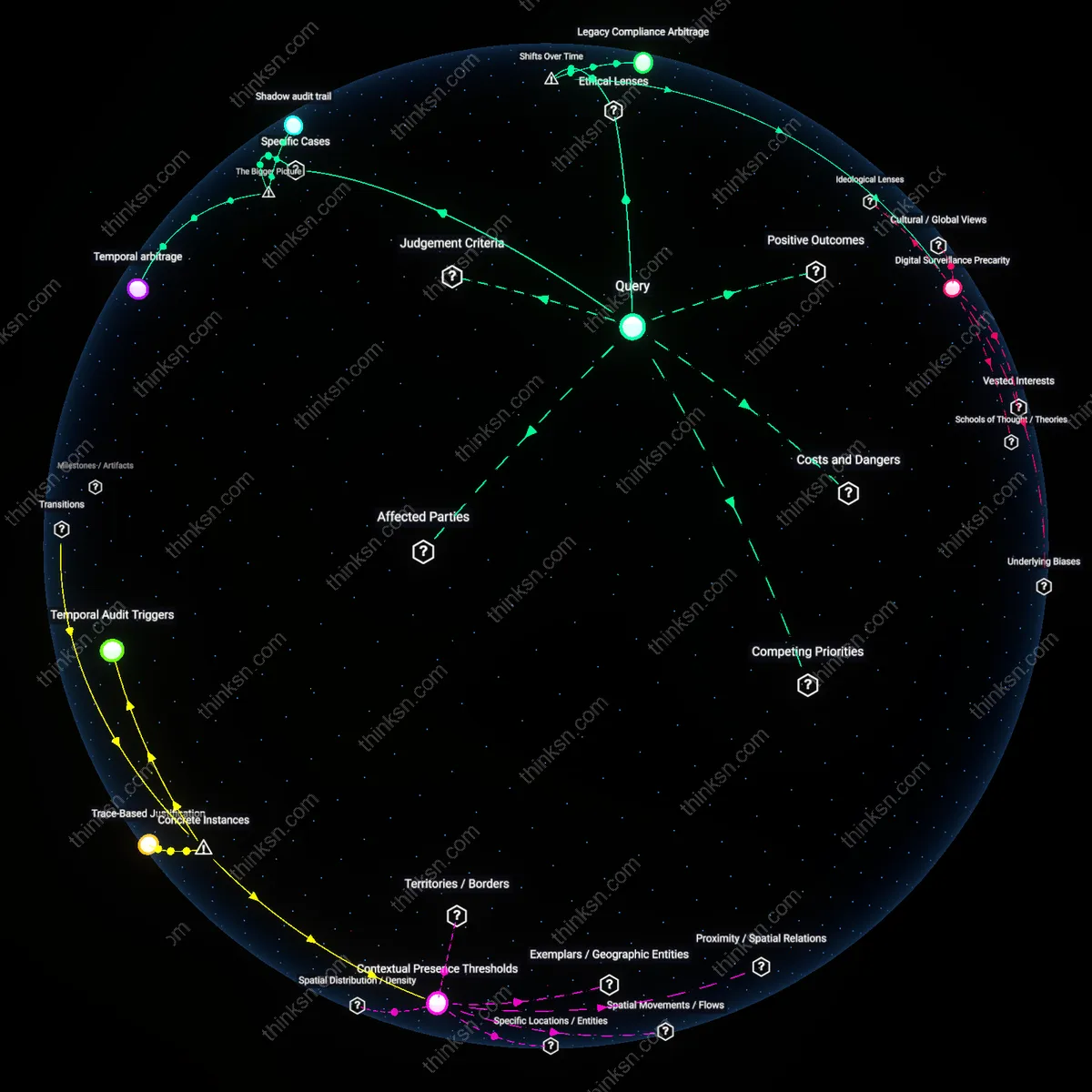

Regulatory Arbitrage Vulnerability

Consumers in the European Union experience stronger protection against data misuse not because platforms inherently limit data-mining, but because GDPR forces companies like Meta to adopt opt-in consent architectures for services such as targeted advertising on Facebook. This demonstrates that faster service built on data-mining is only safely balanced when jurisdictions impose enforceable constraints. It also reveals a structural weakness: because regulatory stringency differs across regions, firms optimize for lax environments and thereby transfer risk to users in less regulated markets.

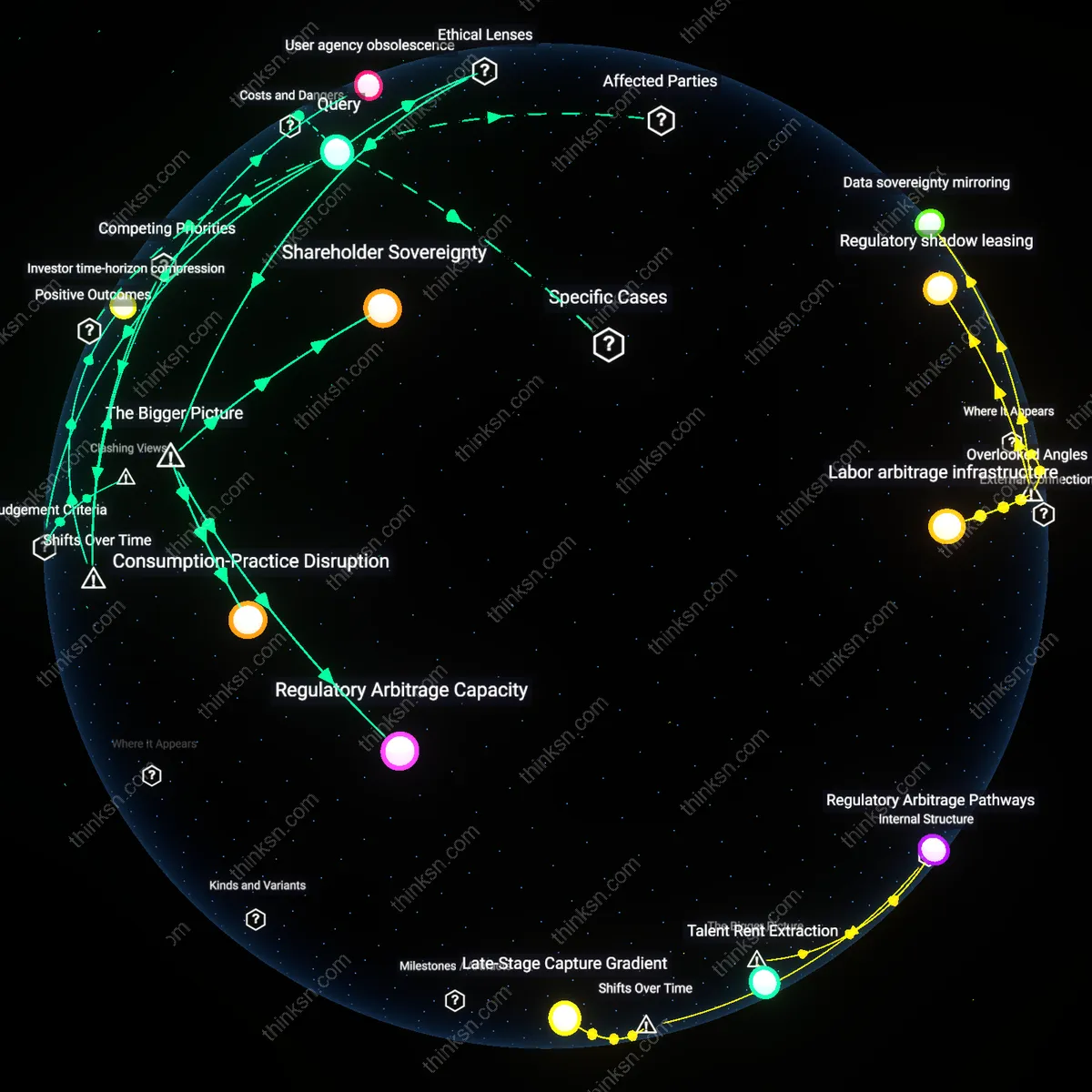

Behavioral Price Discrimination

Amazon’s dynamic pricing engine, which adjusts product prices in real time using signals such as browsing history and purchase patterns, illustrates how data-mining accelerates service personalization while covertly enabling differential pricing. Consumers may accept faster, tailored recommendations without realizing that the same data is being used to estimate and extract their maximum willingness to pay. The hidden cost of this perceived convenience operates through algorithmic inference rather than explicit transaction terms.
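To make the mechanism concrete, here is a minimal Python sketch of how behavioral signals could be turned into a per-user price. It is purely illustrative: the `Shopper` fields, the weights, and the function names are hypothetical assumptions, and nothing here describes how Amazon or any real retailer actually sets prices.

```python
from dataclasses import dataclass


@dataclass
class Shopper:
    """Hypothetical behavioral profile; the fields are illustrative only."""
    views_of_item: int     # how often the shopper has looked at this product
    past_purchases: int    # number of earlier orders
    abandoned_cart: bool   # whether the item was previously left in the cart


def estimated_willingness(base_price: float, shopper: Shopper) -> float:
    """Toy inference of willingness to pay from behavioral signals.

    The weights are invented for illustration, not taken from any real system.
    """
    multiplier = 1.0
    # Repeated views are treated as a sign of strong interest (capped at 5 views).
    multiplier += 0.02 * min(shopper.views_of_item, 5)
    # Frequent buyers are assumed to be less price-sensitive (capped at 10 orders).
    multiplier += 0.01 * min(shopper.past_purchases, 10)
    # An abandoned cart is treated as a sign of price sensitivity.
    if shopper.abandoned_cart:
        multiplier -= 0.10
    return base_price * multiplier


def personalized_price(base_price: float, shopper: Shopper) -> float:
    """Quote a price at the inferred willingness to pay, rounded to cents."""
    return round(estimated_willingness(base_price, shopper), 2)


eager = Shopper(views_of_item=5, past_purchases=10, abandoned_cart=False)
hesitant = Shopper(views_of_item=1, past_purchases=0, abandoned_cart=True)

print(personalized_price(100.0, eager))     # higher quote for the engaged shopper
print(personalized_price(100.0, hesitant))  # lower quote for the hesitant shopper
```

Both shoppers see the same product and the same interface. Only the quoted price differs, and neither is told which signals produced it, which is why the cost stays hidden inside the convenience.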

Surveillance Feedback Loop

The Chicago Police Department’s use of predictive policing algorithms, which draw on data-mined civilian records and social network analyses to anticipate crime hotspots, has led to over-policing in predominantly Black neighborhoods like Englewood, where inaccurate risk profiles feed back into expanded data collection. This case reveals that faster operational outcomes from data-mining systems can entrench systemic bias when those systems are rewarded for speed rather than for accuracy and accountability. The effect is to normalize invasive data use under the guise of public safety efficiency.
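The feedback loop described above can be sketched as a small deterministic simulation. This is a toy model under stated assumptions, not a reconstruction of any system used in Chicago: the two districts, the function `simulate_hotspot_feedback`, and all numbers are hypothetical. It assumes both districts have the same underlying incident rate and that incidents are only recorded where a patrol is present.

```python
def simulate_hotspot_feedback(initial_records, true_rate, days):
    """Send the daily patrol to whichever district has the most recorded incidents.

    Incidents are only recorded where the patrol is present, so the records
    measure police attention as much as they measure underlying crime.
    """
    records = list(initial_records)
    for _ in range(days):
        # The district with the most records is flagged as the "hotspot".
        target = records.index(max(records))
        # Only the patrolled district adds new records, even though the
        # underlying rate is assumed to be identical everywhere.
        records[target] += true_rate
    return records


# Two hypothetical districts with the SAME underlying incident rate;
# district 0 merely starts with one more historical record.
result = simulate_hotspot_feedback(initial_records=[11, 10], true_rate=1, days=100)
print(result)  # [111, 10]
```

A one-record head start turns into a gap of more than a hundred, because the data the model learns from is produced by its own earlier predictions. Real deployments are far more complex, but the sketch shows why an inaccurate initial profile can grow rather than wash out.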