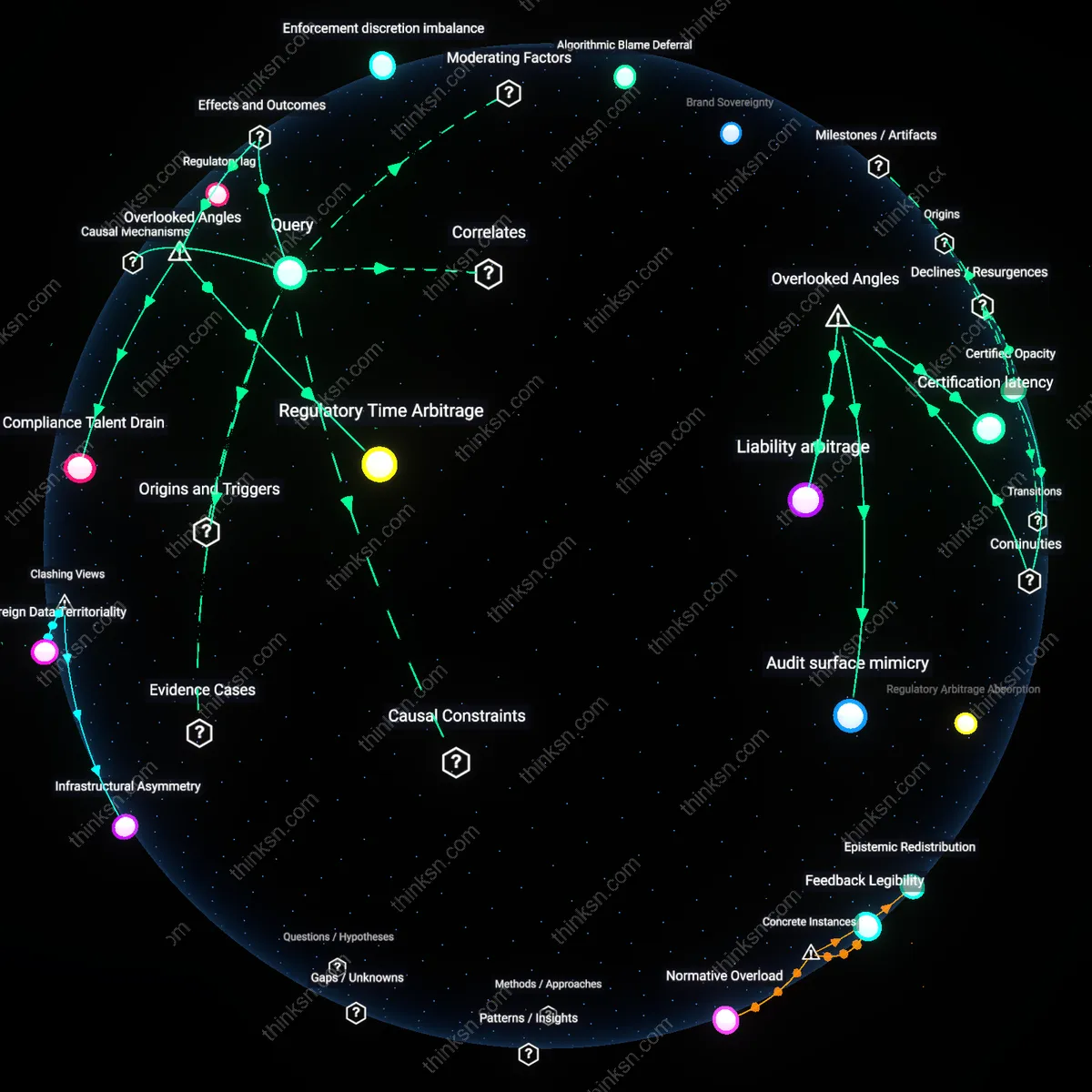

Is Biometric Finance Worth the Privacy Risk?

Analysis reveals 12 key thematic connections.

Key Findings

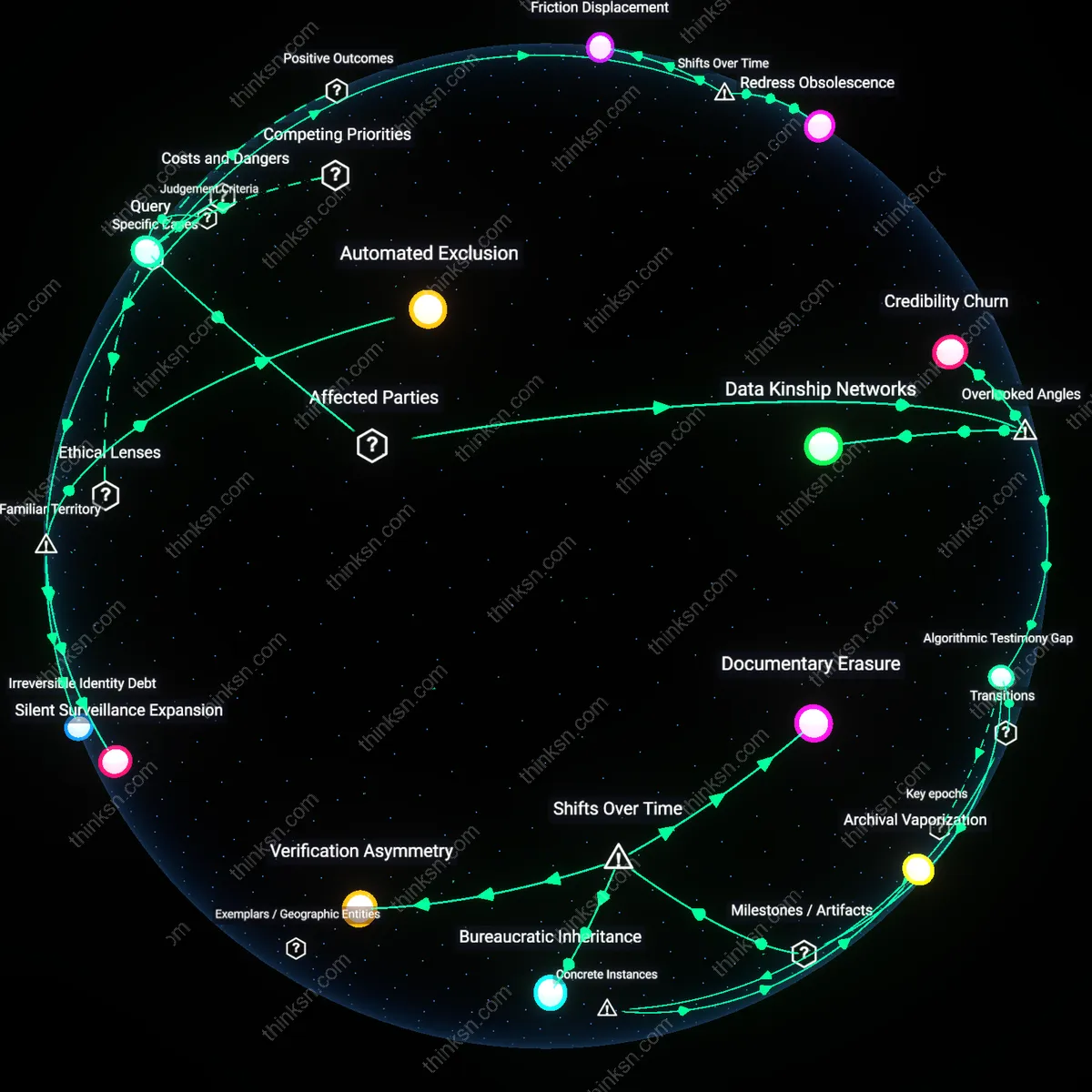

Credibility Churn

Requiring biometric authentication on private financial platforms undermines the long-term credibility of financial inclusion initiatives by systematically eroding trust among low-income urban populations in Global South megacities. Because false matches cannot be appealed through accessible mechanisms, users in informal economies—such as street vendors in Lagos or rickshaw drivers in Dhaka—disengage from formal financial ecosystems not due to distrust of banks per se, but because biometric systems conflate identity verification with surveillance exposure, making non-use a rational act of self-preservation. This dynamic is overlooked in ethical debates that focus on accuracy rates rather than the cumulative attrition of system legitimacy across repeated micro-interactions. The residual concept is the erosion of institutional trust not through single catastrophic failures, but through the steady accumulation of uncorrectable minor exclusions.

Data Kinship Networks

Biometric authentication systems on private financial platforms create ethical hazards by rupturing extended family financial practices in rural Indigenous communities where identity is relationally rather than individually defined. In regions like the Zapotec highlands of Oaxaca or Māori enclaves in Aotearoa, financial decisions and creditworthiness are tied to kinship signaling and communal recognition—biometric mismatches that cannot be corrected sever these invisible layers of social collateral, effectively penalizing users for not conforming to atomized identity models. Standard ethics frameworks miss this because they treat identity verification as a technical interface rather than a cultural contract. The overlooked mechanism is how biometric systems destabilize interdependent credit ecosystems that predate formal banking, rendering entire lineages financially illegible.

Algorithmic Testimony Gap

The inability to correct false biometric matches denies users in post-conflict societies the capacity to assert personhood in systems that increasingly mediate basic economic agency, transforming authentication failures into silences that mimic state erasure. In places like Sierra Leone or Eastern DR Congo, where survivors of wartime displacement rely on financial platforms to rebuild their lives without official ID, a false match becomes an unchallengeable verdict on existence, one that replicates the epistemic violence of prior regimes. This dimension is missed because mainstream discourse assumes user recourse exists unless proven otherwise, ignoring how the lack of appeal functions as a form of testimony suppression. The residual concept is the absence of evidentiary return loops in automated identity regimes.

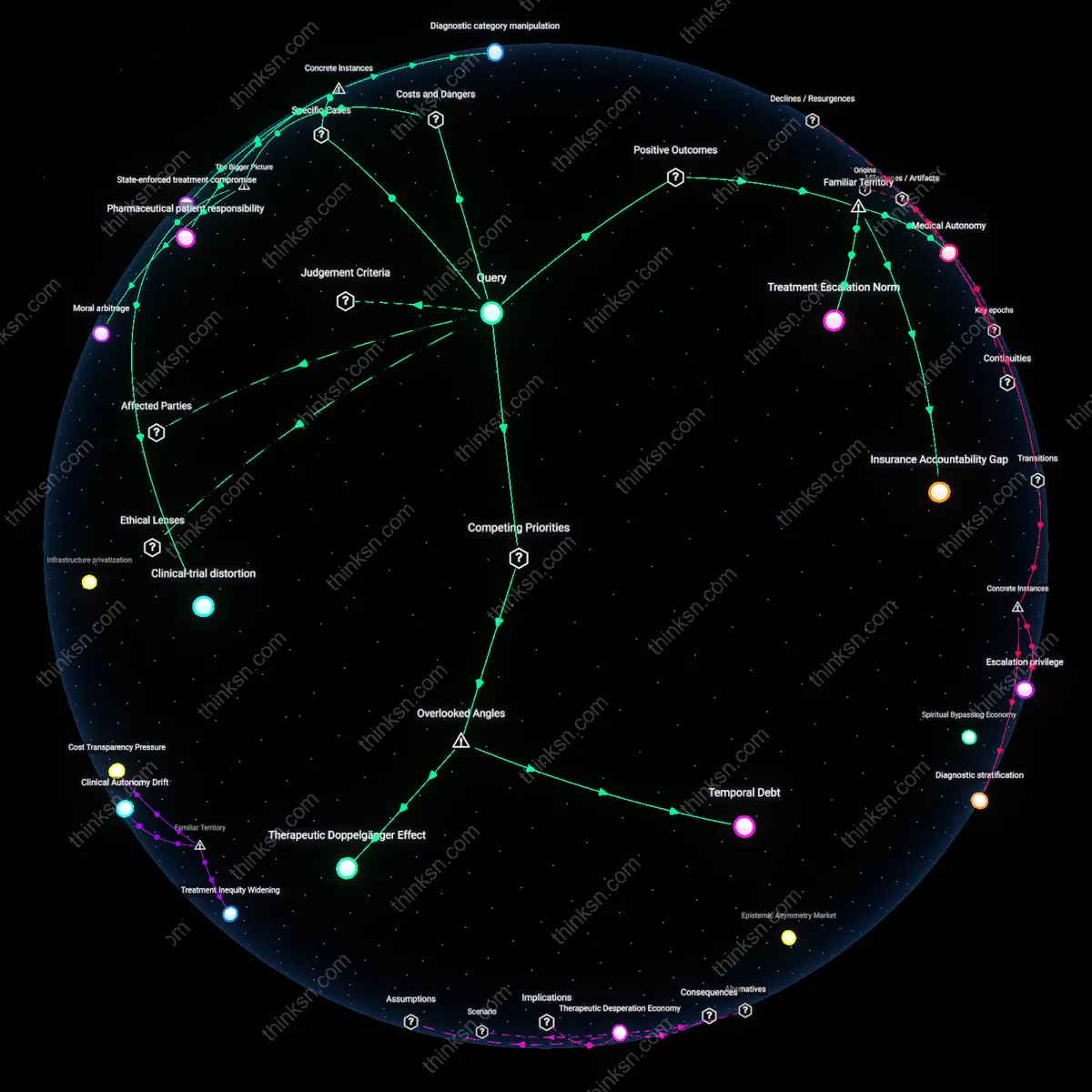

Asymmetric Redress Burden

Requiring biometric authentication on private financial platforms cannot be ethically justified when users lack recourse against false matches. Aadhaar-linked bank de-duplication errors in India between 2016 and 2019 demonstrate the problem: rural welfare recipients were denied access to funds because of fingerprint misreads that system operators refused to override without biometric re-verification. The episode reveals a systemic collapse of accountability when automated exclusions outpace human remediation, particularly among marginalized populations with no fallback channels.

Consent Obfuscation

Biometric authentication in private financial systems is ethically unjustifiable when false matches cannot be appealed. Mastercard’s 2020 ‘Selfie Pay’ rollout in the UK illustrates this: users were enrolled in facial recognition by implied consent through general terms of service, leaving them no way to opt out or to dispute authentication errors when they occurred. The case exposes how user ‘consent’ functions as a regulatory shield rather than a meaningful safeguard of autonomy when error correction is structurally absent.

Infrastructural Moral Hazard

Mandatory biometrics on financial platforms fail ethically when they lack correction mechanisms. JPMorgan Chase’s internal biometric login system, in use from 2018 onward, locked out employees after three iris-scan mismatches while maintaining no review board or appeal path, privileging system integrity over individual access. This demonstrates how private actors internalize security as a technical outcome while externalizing ethical risk onto users, especially when auditing is treated as an operational cost rather than a moral obligation.
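The difference between a lockout with and without an appeal path can be sketched in a few lines. This is a hypothetical illustration, not a description of any real institution's system: the names `Account`, `record_scan`, `request_review`, and `resolve_review` are invented for the example, and the three-mismatch threshold simply mirrors the case described above.

```python
from dataclasses import dataclass
from enum import Enum

MAX_MISMATCHES = 3  # assumed threshold, mirroring the three-strike case above


class Status(Enum):
    ACTIVE = "active"
    LOCKED = "locked"
    UNDER_REVIEW = "under_review"


@dataclass
class Account:
    user_id: str
    mismatches: int = 0
    status: Status = Status.ACTIVE


def record_scan(account: Account, matched: bool) -> Status:
    """Apply one scan result; lock after MAX_MISMATCHES consecutive failures."""
    if account.status is not Status.ACTIVE:
        return account.status  # scans are ignored while locked or under review
    if matched:
        account.mismatches = 0
    else:
        account.mismatches += 1
        if account.mismatches >= MAX_MISMATCHES:
            account.status = Status.LOCKED
    return account.status


def request_review(account: Account) -> Status:
    """Hypothetical appeal path: a locked user asks for a human check."""
    if account.status is Status.LOCKED:
        account.status = Status.UNDER_REVIEW
    return account.status


def resolve_review(account: Account, identity_confirmed: bool) -> Status:
    """A human reviewer verifies identity by other means and decides."""
    if account.status is Status.UNDER_REVIEW:
        if identity_confirmed:
            account.mismatches = 0
            account.status = Status.ACTIVE
        else:
            account.status = Status.LOCKED
    return account.status
```

If `request_review` and `resolve_review` are removed, `LOCKED` becomes a terminal state: no sequence of user actions leads back to `ACTIVE`, which is the condition the paragraph criticizes.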

Automated Exclusion

Requiring biometric authentication on private financial platforms inevitably subjects low-income and elderly users to permanent account lockouts when systems falsely reject their scans, especially as these groups exhibit higher rates of degraded biometrics due to manual labor or medication. Financial institutions outsource identity verification to algorithms that lack appeal pathways, creating irreversible barriers to access even when users are legitimate. This mechanism transforms technical error rates into systemic social exclusion, disproportionately affecting those already marginal to banking infrastructure. The non-obvious reality is that the most trusted security feature—biometrics—becomes a gatekeeper not of fraud, but of vulnerability, rendering human oversight irrelevant in the face of unchallengeable machine decisions.
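How a per-scan error rate turns into near-certain exclusion for some users can be shown with simple arithmetic. The sketch below is illustrative only. It assumes a three-strike lockout, treats scans as independent (real failures from worn fingerprints are likely correlated, which would make matters worse), and uses hypothetical false-rejection rates rather than measurements from any real system.

```python
def lockout_probability(frr: float, strikes: int = 3) -> float:
    """Chance that a single login session ends in lockout,
    i.e. `strikes` false rejections in a row (independence assumed)."""
    return frr ** strikes


def cumulative_lockout_probability(frr: float, sessions: int, strikes: int = 3) -> float:
    """Chance of at least one lockout across `sessions` independent sessions."""
    per_session = lockout_probability(frr, strikes)
    return 1.0 - (1.0 - per_session) ** sessions


if __name__ == "__main__":
    # Hypothetical false-rejection rates: a typical user versus a user
    # whose fingerprints are worn by manual labor.
    for label, frr in [("typical user", 0.02), ("degraded biometrics", 0.20)]:
        p = cumulative_lockout_probability(frr, sessions=500)
        print(f"{label}: FRR={frr:.2f}, P(lockout within 500 sessions)={p:.3f}")
```

Under these assumed numbers, a tenfold difference in per-scan error rate becomes a far larger difference in lockout risk, because the per-session lockout chance scales with the cube of the error rate and then compounds over every session.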

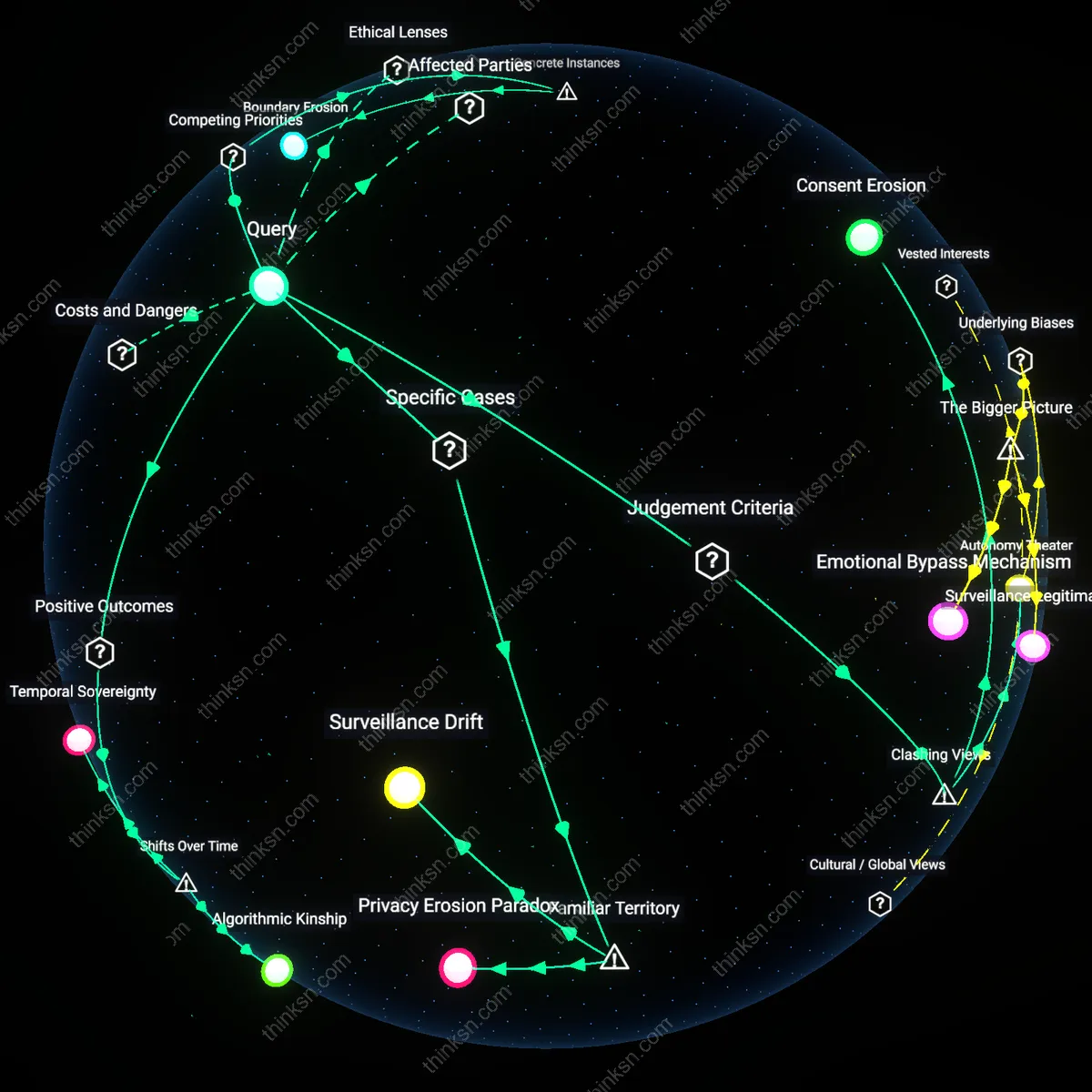

Silent Surveillance Expansion

Biometric enrollment on financial platforms establishes a covert pipeline for government and third-party access to physiological identifiers under the guise of anti-fraud compliance, where data-sharing agreements with identity verification startups link directly to national security networks. Once collected, these biometric templates are rarely deleted and often repurposed beyond their original context, embedding financial services into broader surveillance ecosystems without user knowledge. The danger lies not in overt misuse but in the quiet normalization of permanent physiological tracking through routine transactions. The underappreciated reality is that the most familiar risk—identity theft—is being leveraged to justify a far larger, invisible expansion of state and corporate biometric monitoring.

Irreversible Identity Debt

False biometric mismatches accumulate over time into an untraceable ledger of authentication failures that permanently degrade a user’s standing in automated credit and risk assessment systems, even when identity is later confirmed through alternate means. Unlike passwords, biometric data cannot be reset, so failed matches become permanent markers of suspicion embedded in proprietary fraud algorithms across interconnected financial providers. This creates a hidden penalty system where identity itself becomes tainted by algorithmic error, with no process for redemption. The non-obvious cost is that users are punished not for wrongdoing, but for the technical limitations of systems they did not design, creating a new form of inescapable digital liability.

Consent Erosion

Requiring biometric authentication on private financial platforms cannot be ethically justified when users cannot correct false matches. The shift from opt-in biometric use to embedded, inescapable enrollment, as in India’s Aadhaar-linked banking, has transformed user consent from an active agreement into a procedural fiction. After the 2016 demonetization crisis, millions were funneled into biometric-dependent banking without recourse, making authentication a condition of economic survival rather than a voluntary choice. This erosion of exit and correction options reveals how growing dependence on infrastructure hollows out consent over time, particularly when redress mechanisms collapse under scale.

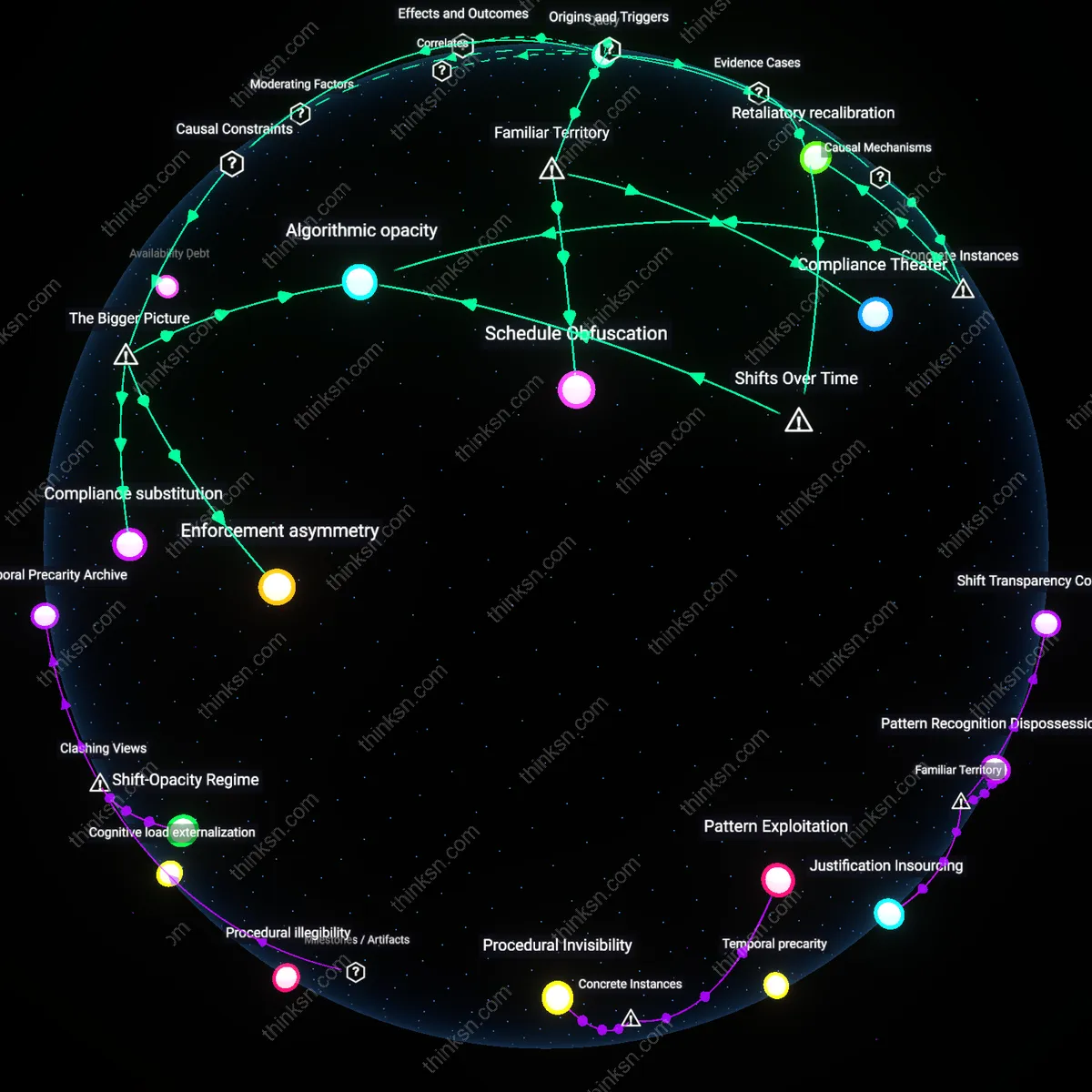

Friction Displacement

Biometric authentication on financial platforms became ethically unstable not when it was introduced but between 2013 and 2018, when major fintech firms such as Paytm and Mastercard shifted from treating false matches as edge cases to normalizing them as systemic friction, relocating the burden of error from the system operator to the marginalized user. This normalization followed the infrastructural scaling of real-time payment ecosystems in Southeast Asia, where speed trumped accuracy and the inability to correct false denials or false acceptances was made invisible by design. What was once a technical flaw became a managed cost, exposing how time-bound optimization pressures displace ethical friction onto the weakest nodes.

Redress Obsolescence

The ethical justification for biometric authentication on private financial platforms collapsed in the post-2020 era, when centralized biometric databases such as Clearview AI’s facial recognition network were repurposed by financial underwriting firms in the U.S. User correction became impossible not through a design flaw but through the intentional dissociation of identity verification from accountability. In the early 2000s, biometric use in banking was tied to local, auditable systems with human oversight; current architectures operate through opaque algorithmic chains in which the moment of misidentification cannot be retraced. This historical shift from traceable to black-boxed authentication produces a new condition in which redress mechanisms are structurally obsolete, not merely inadequate.