Therapy or Toxic Threads: Navigating Reddit's Impact on Mental Health

This analysis identifies four key thematic connections.

Key Findings

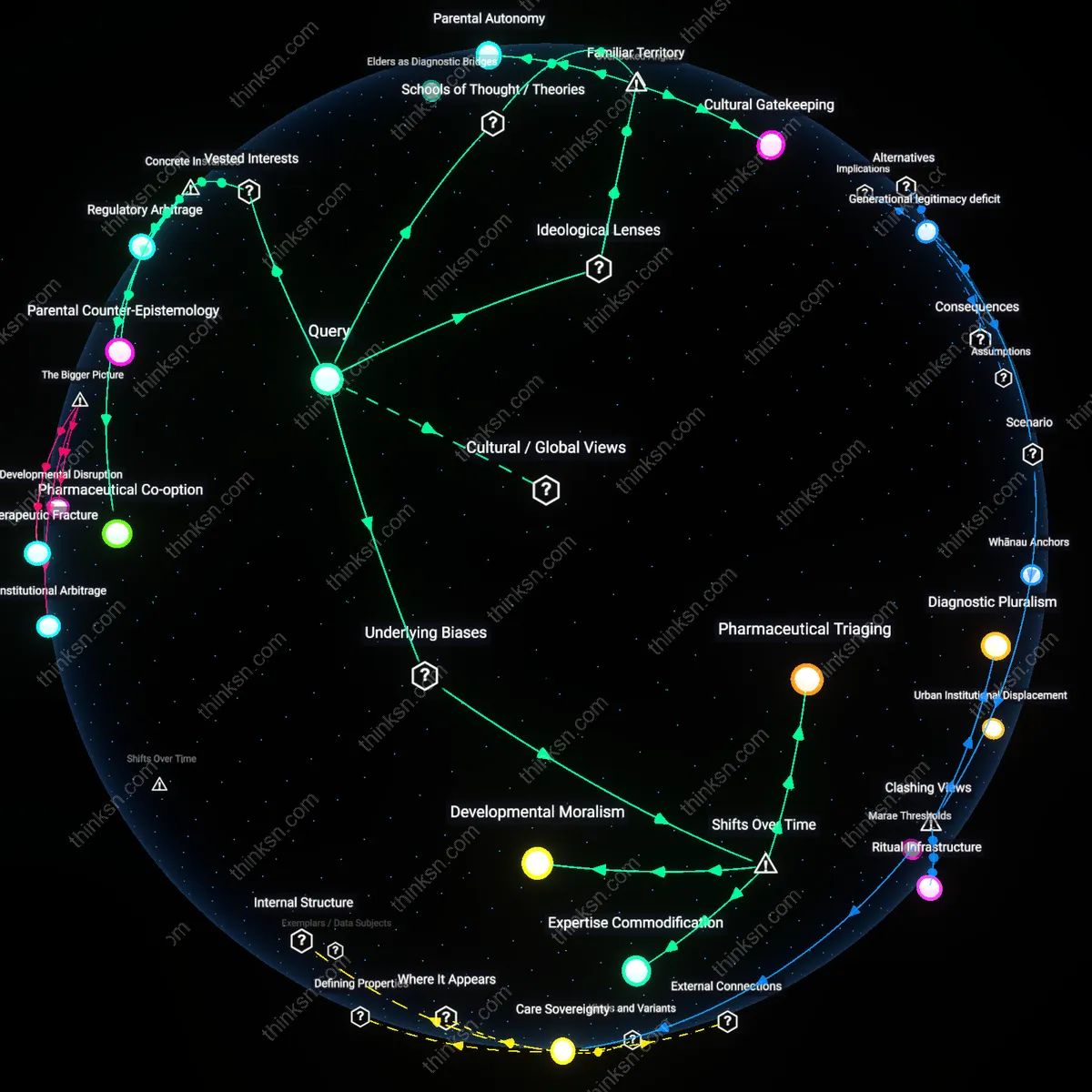

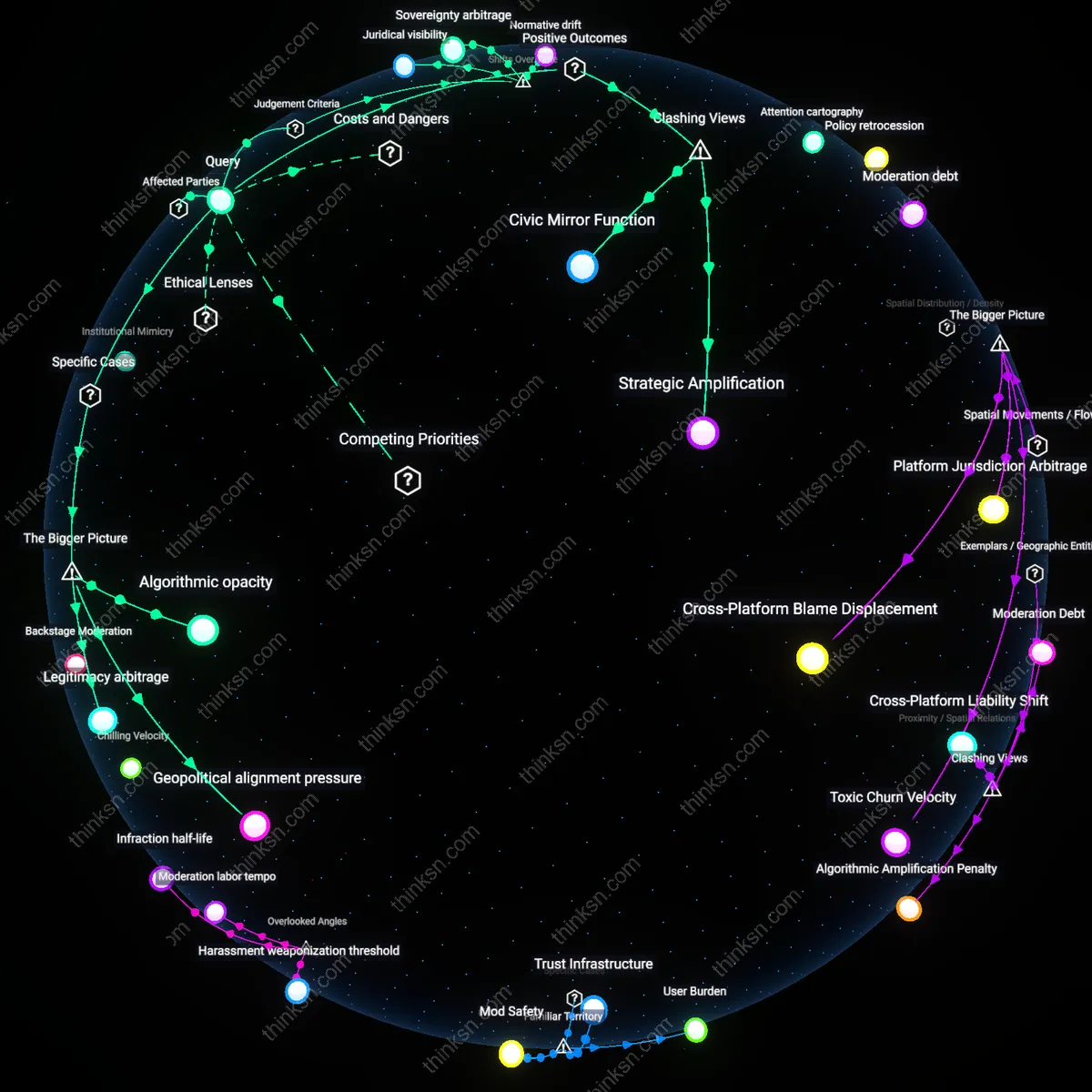

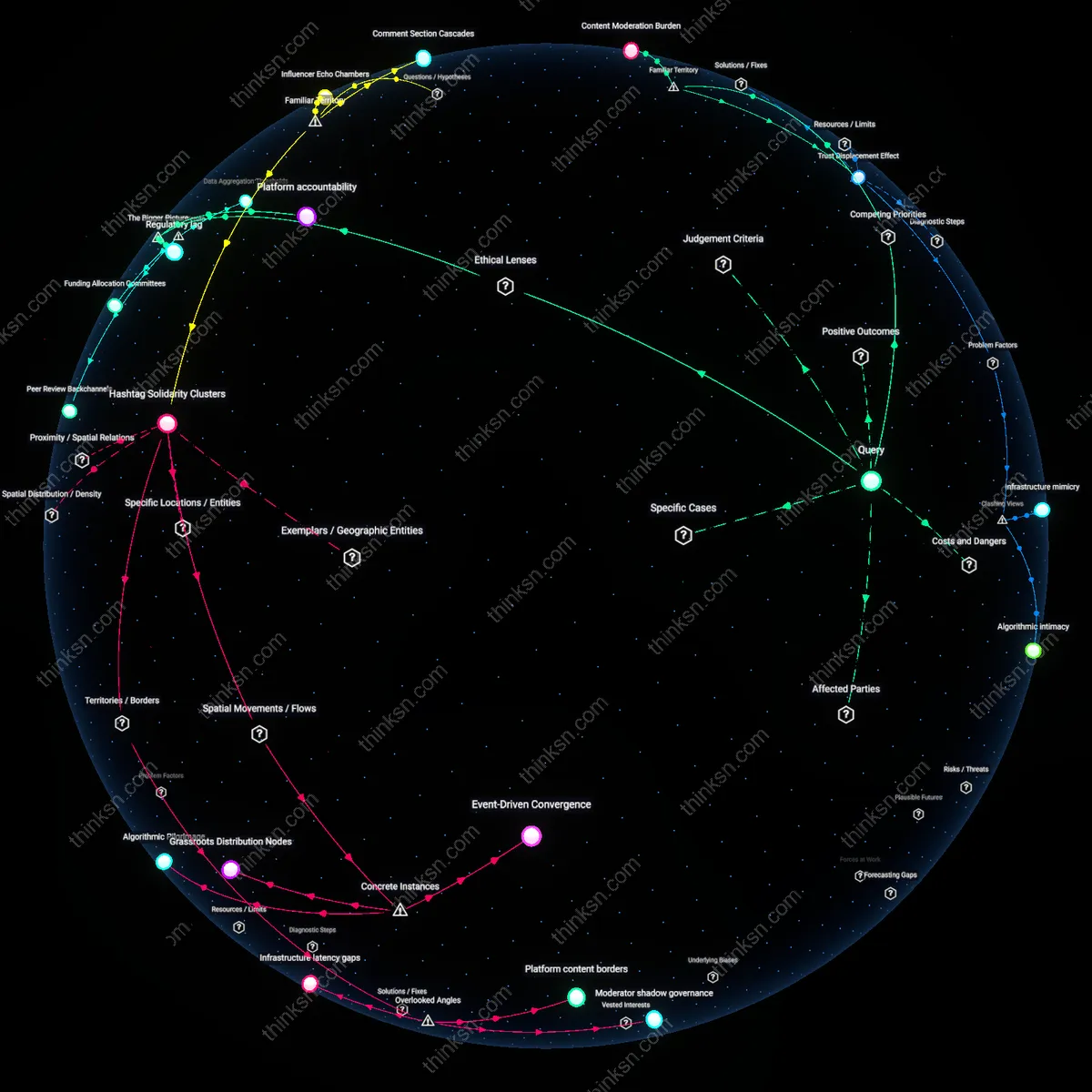

Subreddit Moderation Load

Clinicians should assess and discuss the cognitive and emotional labor patients perform while moderating subreddits, because this hidden role often drives both their sense of purpose and their psychological distress. Many adult Reddit users with mental health concerns are active moderators of niche or support-oriented subreddits, where they manage conflicts, enforce rules, and provide peer care. These tasks confer identity and belonging but also generate burnout, moral injury, and exposure to trauma. The dynamic is structurally invisible in therapy because moderation is rarely framed as labor, yet its rhythms of crisis intervention and community governance shape users' sleep patterns, emotional regulation, and self-worth. The overlooked angle is that, for these users, Reddit's therapeutic benefit (and much of its cost) derives not from passive consumption or even participation, but from sustained organizational responsibility within decentralized digital communities.

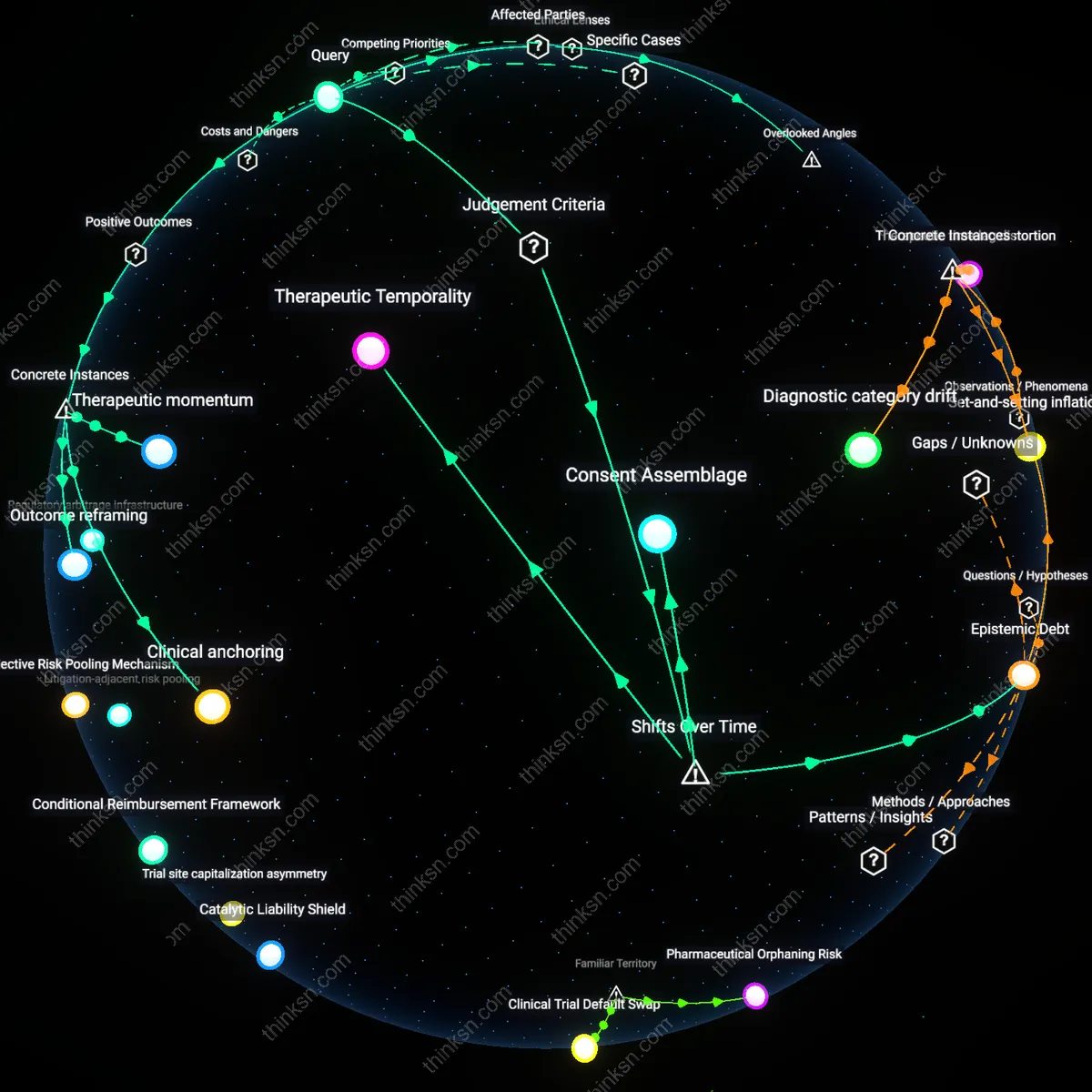

Algorithmic Temporal Mismatch

Clinicians must map how the timing of Reddit's visibility algorithms, such as post decay, bump cycles, and voting bursts, collides with patients' circadian vulnerability and symptom fluctuations. For example, a user posting about anxiety at 2 a.m. may receive delayed engagement because of low traffic, leading to feelings of invisibility, while a daytime upvote surge can trigger reactive overinvolvement when the user is in a different psychological state. This temporal misalignment creates feedback loops in which emotional exposure is unpredictable and often dissociated from intent, undermining cognitive-behavioral strategies that rely on consistent cause-and-effect expectations. The non-obvious insight is that platform pacing, not just content, regulates affective outcomes, which makes algorithmic rhythm a latent therapeutic variable.
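To make "post decay" concrete, here is a minimal Python sketch of time-decayed ranking. It is modeled on the "hot" scoring function from Reddit's formerly open-source codebase, which added a log-scaled net-vote term to a term that grows with posting time. Reddit's current production ranking is not public and very likely differs, so the constants, the `hot` function name, and the 2 a.m. versus 10 a.m. scenario should all be read as illustrative assumptions rather than a description of the live platform.

```python
from datetime import datetime, timezone
from math import log10

# Constants from the legacy open-source "hot" sort (illustrative only).
REFERENCE_EPOCH = 1134028003  # Unix time used as the zero point (December 2005)
DECAY_SECONDS = 45000         # 12.5 hours of recency == one order of magnitude of votes


def hot(ups: int, downs: int, posted: datetime) -> float:
    """Rank a post by log-scaled net votes plus a bonus that grows with posting time.

    Newer posts get a larger time term, so an older post must keep
    collecting votes (tenfold per 12.5 hours) just to hold its position.
    """
    net = ups - downs
    order = log10(max(abs(net), 1))  # 1 vote and 10 votes differ by 1.0
    sign = 1 if net > 0 else -1 if net < 0 else 0
    seconds = posted.timestamp() - REFERENCE_EPOCH
    return round(sign * order + seconds / DECAY_SECONDS, 7)


if __name__ == "__main__":
    # Hypothetical scenario: a 2 a.m. post in a quiet window vs. a 10 a.m. post at peak traffic.
    night = datetime(2024, 3, 1, 2, 0, tzinfo=timezone.utc)
    morning = datetime(2024, 3, 1, 10, 0, tzinfo=timezone.utc)
    night_score = hot(6, 1, night)        # 5 net upvotes overnight
    morning_score = hot(55, 5, morning)   # 50 net upvotes during the day
    print(f"2 a.m. post:  {night_score:.3f}")
    print(f"10 a.m. post: {morning_score:.3f}")
    print("Later post ranks higher:", morning_score > night_score)
```

Under these assumed constants, 12.5 hours of recency is worth a tenfold increase in net votes, so a post that gathers only a few votes in a low-traffic window is quickly overtaken by newer posts. That is one plausible mechanism behind the delayed, invisible-feeling engagement described above.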

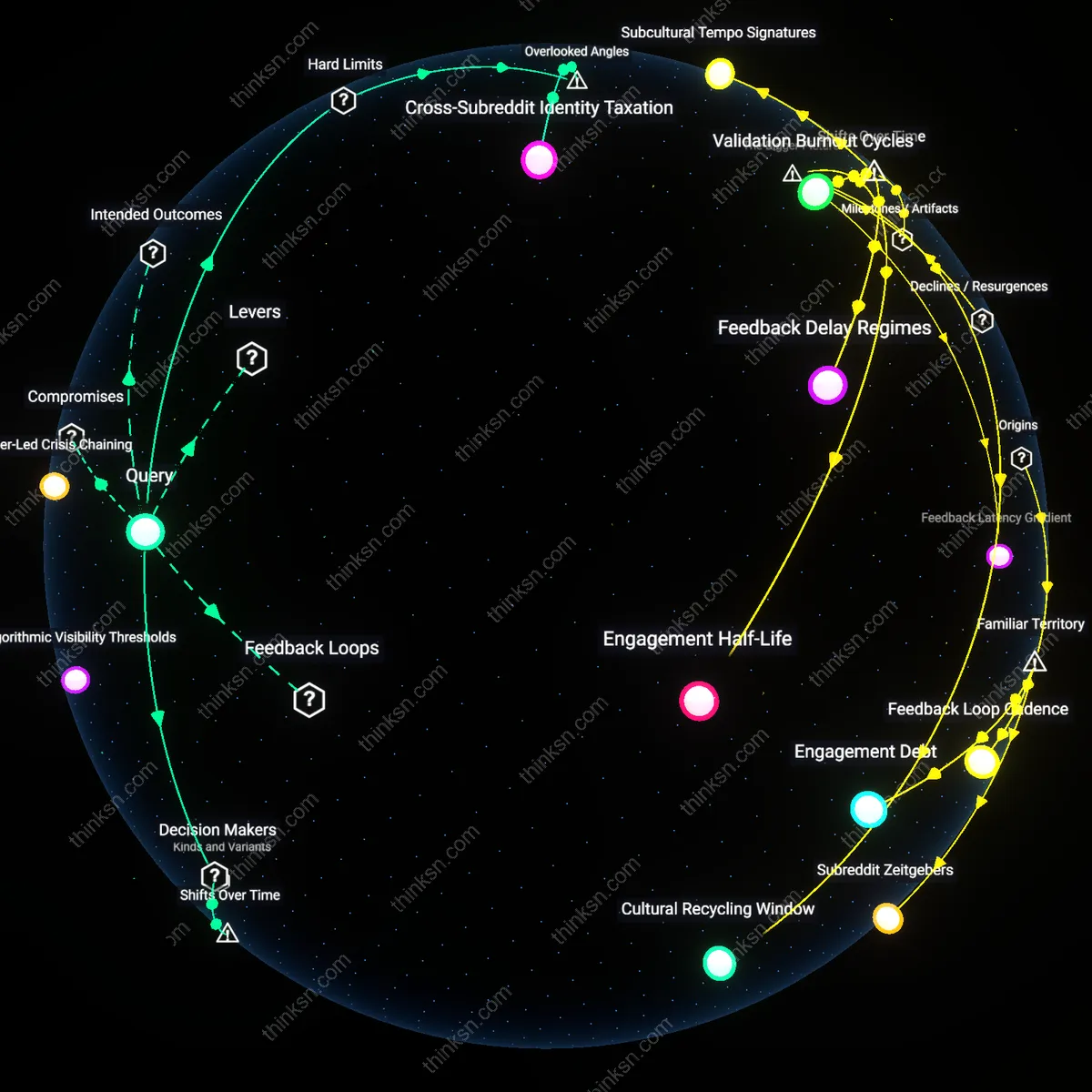

Cross-Subreddit Identity Taxation

Therapists should explore the psychological cost of maintaining distinct personas across multiple, contextually isolated subreddits, where anonymity can mask a process of identity fragmentation. An individual may present as a recovering addict in one subreddit, a dominant partner in a kink community in another, and a cynical observer in a politics forum, all without narrative continuity. The result can be internal dissonance that resembles dissociation but is digitally scaffolded. Each identity carries its own reputational stakes, social obligations, and linguistic norms, creating a hidden tax on self-cohesion that resists standard diagnostic categories. This suggests that anonymity on Reddit does not free the self so much as multiply it into competing, uncoordinated roles that strain integrative functioning over time.

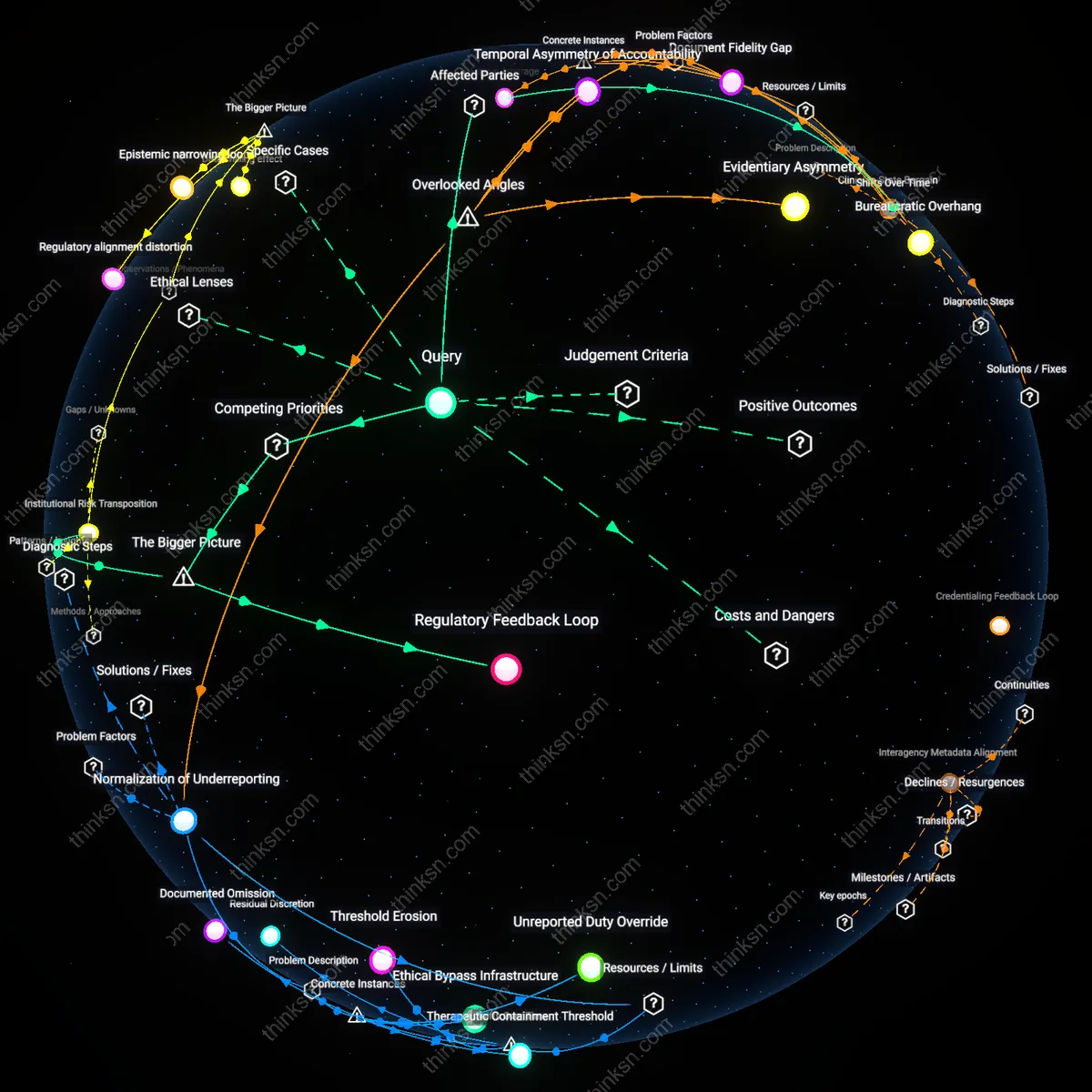

Platform-Clinician Interface

Mental health clinicians must partner with Reddit's safety and policy teams to co-develop real-time data-sharing protocols that flag community-level shifts in user behavior linked to clinical outcomes. The rationale is that the platform's 2015 shift toward algorithmic content curation intensified affective feedback loops that now evolve faster than clinical assessment cycles can detect. As community moderation tools such as automated flairs and subreddit quarantines began producing measurable psychological externalities, the temporal decoupling between clinical observation and platform-scale behavioral change became the central constraint in digital mental health intervention. The underappreciated reality is that clinicians now operate within a latency gap: a patient's self-reported Reddit experience often lags behind the actual dynamics of the communities they engage with, which renders traditional therapeutic timelines ineffective without structural access to platform-side data.