Latency-Driven Architectures

After 2020, mid-sized SaaS companies began reengineering backend workflows around millisecond-scale routing decisions, because response times from OpenAI's API varied unpredictably and disrupted synchronous request chains. Engineering teams at firms like Coda and Notion shifted from monolithic service logic to distributed, context-aware proxy layers that route tasks across multiple AI providers dynamically, based on real-time cost-performance snapshots, an operational pattern previously reserved for high-frequency trading systems. The change was driven not by demand for AI features but by the need to stabilize UX amid unpredictable third-party timing, a non-obvious constraint that made latency, rather than accuracy or cost, the dominant architectural signal. The resulting systems prioritize temporal predictability over functional simplicity, revealing a hidden trade-off between external API dependency and real-time reliability.
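A minimal sketch of the routing idea described above, assuming each provider is tracked by a rolling window of observed latencies and a flat per-call cost. The names `ProviderStats` and `pick_provider`, the window size, and the use of a 95th-percentile cutoff are illustrative assumptions, not details of any company's actual proxy layer.

```python
from collections import deque
from statistics import quantiles
from typing import Dict, Optional


class ProviderStats:
    """Rolling latency window (ms) for one hypothetical AI provider."""

    def __init__(self, cost_per_call: float, window: int = 50):
        self.cost_per_call = cost_per_call
        self.samples = deque(maxlen=window)  # oldest samples fall off

    def record(self, latency_ms: float) -> None:
        self.samples.append(latency_ms)

    def p95(self) -> float:
        # Too few samples: treat the provider as unknown, hence ineligible.
        if len(self.samples) < 2:
            return float("inf")
        # quantiles(..., n=20) returns 19 cut points; the last is the 95th percentile.
        return quantiles(self.samples, n=20)[-1]


def pick_provider(stats: Dict[str, ProviderStats], budget_ms: float) -> Optional[str]:
    """Return the cheapest provider whose p95 latency fits the budget, else None."""
    eligible = [
        (s.cost_per_call, name)
        for name, s in stats.items()
        if s.p95() <= budget_ms
    ]
    return min(eligible)[1] if eligible else None
```

Latency acts as the hard filter here and cost only breaks ties among providers that pass it, which mirrors the claim that latency, rather than cost, is the dominant signal.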

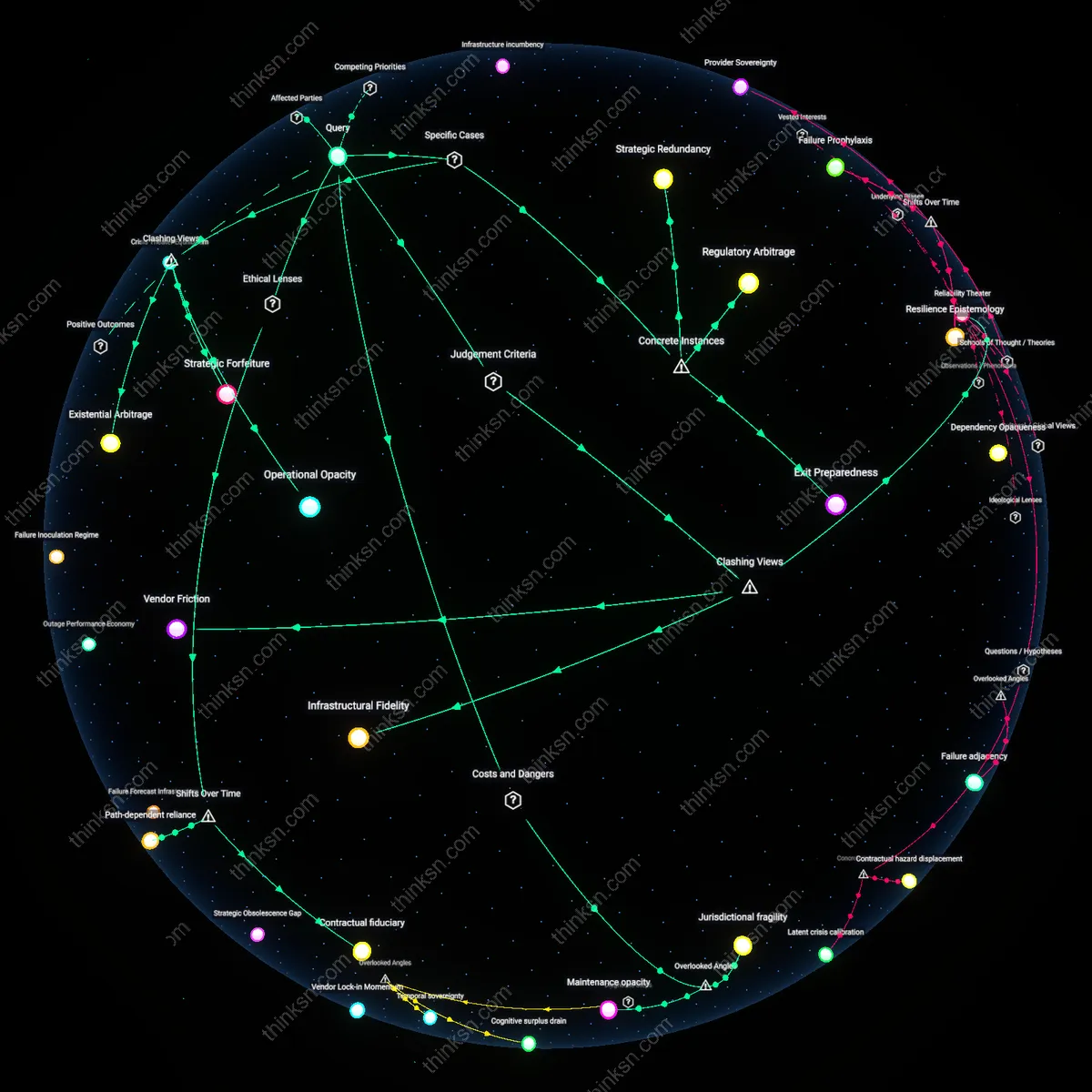

Shadow Orchestration Layers

Starting in 2021, mid-sized SaaS companies began embedding covert routing logic between user actions and AI API calls to avoid usage-based pricing traps created by OpenAI and Anthropic, often rewriting prompts to compress token count or caching synthetic responses that mimic model outputs without invoking APIs. Product teams at companies like Airtable and Grammarly designed these systems not to improve AI performance but to deceive billing metrics—a form of algorithmic thrift that institutionalizes strategic omission and data hallucination as maintenance practices. This undermines the assumed transparency of API consumption models, exposing how usage-based pricing can incentivize architectural dishonesty rather than efficiency. The necessity of these stealth layers reveals a breakdown in trust between API providers and clients, where cost control becomes a subversive engineering objective.

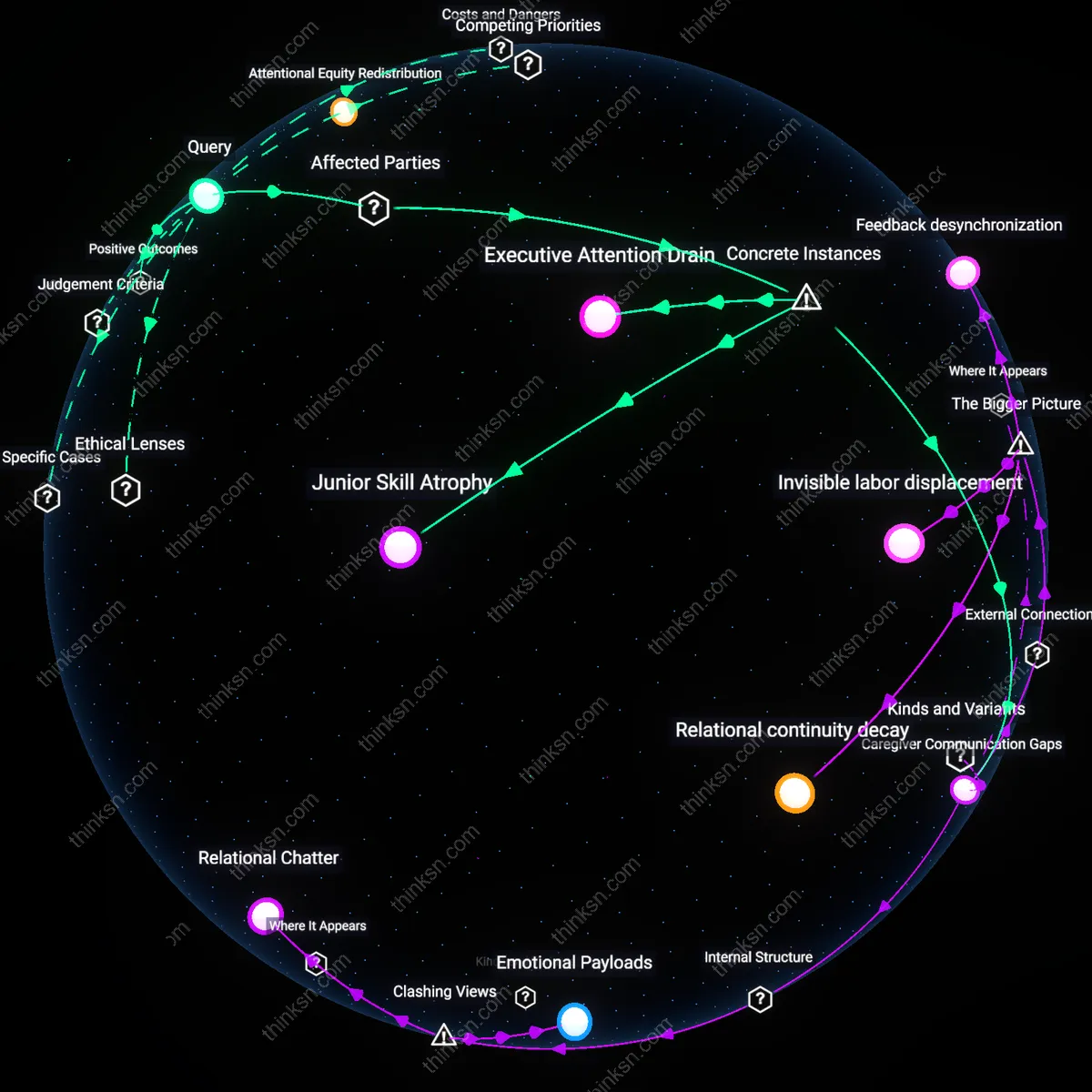

Cognitive Load Shifting

By 2023, SaaS companies had offloaded UX complexity onto users, not because AI integration was seamless but because opaque API behavior forced them to push decision-making upstream: users now manually choose between 'fast/cheap' and 'slow/accurate' AI modes because output quality from external models is inconsistent. Companies like Figma and ClickUp implemented user-facing tier selectors for AI features not as premium upsells but as circuit breakers that manage expectations when API responses fail silently or diverge from prompts. This reverses the assumed automation trajectory, in which more AI should mean less user intervention: external API unpredictability turned the promise of intelligent assistance into a new form of digital labor, with users absorbing the cost of system instability. The result is a hidden redistribution of cognitive burden disguised as customization.

Cost Elasticity Coupling

Mid-sized SaaS companies began aligning system architecture directly with variable AI API costs, because usage-based pricing from providers like OpenAI and Anthropic tied computational expense to customer behavior in real time. Engineering teams restructured workflows to gate AI features behind user-triggered actions, cache results aggressively, and introduce tiered access—operating under CFO-driven mandates to prevent margin erosion. Most overlook that this shifted product design from user need to cost containment logic, making feature usability secondary to expenditure predictability.

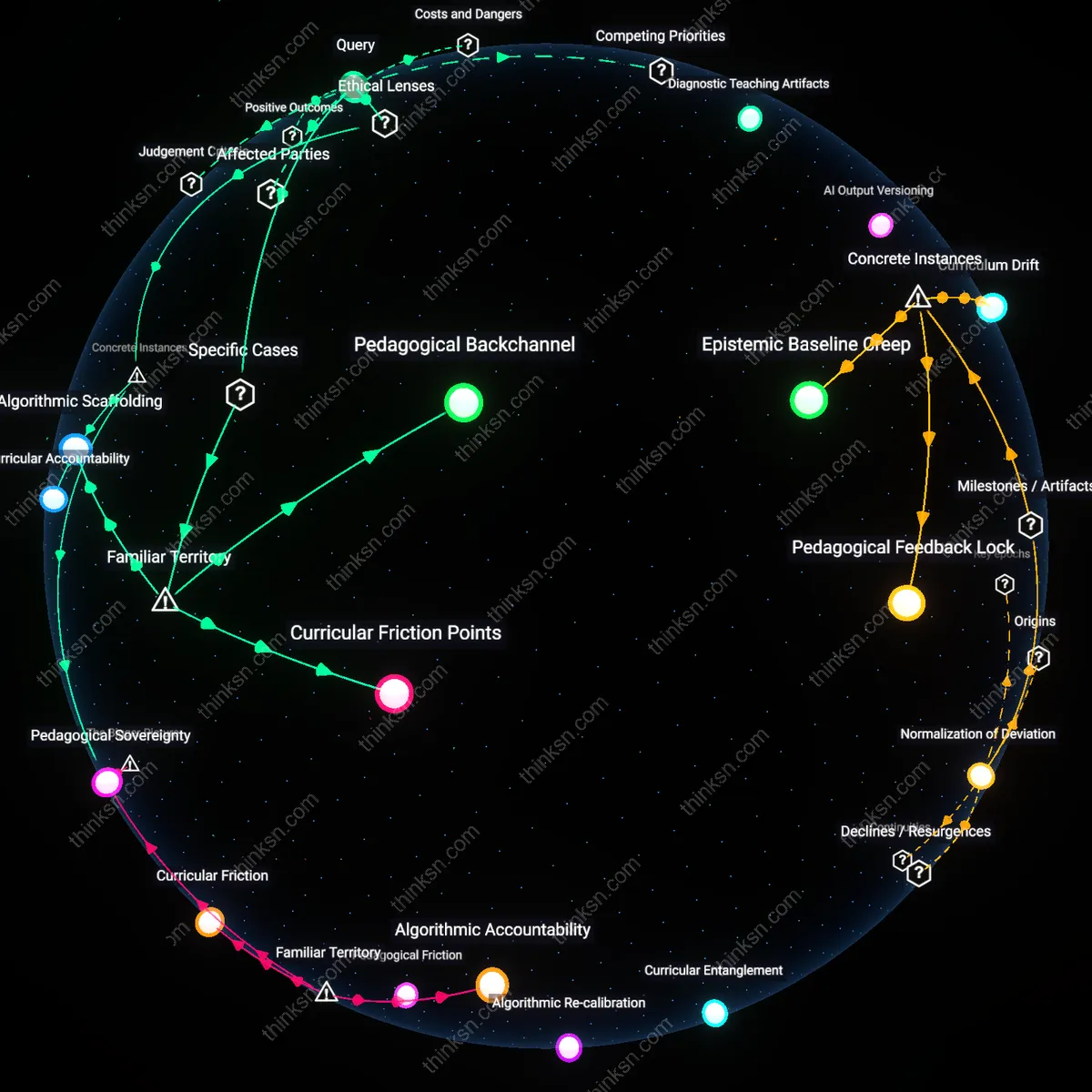

Feature Velocity Dependence

SaaS companies accelerated feature rollouts by treating third-party AI APIs as modular building blocks, relying on platforms like Hugging Face or Google Vertex to avoid developing models in-house. This created a dependency where roadmap planning became synchronized with API availability, updates, and rate limits, often forcing product teams to redesign functionality when backend AI services changed. The underappreciated consequence is that innovation speed now depends less on internal R&D and more on the external release cycles of AI API vendors.

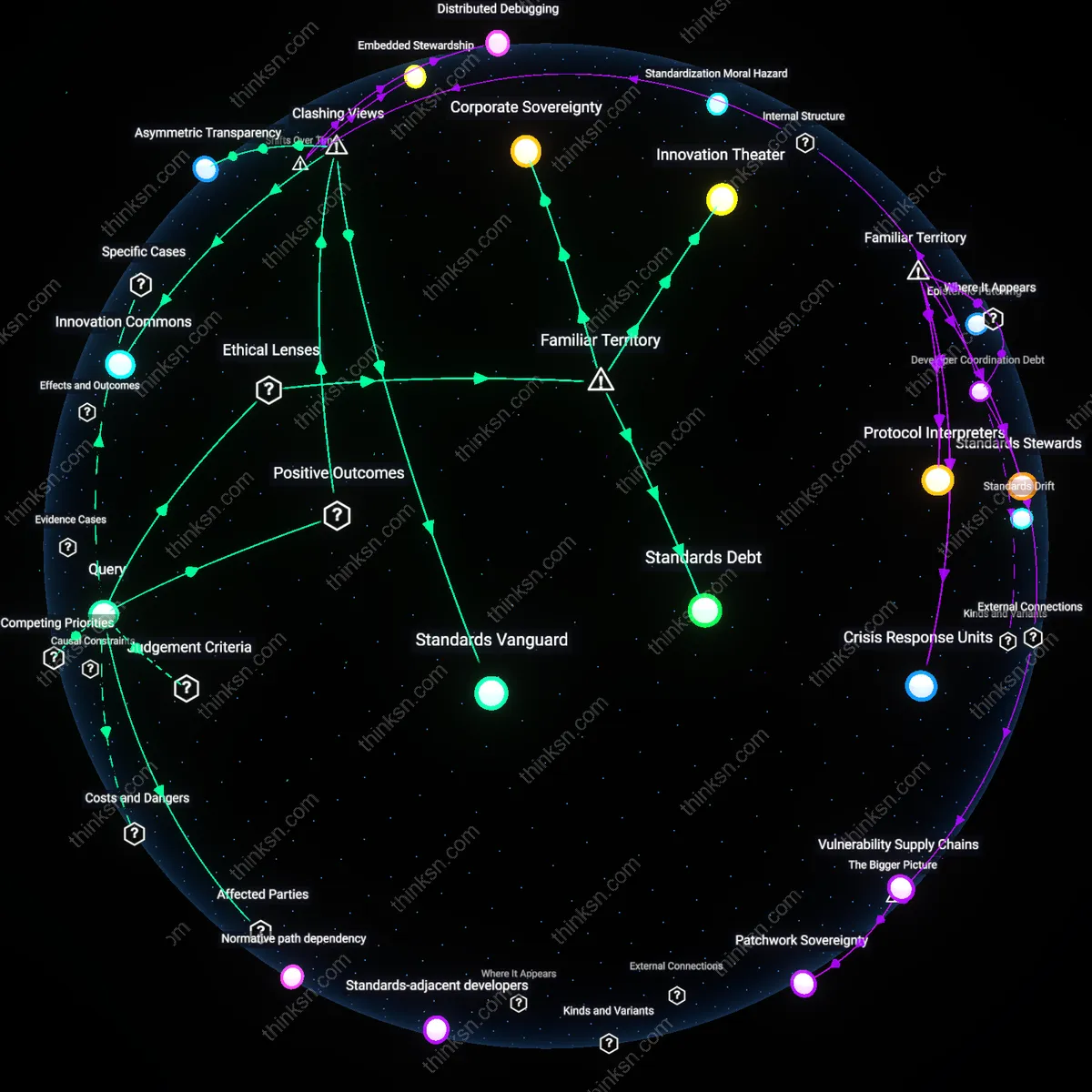

Service Modularization

Mid-sized SaaS company Zapier shifted from monolithic workflow automation to modular microservices orchestrated around OpenAI’s API in 2020, enabling on-demand natural language processing without in-house model development. This architectural pivot relied on external AI as a drop-in capability layer, reducing the need for vertical integration and allowing product teams to treat intelligence as a swappable component. The significance lies in decoupling core logic from cognitive functionality, which redefined system boundaries—not what the software does, but what it must own. This reveals that API-based AI did not merely enhance features but altered the granularity of service design itself.

Latency Sovereignty

In 2022, the Canadian healthcare SaaS firm Think Research redesigned its clinical decision support systems after adopting Anthropic’s usage-based API, moving real-time AI inference to edge-proximate cloud zones to comply with provincial data residency laws. The shift prioritized control over data transit paths, transforming infrastructure layout not for performance alone but for jurisdictional alignment, where AI usage became a regulatory surface. This exposes how sovereignty concerns, once peripheral, were operationalized into system topology through API dependency—where the price of access is not just monetary but constitutional.

Feature Liquidity

In 2021, the HR platform Greenhouse integrated Hugging Face’s inference API to rapidly deploy resume parsing and bias detection features that would have required years of internal NLP development, turning formerly strategic capabilities into disposable, short-cycle experiments. This transition enabled product managers to treat features as financially fungible and temporally bounded, burning down AI-powered tools after single-quarter trials. The underappreciated effect is that usage-based pricing dissolved the sunk-cost logic of feature development, making functionality a flow rather than a stock—shifting software roadmaps from planned obsolescence to real-time arbitrage.

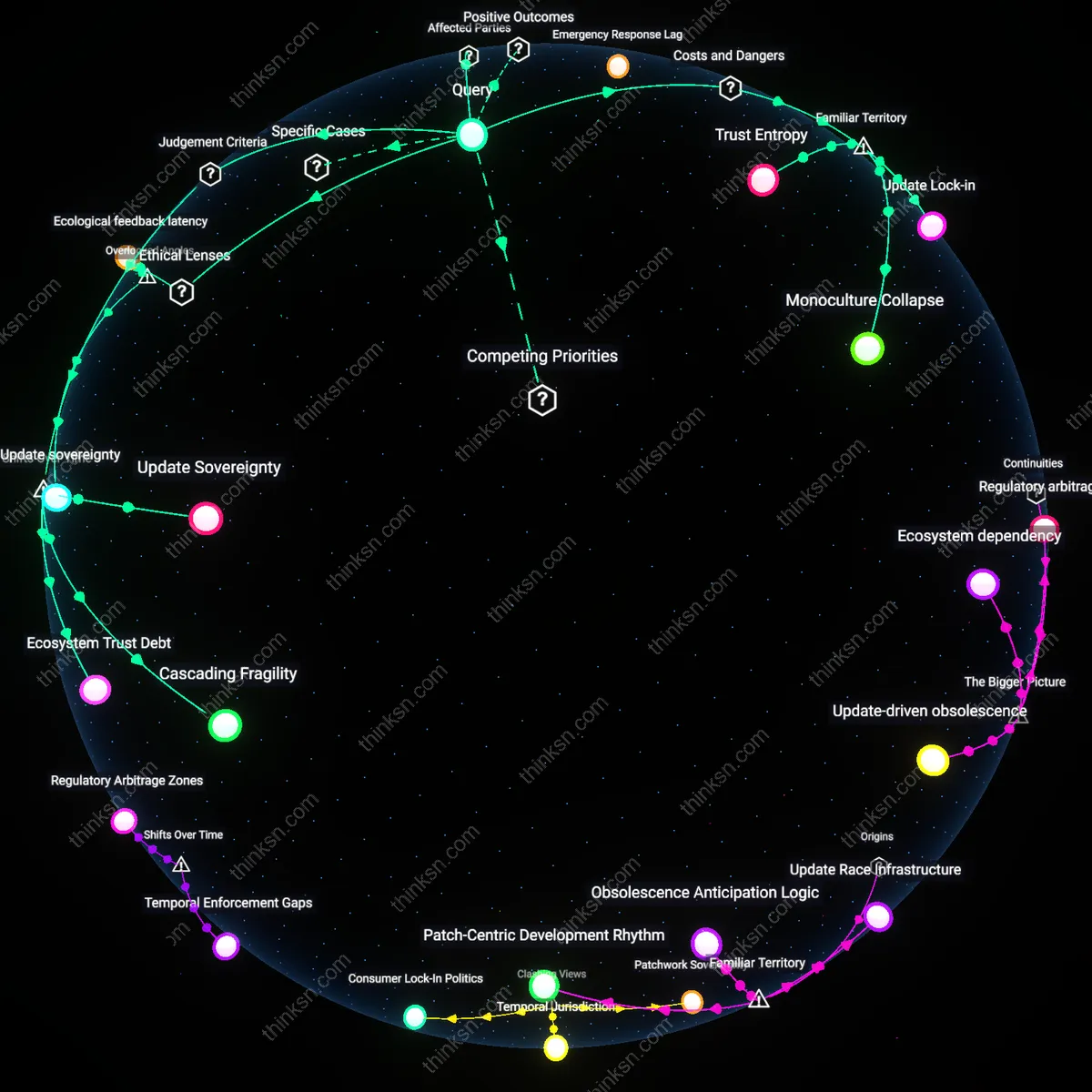

Infrastructural Lock-in

Mid-sized SaaS companies increasingly committed to cloud-native architectures because API-based AI services lowered the cost of entry for advanced features. Engineering teams could skip building custom models and instead embed capabilities like natural language processing through providers such as OpenAI or Anthropic. This shift created path dependency on external vendors' roadmaps and pricing models, which in turn constrained long-term autonomy over system design. The non-obvious consequence is that technical flexibility eroded as business incentives favored speed-to-market, so lock-in arrived through operational convenience rather than strategic choice. Downstream effects included reduced modularity when regulatory or geopolitical pressures demanded on-premises deployments.

Capability Arbitrage

SaaS product managers began treating AI functionality as a modular differentiator rather than a core competency, using usage-based APIs to introduce features like smart search or automated summarization quickly and without large upfront R&D investment. This shift aligned with venture pressure to demonstrate innovation velocity: firms outsourced complexity to AI API ecosystems and reallocated internal engineering resources toward customer-specific workflows. The underappreciated systemic effect is that competitive advantage now stems not from proprietary AI development but from strategic arbitrage, that is, orchestrating third-party capabilities in novel combinations. This reshaped the design logic of SaaS platforms around composability and integration-layer innovation rather than monolithic in-house stacks.

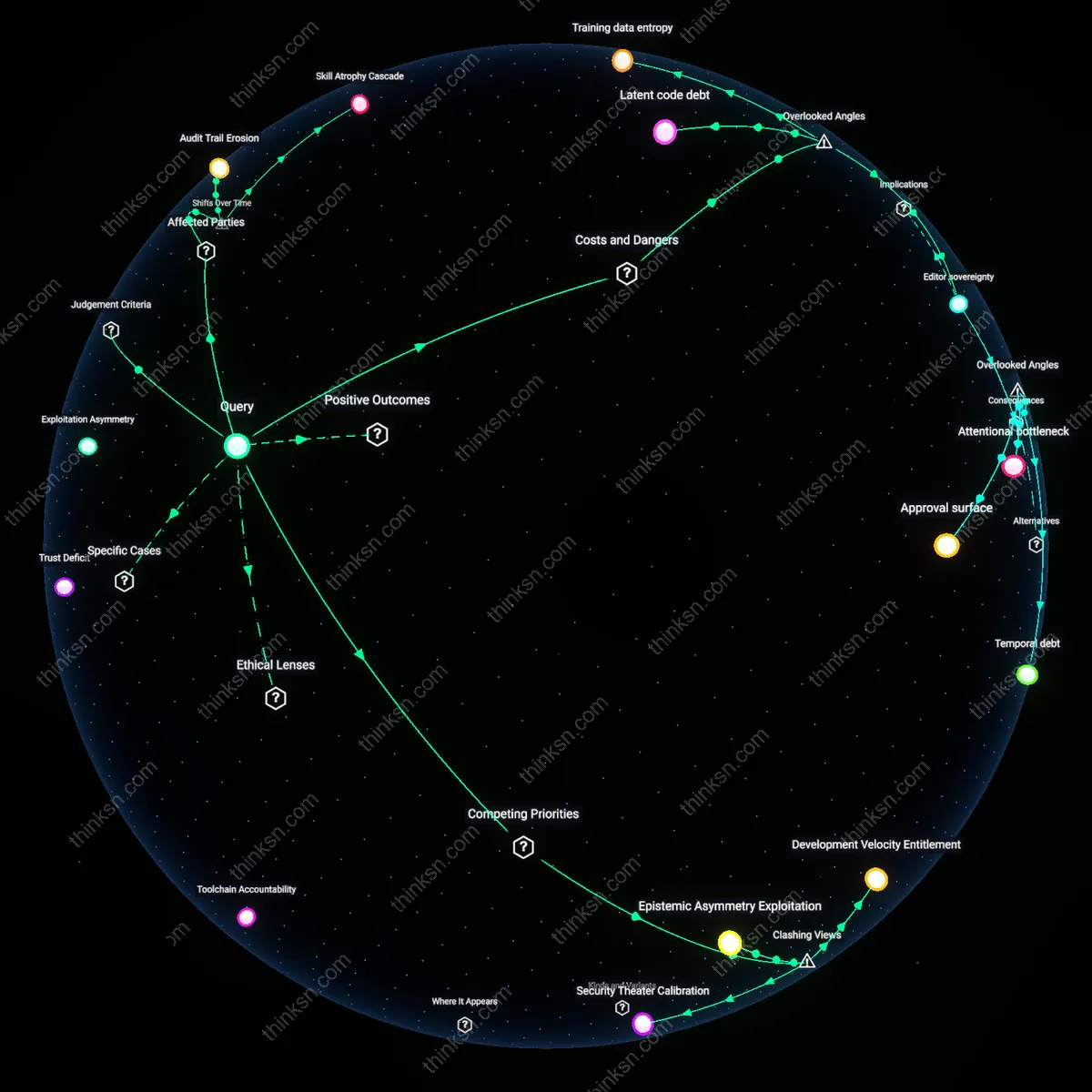

Economic Feedback Loop

Engineering budgets in mid-sized SaaS firms shifted from capital expenditures on data science teams to variable operational costs tied to AI API consumption, reshaping system design around usage throttling, caching strategies, and user-tier segmentation to manage unpredictable billing spikes. This fiscal realignment created an economic feedback loop in which product decisions were increasingly constrained by API cost elasticity, forcing architects to treat AI features not as enhancements but as profit-margin variables. Cost-aware design patterns emerged as a result, such as fallback heuristics and synthetic feature substitution when usage thresholds were breached. The pricing models of AI providers thereby became a covert control layer over software functionality.
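The cost-aware patterns named above (caching, a usage threshold, and a fallback heuristic) can be sketched together as a single gate in front of the model call. This is an illustration under stated assumptions: the class name `CostAwareGate`, the flat per-call cost in cents, and the in-memory cache are all hypothetical, and real billing is typically per token rather than per call.

```python
import hashlib
from typing import Callable, Dict


class CostAwareGate:
    """Hypothetical gate: serve from cache, else spend budget, else fall back."""

    def __init__(
        self,
        budget_cents: int,
        cost_per_call_cents: int,
        call_model: Callable[[str], str],
        fallback: Callable[[str], str],
    ):
        self.remaining_cents = budget_cents
        self.cost_cents = cost_per_call_cents
        self.call_model = call_model    # stand-in for a paid AI API call
        self.fallback = fallback        # cheap local heuristic
        self.cache: Dict[str, str] = {}

    def run(self, prompt: str) -> str:
        key = hashlib.sha256(prompt.encode("utf-8")).hexdigest()
        if key in self.cache:
            return self.cache[key]            # cached: no spend
        if self.remaining_cents < self.cost_cents:
            return self.fallback(prompt)      # threshold breached: substitute
        self.remaining_cents -= self.cost_cents
        result = self.call_model(prompt)
        self.cache[key] = result
        return result
```

Fallback results are deliberately not cached in this sketch, so a prompt answered by the heuristic can still reach the model once budget is replenished.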

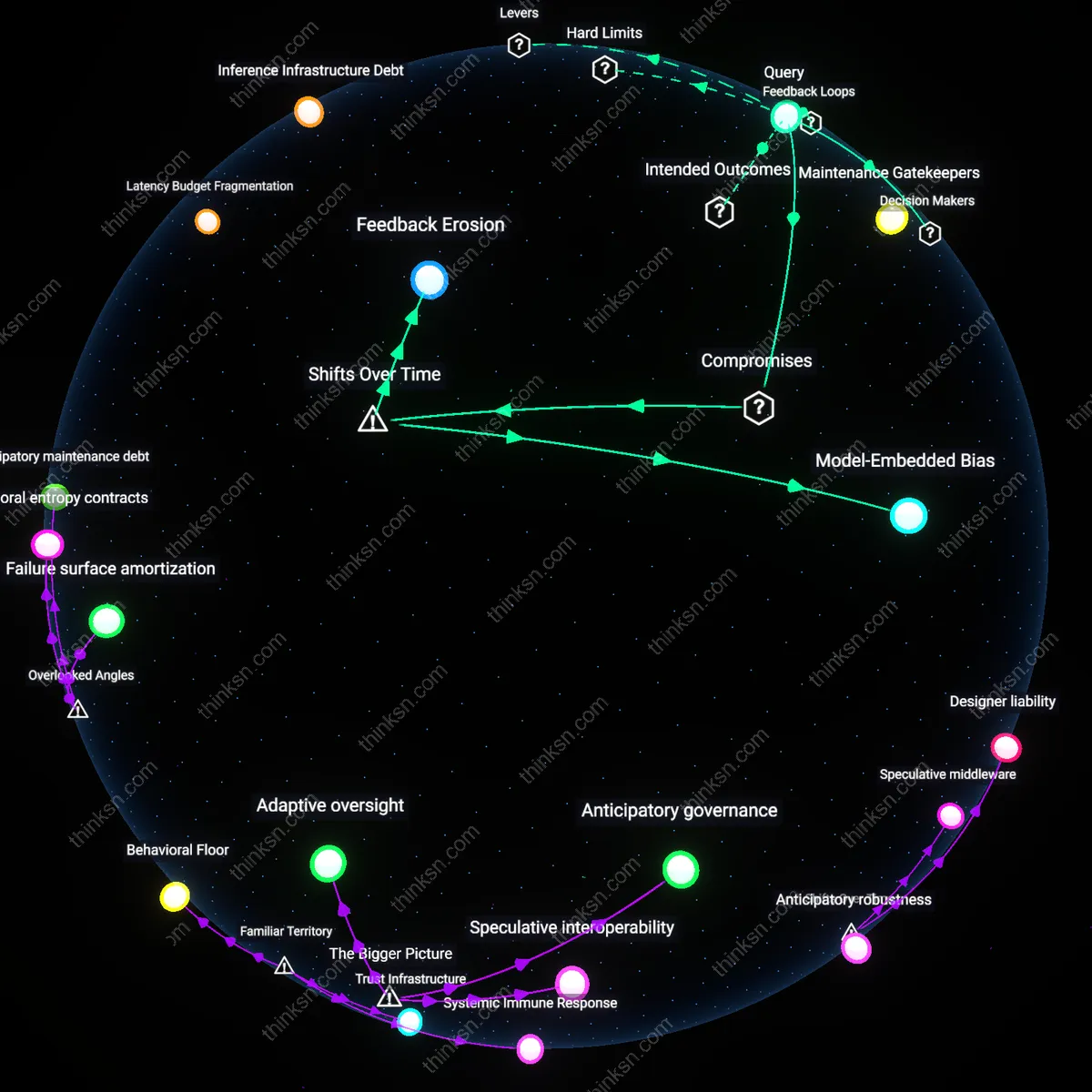

Latency Budget Fragmentation

Mid-sized SaaS companies began restructuring backend orchestration workflows to allocate fixed latency budgets per user request after OpenAI's API release in 2020, a shift codified in internal observability dashboards that started tracking AI call wait times as a percentage of total response SLAs. This reengineering was driven not by raw cost or throughput but by customer UX thresholds: even 300ms of unpredictability from external AI APIs breached previously stable performance envelopes, forcing engineering teams to treat API response variance as a primary design constraint rather than a secondary concern. The non-obvious insight is that AI integration did not just add a service call but fractured the temporal coherence of monolithic response cycles, requiring new methods of distributed timing arbitration across internal and external systems.
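One way to picture a per-request latency budget is a timeout wrapped around the external call, with the measured wait reported as a share of the response SLA. The sketch below assumes an async AI client; `call_with_budget` and `share_of_sla` are hypothetical helper names, and the fallback string stands in for whatever degraded response a product would actually serve.

```python
import asyncio
import time
from typing import Awaitable, Callable, Tuple


async def call_with_budget(
    ai_call: Callable[[], Awaitable[str]],
    budget_ms: float,
    fallback: str,
) -> Tuple[str, float]:
    """Await the AI call for at most budget_ms; return (result, waited_ms)."""
    start = time.monotonic()
    try:
        result = await asyncio.wait_for(ai_call(), timeout=budget_ms / 1000)
    except asyncio.TimeoutError:
        # Budget exhausted: the pending call is cancelled and we degrade.
        result = fallback
    waited_ms = (time.monotonic() - start) * 1000
    return result, waited_ms


def share_of_sla(waited_ms: float, sla_ms: float) -> float:
    """AI wait time as a percentage of the total response SLA."""
    return 100.0 * waited_ms / sla_ms
```

Under these assumptions, a 300ms wait against a 1000ms SLA reports as 30 percent of the envelope, the kind of figure the dashboards described above would track.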

Prompt Chain Obsolescence

The 2021 rollout of token-based pricing in major AI APIs led mid-market SaaS firms to decommission complex, reusable prompt templating engines in favor of minimal, single-shot prompts — evidenced by archival removals in Git repositories such as 'prompt-composer-v4' at companies like Coda and Notion. This regression occurred not because prompt engineering failed technically, but because usage-based billing made long, structured prompt chains economically unsustainable at scale, revealing a hidden dependency between API pricing granularity and the architectural depth of AI logic. The overlooked consequence is that economic signal, not capability, became the dominant selector of AI feature sophistication, downgrading system design from intelligence amplification to cost containment.
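The economics can be made concrete with a rough token count. The sketch below assumes a chain in which every step re-sends the prompt template plus all prior step outputs, which is one common chaining style but not the only one; the function names and the token figures in the usage note are illustrative, not measured values.

```python
def chain_billed_tokens(template_tokens: int, output_tokens: int, steps: int) -> int:
    """Total billed tokens (input + output) for a chain that re-sends prior outputs."""
    total = 0
    context = 0  # tokens of earlier outputs carried into the next step
    for _ in range(steps):
        total += template_tokens + context  # input billed for this step
        total += output_tokens              # output billed for this step
        context += output_tokens
    return total


def single_shot_billed_tokens(template_tokens: int, output_tokens: int) -> int:
    """Total billed tokens for one prompt and one completion."""
    return template_tokens + output_tokens
```

With a 500-token template and 200-token outputs, a four-step chain bills 4,000 tokens against 700 for a single shot. Because carried context grows with each step, billed input grows roughly quadratically in chain length under this assumption, which is the pressure toward single-shot prompts described above.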

Vendor-Imposed Feature Ceiling

After Anthropic and OpenAI began publishing rate limit schemas tied to active user seats in 2022, mid-sized SaaS platforms such as Zapier and Airtable redesigned feature gating mechanisms to mirror downstream API entitlements, aligning their own paid tiers not with internal development costs but with opaque external vendor quotas. This created a new class of shadow constraints where roadmap decisions were preempted by anticipated API availability ceilings, as documented in product planning artifacts like 'API Quota Forecast Q3 2023' from Airtable’s product team. The hidden dynamic is that AI API providers, through non-negotiable access tiers, effectively weaponized scarcity to shape the competitive positioning of SaaS clients — turning infrastructure dependency into a covert product strategy lever.