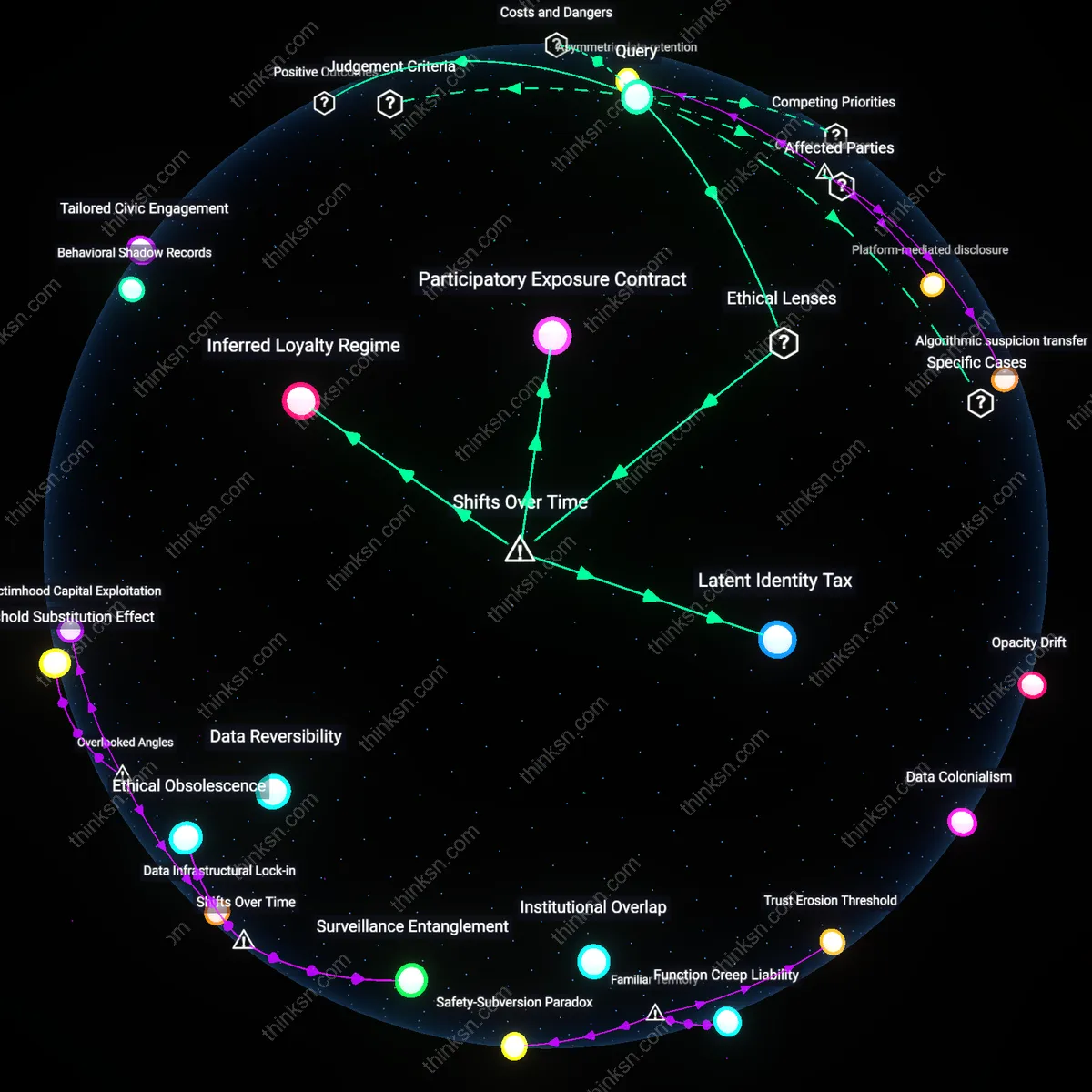

Trust Erosion Threshold

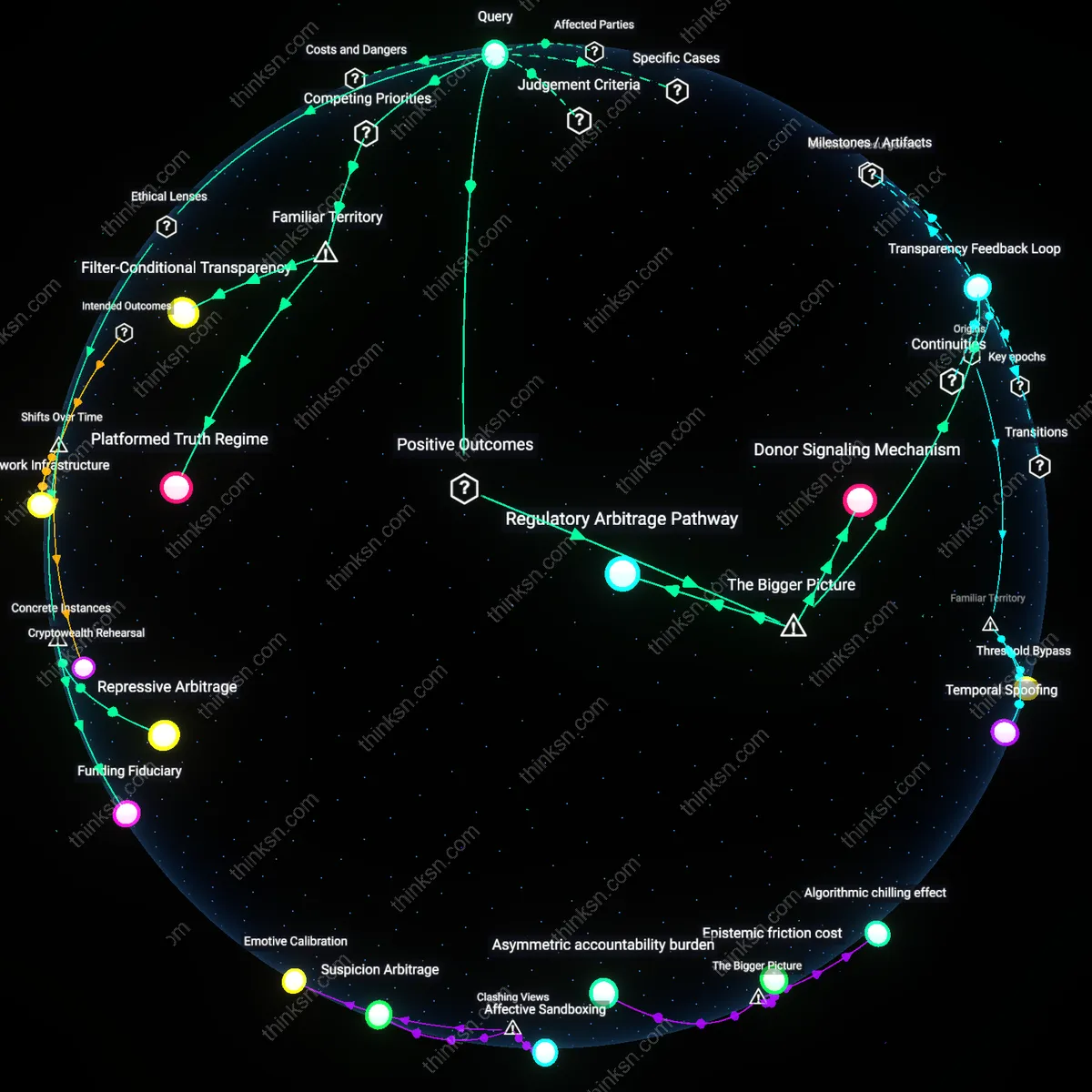

Child safety tools that double as government surveillance infrastructure erode public trust in protective institutions. When parents report exploitation through channels such as NCMEC's CyberTipline, they assume the data serves only child protection. If law enforcement then repurposes those reports to monitor protests or dissidents, the breach recasts safety systems as instruments of control. This shift undermines the legitimacy of institutions such as schools and social services that depend on community cooperation. It also exposes a non-obvious risk: well-intentioned participation can fuel a surveillance expansion that participants never see.

Function Creep Liability

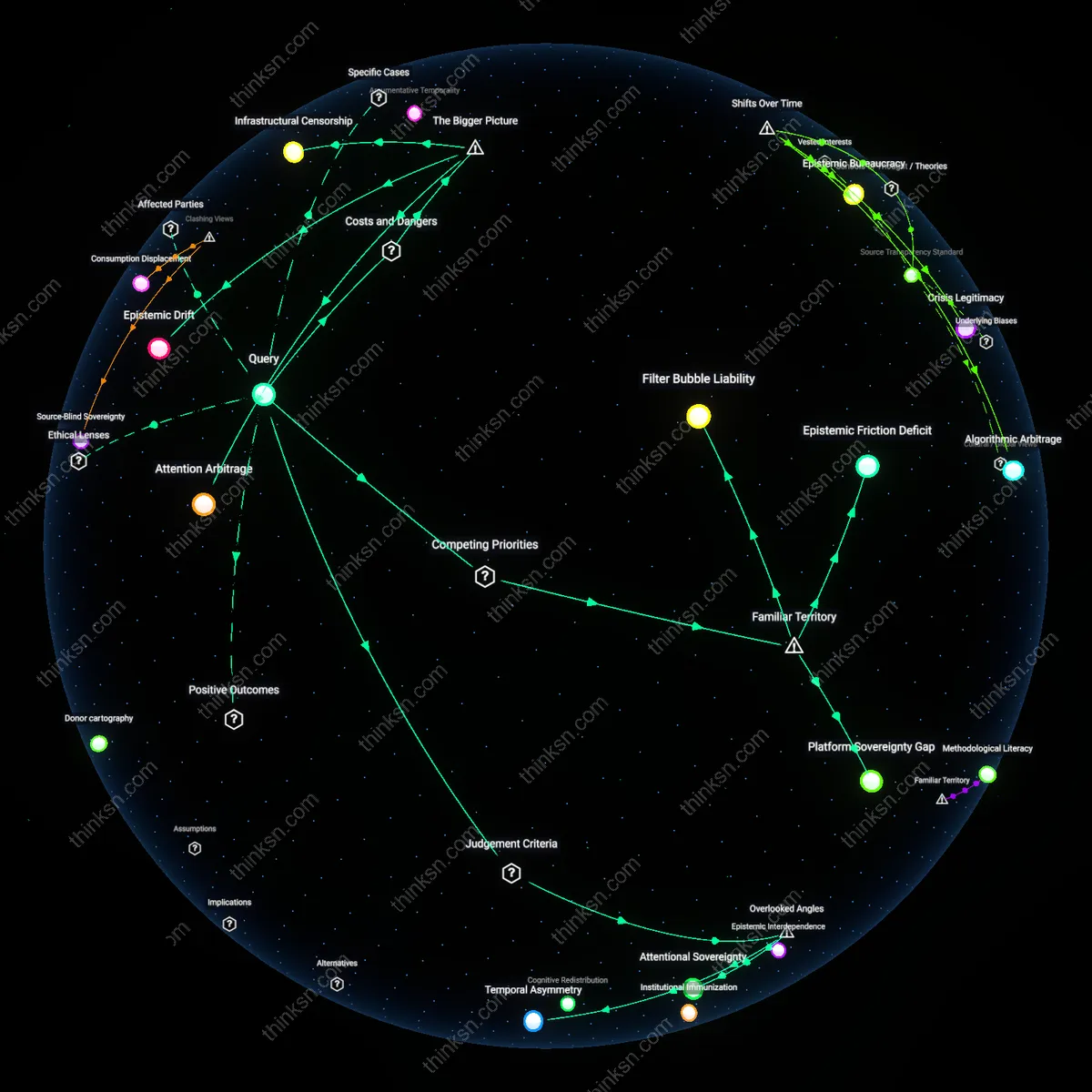

Automated detection systems such as Apple’s proposed CSAM scanning tool, announced in 2021 and abandoned in 2022, carry function creep liability because their technical capacity to identify illegal content can be extended to political monitoring. Although such a system is designed to flag known child abuse material through hash-matching, the same client-side infrastructure could give states access to private on-device data under emergency or national security justifications. The underappreciated dynamic is not misuse per se but normalization: the gradual redefinition of ‘safety’ to include social order, a shift that confirms public fears about technology overreach even as it masks incremental institutional capture.
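The function creep argument rests on a property of hash-matching that a short sketch can make concrete: the matching logic never inspects what a hash list represents, so changing the list changes the system's purpose without changing its code. This is a minimal illustration only, assuming exact SHA-256 digests; deployed systems such as Microsoft's PhotoDNA or Apple's NeuralHash use perceptual hashes that tolerate resizing and re-encoding, which this sketch does not model. The names `digest`, `scan`, `original_list`, and `expanded_list` are hypothetical.

```python
import hashlib


def digest(data: bytes) -> str:
    """Exact SHA-256 digest, standing in (by assumption) for a perceptual hash."""
    return hashlib.sha256(data).hexdigest()


def scan(items: list[bytes], blocklist: set[str]) -> list[int]:
    """Return the indices of items whose digest appears in blocklist.

    The function never looks at what the blocklist was built from,
    so whoever controls the list controls what gets flagged.
    """
    return [i for i, item in enumerate(items) if digest(item) in blocklist]


# Hypothetical on-device content.
uploads = [b"holiday photo", b"listed image", b"protest flyer"]

# The list as originally justified: known abuse material only.
original_list = {digest(b"listed image")}

# The same list after a hypothetical expansion; scan() is unchanged.
expanded_list = original_list | {digest(b"protest flyer")}

print(scan(uploads, original_list))  # [1]
print(scan(uploads, expanded_list))  # [1, 2]
```

In this sketch, repurposing the scanner requires only a data update, not a code change, which is why such a shift can leave no visible trace in the deployed software.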

Safety-Subversion Paradox

The deployment of child safety tools in digital ecosystems creates a safety-subversion paradox when the entities made legally responsible for protecting vulnerable populations, such as online platforms facing liability under laws like FOSTA-SESTA, become de facto data suppliers to intelligence agencies. Users accept surveillance because they believe evasion implies guilt, yet this compliance produces databases that disproportionately target marginalized activists, such as sex workers or LGBTQ+ organizers, under the guise of protection. What familiar ‘think of the children’ rhetoric masks is that the cost is not only lost privacy but the inversion of safeguarding into suppression.

Data Colonialism

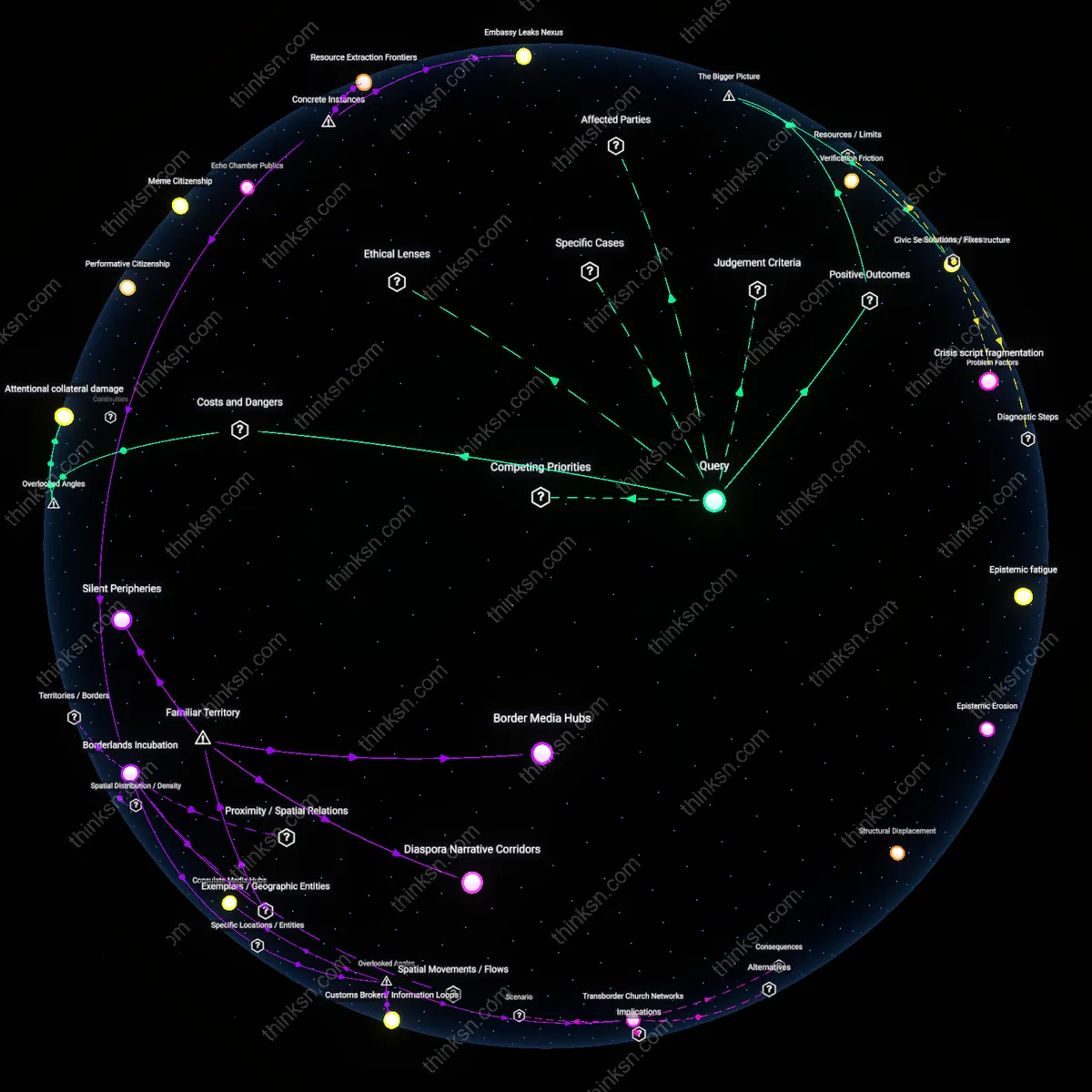

Child safety tools that collect behavioral metadata can become de facto surveillance infrastructure that authoritarian regimes use to target dissidents. When tech firms outsource content moderation to AI systems trained on Global South user data, they create extractive data pipelines that mislabel political satire as exploitative content, enabling states such as Uganda or Turkey to weaponize the resulting reports against journalists. This recasts child protection not as a moral imperative but as cover for data harvesting in vulnerable regions, exposing how ostensibly benevolent systems embed imperial logic into digital governance.

Opacity Drift

Governments exploit the moral urgency of child safety to shield mass surveillance programs from public scrutiny, invoking emotional consensus to conceal how these tools are integrated into broader intelligence architectures. When platforms such as Telegram or Signal face pressure to adopt client-side scanning, the opacity of the resulting technical changes prevents democratic oversight. What appears to be an incremental safety measure becomes a Trojan horse for normalizing unchecked monitoring, showing how ethical panic can accelerate institutional secrecy.

Protection Rackets

State-mandated child safety tools push private platforms into collaborating with law enforcement in ways that systematically blur child protection with political policing, as seen in India’s use of Google’s CSAM detection API to track Kashmiri activists. Because non-compliance carries financial and legal liability, companies are drawn into coercive partnerships that bypass due process, turning safeguarding into a regulatory lever that lets states outsource repression under the banner of preserving innocence.

Surveillance Drift

The AMBER Alert system, deployed in the United States to locate abducted children, has enabled federal law enforcement to repurpose the same emergency broadcast infrastructure for monitoring protests, as seen during the 2020 Black Lives Matter demonstrations in Minneapolis, where location-based alerts were coordinated with federal surveillance operations. Integrating cell tower triangulation and IP tracking into a tool originally limited to child safety extended its use to real-time monitoring of mass political gatherings, revealing how systems designed for urgent, narrow purposes can mutate into generalized tracking apparatuses. The underappreciated mechanism is not deliberate abuse but interoperability by design: when child safety infrastructure shares technical architecture with law enforcement databases, the boundary between protection and surveillance collapses organically. The case shows that technical systems embedding crisis-response logic become available for political control without any formal policy change.

Data Reversibility

In India, the Aadhaar biometric identification system, introduced to improve welfare distribution and later used to administer targeted nutrition programs for vulnerable children, became a centralized repository enabling state tracking of dissent, as evidenced when attendees of the 2020–2021 farmers’ protests were identified through Aadhaar-linked mobile data and flagged for surveillance. Because children’s benefits were administered through a universal, uniquely identifiable digital credential, the same data stream that verified eligibility also enabled retroactive mapping of political participation once cross-referenced with telecom records. The non-obvious insight is that even anonymized or purpose-bound child welfare datasets lose their protective limits when identity architecture is centralized: once a biometric key exists for protection, it can be reversed to expose political behavior without new legislation or overt censorship. Data designed to shield thus becomes a vector of exposure when it is built on an identification system that cannot be revoked.
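The cross-referencing step described above is, mechanically, a database join: once two datasets share a stable unique identifier, combining them requires no new collection. The following is a minimal sketch with entirely hypothetical records and field names (`uid`, `scheme`, `cell_tower`) and a hypothetical `link` helper; it does not model Aadhaar's actual schema or any telecom data format.

```python
# Hypothetical welfare records keyed by a universal ID.
# They record eligibility only; no location is stored here.
welfare = [
    {"uid": "1001", "scheme": "child nutrition"},
    {"uid": "1002", "scheme": "child nutrition"},
    {"uid": "1003", "scheme": "school meals"},
]

# Hypothetical telecom records that reuse the same ID.
telecom = [
    {"uid": "1001", "cell_tower": "protest-site"},
    {"uid": "1003", "cell_tower": "residential"},
]


def link(left: list[dict], right: list[dict], key: str = "uid") -> list[dict]:
    """Inner-join two record lists on a shared key.

    If the right side repeats a key, the last record wins.
    """
    index = {row[key]: row for row in right}
    return [{**row, **index[row[key]]} for row in left if row[key] in index]


linked = link(welfare, telecom)
at_protest = [r["uid"] for r in linked if r["cell_tower"] == "protest-site"]
print(at_protest)  # ['1001']
```

Neither table alone places a child nutrition enrollee at a protest site; in this sketch, the shared key is the only thing that makes that inference possible.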

Institutional Overlap

The United Kingdom’s Child Exploitation and Online Protection Centre (CEOP), established to track child sexual abuse material and now a command within the National Crime Agency, routinely shared cyber-patrol data with GCHQ during the 2011 London riots, when monitoring pathways built to identify predators in encrypted communications were redirected to map activist networks and anticipate protest locations. The crossover occurred not through policy amendment but through embedded inter-agency task forces, in which personnel trained on child protection tools were redeployed during civil unrest under national security mandates. The underappreciated reality is that organizational proximity (shared training, common software, overlapping personnel) creates a functional overlap that bypasses legislative scrutiny, turning child safety units into operational backdoors for political surveillance. Institutional co-location, not just technology, erodes the boundary between protection and control.

Surveillance Entanglement

Child safety tools became embedded in state surveillance infrastructures as post-9/11 securitization norms normalized data aggregation across civilian systems. This enabled governments to repurpose mechanisms such as hash-matching for CSAM detection into broader monitoring of dissent, particularly after 2016, when encrypted platforms became central to both activism and child protection debates. The convergence shows how emergency-driven exceptions erode the functional boundaries between protection and control, normalizing a drift that would have been institutionally inconceivable before the mid-2000s.

Ethical Obsolescence

Mandatory reporting obligations introduced into U.S. internet policy in the 1990s to detect child exploitation have undergone a normative inversion since 2020. Scaled up by AI and integrated with data-sharing networks such as Interpol’s International Child Sexual Exploitation (ICSE) database, they now serve as default infrastructure for tracking digital behavior beyond their original mandate. The residual condition this exposes is that ethical frameworks premised on preventing individual harm become obsolete once the tools they legitimize are absorbed into anticipatory governance regimes focused on population-level risk.

Data Infrastructural Lock-in

Child abuse material detection systems like Microsoft's PhotoDNA, once embedded in national filtering regimes such as the hash lists distributed by the UK's Internet Watch Foundation, create irreversible dependencies in state monitoring infrastructure, where encrypted communication backdoors justified for child protection are repurposed to track dissident organizers during protests in Hong Kong and Belarus. This lock-in is non-obvious because these tools are integrated into lawful interception systems incrementally, through private-sector compliance protocols. That incrementalism obscures how initial child safety mandates become institutionalized as generalized surveillance enablers that cannot easily be disentangled without degrading core security functions.

Victimhood Capital Exploitation

Platforms such as Meta draw on the public goodwill generated by their visible enforcement of child safety policies (for example, removing grooming content on Instagram) to gain political immunity when the same behavioral profiling models are adapted by allied governments in India and Brazil to flag 'suspicious' activity among ethnic minorities and land rights activists. This exploitation of moral credibility is typically overlooked because policy debates focus on data accuracy or oversight mechanisms rather than on how the symbolic capital of protecting children inoculates companies against scrutiny when downstream surveillance harms emerge in jurisdictions with weak rule of law.

Threshold Substitution Effect

In Germany, where strict child protection laws required telecommunications providers to implement automated message scanning, the technical and legal threshold for permissible content inspection shifted from 'probable cause' to 'pattern-based anticipation'. This let intelligence agencies argue for analogous deployment against anti-nuclear activists by reclassifying their encrypted coordination as 'risk-correlated signaling'. The shift stays hidden because discussions concentrate on intent rather than on the procedural erosion of evidentiary standards. Here child safety tools act not as direct surveillance vectors but as precedents that redefine what counts as a legitimate trigger for state observation.