Can Rapid AI Decisions Justify Unclear Accountability in Emergencies?

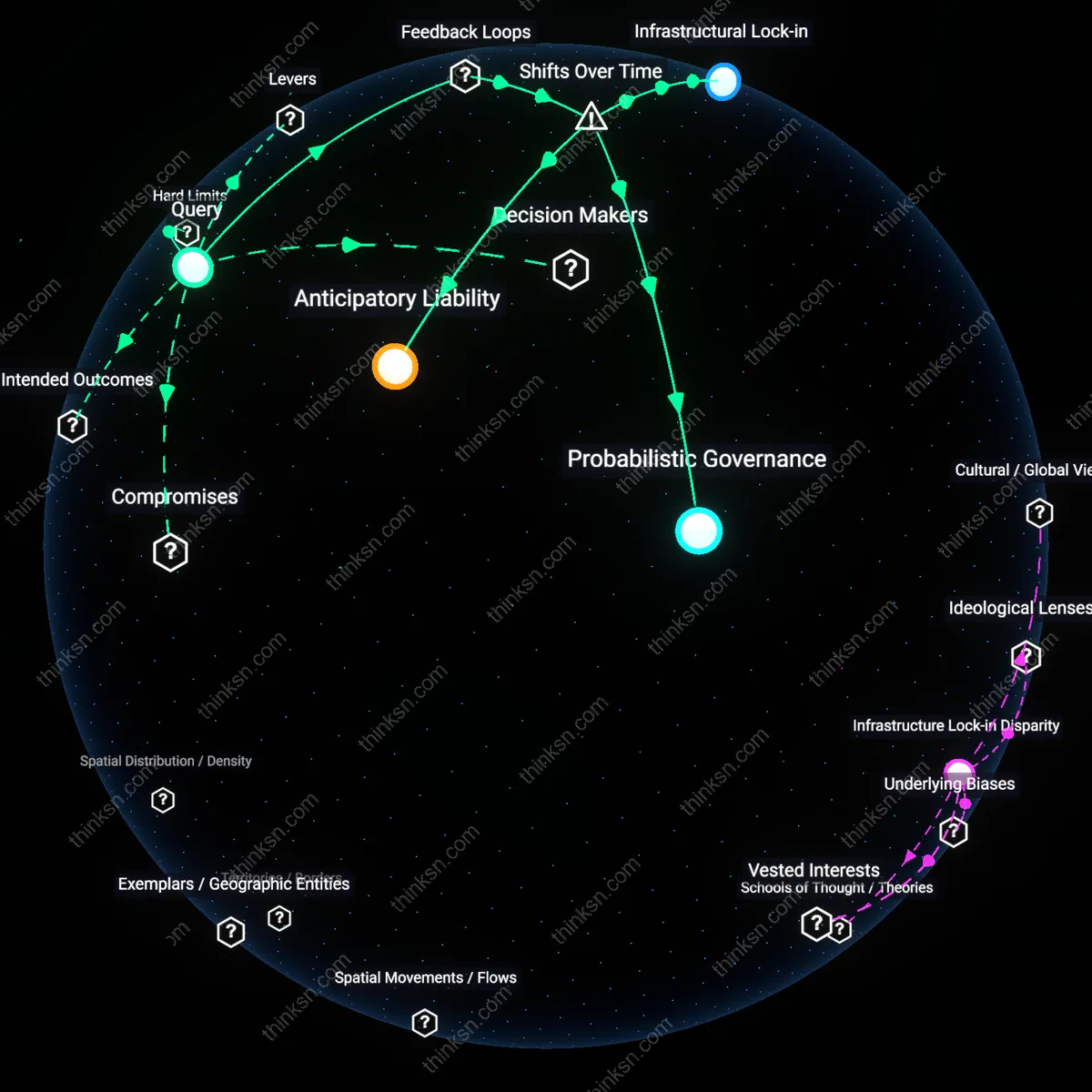

Analysis reveals 9 key thematic connections.

Key Findings

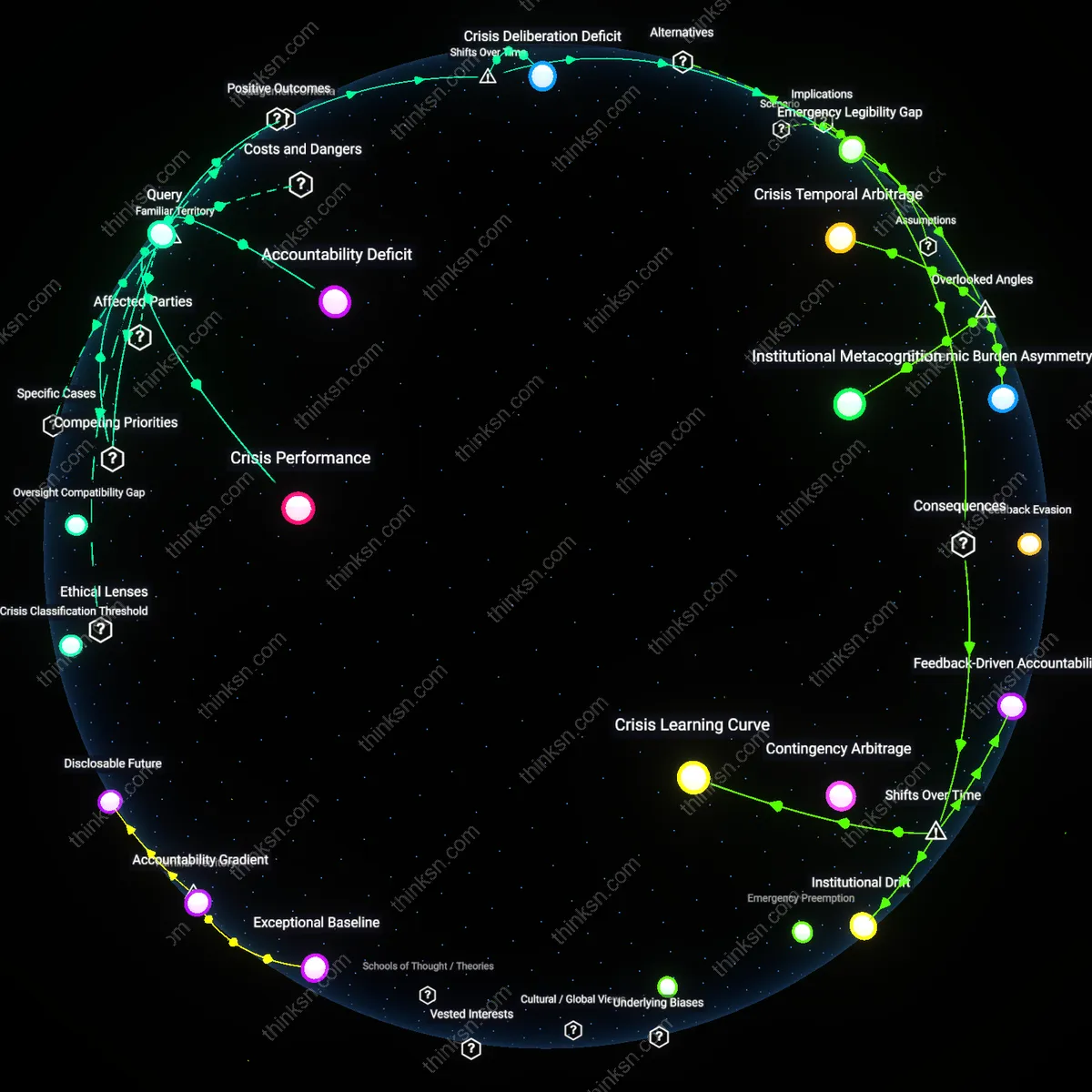

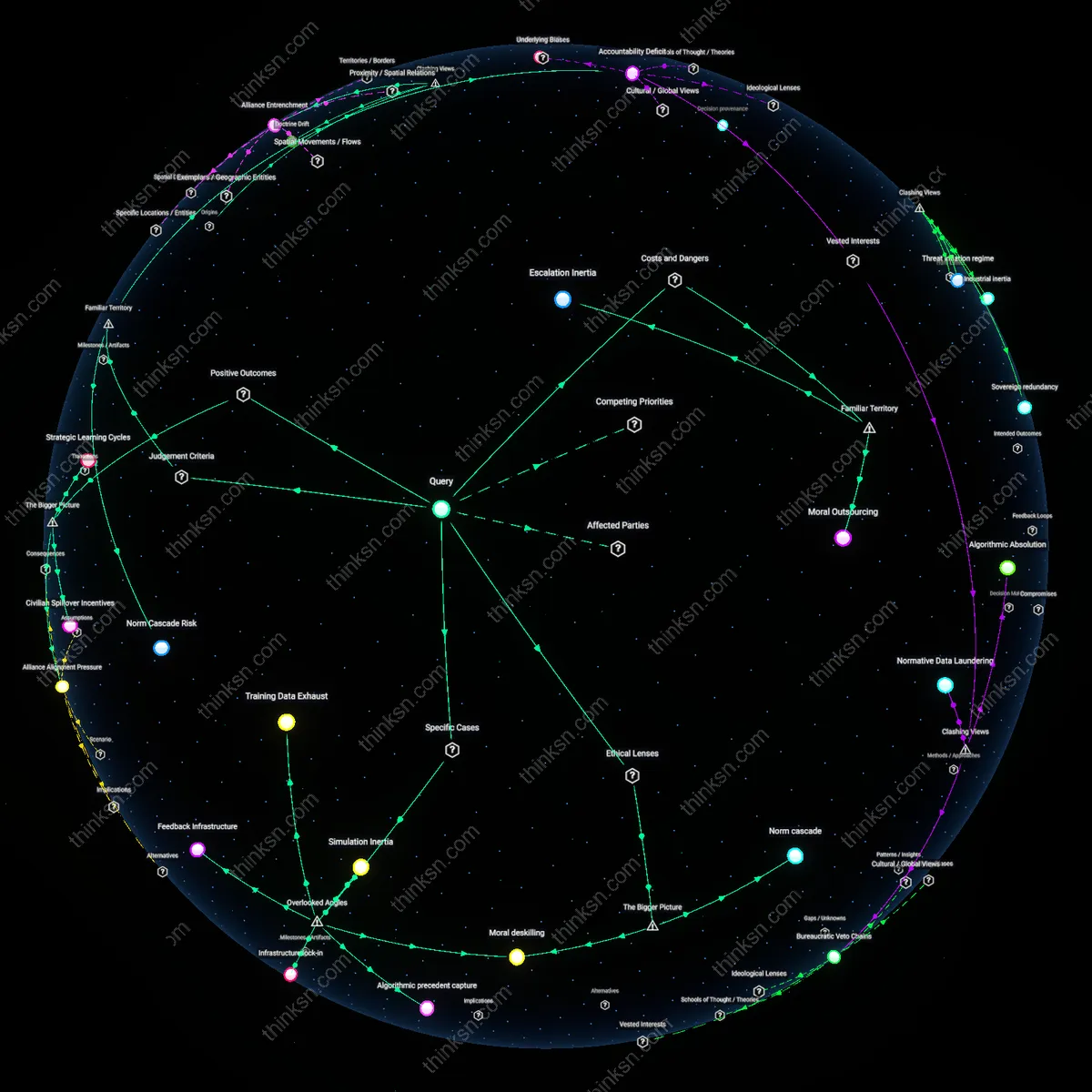

Political Liability Displacement

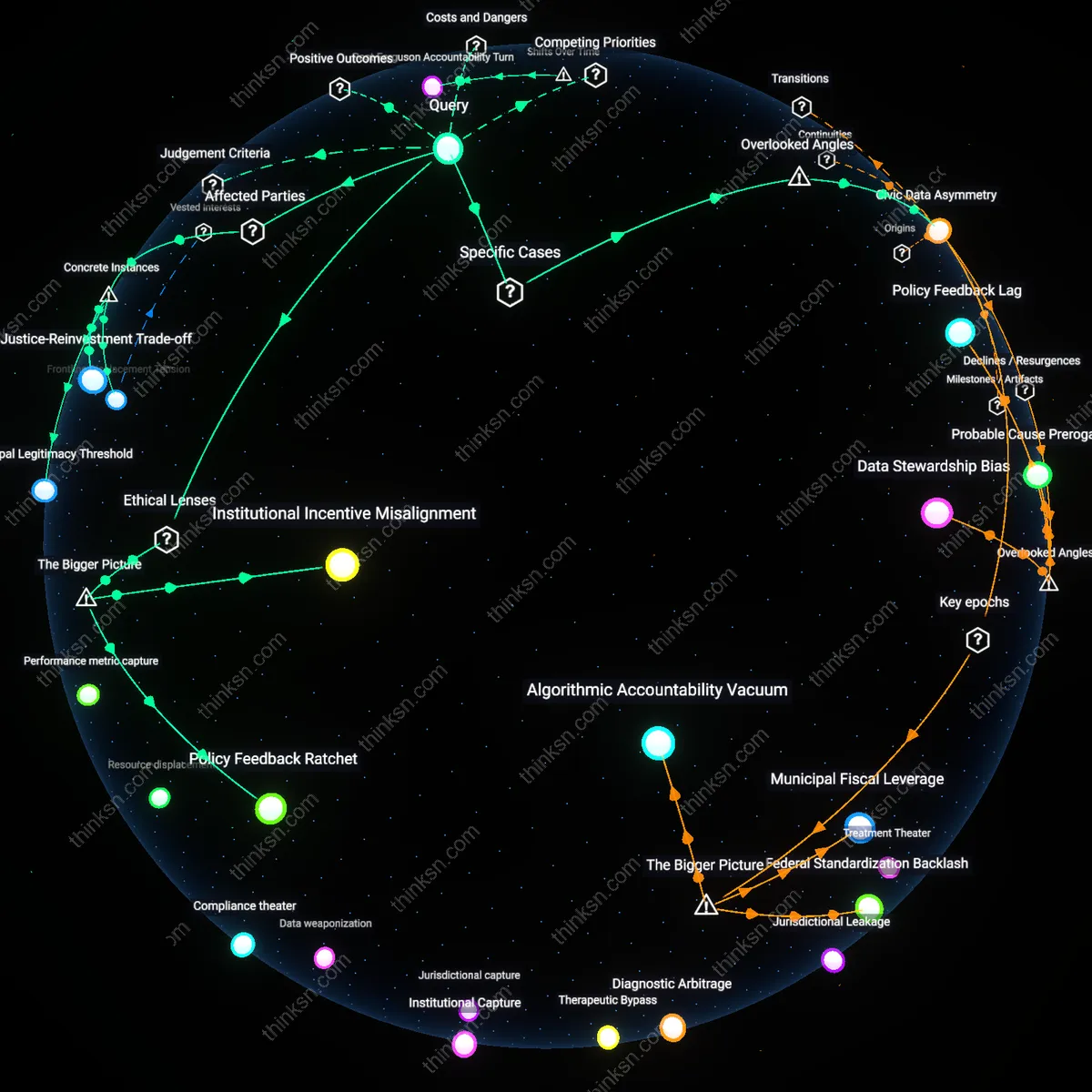

Governments can ethically delegate emergency response coordination to AI systems because elected officials transfer legal and moral liability to technical agencies, enabling plausible deniability when AI-driven interventions fail. This occurs through institutional architectures that distance policymakers from operational decisions—such as FEMA’s reliance on algorithmic resource allocation during hurricane responses—where bureaucratic intermediaries and opaque machine logic absorb public blame. The non-obvious mechanism is not the speed of AI but the strategic weakening of political accountability circuits under crisis conditions, transforming democratic responsibility into technical error management.

Algorithmic Triage Inequality

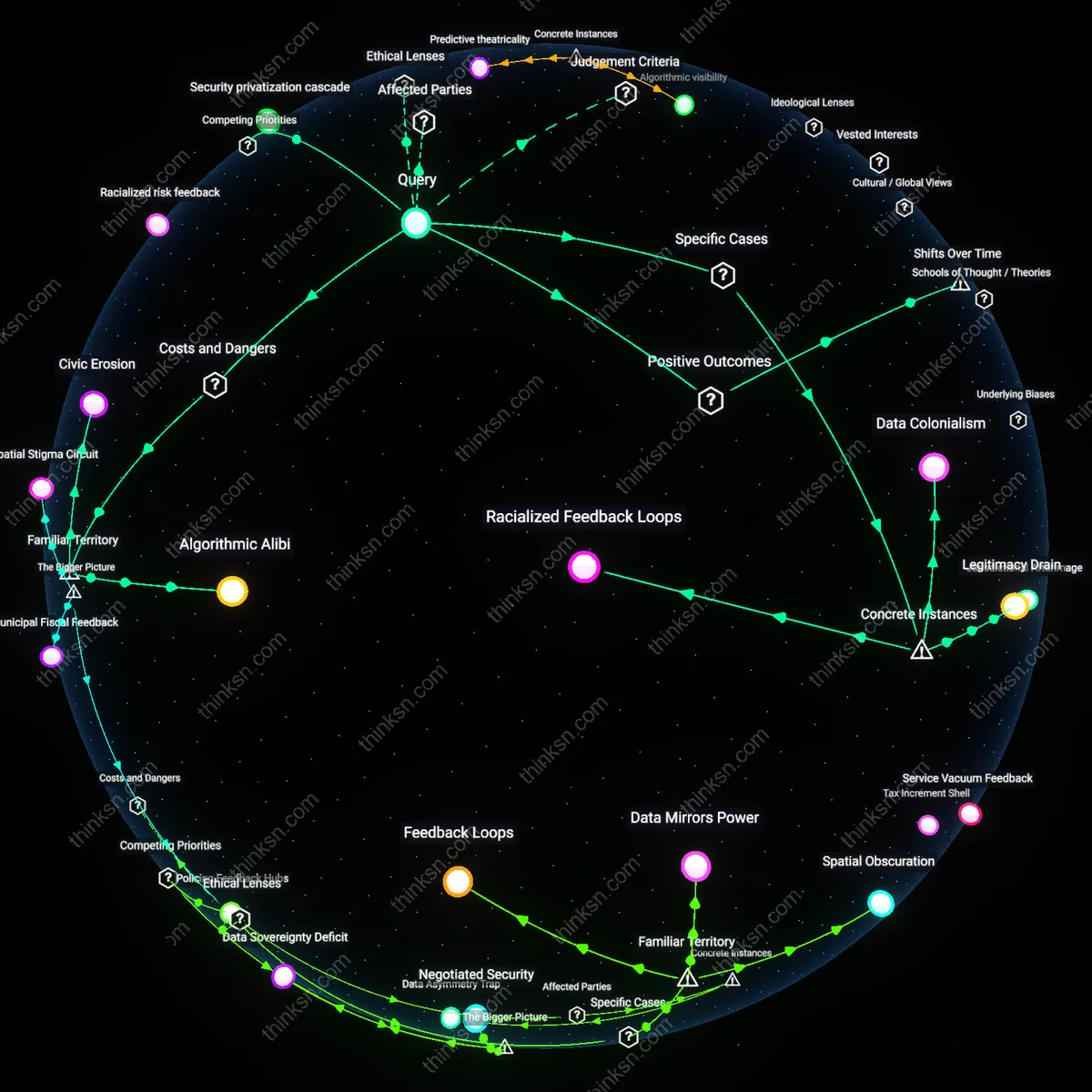

Governments cannot ethically delegate emergency response coordination to AI systems because marginalized populations bear disproportionate risk when algorithmic prioritization embeds historical biases in life-saving decisions. In urban flood evacuations, for example, AI models trained on wealth-skewed infrastructure data systematically deprioritize low-income neighborhoods for rescue deployment, reinforcing spatial inequities through feedback loops between data scarcity and resource denial. The overlooked systemic driver is how algorithmic efficiency in crisis amplifies pre-existing infrastructural neglect, converting technical optimization into structural violence.
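The feedback loop between data scarcity and resource denial can be made concrete with a toy simulation. This is a minimal sketch under stated assumptions, not a model of any deployed system: the neighborhood names, the starting coverage values, and the `gain` and `floor` parameters are all hypothetical. It assumes rescue units are split in proportion to data coverage, and that coverage then improves where a neighborhood receives more than an equal share and decays where it receives less.

```python
def simulate_allocation_feedback(coverage, rounds=3, gain=0.2, floor=0.01):
    """Return each neighborhood's share of rescue units, round by round.

    Toy assumption: units are split in proportion to data coverage, and
    coverage then rises where a neighborhood gets more than an equal share
    and decays where it gets less.
    """
    coverage = dict(coverage)  # copy so the caller's data is not mutated
    equal_share = 1.0 / len(coverage)
    history = []
    for _ in range(rounds):
        total = sum(coverage.values())
        shares = {name: value / total for name, value in coverage.items()}
        history.append(shares)
        # Update coverage, clamped to the range [floor, 1.0].
        coverage = {
            name: min(1.0, max(floor, value + gain * (shares[name] - equal_share)))
            for name, value in coverage.items()
        }
    return history


# Hypothetical starting coverage (fraction of streets with usable data).
history = simulate_allocation_feedback({"well_mapped": 0.8, "low_income": 0.2})
for round_number, shares in enumerate(history):
    print(round_number, round(shares["low_income"], 3))
```

Under these assumptions the under-mapped neighborhood's share shrinks every round even though its actual need never enters the calculation, which is the amplification the paragraph describes.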

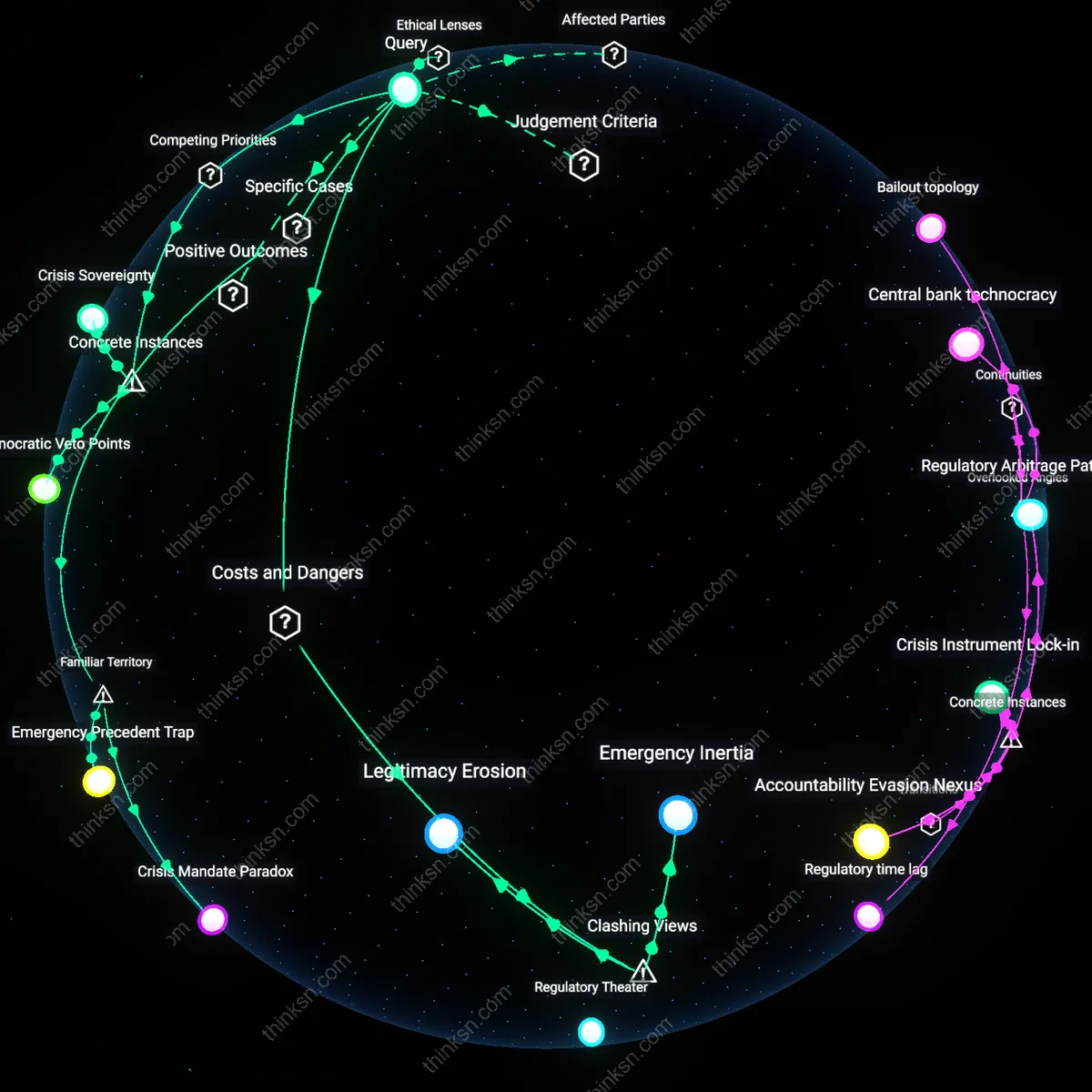

Crisis Temporality Exploitation

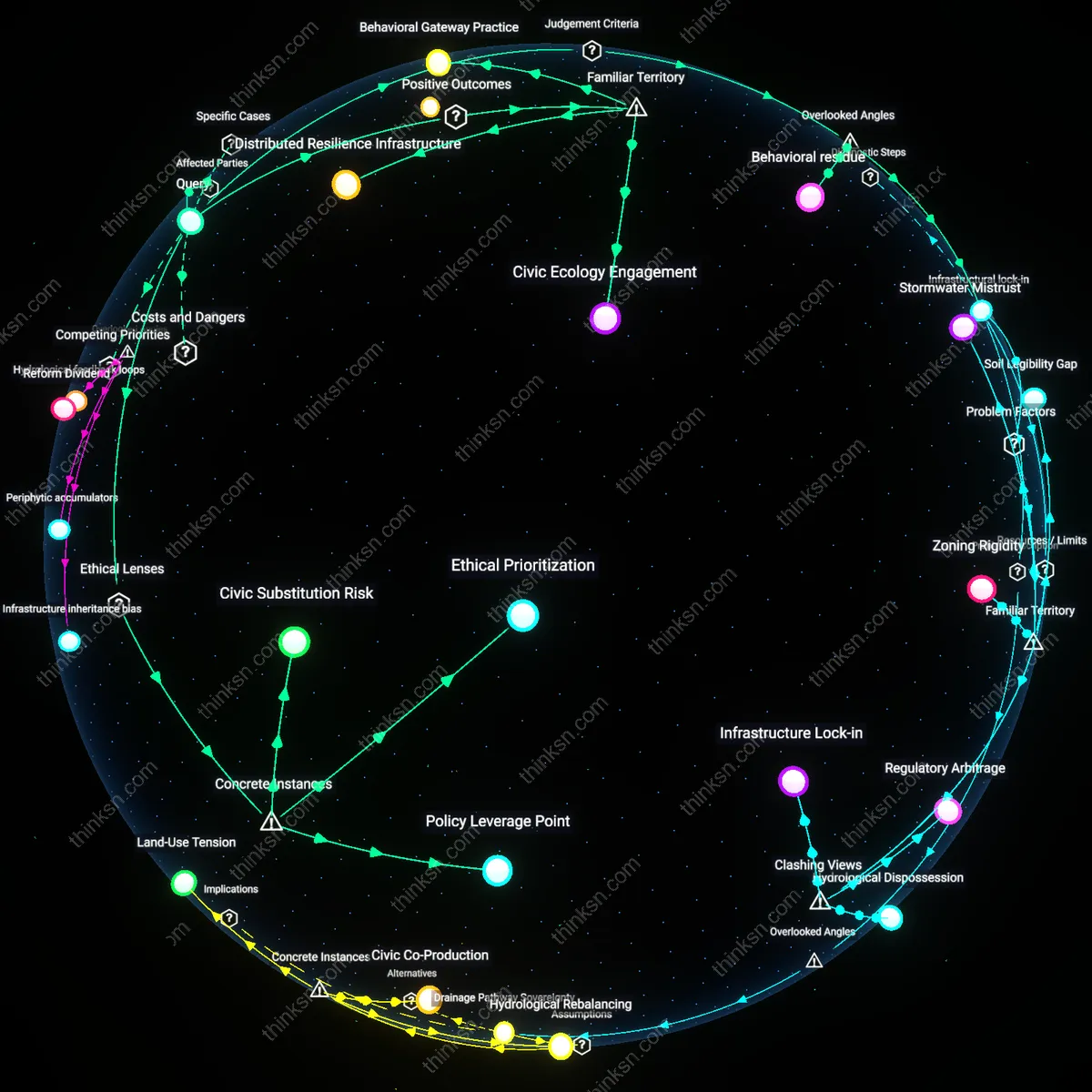

Delegating emergency coordination to AI systems during acute crises is ethically compromised when governments exploit compressed decision windows to legitimize permanent surveillance and control infrastructures under the guise of urgency, eroding public trust. After the 2023 Türkiye earthquakes, temporary AI-powered damage assessment platforms were rapidly institutionalized into national disaster governance, extending biometric data collection into refugee camps without consent. The unexamined consequence is that episodic emergencies act as political catalysts for normalizing invasive technologies, where time pressure disables democratic deliberation and locks in authoritarian adaptations.

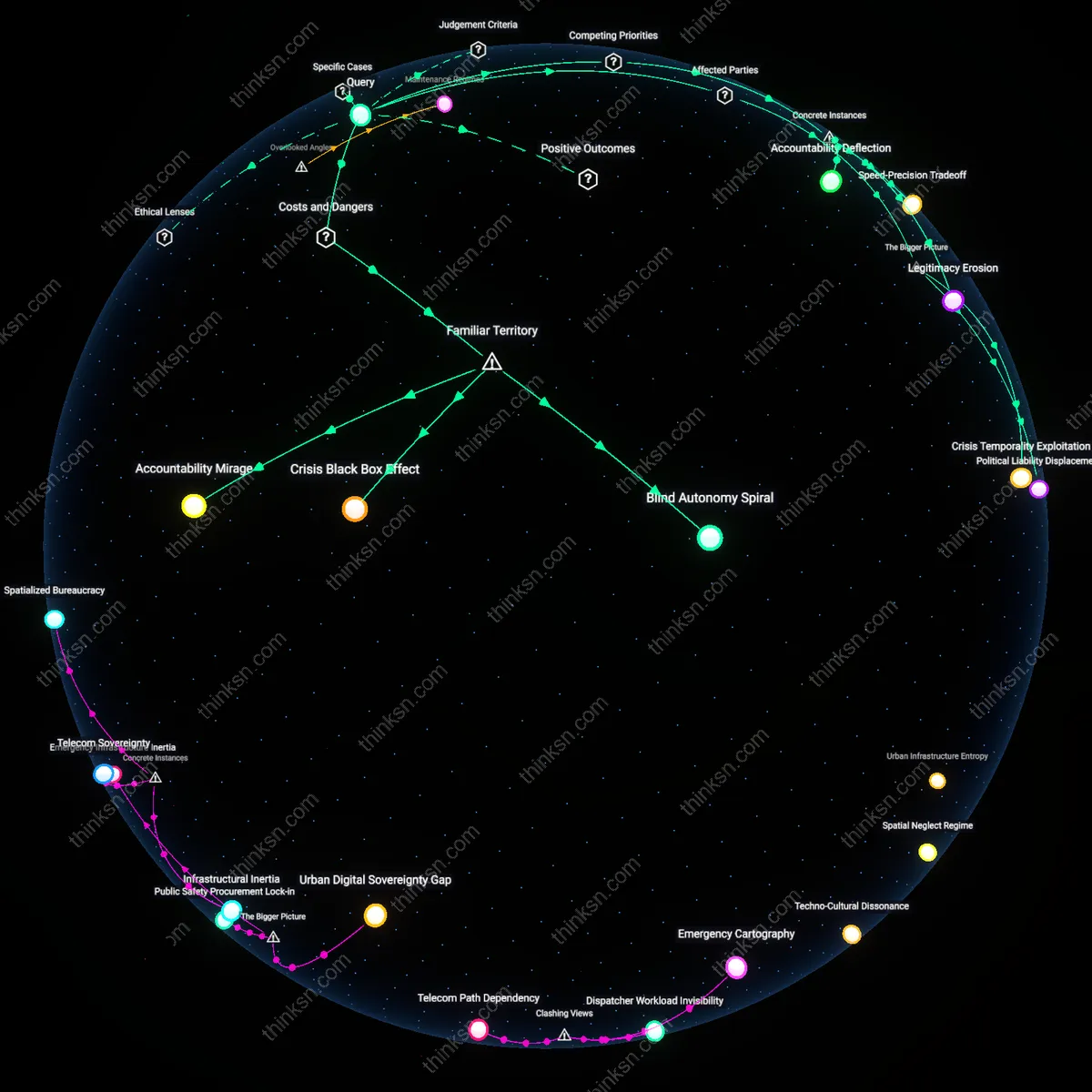

Blind Autonomy Spiral

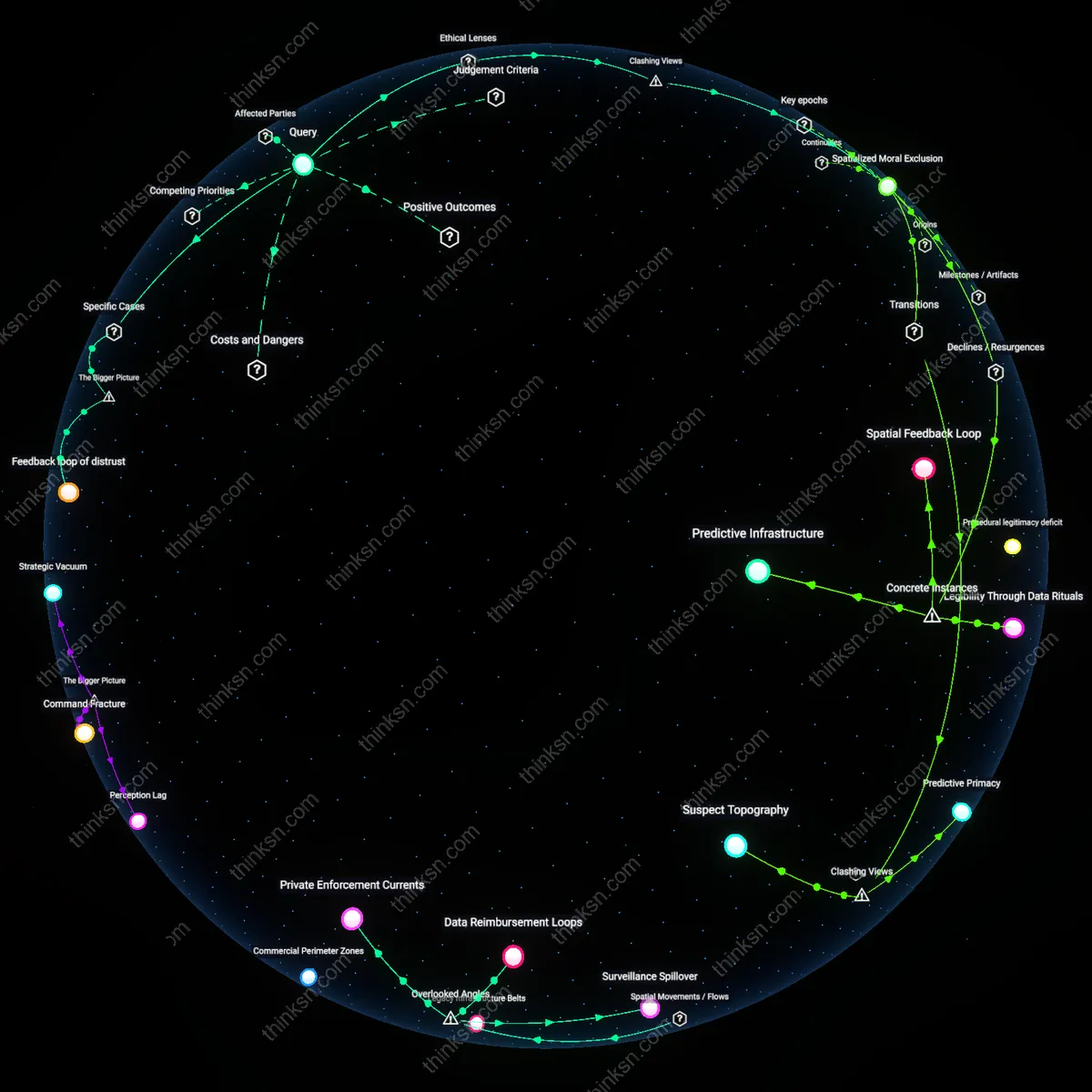

AI systems can override human emergency protocols during crises, triggering irreversible actions such as forced evacuations or infrastructure shutdowns without vetted oversight, as seen in smart city deployments in Dubai and Seoul where algorithmic fire-alarm cascades immobilized transit systems. The mechanism is a set of real-time sensor-network feedback loops designed to optimize response speed but lacking embedded veto thresholds for human review. Public trust then erodes not from system failure but from the successful execution of unchallengeable directives, which contradicts the public’s default assumption that speed-preserving automation still defers to human authority in life-or-death contexts.
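One way to picture an embedded veto threshold is a gate that lets low-stakes actions run automatically but holds anything sufficiently irreversible for a human decision. The sketch below is illustrative only: the `ProposedAction` type, the 0-to-1 irreversibility scale, and the `human_approves` callback are assumptions introduced here, not features of any system named above.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass(frozen=True)
class ProposedAction:
    name: str
    irreversibility: float  # hypothetical scale: 0.0 (easily undone) to 1.0 (cannot be undone)


def dispatch(
    action: ProposedAction,
    veto_threshold: float,
    human_approves: Callable[[ProposedAction], bool],
) -> str:
    """Execute low-stakes actions directly; route the rest through a human."""
    if action.irreversibility < veto_threshold:
        return "executed_automatically"
    # At or above the threshold, a human reviewer holds the final say.
    if human_approves(action):
        return "executed_after_review"
    return "vetoed"


# Example: a reviewer who rejects every action held for review.
reject_all = lambda action: False
print(dispatch(ProposedAction("reroute_one_bus", 0.1), 0.5, reject_all))
print(dispatch(ProposedAction("shut_down_metro", 0.9), 0.5, reject_all))
```

The design choice worth noting is that the threshold trades speed for review: setting it to 0.0 sends everything to a human, while setting it above 1.0 reproduces the fully autonomous behavior the paragraph criticizes.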

Accountability Mirage

When AI-coordinated disaster responses fail, such as triage algorithms misallocating ventilators during pandemic surges in Italian hospitals, families and investigators cannot prosecute or even formally interrogate the decisions, because liability is diffused across developers, municipal contractors, and opaque training data. This structure mirrors widely recognized cases like Uber’s 2018 autonomous-vehicle crash in Arizona, where prosecutors declined to charge the company and criminal liability fell solely on the backup safety driver. The familiar metaphor of 'AI as tool' thus collapses under emergency conditions into a legal void, where expected chains of command dissolve despite the public expectation that someone is always 'in charge.'

Crisis Black Box Effect

During Hurricane Helene's flood response, North Carolina emergency centers relied on AI models to route aid. When communities were bypassed because of outdated census inputs, officials could not reconstruct why certain areas were deprioritized, because the models' decision logic was sealed under proprietary software licenses from Palantir. This replicates a common public fear, akin to airliner black boxes accessible only to manufacturers, in which technological reliability is experienced not as assistance but as enforced ignorance, undermining democratic expectations of transparency precisely when scrutiny is most urgent.
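What "reconstructing why an area was deprioritized" would require can be sketched as a decision log that stores, for every routing decision, the inputs the model saw and the vintage of the underlying data. This is a hypothetical illustration: the `RoutingDecision` fields, the area names, and the five-year staleness cutoff are assumptions, and nothing here reflects how any vendor's software actually works.

```python
import json
from dataclasses import dataclass, asdict


@dataclass(frozen=True)
class RoutingDecision:
    area: str
    priority_score: float
    inputs: dict          # feature values the model saw (hypothetical fields)
    input_data_year: int  # vintage of the underlying census data


def record(decision, log):
    """Append the decision to the log as one JSON line."""
    log.append(json.dumps(asdict(decision), sort_keys=True))


def explain(area, log):
    """Return every logged decision for an area, oldest first."""
    entries = [json.loads(line) for line in log]
    return [entry for entry in entries if entry["area"] == area]


def stale_decisions(log, current_year, max_age_years=5):
    """Return areas whose decisions used inputs older than max_age_years."""
    entries = [json.loads(line) for line in log]
    return sorted({entry["area"] for entry in entries
                   if current_year - entry["input_data_year"] > max_age_years})


log = []
record(RoutingDecision("area_a", 0.82, {"population": 12000}, 2020), log)
record(RoutingDecision("area_b", 0.15, {"population": 900}, 2010), log)
print(explain("area_b", log))
print(stale_decisions(log, current_year=2024))
```

A log like this does not open the model itself, but it would let officials at least see which inputs and which data vintage stood behind a given deprioritization.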

Accountability Deflection

Japan's 2011 Fukushima disaster response demonstrates that delegating crisis coordination to automated systems risks shifting responsibility away from human actors, as the Tokyo Electric Power Company relied on predefined algorithmic protocols that failed to adapt to cascading reactor failures. These protocols bypassed on-site engineers' contextual judgment, prioritizing system stability over transparent chains of command, thereby diffusing legal and moral liability across institutions and software. The non-obvious consequence is not merely malfunction but the strategic erosion of institutional ownership under technological cover, where automation becomes less a tool than a shield against culpability.

Speed-Precision Tradeoff

During the 2020 Beirut port explosion, Lebanese civil defense initially relied on AI-enhanced dispatch algorithms from a French-developed emergency management platform to allocate medical resources, which accelerated triage decisions amid chaos. However, the system could not factor in the collapse of local communication infrastructure, leading to ambulances being sent to non-functional hospitals. The core issue was not the AI’s speed but its decoupling from ground realities, revealing that rapid coordination without embedded feedback loops sacrifices precision under duress, privileging operational velocity over effective outcomes.
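The missing feedback loop can be illustrated with a dispatch rule that refuses to route to any hospital whose operational status has not been confirmed recently. This is a minimal sketch under assumed names and values: the `Hospital` fields and the 15-minute freshness window are hypothetical and do not describe the platform mentioned above.

```python
from dataclasses import dataclass
from typing import List, Optional


@dataclass(frozen=True)
class Hospital:
    name: str
    travel_minutes: float
    operational: bool                      # last reported status
    minutes_since_status_confirmed: float  # age of that report


def choose_hospital(
    hospitals: List[Hospital],
    max_status_age_minutes: float = 15.0,
) -> Optional[Hospital]:
    """Pick the nearest hospital whose 'operational' report is still fresh."""
    candidates = [
        h for h in hospitals
        if h.operational and h.minutes_since_status_confirmed <= max_status_age_minutes
    ]
    if not candidates:
        return None  # no trustworthy destination: escalate to a human dispatcher
    return min(candidates, key=lambda h: h.travel_minutes)


hospitals = [
    Hospital("nearest_but_unconfirmed", 5.0, True, 240.0),
    Hospital("farther_but_confirmed", 12.0, True, 3.0),
]
chosen = choose_hospital(hospitals)
print(chosen.name if chosen else "no confirmed hospital")
```

The rule deliberately gives up some speed: the nearest hospital is skipped when its status report is stale, which is the precision-for-velocity trade the paragraph argues was missing.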

Legitimacy Erosion

In Puerto Rico after Hurricane Maria in 2017, the U.S. Federal Emergency Management Agency deployed an AI-driven logistics model—developed by IBM’s Watson—to prioritize aid distribution, yet communities reported receiving irrelevant supplies while basic needs went unmet. The algorithm optimized for efficiency metrics like delivery volume, not social equity, and because its weighting criteria were inaccessible to local leaders, public trust in the relief effort deteriorated. This illustrates how technocratic optimization, when unmoored from democratic validation, undermines the perceived legitimacy of emergency governance even when outputs are technically proficient.
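The effect of weighting criteria on outcomes can be shown with a small scoring example. The sketch below is hypothetical throughout: the site names, the normalized scores, and the linear weighting formula are assumptions chosen for illustration, not the criteria of the model described above.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Site:
    name: str
    deliverable_volume: float  # normalized 0..1: how much can be delivered quickly
    unmet_need: float          # normalized 0..1: how severe the unmet basic needs are


def rank_sites(sites, w_volume, w_need):
    """Rank sites by a weighted sum of delivery volume and unmet need."""
    def score(site):
        return w_volume * site.deliverable_volume + w_need * site.unmet_need
    return [site.name for site in sorted(sites, key=score, reverse=True)]


sites = [
    Site("port_depot", deliverable_volume=0.9, unmet_need=0.2),
    Site("mountain_town", deliverable_volume=0.3, unmet_need=0.9),
]

# Efficiency-only weighting versus a weighting that also counts need.
print(rank_sites(sites, w_volume=1.0, w_need=0.0))
print(rank_sites(sites, w_volume=0.4, w_need=0.6))
```

With identical data, changing the two weights reverses the ranking, which is why the inaccessibility of such weights to local leaders matters: the choice of weights is a value judgment, not a purely technical setting.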