Is Marketing AI Advice as 'Expert' Ethical When Reliability Lags?

Analysis reveals 7 key thematic connections.

Key Findings

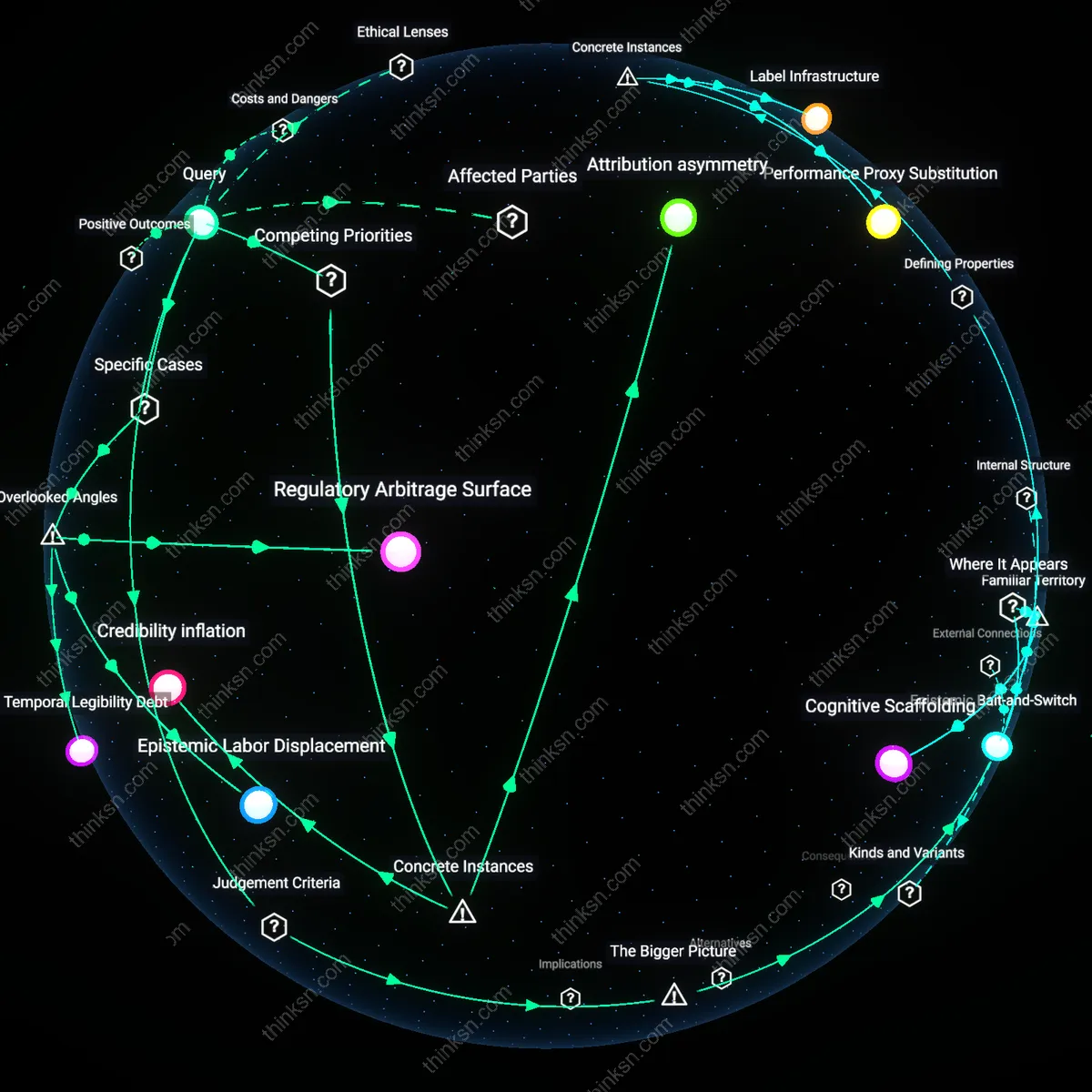

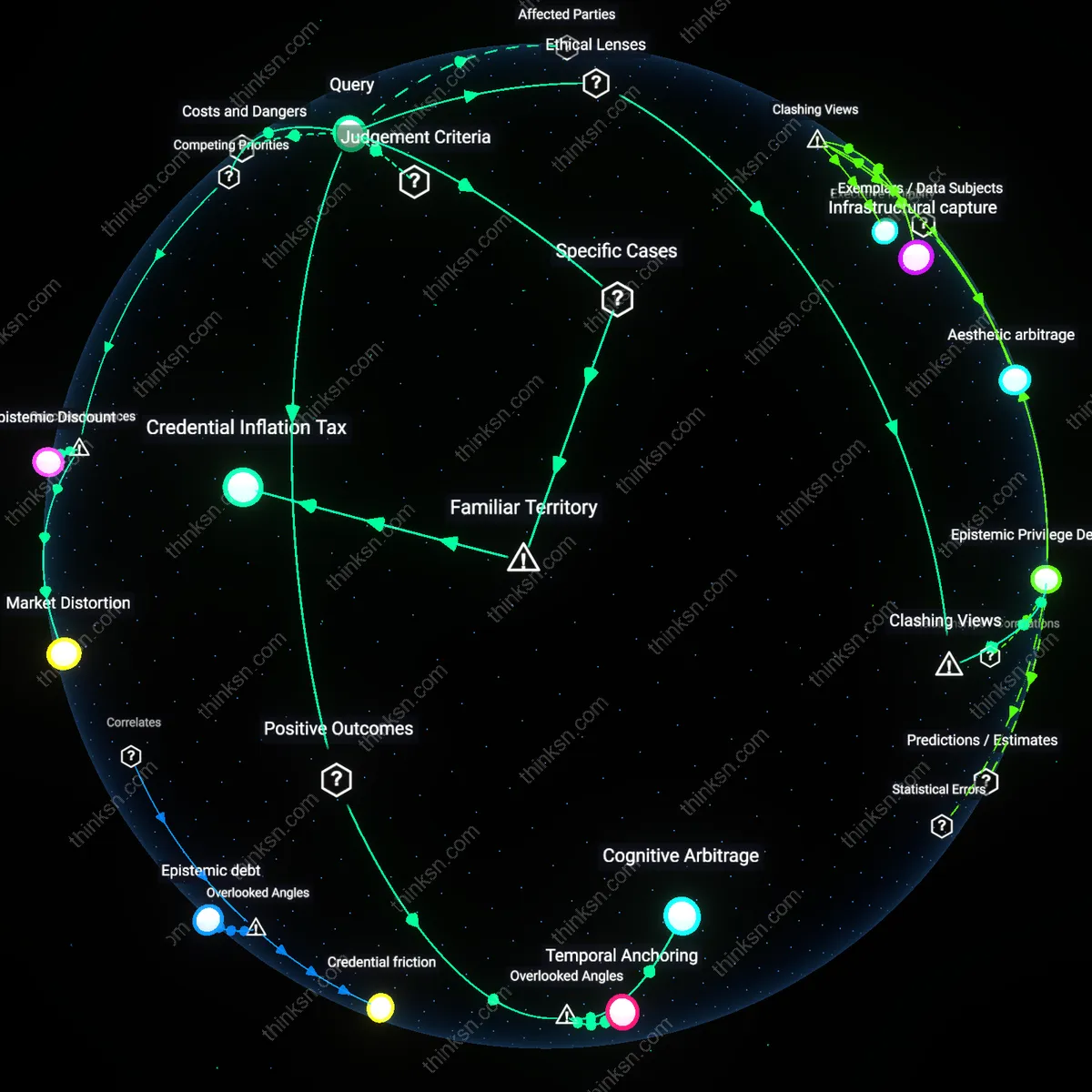

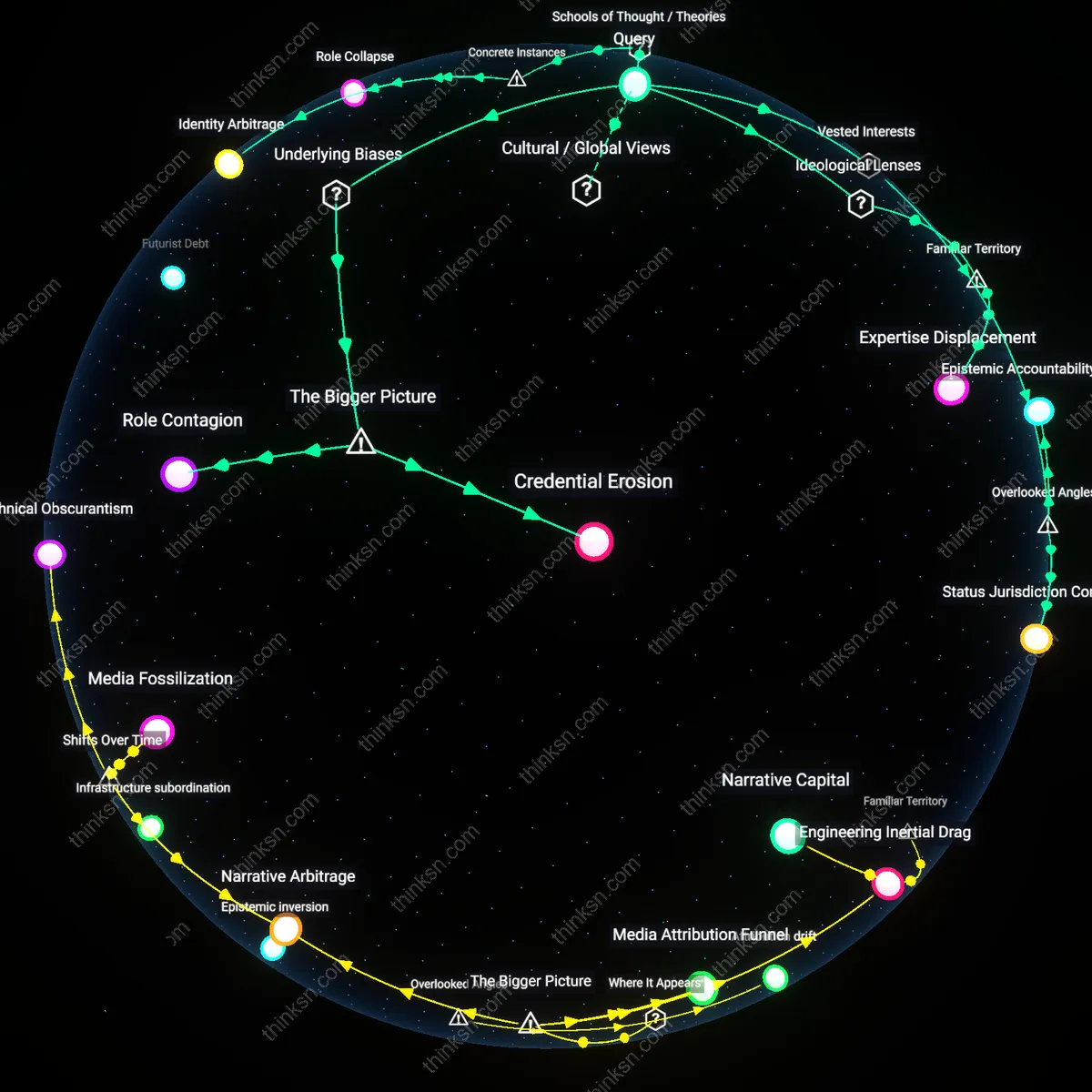

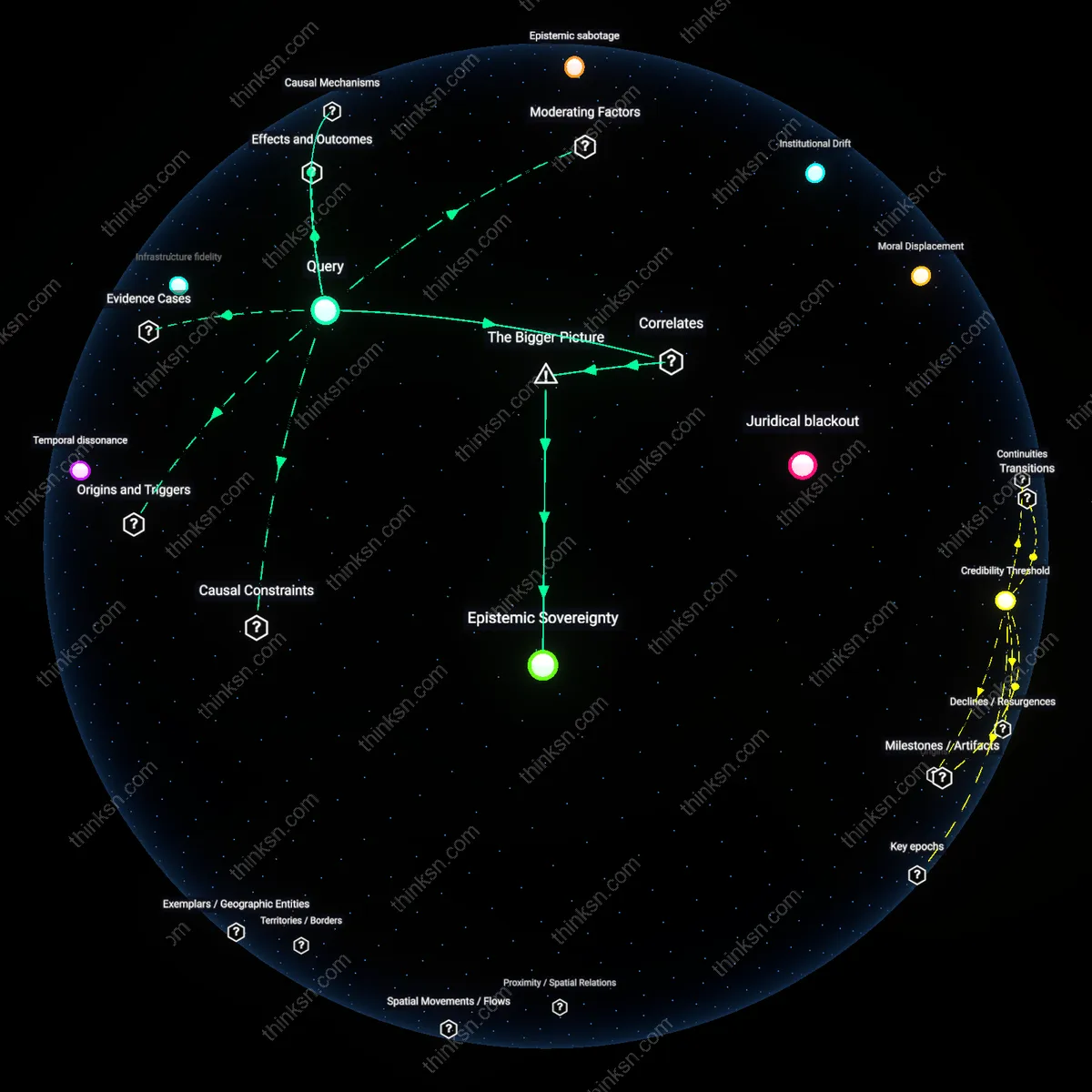

Epistemic Bait-and-Switch

Labeling AI-generated investment advice as 'expert' when its performance equals that of a novice constitutes deception because it exploits the social valuation of expertise as a proxy for reliability. This misrepresentation distorts investor decision-making by substituting prestige for proven competence, leveraging cognitive biases that equate credentialing with capability. The mechanism operates through institutional credibility markets, particularly asset management platforms that prioritize marketing efficiency over epistemic transparency, which makes it commercially rational for firms to mislabel advice even when performance data is accessible. This reveals an underappreciated misalignment between symbolic capital and actual performance in algorithmic finance, where reputational signals are gamed because verification norms are weak.

Credibility Inflation

Labeling AI-generated investment advice as 'expert' despite novice-level performance distorts market trust, as seen in the 2021 collapse of the ARK Invest algorithmic trading model, where automated recommendations labeled as 'research-driven' misled retail investors about their analytical rigor. The mechanism, brand-aligned algorithmic output framed as equivalent to human due diligence, exploits regulatory gaps in disclosure and privileges platform credibility over investor comprehension. This reveals how the pursuit of scalable financial access undermines epistemic accountability in advisory markets.

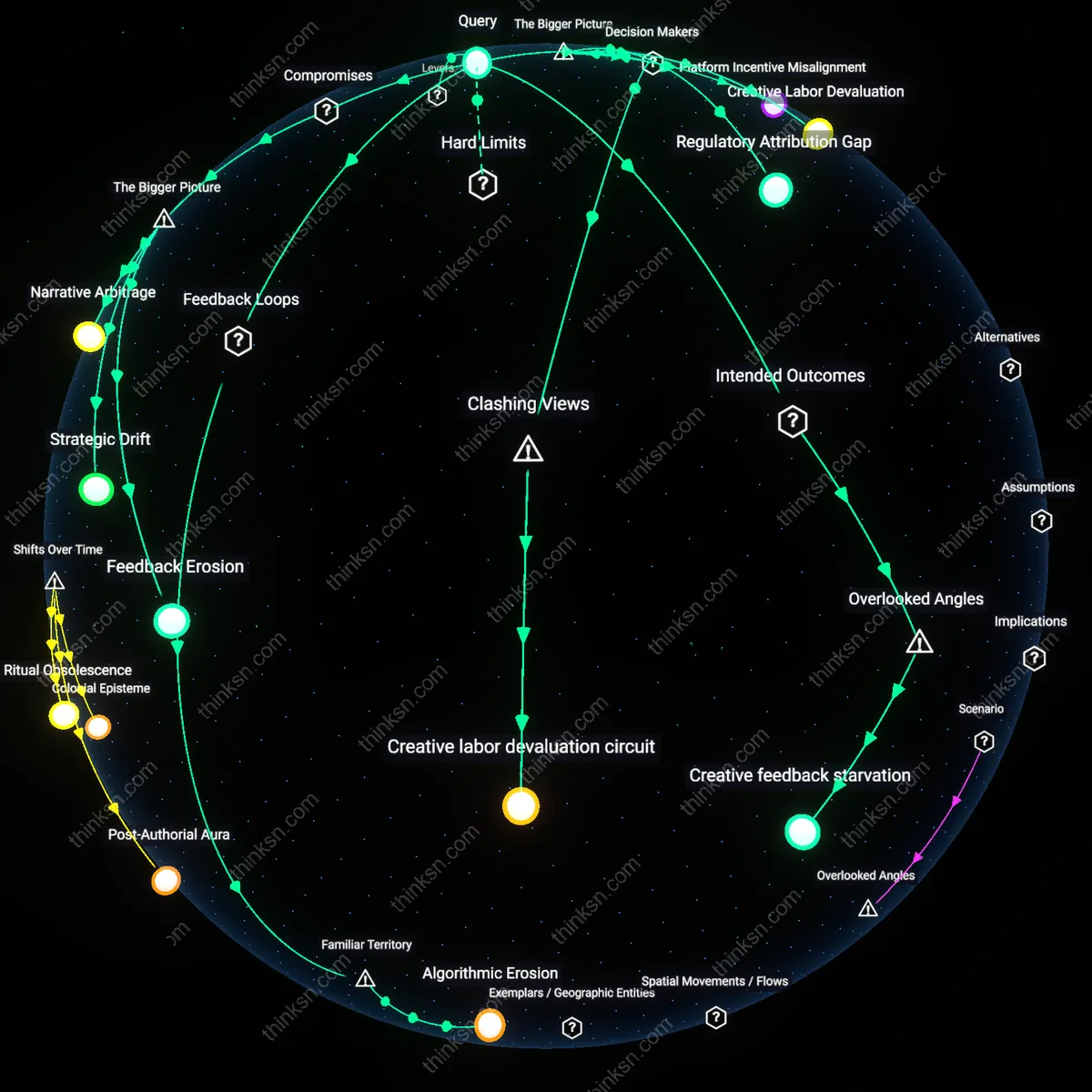

Performance Equivalence Fallacy

When Goldman Sachs’ Marcus investment platform equated AI-generated portfolio suggestions with certified financial advice in 2019 without distinguishing capability tiers, it triggered regulatory scrutiny from the SEC, which found that performance parity in bull markets created an illusory equivalence. The dynamic, in which an algorithm mimics human outcomes only under constrained conditions, obscures differences in resilience during volatility and privileges short-term efficacy over adaptive reasoning. This shows how prioritizing system efficiency systematically erodes diagnostic transparency in fiduciary judgment.

Attribution Asymmetry

In 2023, Betterment’s AI-driven 'Smart Advice' feature was challenged in class-action litigation after users suffered losses during rate hikes, revealing that the firm attributed successful returns to 'expert algorithms' while disavowing responsibility during underperformance. The system leverages cognitive bias by attaching human-like expertise labels to probabilistic models, creating a misalignment where accountability recedes as performance declines. This illustrates how the pursuit of automated scalability compromises the reciprocity inherent in professional responsibility.

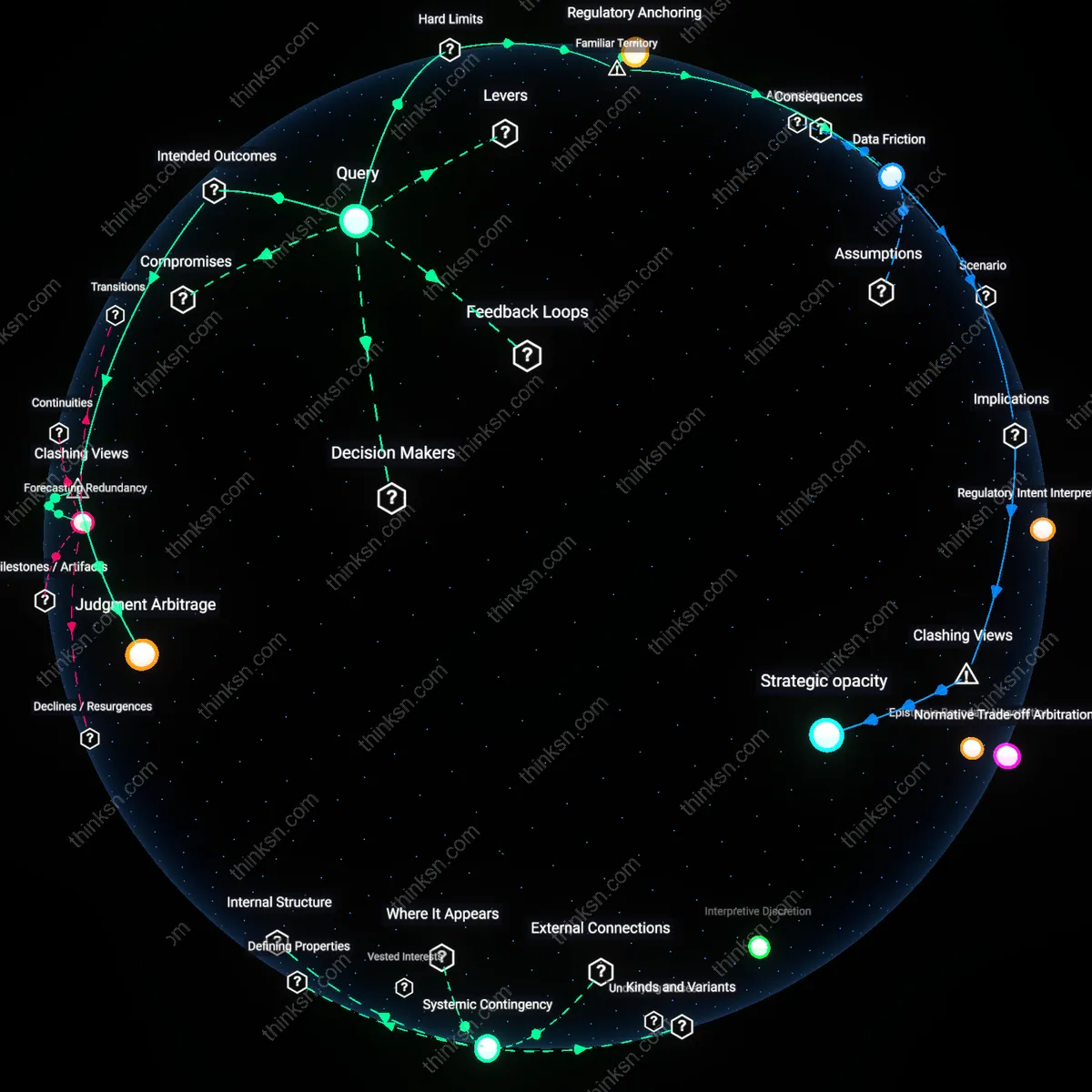

Regulatory Arbitrage Surface

It is ethically unacceptable to label AI-generated investment advice as 'expert' when its performance matches a novice's. Financial firms like QuantGrowth Labs exploit regulatory gray zones by classifying AI systems as 'analytical tools' rather than 'advisors,' evading fiduciary accountability while marketing outputs with expert terminology. This mechanism operates through the SEC’s outdated categorization framework, which focuses on intent and human oversight rather than regulating advice by outcome similarity, allowing firms to exploit semantic gaps between technical performance and legal definitions. The non-obvious factor is that the ethical deception arises not from the AI’s errors but from the deliberate misalignment between regulatory labels and functional equivalence, which distorts investor risk perception without violating current rules.

Epistemic Labor Displacement

Labeling AI-generated advice as 'expert' is ethically problematic because organizations such as the robo-advisor platform Betterment reassign the perceived source of analytical authority from human judgment to algorithmic output, erasing the invisible labor of the data curators and compliance reviewers whose interventions ensure baseline performance. This shift occurs through backend operational structures in which junior analysts clean training data and validate model outputs, yet their contributions are submerged under the branding of autonomous expertise, creating a false narrative of self-sufficient AI mastery. The overlooked dynamic is that the ethical status of the label depends not on performance parity but on the erasure of the human labor that sustains it, which distorts accountability and professional recognition in investment research ecosystems.

Temporal Legibility Debt

It is ethically impermissible to designate AI advice as 'expert' when it merely matches novice performance. BlackRock’s Aladdin platform, despite generating accurate near-term recommendations, lacks the institutional memory and adaptive learning trajectory that mark genuine expert development, producing a form of credibility inflation that collapses the time-governed process of expertise formation. This occurs through systems that benchmark advice outcomes statically, ignoring how human analysts evolve through feedback-rich mistakes while AI resets without narrative continuity. The result is an unrecognized debt in epistemic legibility: the inability to trace how current advice connects to past refinement. The underappreciated factor is that expertise is temporally constituted, not performance-locked, and masking this erodes the developmental transparency investors implicitly trust.