Do Energy Firms Underreport Pollution Through Custom Standards?

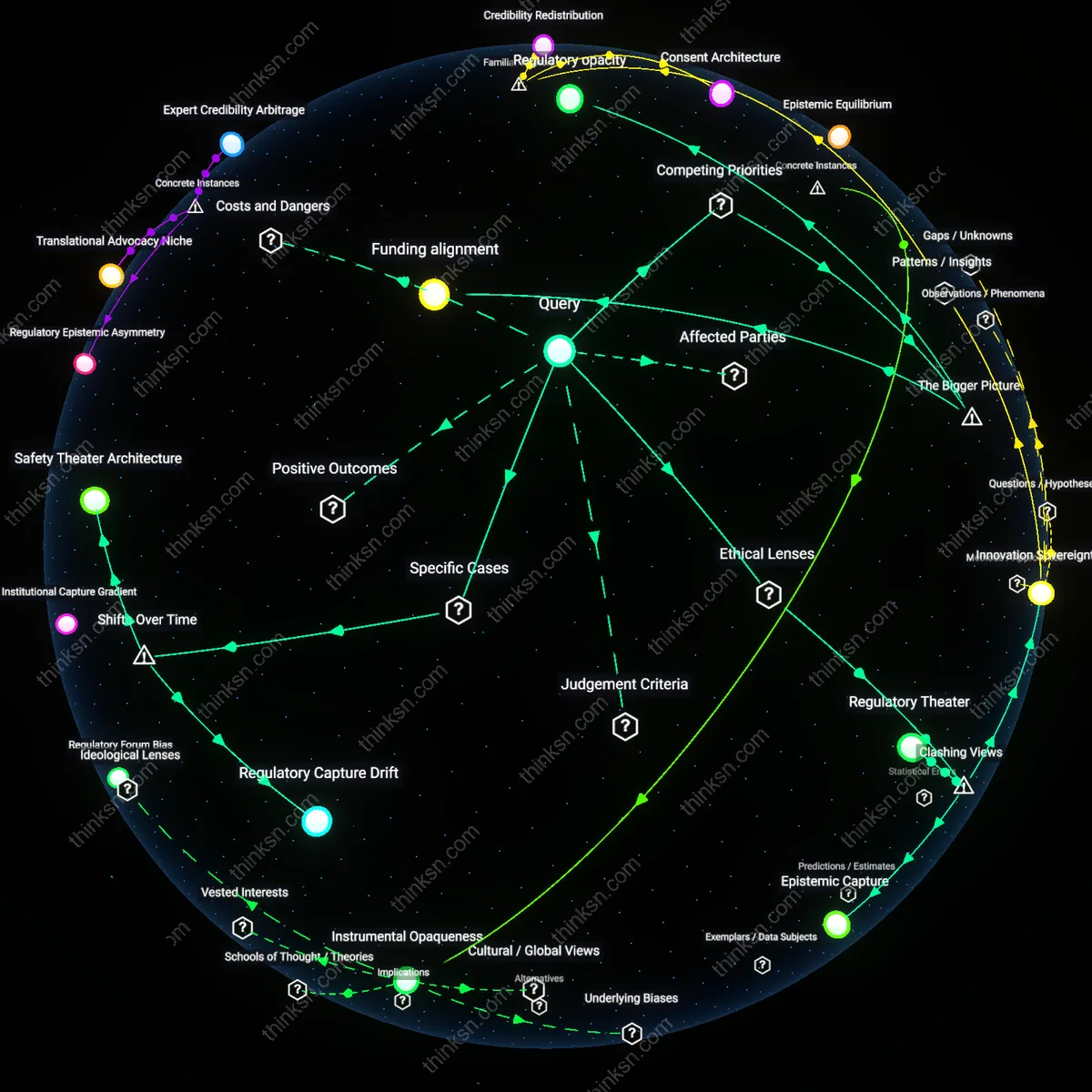

This analysis identifies 10 key thematic connections.

Key Findings

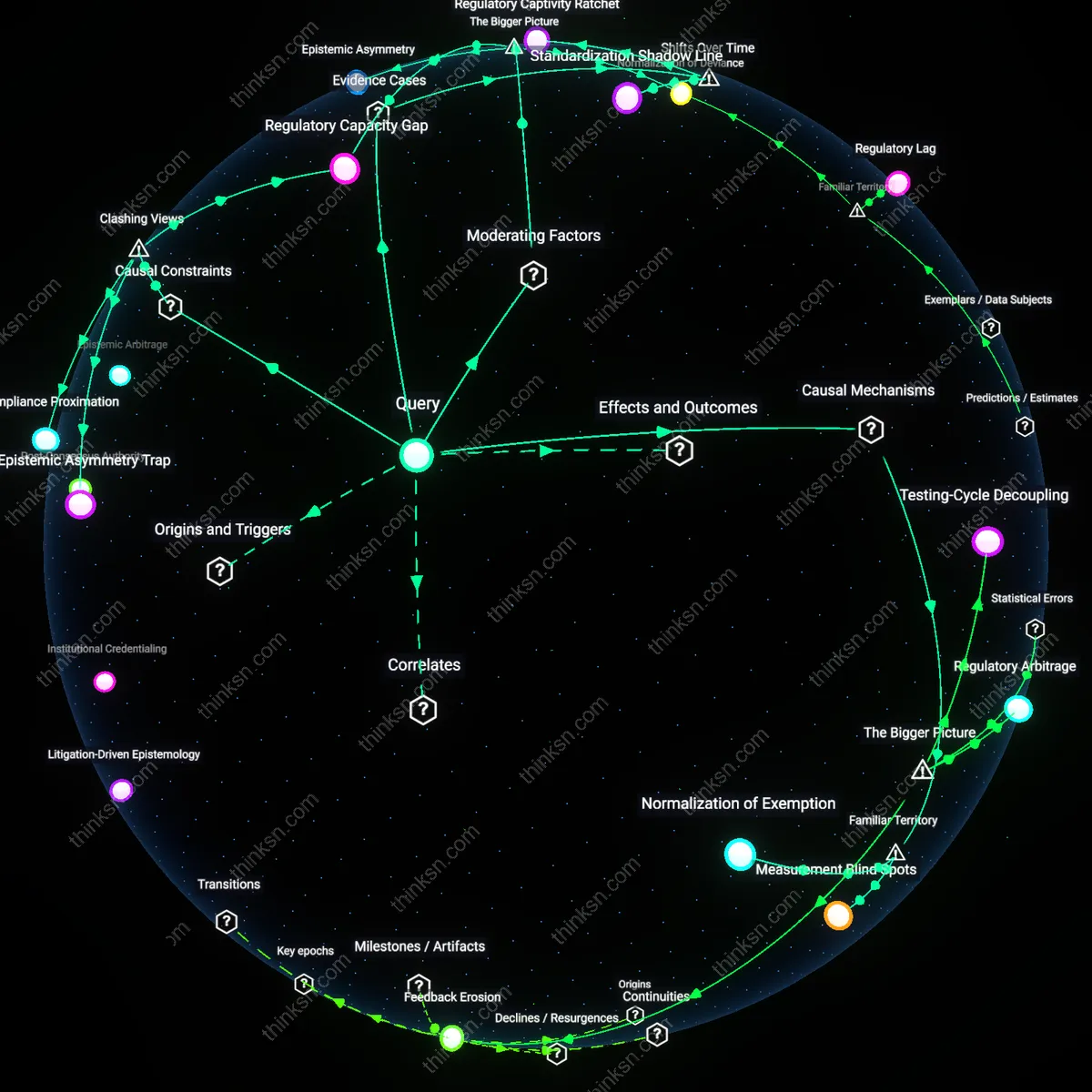

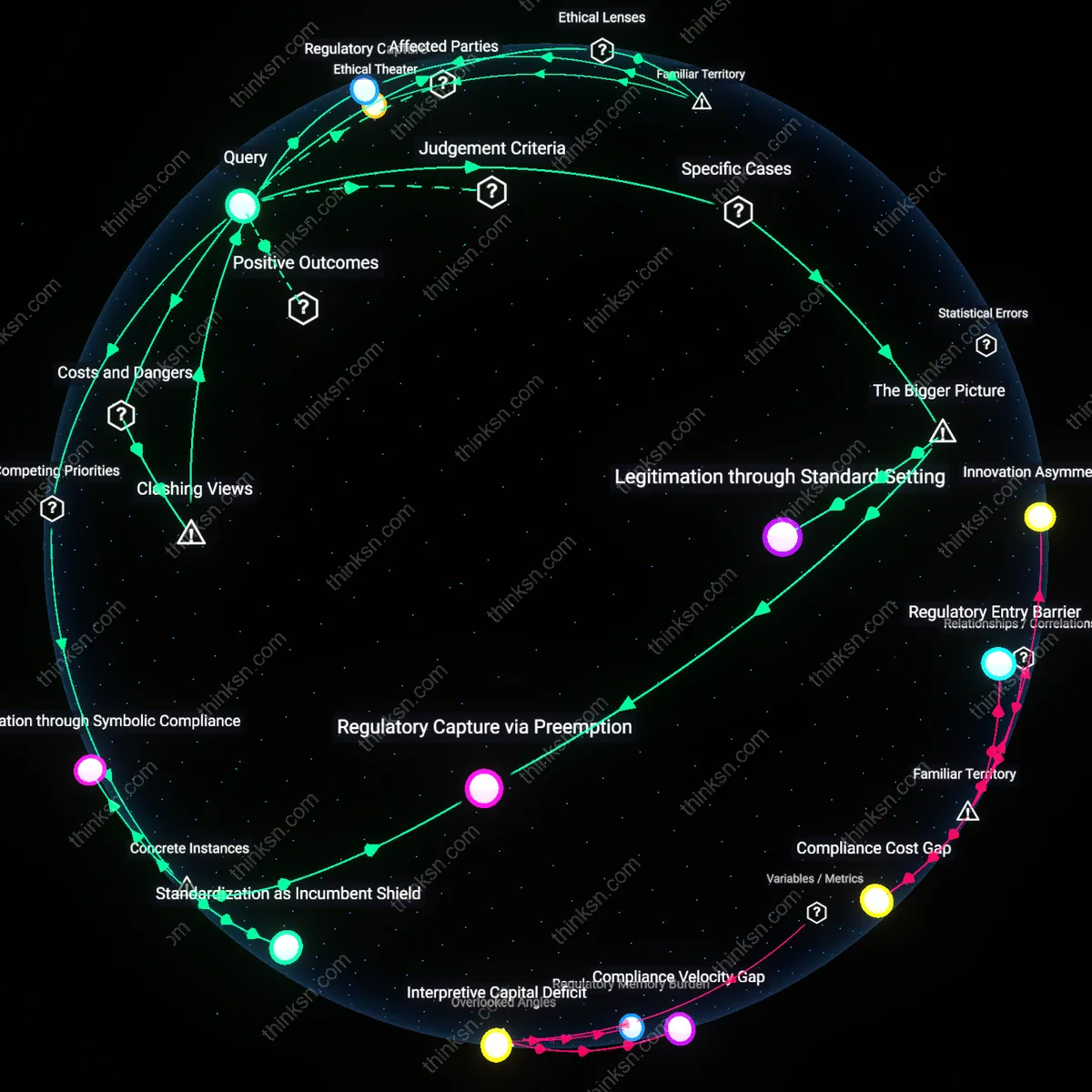

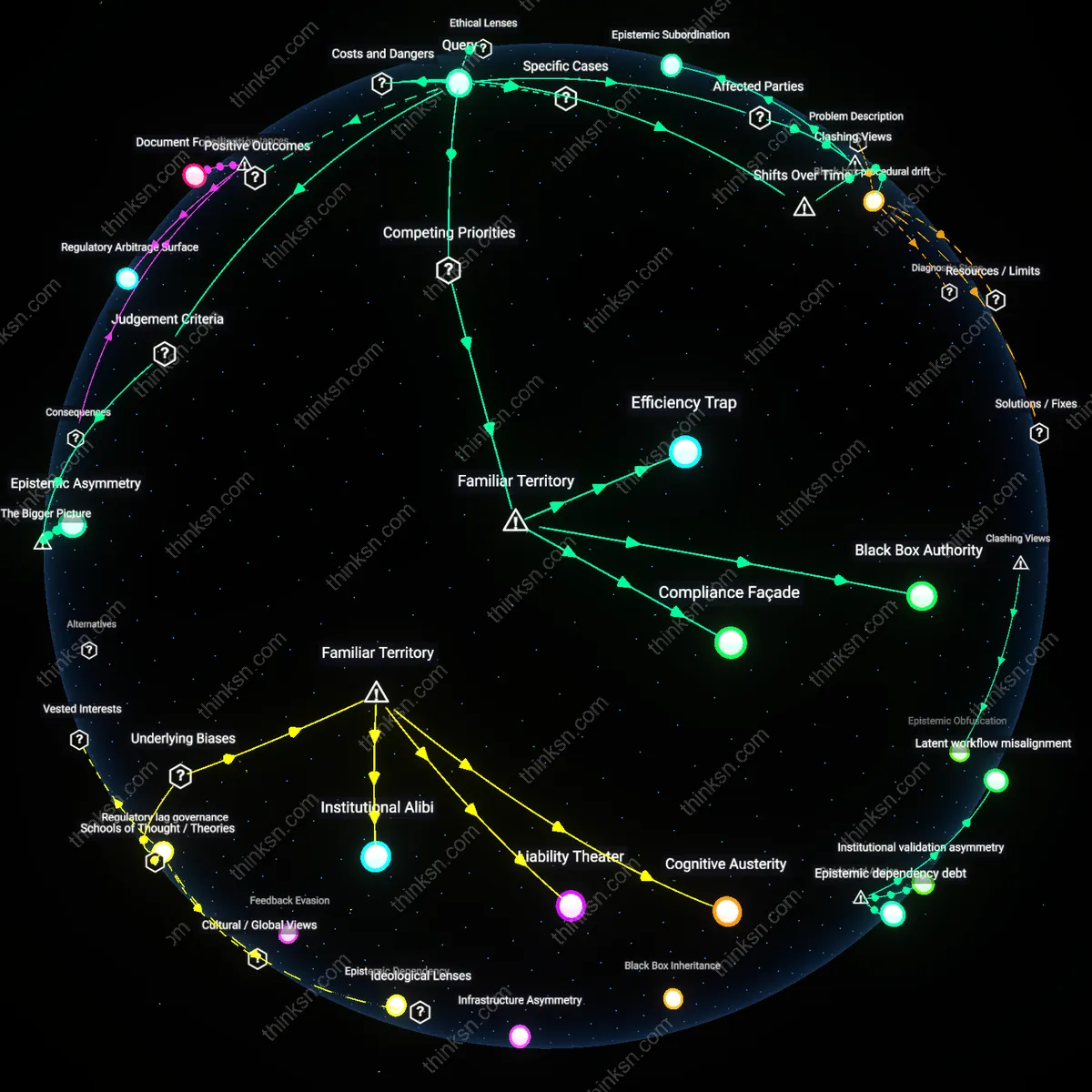

Regulatory Capture

Industry-led development of emissions-measurement standards leads to underestimation of pollution because firms with direct financial stakes design testing protocols that exclude or downweight real-world operating conditions. Regulatory agencies accept these standards because they rely on industry expertise and pre-existing technical frameworks, which creates path dependency and reduces the perceived legitimacy of external scrutiny. The non-obvious consequence of this familiar dynamic is that the appearance of technical neutrality masks embedded incentives, so captured standards feel scientifically objective rather than institutionally biased.

Measurement Blind Spots

Industry-led standards systematically omit diffuse or episodic pollution sources because measurement protocols prioritize consistent, steady-state operations that are easier to monitor and certify. Regulators accept these incomplete models because they align with legacy compliance infrastructure, such as continuous emissions monitoring systems designed for smokestacks rather than for fugitive methane leaks or supply-chain emissions. The underappreciated effect is that familiar measurement tools do more than fail to capture full emissions: they redefine what pollution 'counts' as measurable, narrowing regulatory imagination.
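To make the steady-state bias concrete, the short Python sketch below compares a facility's full emissions with an estimate from a hypothetical protocol that averages only "normal operation" hours and extrapolates that average over the whole period. The class and function names, the hourly rates, and the 2-hour maintenance event are all illustrative assumptions, not data or a method taken from any real standard.

```python
from dataclasses import dataclass

@dataclass(frozen=True)
class Hour:
    rate: float         # emission rate in this hour (hypothetical units, e.g. kg/h)
    steady_state: bool  # True if the protocol classifies the hour as normal operation

def true_total(hours):
    """Sum every hour's emissions, including episodic events."""
    return sum(h.rate for h in hours)

def steady_state_estimate(hours):
    """Hypothetical protocol: average only steady-state hours, then
    extrapolate that average across the whole reporting period."""
    steady = [h.rate for h in hours if h.steady_state]
    return sum(steady) / len(steady) * len(hours)

# Illustrative day: 22 steady hours at 10 kg/h plus a 2-hour maintenance
# venting pulse at 200 kg/h that the protocol treats as non-steady-state.
day = [Hour(10.0, True)] * 22 + [Hour(200.0, False)] * 2

actual = true_total(day)               # 22*10 + 2*200 = 620
reported = steady_state_estimate(day)  # 10 * 24 = 240
print(f"actual={actual:.0f} reported={reported:.0f} "
      f"undercount={1 - reported / actual:.0%}")
# prints: actual=620 reported=240 undercount=61%
```

The point of the toy numbers is that no reported value is falsified: the gap comes entirely from which hours the protocol decides to look at.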

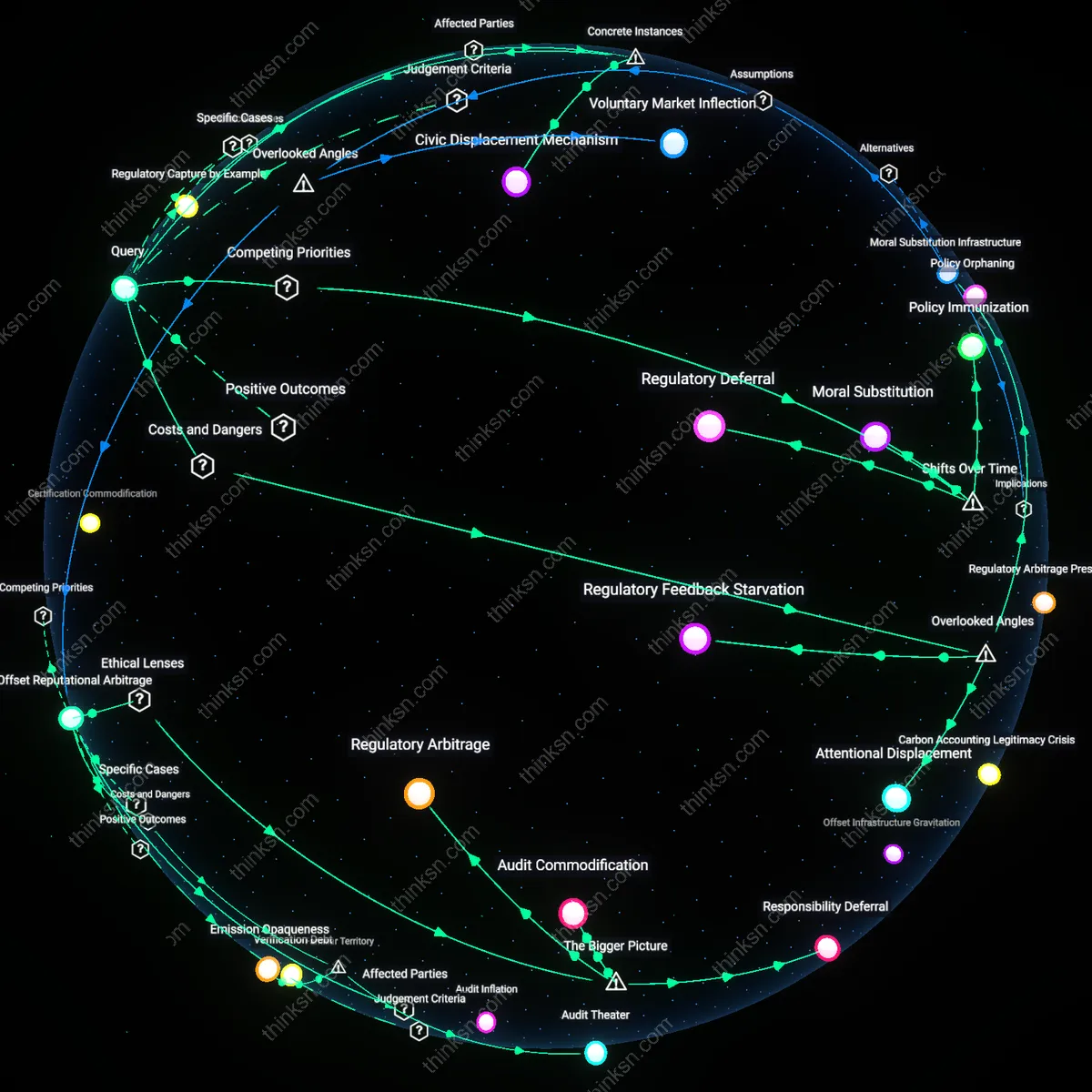

Normalization of Exemption

Industry shapes emissions standards by institutionalizing exemptions for specific processes, technologies, or byproducts under the premise of technical infeasibility or economic disruption, which directly lowers reported totals. Regulatory acceptance follows from iterative accommodation, where temporary concessions become permanent features of standards through grandfather clauses and cost-benefit analyses that value compliance ease over accuracy. What remains hidden in plain sight is that widespread, routine exceptions are not failures of enforcement but core mechanisms of the standard itself, making underestimation structural rather than incidental.
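The arithmetic of structural exemption can be sketched in a few lines of Python. The source names, tonnages, and exemption set below are hypothetical placeholders chosen for illustration. The sketch only shows that once a source is on the exempt list, its emissions drop out of the reported total regardless of how well the remaining sources are measured.

```python
# Hypothetical facility inventory: annual emissions by source (tonnes).
# All names and values are illustrative assumptions, not real data.
INVENTORY = {
    "combustion_stack": 1000.0,
    "startup_shutdown_flaring": 150.0,
    "grandfathered_boiler": 300.0,
    "storage_tank_venting": 50.0,
}

# Sources a hypothetical standard exempts as "technically infeasible"
# to measure or as covered by a grandfather clause.
EXEMPT = {"startup_shutdown_flaring", "grandfathered_boiler"}

def reported_total(inventory, exempt):
    """Total the standard requires a firm to report: exempt sources are skipped."""
    return sum(t for src, t in inventory.items() if src not in exempt)

def actual_total(inventory):
    """Total emissions from every source, exempt or not."""
    return sum(inventory.values())

reported = reported_total(INVENTORY, EXEMPT)  # 1000 + 50 = 1050
actual = actual_total(INVENTORY)              # 1500
print(f"reported {reported:.0f} t of {actual:.0f} t "
      f"({1 - reported / actual:.0%} excluded by exemptions)")
# prints: reported 1050 t of 1500 t (30% excluded by exemptions)
```

Because the exemption is applied as a rule of the calculation rather than as a lapse in enforcement, perfect compliance with this hypothetical standard still reports only 70% of the facility's emissions.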

Regulatory Capacity Gap

Industry-led development of emissions-measurement standards leads to underestimation of pollution because regulatory agencies in under-resourced jurisdictions often lack the technical staff and monitoring infrastructure to independently verify industry data, making them structurally dependent on self-reported metrics. This dependency is amplified when budget cuts or political resistance weaken agencies like the U.S. EPA or India’s Central Pollution Control Board, forcing them to accept industry methodologies as de facto standards. The non-obvious implication is that underestimation is not merely a result of corporate malfeasance but of systemic institutional frailty that repositions regulators as passive validators rather than active overseers.

Epistemic Asymmetry

Industry-led development of emissions-measurement standards leads to underestimation of pollution because private firms control proprietary measurement technologies and data models—such as satellite-derived methane algorithms or stack-monitoring software—creating an information monopoly that regulators cannot easily replicate or challenge. Agencies like the European Environment Agency must rely on industry-submitted datasets because they lack access to raw sensor networks or algorithmic code, effectively embedding corporate assumptions into compliance benchmarks. The underappreciated dynamic is that epistemic control—not just lobbying or legal influence—shapes regulatory outcomes by defining what counts as 'valid' evidence.

Normalization of Deviance

Industry-led development of emissions-measurement standards leads to underestimation of pollution because repeated acceptance of narrow or incomplete metrics—such as measuring only direct emissions while excluding supply chain leaks—gradually redefines regulatory baselines, making undercounting appear technically legitimate over time. This is institutionalized through international frameworks like the GHG Protocol, where corporate stakeholders co-author guidelines, embedding conservative assumptions that later become locked in via carbon markets or ESG reporting. The overlooked mechanism is that regulatory acceptance stems not from deception but from the slow, consensual erosion of measurement ambition, where deviation becomes standard.

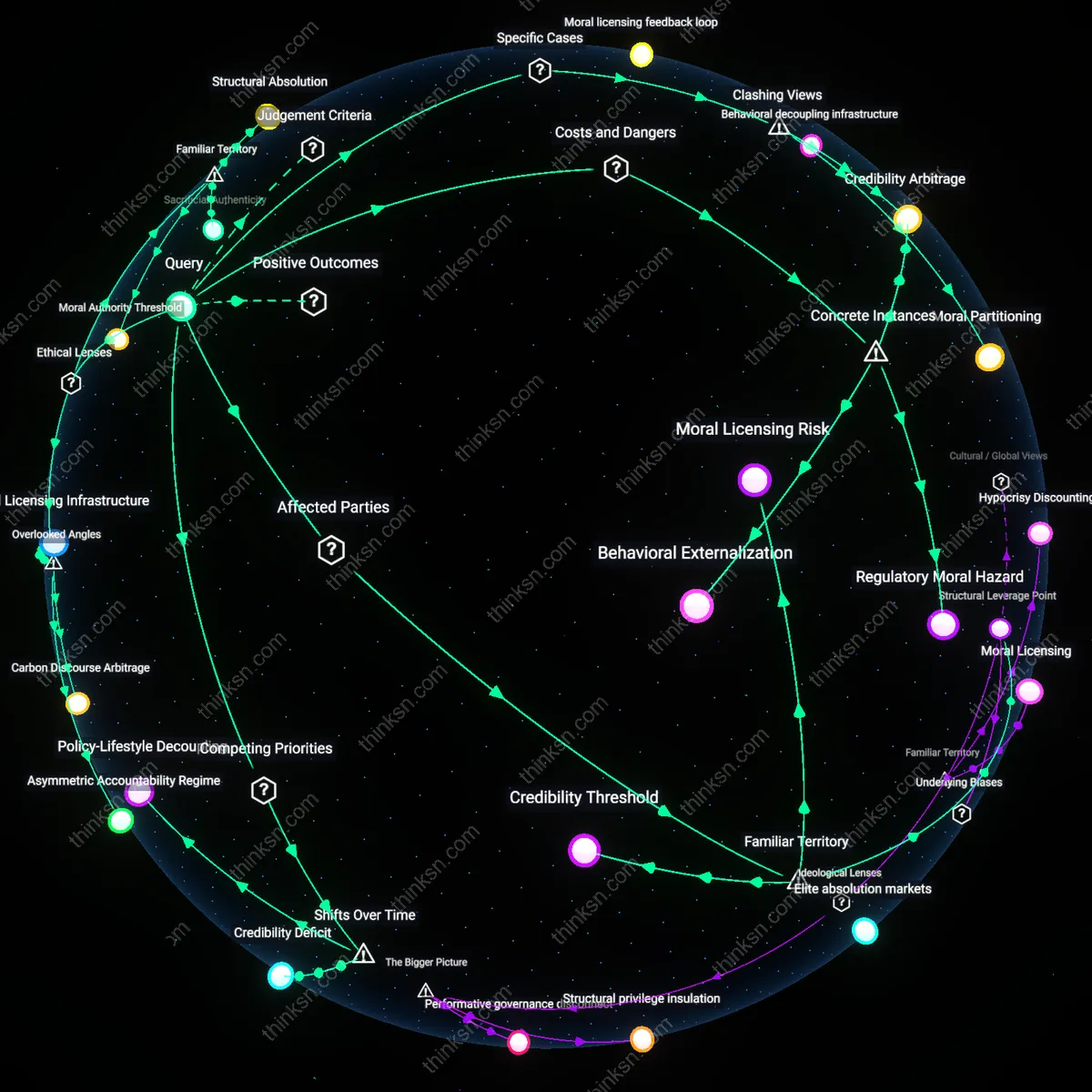

Epistemic Asymmetry Trap

Industry-led standards systematically understate emissions, not because of data falsification but because their methodological design privileges measurable, routine emissions while excluding episodic or diffuse release events, such as methane super-emitter pulses during maintenance, which therefore become invisible in compliance metrics. Regulators accept these standards not out of compromise but because they lack access to alternative modeling frameworks; as a result, the most technically available standard, however incomplete, becomes normatively binding. This undermines regulatory science not through corruption but through a monopoly on epistemic legitimacy: the appearance of objectivity in measurement protocols quietly forecloses alternative accounts of what is actually being emitted.

Compliance Proximation

Regulatory acceptance of industry-led standards persists not because agencies are captured or laws are weak, but because measurement protocols are designed to align so closely with legally defined compliance thresholds, such as the EPA's methane rule limits, that any deviation appears both technically unjustified and legally disruptive. Once a standard is embedded in permitting and reporting systems like the GHG Reporting Program, altering it triggers cascading administrative costs, making regulators functionally averse to more accurate but incompatible methods even when new evidence emerges. This reveals that accuracy is not the regulatory priority; predictability and administrative coherence are. Underestimation thus becomes a stable feature, not a bug, of an enforcement regime optimized for manageability over ecological fidelity.

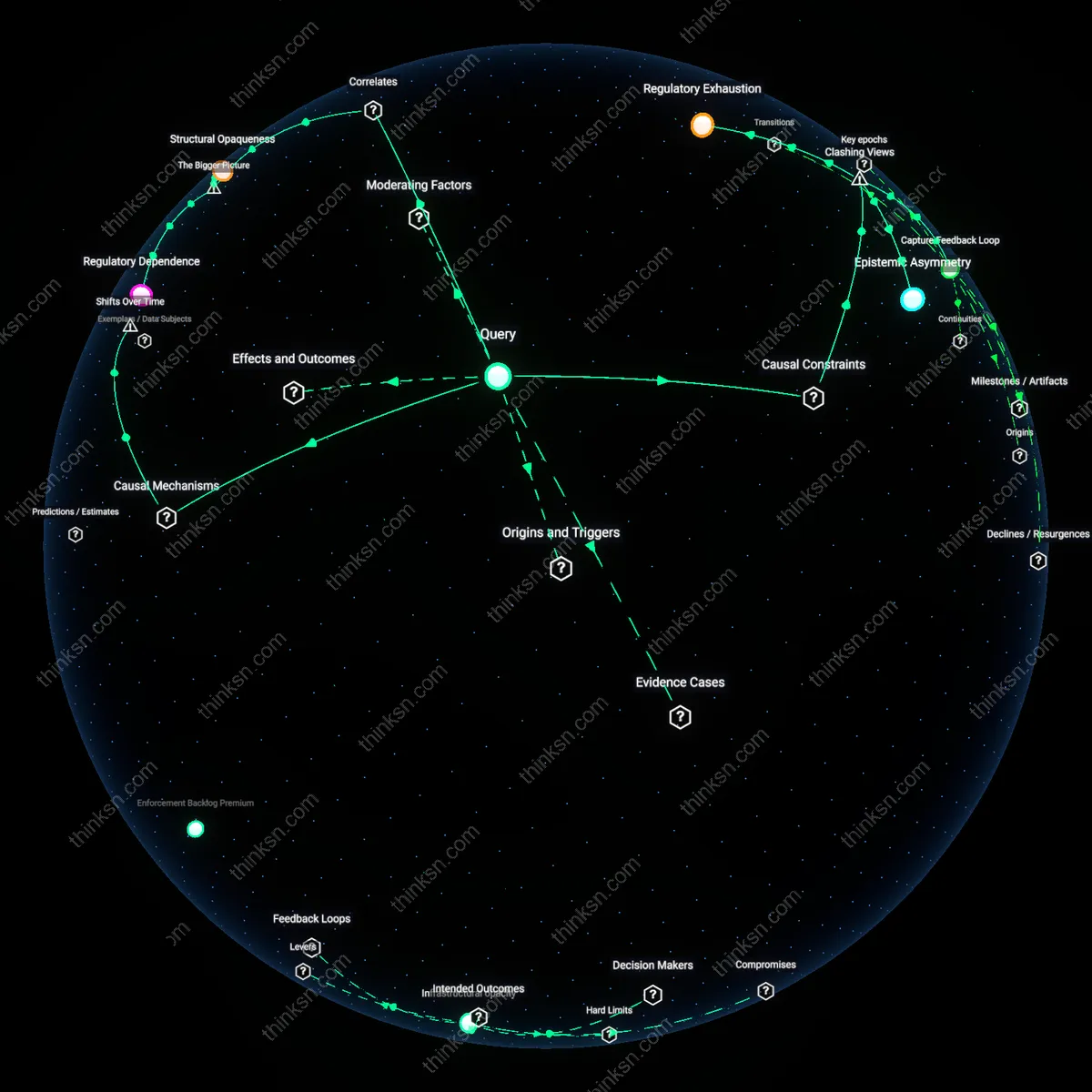

Regulatory Captivity Ratchet

Industry-led development of emissions-measurement standards led to underestimation of pollution because private actors redefined compliance thresholds in the era following the 1990 Clean Air Act Amendments. During this period, regulated firms gained disproportionate influence over EPA-guided protocols through cost-benefit analyses that systematically marginalized worst-case leakage scenarios. This shift entrenched a feedback loop in which methodological conservatism became institutionalized, masking the true extent of releases in sectors such as oil and gas. The non-obvious consequence of this trajectory is not mere bias but a ratcheting narrowing of regulatory imagination: each successive standard absorbs prior industry concessions as baseline legitimacy, steadily shrinking the range of what counts as measurable harm.

Standardization Shadow Line

Emissions underestimation intensified after 2015, when international climate commitments pressured governments to adopt scalable metrics. This pressure led ISO and ASTM to formalize industry-designed protocols that prioritized harmonization over sensitivity, particularly in the biomass and waste-to-energy sectors across Northern Europe. The institutional turn to global standardization masked the divergence between actual plume behavior and modeled outputs, privileging consistency for reporting over ecological accuracy. The overlooked outcome of this shift was a shadow boundary within data systems, where standardized comparability was valued more than scientific fidelity, producing a false coherence in transnational climate inventories.