Biometric Data Privacy: Balancing Health Apps with Insurer Power

Analysis reveals 5 key thematic connections.

Key Findings

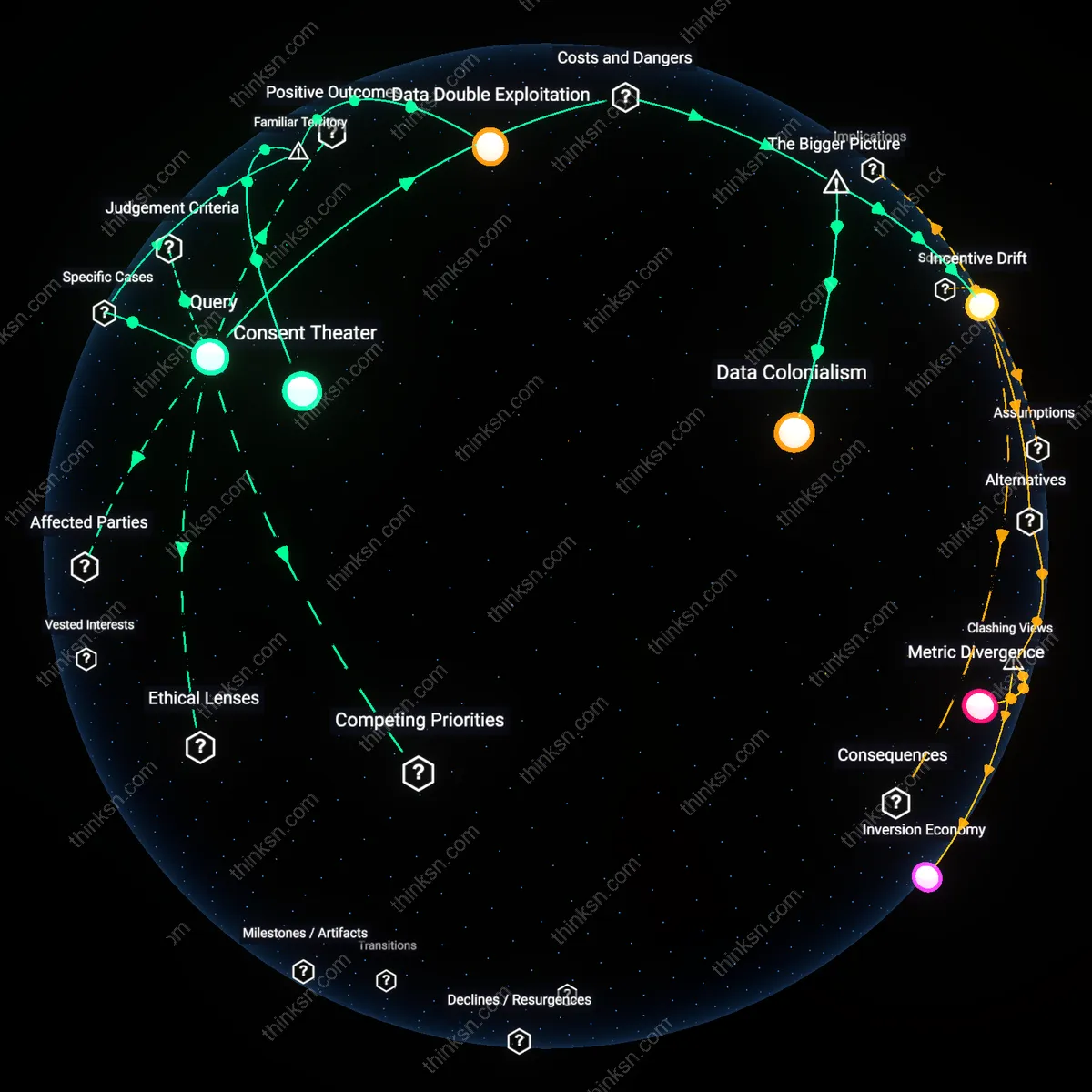

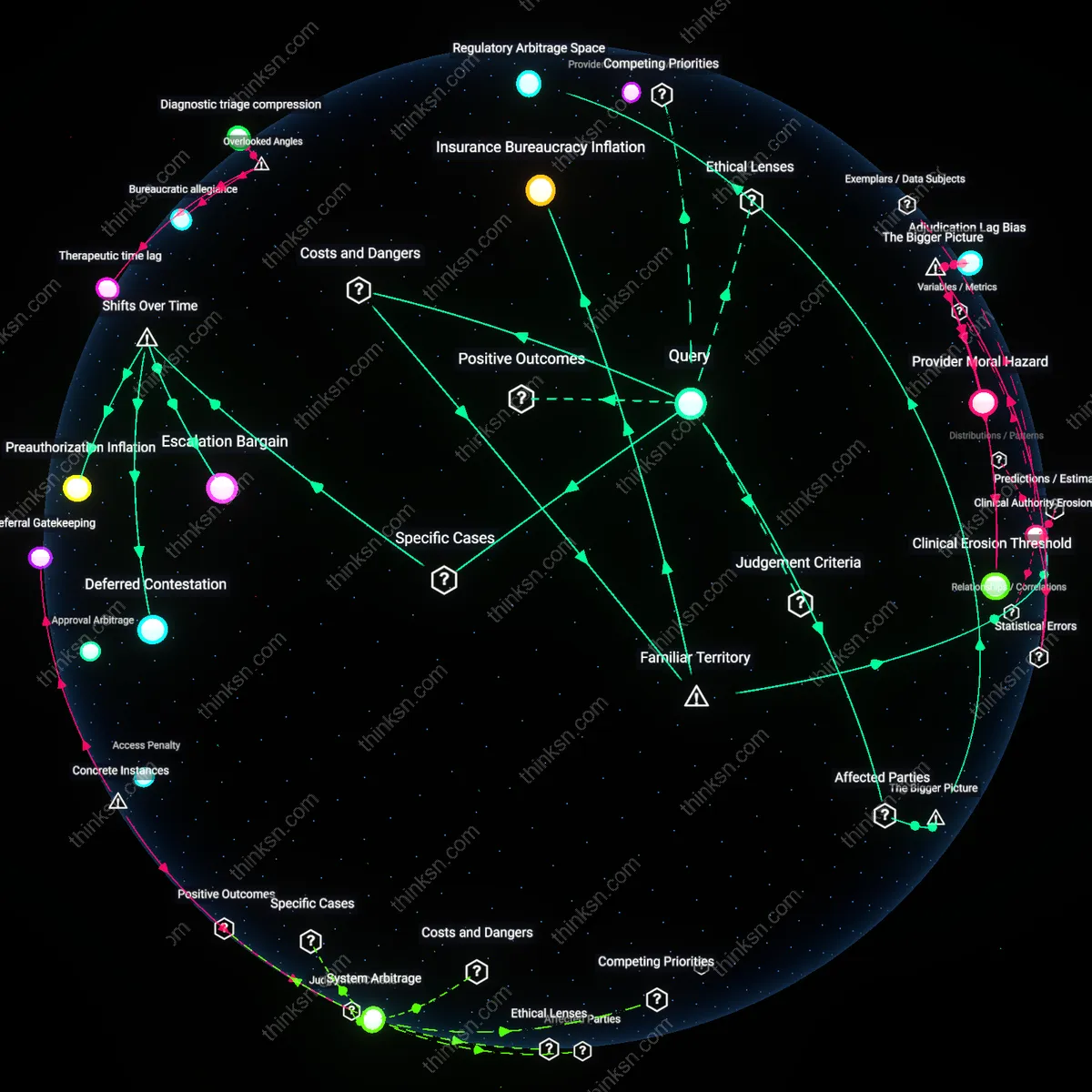

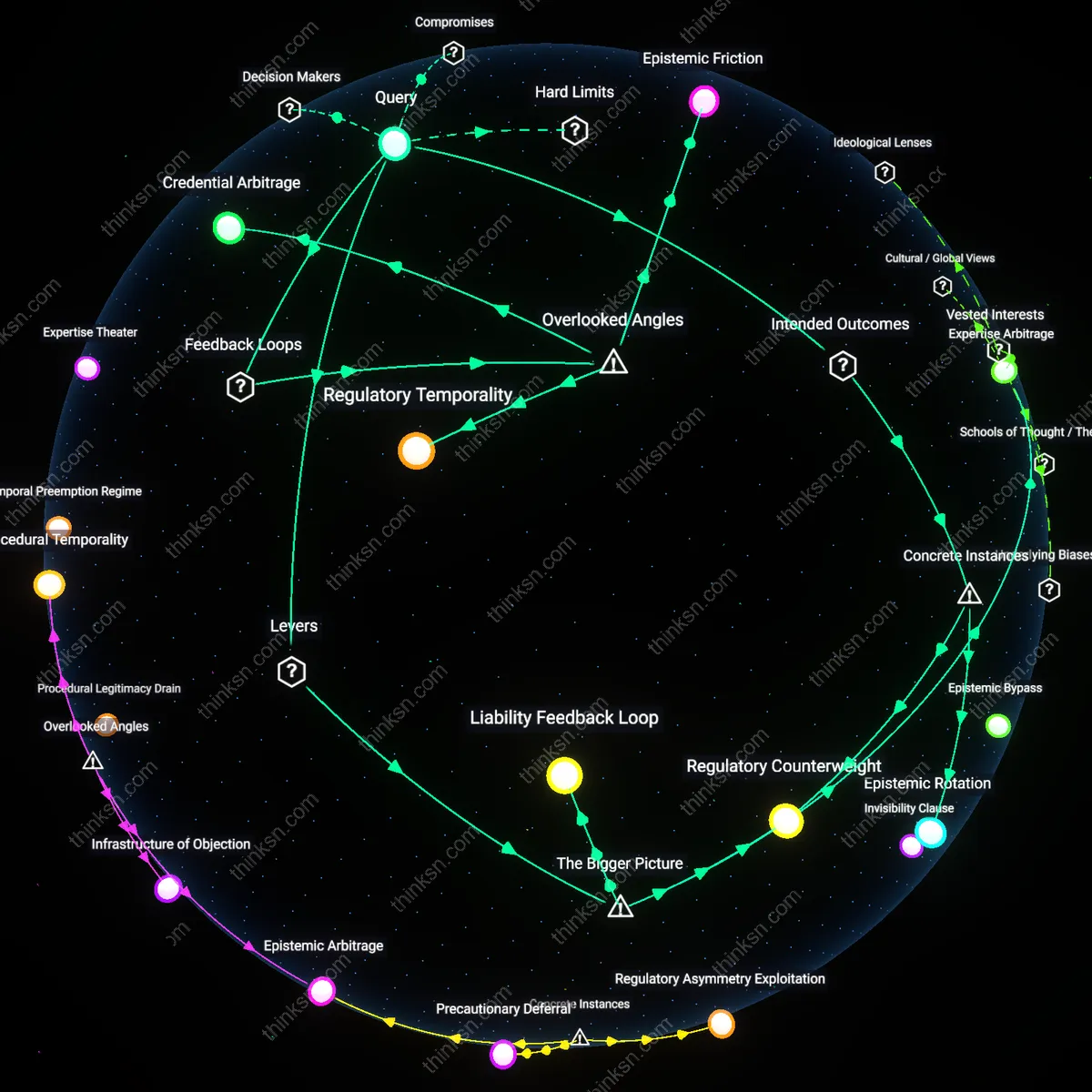

Data Colonialism

Individuals cannot meaningfully consent to insurers' use of their biometric data, because the structural power imbalance between commercial entities and users replicates colonial extraction dynamics: personal health becomes raw material expropriated without equitable return. Insurers, as powerful actors embedded in privatized healthcare markets, exploit gaps in U.S. federal law, such as the absence of comprehensive privacy legislation, to integrate third-party app data into risk assessments through intermediaries such as data brokers. This creates a feedback loop in which user data harvested under the guise of wellness is repurposed to refine actuarial models that disproportionately penalize vulnerable populations, effectively turning bodily autonomy into a financial liability. Once biometric data enters commercial ecosystems, it is nearly impossible to trace or retract, which enables downstream discrimination that is both systemic and obscured by algorithmic opacity.

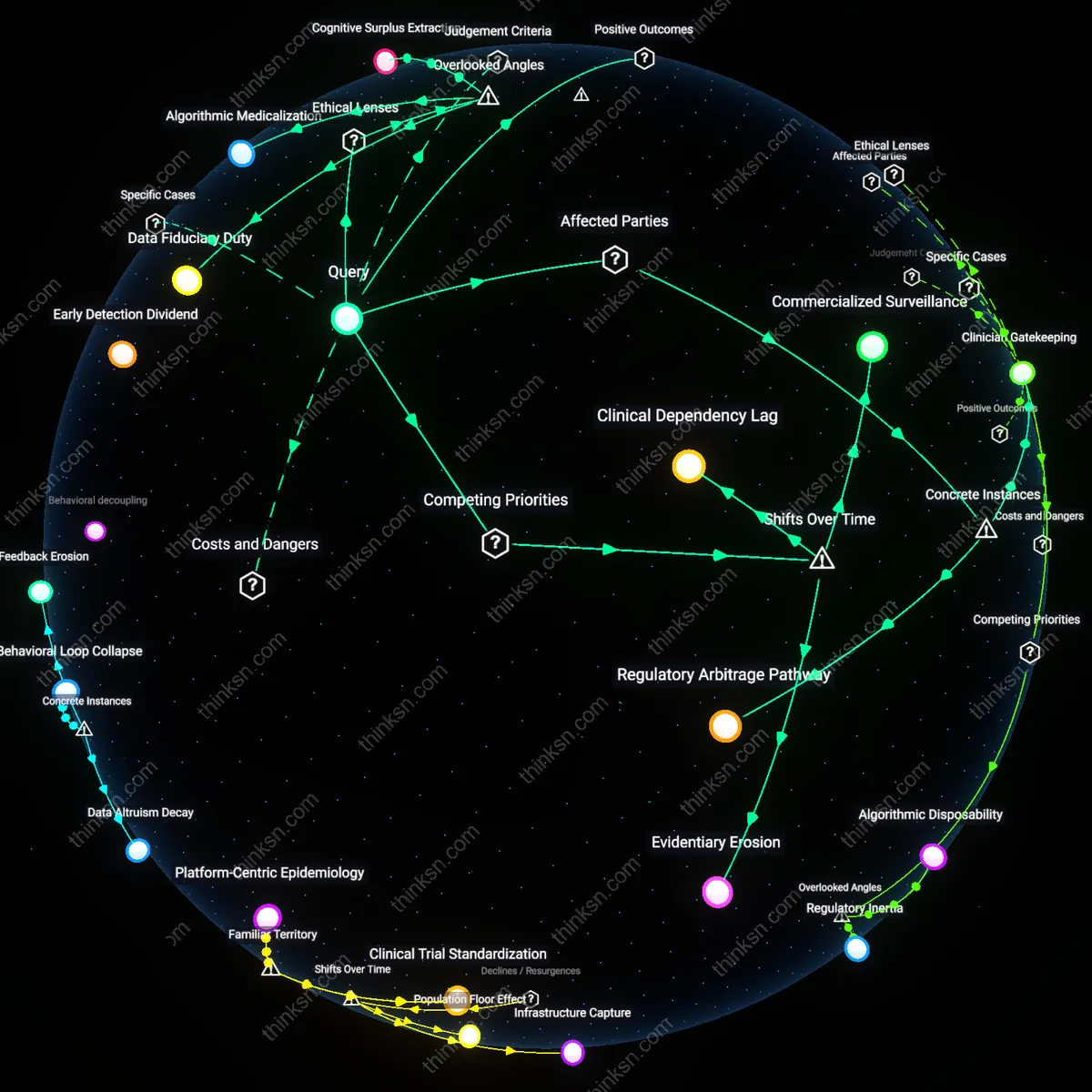

Incentive Drift

Health apps initially promoted as tools for personal empowerment become conduits for insurer risk profiling because venture capital–backed platforms are structurally incentivized to monetize data at scale, which shifts their original mission toward alignment with actuarial interests. Because these apps rely on continuous biometric monitoring to sustain their valuation, their developers design features that encourage over-sharing, such as gamified step counts or sleep tracking, while obscuring how that data could be repurposed by entities like life insurers that purchase aggregated datasets from analytics firms. The enabling condition is regulatory permissiveness in the United States: HIPAA generally does not cover consumer-facing apps that operate outside covered entities and their business associates, so data can flow unregulated into risk assessment systems that redefine 'voluntary' health participation as a prerequisite for affordability. This distortion transforms preventive health into a surveillance mechanism in which the cost of noncompliance is higher premiums, effectively reversing the social contract of insurance.

Data Double Exploitation

Individuals should restrict biometric sharing with health apps because platforms like Fitbit and Apple Health routinely license aggregated user data to third-party analytics firms; reinsurers and pharmaceutical entities then use that data to model risk pools without users' knowledge, enabling a shadow market in which personal physiology becomes actuarial capital. This occurs through data supply chains that anonymize individual identities while preserving behavioral patterns, making it possible to infer population-level health risks that insurers exploit to adjust policy structures. The non-obvious reality is that consent forms obscure this secondary data lifecycle, allowing users to believe they control usage while the real economic value accrues downstream through actuarial repurposing.
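The mechanism in the paragraph above, where identities are removed but behavioral patterns survive, can be illustrated with a minimal sketch. Everything here is hypothetical: the records, the field names (`user_id`, `zip3`, `age_band`, `avg_daily_steps`, `avg_sleep_hours`), and the two helper functions are invented for illustration and do not describe any real platform's pipeline.

```python
from collections import defaultdict
from statistics import mean

# Hypothetical app records; every value below is invented for illustration.
records = [
    {"user_id": "u1", "zip3": "941", "age_band": "30-39", "avg_daily_steps": 9500, "avg_sleep_hours": 7.5},
    {"user_id": "u2", "zip3": "941", "age_band": "30-39", "avg_daily_steps": 8500, "avg_sleep_hours": 6.5},
    {"user_id": "u3", "zip3": "606", "age_band": "50-59", "avg_daily_steps": 3000, "avg_sleep_hours": 5.5},
    {"user_id": "u4", "zip3": "606", "age_band": "50-59", "avg_daily_steps": 4000, "avg_sleep_hours": 6.5},
]


def strip_identifiers(rows):
    """Drop the direct identifier but keep cohort and behavioral fields."""
    return [{k: v for k, v in row.items() if k != "user_id"} for row in rows]


def cohort_profiles(rows):
    """Average the behavioral fields for each (zip3, age_band) cohort."""
    groups = defaultdict(list)
    for row in rows:
        groups[(row["zip3"], row["age_band"])].append(row)
    return {
        cohort: {
            "n": len(members),
            "avg_daily_steps": mean(m["avg_daily_steps"] for m in members),
            "avg_sleep_hours": mean(m["avg_sleep_hours"] for m in members),
        }
        for cohort, members in groups.items()
    }


anonymized = strip_identifiers(records)
profiles = cohort_profiles(anonymized)

for cohort, profile in sorted(profiles.items()):
    print(cohort, profile)
```

No `user_id` survives in `anonymized`, yet the cohort averages still separate a more active group from a less active one. That is the sense in which anonymization can leave population-level risk signals intact.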

Consent Theater

Individuals must treat health app permissions as performative safeguards because platforms such as MyFitnessPal and Whoop present lengthy, legally dense terms of service that simulate transparency while structurally disabling informed choice; these terms function not as protective mechanisms but as liability shields. This dynamic operates through standardized digital consent flows that exploit regulatory gaps, such as HIPAA's inapplicability to non-covered entities, allowing firms to claim compliance while diverting data into commercial ecosystems. What is overlooked is that the ritual of clicking 'I agree' gives users the sensation of agency, even as the system ensures they cannot realistically parse or negotiate how their biometrics will be monetized.

Wellness Surveillance

People should resist continuous biometric monitoring because corporate wellness programs, such as those administered by Virgin Pulse in partnership with major U.S. employers, incentivize app integration while creating de facto health surveillance infrastructures that feed actuarial predictions, turning voluntary participation into implicit data extraction. This system leverages financial rewards and workplace culture to normalize constant tracking, blurring the line between personal health improvement and institutional risk profiling. The underappreciated consequence is that employees who opt in to obtain lower insurance premiums may unknowingly generate evidence that could later be used to justify coverage exclusions or tiered benefit structures.