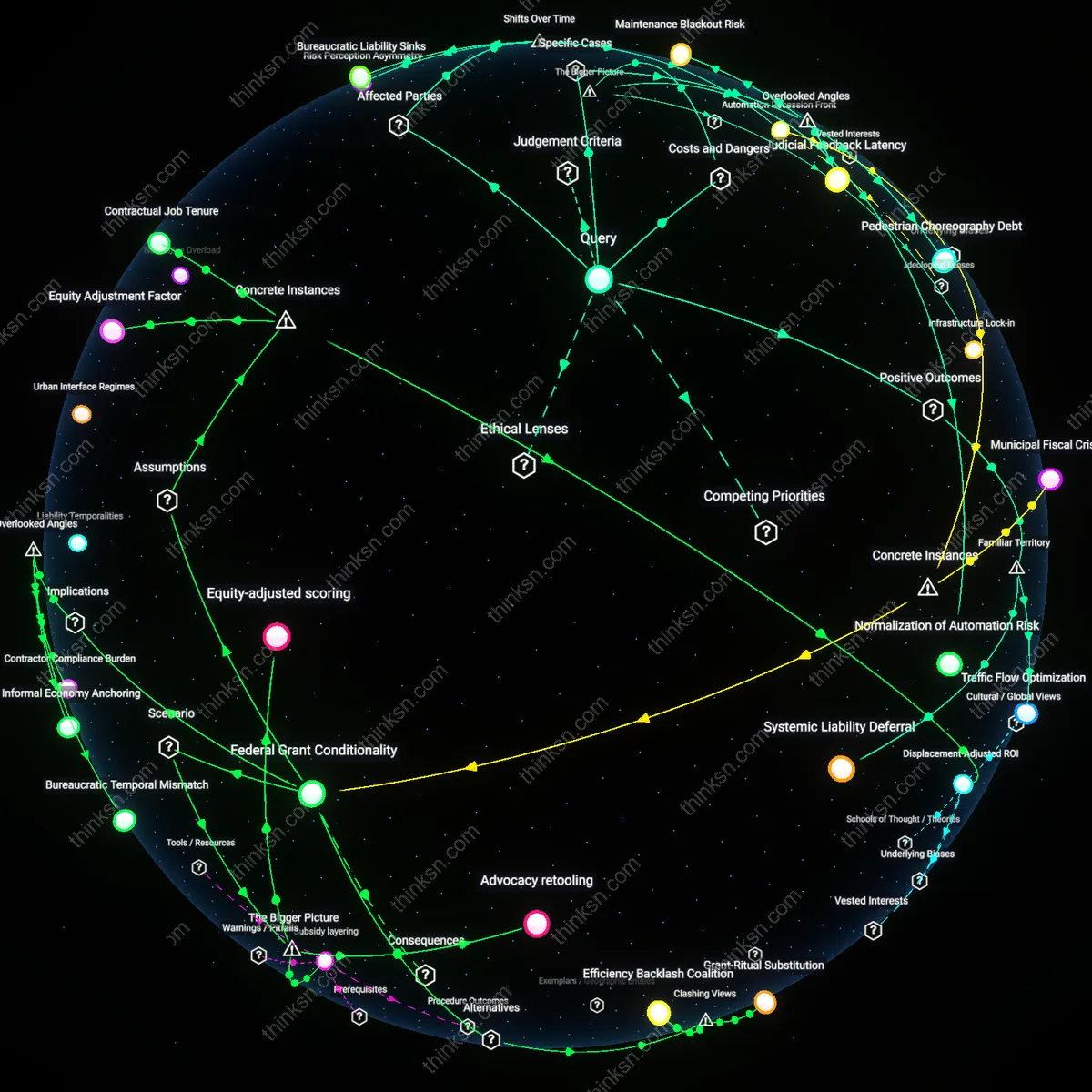

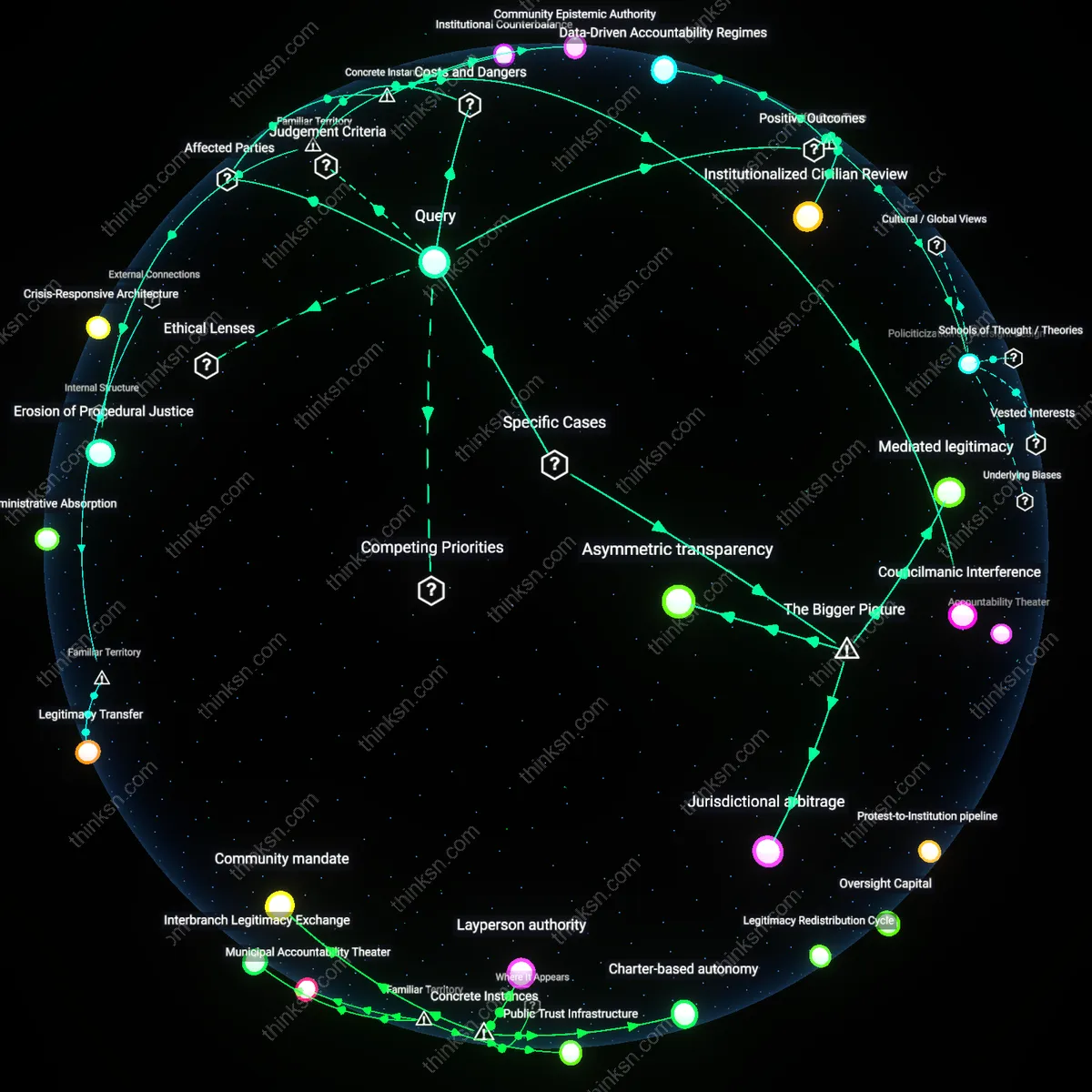

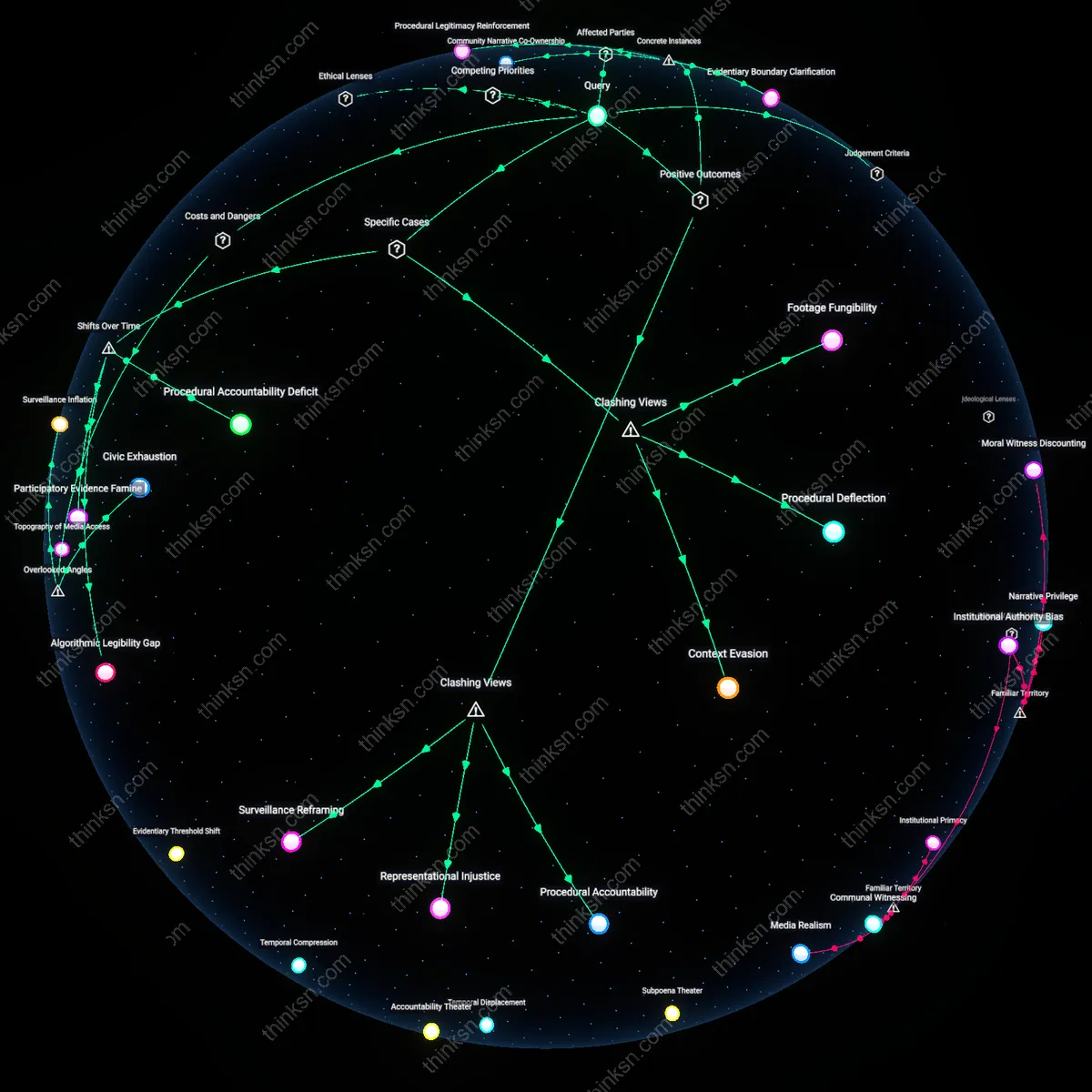

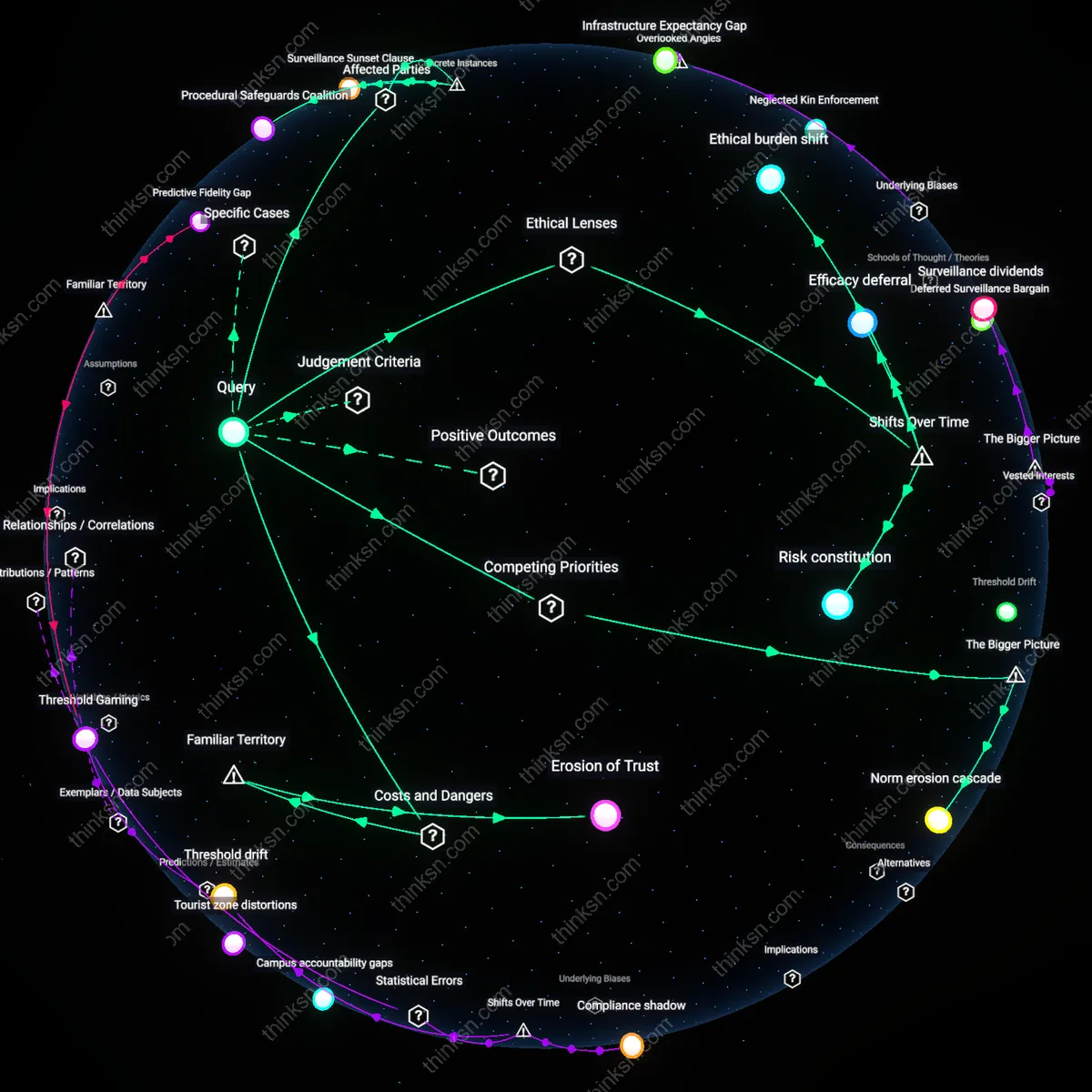

Are AI Traffic Controllers Worth the Accountability Risk?

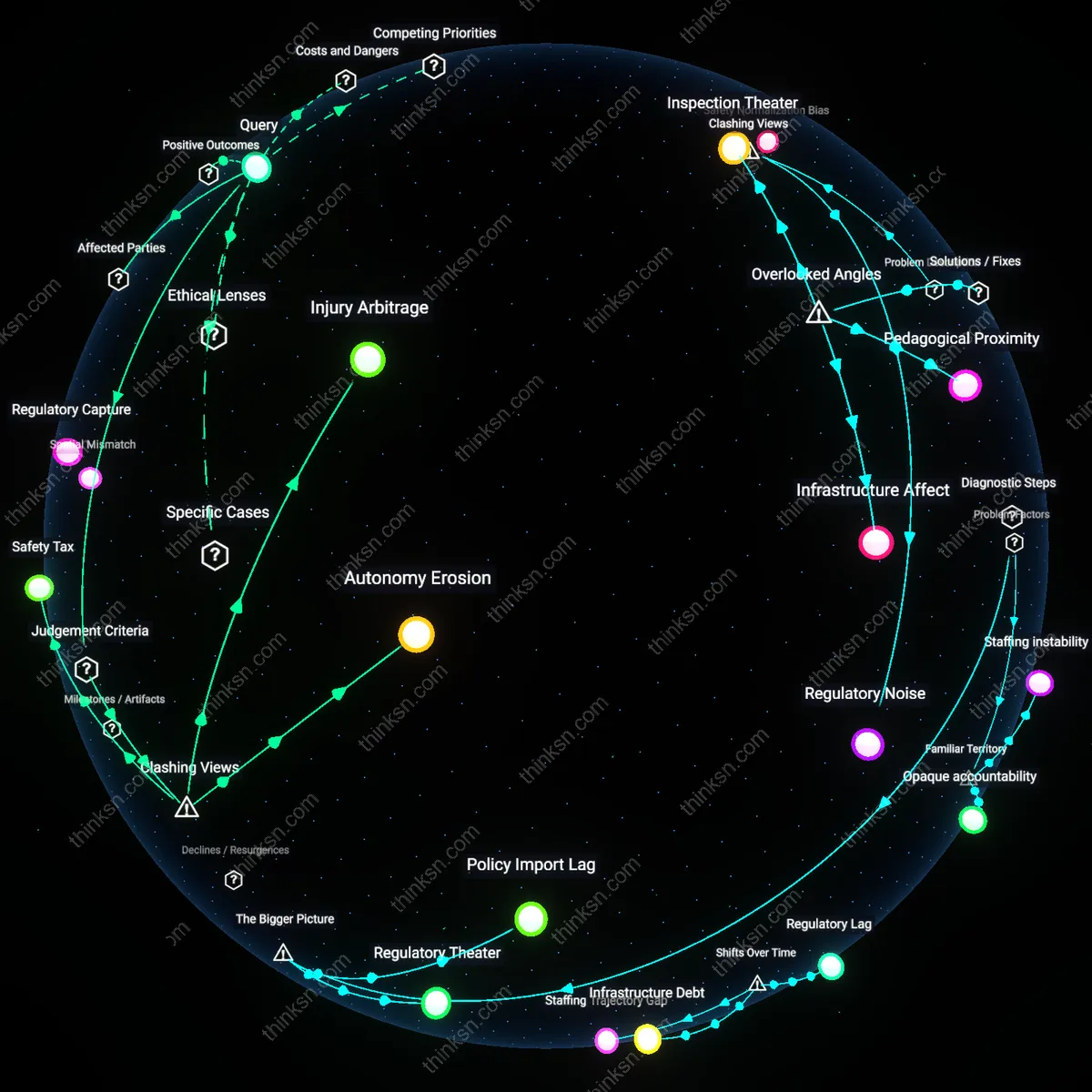

Analysis reveals 12 key thematic connections.

Key Findings

Bureaucratic Liability Sinks

A city can justify replacing human traffic controllers with AI systems because the shift from municipal clerical oversight in the 1970s to algorithmic traffic management in the 2010s has transferred accountability from individual officers to opaque software contracts. Affected parties such as pedestrians and drivers are left without recourse when errors occur. The transition shows how liability evaporates once responsibility is diffused across vendors, city agencies, and machine-learning models that no single entity fully controls, eroding accountability mechanisms that were previously centralized and adjudicable.

Automation Recession Front

A city can justify replacing human traffic controllers with AI systems because the post-2008 fiscal austerity era pressured municipal budgets to adopt cost-saving automation, shifting traffic oversight from unionized public workers to outsourced AI platforms. This transition has disproportionately affected working-class communities that once relied on these city jobs for stable employment. It shows how efficiency justifications mask deeper economic displacement, recasting urban infrastructure as a site of labor erosion rather than public service innovation.

Risk Perception Asymmetry

A city can justify replacing human traffic controllers with AI systems because the normalization of algorithmic decision-making since the 2010s has redefined public tolerance for error: human mistakes were once viewed as forgivable, whereas AI failures are now treated as inevitable byproducts of progress. This change lets institutions sidestep accountability by framing accidents as statistical anomalies rather than moral failures, which alters how victims, insurers, and regulators interpret harm. The result is an uneven burden in which marginalized road users absorb the consequences of 'learning phases' once borne by visible public employees.

Traffic Flow Optimization

A city can justify replacing human traffic controllers with AI systems because AI enables real-time adaptation of signal timing to vehicle density, reducing congestion during peak hours in dense urban corridors like Manhattan or downtown Seoul. This mechanism operates through sensor-fused neural networks that process camera, radar, and loop-detector data to adjust green light duration within seconds, a responsiveness unattainable by human-monitored systems. What’s underappreciated is that the primary benefit isn’t speed alone but the compounding time savings across thousands of intersections, which collectively enhance urban mobility and reduce fuel waste from idling—outcomes already associated with smart cities in public discourse.
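The signal-timing adjustment described above can be sketched as follows. This is a minimal illustration under stated assumptions, not the method any deployed system uses: it swaps the neural network for a plain average of three sensor counts and splits a fixed cycle in proportion to fused demand. The names `Approach`, `fused_demand`, and `allocate_green`, and the constants `CYCLE_S` and `MIN_GREEN_S`, are hypothetical.

```python
from dataclasses import dataclass

MIN_GREEN_S = 7.0   # hypothetical minimum green per approach, in seconds
CYCLE_S = 90.0      # hypothetical total green time to distribute per cycle


@dataclass
class Approach:
    name: str
    camera_count: float  # vehicles estimated from camera
    radar_count: float   # vehicles estimated from radar
    loop_count: float    # vehicles estimated from loop detectors


def fused_demand(a: Approach) -> float:
    """Naive fusion (an assumption): average the three sensor estimates."""
    return (a.camera_count + a.radar_count + a.loop_count) / 3.0


def allocate_green(approaches: list[Approach]) -> dict[str, float]:
    """Give each approach MIN_GREEN_S, then split the rest by fused demand."""
    if not approaches:
        return {}
    spare = CYCLE_S - MIN_GREEN_S * len(approaches)
    if spare < 0:
        raise ValueError("cycle too short for the minimum greens")
    demands = {a.name: fused_demand(a) for a in approaches}
    total = sum(demands.values())
    if total == 0:
        # No detected demand: fall back to an even split.
        share = spare / len(approaches)
        return {name: MIN_GREEN_S + share for name in demands}
    return {name: MIN_GREEN_S + spare * d / total for name, d in demands.items()}


plan = allocate_green([
    Approach("north", 30, 30, 30),
    Approach("east", 10, 10, 10),
])
print(plan)
```

With these illustrative inputs, the busier north approach receives 64 seconds of green and the east approach 26 seconds, so the two always sum to the 90-second cycle.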

Predictive Incident Prevention

A city can justify replacing human traffic controllers with AI systems because machine learning models trained on historical crash data can anticipate high-risk conditions—like slippery roads at intersections during rush hour—and proactively modify traffic light sequences to reduce conflict points. This operates through predictive analytics embedded in systems like those tested in Singapore’s Intelligent Transport System, where AI adjusts right-turn phasing before accidents occur, not after. Though the public typically frames AI in traffic as reactive or surveillance-oriented, the non-obvious advantage is its preventive capacity—shaping behavior before harm emerges, mirroring familiar safety logic in air traffic control automation.

Systemic Liability Deferral

A city can justify replacing human traffic controllers with AI systems because AI shifts accountability from individual error to institutional oversight, allowing municipal agencies to standardize decisions under auditable algorithms rather than variable human judgment during accidents. This functions through centralized command platforms like those in Los Angeles’ ATSAC system, where every signal change is logged and governed by policy-rule matrices, making liability more diffuse but also more manageable in legal and PR contexts. While the public assumes accountability gaps undermine legitimacy, the familiar framing of ‘the system did it’ actually aligns with existing public tolerance for bureaucratic decisions in other automated domains, such as credit scoring or school placements.
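To make the idea of logged, rule-governed signal changes concrete, here is a minimal sketch of a tamper-evident audit log. It does not describe how ATSAC or any real platform stores its records; the hash-chaining approach, the `SignalChange` fields, and the `rule_id` values are all assumptions chosen for illustration.

```python
import hashlib
import json
from dataclasses import dataclass, asdict


@dataclass(frozen=True)
class SignalChange:
    intersection_id: str
    timestamp: str   # ISO 8601 string
    new_phase: str
    rule_id: str     # hypothetical id of the policy rule that triggered the change


class AuditLog:
    """Append-only log in which each entry's hash covers the previous hash."""

    def __init__(self):
        self._entries = []  # list of (record_dict, digest) pairs

    @staticmethod
    def _digest(prev, record):
        payload = prev + json.dumps(record, sort_keys=True)
        return hashlib.sha256(payload.encode("utf-8")).hexdigest()

    def append(self, change):
        prev = self._entries[-1][1] if self._entries else ""
        record = asdict(change)
        digest = self._digest(prev, record)
        self._entries.append((record, digest))
        return digest

    def verify(self):
        """Return True only if no stored record was altered after logging."""
        prev = ""
        for record, digest in self._entries:
            if self._digest(prev, record) != digest:
                return False
            prev = digest
        return True

    def changes_by_rule(self, rule_id):
        """List every logged change attributed to one policy rule."""
        return [r for r, _ in self._entries if r["rule_id"] == rule_id]


log = AuditLog()
log.append(SignalChange("X-101", "2024-01-01T08:00:00Z", "NS_GREEN", "peak-flow"))
log.append(SignalChange("X-101", "2024-01-01T08:01:30Z", "EW_GREEN", "min-green"))
print(log.verify())
```

A log of this kind records which rule fired, but it says nothing about who is responsible for the rule itself, which is the gap the surrounding findings turn on.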

Liability Vacuum

A city cannot justify replacing human traffic controllers with AI systems because when accidents occur, responsibility dissipates across developers, municipal agencies, and operational protocols, creating a legal and ethical void where no single actor is clearly accountable. This dispersion is exacerbated by proprietary algorithms and fragmented oversight regimes, which prevent transparent inquiry or corrective action after harm, thereby weakening public trust and incentivizing risk externalization. The underappreciated danger is not merely inefficiency in redress, but the systemic erosion of responsibility norms in urban governance under algorithmic automation.

Infrastructure Lock-in

Deploying AI traffic control entrenches cities into long-term technological dependencies on private vendors, whose update cycles, data requirements, and performance metrics reshape municipal decision-making around corporate logic rather than civic need. This shift subtly reorients urban planning toward data-hungry, surveillance-compatible systems that prioritize predictive efficiency over equity or community input, with path dependency ensuring these priorities become structural. The overlooked consequence is that the initial cost-saving rationale morphs into a systemic constraint, limiting future policy flexibility and embedding commercial interests in public safety infrastructure.

Normalization of Automation Risk

Replacing human controllers with AI systems gradually desensitizes both policymakers and the public to the risks of autonomous decision-making in life-critical domains, treating each efficiency gain as validation despite unresolved failure modes. This normalization is driven by performance metrics that emphasize flow optimization and cost reduction while discounting less quantifiable harms such as algorithmic bias or rare but catastrophic errors. The deeper systemic effect is the incremental displacement of human judgment from high-stakes urban systems, where the lack of accountability stops being noticed not because it has been resolved, but because people have grown used to it.

Maintenance Blackout Risk

A city cannot justify replacing human traffic controllers with AI systems because the hidden dependency on uninterrupted municipal maintenance schedules creates systemic vulnerability when AI systems fail unpredictably. In Delhi, where embedded AI traffic lights depend on coordinated sensor recalibration and utility power, even brief lapses in municipal upkeep — such as delayed cleaning of dust-covered optical sensors or erratic power restoration — lead to cascading signal failures that human controllers would adaptively override. This exposes how efficiency gains are predicated on flawless maintenance — a non-obvious, fragile layer beneath technical performance — which cities underfund and rarely model in AI transition plans.
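The maintenance dependency described above can be made explicit in code. The sketch below is a hypothetical health check, not a description of any system deployed in Delhi or elsewhere: the thresholds, the `SensorHealth` fields, and the three mode names are assumptions. It shows how adaptive control quietly depends on recent calibration, sensor confidence, and mains power.

```python
from dataclasses import dataclass

MAX_CALIBRATION_AGE_H = 72.0   # hypothetical: hours before calibration is stale
MIN_SENSOR_CONFIDENCE = 0.6    # hypothetical: below this, detections are not trusted


@dataclass
class SensorHealth:
    hours_since_calibration: float
    confidence: float      # 0.0 to 1.0, e.g. lowered by dust on an optical sensor
    mains_power_ok: bool


def choose_mode(h: SensorHealth) -> str:
    """Pick a control mode from the sensor's maintenance state."""
    if not h.mains_power_ok:
        return "flashing"      # fail-safe mode while power is unstable
    stale = h.hours_since_calibration > MAX_CALIBRATION_AGE_H
    unreliable = h.confidence < MIN_SENSOR_CONFIDENCE
    if stale or unreliable:
        return "fixed_time"    # pre-set timing plan that ignores sensor input
    return "adaptive"


print(choose_mode(SensorHealth(12.0, 0.9, True)))   # well-maintained sensor
print(choose_mode(SensorHealth(12.0, 0.4, True)))   # dusty optical sensor
```

Every branch other than `"adaptive"` gives up the efficiency gain, which is why the finding treats maintenance funding as part of the system's real performance.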

Judicial Feedback Latency

A city cannot justify replacing human traffic controllers with AI systems because the absence of real-time legal precedent formation creates accountability vacuums that paralyze post-accident resolution. In Jacksonville, incidents involving AI-managed intersections have stalled in municipal courts for months due to the lack of established protocols to assign liability between the city, the software vendor, and third-party data providers, delaying compensation and enabling repeat failures. This latency distorts the feedback loop between accident and reform, making the legal system a passive observer rather than a corrective mechanism — a hidden institutional lag that undermines both justice and iterative safety improvement.

Pedestrian Choreography Debt

A city cannot justify replacing human traffic controllers with AI systems because long-standing informal pedestrian movement patterns, such as the staggered crossing rhythms at Accra’s Kaneshie Market intersection, are invisible to AI sensors trained on vehicular throughput metrics and are therefore erased from decision logic. These organic pedestrian choreographies, developed over decades to manage crowd density and informal vending, are misread as anomalies. Collision risk rises not because the AI is inefficient but because it actively disrupts embodied urban knowledge. The resulting choreography debt, the loss of accumulated adaptive behavior embedded in human movement, reveals that efficiency gains are asymmetric: they privilege machines while destabilizing social coordination that was never formally documented.