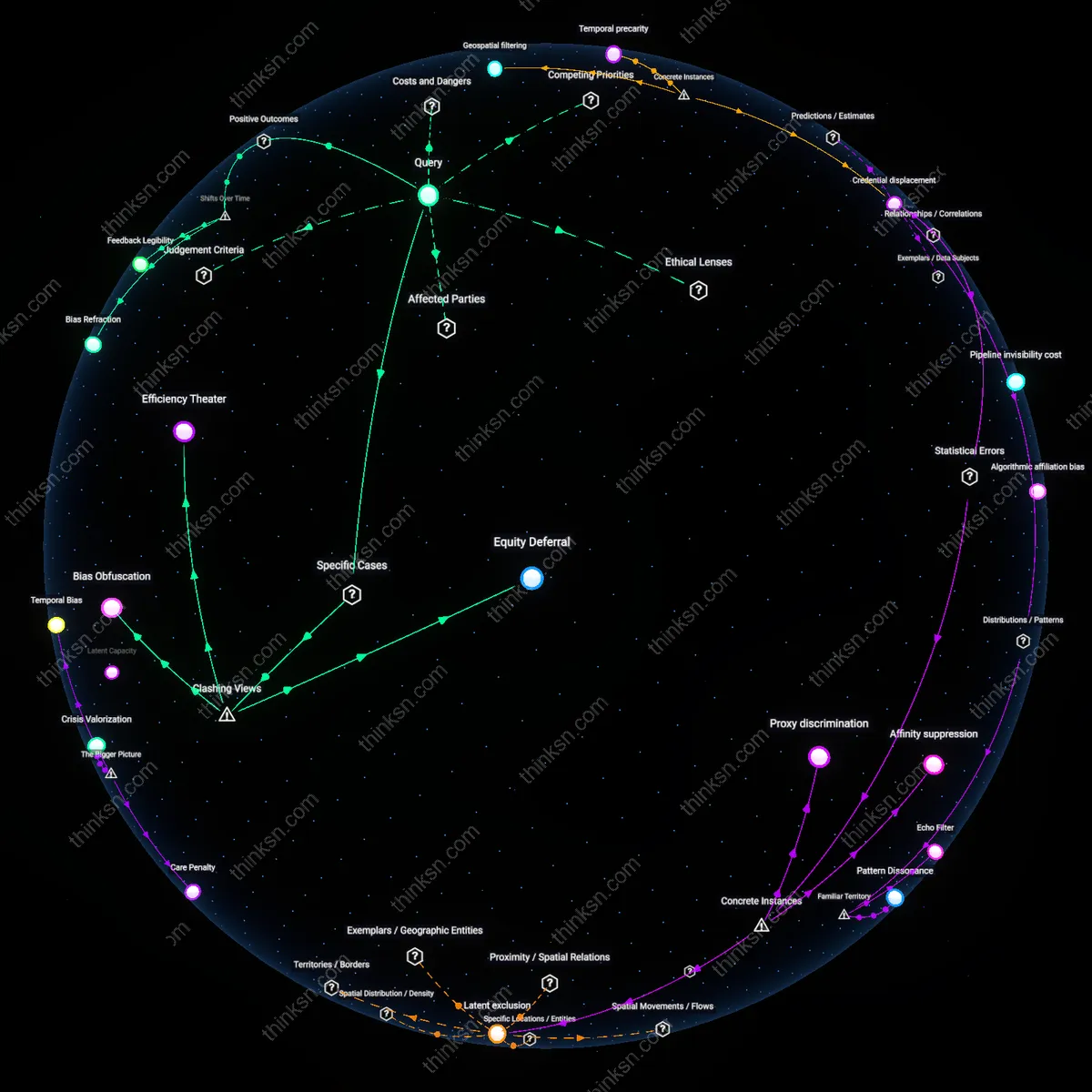

Credential displacement

Between 2014 and 2018, an AI hiring tool at Amazon systematically downgraded resumes containing the word 'women’s' (as in 'women’s coding club'). The algorithm had been trained on resumes from predominantly male tech applicants, so it interpreted such signals as negative predictors. The result was the systematic exclusion of candidates affiliated with gendered developmental experiences that diverged from the dominant male pattern, which reveals how productivity proxies can erase qualification pathways that are more common among underrepresented groups.

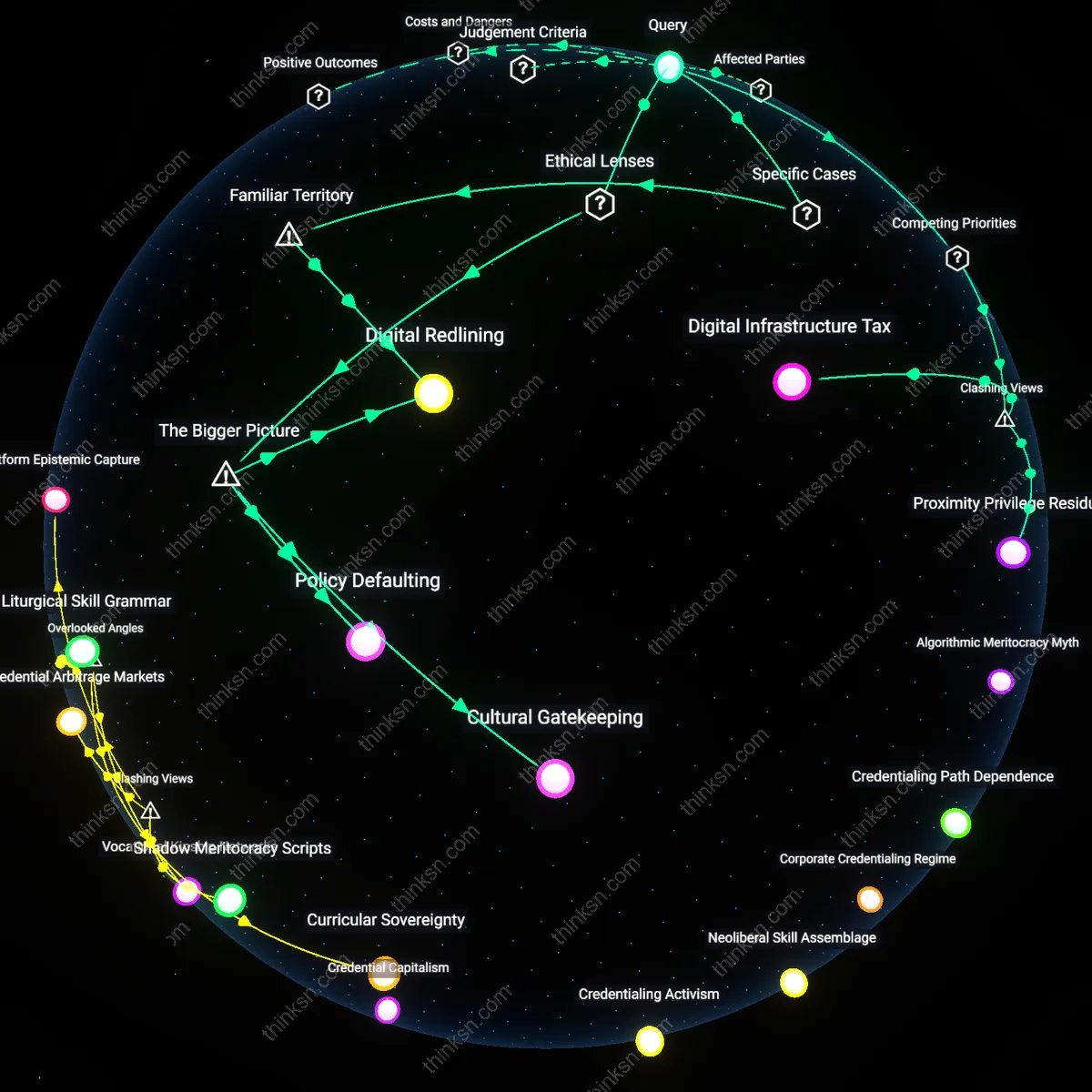

Geospatial filtering

In 2020, a major U.S. logistics company deployed an AI tool that used delivery-speed predictions to staff last-mile routes. Because zip-code-level mobility data served as a proxy for reliability, the tool disproportionately excluded applicants from predominantly Black neighborhoods in Chicago. The case exposes how infrastructure inequities become encoded as productivity risks, so that geography, not merit, becomes a silent disqualifier shaped by historical disinvestment.

Temporal precarity

A 2022 audit of an automated hiring platform used by a national retail chain found that applicants with inconsistent work-hour patterns, such as those with caregiving responsibilities (often women of color), were flagged as low-predictability hires because of fragmented time-stamp data from past shifts. The finding demonstrates how an algorithmic preference for temporal regularity penalizes labor patterns tied to social reproductive roles, converting structural care burdens into measurable disqualification.

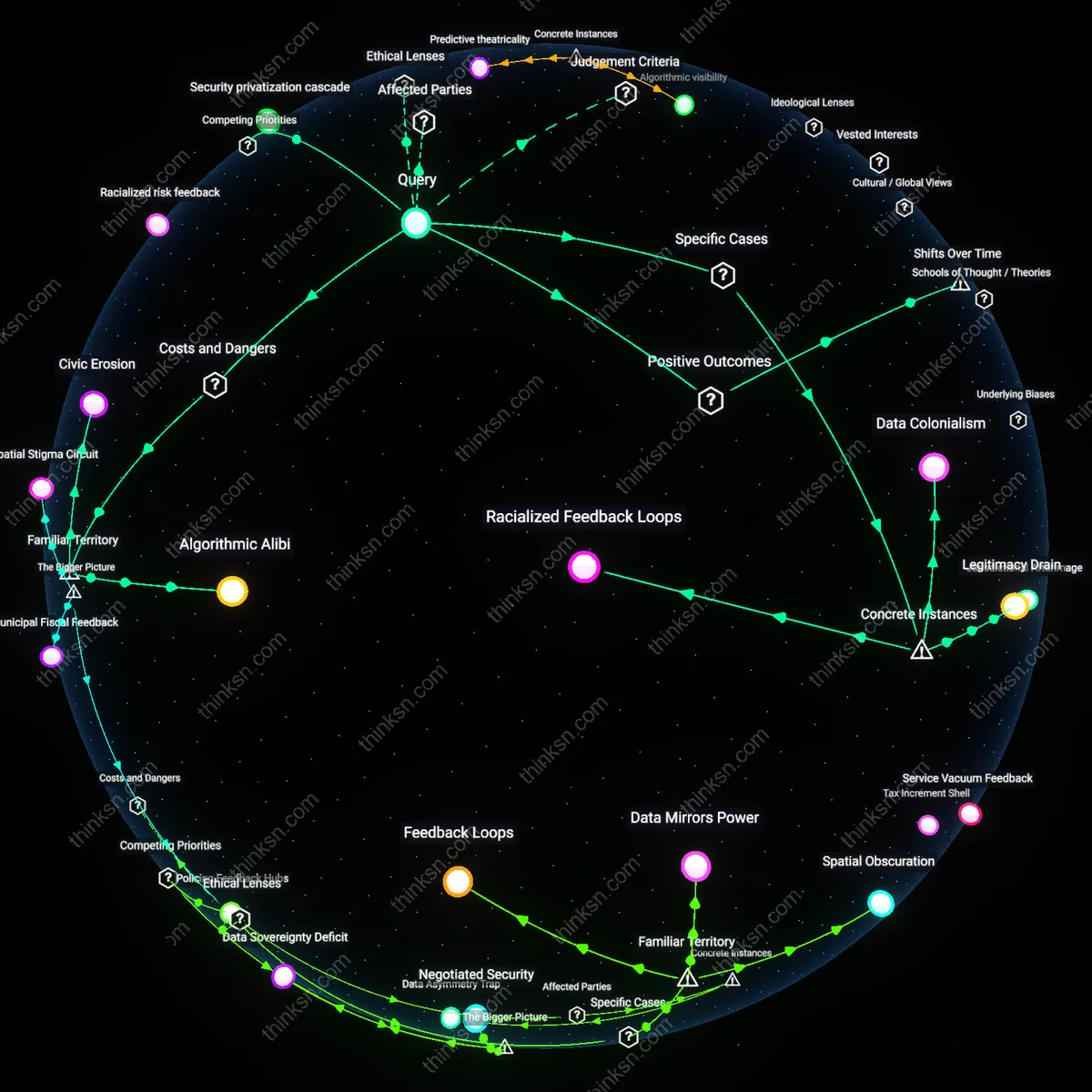

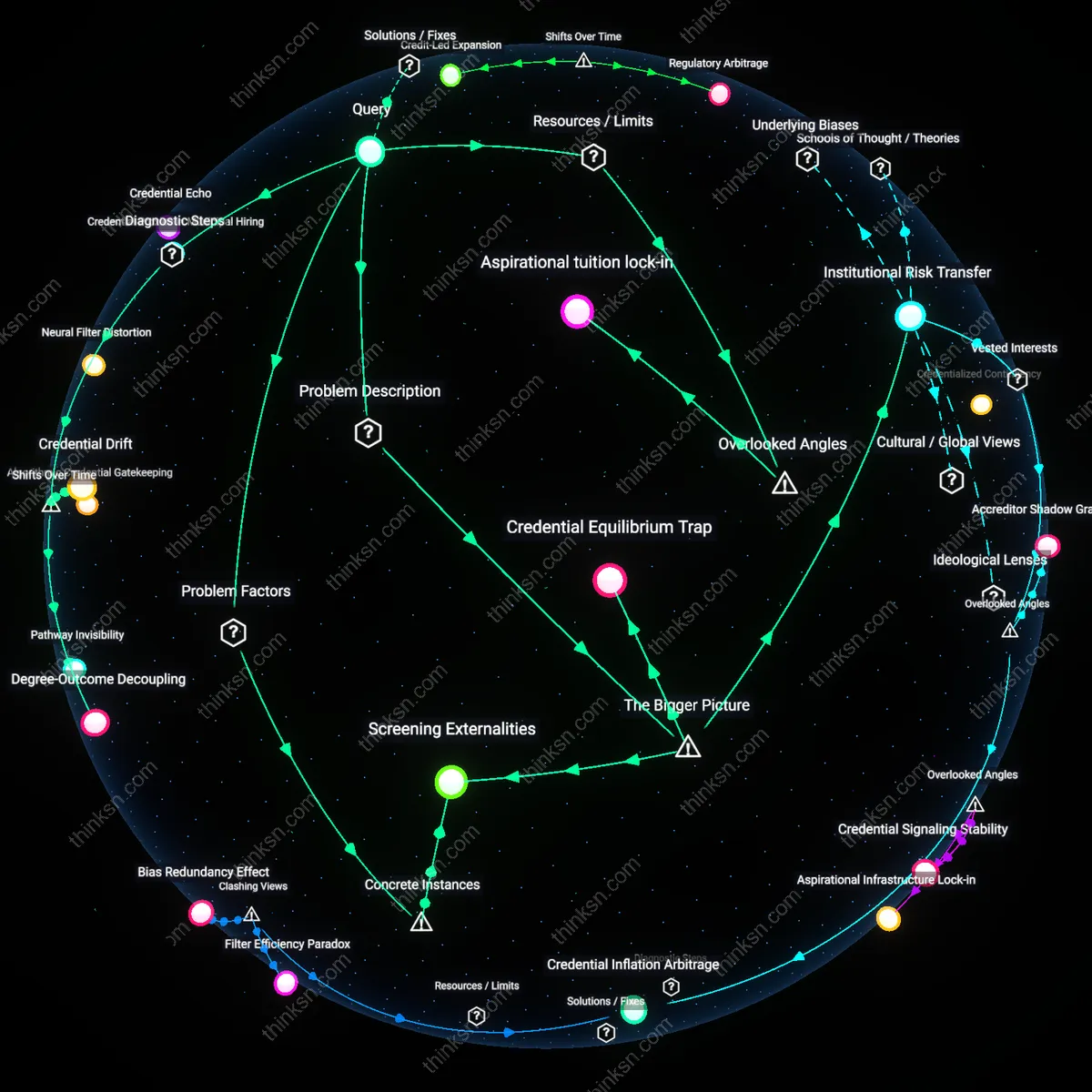

Latent Credential Discounting

AI hiring tools increasingly screen out candidates from non-traditional educational or career pathways, particularly Black, Latino, and rural applicants, by favoring productivity proxies calibrated on elite tech-sector performance data from the early 2010s, when engineering teams were overwhelmingly white and male. The accompanying shift from qualifications-based to behavior-predictive models, institutionalized after 2018 by firms like HireVue and Pymetrics, entrenches historical workforce asymmetries not through explicit exclusion but by optimizing for behavioral patterns that correlate with already dominant groups. The mechanism operates through machine learning models trained on employee performance and retention datasets from Amazon, Google, and similar firms, where high productivity is statistically associated with specific communication styles, pacing, and cognitive test responses that reflect cultural capital more than raw skill. What is underappreciated is that this shift does not merely continue past biases; it reconfigures them into seemingly neutral algorithmic thresholds that are harder to contest. That transformation was enabled by the post-2015 wave of venture-funded HR tech that reframed fairness as 'predictive accuracy.'

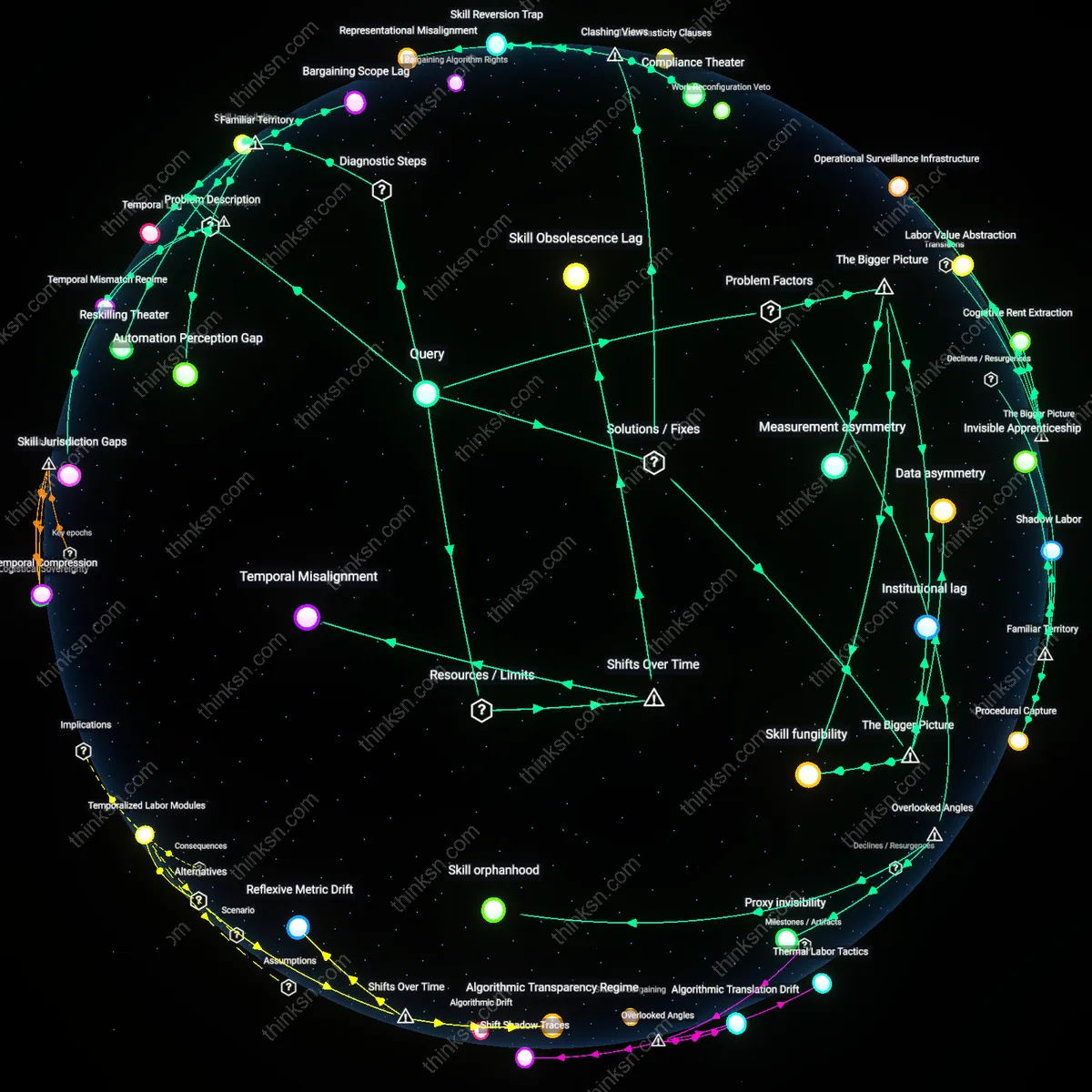

Temporal Narrowing Gap

As AI hiring systems have shifted from assessing formal credentials to forecasting short-term productivity, especially after 2020 during the rapid adoption of automated screening in gig platforms and warehousing, the likelihood of excluding older workers, caregivers, and those with episodic employment has increased sharply. These groups hold qualifications that accumulate over time but do not conform to the compressed, continuous-work trajectories that algorithms interpret as 'reliable output.' The move from longitudinal evaluation (e.g., resumes spanning decades) to narrow behavioral windows (e.g., 90-second video responses or gamified tasks) reflects a broader temporal compression in labor valuation, driven by just-in-time staffing demands at companies like Uber, Instacart, and Amazon fulfillment centers. This recency bias in time-weighted prediction models systematically disadvantages women and disabled workers, who are more likely to have career interruptions. The non-obvious consequence is that the very metric of 'adaptability,' now central to AI assessments, has become an engine of temporal discrimination disguised as dynamism.
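The recency bias described above can be illustrated with a minimal sketch. It assumes a hypothetical scoring rule in which each year of work history is weighted by an exponential decay, so recent years dominate; the function name, the decay value, and the two sample histories are illustrative assumptions, not taken from any real hiring product.

```python
def recency_weighted_score(yearly_activity, decay=0.5):
    """Weighted average of activity, where index 0 is the most recent year.

    Hypothetical rule: a year that is `age` years old gets weight decay**age.
    """
    weights = [decay ** age for age in range(len(yearly_activity))]
    weighted = sum(w * a for w, a in zip(weights, yearly_activity))
    return weighted / sum(weights)

# 1 = employed that year, 0 = not employed; most recent year first.
continuous = [1, 1, 1, 1, 0, 0, 0, 0]   # 4 years of experience, all recent
interrupted = [0, 0, 1, 1, 1, 1, 1, 1]  # 6 years of experience, 2-year recent gap

score_continuous = recency_weighted_score(continuous)
score_interrupted = recency_weighted_score(interrupted)

print(sum(continuous), round(score_continuous, 3))
print(sum(interrupted), round(score_interrupted, 3))
```

Under these assumptions the candidate with more total experience receives the lower score, because the weighting discounts everything before the recent gap.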

Proxy exclusion

AI hiring tools that prioritize predicted productivity disproportionately screen out candidates from underrepresented groups because the predictive models rely on historical performance data from existing employees, who come predominantly from majority demographics. As a result, qualifications tied to nontraditional pathways, such as alternative education or career interruptions, become negatively associated with productivity scores despite no inherent deficiency in output. The mechanism operates through proxy variables like tenure in previous roles or frequency of promotions, which track organizational norms favoring linear, uninterrupted career trajectories more common among privileged groups; the correlation grows stronger in industries with entrenched demographic homogeneity. This dynamic matters because it shows that algorithmic bias does not require explicit demographic data to reproduce inequality. It emerges structurally from what the model treats as predictive, which challenges the intuitive belief that removing race or gender from inputs ensures fairness.
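A small sketch can make the proxy mechanism concrete. The applicant records, the scoring rule, and the threshold below are all hypothetical; the point is only that a scorer which never reads the group label can still produce unequal selection rates when its inputs (tenure, career gaps) are distributed unevenly across groups.

```python
from collections import defaultdict

# Hypothetical applicants: (group, years_tenure_last_role, had_career_gap).
# The group label is kept only for the audit; the scorer never reads it.
APPLICANTS = [
    ("majority", 5, False),
    ("majority", 4, False),
    ("majority", 6, False),
    ("majority", 3, True),
    ("minority", 2, True),
    ("minority", 5, False),
    ("minority", 1, True),
    ("minority", 2, True),
]

def productivity_score(tenure_years, had_gap):
    """Toy 'predicted productivity': rewards tenure, penalizes gaps."""
    return tenure_years - (2 if had_gap else 0)

def selection_rates(applicants, threshold):
    """Share of each group whose score meets the threshold."""
    passed = defaultdict(int)
    total = defaultdict(int)
    for group, tenure, gap in applicants:
        total[group] += 1
        if productivity_score(tenure, gap) >= threshold:
            passed[group] += 1
    return {group: passed[group] / total[group] for group in total}

rates = selection_rates(APPLICANTS, threshold=3)
impact_ratio = rates["minority"] / rates["majority"]

print(rates)                   # {'majority': 0.75, 'minority': 0.25}
print(round(impact_ratio, 2))  # 0.33
```

Removing the group column from this data would change nothing about the outcome, since the scorer already ignores it.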

Algorithmic Blind Spots

AI hiring tools systematically exclude candidates with nontraditional backgrounds because predictive models rely on historical productivity metrics that are statistically unrepresentative of minority populations. These models interpret gaps in formal employment, unconventional education paths, or limited access to high-profile internships as negative signals, even though such patterns are more prevalent among marginalized groups because of systemic inequities in opportunity. The margin of doubt in productivity predictions, often obscured by high aggregate confidence figures, amplifies exclusion because error rates are rarely disaggregated by demographic group. This creates a false sense of objectivity in which familiar proxies for competence (e.g., Ivy League degrees, linear career trajectories) are reinforced as universal standards.
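Disaggregating error rates is straightforward to sketch. The audit records below are invented for illustration, and the group labels "A" and "B" are placeholders; the example computes the false-negative rate (qualified candidates who were rejected) overall and then per group.

```python
from collections import defaultdict

# Hypothetical audit records: (group, truly_qualified, model_accepted).
RECORDS = [
    ("A", True, True), ("A", True, True), ("A", True, True), ("A", True, False),
    ("A", False, False), ("A", False, False),
    ("B", True, False), ("B", True, False), ("B", True, True), ("B", False, False),
]

def false_negative_rate(records):
    """Fraction of qualified candidates the model rejected (None if no qualified)."""
    qualified = [r for r in records if r[1]]
    if not qualified:
        return None
    rejected = sum(1 for r in qualified if not r[2])
    return rejected / len(qualified)

def fnr_by_group(records):
    """False-negative rate computed separately for each group."""
    by_group = defaultdict(list)
    for record in records:
        by_group[record[0]].append(record)
    return {group: false_negative_rate(rs) for group, rs in by_group.items()}

overall = false_negative_rate(RECORDS)
per_group = fnr_by_group(RECORDS)

print(round(overall, 3))
print({g: round(v, 3) for g, v in per_group.items()})
```

In this toy data the single overall figure sits between the two group rates and hides the fact that qualified candidates in group B are rejected far more often than those in group A.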

Credential Heuristics

Candidates without elite academic credentials or recognizable brand employers in their work history are disproportionately screened out when AI systems equate prior affiliation with future performance. This mechanism operates through pattern-matching algorithms trained on past hires at top-tier firms, where the underrepresentation of certain racial, economic, and geographic groups is already baked into the dataset. Statistically, the confidence intervals around productivity predictions widen significantly for applicants outside the dominant profile clusters, yet the interfaces of these tools rarely communicate uncertainty and instead present rejections as definitive. The non-obvious consequence is that the most visible solution, asking the AI to 'ignore' sensitive attributes, fails because proxies for identity are embedded in seemingly neutral features like school name or job title.
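The widening of confidence intervals for sparse profile clusters follows from basic sampling arithmetic. The sketch below uses the standard normal-approximation interval for a mean; the sample sizes, standard deviation, scores, and the `decision` helper are hypothetical, and real tools may estimate uncertainty quite differently.

```python
import math

def ci_half_width(std_dev, n, z=1.96):
    """Half-width of an approximate 95% confidence interval for a mean."""
    return z * std_dev / math.sqrt(n)

def decision(point_estimate, half_width, threshold):
    """Report 'uncertain' when the interval straddles the hiring threshold."""
    if point_estimate - half_width >= threshold:
        return "accept"
    if point_estimate + half_width < threshold:
        return "reject"
    return "uncertain"

# Same spread in outcomes, very different amounts of training data.
sparse = ci_half_width(std_dev=10.0, n=25)      # profile rarely seen in training
dominant = ci_half_width(std_dev=10.0, n=2500)  # profile common in training

print(round(sparse, 3), round(dominant, 3))  # 3.92 0.392
print(decision(58, sparse, threshold=60))    # uncertain
print(decision(58, dominant, threshold=60))  # reject
```

An interface that shows only the point estimate of 58 would present both cases as the same rejection, even though the evidence behind the first is ten times less precise.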

Equity Debt

Underrepresented groups accumulate exclusion over repeated hiring cycles because AI systems interpret their lower representation in datasets labeled for high productivity as evidence of lower individual potential, not structural scarcity. This operates through feedback loops in which initial biases in the training data reduce the future visibility of marginalized candidates, increasing the margin of doubt in their predicted outcomes without adjusting for historical disadvantage. Although standard deviations in predicted productivity often exceed the meaningful differences between groups, decision-makers rely on point estimates that obscure such uncertainty. The familiar narrative that AI scales meritocracy masks this debt, positioning exclusion as statistical necessity rather than inherited imbalance.
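The feedback loop can be sketched as a toy simulation. The update rule is a deliberately simple assumption chosen for illustration, not a model of any real system: in each cycle the model favors whichever group dominates its training data, and the resulting hires are appended to that data before the next cycle.

```python
def simulate_feedback(initial_share, cycles, hires_per_cycle=100, initial_size=1000):
    """Track the underrepresented group's share of the training set over time.

    Toy assumption: the group's fraction of new hires is its squared share,
    normalized against the other group's squared share, so any initial
    imbalance is amplified rather than preserved.
    """
    group_count = initial_share * initial_size
    total = float(initial_size)
    history = [group_count / total]
    for _ in range(cycles):
        share = group_count / total
        hire_fraction = share ** 2 / (share ** 2 + (1 - share) ** 2)
        group_count += hire_fraction * hires_per_cycle
        total += hires_per_cycle
        history.append(group_count / total)
    return history

history = simulate_feedback(initial_share=0.30, cycles=5)
print([round(share, 3) for share in history])
```

Starting from a 30% share, the share falls in every cycle under this rule, while a starting share of exactly 50% stays put. The decline comes entirely from the initial imbalance, not from any difference in candidate quality.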

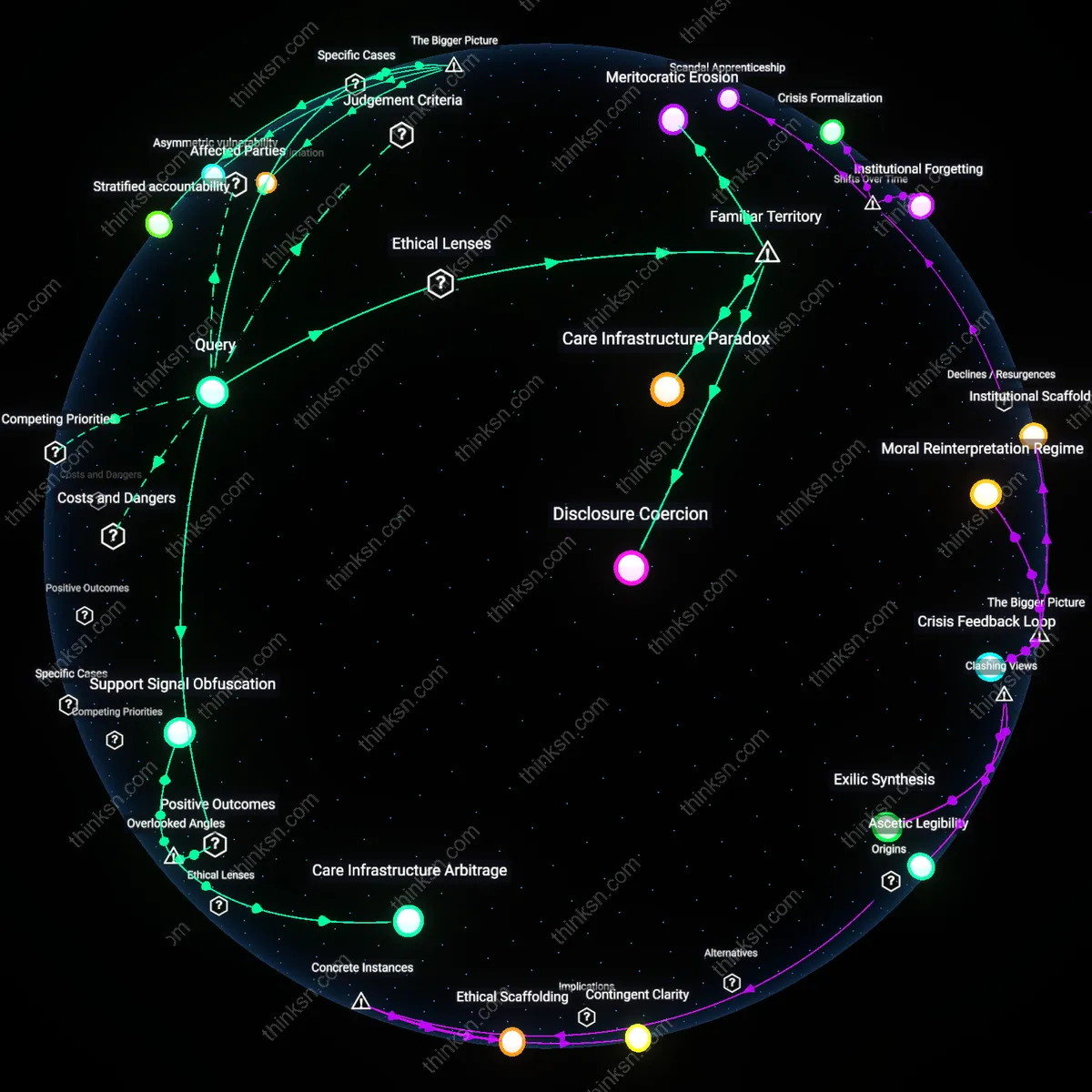

Temporal misalignment penalty

AI hiring tools that prioritize predicted productivity disproportionately screen out older job seekers, particularly those over 45, because algorithmic models conflate career continuity with competence and interpret employment gaps as markers of diminished output potential. This mechanism operates through training data derived from high-growth tech sectors, where continuous upskilling correlates narrowly with age-cohort trends. The resulting temporal bias penalizes those who retrained mid-career or took non-linear paths because of caregiving or economic disruption. What is overlooked is that predicted-productivity systems assume time-based consistency in skill application, which systematically disadvantages demographics more likely to have interrupted work histories caused not by disengagement but by structural inequities. This shifts the understanding of bias from overt discrimination to invisible time-based penalties embedded in model assumptions.

Geospatial inference drift

AI hiring systems that lack direct access to demographic data often infer candidate suitability from proxy variables like ZIP code, device type, or internet speed, leading to higher rejection rates for applicants from rural or digitally underserved regions. This operates through productivity models that correlate slow application completion times or mobile-only submissions with lower engagement, when in fact these signals reflect infrastructure inequality rather than capability. The overlooked dynamic is that predicted productivity becomes entangled with locational digital latency: candidates from Appalachia, the Navajo Nation, or persistent-poverty counties are filtered out not because of skill deficits but because their environments generate ‘slow’ digital footprints. This reveals a hidden spatial bias in ostensibly meritocratic systems.

Non-categorical skill invisibility

Candidates with hybrid or non-institutional skill acquisition, such as self-taught coders from refugee backgrounds or informal-economy workers transitioning to formal roles, are systematically excluded by AI tools that map qualifications through categorical credentials like degrees or certified experience, even when productivity prediction could support their inclusion. This occurs because model architectures treat skill inputs as discrete, certifiable events rather than continuous learning trajectories, which makes episodic, community-based, or language-diverse expertise statistically noisy and thus deprioritized. The overlooked factor is that underrepresentation here stems not from lack of access but from epistemological mismatch: algorithms trained on credentialized productivity fail to recognize competence that didn’t enter through institutional gates, distorting predictions in ways that amplify systemic absence.