Should App Stores Censor Political Lies for Democracy?

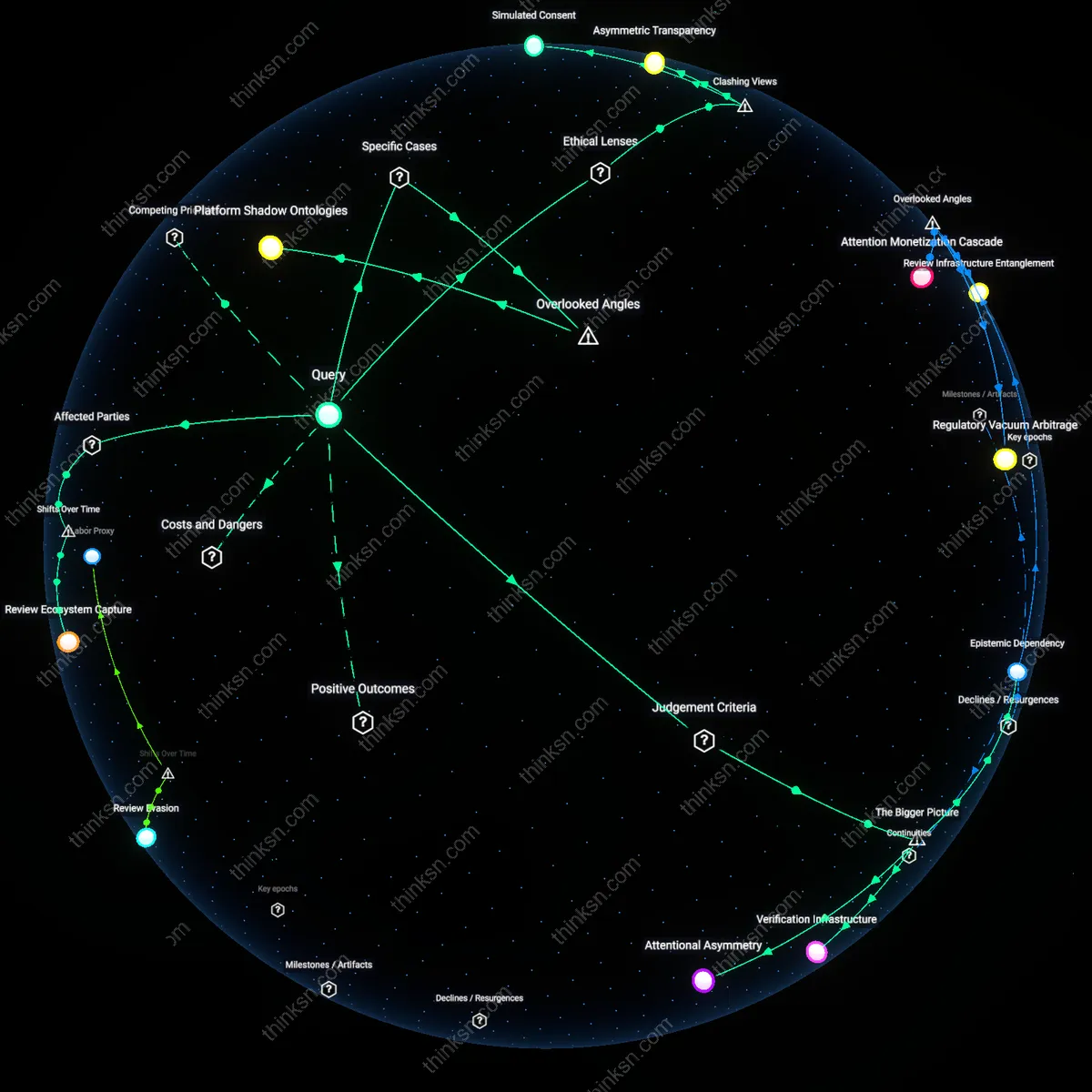

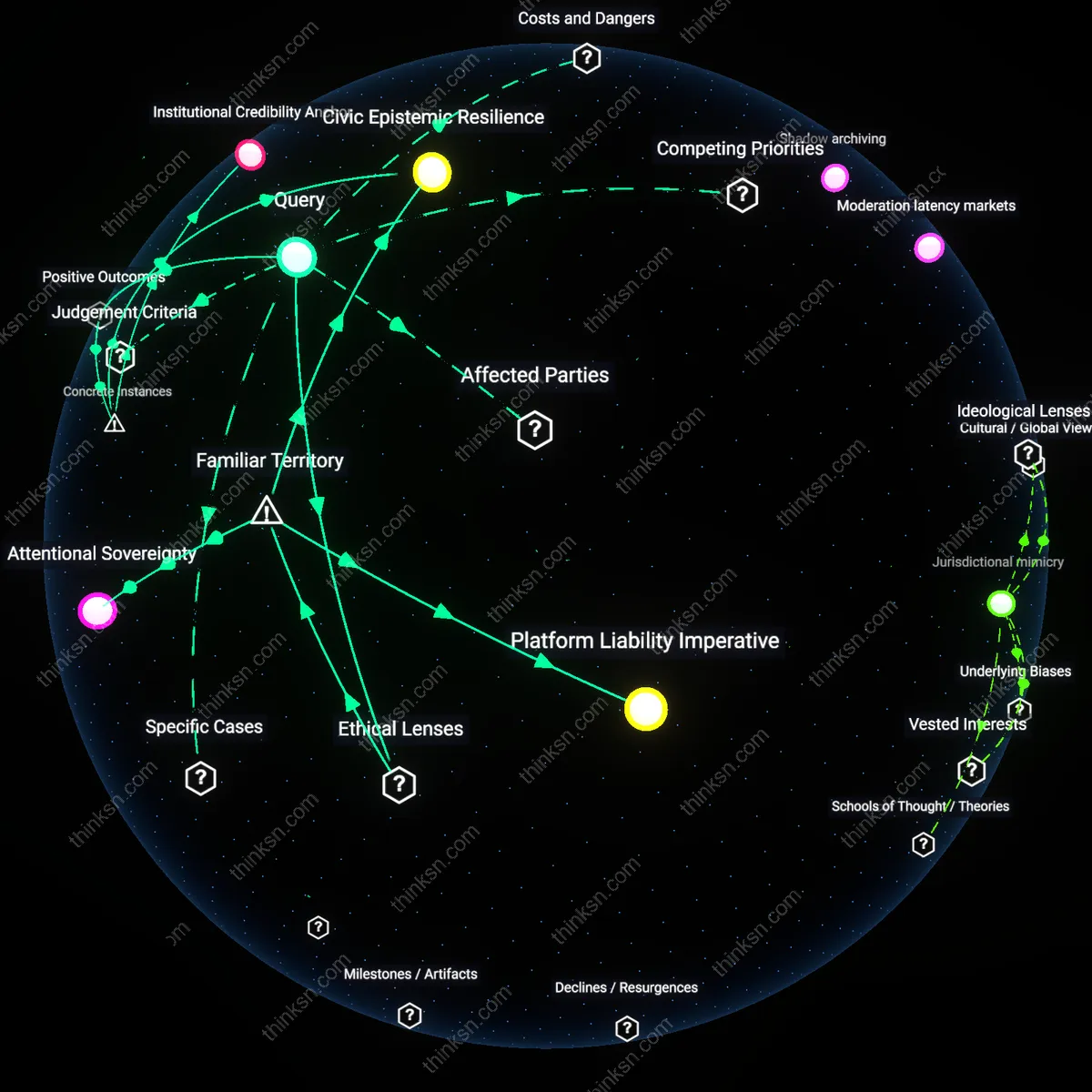

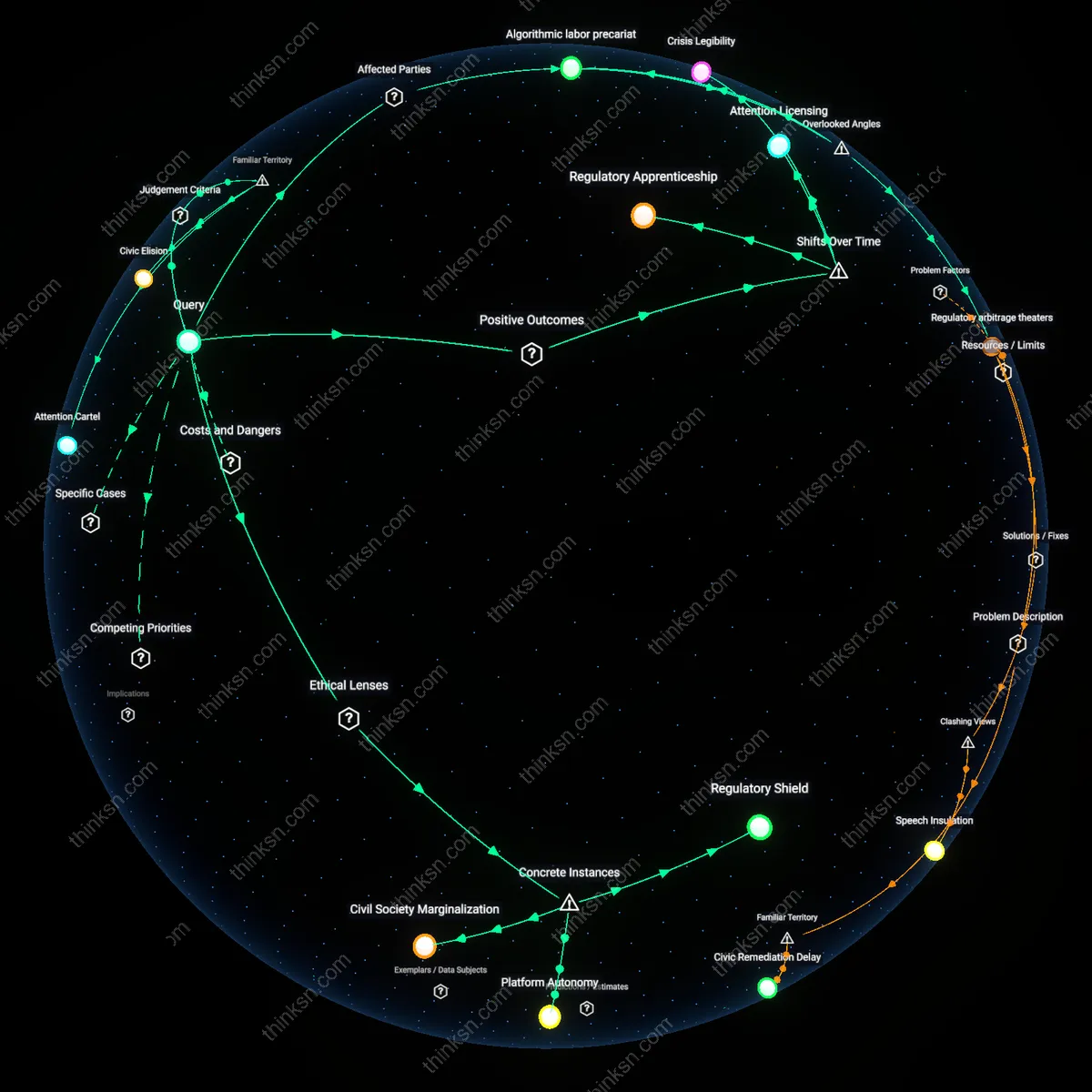

Analysis reveals 6 key thematic connections.

Key Findings

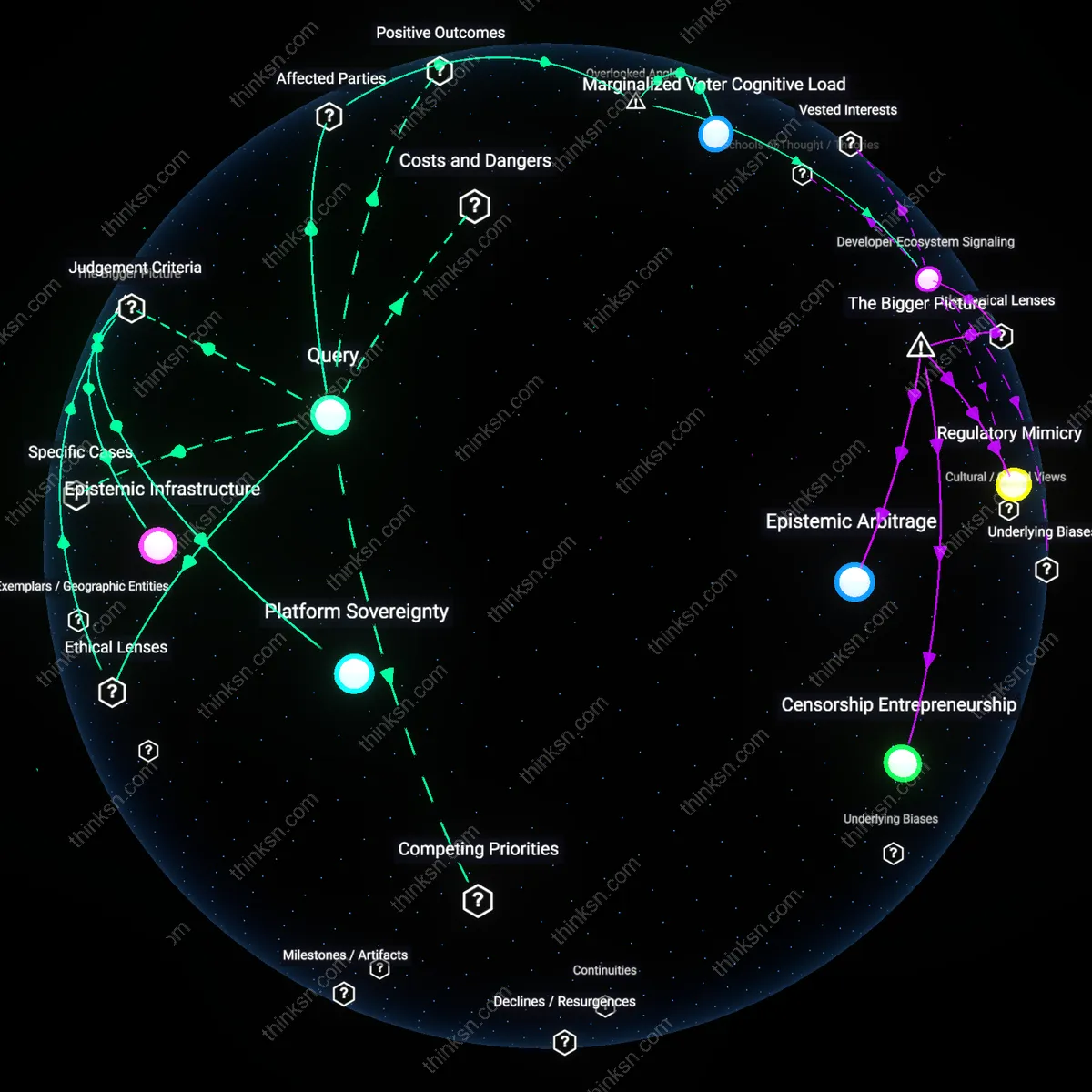

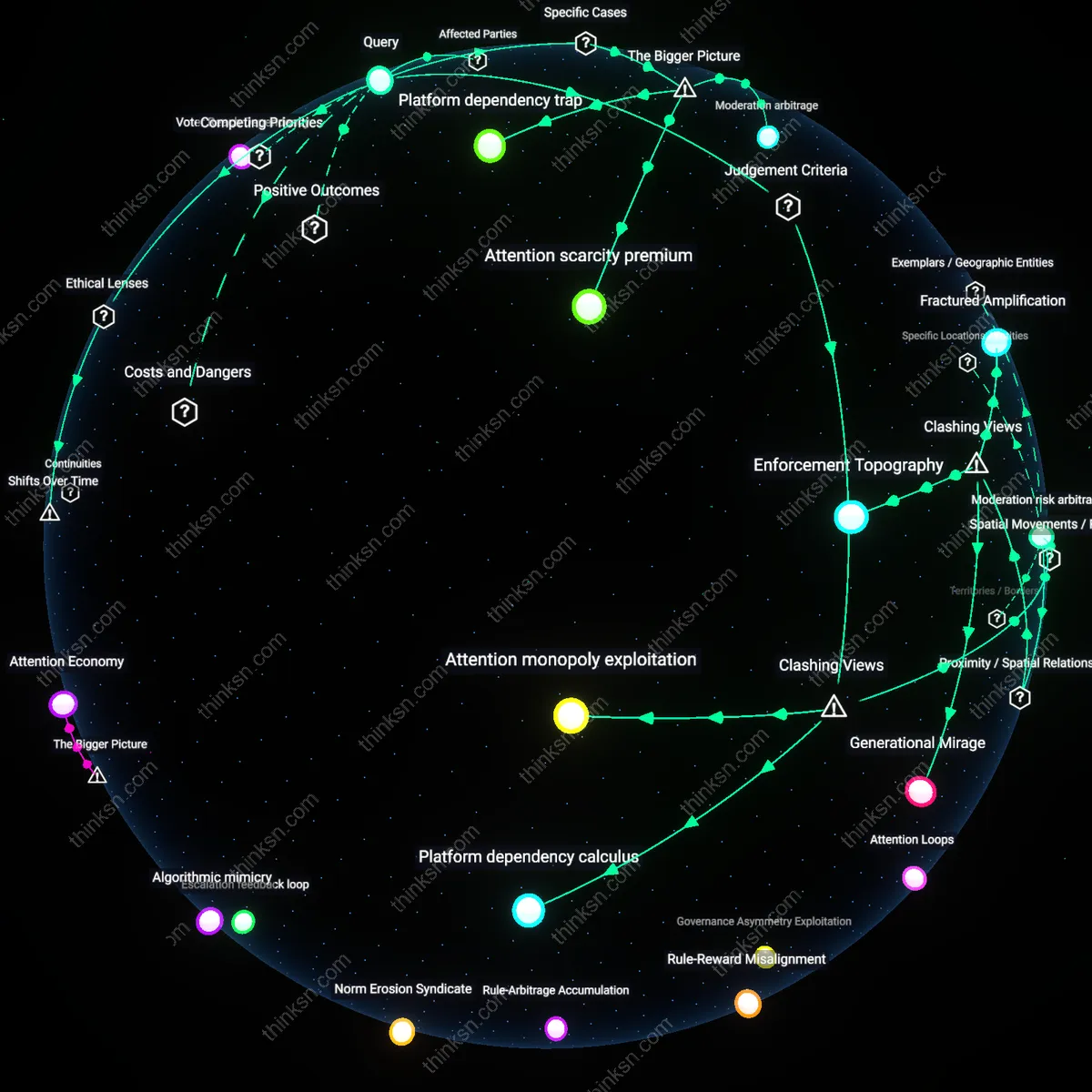

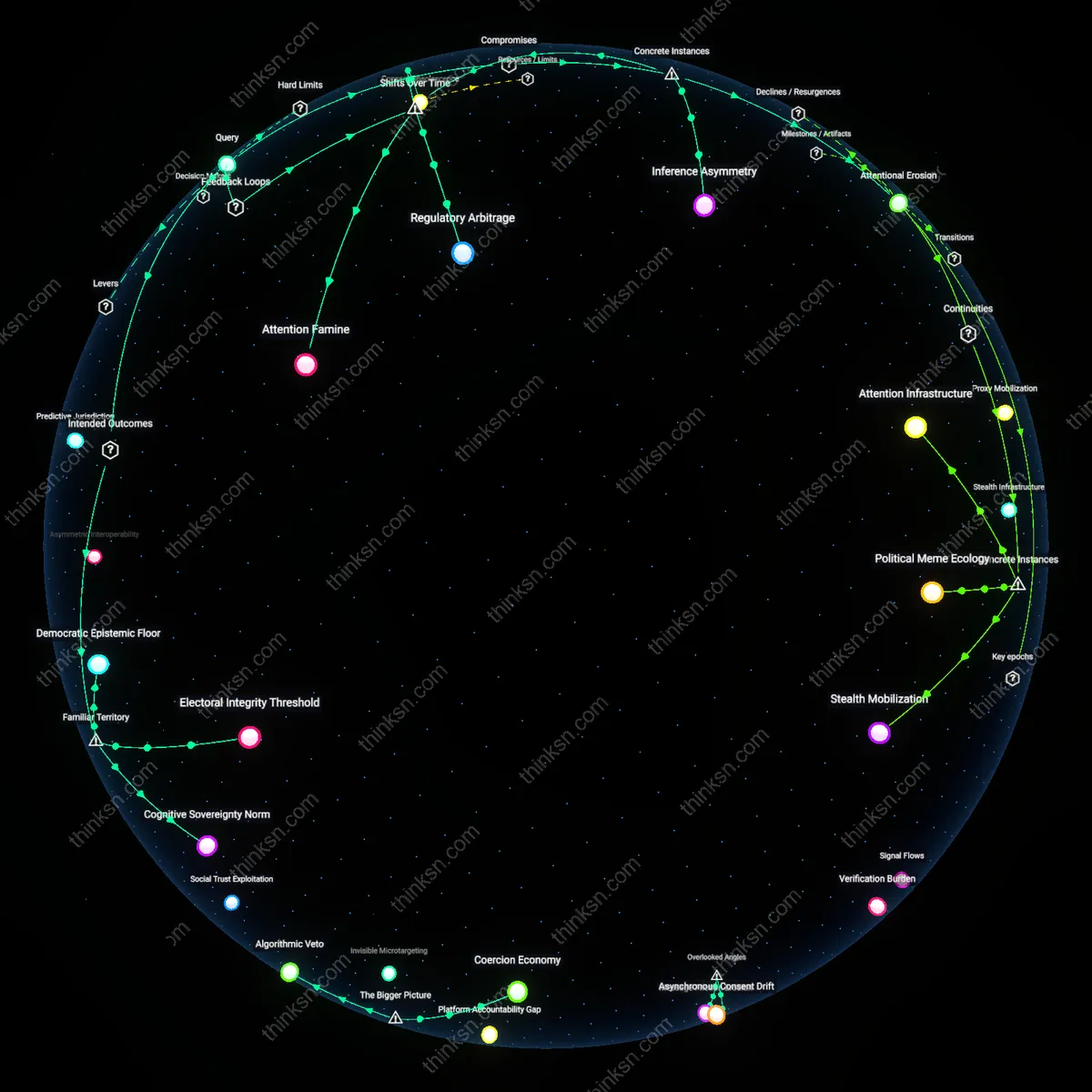

Platform Epistemic Sovereignty

App stores must ban political apps that spread unverified claims in order to preserve the epistemic integrity of digital public forums. Platforms have effectively become the infrastructure of civic understanding, and they must curate credible information to prevent cascading misinformation during electoral events. Major app stores operate as de facto speech governors in polities with fragmented media oversight. In the U.S., for example, the absence of federal disinformation regulation places technical intermediaries in a gatekeeping role, not by choice but by systemic necessity, which makes their content thresholds a form of emergent epistemic policy. This shifts the moral weight from market freedom to institutional responsibility. The overlooked function of app stores, on this view, is not distribution but epistemic gatekeeping, a role rarely acknowledged in antitrust or free speech debates.

Marginalized Voter Cognitive Load

Banning unverified political apps is justifiable because it reduces the cognitive burden on low-information and marginalized voters, who disproportionately absorb misinformation because of design asymmetries in digital navigation. One example is older Black and Latino voters in swing states like Georgia and Michigan, who rely on mobile devices as their primary means of internet access but face algorithmic environments optimized for engagement over clarity. These users experience compounded epistemic harm when apps exploit interface trust (e.g., App Store branding) to legitimize false claims, which makes platform curation a form of cognitive justice rather than suppression. The overlooked mechanism here is not censorship but unequal cognitive taxation: market freedom, if left unchecked, systematically overloads the decision-making capacity of already disadvantaged voters.

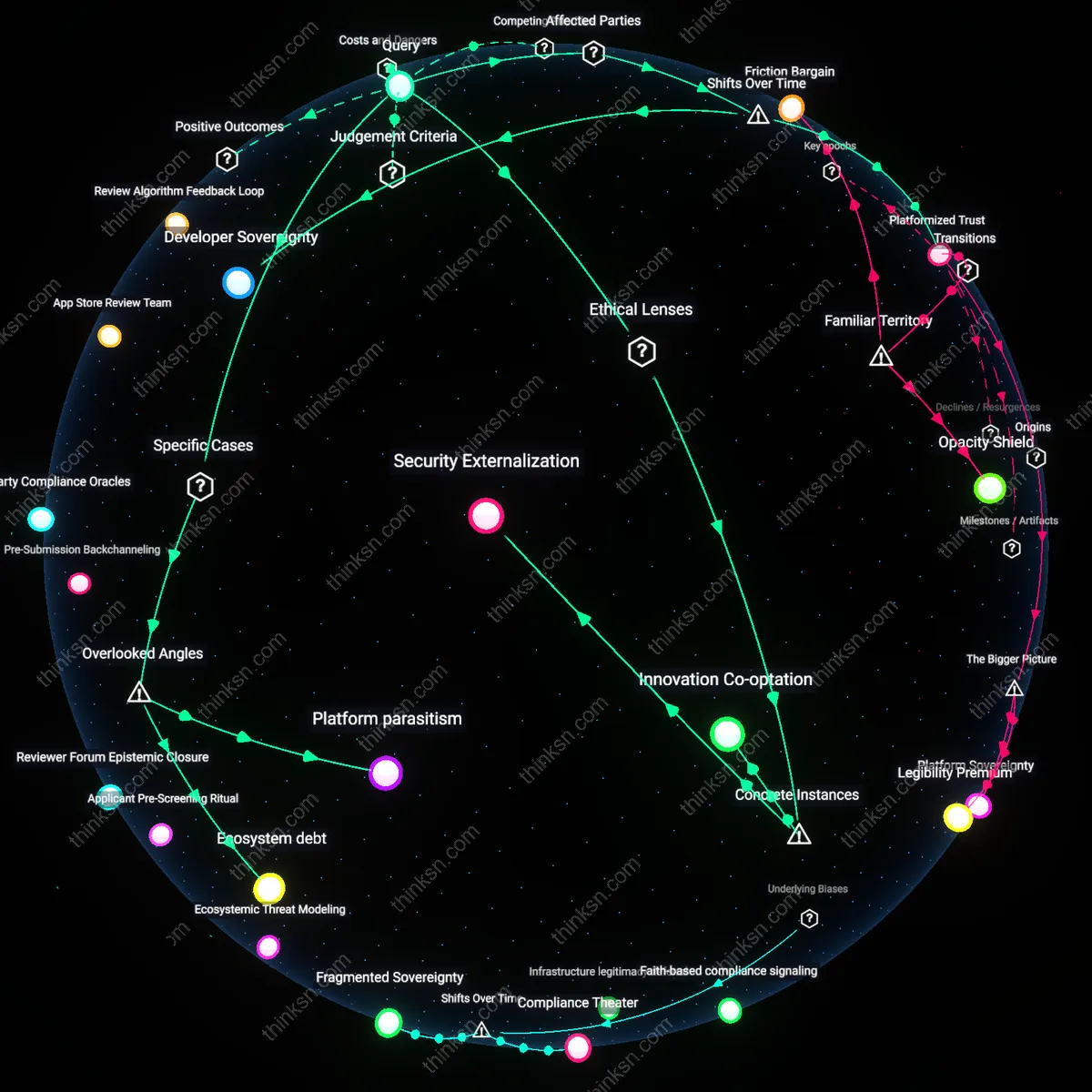

Developer Ecosystem Signaling

App stores should ban political apps that make unverified claims because enforcement decisions shape long-term developer behavior. They set normative precedents that deter future malign actors from weaponizing platform legitimacy for political disruption, as in the aftermath of the 2020 U.S. election, when fringe developers tested boundaries on iOS and Android to legitimize election denial narratives. By removing such apps, platforms send a costly signal, akin to antitrust penalties, that alters the risk calculus for app creators and discourages epistemic parasitism on digital infrastructure. The residual effect is less immediate harm reduction than the preservation of ecosystem trust, a dynamic rarely considered in free speech debates that focus on individual apps rather than the broader developer incentive structure.

Platform Sovereignty

Yes, it is justifiable for an app store to ban a political app spreading unverified claims. Private digital platforms function as de facto public utilities in electoral discourse, and their content moderation decisions reflect a duty of care under digital republican theory, an ethical framework that prioritizes civic resilience over absolute market freedom. This becomes systemically significant when actors like Apple or Google exercise unchecked curatorial power during election cycles: they can unilaterally redefine the boundaries of legitimate speech under the guise of technical compliance, a dynamic intensified by the lack of interoperable app distribution in the U.S. mobile ecosystem. The non-obvious consequence is that market concentration in operating systems transforms corporate terms of service into binding speech regimes, making platform sovereignty a de facto regulatory force in democratic participation.

Epistemic Infrastructure

Yes, it is justifiable because the spread of unverified political claims undermines shared epistemic infrastructure, the collectively relied-upon systems for verifying truth in public debate. App stores, as dominant gatekeepers of software distribution, bear responsibility under the ethics of care to prevent cascading epistemic harm. This is particularly acute in contexts like the 2020 U.S. election or the January 6 insurrection, where research consistently shows that coordinated misinformation ecosystems exploited app distribution channels to bypass traditional media safeguards. The result was to destabilize democratic legitimacy through manufactured belief cascades, enabled by algorithmic amplification and weak verification protocols. The underappreciated mechanism is that app stores do not merely host content but scaffold the credibility of information ecosystems by legitimizing certain actors as 'software providers,' thus embedding normative judgments about knowledge validity into technical certification processes.

Regulatory Arbitrage

No, it is not justifiable when app store bans operate as covert regulatory arbitrage: private companies absorb the political risk of content enforcement that elected bodies avoid because of free speech protections under liberal democratic constitutions, which shifts the burden of democratic accountability onto unaccountable intermediaries. This occurs within the systemic context of Section 230 in the United States and the EU’s Digital Services Act, where legal ambiguity incentivizes platforms to over-enforce against controversial speech to preempt liability. The result is a chilling effect that disproportionately targets fringe or oppositional political movements regardless of their factual accuracy. The non-obvious outcome is that market freedom is eroded not by state action but by the strategic preemption practiced by corporate governance, which exploits legal gray zones to consolidate control over political expression under the banner of neutrality.