What Happens When Platforms Control Your Professional Reputation?

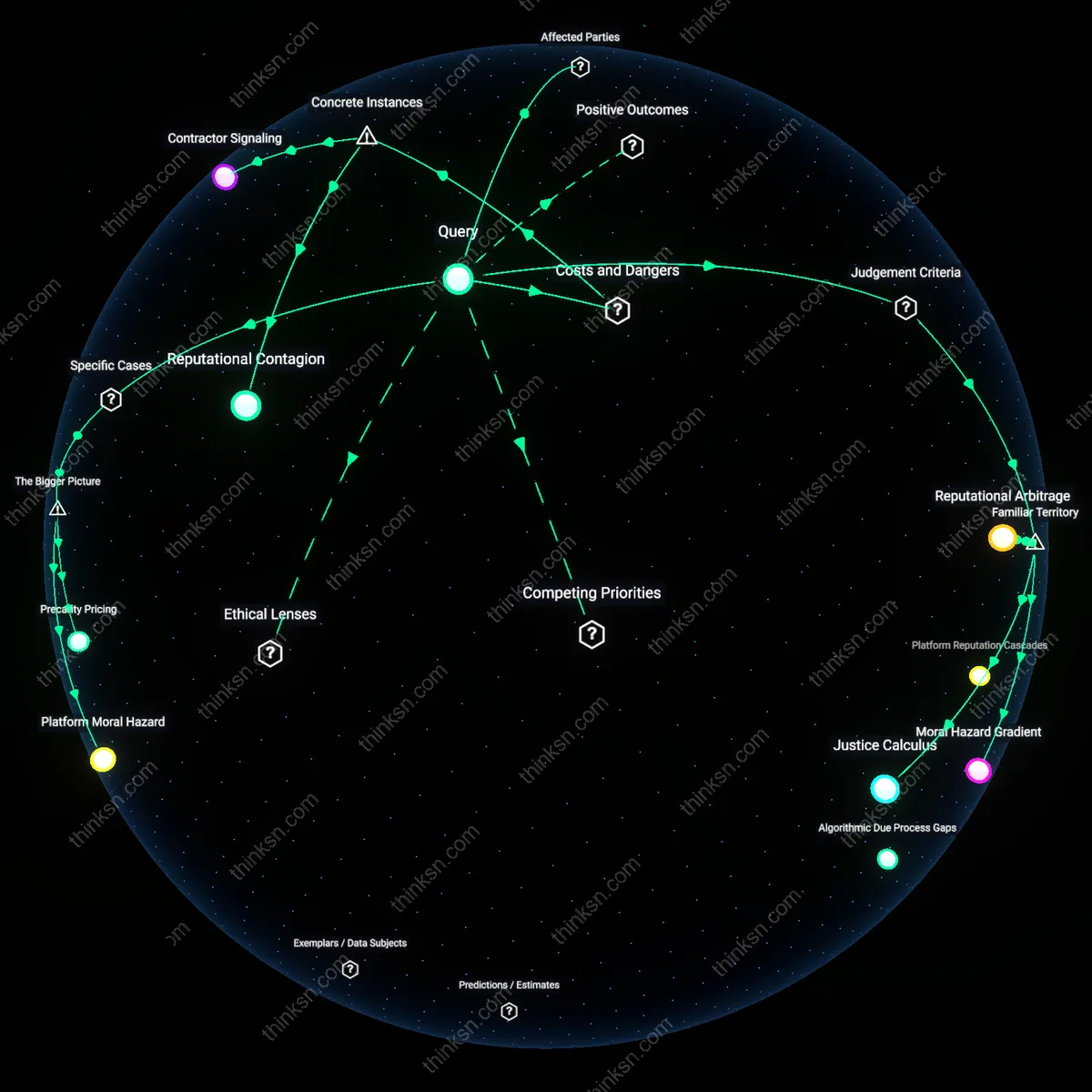

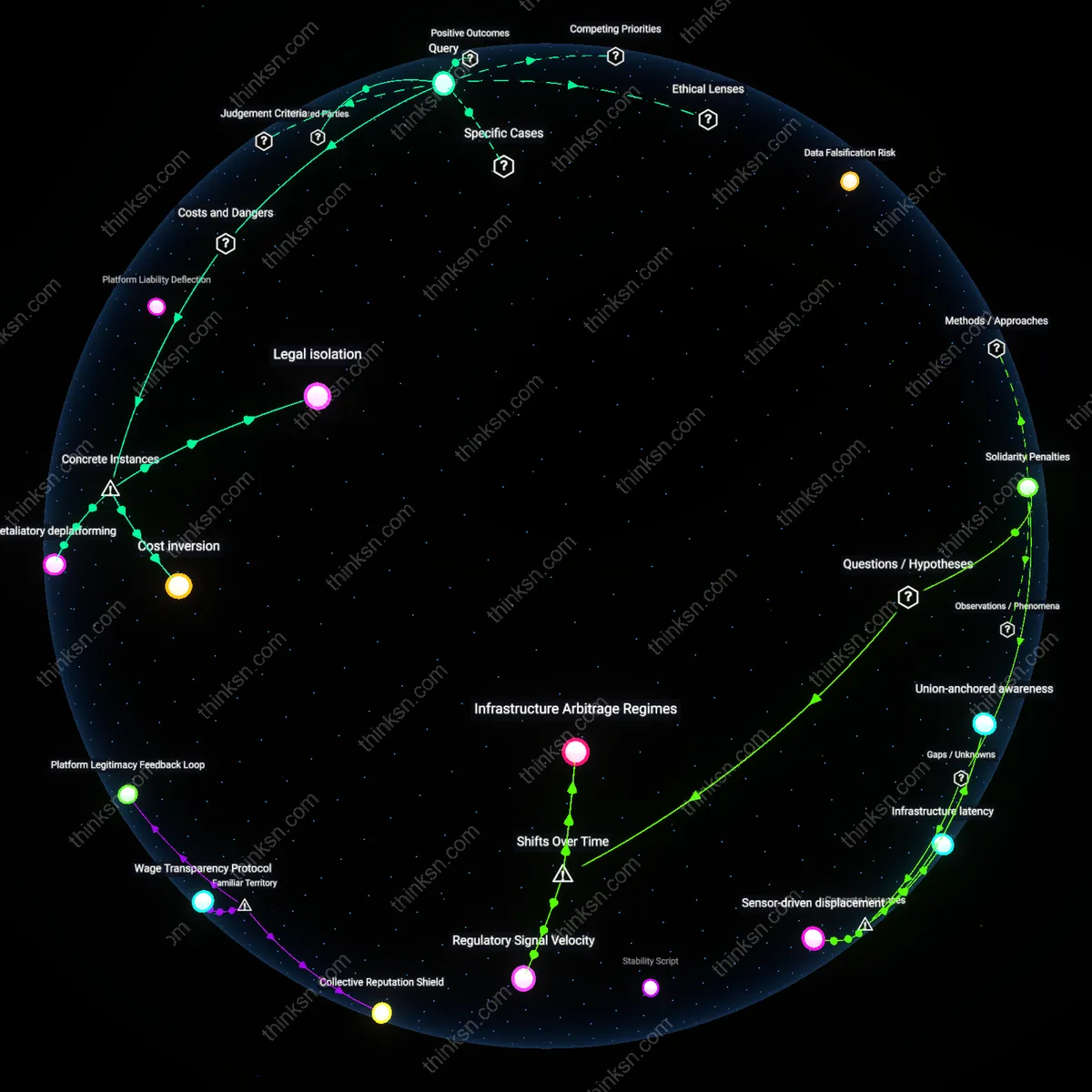

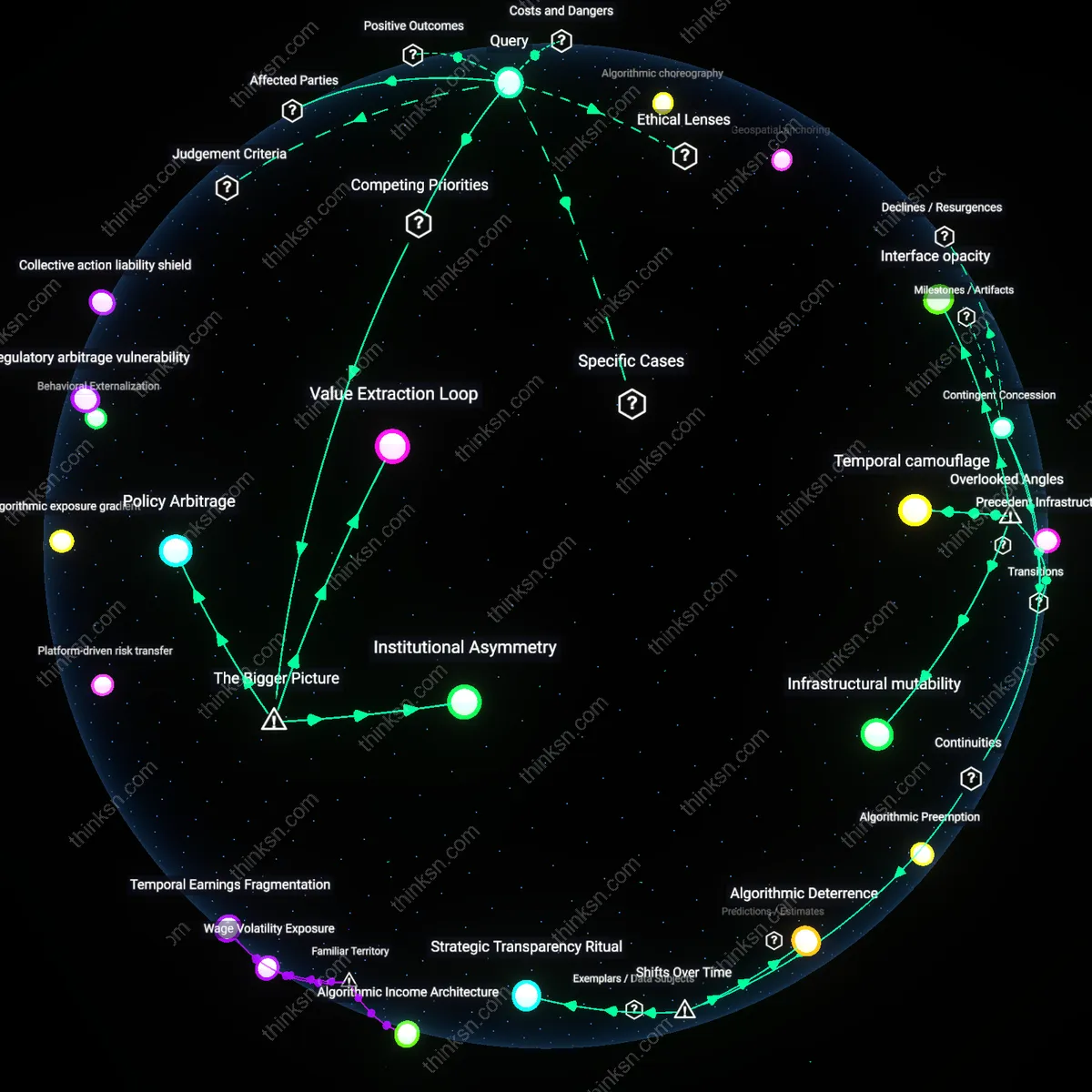

Analysis reveals 6 key thematic connections.

Key Findings

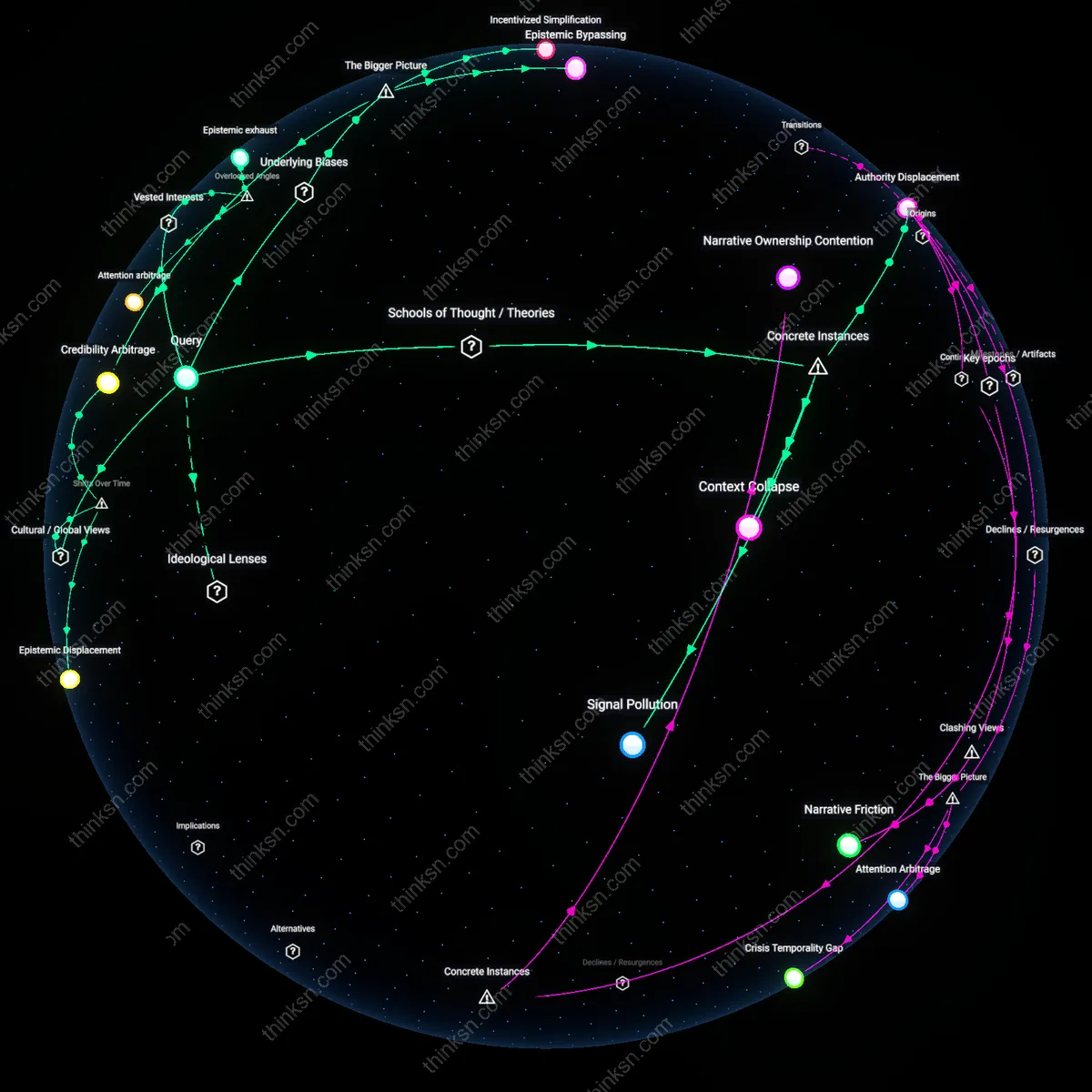

Behavioral Arbitrage

Opaque reputation algorithms let platforms selectively penalize users in ways that reinforce corporate control over labor practices. When gig economy platforms like Uber or DoorDash adjust reputation scores without disclosure, they exploit information asymmetry to steer driver behavior (for example, pushing drivers to accept low-paying gigs or work during peak hours) without issuing formal penalties, thereby externalizing operational risk onto workers. The mechanism runs on algorithmic surveillance infrastructure overseen by platform data science teams, is sustained by weak digital labor regulation, and is normalized through user agreements that preempt accountability, so that covert manipulation of work conditions appears technically neutral. The non-obvious consequence is that reputation becomes less a measure of trustworthiness and more a lever for invisible workplace discipline.

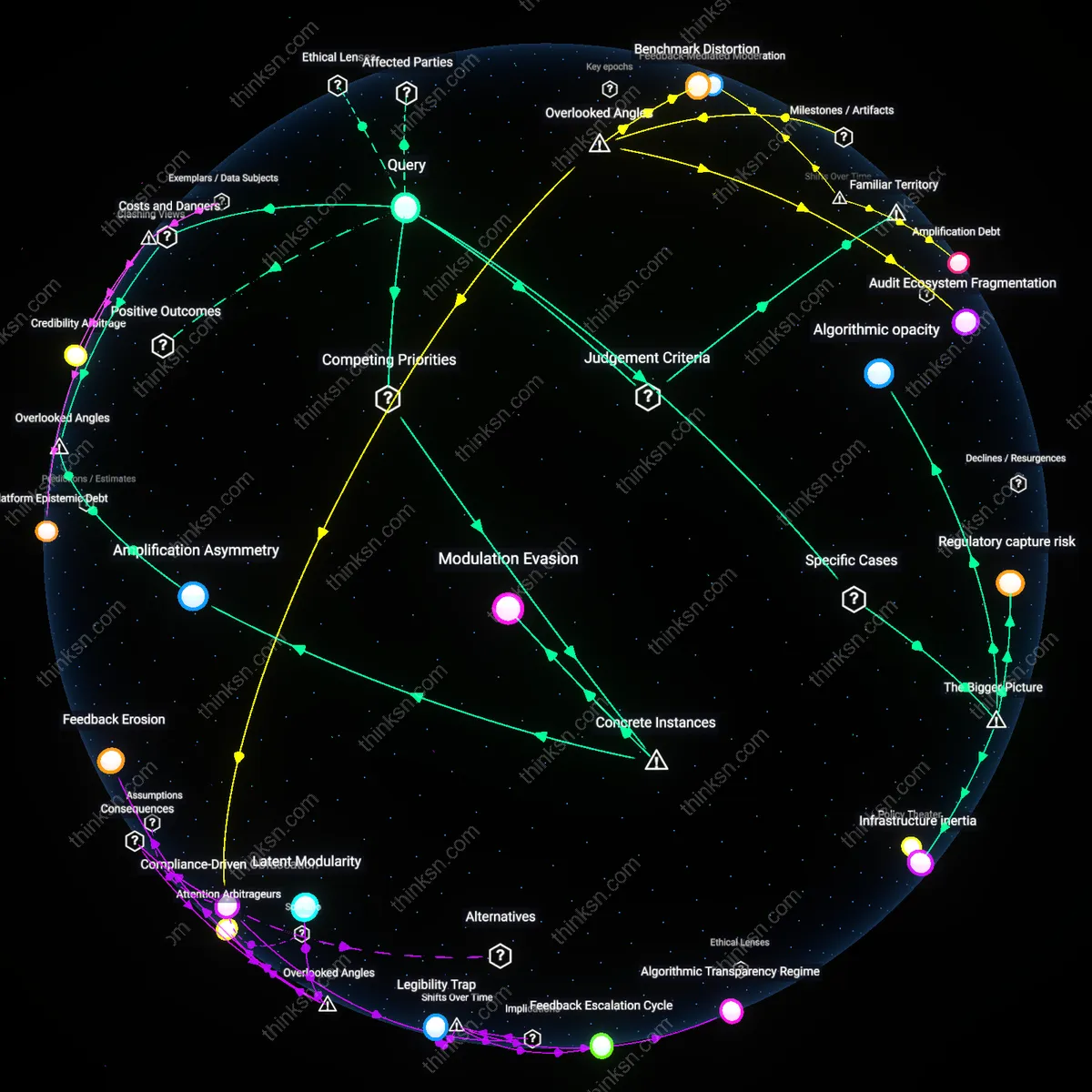

Reputational Redlining

Commercial platforms that downgrade user reputations without transparency replicate patterns of systemic exclusion akin to historical redlining. Marginalized groups, such as low-income users or non-native speakers, are disproportionately flagged because of biased training data and culturally insensitive algorithms. This occurs because machine learning models often rely on behavioral proxies (e.g., response time, dispute frequency) that correlate with socioeconomic disadvantage, and their feedback loops are calibrated against majority-user norms set by engineering teams at Silicon Valley firms. The process embeds structural bias into personal digital standing through ostensibly neutral metrics, effectively locking vulnerable users out of the economic and social opportunities hosted on the platform. What’s underappreciated is that reputation systems don’t just reflect trust; they actively construct it along socially stratified lines.
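To make the proxy mechanism concrete, here is a minimal Python sketch of how a score built only from behavioral proxies such as response time and dispute frequency can still flag one group far more often than another when the cutoff is calibrated on majority-user norms. Everything in it is an assumption made for illustration: the `User` fields, the scoring weights, the `mean - k * std` cutoff, and the toy data are all hypothetical and do not describe any real platform's system.

```python
from dataclasses import dataclass
from statistics import mean, pstdev


@dataclass
class User:
    group: str               # hypothetical label: "majority" or "minority"
    response_minutes: float  # behavioral proxy 1 (assumed)
    disputes: int            # behavioral proxy 2 (assumed)


def proxy_score(user: User) -> float:
    """Hypothetical score: slower replies and more disputes lower it.

    Note that `group` is never an input to the score.
    """
    return 100.0 - 2.0 * user.response_minutes - 10.0 * user.disputes


def majority_threshold(users: list[User], k: float = 1.5) -> float:
    """Flag cutoff calibrated only on majority-group scores (mean - k * std)."""
    scores = [proxy_score(u) for u in users if u.group == "majority"]
    return mean(scores) - k * pstdev(scores)


def flag_rates(users: list[User]) -> dict[str, float]:
    """Share of each group whose score falls below the majority-based cutoff."""
    cutoff = majority_threshold(users)
    rates = {}
    for group in sorted({u.group for u in users}):
        members = [u for u in users if u.group == group]
        flagged = [u for u in members if proxy_score(u) < cutoff]
        rates[group] = len(flagged) / len(members)
    return rates


# Synthetic toy data, invented for illustration only. The "minority" users
# respond more slowly (e.g., shared devices, translation overhead).
users = [
    User("majority", 5, 0), User("majority", 6, 0), User("majority", 4, 1),
    User("majority", 7, 0), User("majority", 5, 0), User("majority", 6, 1),
    User("minority", 12, 1), User("minority", 15, 0),
    User("minority", 10, 2), User("minority", 6, 0),
]

rates = flag_rates(users)
print(rates)
```

On this toy data the minority group is flagged at a much higher rate than the majority group, even though group membership never enters the score. The gap comes entirely from proxies that differ between groups and a cutoff tuned to one group's behavior.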

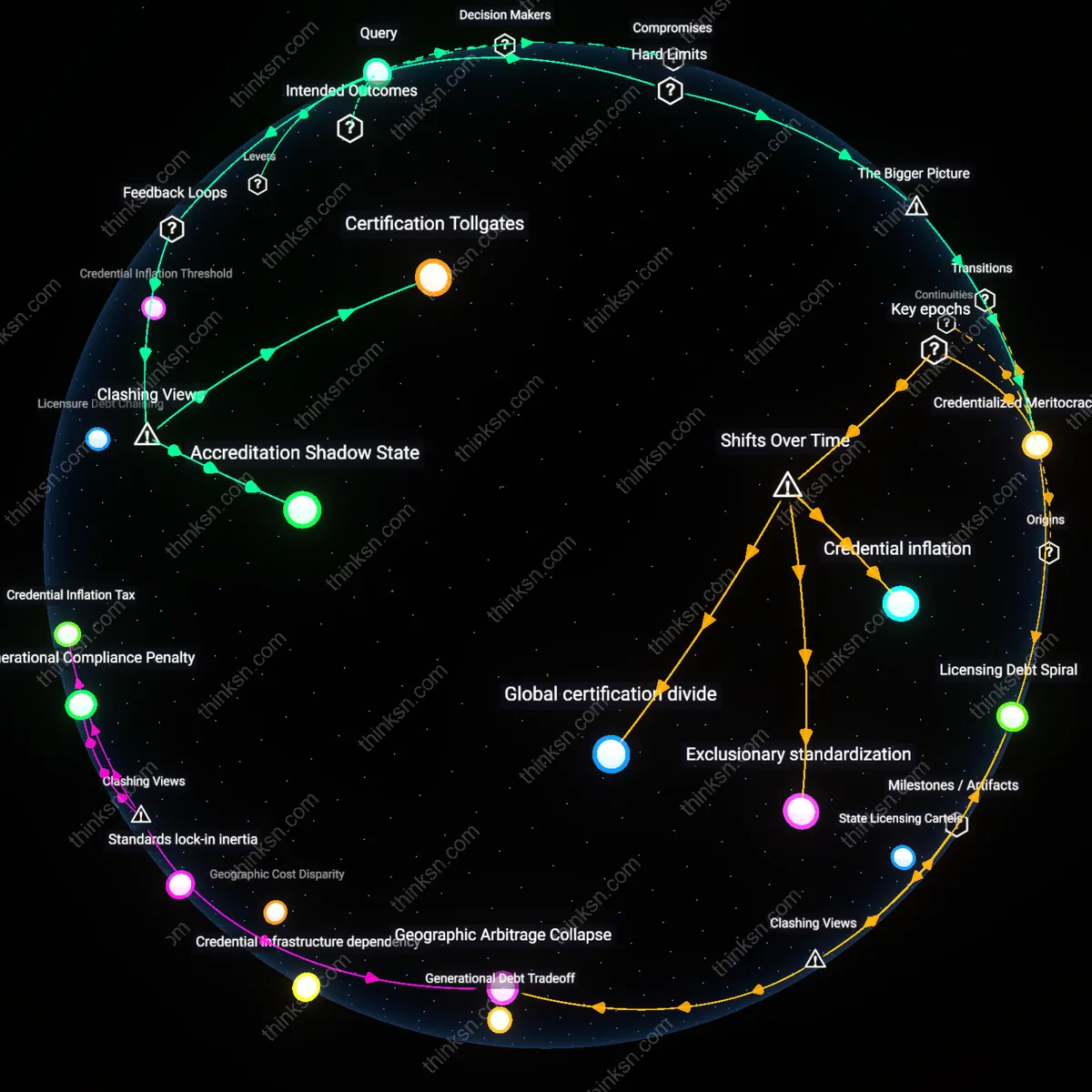

Governance Displacement

When platforms unilaterally alter reputation scores without appeal or explanation, they displace traditional regulatory governance, moving dispute resolution, fairness standards, and due process into privately held code and corporate policies managed by platform trust-and-safety departments. This transfers authority from public institutions accountable to citizens to unelected tech executives, whose primary fiduciary duty is to shareholders rather than users, and to the automated systems they deploy. The mechanism thrives on jurisdictional fragmentation: global platforms operate across legal regimes without consistent oversight, which lets them position themselves as both marketplaces and rule-makers. The overlooked dynamic is that reputation is no longer just a commercial signal but a form of private law, with downgrades functioning as extrajudicial sanctions.

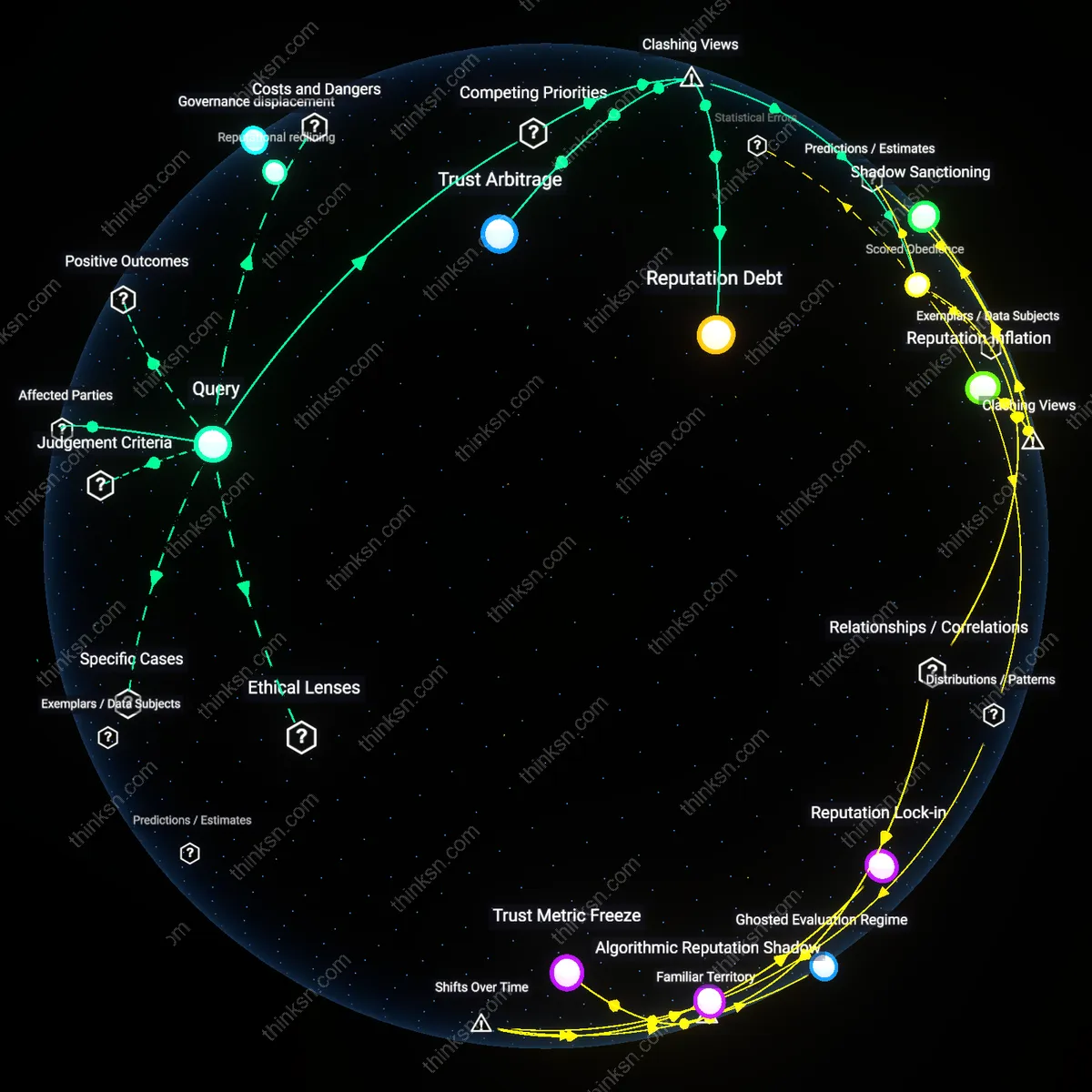

Reputation Debt

Commercial platforms impose lasting liability on users by downgrading reputations without recourse, turning opaque scoring into a one-way extraction of trust capital. This lets platforms like Uber or Airbnb enforce compliance with their operational norms, such as driver availability or guest behavior, while insulating themselves from accountability for downstream consequences such as loss of income or access. The non-obvious reality is that users absorb reputational risk as a hidden cost of participation. They incur it not through misconduct but through arbitrary algorithmic adjustments that mimic financial default without due process, which effectively privatizes trust and commodifies its withdrawal.

Scored Obedience

Reputation downgrades without transparent criteria function not as feedback but as behavioral conditioning: users internalize surveillance to avoid unseen penalties, a shift from detecting rule-breaking to inducing preemptive self-discipline. Platforms like Amazon Mechanical Turk and other freelance gig markets use opaque scoring to induce compliant labor practices, such as accepting low pay or high workloads, not because rules have been broken but because fear of unexplained demotion suppresses resistance. This suggests that the primary product of reputation systems is not quality control but normalized deference, and that lost transparency is not a bug but a feature essential to sustaining platform authority over distributed workforces.

Trust Arbitrage

Platforms exploit asymmetric accountability by downgrading user reputations to transfer risk onto individuals while consolidating trust in the platform’s brand, as seen in marketplace apps like Etsy or Fiverr, where seller ratings affect visibility and earnings without appeal. The platform leverages its monopoly on reputation logic to present itself as a neutral arbiter even as it manipulates scoring to reduce moderation costs and increase user dependency. The uncomfortable point is that opacity isn’t a failure of governance but a strategic enabler: it lets platforms extract user compliance and data while avoiding the burdens of procedural fairness, turning trust into a speculative asset they alone can trade.