Is FTC Case Law Enough to Combat AI Bias?

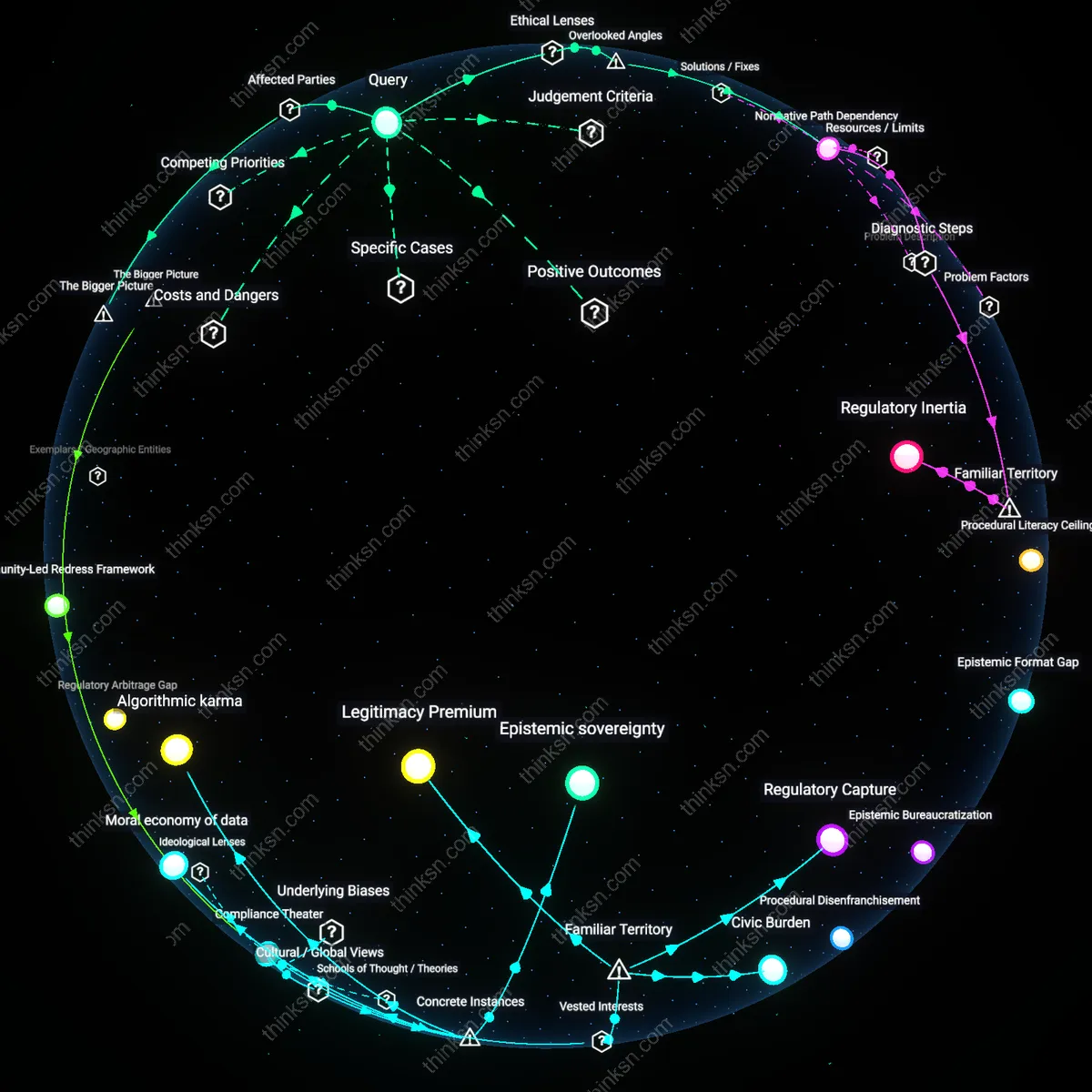

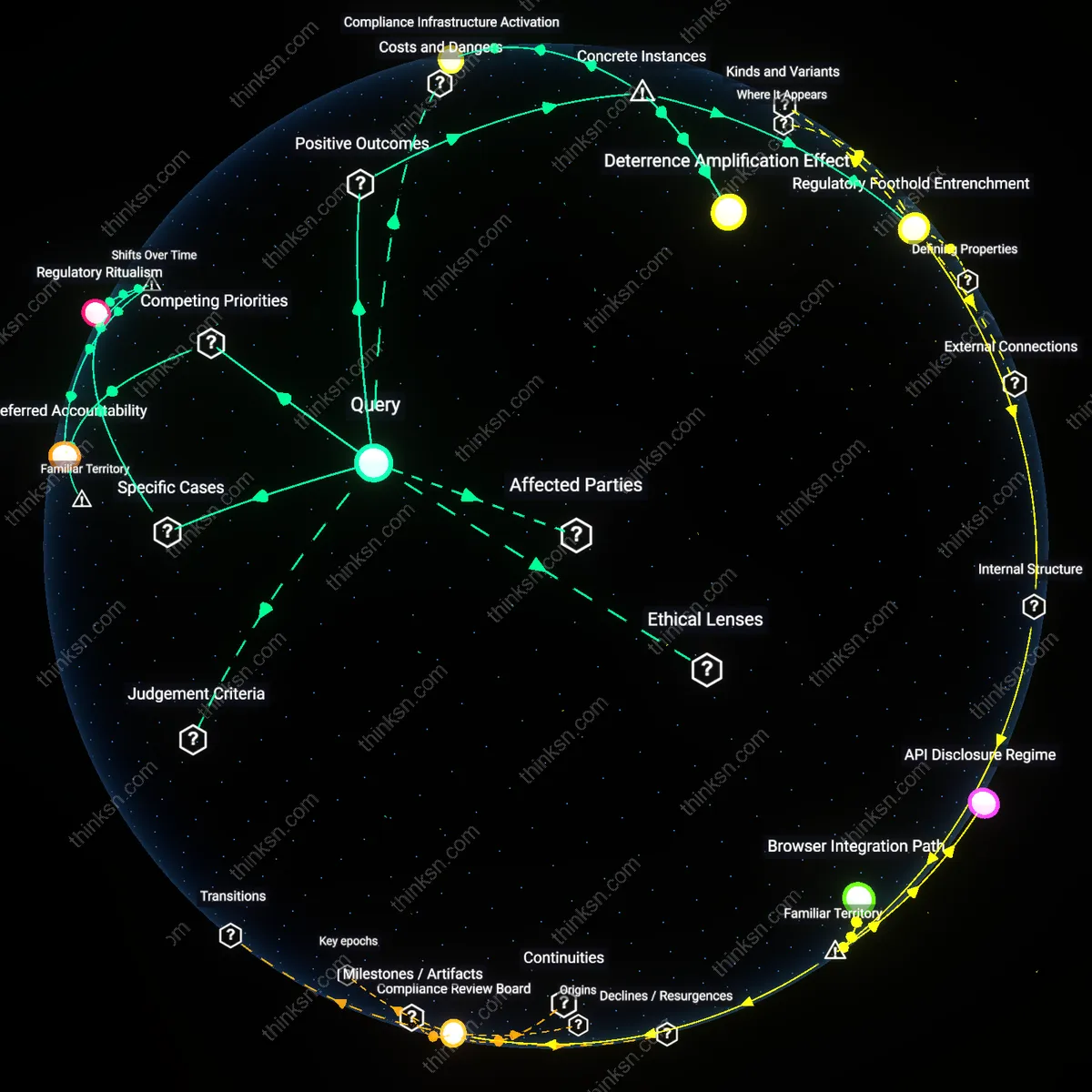

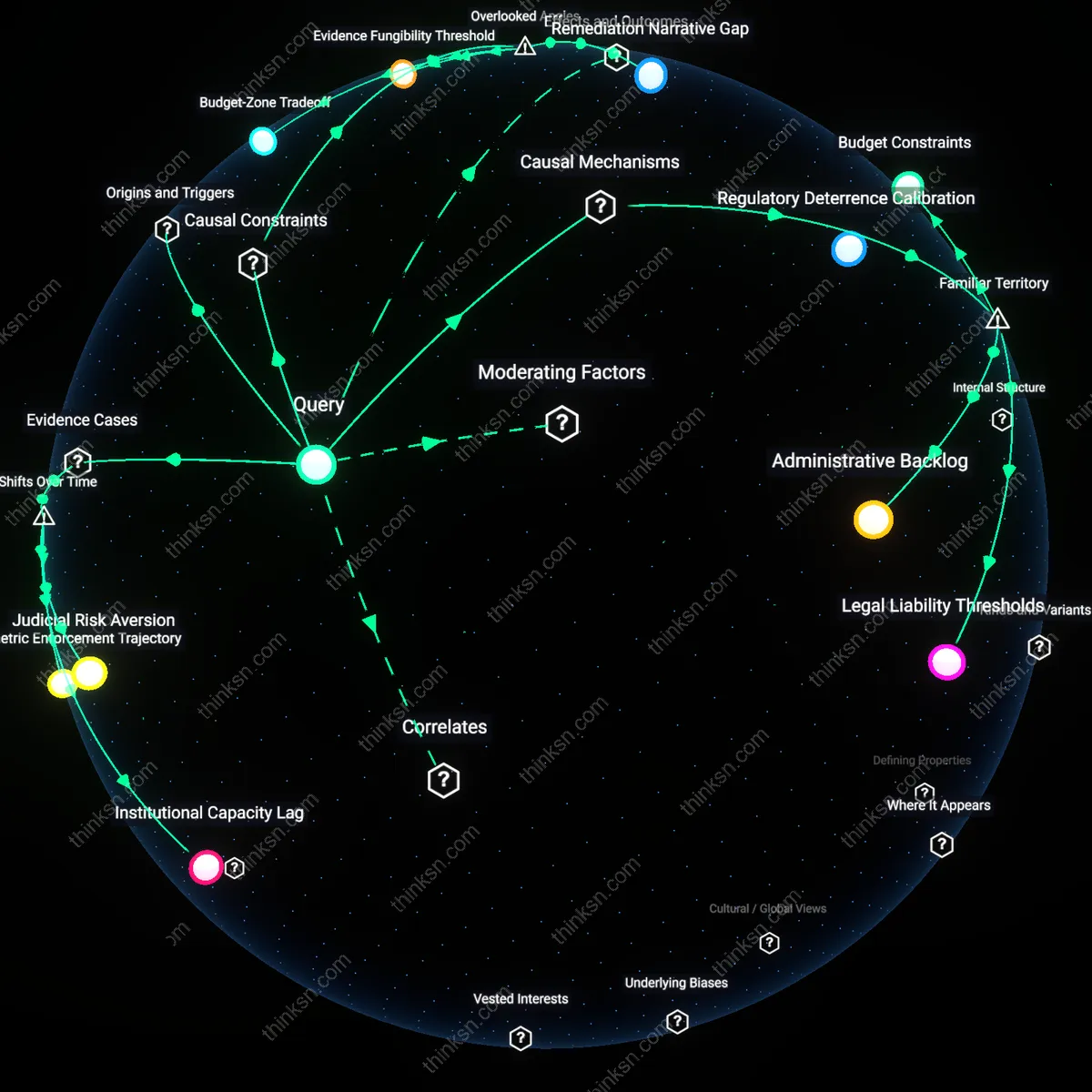

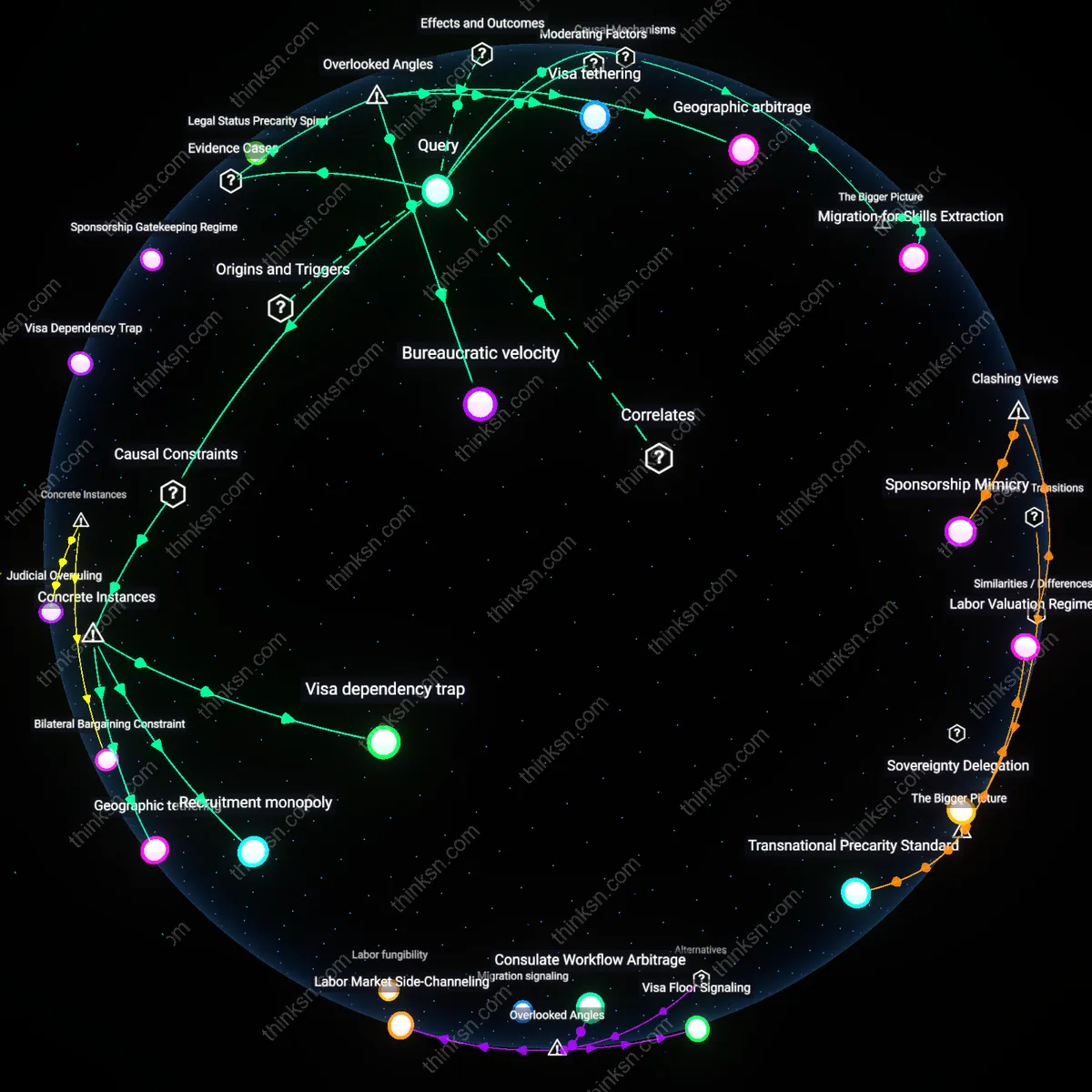

The analysis identifies three key thematic connections.

Key Findings

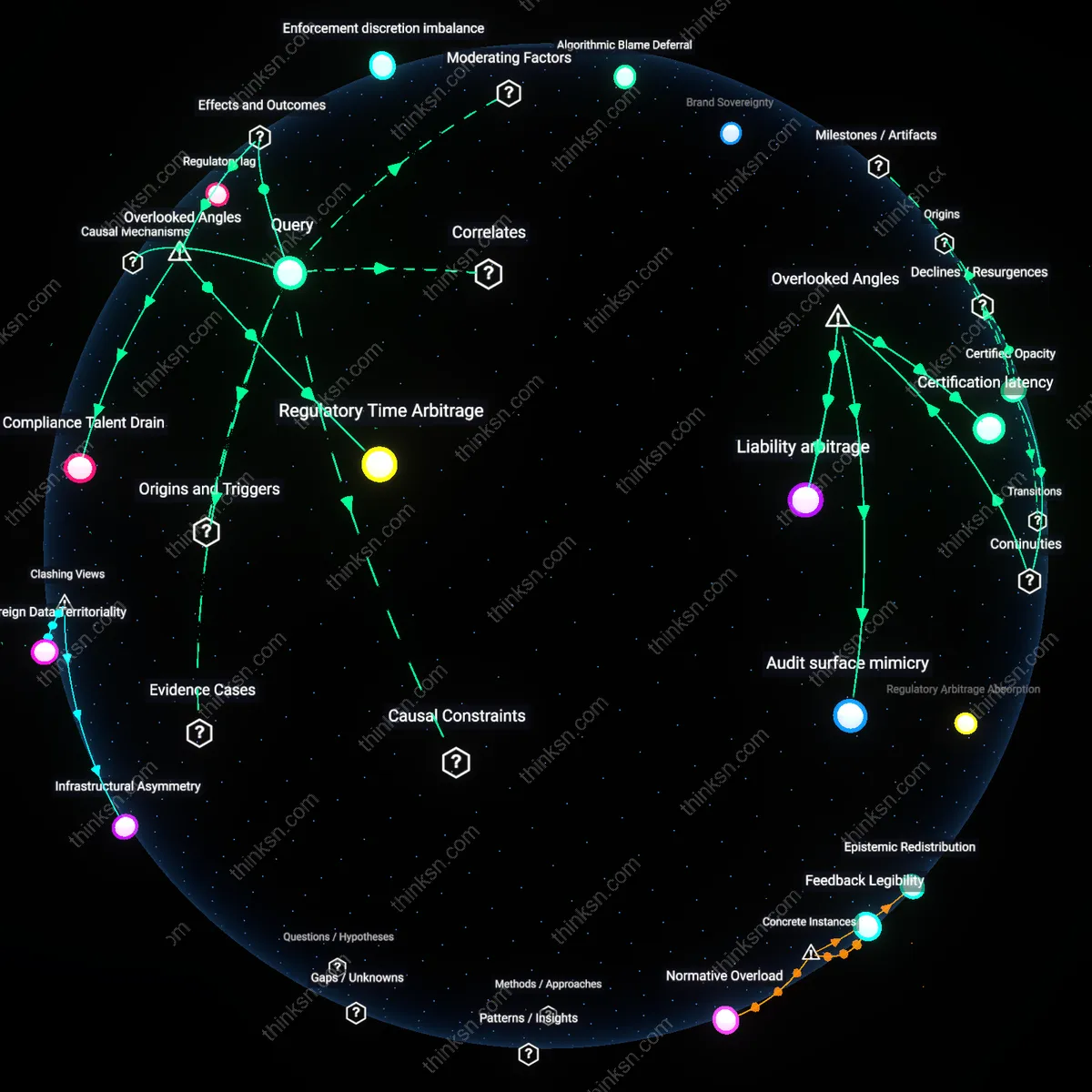

Regulatory Asymmetry

The U.S. reliance on case-by-case FTC enforcement for AI bias systematically advantages wealthy corporations: only well-resourced firms can absorb the legal unpredictability and enforcement lag, while smaller developers and marginalized user groups bear disproportionate harm. Large tech firms use in-house compliance teams, lobbying influence, and slow enforcement timelines to shape norms after the fact, whereas under-resourced startups and communities lack the capacity to anticipate or contest enforcement actions. The result is a de facto barrier to equitable market participation. The non-obvious consequence is that enforcement unpredictability functions not as a corrective mechanism but as a stabilizing force for incumbent power, privileging organizational resilience over fairness. The connection holds because the FTC’s resource-constrained, reactive posture is mismatched with the scale of algorithmic diffusion, which lets dominant firms treat enforcement as a cost of doing business rather than a constraint.

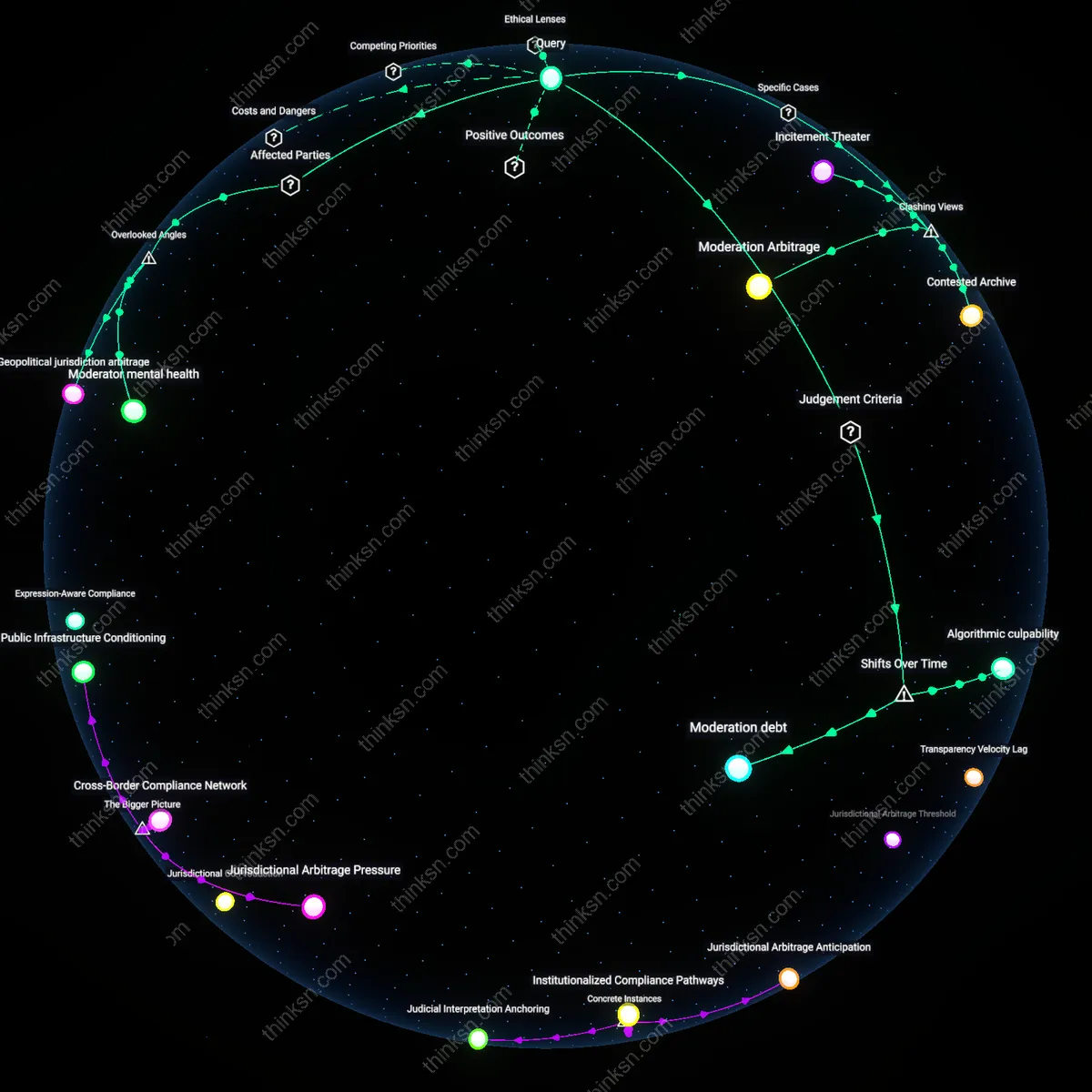

Epistemic Burden Displacement

The FTC’s case-by-case model for addressing AI bias effectively transfers the cognitive and evidentiary burden of proving harm onto marginalized users and public-interest advocates, while insulating corporate developers from the epistemic costs of accountability. This dynamic is rooted in liberal legal doctrines of standing and materiality, which assume symmetric access to information. Unlike ex ante regulatory regimes, this approach requires harmed individuals not only to detect algorithmic discrimination but also to interpret, contextualize, and legally substantiate it, even though the systems involved are opaque or proprietary. That task demands technical fluency, data access, and legal support, all of which are typically concentrated in well-resourced institutions. The overlooked reality is that the legal system treats knowledge *of* harm as the injured party’s responsibility to produce, not the developer’s to prevent. Ignorance becomes a defensible corporate position, and epistemology becomes a hidden variable of regulatory equity. This shifts the discussion from enforcement capacity to the political economy of knowing, revealing how legal epistemology can function as a covert enabler of structural advantage.

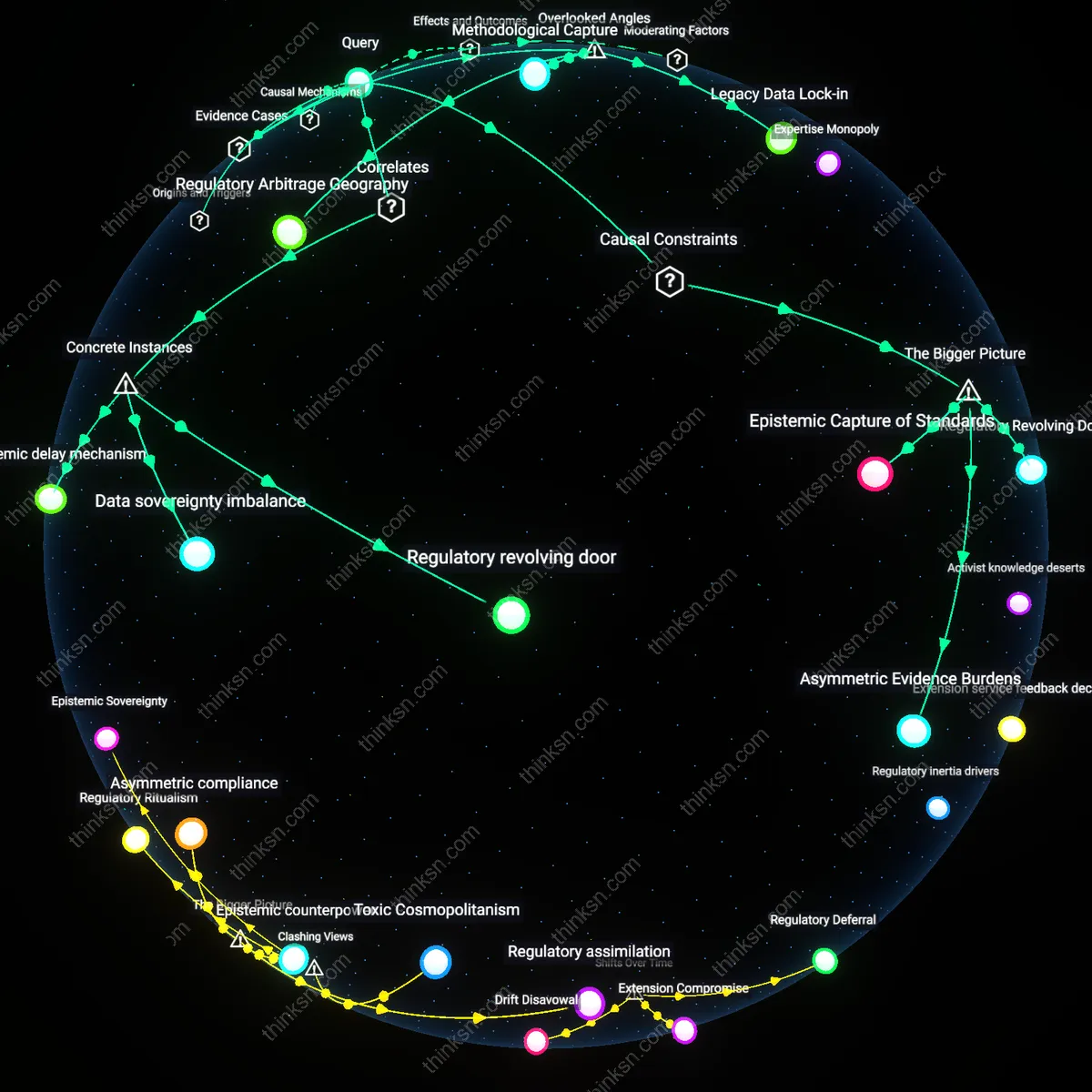

Normative Path Dependency

By resolving AI bias issues through isolated FTC enforcement actions rather than codified standards, the U.S. regulatory approach allows corporate practices to incrementally define the boundaries of 'acceptable' AI behavior, so that enforcement outcomes retroactively legitimize existing power configurations in data governance. Each settlement becomes a tacit precedent that shapes industry expectations not through general rules but through precedent-by-exception: the absence of a ruling against a practice implies permissibility until the practice is challenged, which rewards firms that can outlast or outmaneuver scrutiny. Crucially, this process entrenches early-adopter norms, especially in domains like predictive analytics or automated underwriting, where repeated unchallenged deployments crystallize into de facto standards and make future systemic reform politically and economically costly. This dimension is rarely acknowledged because most critiques assume that regulation precedes practice. In fact, regulatory latency allows practice to manufacture its own normalization, turning enforcement scarcity into a normative forge.