Why Do Gaps in Health App Regulation Hide the Privacy Costs of Convenience?

Analysis reveals 8 key thematic connections.

Key Findings

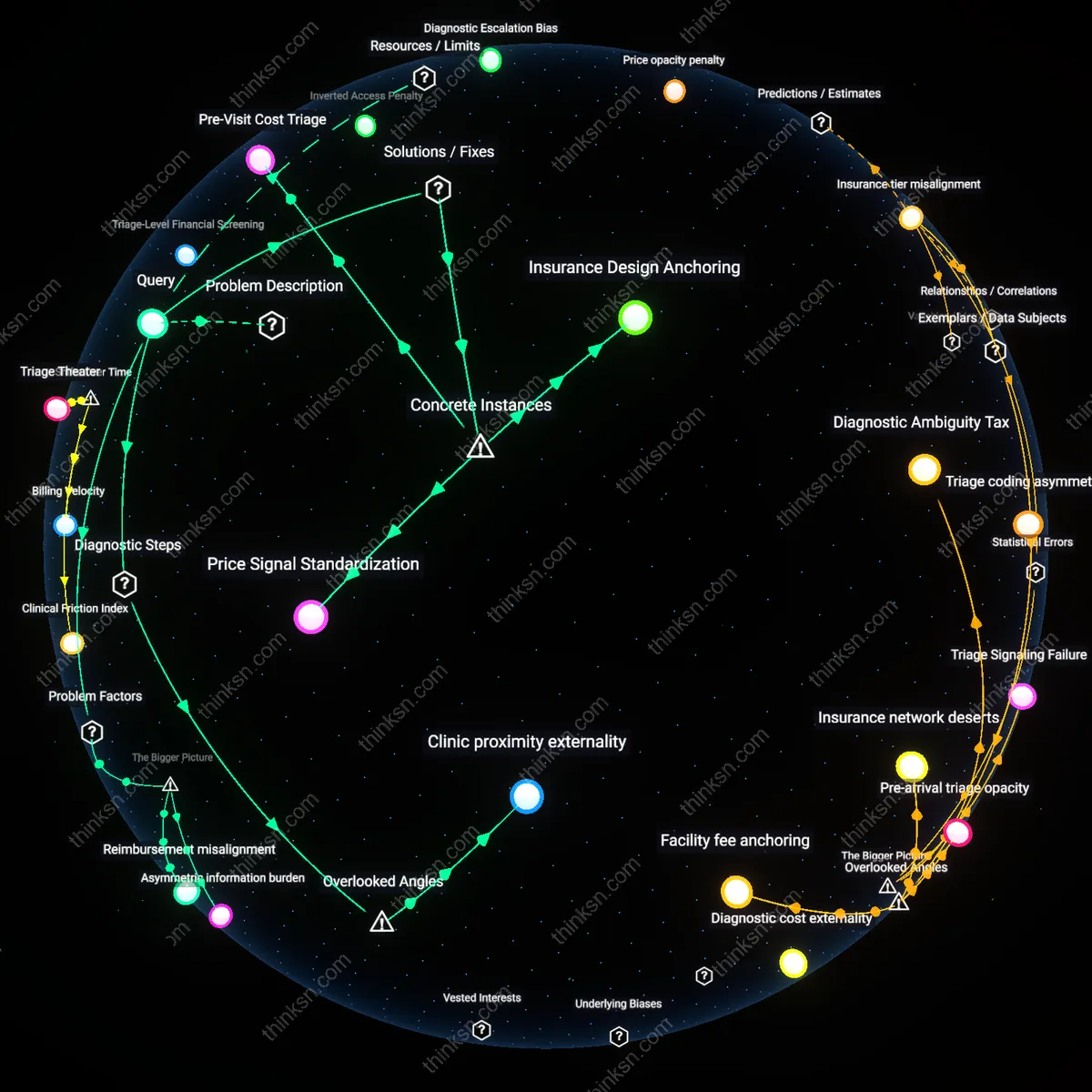

Data philanthropy illusion

Health app users unknowingly participate in a culture of implicit data donation, where interfaces frame consent as civic contribution—such as 'helping research'—which obscures the commercial repurposing of personal metrics. Behavioral design tactics, like celebratory prompts after data sharing or badges for consistency, borrow from gamified altruism platforms to create a psychological contract of goodwill, masking downstream sales to pharmaceutical prospectors or actuarial modelers. What remains hidden is that the perceived trade-off is not between convenience and privacy, but between emotional gratification and systemic data extraction, shifting risk accountability onto users’ moral self-conception. This reframes privacy erosion not as negligence but as a socially engineered default shaped by affective interface design.

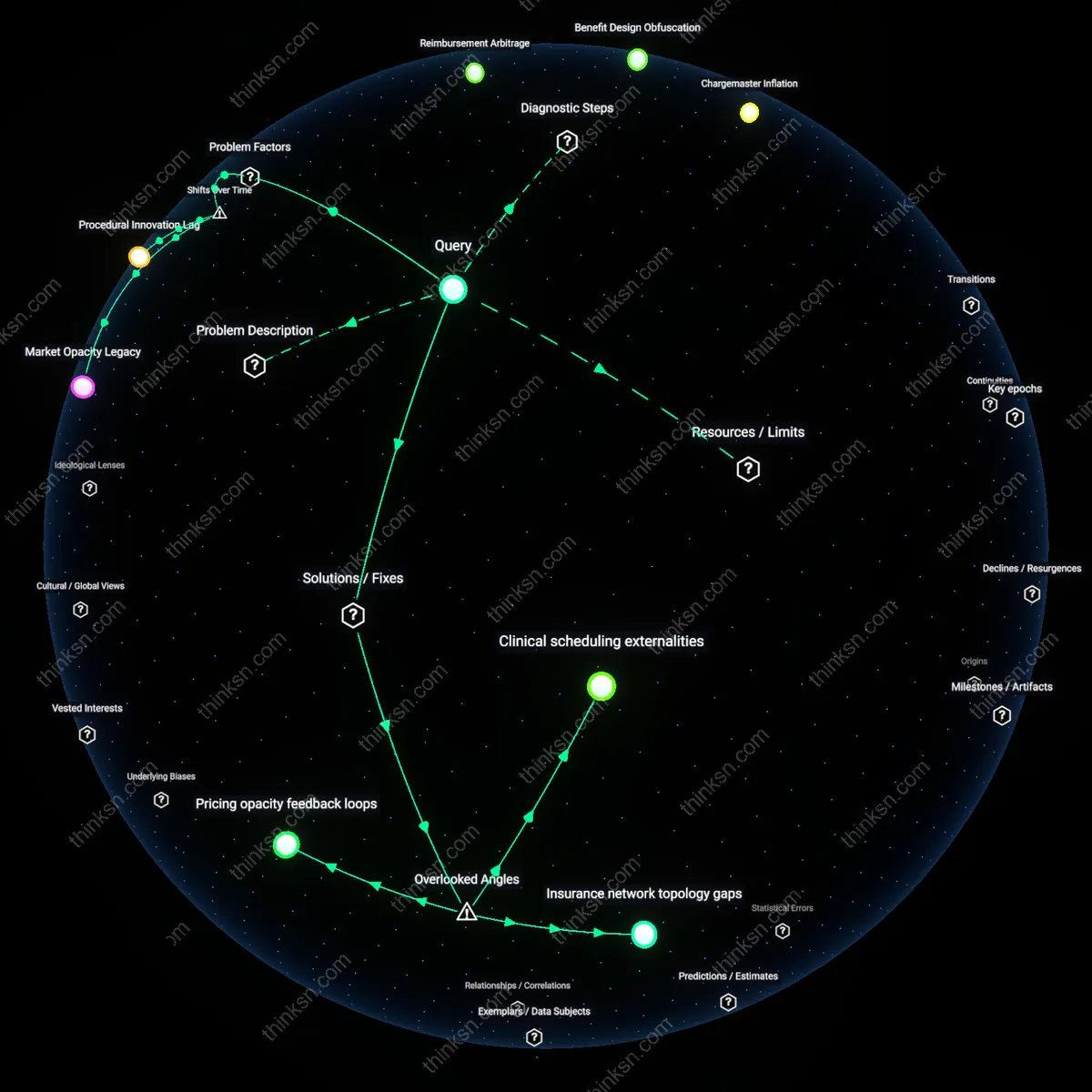

Diagnostic ambiguity premium

The more ambiguous a health app’s medical classification (neither clearly wellness nor clinical), the greater the regulatory blind spot that enables unchecked data monetization, with developers intentionally designing borderline functionality to exploit jurisdictional gray zones. Apps that straddle categories, like fertility trackers that suggest ovulation windows while disclaiming any diagnostic purpose, are shielded from FDA scrutiny yet can still feed reproductive data into insurance underwriting markets. This strategic indeterminacy is not accidental but a calculated product design doctrine that treats regulatory thresholds as boundaries to be skirted rather than as safeguards. The overlooked mechanism is that ambiguity itself becomes a monetizable asset: the lack of clear categorization is not a flaw but a feature that generates privacy gaps by design.

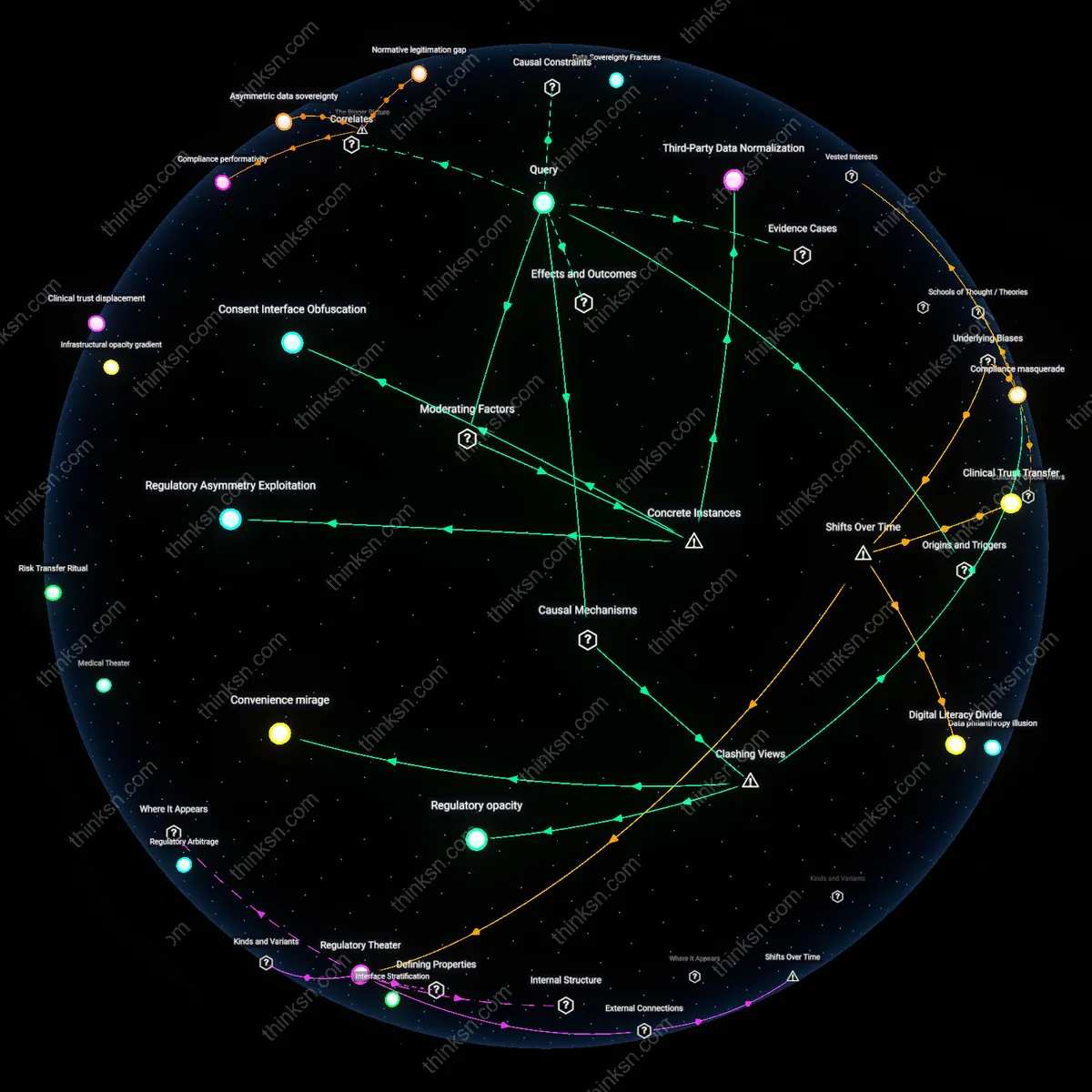

Regulatory opacity

Privacy gaps in health-app regulation obscure user understanding by institutionalizing ambiguous data standards. These standards allow app developers to legally classify sensitive biometrics as non-PHI, which bypasses HIPAA safeguards and creates a false impression of systemic protection where none exists. The mechanism operates through the HHS’s narrow jurisdictional interpretation of ‘covered entities,’ which excludes most consumer-facing apps despite their handling of intimate health data. The exclusion is non-obvious because users assume that health data is universally protected in the way hospital records are. In reality, fragmentation among FDA, FTC, and OCR jurisdictions enables deliberate regulatory invisibility.

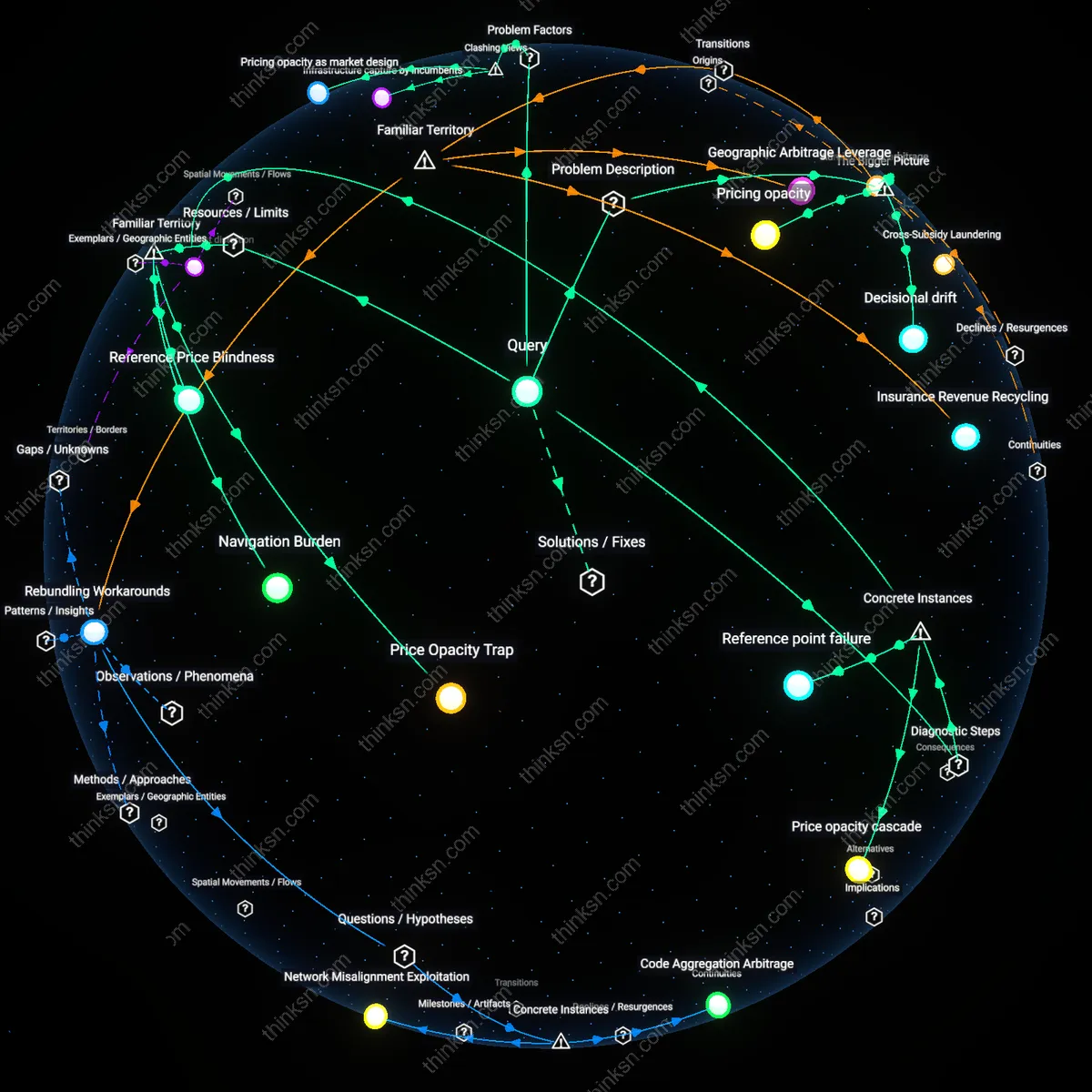

Convenience mirage

Users misunderstand the data-risk trade-off not because they are uninformed, but because health apps engineer a functional illusion of necessity. Features like instant symptom checkers or medication reminders are designed to feel indispensable despite being decoupled from clinical validation, which makes data sharing appear trivial by comparison. The illusion operates through behavioral design patterns, such as frictionless onboarding and just-in-time consent prompts, that exploit cognitive biases during moments of health anxiety. This mechanism challenges the dominant narrative of user consent as rational choice by revealing how perceived medical utility is manufactured to justify extraction.

Compliance masquerade

Health apps preserve user misunderstanding by adopting the aesthetics of regulation, such as privacy policies, toggle buttons, or GDPR-style banners, without adhering to enforceable health-data fiduciary duties. The result is a symbolic compliance that satisfies user expectations of safety while legally permitting unrestricted data brokerage. This mimetic display works because the FTC enforces against deceptive practices only after harm occurs, not pre-emptively. It contradicts the intuitive belief that visible governance cues reflect actual data stewardship, exposing how regulatory theater substitutes for substantive oversight.

Regulatory asymmetry exploitation

The 2019 Flo Health incident, in which the menstrual tracking app was found to have shared user data with Facebook despite privacy assurances to its users, demonstrates that lax enforcement around HIPAA-exempt digital health tools enables companies to leverage jurisdictional blind spots for data exploitation. Because the app operated outside traditional healthcare regulation while handling sensitive reproductive data, developers could obscure data flows behind UX design that prioritized frictionless sharing over informed consent, thereby presenting the trade-off as a technical default rather than a negotiable risk. This illustrates how jurisdictional fragmentation between medical and consumer software amplifies data extraction by letting firms normalize risky sharing under the guise of convenience, a mechanism often overlooked because acceptance of a privacy policy appears voluntary rather than structurally coerced.

Consent interface obfuscation

Singapore’s TraceTogether app, launched in 2020 and initially promoted as a Bluetooth-based contact tracing tool with strong privacy safeguards, was revealed in January 2021 to allow data access for police investigations, a shift enabled by vague consent language buried in update notifications rather than standalone user re-authorization. The change exploited the high-trust public health context to reframe data reuse as a continuation of service, not a new intrusion, thereby leveraging crisis-era acceptance to dampen resistance to expanded surveillance. This case reveals how state-backed health apps can use emergency legitimacy to obscure trade-offs, making data repurposing feel like operational necessity rather than policy choice. It is a non-obvious manipulation of how trust accumulates and is spent over time.

Third-party data normalization

The integration of Google Fit into India’s Ayushman Bharat digital health ecosystem in 2022 enabled seamless access to government health records through a consumer platform not subject to equivalent data protection laws, normalizing the inclusion of ad-tech-affiliated infrastructures in sensitive health transactions. Because users perceived Google’s interface as a mere convenience layer—not a data intermediary—few recognized that health data synced through the platform fell under Google’s broader data governance model, which permits cross-service profiling. This illustrates how the technical coupling of public health systems with dominant tech platforms dampens perceived risk by assimilating medical data into everyday digital routines, a silent normalization that shifts behavioral expectations without overt policy change.