Do FCC Experts Favor Big Tech in Standards?

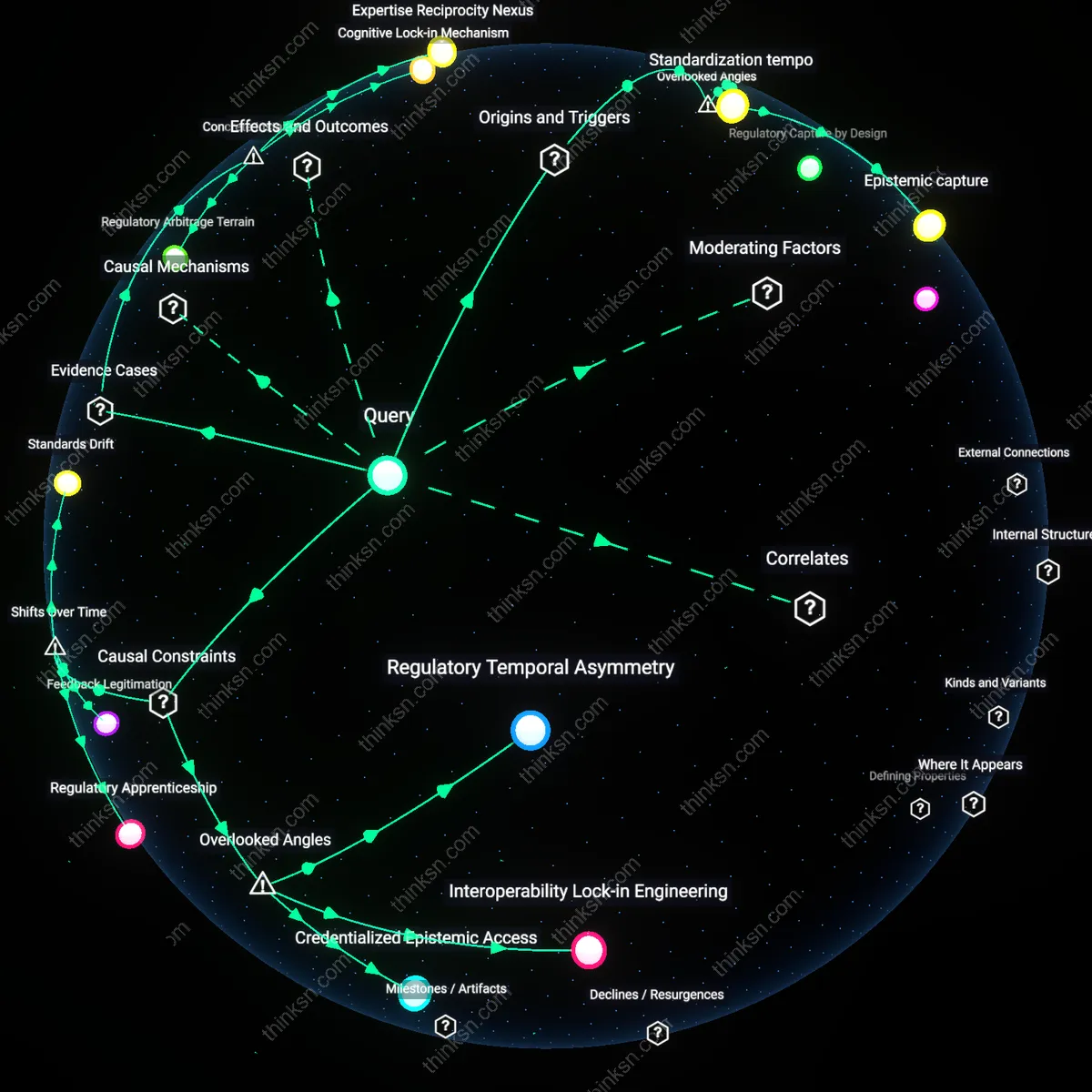

Analysis reveals 12 key thematic connections.

Key Findings

Epistemic Capture

The inclusion of tech-sector experts in FCC advisory panels systematically entrenches the cognitive frameworks of dominant firms by treating their operational assumptions as technical necessities, not contested choices. These experts, often drawn from large platforms with regulatory compliance teams, frame issues like spectrum allocation or data interoperability through risk-averse, scale-dependent logics that make innovation appear inherently disruptive to network stability—thus privileging firms with existing infrastructure. This is an overlooked angle because the bias is not in overt lobbying but in the quiet normalization of a single epistemic regime, where what counts as 'feasible' or 'secure' is defined by those who already meet those standards. The result is regulation that appears neutral but de facto penalizes alternative architectures only because they don't fit a preordained model of how technology should evolve.

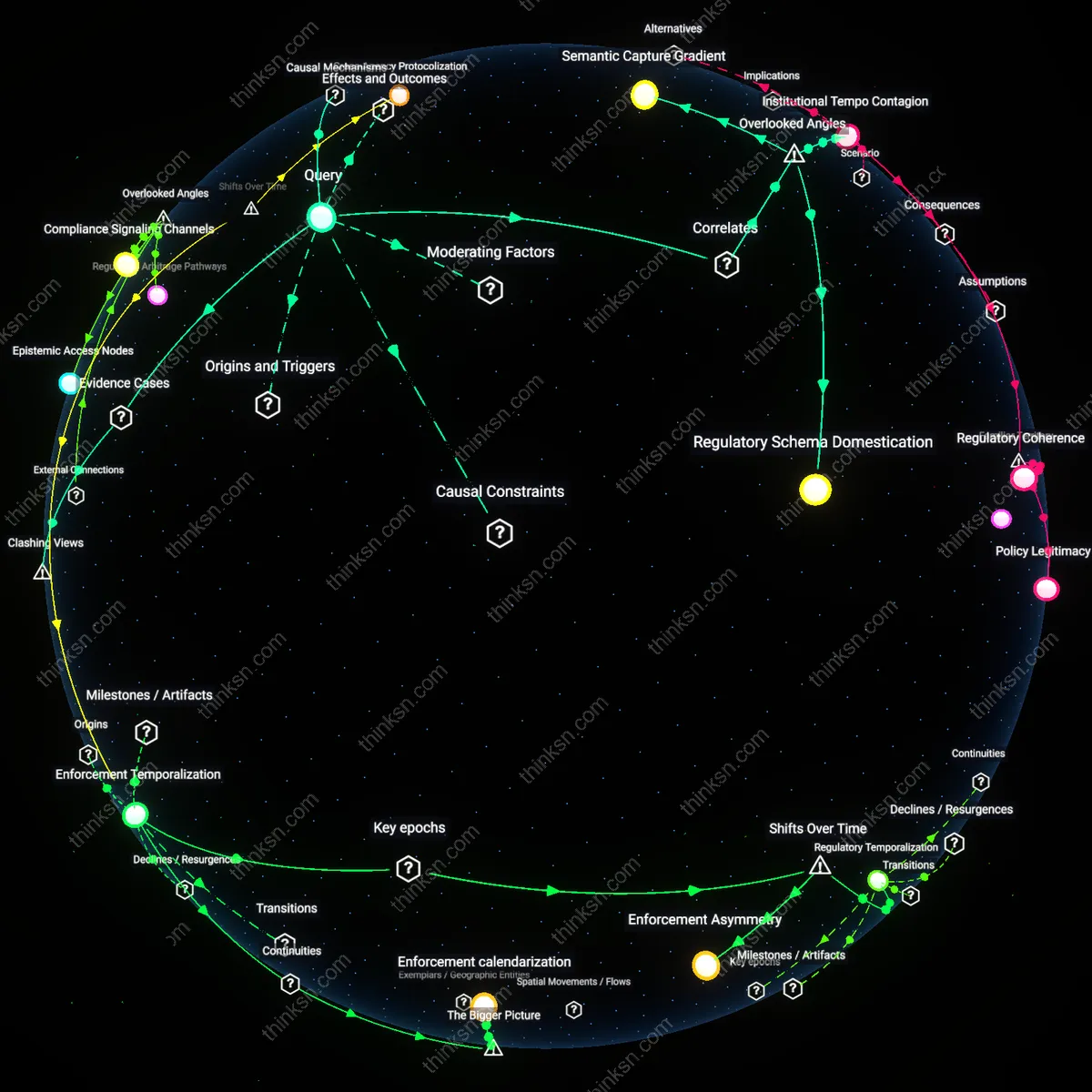

Standardization Tempo

Established tech firms benefit from advisory influence that calibrates the pace of standard-setting to match their internal development cycles, effectively crowding out faster, leaner entrants. Experts from major companies who sit on FCC panels subtly delay or stagger compliance deadlines, advocate for extended transition periods, and demand extensive pilot testing. These tactics align with their own legacy system upgrade timelines but impose prohibitive wait-and-see costs on startups. This mechanism is overlooked because attention focuses on the substance of standards, not the rhythm of their rollout. Yet timing is a form of gatekeeping, and controlling the tempo allows incumbents to treat regulatory milestones as strategic inflection points rather than existential threats. The systemic driver is not just influence over content, but over the clock itself.

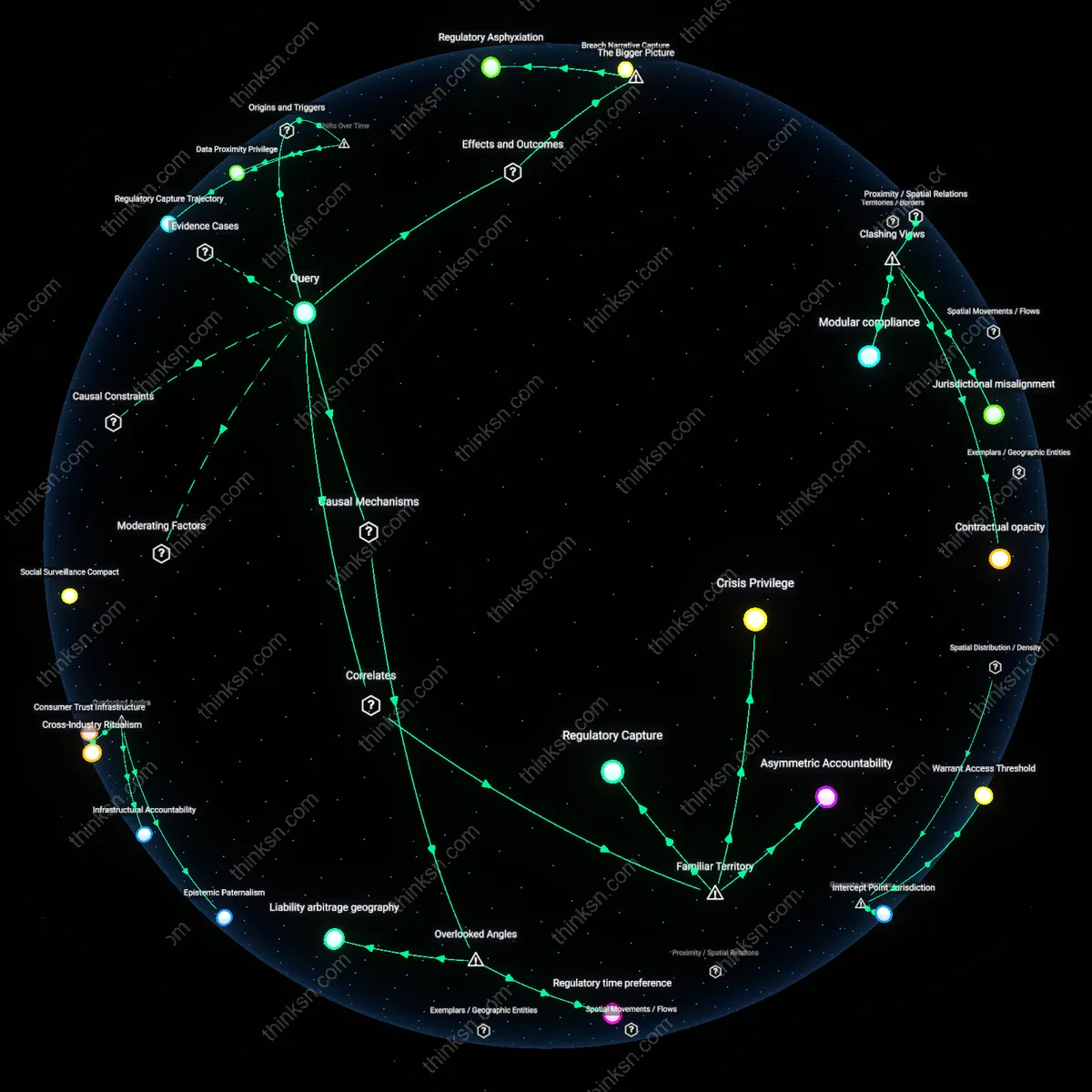

Compliance Surface Debt

Regulatory standards shaped by incumbent tech experts embed hidden compliance burdens—such as reporting formats, audit trails, or infrastructure redundancies—that favor firms with pre-existing governance machinery. These requirements appear universally applicable but are in fact scaled to organizations with legal, security, and engineering teams already structured to absorb such tasks, while imposing disproportionate overhead on new entrants who must build compliance capacity before achieving scale. The overlooked dynamic is that 'expert'-driven standards often encode the internal bureaucracy of large firms as external obligations, mistaking organizational maturity for technical necessity. This creates a form of technical debt not in code, but in administrative architecture—which new firms must repay before they can even compete.

Regulatory Temporal Asymmetry

Tech-sector experts on FCC panels shape procedural timelines that compress standard-setting cycles, privileging firms with pre-existing compliance infrastructure. Because established companies can rapidly adapt to accelerated deadlines using dedicated regulatory affairs teams and legacy data systems, they clear temporal hurdles that overwhelm new entrants lacking synchronized legal-engineering workflows. This bottleneck—temporal synchronization between rule publication and operational readiness—is rarely acknowledged in equity assessments of rulemaking, yet it systematically converts calendar time into a barrier to entry. The non-obvious insight is that speed itself, not just content, becomes a regulatory weapon when technical experts design processes that mirror their own organizational rhythms.

Interoperability Lock-in Engineering

Advisory experts embed implicit assumptions about network architecture into FCC technical standards, requiring new entrants to achieve seamless interoperability with dominant firms’ legacy protocols. Because these specifications are treated as neutral engineering necessities rather than strategic choices, they conceal path dependencies that favor incumbents who control foundational layers like spectrum allocation maps or signaling frameworks. Startups must reverse-engineer de facto standards that were never formally codified, creating a hidden dependency on incumbent design logic. This bottleneck reveals that the real constraint isn’t access to technology, but forced alignment with embedded architectural hegemonies masked as technical consensus.

Credentialized Epistemic Access

FCC advisory participation is conditioned on recognized technical credentials—such as IEEE affiliations or prior standardization experience—which function as epistemic gatekeepers excluding alternative knowledge forms like community network practices or open-source governance models. Established firms systematically cultivate these credentials across their engineers, ensuring their norms dominate the epistemology of what counts as 'reliable' input. The causal bottleneck is not influence per se, but the preemptive narrowing of who qualifies as a 'valid' expert, thereby foreclosing regulatory imagination around non-corporate innovation trajectories. This credential filter, though formally neutral, entrenches an unrecognized epistemic monopoly that shapes standards before debate begins.

Regulatory Apprenticeship

Tech-sector experts on FCC advisory panels institutionalize legacy infrastructure assumptions by normalizing practices from the 1996 Telecommunications Act era, when regional Bell operating companies shaped national broadband rollout. The resulting path dependencies render newer, agile deployment models, such as decentralized wireless mesh networks, non-compliant by design. This mechanism locks in advantage not through explicit exclusion but through tacit technical standardization shaped by the incumbents who helped define the original rollout. The non-obvious insight is that advisory influence operates not via lobbying but through time-contingent epistemic alignment: the standardization practices of the post-1996 era became indistinguishable from public-interest norms, making deregulatory innovation appear as technical deviation rather than competitive advancement.

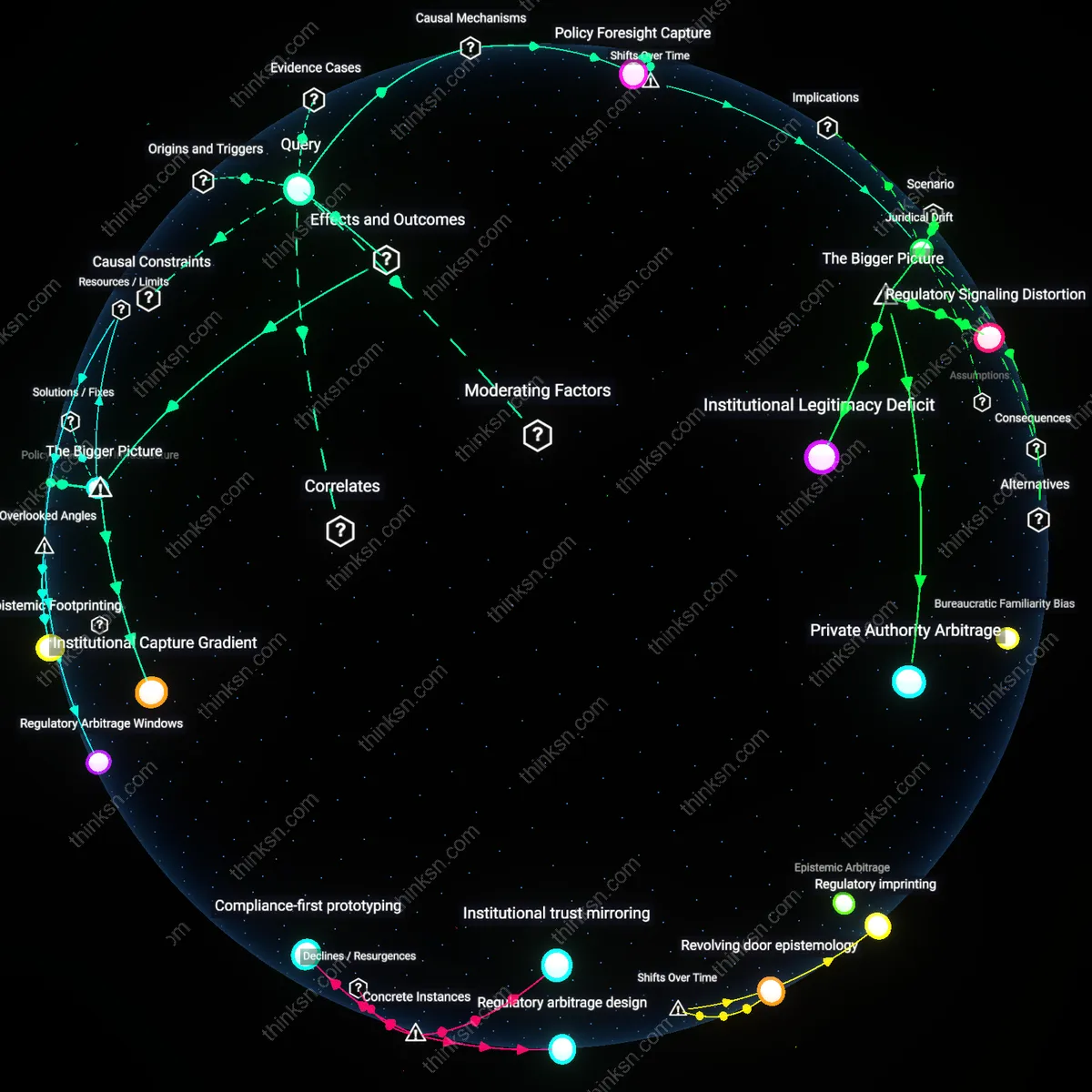

Feedback Legitimation

After the Federal Advisory Committee Act of 1972 formalized private-sector participation in federal advisory bodies, FCC technology panels began selecting experts predominantly from firms with existing spectrum holdings. This created a feedback loop in which prior regulatory compliance became the implicit credential for future rule design. As a result, post-2000 spectrum allocation standards increasingly required costly interoperability certifications, originally developed for 3G networks, that new entrants could not afford, while established carriers leveraged prior investments as regulatory capital. The shift from pre-1990 advisory bodies focused on public utility principles to post-1990 market-optimization panels reveals how legitimacy, once earned through compliance, became a self-renewing prerequisite for influence, disguising structural bias as technical continuity.

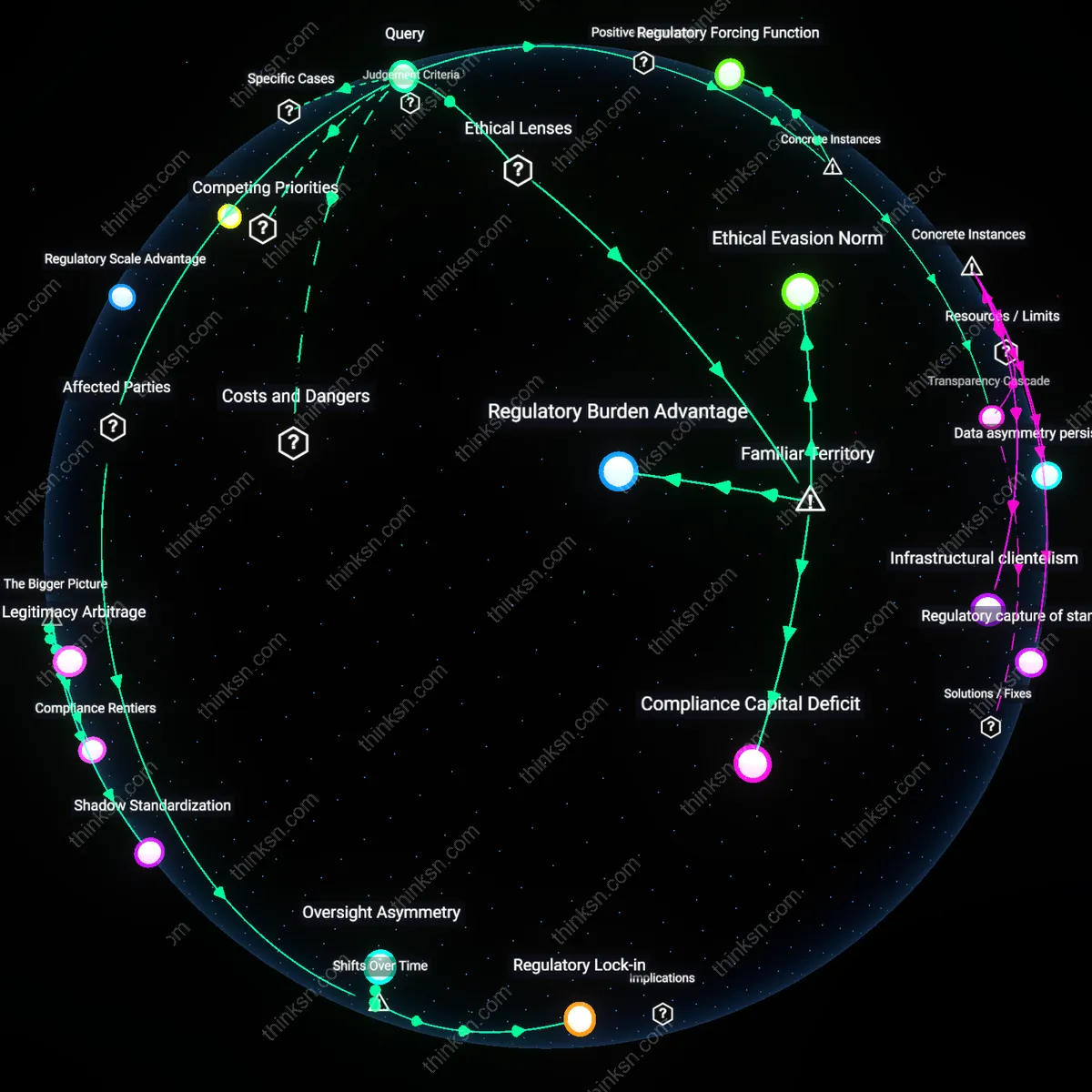

Standards Drift

Beginning in the mid-2010s, FCC technocrats increasingly populated advisory roles with engineers from dominant platform firms like Google and Apple. These engineers contributed to rulemaking on digital television transition and 5G wireless coordination, but oriented standards around software-defined networking protocols that favored cloud-integrated ecosystems. This redefined compliance not around physical infrastructure ownership, as in earlier 20th-century regulation, but around data orchestration capacity. That capability was accumulated slowly over the 2010–2020 decade and is thus inaccessible to startups despite lower entry costs in hardware. The overlooked consequence is that temporal advantage in data architecture accumulation has replaced spectrum access as the decisive bottleneck, with standardization now drifting incrementally toward invisible, code-level requirements that mirror the developmental trajectory of dominant firms’ internal systems.

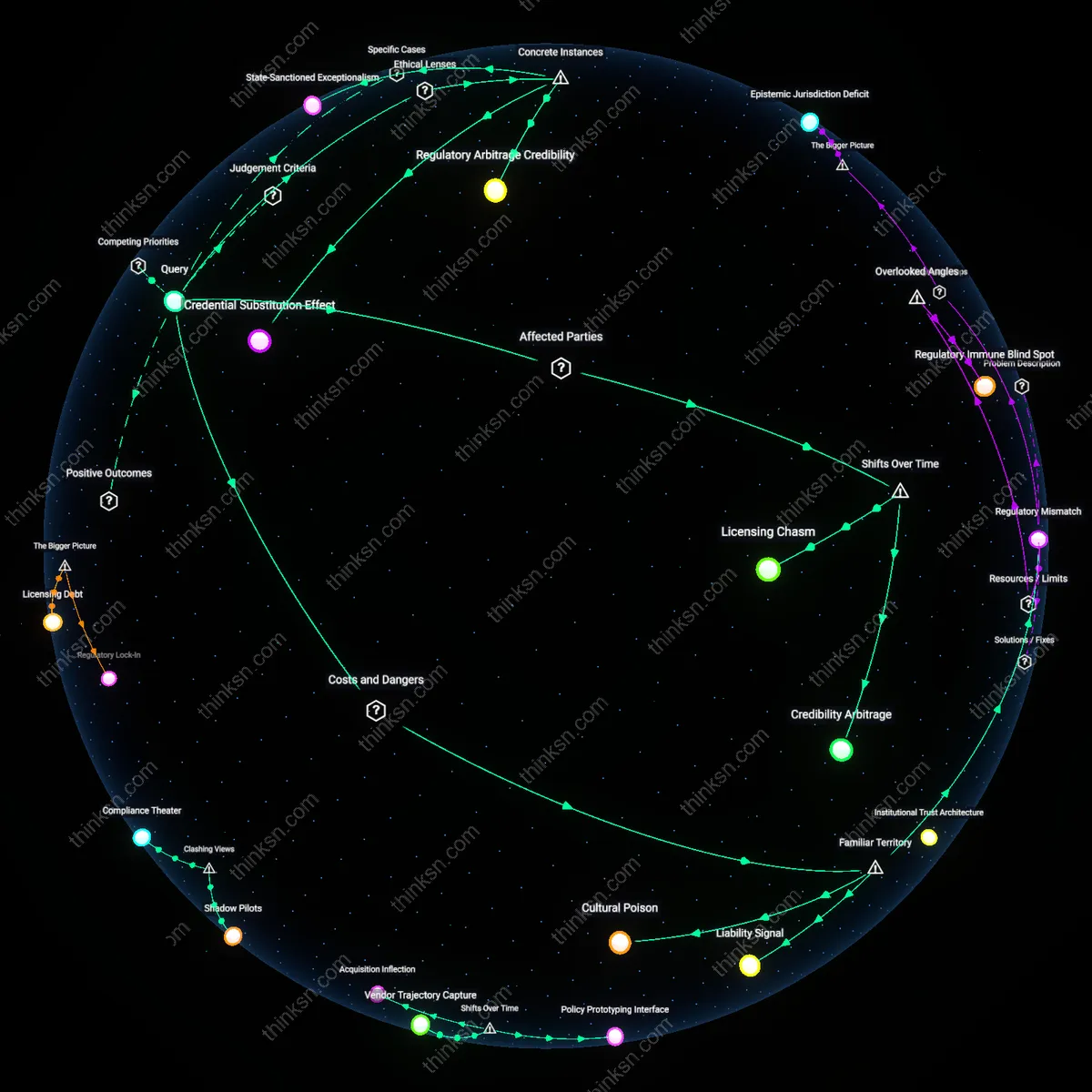

Regulatory Arbitrage Terrain

When tech executives from Google, Apple, and Qualcomm dominated the FCC’s Technological Advisory Council during the 2010–2015 spectrum reform debates, their influence steered technical standards toward systems requiring extensive engineering resources and pre-existing infrastructure, such as Licensed Assisted Access (LAA) in the unlicensed 5 GHz band. This privileging of complexity and integration with legacy networks raised barriers for startups lacking spectrum portfolios or RF design teams, turning regulatory standardization into a terrain where compliance became a form of competitive exclusion. The case reveals how expertise structures in advisory bodies do not merely reflect technological realities but actively construct them in ways that reward organizational scale and prior access.

Cognitive Lock-in Mechanism

During the FCC’s 2017 deliberations on the Citizens Broadband Radio Service (CBRS), technical advisors drawn primarily from incumbent carriers and hardware vendors normalized assumptions about interference modeling that favored centralized databases and tiered access—design choices aligned with existing network architectures. These assumptions, embedded in the Spectrum Access System (SAS) standards, disadvantaged distributed or mesh-based technologies that startups like Federated Wireless had to retrofit into a framework optimized for hierarchical control. The result was a subtle but decisive cognitive lock-in, where the framing of technical risk during advisory panels pre-empted alternative designs before they reached the standardization stage.

Expertise Reciprocity Nexus

The repeated participation of engineers from Intel and Cisco in FCC working groups on millimeter wave (mmWave) policy prior to the FCC's 2016 Spectrum Frontiers order created a feedback loop where proprietary test results and channel modeling assumptions were adopted as public technical benchmarks, effectively positioning their R&D pipelines as de facto regulatory templates. This reciprocity, in which private research becomes public reference, allowed early movers to define what counts as 'verified performance,' shutting out smaller innovators who lacked both measurement infrastructure and access to the informal networks shaping advisory consensus. The dynamic exposes how the institutional credibility of experts functions not just as advisory input but as a covert standard-setting vector.