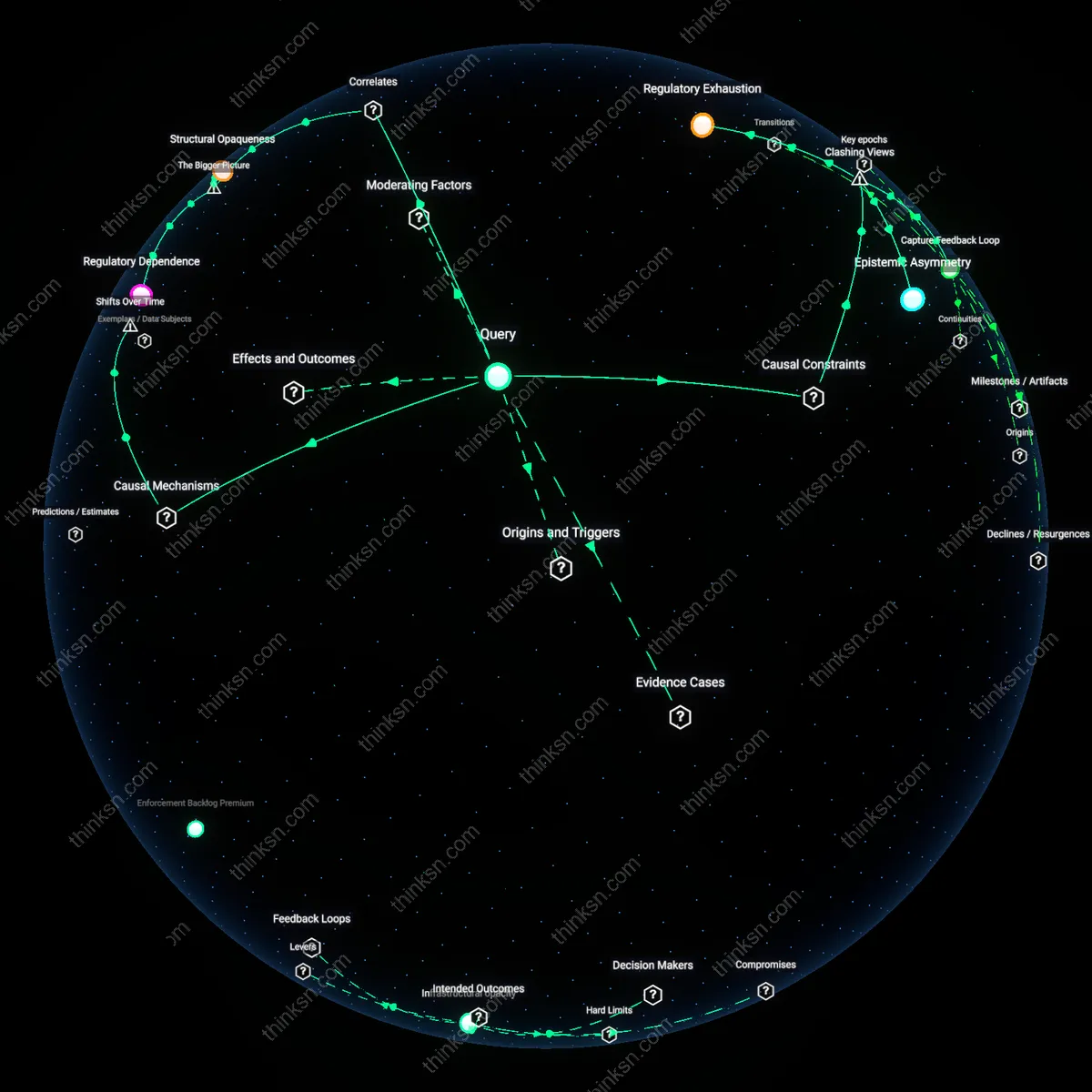

Regulatory Epistemic Dependence

Systematic regulatory reliance on industry-generated technical data dates to U.S. federal pesticide regulation under the Federal Insecticide, Fungicide, and Rodenticide Act (FIFRA) of 1947. The USDA, and after 1970 the EPA, lacked the in-house capacity to assess chemical toxicity and environmental impact, so they accepted test results directly from manufacturers. This dependence became institutionalized as the volume and complexity of data grew, privileging firms with the resources to produce standardized studies compliant with Good Laboratory Practice (GLP), which smaller entrants could not afford. The non-obvious consequence was not mere influence but the structural delegation of epistemic authority: those who could fund evidence came to control what counts as valid evidence, embedding asymmetry into regulatory legitimacy itself.

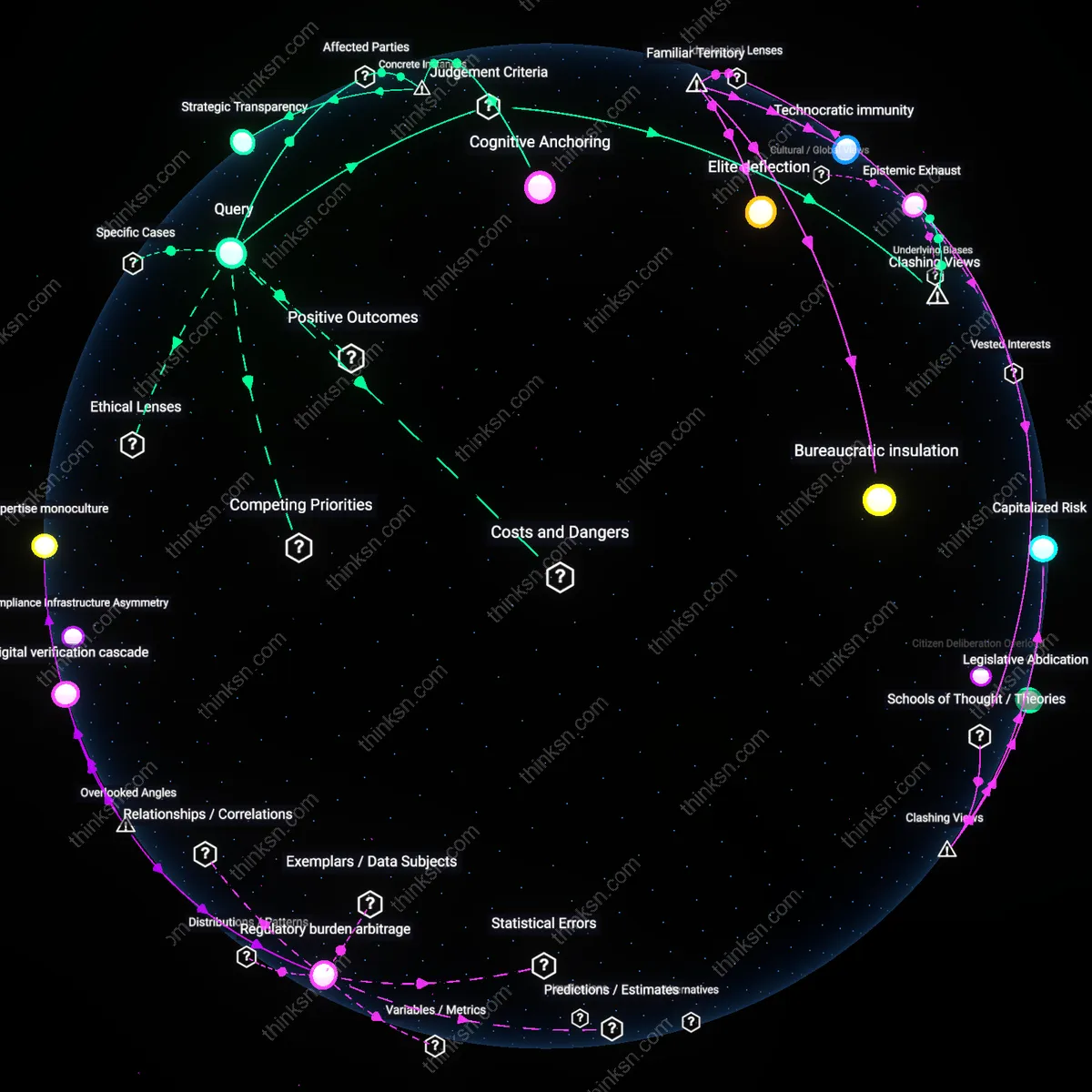

Compliance Infrastructure Asymmetry

Beginning in the 1980s, international regulatory harmonization efforts such as the OECD’s Mutual Acceptance of Data (MAD) framework standardized technical testing protocols across member states. Under MAD, non-clinical safety data for chemical, pesticide, and pharmaceutical approval must be accepted by other member countries when it was generated in accordance with OECD Test Guidelines and the principles of Good Laboratory Practice (GLP), which in practice made GLP compliance a precondition for submission. This global standardization favored multinational corporations that could amortize the high fixed costs of building and maintaining GLP-compliant labs across jurisdictions, while smaller firms faced prohibitive duplication or exclusion. The overlooked dynamic is that harmonization, often billed as reducing trade friction, actually deepened structural dependence on large firms by making compliance a function of infrastructural reach rather than scientific merit.
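The amortization argument can be made concrete with a small worked calculation. The sketch below is illustrative only: the cost figures and submission counts are hypothetical assumptions, not data from any regulator or firm, and `cost_per_submission` is a helper name introduced here for the example.

```python
def cost_per_submission(fixed_cost: float, variable_cost: float, submissions: int) -> float:
    """Average compliance cost per submission when a fixed cost is spread over all submissions."""
    if submissions <= 0:
        raise ValueError("submissions must be positive")
    return fixed_cost / submissions + variable_cost


# Hypothetical figures, chosen only to show the shape of the effect.
LAB_FIXED_COST = 2_000_000.0    # assumed annual cost of keeping a lab GLP-compliant
STUDY_VARIABLE_COST = 50_000.0  # assumed incremental cost of one study

small_firm = cost_per_submission(LAB_FIXED_COST, STUDY_VARIABLE_COST, submissions=2)
large_firm = cost_per_submission(LAB_FIXED_COST, STUDY_VARIABLE_COST, submissions=40)

print(f"small firm: {small_firm:,.0f} per submission")
print(f"large firm: {large_firm:,.0f} per submission")
print(f"ratio: {small_firm / large_firm:.1f}x")
```

Under these assumed numbers the small firm pays roughly ten times as much per submission for identical science, which is the sense in which compliance tracks infrastructural reach rather than merit.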

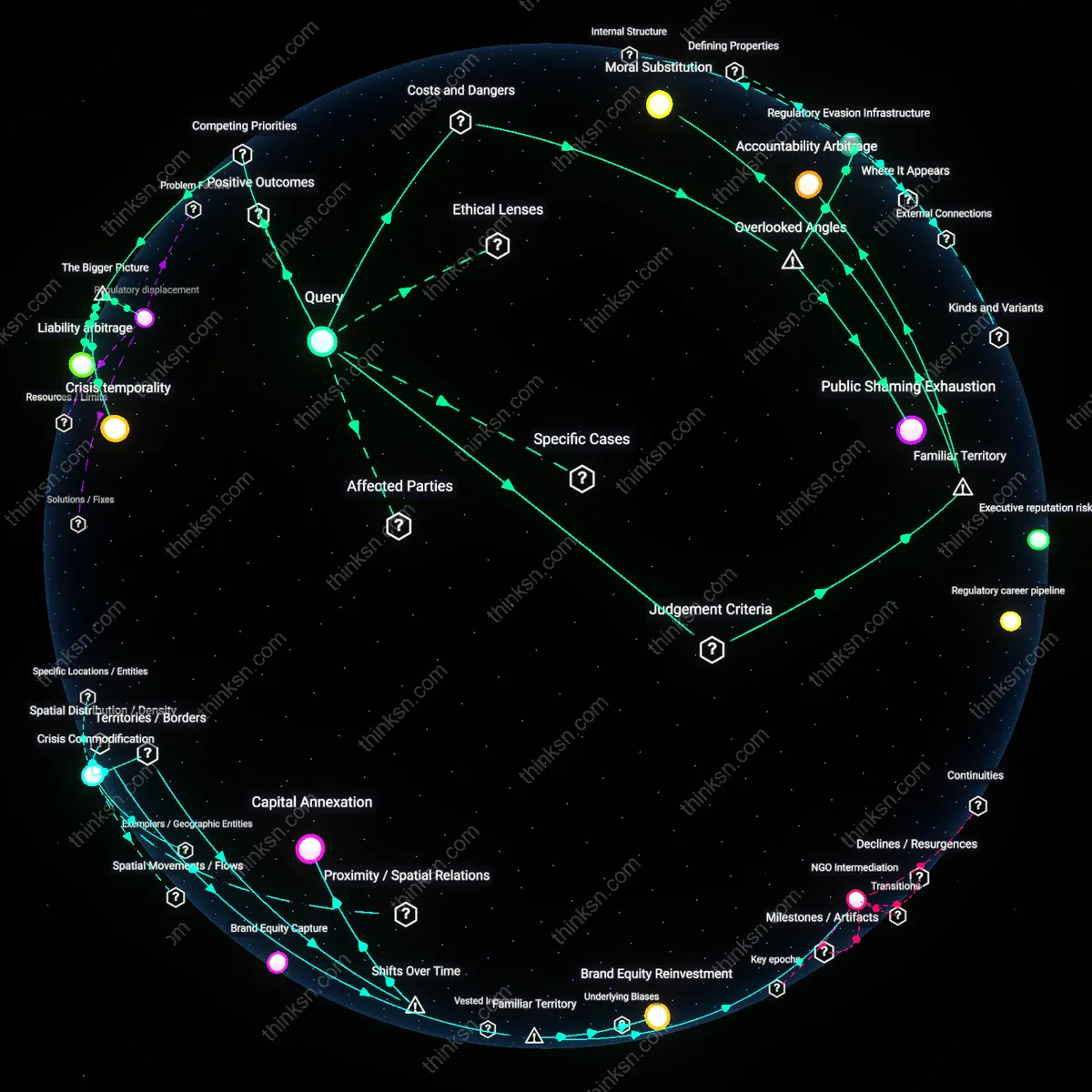

Regulatory Capture Nexus

Regulators increasingly depended on industry-supplied technical data because chronically under-resourced agencies lacked the in-house capacity to independently generate or verify complex scientific and engineering benchmarks. The result was a structural dependency in which large firms, with greater resources to produce compliant data, effectively set the evidentiary standards. This dynamic was entrenched not through overt corruption but through the alignment of agency workflows with industry-submitted documentation, which normalized reliance on private expertise in rulemaking and compliance assessments. The non-obvious consequence is that regulatory legitimacy became contingent on technical inputs that only large, vertically integrated firms could consistently provide, subtly excluding smaller competitors unable to meet data production thresholds.

Asymmetry Enabling Condition

The shift toward data-intensive regulation disproportionately favored large firms because complex reporting requirements and compliance metrics evolved in ways that implicitly assumed access to dedicated regulatory affairs teams, advanced modeling software, and historical data repositories, capabilities systematically concentrated in well-capitalized corporations. As formal rules became more standardized and prescriptive, smaller entities faced a higher cost per filing to meet evidentiary burdens, because they spread the same fixed compliance overhead across far fewer submissions, while incumbent firms leveraged economies of scale to absorb and even instrumentalize regulatory complexity. This created a systemic asymmetry in which regulatory design, though facially neutral, operated as a barrier to entry, reinforcing oligopolistic control over data-driven compliance regimes.

Regulatory Burden Arbitrage

The 1970 Clean Air Act Amendments mandated extensive submissions of emissions testing data, creating a compliance infrastructure that only large firms could sustain cost-effectively and turning data generation capacity into a regulatory barrier to entry. Regulatory reliance shifted from observable emissions outcomes to audited technical reports, which established firms with in-house engineering teams and certification histories could produce more readily, making data density a proxy for credibility. The overlooked mechanism is not regulatory capture per se but the silent reconfiguration of compliance burden into a technicality: the ability to format and deliver data in federally required structures became more decisive than pollution performance itself, privileging firms already resourced for bureaucracy.

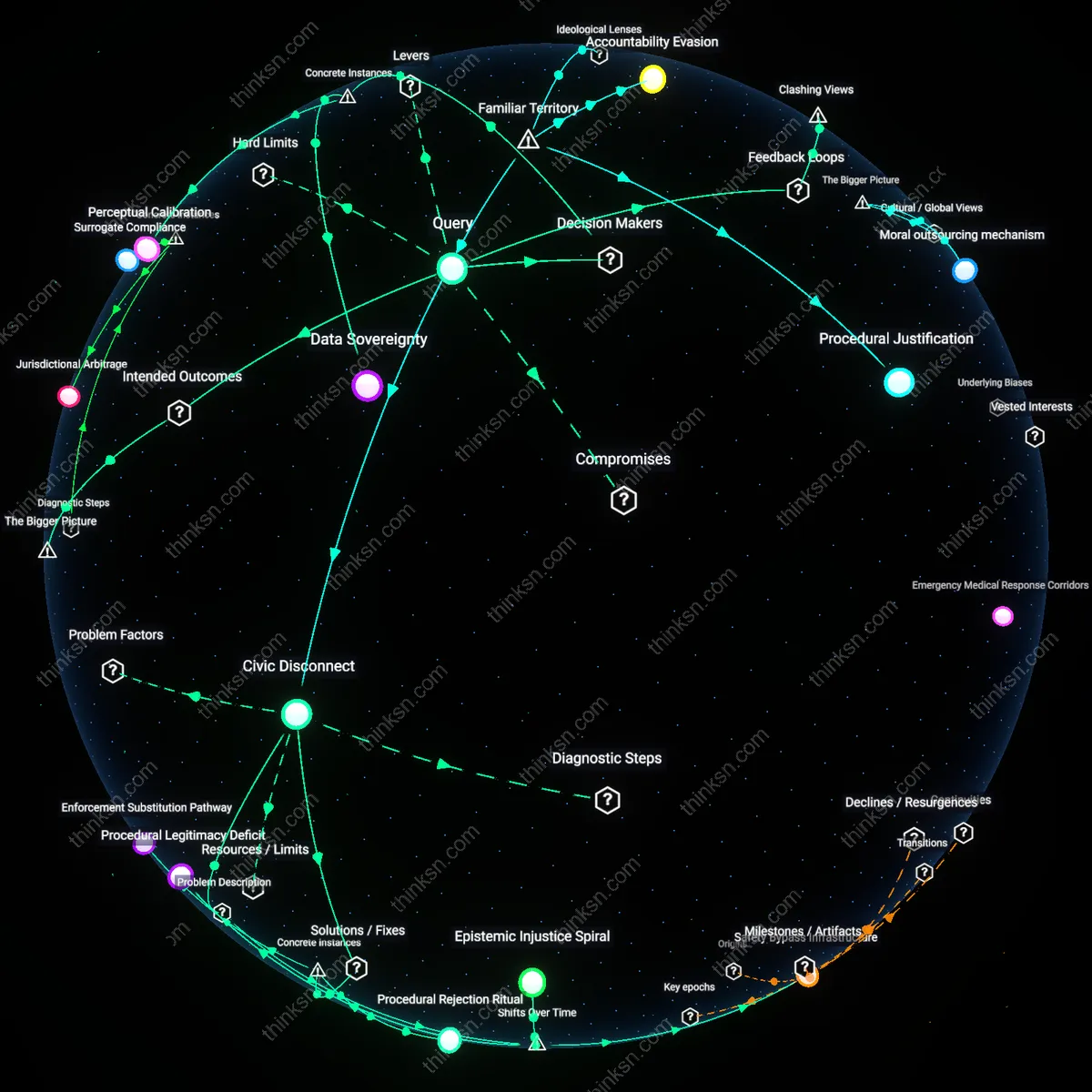

Expertise Monoculture

In the early 1980s, quantitative risk assessment became a standard input to federal rulemaking, reinforced by Office of Management and Budget review of regulatory impact analyses under Executive Order 12291 (1981). Regulatory review was thereby standardized around models requiring specialized training in toxicology and exposure modeling, disciplines often taught in programs funded by industry consortia. This created a feedback loop in which regulators increasingly depended on experts trained in frameworks shaped by corporate research agendas, normalizing assumptions about acceptable uncertainty and exposure thresholds. The overlooked dependency is epistemic homogeneity: acceptable scientific interpretation narrowed not through overt lobbying but through the quiet alignment of credentialing systems with industrial knowledge ecosystems, which marginalized alternative analytical traditions.

Digital Verification Cascade

The EPA’s electronic reporting mandate for greenhouse gas emissions required submission in schema-defined formats, rendering small emitters’ manual records invisible unless converted by third-party software vendors, many of whom scaled their services only for large industrial clients. This technical standardization privileged firms already invested in data infrastructure, turning timely regulatory visibility into a function of digital readiness rather than environmental impact. The overlooked dynamic is administrative detectability: compliance became inseparable from format compatibility, so regulators relied on data not because it was more accurate but because it was computationally tractable, thereby automating a structural advantage for large firms.
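A minimal sketch of how format compatibility can decide visibility, independent of the emissions being described. The field names and types below are hypothetical and are not the EPA's actual reporting schema; `is_machine_readable` is a helper invented for this illustration.

```python
# Hypothetical required fields; NOT the EPA's actual reporting schema.
REQUIRED_FIELDS = {"facility_id": str, "reporting_year": int, "co2e_tonnes": float}


def is_machine_readable(record: dict) -> bool:
    """Return True only if every required field is present with the expected type."""
    return all(
        name in record and isinstance(record[name], expected)
        for name, expected in REQUIRED_FIELDS.items()
    )


# Two records describing the same assumed emissions, in different forms.
structured = {"facility_id": "F-001", "reporting_year": 2015, "co2e_tonnes": 1200.0}
manual = {"notes": "approx. 1,200 tonnes, per logbook", "year": "2015"}

# Only records that pass the format check reach the regulator's dataset.
visible = [r for r in (structured, manual) if is_machine_readable(r)]
print(len(visible), "of 2 records visible")
```

Both records carry the same underlying information, yet only the structured one survives the check. The filter tests format, not accuracy, which is the asymmetry the paragraph describes.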