Who Holds the Keys to AI Ethics? Former Officials or Industry Titans?

The analysis identifies five key thematic connections.

Key Findings

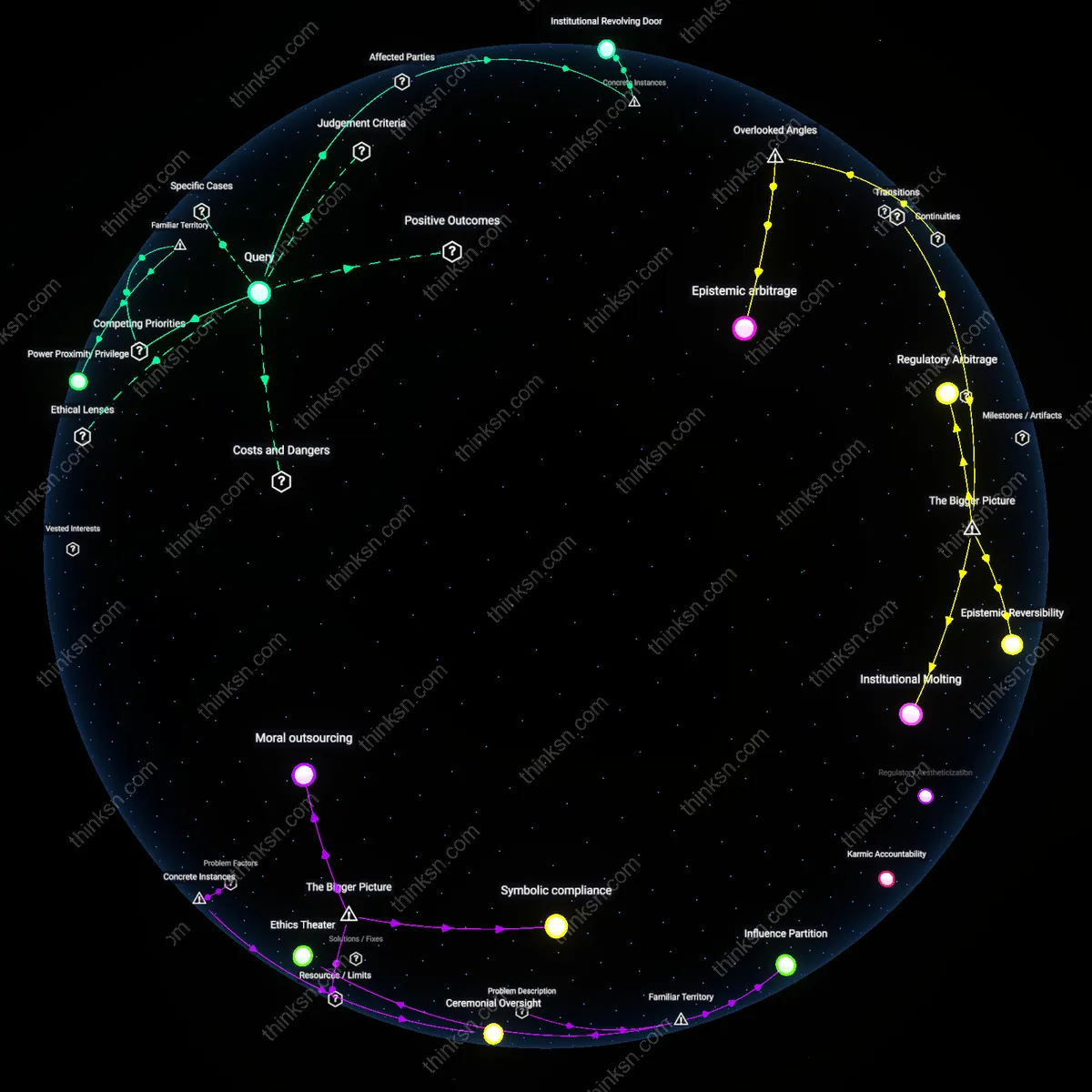

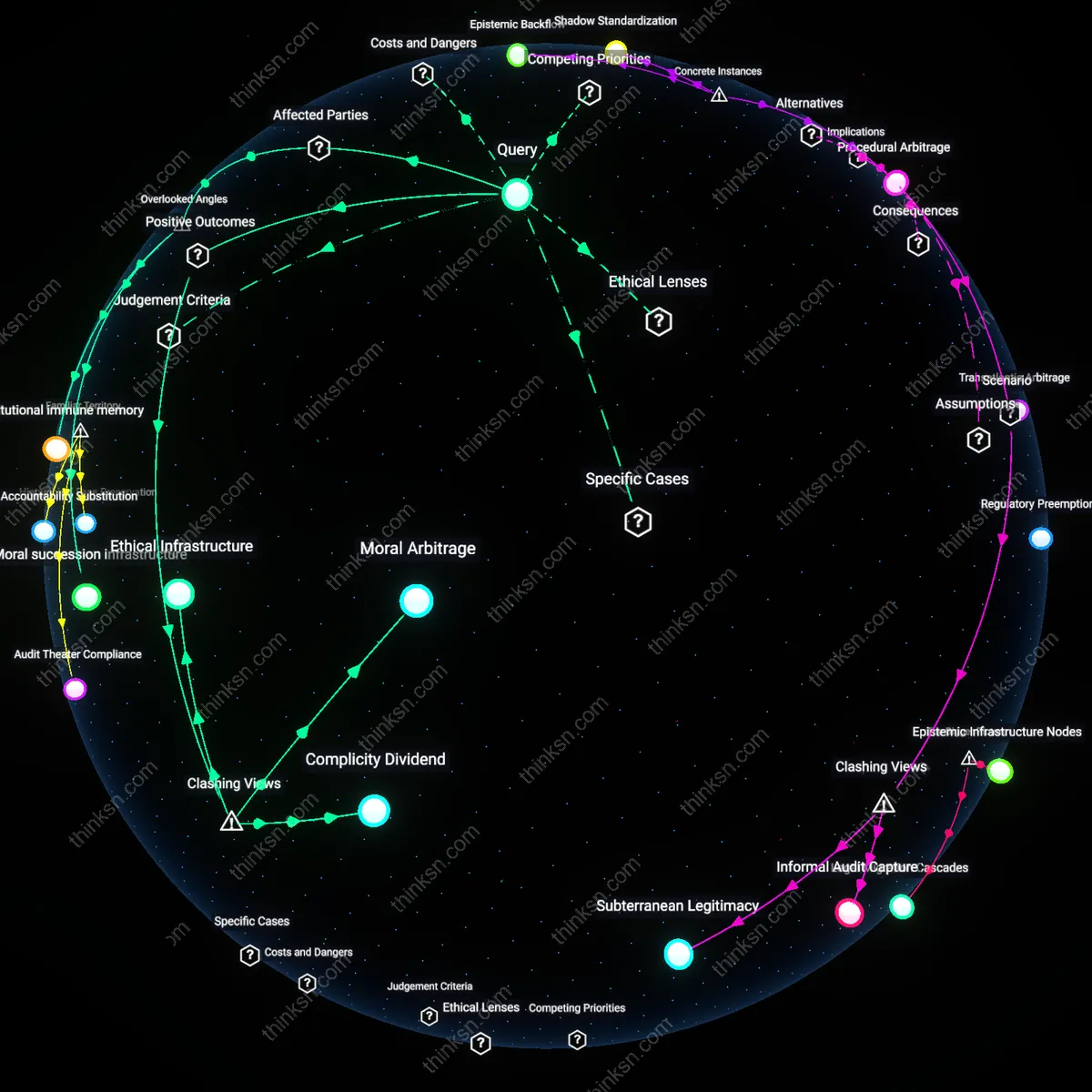

Institutional Revolving Door

The overrepresentation of former government officials on AI-ethics advisory boards suggests that career paths between regulatory agencies and tech firms normalize the exchange of influence. Consider Aza Raskin, co-founder of the Center for Humane Technology and former advisor to the U.S. Digital Service, who now shapes AI governance discourse while advancing initiatives aligned with the private sector, such as the AI Safety Summit’s industry-driven framework. Movement of this kind across sectors institutionalizes a feedback loop in which public legitimacy is used to endorse corporate-led governance, and that loop is rarely scrutinized as structural rather than incidental. Personal mobility between state and corporate roles thereby embeds asymmetrical accountability into the design of ethical oversight itself.

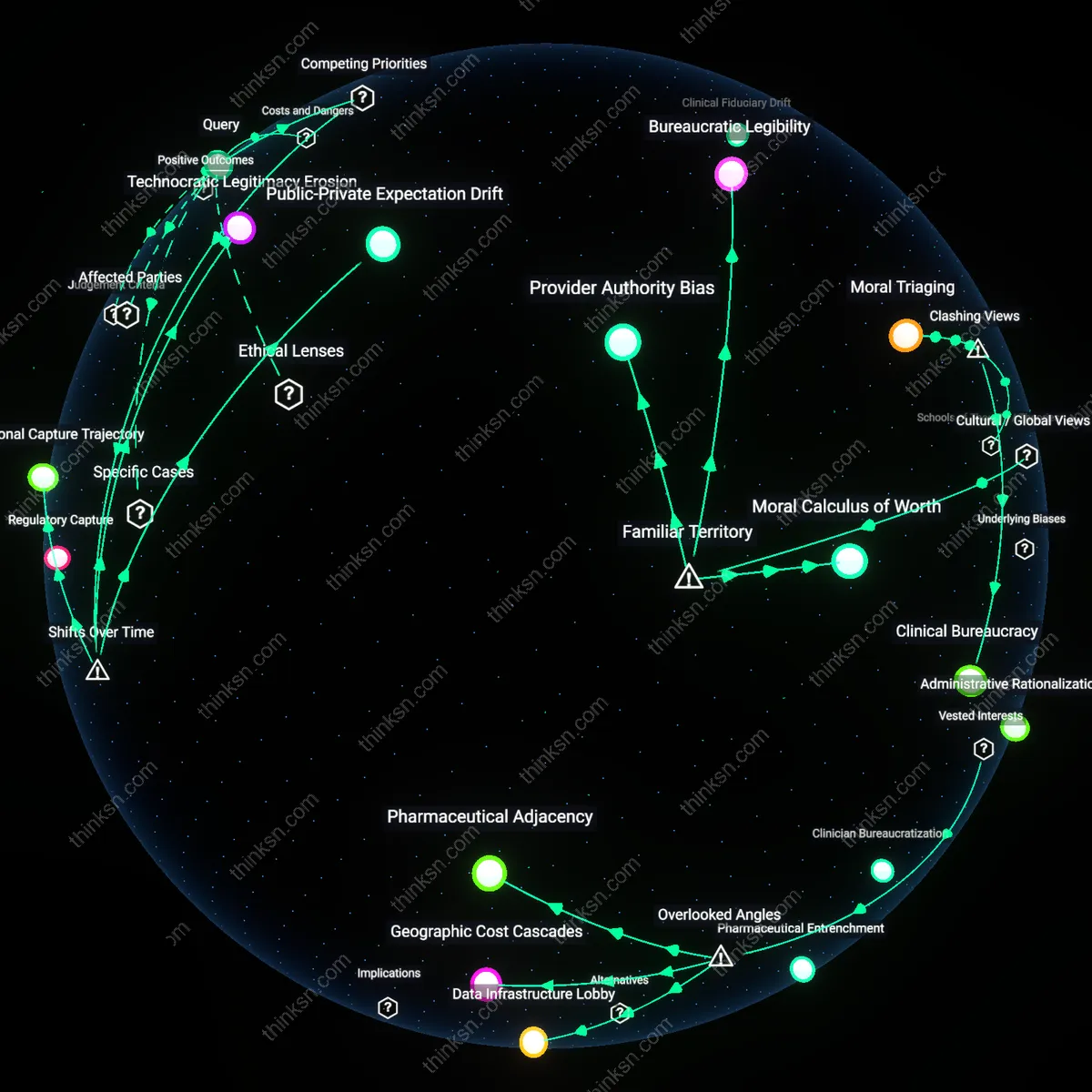

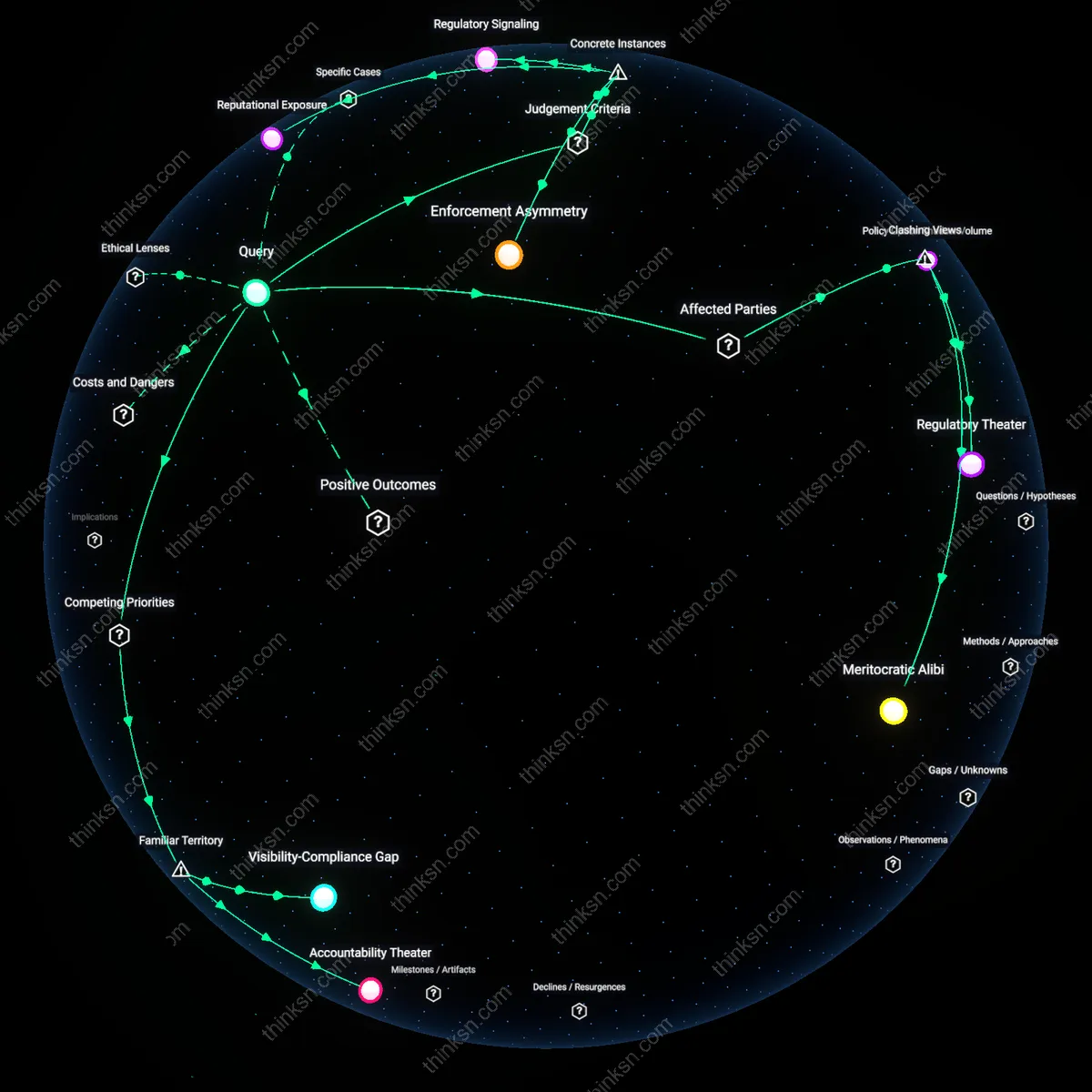

Legitimacy Laundering

The inclusion of ex-regulators like Meg Whitman, who served in the Clinton administration before leading HP and joining AI governance dialogues through the Partnership on AI, signals a strategy in which corporate entities absorb public-sector credibility to insulate emerging AI systems from democratic contestation. The mechanism is the perceived neutrality that former officials confer. This dynamic matters because it masks power consolidation under a veneer of pluralism, turning advisory roles into ceremonial conduits that absorb public trust without redistributing decision-making authority. The non-obvious consequence is not mere bias but the systemic conversion of democratic legitimacy into proprietary governance capital.

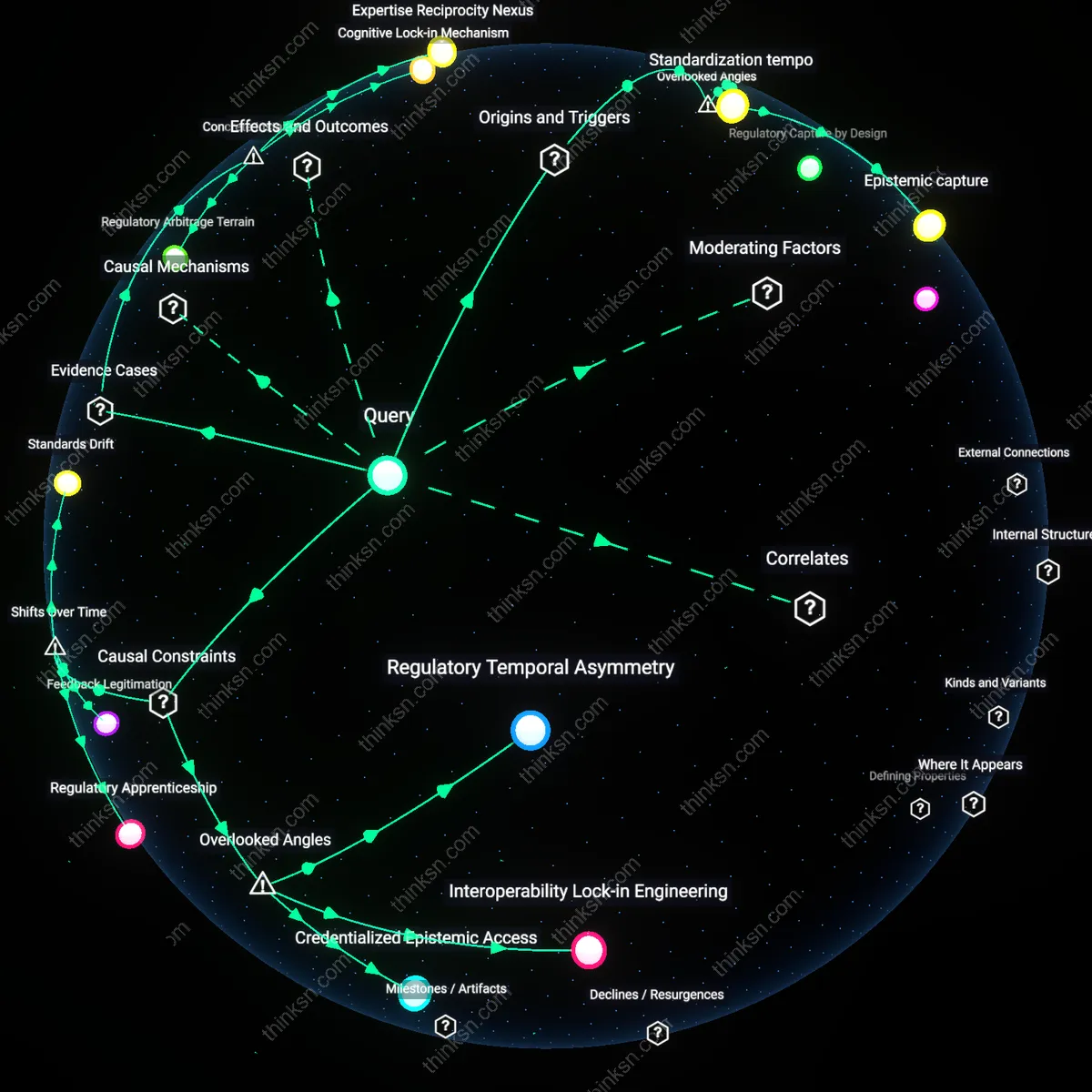

Accountability Arbitrage

When figures such as Chris Hoofnagle, a privacy law scholar and advisor to the FTC, consult for AI ethics boards at firms like Microsoft, they navigate conflicting fiduciary norms by favoring legal minimalism over transformative regulation. The pattern is evident in Microsoft’s adoption of ‘responsible AI’ frameworks that align with existing compliance thresholds rather than with societal risk. This selective transfer of public-interest expertise lets firms meet symbolic expectations while evading binding oversight, and it exposes how expertise becomes a regulatory currency that corporations optimize across jurisdictions and standards. The underappreciated mechanism is not corruption but calculated jurisdictional evasion: exploiting the gaps between public duty and private mandate.

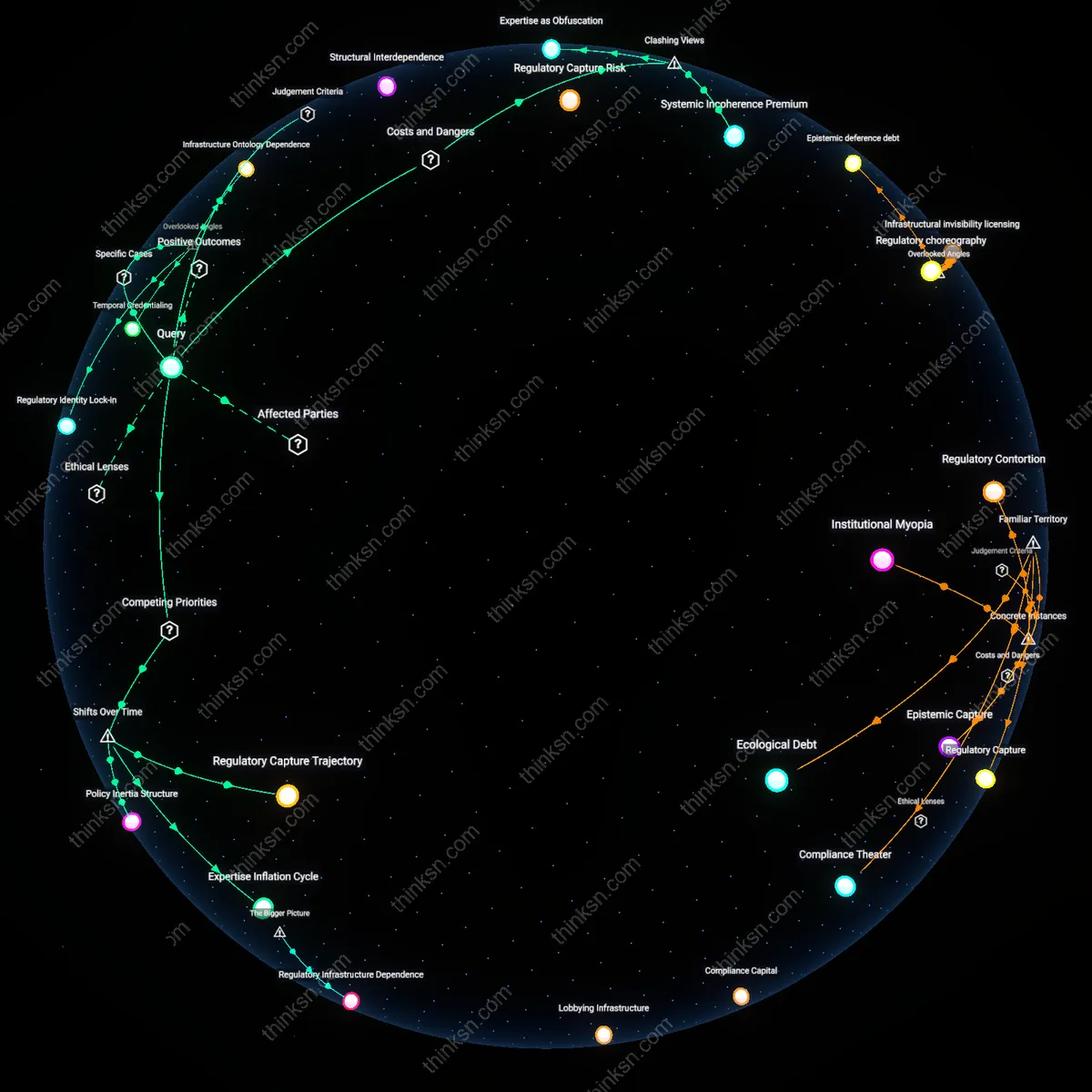

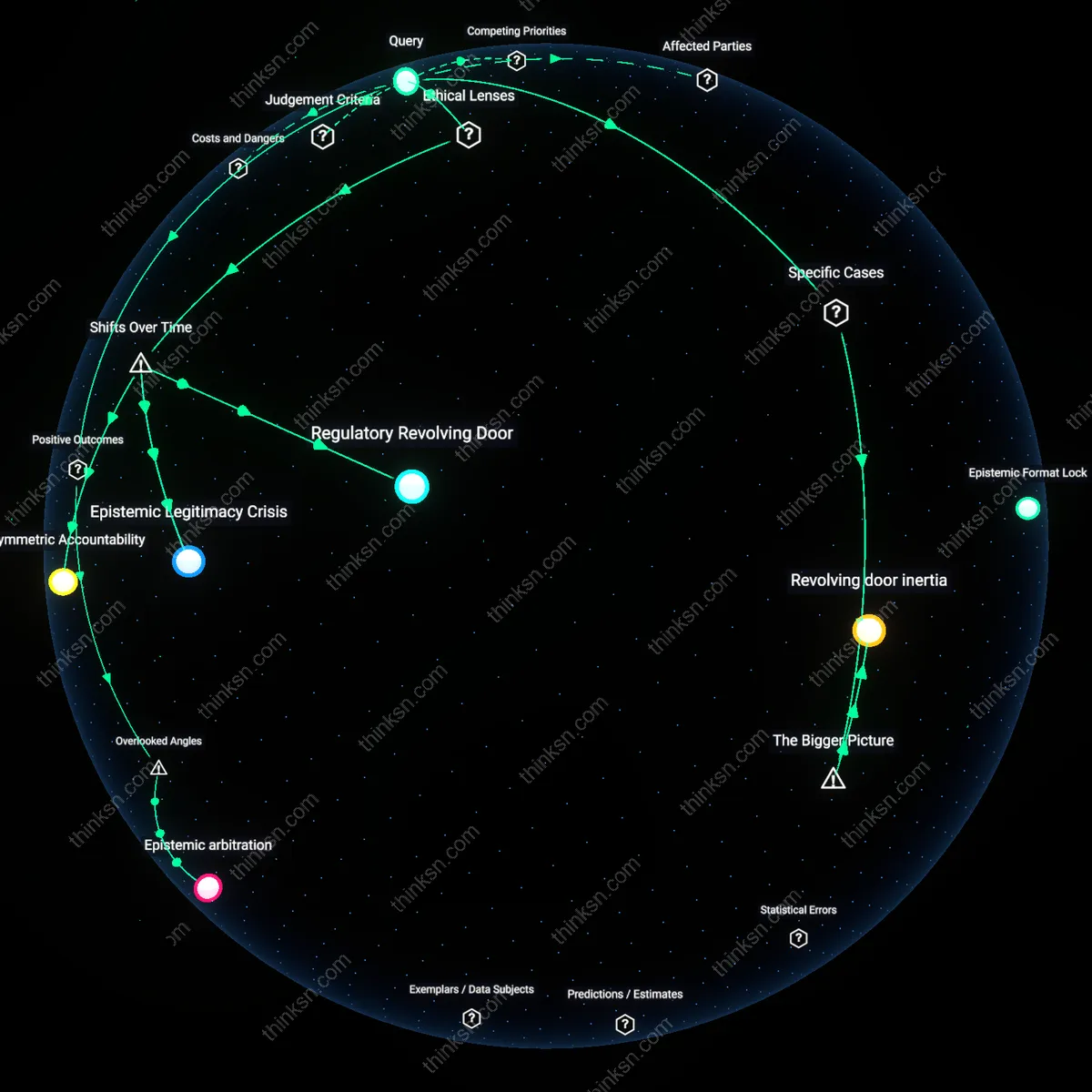

Regulatory Revolving Door

The overrepresentation of former government officials on AI-ethics advisory boards indicates that corporate entities use ex-regulators to pre-empt or dilute future regulation. These individuals carry embedded norms and access networks from their prior roles, which lets firms shape ethical guidelines in ways that align with favorable regulatory outcomes. The mechanism works through institutional memory and personal relationships that blur enforcement boundaries, making strict oversight harder to impose later. What’s underappreciated is not that these figures lend credibility but that their presence systematically disables accountability by converting ethics into anticipatory compliance, a move widely recognized in public discourse around lobbying but rarely attributed to 'ethical' governance structures.

Power Proximity Privilege

Corporate AI ethics boards favor former government officials because proximity to state power is itself a form of capital. It grants strategic advantage in shaping policy narratives, access, and enforcement timelines, and it privileges those who have already operated inside political institutions over grassroots experts or independent scholars. The dynamic operates through a social economy in which influence accrues not through technical or ethical expertise but through demonstrated access to authoritative spaces, reinforcing a hierarchy in which only those validated by prior systems are trusted to govern emerging risks. People commonly associate credibility with titles and past office; what goes unseen is how that association hardens into a self-replicating elite circuit, familiar from 'old boys' networks in politics and finance and now embedded in the moral oversight of transformative technologies.