Does the EU’s Trustworthy AI Hinder Global Competition?

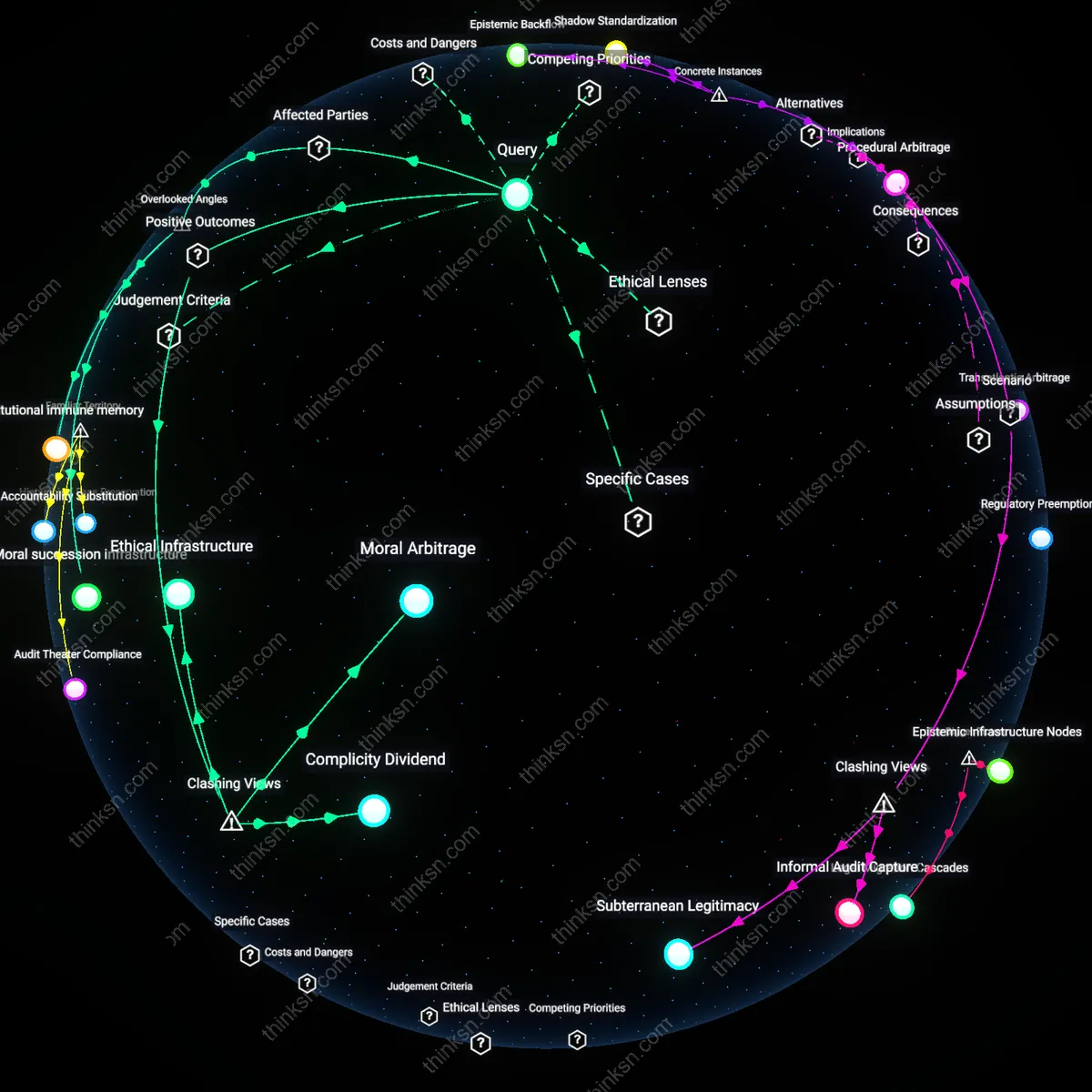

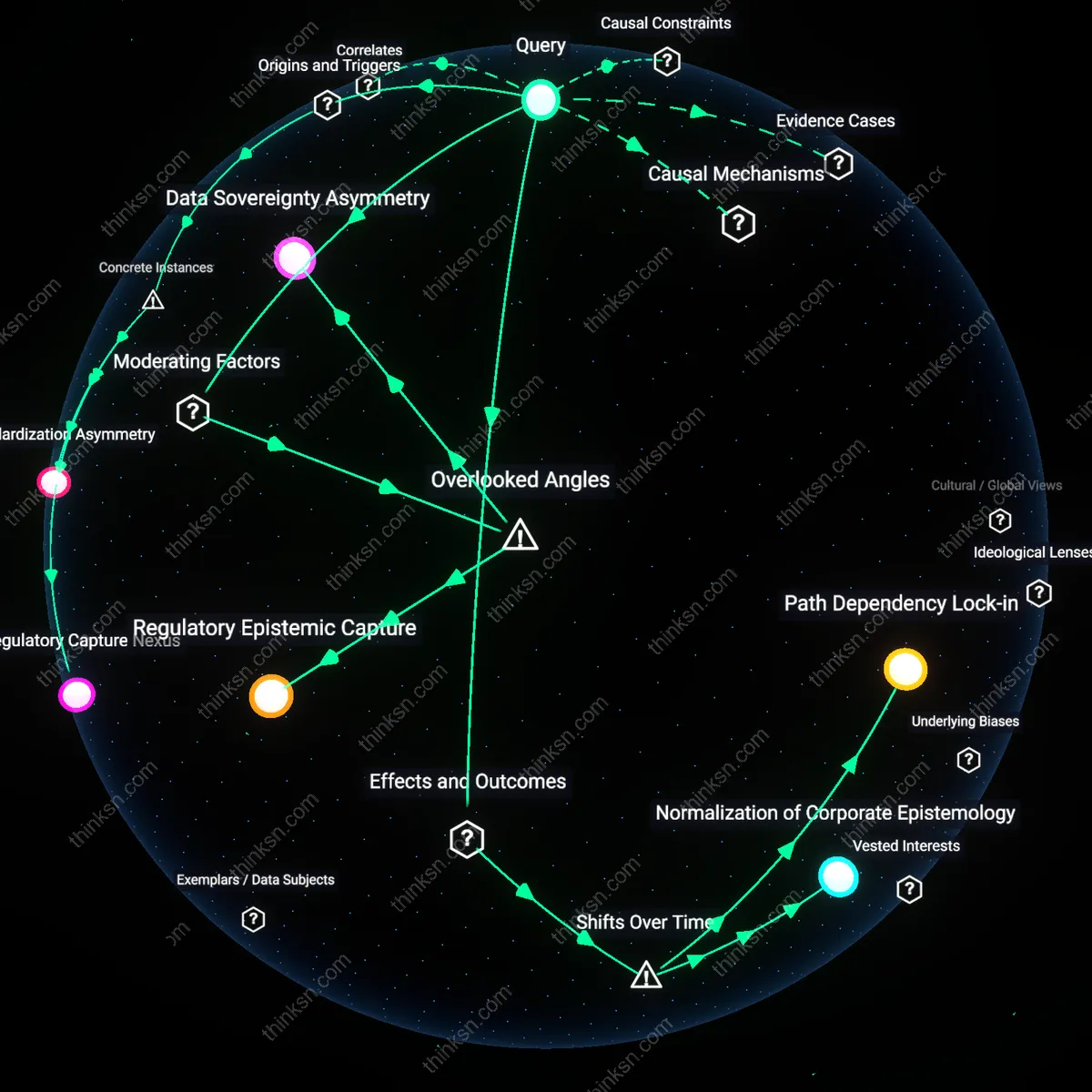

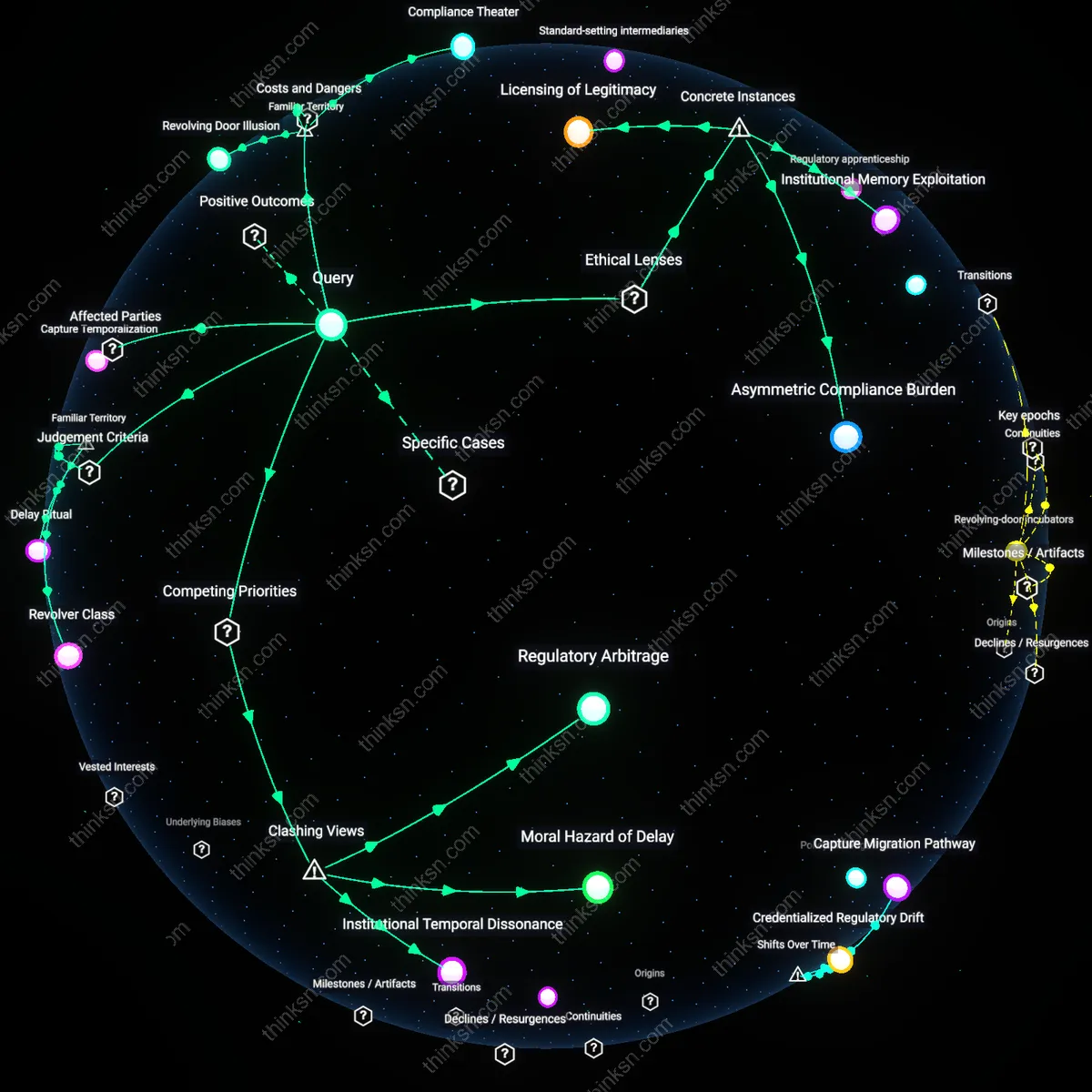

Analysis reveals 9 key thematic connections.

Key Findings

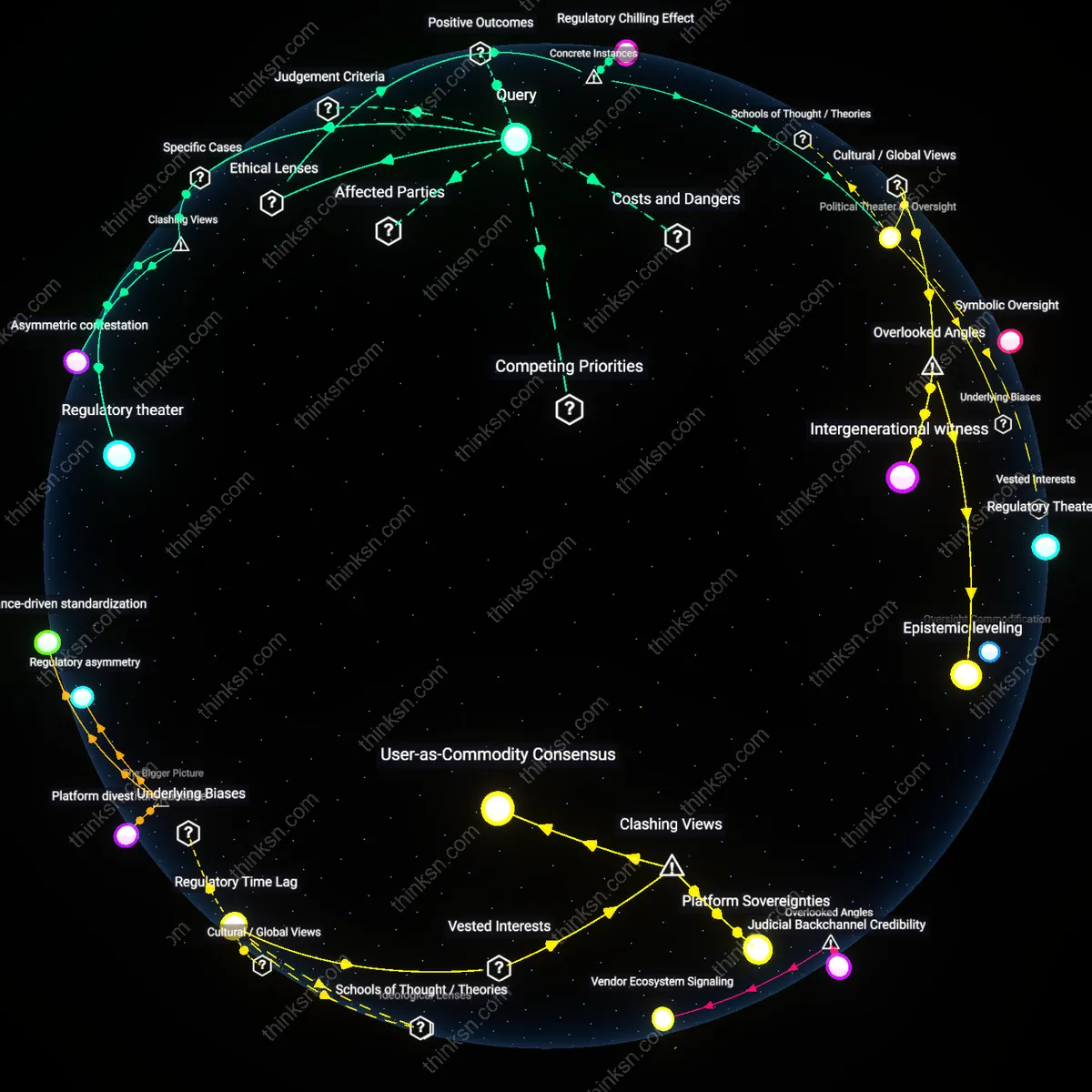

Regulatory Asymmetry

The EU’s ‘trustworthy AI’ standards emerged as a strategic instrument of digital sovereignty, building on the precedent set when the GDPR took effect in 2018 and solidified by the 2021 AI Act proposal, which reframed ethical compliance as a prerequisite for market access. This mechanism places disproportionate administrative and technical burdens on non-EU firms whose data governance structures are not already aligned with European norms, particularly U.S.-based AI developers accustomed to minimal, sector-specific regulation. Unlike earlier trade barriers based on tariffs or quotas, this shift marks the rise of values-based regulatory divergence as a non-tariff barrier, one whose protectionist effects are obscured by ethical legitimacy. The non-obvious insight is that compliance cost is not an unintended side effect but a designed filter: the EU leverages its market power to export regulatory standards while lacking dominant AI infrastructure of its own.

Ethical Trade Regime

The push for ‘trustworthy AI’ originated in post-financial-crisis EU institutions seeking legitimacy through moral leadership, especially after 2016, when the European Commission prioritized human-centric technology in response to growing public skepticism of U.S.-dominated digital platforms. By building ethical audits, risk classifications, and fundamental rights impact assessments into the AI Act draft, the EU moved from reactive data protection (post-Snowden) to proactive normative framing, effectively turning privacy and fairness into trade-conditioning mechanisms by the early 2020s. This shift reveals that ethical standards now function not merely as safeguards but as gatekeeping criteria, allowing Brussels to act as a norm entrepreneur that shapes global AI development trajectories despite lacking globally competitive AI firms. The underappreciated dynamic is that moral framing becomes a vector for industrial upgrading when material competitiveness is absent.

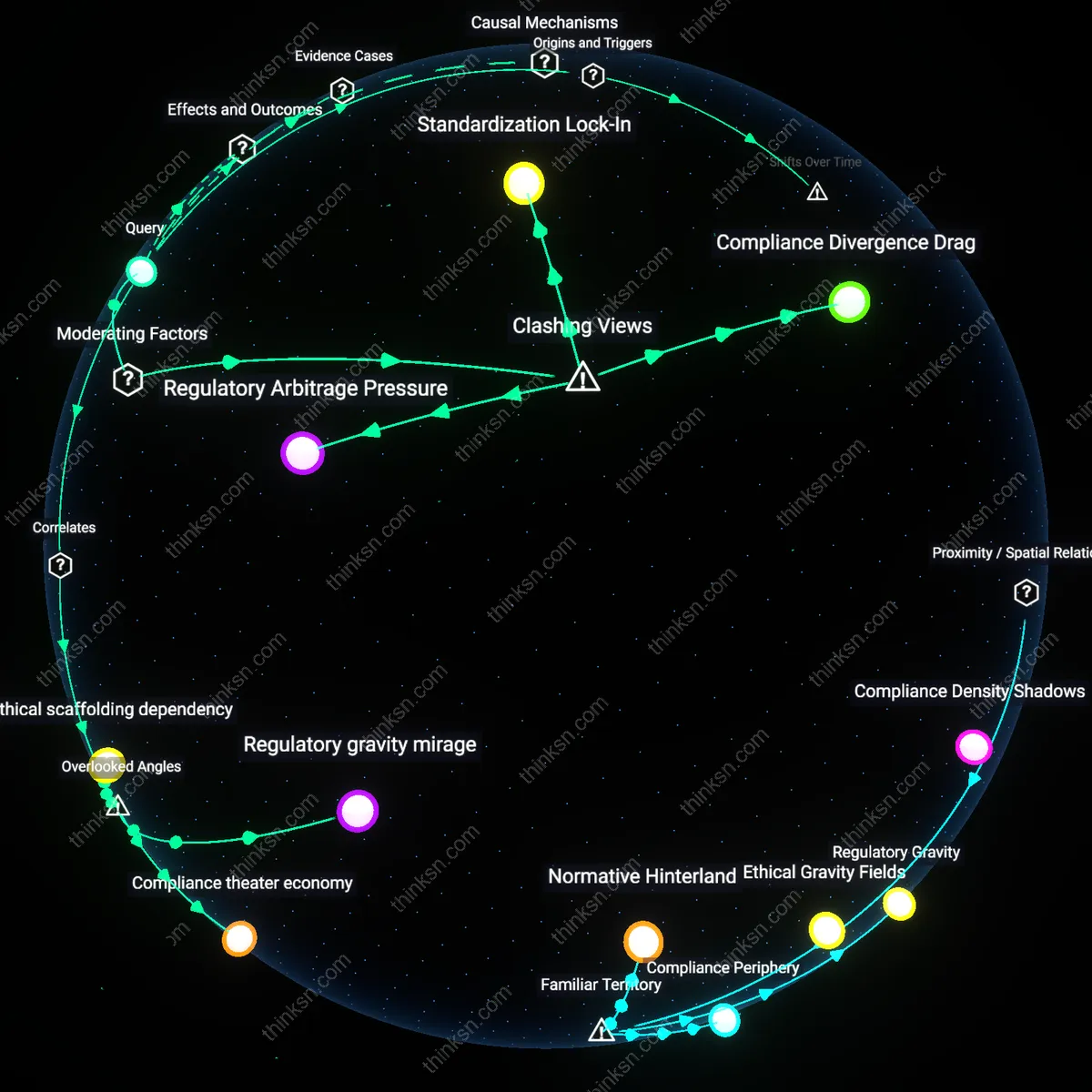

Regulatory Arbitrage Pressure

The EU's 'trustworthy AI' standards amplify European industrial competitiveness primarily not by blocking foreign firms outright, but by triggering a race among third-country companies to reconfigure their AI governance toward Brussels-aligned models. Compliance thereby becomes a strategic burden that favors incumbents close to EU regulatory networks. Multinational AI developers from the U.S. and China must now divert investment into audit trails, human oversight units, and data governance reforms tailored to EU specifications. They do so not because they are banned, but because selective market access forces regulatory mimicry, which privileges EU-based firms that already operate under these constraints and reduces the agility of competitors from rival regulatory regimes. This dynamic undermines the common view that the AI Act is protectionist by design, revealing instead how stringent regulation can weaponize administrative complexity to restructure global competition through indirect alignment. What is non-obvious is that the real barrier is not market exclusion but the cost of becoming a transnational regulatory hybrid.

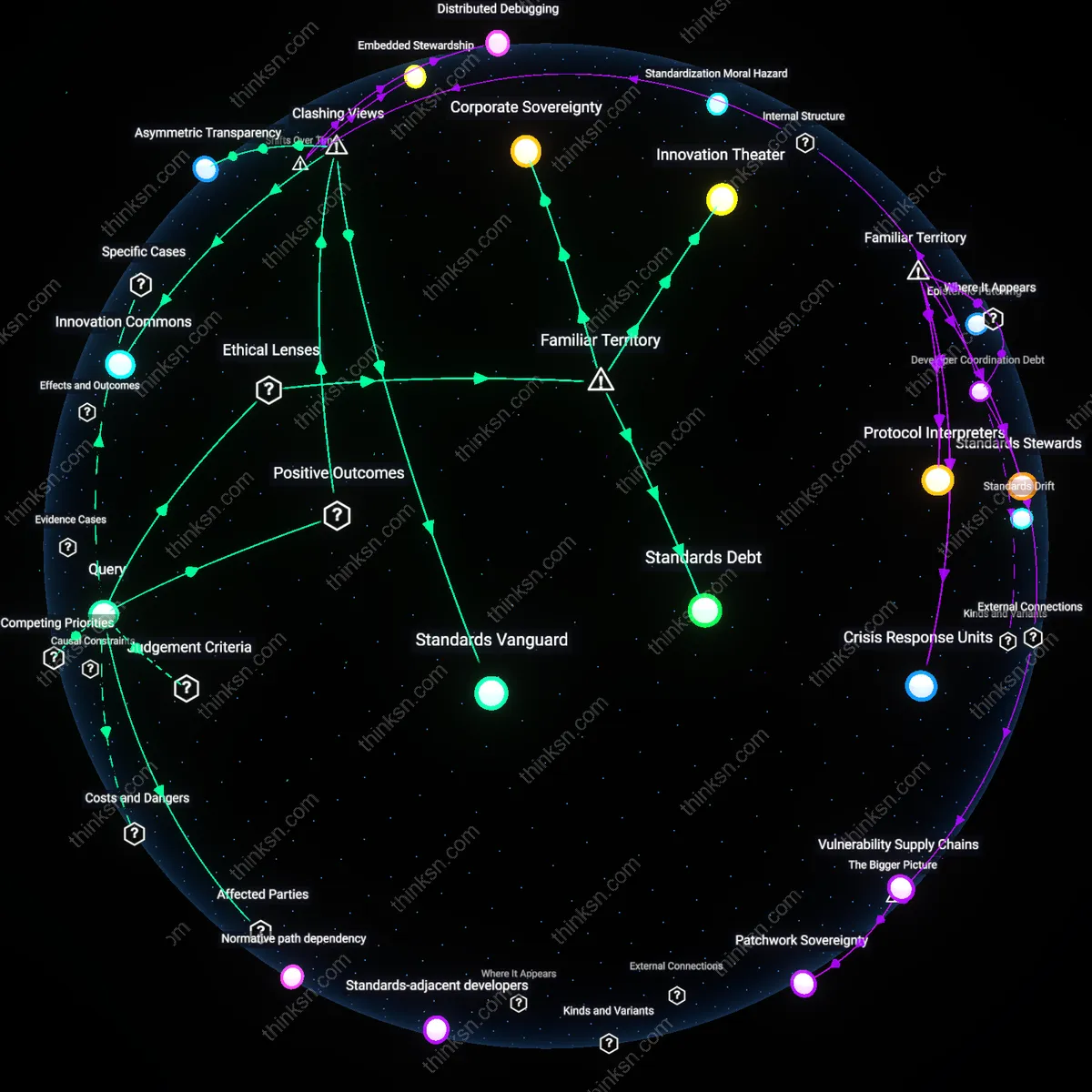

Standardization Lock-In

The 'trustworthy AI' framework strengthens European industrial influence not by creating trade barriers per se, but by positioning EU institutions as the default standard-setters for ethical-by-design AI systems in sectors where global coordination is essential, such as healthcare, transportation, and public services. This attracts non-EU actors who voluntarily adopt EU norms to gain interoperability and legitimacy. Firms in countries without mature AI governance frameworks, particularly in Africa and parts of Southeast Asia, increasingly align with the EU’s risk classification and documentation requirements not because they are coerced but because Brussels offers the most operable template, effectively locking in European architectures as global defaults. This challenges the assumption that regulatory reach equals economic exclusion, exposing how the EU leverages institutional credibility and normative clarity to turn regulatory stringency into soft power, making compliance a path to influence rather than a penalty. The underappreciated mechanism is not resistance to foreign firms, but the voluntary capture of global AI development workflows by EU-centric governance logic.

Compliance Divergence Drag

Rather than strengthening European industrial dominance, the EU's AI standards risk weakening its global standing by creating a 'compliance divergence drag'. This drag disproportionately burdens small and mid-sized EU-based AI innovators, who lack the legal infrastructure to navigate evolving obligations, while larger non-EU tech conglomerates absorb compliance costs as marginal expenses. Unlike U.S. or Chinese hyperscalers with dedicated regulatory affairs divisions and cross-jurisdictional operational buffers, European startups face existential strain from mandatory conformity assessments, documentation, and high-risk system monitoring, which shifts competitive advantage toward well-resourced foreign firms already adept at managing multi-market complexity. This inverts the narrative of regulatory protectionism: 'trustworthy AI' may function as a de facto subsidy for well-capitalized non-EU actors, who can exploit the EU's rule-making authority without bearing its disproportionate impact on domestic experimentation and scaling. The overlooked reality is that regulatory leadership does not automatically translate into industrial ascendancy, especially when the cost of compliance becomes a filter that sorts by corporate scale, not geography.

Regulatory Gravity Mirage

The EU's 'trustworthy AI' framework amplifies European industrial influence not by directly favoring domestic firms, but by positioning EU standards as ethically pre-legitimized in global markets, thereby attracting voluntary compliance from non-EU firms seeking reputational capital. This occurs because AI multinationals, particularly from the U.S. and Asia, anticipate that consumer and investor preferences in prestige-sensitive sectors such as healthcare and finance will align with EU-defined trust markers, even absent legal obligation. The non-obvious dynamic is that compliance barriers function not as enforced restrictions but as a gravitational pull toward an ethical branding ecosystem dominated by European norms, which reshapes competitive entry conditions without explicit protectionism. This reframes standard analyses that focus solely on regulatory compliance costs by revealing a soft-power mechanism in which normative leadership substitutes for trade barriers.

Compliance Theater Economy

Non-EU AI firms increasingly adopt EU-style documentation, impact assessments, and auditing procedures not because they operate in Europe, but to signal organizational maturity to non-regulatory gatekeepers. Venture capital firms and cloud infrastructure providers, for example, use conformity to EU standards as a proxy for governance readiness. This dynamic emerges because platforms like AWS, Azure, and Google Cloud, seeking to reduce liability exposure, now offer compliance tooling aligned with EU AI Act templates, thereby extending regulatory formality beyond jurisdictional reach. The overlooked angle is that the cost of compliance is not primarily imposed as a trade barrier but absorbed voluntarily within supply-chain onboarding processes, where perceived operational reliability matters more than legal risk. Regulation thereby becomes a de facto technical standard in global developer ecosystems.

Ethical Scaffolding Dependency

Smaller European AI startups, despite lacking scale, gain asymmetric access to public procurement and cross-border data pools by embedding 'trustworthy AI' compliance into their initial architecture, creating path dependency for pan-European digital infrastructure that prioritizes interoperability with EU-native governance logic. This advantage is invisible in macroeconomic trade analyses because it operates through technical middleware—such as data protection-certified AI pipelines or algorithmic transparency layers—that become embedded in EU-funded smart-city or health-data projects. The overlooked mechanism is that trustworthy AI standards function less as industrial protection and more as a systemic lock-in for technical governance interfaces, privileging firms that build within EU-defined architectural constraints from inception, thus shaping long-term ecosystem dependence on regionally compliant components.

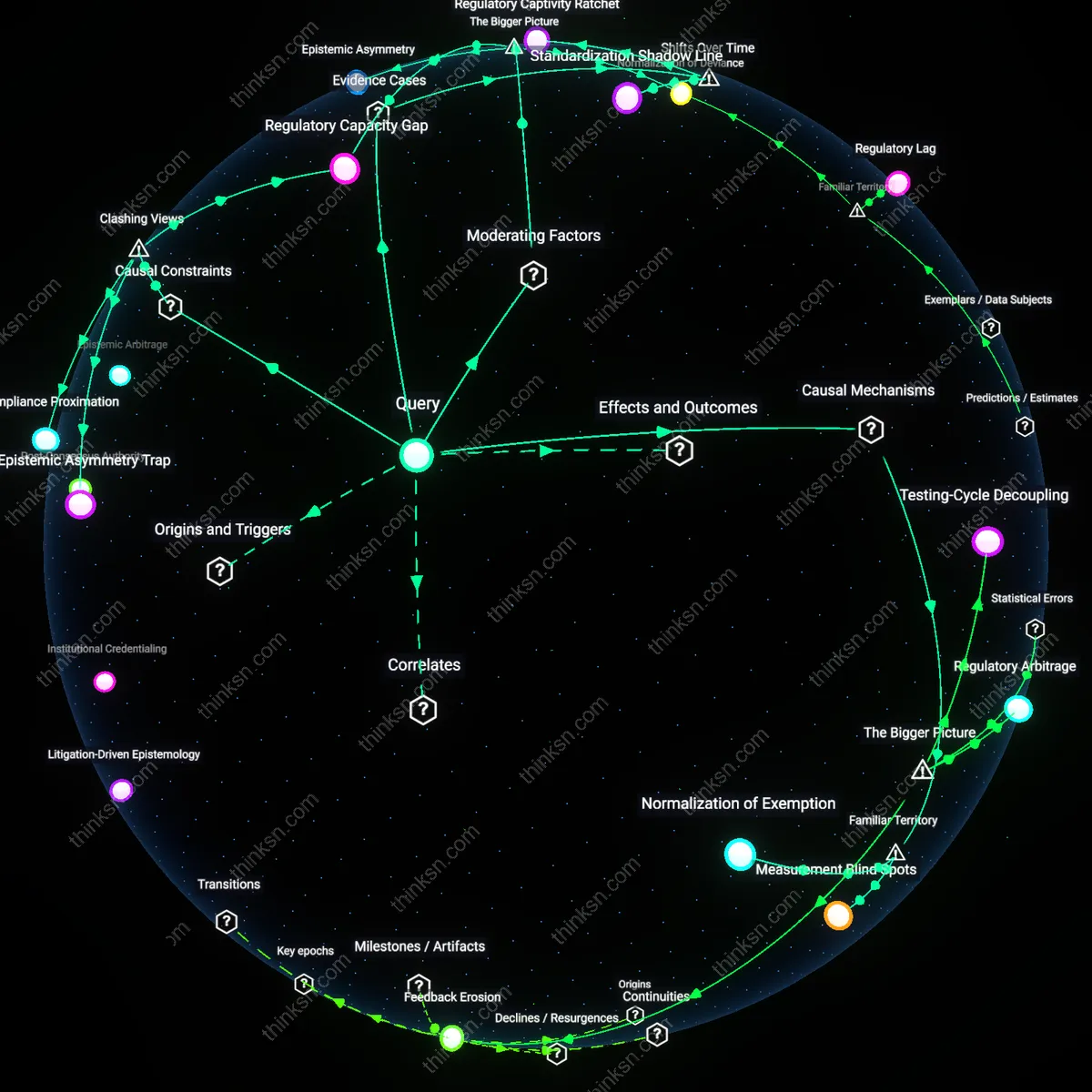

Compliance Ladders

The French data protection authority’s selective delay in certifying foreign AI-driven medical diagnostics, exemplified by the 14-month review of Babylon Health’s GP-assist tool compared with three months for Wipro’s EU-partnered system, suggests that equivalence is granted unevenly across geopolitical alliances. The binding constraint is not technical compliance alone but an implicit requirement of substantive local presence for timely approval, a condition that firms with EU subsidiaries meet de facto. This points to a hidden gradation in enforcement that functions as a graduated entry barrier, where 'trustworthiness' operates as a staged credential rather than a binary threshold.