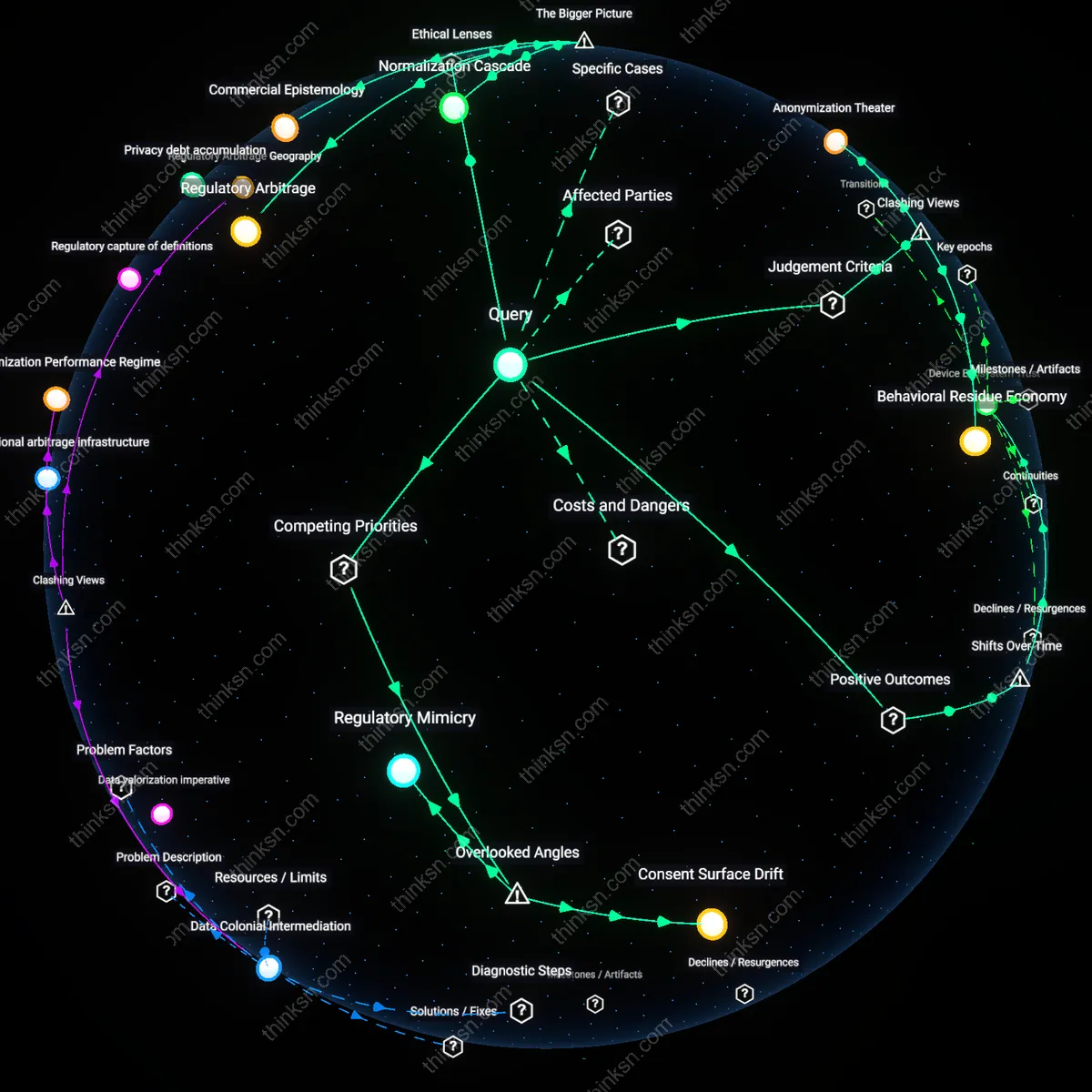

Do Smart Home Anonymization Policies Hide Privacy Risks?

Analysis reveals 9 key thematic connections.

Key Findings

Anonymization Theater

Data anonymization in smart-home devices fails to prevent profiling because aggregated behavioral metadata, such as appliance usage timing and environmental adjustments, can re-identify users through pattern uniqueness even without personal identifiers. This suggests that the practice serves regulatory compliance more than actual privacy protection. The mechanism operates through machine learning models trained on longitudinal device interactions, which exploit behavioral biometrics to reconstruct identity with high accuracy, as studies analyzing smart meter data have demonstrated. The claim challenges the dominant assumption that anonymization preserves privacy. It exposes anonymization as a procedural shield rather than a technical barrier, one that normalizes surveillance-ready data ecosystems. What is non-obvious is that anonymization, intended as a privacy safeguard, actively enables broader data exploitation: it assures consumers of protection while permitting continuous, legally compliant data harvesting.
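To make the re-identification mechanism concrete, here is a minimal Python sketch. It substitutes a simple nearest-neighbor match for the machine learning models described above, and every household name and usage profile is invented for illustration. Week-one profiles are linked to households. Week-two traces have their identifiers stripped and their order shuffled. Matching on the usage pattern alone recovers which household produced each trace.

```python
import math

# Hypothetical usage profile: share of appliance activity in four 6-hour
# blocks (night, morning, afternoon, evening). All values are invented.
Profile = tuple[float, float, float, float]


def distance(a: Profile, b: Profile) -> float:
    """Euclidean distance between two usage profiles."""
    return math.sqrt(sum((x - y) ** 2 for x, y in zip(a, b)))


def reidentify(reference: dict[str, Profile], anonymous: list[Profile]) -> list[str]:
    """Label each identifier-free trace with the closest known household."""
    return [min(reference, key=lambda hh: distance(reference[hh], trace))
            for trace in anonymous]


# Week 1: profiles still linked to (hypothetical) households.
reference = {
    "household_a": (0.05, 0.40, 0.10, 0.45),
    "household_b": (0.30, 0.05, 0.15, 0.50),
    "household_c": (0.02, 0.10, 0.60, 0.28),
}

# Week 2: the same households, identifiers removed and order shuffled.
anonymous_week2 = [
    (0.28, 0.07, 0.14, 0.51),
    (0.03, 0.12, 0.58, 0.27),
    (0.06, 0.38, 0.12, 0.44),
]

print(reidentify(reference, anonymous_week2))
# → ['household_b', 'household_c', 'household_a']
```

The sketch is deliberately crude. If four coarse time-of-day shares are enough to re-link these invented households, richer longitudinal features tend to make the match easier still. This is the sense in which stripping identifiers leaves identity recoverable.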

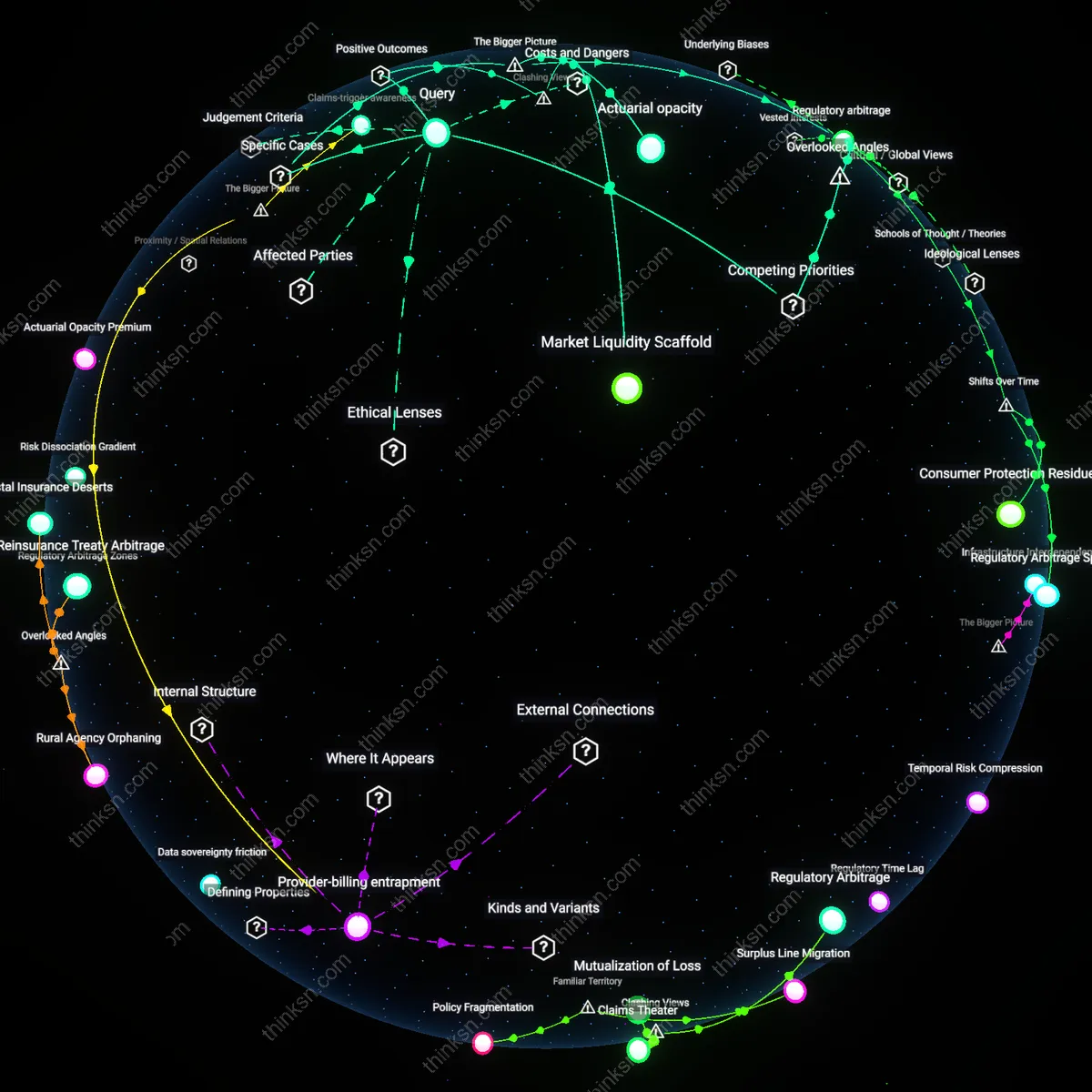

Behavioral Residue Economy

The anonymization of smart-home data does not merely obscure privacy degradation but fuels it. It commodifies behavioral residue, the non-identifiable traces of everyday routines, into tradable prediction assets within IoT data markets, where machine learning infrastructures profile users via behavioral clusters rather than individual identities. Ad-tech intermediaries such as the identity-resolution provider LiveRamp and the demand-side platform The Trade Desk can fold smart-home metadata into cross-platform behavioral targeting by linking anonymized sequences to psychographic profiles through probabilistic matching. Contrary to the intuitive belief that anonymization reduces harm, it enables a stealthier form of profiling that bypasses individual consent while retaining predictive power, expanding surveillance capitalism's reach under the guise of privacy compliance. The non-obvious revelation is that anonymization does not weaken profiling but reframes it, shifting from identity-based tracking to behavior-based anticipation, which evades existing privacy laws and undermines user autonomy.
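The shift from identities to behavioral clusters can be sketched in a few lines of Python. This is a hypothetical illustration, not a description of how LiveRamp, The Trade Desk, or any other firm operates. The segment names and centroids are assumptions, fixed by hand here for determinism, where a production system might learn them with a clustering method such as k-means. Each identifier-free trace is assigned to its nearest centroid.

```python
import math
from collections import Counter

# Hypothetical segment centroids over two routine features:
# (hour of first activity, share of daily usage after 20:00).
SEGMENT_CENTROIDS = {
    "early_riser": (6.0, 0.15),
    "night_owl": (11.0, 0.55),
}


def features(trace):
    """Scale the hour to [0, 1] so both features carry comparable weight."""
    first_hour, late_share = trace
    return (first_hour / 24.0, late_share)


def assign_segment(trace):
    """Nearest-centroid assignment: no name or device ID is read or needed."""
    f = features(trace)
    return min(SEGMENT_CENTROIDS,
               key=lambda s: math.dist(f, features(SEGMENT_CENTROIDS[s])))


# Identifier-free daily traces, as an intermediary might receive them (invented).
traces = [(5.5, 0.10), (12.0, 0.60), (6.5, 0.20), (10.5, 0.50)]
segments = [assign_segment(t) for t in traces]
print(segments)  # → ['early_riser', 'night_owl', 'early_riser', 'night_owl']
print(Counter(segments))  # audience size per segment
```

Nothing in `assign_segment` touches an identifier, yet every trace ends up in a targetable audience. That is the reframing from identity-based tracking to behavior-based anticipation described above.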

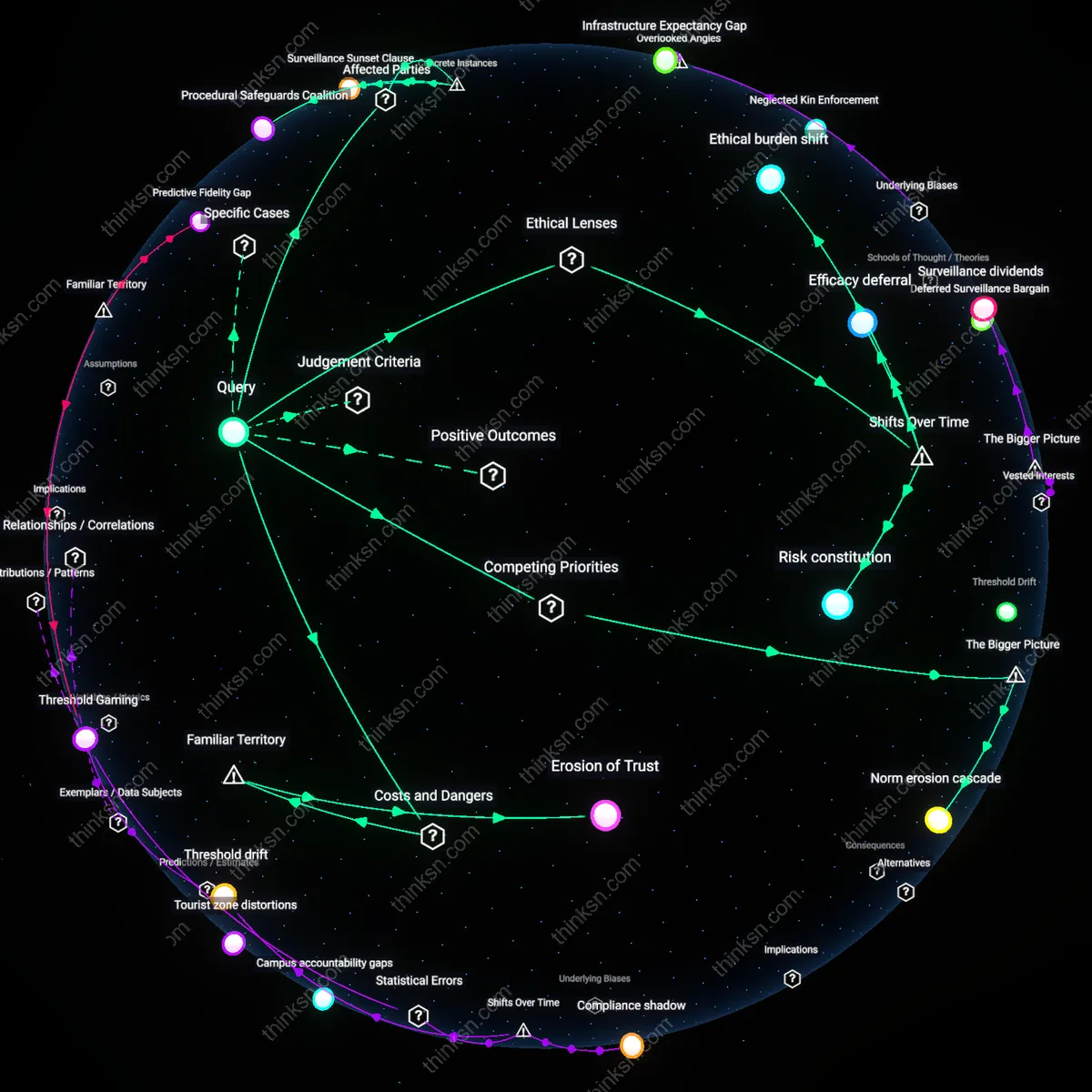

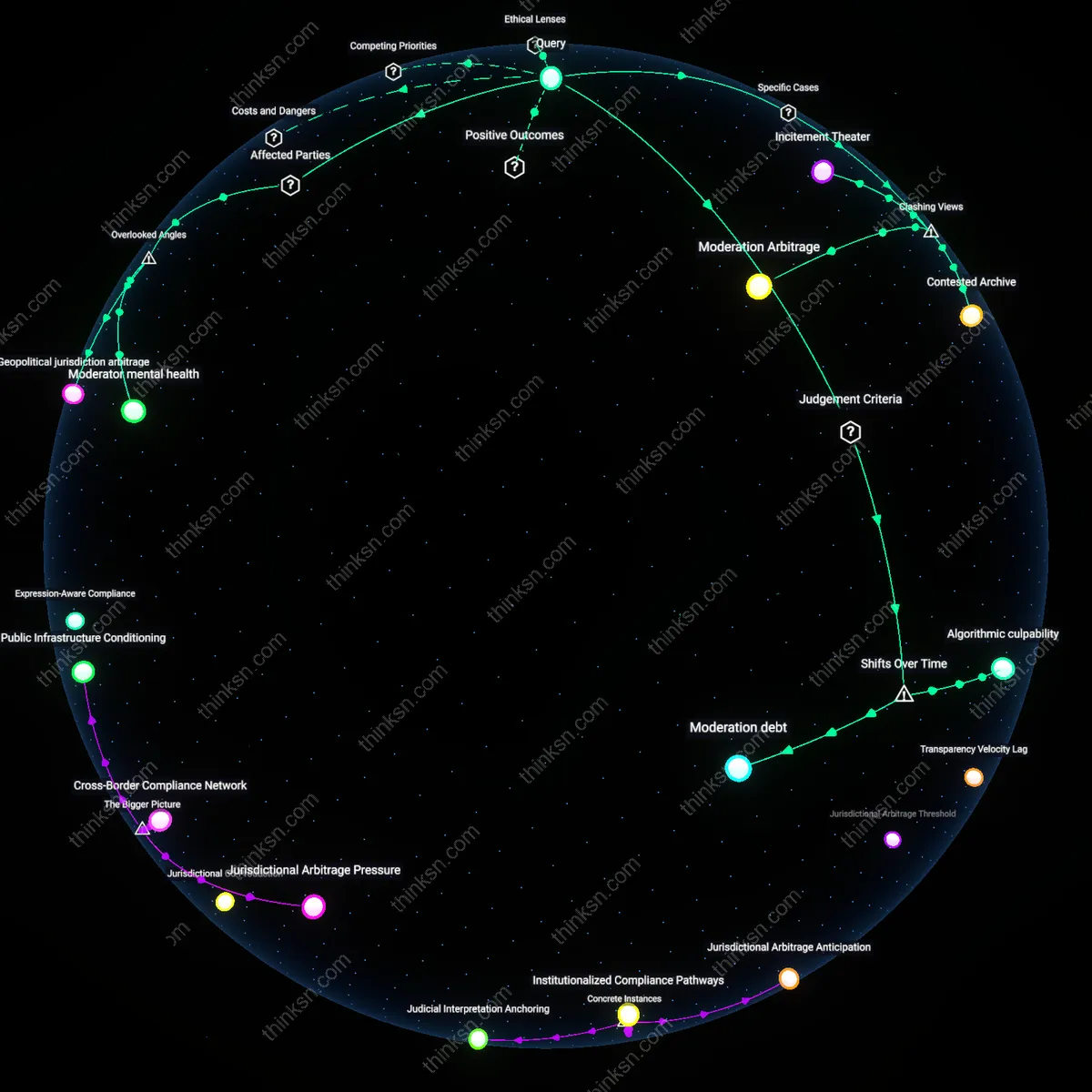

Regulatory Surface Compliance

Claims of data anonymization in smart-home devices obscure rather than prevent privacy degradation because regulatory frameworks like the GDPR and CCPA prioritize procedural adherence to anonymization standards over the functional reality of re-identification risk. This allows companies to monetize data legally while appearing compliant. The dynamic manifests in firms like Amazon Ring and Google Nest, which release 'anonymized' aggregate reports to municipalities while retaining granular datasets for internal AI training and third-party partnerships. In doing so, they exploit rules that treat anonymization as a legal threshold rather than a technical guarantee. The tension lies in viewing anonymization as a privacy success when, in practice, it functions as a governance alibi: an institutional mechanism that satisfies auditor scrutiny without impeding data exploitation. The non-obvious insight is that regulatory validation of anonymization legitimizes systemic privacy erosion by decoupling accountability from actual harm, turning compliance into a permissive layer for continuous monitoring.

Device Ecosystem Trust

Following the fragmentation of smart-home markets between 2016 and 2019, anonymization claims gained constructive value not through technical robustness but as a signaling mechanism. They reassured consumers during a period of intense interoperability experimentation, particularly in the EU's FIWARE-based smart city integrations. Disparate devices from Philips, Bosch, and Amazon began sharing environmental usage patterns via municipal IoT hubs. At that point, the adoption of anonymization-certified APIs, aligned with guidance from EU bodies such as ENISA, created a minimal trust substrate that allowed the ecosystem to expand despite public skepticism. The shift from isolated device logging to federated learning frameworks after 2020 revealed anonymization not as a privacy endpoint but as transitional trust infrastructure, one that enabled broader societal uptake of energy-optimized home networks.

Consent Surface Drift

The promise of anonymization distorts the temporal economy of consent by retroactively invalidating earlier user agreements through data reaggregation. Anonymized smart-home data can be combined with external datasets, such as utility consumption records or geolocation histories from third-party apps. Re-identification then becomes feasible not through technical failure but through statistical convergence: each added dataset narrows the set of people a record could belong to, undermining the premise on which consent was given. The overlooked mechanism is that anonymization does not freeze identity risk but defers it. Data collectors can exploit future correlation pathways that were unforeseeable at the time of consent, rendering initial permissions epistemically obsolete.
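Statistical convergence of this kind is essentially a linkage attack. The Python sketch below uses invented data: an export keyed by random record IDs and a hypothetical external billing file that carries names. The `candidates` function joins the two on shared monthly readings. The point of the sketch is how the candidate sets shrink as auxiliary data accumulates after consent was given.

```python
# Hypothetical "anonymized" export: random record keys mapped to monthly kWh.
export = {
    "r1": {"2023-01": 412, "2023-02": 398},
    "r2": {"2023-01": 389, "2023-02": 371},
    "r3": {"2023-01": 412, "2023-02": 455},
}

# Hypothetical external billing data, obtained later from a third party,
# that carries names alongside the same monthly readings.
billing = {
    "Resident X": {"2023-01": 389, "2023-02": 371},
    "Resident Y": {"2023-01": 412, "2023-02": 398},
    "Resident Z": {"2023-01": 412, "2023-02": 455},
}


def candidates(record, external, months):
    """Names whose external readings match the record in every listed month."""
    return [name for name, readings in external.items()
            if all(readings.get(m) == record.get(m) for m in months)]


# Each additional month of auxiliary data shrinks the candidate sets.
for months in (["2023-01"], ["2023-01", "2023-02"]):
    linked = {key: candidates(rec, billing, months) for key, rec in export.items()}
    print(months, linked)
```

With one month, `r2` is already singled out, while `r1` and `r3` each remain ambiguous between two residents. With two months, every record resolves to a single name. Exact equality is a simplification; a realistic attack would tolerate meter noise.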

Regulatory Mimicry

Anonymization practices in smart-home ecosystems simulate compliance to preempt regulatory scrutiny. They align with privacy norms on the surface while keeping backend systems optimized for granular behavioral logging. Companies structure log retention and metadata tagging to technically satisfy anonymization requirements, such as the removal of direct identifiers. Yet they preserve feature-level data patterns (e.g., appliance usage cadences) that are distinctive enough to serve as behavioral fingerprints. The overlooked dynamic is that regulators evaluate compliance by the format of the data rather than by what it can still be used for. This allows firms to fulfill their legal obligations while continuing to feed profiling systems, a performance of privacy that protects institutions more than individuals.
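The gap between format-level and functional compliance can be expressed as two different tests over the same log. In this hypothetical Python sketch, the identifier list, column names, and timestamps are all assumptions. The first test only checks that no direct-identifier column remains. The second measures the share of rows that are unique on their usage cadences, that is, rows whose anonymity set has size one in k-anonymity terms.

```python
from collections import Counter

# Hypothetical list of direct-identifier columns a format-level audit looks for.
DIRECT_IDENTIFIERS = {"name", "email", "address", "account_id"}


def passes_format_check(rows):
    """Format-level test: no column in any row is a direct identifier."""
    return all(DIRECT_IDENTIFIERS.isdisjoint(row) for row in rows)


def unique_fraction(rows, quasi_identifiers):
    """Functional test: share of rows whose quasi-identifier combination is unique."""
    keys = [tuple(row[q] for q in quasi_identifiers) for row in rows]
    counts = Counter(keys)
    return sum(1 for k in keys if counts[k] == 1) / len(rows)


# Invented log after "anonymization": identifiers dropped, cadences kept.
rows = [
    {"kettle_on": "06:40", "washer_cycle": "Sat 09:00", "thermostat_drop": "22:30"},
    {"kettle_on": "06:40", "washer_cycle": "Sun 18:00", "thermostat_drop": "23:15"},
    {"kettle_on": "07:55", "washer_cycle": "Sat 09:00", "thermostat_drop": "22:30"},
    {"kettle_on": "07:55", "washer_cycle": "Sat 09:00", "thermostat_drop": "22:30"},
]
qi = ("kettle_on", "washer_cycle", "thermostat_drop")

print(passes_format_check(rows))  # → True
print(unique_fraction(rows, qi))  # → 0.5
```

The invented log passes the format test while half of its rows are still singled out by routine alone.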

Regulatory Arbitrage

Data anonymization in smart-home devices obscures privacy degradation because of how frameworks like the GDPR define their scope. The GDPR does not apply to anonymous data at all (Recital 26), yet it judges identifiability by the means "reasonably likely to be used." This contextual test leaves a definitional gap between 'anonymous' and 'pseudonymous' data that firms such as Amazon and Google can exploit. These companies systematically design data pipelines that technically comply with anonymization mandates while retaining re-linkability through cross-service tracking and device fingerprints. Fragmented oversight among national data protection authorities sustains the practice. The non-obvious consequence is that compliance itself becomes a mechanism of obfuscation, in which adherence to legal standards legitimizes continuous surveillance under the ethical justifications of user consent and utility.
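The gap between 'anonymous' and 'pseudonymous' data is easiest to see with device identifiers. The Python sketch below uses a hypothetical serial number and hypothetical service keys. It contrasts two tokens. An unkeyed SHA-256 token can be reproduced by any service holding the same serial, so services can join their records on it. An HMAC token keyed per service does not link across services. Neither output is anonymous in the GDPR sense: whoever holds the serial (and, for the HMAC, the key) can still re-link the record, so both remain pseudonymous.

```python
import hashlib
import hmac

DEVICE_ID = "SN-0042-THERMO"  # hypothetical device serial number


def naive_pseudonym(device_id: str) -> str:
    """Unkeyed hash: every service that sees the same ID derives the same token."""
    return hashlib.sha256(device_id.encode()).hexdigest()[:16]


def scoped_pseudonym(device_id: str, service_key: bytes) -> str:
    """Keyed hash (HMAC-SHA256) with a per-service secret: tokens differ by service."""
    return hmac.new(service_key, device_id.encode(), hashlib.sha256).hexdigest()[:16]


# Two services "anonymize" the same device independently.
energy_app = naive_pseudonym(DEVICE_ID)
voice_app = naive_pseudonym(DEVICE_ID)
print(energy_app == voice_app)  # True: records can be joined on the token

# Hypothetical per-service secrets.
energy_scoped = scoped_pseudonym(DEVICE_ID, b"energy-service-secret")
voice_scoped = scoped_pseudonym(DEVICE_ID, b"voice-service-secret")
print(energy_scoped == voice_scoped)  # False, barring a negligible-probability collision
```

The unkeyed variant is the kind of pipeline that can "technically comply" while preserving cross-service re-linkability. Scoping the key per service removes that particular join, although it does nothing about behavioral fingerprints in the payload.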

Commercial Epistemology

The claim of anonymization enables profiling by institutionalizing a commercial epistemology in which user behavior is treated as inherently datafiable and predictive value outranks informational self-determination. This is visible in the machine learning infrastructures behind products like Nest and Ring. These systems are designed not to protect privacy but to refine behavioral models through ambient sensing. Even 'anonymized' metadata, such as timing, duration, and device interaction patterns, feeds actuarial logics of risk assessment deployed in insurance, policing, and targeted advertising. The underappreciated systemic dynamic is that anonymization serves as a rhetorical shield, letting these epistemic norms naturalize surveillance as a necessary condition for 'smart' functionality.

Normalization Cascade

Anonymization claims degrade privacy collectively by initiating a normalization cascade. Repeated exposure to minimal data disclosures recalibrates societal expectations of intimacy and domestic autonomy, particularly among urban populations that depend on connected home services. Landlords, insurers, and city planners drive this process as they increasingly mandate smart-home installations for efficiency or safety compliance, embedding anonymized data collection into housing infrastructure itself. The unacknowledged dynamic is that privacy erosion becomes invisible not through coercion but through incremental acceptance. Each seemingly harmless data submission reinforces a broader socio-technical regime that marginalizes privacy as an obsolete ideal.